Lecture 5

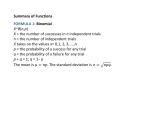

Measurement uncertainties
ASTR320
Monday September 12, 2016

Measurements
• Astronomy is a purely observational science: we can’t do experiments; all we can do is make measurements remotely
• Often astronomical research is done by looking for correlations between measured quantities
• How correct the science is depends on how well the quantities are measured
• Error analysis is very important

Measurement errors
• Two types of errors:
  – Systematic errors, which limit the precision and accuracy of results in more or less well-defined ways that can be estimated by considering how the measurement is made
  – Random errors, which are introduced by random fluctuations in measurements and yield different results each time an experiment is repeated
• Errors are not the same as mistakes!

Accuracy vs. Precision
• Accuracy: a measure of how close an experimental result is to the true value
• Precision: a measure of how well the result has been determined, without reference to its agreement with the true value

Populations and distributions
• Parent distribution
  – Hypothetical distribution of an infinitely large set of measurements of a quantity
  – Defines the probability of measuring a particular value in a single measurement
• Sample population
  – Actual set of experimental measurements made
  – A subset of the parent distribution
• Consider a given dataset to be a sample taken from a parent population
• When making measurements it is important to carefully consider how this sample is constructed
  – Error analysis provides information about how well the sample is constructed and how reliable the results are

Mean, median, mode
• Mean
  – Arithmetic average

$$\bar{x} \equiv \frac{1}{N}\sum_{i=1}^{N} x_i$$

• Median
  – Value midway in the frequency distribution
  – Half of the measurements are greater than this value, half are below
• Mode
  – Value that occurs most frequently in a distribution
  – The most probable value

Deviations
• Deviation: the difference of a given measurement from the mean of the parent distribution

$$d_i \equiv x_i - \mu$$

• Average deviation: the average of the absolute values of the deviations

$$\alpha \equiv \lim_{N\to\infty} \frac{1}{N}\sum_{i=1}^{N} \left| x_i - \mu \right|$$

• This is a measure of the dispersion of the measurements about the mean

Variance of the parent population
• Standard deviation is a better (i.e., mathematically easier) way to measure the dispersion of the measurements (i.e., the uncertainty in the measurements)
• Variance: the limit of the average of the squares of the deviations from the mean μ:

$$\sigma^2 \equiv \lim_{N\to\infty} \frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2 = \lim_{N\to\infty} \left( \frac{1}{N}\sum_{i=1}^{N} x_i^2 \right) - \mu^2$$

• Standard deviation, σ, is just the square root of the variance
  – The uncertainty in determining the mean of the parent distribution is proportional to the standard deviation of the distribution

Variance of the sample population
• The variance of the sample population is subtly different:

$$S^2 \equiv \frac{1}{N-1}\sum_{i=1}^{N} (x_i - \bar{x})^2$$

• The factor N − 1 accounts for the fact that the average value $\bar{x}$ has been determined using the data in the sample population and not independently
• Note that σ is often used when discussing standard deviation, even though we really mean S

Mean and standard deviation
• Consider the parent population of measurements with distribution p(x)
• The probability density p(x) is defined so that, in the limit of a large number of observations N, the number of measurements of x that yield values between x and x + dx is given by dN = N p(x) dx
• Then by definition, the mean is the expectation value, $\langle x \rangle$, of the measurements
• The variance is the expectation value of the squared deviations from the mean, and the standard deviation is its square root:

$$\sigma^2 = \langle (x - \mu)^2 \rangle$$

• The mean and standard deviation are often used to characterize a set of measurements

Binomial distribution
• The probability distribution of the number of successes in a sequence of n independent yes/no experiments, each of which yields success with probability p
• We’ll use this general case to derive some more useful distributions

Coin toss experiment
• Take a fair coin that has a 50% chance of landing heads up and a 50% chance of landing tails up
  – If the coin is tossed an infinite number of times, the fraction of times that it lands heads up is ½
  – But in any given toss, it is impossible to know in advance which way it will land
• Now take n of these coins and toss them all at once (or toss one coin n times)
• P(x; n) is the probability of success, i.e., that x coins land heads up (and n − x coins land tails up)
  – In this case each particular combination of heads and tails has probability $(1/2)^n$
  – More generally, we can assign a probability to each "success" case

Probability
• If p is the probability of success and q is the probability of failure in each of n trials:
  – For the coin toss, $p^x = (1/2)^x$ and $q^{n-x} = (1 - 1/2)^{n-x} = (1/2)^{n-x}$
  – For a die, p = 1/6 and q = 1 − 1/6, so $p^x q^{n-x} = (1/6)^x \times (5/6)^{n-x}$
• More generally, the probability of observing x of the n items to be in the state with probability p is given by the "binomial distribution"

Binomial distribution
• The mean of the binomial distribution comes from the definition of μ, where the sum over j runs over the distinct possible values $x_j$:

$$\mu = \lim_{N\to\infty} \frac{1}{N}\sum_{i=1}^{N} x_i = \lim_{N\to\infty} \frac{1}{N}\sum_{j} x_j \, N P(x_j) = \lim_{N\to\infty} \sum_{j} x_j P(x_j)$$

• And the binomial distribution probability

$$P_B(x; n, p) = \frac{n!}{x!\,(n-x)!}\, p^x (1-p)^{n-x}$$

• So the mean is

$$\mu = \sum_{x=0}^{n} x \, \frac{n!}{x!\,(n-x)!}\, p^x (1-p)^{n-x} = np$$

Binomial distribution
• And the variance of a binomial distribution comes from the definition of σ²:

$$\sigma^2 = \sum_{x=0}^{n} (x - \mu)^2 \, \frac{n!}{x!\,(n-x)!}\, p^x (1-p)^{n-x} = np(1-p)$$

• Let q = 1 − p be the probability of failure
• For the coin toss, p = q = 1/2, so the distribution is symmetric about the mean, the median is the most probable value (and equal to the mean), and σ² is equal to μ/2
• If p is not equal to q, then the distribution is asymmetric

Poisson distribution
• An approximation to the binomial distribution for the special case when the average number of successes is much smaller than the possible number
• Not generally used in astronomy, but useful, e.g., for radioactive decay

Gaussian (normal) distribution
• Most useful probability distribution because it best describes the actual observed statistics of experimental data
• Bonus: it’s simple and easy to use
• Derived from the binomial distribution for the case that the number of observations n becomes infinitely large and the probability p is finitely large, so np ≫ 1

Gaussian distribution
• Gaussian probability density is defined as

$$p_G = \frac{1}{\sigma\sqrt{2\pi}} \exp\left[ -\frac{1}{2} \left( \frac{x-\mu}{\sigma} \right)^2 \right]$$

• Where μ and σ are the mean and standard deviation
• Remember that the probability density function is defined so that the probability dP that the value of a random observation will fall within an interval dx around x is $dP = p_G \, dx$
• In a Gaussian distribution, the width of the curve is determined by the value of the standard deviation, σ
• For x = μ ± σ, the height of the curve is reduced to $e^{-1/2}$ of its peak value

FWHM
• We often refer to the full width at half maximum (FWHM) of a Gaussian distribution
• Defined to be the range of x between the two points at which the Gaussian probability density function $p_G$ has half of its maximum value
• The FWHM, Γ, is

$$\Gamma = 2.354\,\sigma$$

Gaussian distribution
[Figure: the mean, standard deviation, FWHM, and probable error of a Gaussian probability distribution with unit area]
• Probable error is the 50% probability limit ($\sigma_{PE} = 0.6745\,\sigma$)

Gaussian distribution
• Note the probabilities that a measurement falls within 1σ (68%) and 2σ (95%) of the mean
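
The Gaussian numbers quoted in the last few slides (the FWHM factor, the probable error, the height at one standard deviation, and the 1σ and 2σ probabilities) can be checked numerically. The following is a minimal sketch using only the Python standard library; the function and variable names (`gaussian_pdf`, `prob_within`, and so on) are illustrative choices and are not part of the lecture.

```python
import math
from statistics import NormalDist


def gaussian_pdf(x, mu=0.0, sigma=1.0):
    """Gaussian probability density p_G evaluated at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def prob_within(k):
    """Probability that a measurement falls within k sigma of the mean."""
    return math.erf(k / math.sqrt(2.0))


# FWHM in units of sigma: solve exp(-z**2 / 2) = 1/2 for z, then double it.
fwhm_over_sigma = 2.0 * math.sqrt(2.0 * math.log(2.0))

# Probable error in units of sigma: half of all measurements lie within
# +/- sigma_PE of the mean, so sigma_PE is the 75th percentile of a
# unit Gaussian.
probable_error_over_sigma = NormalDist().inv_cdf(0.75)

# Height of the curve at x = mu + sigma relative to its peak at x = mu.
height_ratio_at_1sigma = gaussian_pdf(1.0) / gaussian_pdf(0.0)

print(f"FWHM / sigma           = {fwhm_over_sigma:.4f}")
print(f"probable error / sigma = {probable_error_over_sigma:.4f}")
print(f"p_G(mu+sigma)/p_G(mu)  = {height_ratio_at_1sigma:.4f}")
print(f"P(within 1 sigma)      = {prob_within(1):.4f}")
print(f"P(within 2 sigma)      = {prob_within(2):.4f}")
```

The closed form behind the FWHM factor is Γ = 2√(2 ln 2) σ, which is where the quoted 2.354 comes from.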
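
The binomial results from earlier in the lecture (μ = np and σ² = np(1 − p)) and the N − 1 form of the sample variance can be checked in the same way. This is a sketch under stated assumptions: the choice of n = 10 coins, 5000 simulated repetitions, the random seed, and all function names are hypothetical and chosen only for illustration.

```python
import math
import random


def binomial_pmf(x, n, p):
    """P_B(x; n, p) = n! / (x! (n - x)!) * p**x * (1 - p)**(n - x)."""
    return math.comb(n, x) * p**x * (1.0 - p) ** (n - x)


def binomial_moments(n, p):
    """Mean and variance obtained by summing directly over x = 0..n."""
    xs = range(n + 1)
    mu = sum(x * binomial_pmf(x, n, p) for x in xs)
    var = sum((x - mu) ** 2 * binomial_pmf(x, n, p) for x in xs)
    return mu, var


def sample_mean_and_variance(data):
    """Sample mean and sample variance S**2, using the N - 1 denominator."""
    n = len(data)
    mean = sum(data) / n
    s2 = sum((x - mean) ** 2 for x in data) / (n - 1)
    return mean, s2


n_coins = 10  # assumed number of coins per toss

# Parent-distribution values from the definitions of mu and sigma**2.
mu_coin, var_coin = binomial_moments(n_coins, 0.5)    # fair coin
mu_die, var_die = binomial_moments(n_coins, 1 / 6)    # one face of a die

# A finite sample drawn from the coin-toss parent distribution:
# each entry is the number of heads in one toss of n_coins coins.
rng = random.Random(320)  # fixed seed so the run is repeatable
tosses = [sum(rng.random() < 0.5 for _ in range(n_coins)) for _ in range(5000)]
xbar, s2 = sample_mean_and_variance(tosses)

print(f"coin: mu = {mu_coin:.4f}, sigma^2 = {var_coin:.4f}")
print(f"die:  mu = {mu_die:.4f}, sigma^2 = {var_die:.4f}")
print(f"sample of {len(tosses)} tosses: mean = {xbar:.4f}, S^2 = {s2:.4f}")
```

For the fair coin the summed variance equals half the mean, as stated in the lecture, while the sample mean and S² only approximate the parent values because the sample is finite.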