Chapter 4: Making Statistical Inferences from Samples

4.1 Introduction

4.2 Basic univariate inferential statistics

4.3 ANOVA test for multi-samples

4.4 Tests of significance of multivariate data

4.5 Non-parametric methods

4.6 Bayesian inferences

4.7 Sampling methods

4.8 Resampling methods

Chap 4-Data Analysis Book-Reddy


4.1 Introduction

The primary reasons for resorting to sampling as against measuring the whole population are:

- to reduce expense,

- to make quick decisions (say, in the case of a production process),

- often it is impossible to do otherwise.

Random sampling, the most common form of sampling, involves selecting samples from the population in a random manner (the samples should be independent so as to avoid bias, which is not as simple as it sounds).

Inferences about the population drawn from such samples usually involve descriptive measures such as the mean value or the standard deviation. These measures are called estimators: mathematical expressions applied to sample data in order to deduce an estimate of the true population parameter.

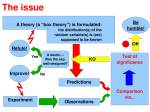

Fig. 4.13 Overview of the various types of parametric hypothesis tests treated in this chapter, along with section numbers. The lower set of three sections treats non-parametric tests.

Two types of tests:

- Parametric and

- Non-parametric

[Diagram for Fig. 4.13: hypothesis tests branch into one-sample, two-sample, and multi-sample cases, each for one variable, two variables, or multivariate data. The quantities tested are the mean/proportion, variance, probability distribution, and correlation coefficient (Sections 4.2.2 to 4.2.7), with ANOVA in 4.3 and Hotelling T^2 in 4.4.2. Non-parametric tests are in Sections 4.5.1 to 4.5.3.]

4.2 Basic univariate inferential statistics

4.2.1(a) Sampling distribution of the mean

Consider a population from which many random samples are taken. What can one say about the distribution of the sample estimators?

Let $\mu$ and $\bar{x}$ be the population mean and sample mean respectively, and $\sigma$ and $s_x$ be the population std dev and sample std dev.

Then, regardless of the shape of the population frequency distribution, the mean of the sampling distribution of $\bar{x}$ is:

$$\mu_{\bar{x}} = \mu \qquad (4.1)$$

and the std dev of the sample mean (or SE, the standard error of the mean) is:

$$SE(\bar{x}) = \frac{\sigma}{n^{1/2}} \qquad (4.2)$$

where n is the number of observations in each sample (the sample size).

Use the sample std dev $s_x$ if the population std dev is not known.
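Eqs. 4.1 and 4.2 can be checked numerically. The sketch below is only an illustration: the exponential parent population (mean 1, std dev 1), the sample size of 36, the number of samples, and the function name are all assumptions chosen for the demonstration, not values from the text.

```python
import random
from math import sqrt
from statistics import stdev

def simulate_sample_means(n, n_samples, seed=1):
    """Draw n_samples random samples of size n from an exponential
    population (mean 1, std dev 1) and return the list of sample means."""
    rng = random.Random(seed)
    return [sum(rng.expovariate(1.0) for _ in range(n)) / n
            for _ in range(n_samples)]

# Hypothetical settings: 5000 samples of 36 observations each
sample_means = simulate_sample_means(n=36, n_samples=5000)

mean_of_means = sum(sample_means) / len(sample_means)  # compare with mu = 1 (Eq. 4.1)
sd_of_means = stdev(sample_means)                      # compare with SE (Eq. 4.2)
predicted_se = 1.0 / sqrt(36)                          # sigma / n^(1/2)

print(mean_of_means, sd_of_means, predicted_se)
```

Even though the parent distribution is strongly skewed, the simulated mean of the sample means should sit close to 1 and their spread close to 1/6.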

Fig. 4.1 Illustration of the Central Limit Theorem. The sampling distribution of $\bar{x}$ contrasted with the parent population distribution for three cases with different parent distributions: as sample size increases, the sampling distribution gets closer to a normal distribution (and the standard error of the mean decreases).

Galton’s Boards (1889)

The machine consists of a vertical board with interleaved rows of pins. Balls are dropped from the top, and bounce left and right as they hit the pins. Eventually, they are collected into one-ball-wide bins at the bottom. The height of the ball columns in the bins approximates a bell curve.

If a ball bounces to the right k times on its way down (and to the left on the remaining pins), it ends up in the kth bin counting from the left. Denoting the number of rows of pins in a bean machine by n, the number of paths to the kth bin on the bottom is given by the binomial coefficient $\binom{n}{k}$. If the probability of bouncing right on a pin is p (which equals 0.5 on an unbiased machine), the probability that the ball ends up in the kth bin equals $\binom{n}{k} p^k (1-p)^{n-k}$, which is the probability mass function of a binomial distribution.

According to the central limit theorem, the binomial distribution approaches the normal distribution provided that n, the number of rows of pins in the machine, is large.
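The bean machine is easy to simulate. The following is a minimal sketch, assuming each pin deflects a ball independently; the function names, the seed, and the choice of 10 rows and 2000 balls are illustrative assumptions. Bins are indexed from 0, so bin k collects the balls that bounced right k times.

```python
import random
from math import comb

def galton_counts(n_rows, n_balls, p_right=0.5, seed=0):
    """Simulate a bean machine; return the number of balls in each bin."""
    rng = random.Random(seed)
    counts = [0] * (n_rows + 1)
    for _ in range(n_balls):
        # Number of right bounces over n_rows pins decides the bin
        k = sum(1 for _ in range(n_rows) if rng.random() < p_right)
        counts[k] += 1
    return counts

def binomial_pmf(n_rows, k, p_right=0.5):
    """Probability that a ball ends in bin k (binomial distribution)."""
    return comb(n_rows, k) * p_right**k * (1 - p_right)**(n_rows - k)

counts = galton_counts(n_rows=10, n_balls=2000)
expected = [2000 * binomial_pmf(10, k) for k in range(11)]
for k, (c, e) in enumerate(zip(counts, expected)):
    print(k, c, round(e, 1))
```

Comparing the simulated counts with the binomial expectation column shows the bell shape emerging as the number of balls grows.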

4.2.1(b) Confidence limits for the mean

Instead of the behavior of many samples all taken from one population, what can one say about only one large random sample?

This process is called inductive reasoning, or arguing backwards from a set of observations to a reasonable hypothesis.

However, the benefit of having to select only a sample of the population comes at a price: one has to accept some uncertainty in the estimates.

Based on a sample taken from a population:

• one can deduce interval bounds of the population mean at a specified confidence level

• one can test whether the sample mean differs from the presumed population mean

4.2.1(b) Confidence limits for the mean (contd.)

The confidence interval of the population mean is:

$$\bar{x} \pm z_{c/2} \frac{s_x}{\sqrt{n}} \qquad (4.5b)$$

This formula is valid for any shape of the population distribution provided, of course, that the sample is large (say, n > 30).

The half-width of the 95% CL, $1.96\, s_x/\sqrt{n}$, is the bound of the error of estimation.

For small samples (n < 30), instead of the variable z, use the Student-t variable.

Eq. 4.5 corresponds to the long-run bounds, i.e., in the long run roughly 95% of the intervals will contain $\mu$.

Prediction of a single x value:

$$\text{Prediction interval of } x = \bar{x} \pm t_{c/2}\, s_x \left(1 + \frac{1}{n}\right)^{1/2} \qquad (4.6)$$

where $t_{c/2}$ is the two-tailed critical value at d.f. = n-1 at the desired CL.
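Eqs. 4.5b and 4.6 translate directly into two small helpers. This is a sketch only: the function names are assumptions, the z critical value is taken from Python's standard-library normal distribution rather than Table A3, and the t critical value must be supplied by the user (for example from Table A4), since the standard library has no Student-t distribution.

```python
from math import sqrt
from statistics import NormalDist

def mean_confidence_interval(x_bar, s_x, n, confidence=0.95):
    """Large-sample two-tailed confidence interval of the mean (Eq. 4.5b)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    half_width = z * s_x / sqrt(n)
    return x_bar - half_width, x_bar + half_width

def prediction_interval(x_bar, s_x, n, t_crit):
    """Prediction interval of a single x value (Eq. 4.6).
    t_crit is the two-tailed critical value for d.f. = n - 1, read from tables."""
    half_width = t_crit * s_x * sqrt(1 + 1 / n)
    return x_bar - half_width, x_bar + half_width
```

Note that the prediction interval is always wider than the confidence interval of the mean, because a single observation carries its own scatter in addition to the uncertainty of the mean.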


Example 4.2.1: Evaluating manufacturer quoted lifetime of light bulbs from sample data

A manufacturer claims that the distribution of the lifetimes of his best model has a mean $\mu$ = 16 years and standard deviation $\sigma$ = 2 years when the bulbs are lit for 12 hours every day. Suppose that a city official wants to check the claim by purchasing a sample of 36 of these bulbs and subjecting them to tests that determine their lifetimes.

(i) Assuming the manufacturer’s claim to be true, describe the sampling distribution of the mean lifetime of a sample of 36 bulbs.

Even though the shape of the distribution is unknown, the Central Limit Theorem suggests that the normal distribution can be used:

$$\mu_{\bar{x}} = \mu = 16 \text{ years and } SE(\bar{x}) = 2/\sqrt{36} \approx 0.33 \text{ years}$$

(ii) What is the probability that the sample purchased by the city official has a mean lifetime of 15 years or less?

The normal distribution N(16, 0.33) is drawn, and the darker shaded area to the left of $\bar{x}$ = 15 provides the probability of the city official observing a mean life of 15 years or less.

Next, the standard normal statistic is computed as:

$$z = \frac{\bar{x} - \mu}{\sigma/\sqrt{n}} = \frac{15 - 16}{2/\sqrt{36}} = -3.0$$

This probability or p-value can be read off from Table A3 as p(z ≤ -3.0) = 0.0013. Consequently, the probability that the city official will observe a sample mean of 15 years or less is only 0.13%.

Fig. 4.2 Sampling distribution of $\bar{x}$ for a normal distribution N(16, 0.33). The shaded area represents the probability of the mean life of the bulb being < 15 years.

(iii) If the manufacturer’s claim is correct, compute the one-tailed 95% prediction interval of a single bulb from the sample of 36 bulbs.

From the t-tables (Table A4), the critical value is $t_c$ = 1.70 for d.f. = 36-1 = 35 and CL = 95% corresponding to the one-tailed distribution.

$$\text{95\% prediction value of } x = \bar{x} - t_c\, s_x \left(1 + \frac{1}{n}\right)^{1/2} = 16 - (1.70)(2)\left(1 + \frac{1}{36}\right)^{1/2} = 12.6 \text{ years}$$
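The arithmetic of Example 4.2.1 can be reproduced in a few lines. This is a sketch using the numbers quoted in the example; the variable names are assumptions, and the p-value comes from the standard-library normal distribution instead of Table A3.

```python
from math import sqrt
from statistics import NormalDist

mu, sigma, n = 16.0, 2.0, 36          # manufacturer's claim and sample size

se = sigma / sqrt(n)                  # standard error of the mean
z = (15.0 - mu) / se                  # standard normal statistic for x_bar = 15
p_value = NormalDist().cdf(z)         # area to the left of z

t_c = 1.70                            # one-tailed critical value quoted from Table A4
lower_prediction = mu - t_c * sigma * sqrt(1 + 1 / n)

print(round(se, 3), round(z, 2), round(p_value, 4), round(lower_prediction, 2))
```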


4.2.2 Hypothesis Tests for Single Sample Mean

During hypothesis testing the intent is to decide which of two competing claims is true.

For example, one wishes to support the hypothesis that women live longer than men.

Samples from each of the two populations are taken, and a test, called statistical inference, is performed to prove (or disprove) this claim.

Since there is bound to be some uncertainty associated with such a procedure, one can only be confident of the results to a degree that can be stated as a probability. If the probability of obtaining the observed difference by chance alone is lower than a pre-selected threshold probability, called the significance level of the test, then one would conclude that women do live longer than men; otherwise, one would have to accept that the test was inconclusive.

Once a sample is drawn, the following steps are performed:

• formulate the hypotheses: the null or status quo, and the

alternate (which are complementary)

• select a confidence level and estimate the corresponding

significance level (say, 0.01 or 0.05)

• identify a test statistic (or random variable) that will be used

to assess the evidence against the null hypothesis

• determine the critical or threshold value of the test statistic

from probability tables

• compute the test statistic for the problem at hand

• reject the null hypothesis only if the absolute value of the test statistic is greater than the critical value, and in that case accept the alternate hypothesis
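The steps above can be sketched for the simplest case, a z-test on a single sample mean with known standard deviation. The function name, its arguments, and the `tail` keyword are assumptions made for this illustration; critical values come from the standard-library normal distribution rather than probability tables.

```python
from math import sqrt
from statistics import NormalDist

def one_sample_z_test(x_bar, mu0, sigma, n, alpha=0.05, tail="two"):
    """Return (z, z_crit, reject) for H0: mu = mu0.
    tail is "two", "upper" (Ha: mu > mu0) or "lower" (Ha: mu < mu0)."""
    std_normal = NormalDist()
    z = (x_bar - mu0) / (sigma / sqrt(n))         # computed test statistic
    if tail == "two":
        z_crit = std_normal.inv_cdf(1 - alpha / 2)
        reject = abs(z) > z_crit
    elif tail == "upper":
        z_crit = std_normal.inv_cdf(1 - alpha)
        reject = z > z_crit
    elif tail == "lower":
        z_crit = std_normal.inv_cdf(1 - alpha)
        reject = z < -z_crit
    else:
        raise ValueError("tail must be 'two', 'upper' or 'lower'")
    return z, z_crit, reject
```

For a given significance level, the one-tailed critical value (about 1.645 at 0.05) is smaller than the two-tailed one (about 1.96), so the choice of tail has to be made before looking at the data.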

Be careful to select the appropriate significance level when a confidence level is stipulated.

[Fig. 4.4 panels: f(x) against x for (left) a one-tailed test with shaded area p = 0.05 beyond the critical value -1.645, and (right) a two-tailed test with shaded areas p = 0.025 beyond each of the critical values -1.96 and 1.96]

Fig. 4.4 Illustration of critical cutoff values between one-tailed and two-tailed tests assuming the normal distribution. The shaded areas represent the probability values corresponding to 95% CL or 0.05 significance level or p = 0.05. The critical values shown can be determined from Table A3.

Example 4.2.2. Evaluating whether a new type of light bulb has longer life

Traditional light bulbs have a mean life $\mu$ = 1200 hours and standard deviation $\sigma$ = 300 hours.

To compare this life against that of a new type of light bulb, use the classical test and define two hypotheses:

• The null hypothesis, which represents the status quo, i.e., that the new process is no better than the previous one: $H_0: \mu = 1200$ hours,

• The research or alternative hypothesis ($H_a$), which is the premise that $\mu > 1200$ hours.

Say, the sample size is n = 100 and the significance or error level of the test is $\alpha$ = 0.05.

Use a one-tailed test (since the new bulb manufacturing process should have a longer life, not just a life different from that of the traditional process).

The mean life $\bar{x}$ of the sample of n = 100 bulbs can be assumed to be normally distributed with mean 1200 and standard error

$$\sigma/\sqrt{n} = 300/\sqrt{100} = 30$$

From the standard normal table (Table A3), the one-tailed critical z-value is $z_{\alpha=0.05}$ = 1.64. Since

$$z_c = \frac{\bar{x}_c - \mu_0}{\sigma/\sqrt{n}}$$

this leads to $\bar{x}_c = 1200 + 1.64 \times 300/(100)^{1/2} = 1249$.

• Suppose testing of the 100 bulbs yields a value of $\bar{x}$ = 1260. As $\bar{x} > \bar{x}_c$, one would reject the null hypothesis at the 0.05 significance (or error) level.

This is akin to jury trials where the null hypothesis is taken to be that the accused is innocent: the burden of proof during hypothesis testing is on the alternate hypothesis.

Hence, two types of errors can be distinguished:

• Concluding that the null hypothesis is false when in fact it is true is called a Type I error; its probability is the pre-selected significance level, i.e., the probability of erroneously rejecting the null hypothesis. This is also called the “false positive” or “false alarm” rate.

• The flip side, i.e., concluding that the null hypothesis is true when in fact it is false, is called a Type II error; its probability is that of erroneously retaining the null hypothesis, also called the “false negative” rate.

[Fig. 4.3 panels, both plotting density (x 0.001) against x: (a) normal distribution N(1200, 30), with the region to the left of the critical value marked "Accept Ho" and the region to the right marked "Reject Ho"; the shaded area represents the probability of falsely rejecting the null hypothesis (Type I error). (b) normal distribution N(1260, 30); the shaded area to the left of the critical value represents the probability of falsely retaining the null hypothesis (Type II error).]

Fig. 4.3 The two kinds of error that occur in a classical test.

(a) If $H_0$ is true, then the significance level $\alpha$ = probability of erring (rejecting the true hypothesis $H_0$).

(b) If $H_a$ is true, then $\beta$ = probability of erring (judging that the false hypothesis $H_0$ is acceptable).

The numerical values correspond to data from Example 4.2.2.
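Both error probabilities of Fig. 4.3 can be evaluated numerically. The sketch below assumes, as in panel (b), that the true mean under the alternative is 1260 hours; the function names are illustrative, and the resulting value of the Type II error probability is computed here and is not quoted in the text.

```python
from math import sqrt
from statistics import NormalDist

def critical_mean(mu0, sigma, n, alpha):
    """Critical sample mean for a one-tailed (upper) test at level alpha."""
    z_c = NormalDist().inv_cdf(1 - alpha)
    return mu0 + z_c * sigma / sqrt(n)

def type_ii_error(mu0, mu_a, sigma, n, alpha):
    """Probability of retaining H0 when the true mean is mu_a (> mu0)."""
    x_c = critical_mean(mu0, sigma, n, alpha)
    # Area of the sampling distribution centred on mu_a lying below x_c
    return NormalDist(mu_a, sigma / sqrt(n)).cdf(x_c)

x_c = critical_mean(1200, 300, 100, 0.05)          # Example 4.2.2 values
beta = type_ii_error(1200, 1260, 300, 100, 0.05)   # assumed true mean of 1260
print(round(x_c, 1), round(beta, 3))
```

Lowering the significance level pushes the critical value to the right, which reduces the Type I error but enlarges the Type II error for a fixed sample size.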

4.2.3 Two Independent Samples and Paired Difference Tests

(a1) Two independent sample test for evaluating the means of two independent random samples from the two populations under consideration whose variances are unknown and unequal (but reasonably close)

Test statistic:

$$z = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\left(\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}\right)^{1/2}} \qquad (4.7)$$

For large samples, the confidence intervals of the difference in the population means can be determined as:

$$\mu_1 - \mu_2 = (\bar{x}_1 - \bar{x}_2) \pm z_c \cdot SE(\bar{x}_1, \bar{x}_2) \quad \text{where} \quad SE(\bar{x}_1, \bar{x}_2) = \left(\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}\right)^{1/2} \qquad (4.8)$$

For smaller sample sizes, the z standardized variable is replaced with the Student-t variable. The critical values are found from the Student-t tables with degrees of freedom d.f. = n1 + n2 - 2.

[Fig. 4.5 panels (a), (b), (c), (d): box and whisker plots for four pairs of samples]

Fig. 4.5 Conceptual illustration of four characteristic cases that may arise during two-sample testing of medians. The box and whisker plots provide some indication as to the variability in the results of the tests.

- Case (a) clearly indicates that the samples are very much different, while the opposite applies to case (d).

- However, it is more difficult to draw conclusions from cases (b) and (c), and it is in such cases that statistical tests are useful.

Example 4.2.3. Verifying savings from home energy conservation measures

Certain electric utilities fund contractors to weather-strip residences to conserve energy.

Suppose an electric utility wishes to determine the cost-effectiveness of its weather-stripping program by comparing the annual electric energy use of 200 similar residences in a given community, half of which were weather-stripped while the other half served as the control.

Samples collected from both types of residences yield:

- Control sample: mean = 18,750; s1 = 3,200 and n1 = 100.

- Weather-stripped sample: mean = 15,150; s2 = 2,700 and n2 = 100.

The mean difference = ($\bar{x}_1 - \bar{x}_2$) = 18,750 - 15,150 = 3,600, i.e., the mean saving in each weather-stripped residence is 19.2% (= 3600/18750) of the baseline energy use.

However, there is an uncertainty associated with this mean value.

At the 95% CL, corresponding to a significance level $\alpha$ = 0.05 for a one-tailed distribution, $z_c$ = 1.645 from Table A3, and from eq. 4.8:

$$\mu_1 - \mu_2 = (18{,}750 - 15{,}150) \pm 1.645\left(\frac{s_1^2}{100} + \frac{s_2^2}{100}\right)^{1/2}$$

The confidence interval is approximately:

$$3600 \pm 1.645\left(\frac{3200^2}{100} + \frac{2700^2}{100}\right)^{1/2} = 3600 \pm 689 = (2{,}911 \text{ and } 4{,}289)$$

These intervals represent the lower and upper values of saved energy at the 95% CL.

To conclude, one can state that the savings are positive, i.e., one can be 95% confident that there is an energy benefit in weather-stripping the homes. More specifically, the mean saving is 19.2% of the baseline value with an uncertainty of 19.1% (= 689/3600) in the savings at the 95% CL.

Note that the two percentages are measured against different bases: the saving is 19.2% of the baseline energy use, while the uncertainty is 19.1% of the saving itself. Thus, the savings are clearly positive, but their magnitude is known only to within about one-fifth of the estimate.

This example reflects a realistic concern in that energy savings in homes from energy conservation measures are often difficult to verify accurately.
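The interval of Example 4.2.3 follows from eq. 4.8 in a few lines. This is a minimal sketch; the function name and return layout are assumptions, and the critical value 1.645 is the one quoted in the example.

```python
from math import sqrt

def two_sample_ci(mean1, s1, n1, mean2, s2, n2, z_c):
    """Large-sample confidence interval of the difference in means (eq. 4.8).
    Returns (lower, upper, half_width)."""
    diff = mean1 - mean2
    se = sqrt(s1**2 / n1 + s2**2 / n2)   # SE of the difference in sample means
    half_width = z_c * se
    return diff - half_width, diff + half_width, half_width

# Control sample versus weather-stripped sample
lower, upper, half_width = two_sample_ci(18750, 3200, 100, 15150, 2700, 100, 1.645)
relative_uncertainty = half_width / (18750 - 15150)
print(round(lower), round(upper), round(half_width), round(relative_uncertainty, 3))
```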

4.2.3 Two Independent Samples and Paired Difference Tests (contd.)

(a2) “Pooled variances” are also used when the samples are small and the variances of both populations are close. Here, instead of using individual standard deviation values s1 and s2, a new quantity called the pooled variance $s_p^2$ is used:

$$s_p^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2} \quad \text{with d.f.} = n_1 + n_2 - 2$$

- The pooled variance is the weighted average of the two sample variances.

The pooled variance approach is said to result in tighter confidence intervals, and hence its appeal. However, several authors discourage its use.

The confidence interval of the difference in the population means is:

$$\mu_1 - \mu_2 = (\bar{x}_1 - \bar{x}_2) \pm t_c \cdot SE(\bar{x}_1, \bar{x}_2) \quad \text{where} \quad SE(\bar{x}_1, \bar{x}_2) = \left[s_p^2\left(\frac{1}{n_1} + \frac{1}{n_2}\right)\right]^{1/2}$$

Example 4.2.4. Comparing energy use of two similar buildings

based on utility bills- the wrong way

Buildings which are designed according to certain performance standards are

eligible for recognition as energy-efficient buildings by federal and

certification agencies. A recently completed building (B2) was awarded

such an honor.

The federal inspector, however, denied the request of another owner of an

identical building (B1) close by who claimed that the differences in energy

use between both buildings were within statistical error.

An energy consultant was hired by the owner to prove that B1 is as energy

efficient as B2. He chose to compare the monthly mean utility bills over a

year between the two commercial buildings based on the data recorded

over the same 12 months and listed in Table 4.1.

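Table 4.1 Monthly utility costs of buildings B1 and B2 and outdoor temperature

| Month | Building B1 utility cost ($) | Building B2 utility cost ($) | Difference in costs (B1-B2) ($) | Outdoor temperature (°C) |
|---|---|---|---|---|
| 1 | 693 | 639 | 54 | 3.5 |
| 2 | 759 | 678 | 81 | 4.7 |
| 3 | 1005 | 918 | 87 | 9.2 |
| 4 | 1074 | 999 | 75 | 10.4 |
| 5 | 1449 | 1302 | 147 | 17.3 |
| 6 | 1932 | 1827 | 105 | 26 |
| 7 | 2106 | 2049 | 57 | 29.2 |
| 8 | 2073 | 1971 | 102 | 28.6 |
| 9 | 1905 | 1782 | 123 | 25.5 |
| 10 | 1338 | 1281 | 57 | 15.2 |
| 11 | 981 | 933 | 48 | 8.7 |
| 12 | 873 | 825 | 48 | 6.8 |
| Mean | 1,349 | 1,267 | 82 | |
| Std. Deviation | 530.07 | 516.03 | 32.00 | |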

Null hypothesis: the mean monthly utility charges for the two buildings are equal.

Since the sample sizes are less than 30, the t-statistic has to be used.

Pooled variance:

$$s_p^2 = \frac{(12 - 1)(530.07)^2 + (12 - 1)(516.03)^2}{12 + 12 - 2} = 273{,}630.6$$

and the t-statistic:

$$t = \frac{(1349 - 1267) - 0}{\left[(273{,}630.6)\left(\frac{1}{12} + \frac{1}{12}\right)\right]^{1/2}} = \frac{82}{213.54} = 0.38$$

The one-tailed critical value is 1.321 for CL = 90% and d.f. = 12+12-2 = 22. Since 0.38 < 1.321, one cannot reject the null hypothesis.
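The pooled-variance calculation can be sketched from the summary statistics of Table 4.1. The function name and return layout are assumptions made for this illustration.

```python
from math import sqrt

def pooled_t_statistic(mean1, s1, n1, mean2, s2, n2):
    """t-statistic for the difference of two means using the pooled variance.
    Returns (t, pooled_variance, degrees_of_freedom)."""
    df = n1 + n2 - 2
    sp2 = ((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / df   # pooled variance
    se = sqrt(sp2 * (1 / n1 + 1 / n2))                 # SE of the difference
    return (mean1 - mean2) / se, sp2, df

# Means and standard deviations of the B1 and B2 monthly bills (Table 4.1)
t_stat, sp2, df = pooled_t_statistic(1349, 530.07, 12, 1267, 516.03, 12)
print(round(t_stat, 2), round(sp2, 1), df)
```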

There is, however, a problem with the way the energy consultant performed the test. Looking at Fig. 4.6 would lead one not only to suspect that this conclusion is erroneous, but also to observe that the utility bills of the two buildings tend to rise and fall together because of seasonal variations in the climate. Hence the condition that the two samples are independent is violated. It is in such circumstances that a paired test is relevant.

[Plot: utility bills ($/month, 0 to 2500) against month of year (1 to 12) for B1, B2, and their difference]

Fig. 4.6 Variation of the utility bills for the two buildings B1 and B2 (Example 4.2.5)

Example 4.2.5. Comparing energy use of two similar buildings based on utility bills- the right way

Here, the test is meant to determine whether the monthly mean of the differences in utility charges between both buildings ($\bar{x}_D$) is zero or not.

The null hypothesis is that this is zero, while the alternate hypothesis is that it is different from zero. Thus:

$$t\text{-statistic} = \frac{\bar{x}_D - 0}{s_D/\sqrt{n_D}} = \frac{82}{32/\sqrt{12}} = 8.88 \quad \text{with d.f.} = 12 - 1 = 11$$

where the values of 82 and 32 are found from Table 4.1.

For $\alpha$ = 0.05 with a one-tailed test, from Table A4 the critical value is $t_{0.05}$ = 1.796. Because 8.88 >> this critical value, one can safely reject the null hypothesis. In fact, Bldg 1 is less energy efficient than Bldg 2 even at $\alpha$ = 0.0005 (or CL = 99.95%), and the owner of B1 does not have a valid case at all!
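The paired test can be reproduced directly from the monthly bills listed in Table 4.1. The sketch below uses only the standard library; the variable and function names are assumptions.

```python
from math import sqrt
from statistics import mean, stdev

# Monthly utility costs ($) from Table 4.1
B1 = [693, 759, 1005, 1074, 1449, 1932, 2106, 2073, 1905, 1338, 981, 873]
B2 = [639, 678, 918, 999, 1302, 1827, 2049, 1971, 1782, 1281, 933, 825]

def paired_t_statistic(a, b):
    """t-statistic for H0: mean of the paired differences is zero.
    Returns (t, degrees_of_freedom)."""
    d = [x - y for x, y in zip(a, b)]        # month-by-month differences
    n = len(d)
    return mean(d) / (stdev(d) / sqrt(n)), n - 1

t_paired, df_paired = paired_t_statistic(B1, B2)
print(round(t_paired, 2), df_paired)
```

Pairing removes the large seasonal swing common to both buildings, which is why the same data give a far larger t-statistic here than in the pooled test.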

4.2.4 Single Sample Tests for Proportions

Surveys are often performed in order to determine fractions or proportions of populations who either have preferences of some sort or have a certain type of equipment. Each response can be interpreted as either a “success” (the customer has gas heat) or a “failure”, i.e., a binomial experiment.

Let p be the population proportion one wishes to estimate from the sample proportion

$$\hat{p} = \frac{\text{number of successes in sample}}{\text{total number of trials}} = \frac{x}{n}$$

The large-sample confidence interval of p for the two-tailed case at a significance level $\alpha$ is:

$$\hat{p} \pm z_{\alpha/2}\left[\hat{p}(1 - \hat{p})/n\right]^{1/2} \qquad (4.13)$$

Example 4.2.6. In a random sample of n = 1000 new residences in Scottsdale, AZ, it was found that 630 had swimming pools. Find the 95% confidence interval for the fraction of buildings with pools.

In this case, n = 1000, while $\hat{p}$ = 630/1000 = 0.63. From Table A3, the one-tailed critical value $z_{0.025}$ = 1.96, and hence from eq. 4.13, the two-tailed 95% confidence interval for p is:

$$0.63 - 1.96\left[\frac{0.63(1 - 0.63)}{1000}\right]^{1/2} < p < 0.63 + 1.96\left[\frac{0.63(1 - 0.63)}{1000}\right]^{1/2}$$

or 0.6001 < p < 0.6599.
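Eq. 4.13 can be evaluated with a short helper. This is a sketch under the sample size n = 1000 stated in the example; the function name is an assumption, and the critical value comes from the standard-library normal distribution rather than Table A3.

```python
from math import sqrt
from statistics import NormalDist

def proportion_ci(successes, n, confidence=0.95):
    """Large-sample two-tailed confidence interval of a proportion (eq. 4.13)."""
    p_hat = successes / n
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    half_width = z * sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - half_width, p_hat + half_width

# 630 residences with pools out of 1000 sampled
p_low, p_high = proportion_ci(630, 1000)
print(round(p_low, 4), round(p_high, 4))
```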


Example 4.2.7. The same equations can also be used to determine the sample size needed for the error in p not to exceed a certain range or error e. For instance, one would like to determine, from the Example 4.2.6 data, the sample size which will yield an estimate of p within 0.02 or less at 95% CL.

Then, recasting eq. 4.13 results in a sample size:

$$n = \frac{z_{\alpha/2}^2\, \hat{p}(1 - \hat{p})}{e^2} = \frac{(1.96^2)(0.63)(1 - 0.63)}{(0.02)^2} = 2239$$

It must be pointed out that the above example is somewhat misleading since one does not know the value of $\hat{p}$ beforehand. One may have a preliminary idea, in which case the sample size n would be an approximate estimate, and this may have to be revised once some data is collected.
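The recast form of eq. 4.13 is a one-line calculation. In this sketch the function name is an assumption, and rounding up to the next whole observation is a choice made here so that the error bound is not exceeded.

```python
from math import ceil

def sample_size_for_proportion(p_hat, e, z=1.96):
    """Smallest n for which the half-width of eq. 4.13 does not exceed e."""
    return ceil(z**2 * p_hat * (1 - p_hat) / e**2)

n_required = sample_size_for_proportion(0.63, 0.02)
# With no preliminary idea of p_hat, 0.5 gives the most conservative size
n_conservative = sample_size_for_proportion(0.5, 0.02)
print(n_required, n_conservative)
```

Since the product p(1-p) is largest at p = 0.5, using 0.5 as the preliminary value guards against underestimating the required sample size.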

4.2.5 Single (and Two) Sample Tests of Variance

Such tests allow one to specify a confidence level for the population variance from a sample.

The confidence intervals for a population variance $\sigma^2$ based on the sample variance $s^2$ are to be determined. To construct such confidence intervals, one will use the fact that if a random sample of size n is taken from a population that is normally distributed with variance $\sigma^2$, then the random variable

$$\chi^2 = \frac{n - 1}{\sigma^2}\, s^2 \qquad (4.15)$$

has the chi-square distribution with $\nu$ = (n-1) degrees of freedom. The advantage of using $\chi^2$ instead of $s^2$ is similar to the advantage of standardizing a variable to a normal random variable. Such a transformation allows standard tables (such as Table A5) to be used for determining probabilities irrespective of the magnitude of $s^2$. The basis of these probability tables is again akin to finding the areas under the chi-square curves.

Example 4.2.9. A company which makes boxes wishes to determine whether their automated production line requires major servicing or not. They will base their decision on whether the variation in weight from one box to another is significantly different from a maximum permissible population variance value of $\sigma^2$ = 0.12 kg². A sample of 10 boxes is selected, and their variance is found to be $s^2$ = 0.24 kg². Is this difference significant at the 95% CL?

From eq. 4.15, the observed chi-square value is

$$\chi^2 = \frac{10 - 1}{0.12}(0.24) = 18$$

Inspection of Table A5 for $\nu$ = 9 degrees of freedom reveals that for a significance level $\alpha$ = 0.05, the critical chi-square value is $\chi^2_c$ = 16.92 and, for $\alpha$ = 0.025, $\chi^2_c$ = 19.02. Thus, the result is significant at $\alpha$ = 0.05 or 95% CL. However, the result is not significant at the 97.5% CL. Whether to service the automated production line based on these statistical tests involves performing a decision analysis.
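The test of Example 4.2.9 reduces to computing eq. 4.15 and comparing against tabulated critical values. In this sketch the function name is an assumption, and the critical values are the ones quoted from Table A5 rather than being computed.

```python
def chi_square_variance_statistic(s2, sigma2, n):
    """Chi-square statistic of eq. 4.15 for a sample variance s2 (n observations)
    against a hypothesized population variance sigma2."""
    return (n - 1) * s2 / sigma2

chi2_obs = chi_square_variance_statistic(s2=0.24, sigma2=0.12, n=10)

# Critical values for 9 degrees of freedom as quoted from Table A5
critical = {0.05: 16.92, 0.025: 19.02}
significant = {alpha: chi2_obs > c for alpha, c in critical.items()}
print(round(chi2_obs, 2), significant)
```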

4.2.6 Tests for Distributions

The Chi-square ($\chi^2$) statistic applies to discrete data. It is used to statistically test the hypothesis that a set of empirical or sample data does not differ significantly from that which would be expected from some specified theoretical distribution. In other words, it is a goodness-of-fit test to ascertain whether the distribution of proportions of one group differs from another or not. The chi-square statistic is computed as:

$$\chi^2 = \sum_{k} \frac{(f_{obs} - f_{exp})^2}{f_{exp}} \qquad (4.17)$$

where $f_{obs}$ is the observed frequency of each class or interval, $f_{exp}$ is the expected frequency for each class predicted by the theoretical distribution, and k is the number of classes or intervals. If $\chi^2$ = 0, then the observed and theoretical frequencies agree exactly. If not, the larger the value of $\chi^2$, the greater the discrepancy. Tabulated values of $\chi^2$ are used to determine significance for different values of degrees of freedom $\nu$ = k-1 (see Table A5). Certain restrictions apply for proper use of this test. The sample size should be greater than 30, and none of the expected frequencies should be less than 5. In other words, a long tail of the probability curve at the lower end is not appropriate. The following example serves to illustrate the process of applying the chi-square test.

Example 4.2.11. Ascertaining whether non-code compliance infringements in residences are random or not

A county official was asked to analyze the frequency of cases when home inspectors found new homes built by one specific builder to be non-code compliant, and determine whether the violations were random or not. The following data for 380 homes were collected:

| No. of code infringements | 0 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|
| Number of homes | 242 | 94 | 38 | 4 | 2 |

The underlying random process can be characterized by the Poisson distribution:

$$P(x) = \frac{\lambda^x \exp(-\lambda)}{x!}$$

The null hypothesis, namely that the sample is drawn from a population that is Poisson distributed, is to be tested at the 0.05 significance level.

The sample mean is

$$\lambda = \frac{0(242) + 1(94) + 2(38) + 3(4) + 4(2)}{380} = 0.5 \text{ infringements per home}$$

For a Poisson distribution with $\lambda$ = 0.5, the underlying or expected values are found for different values of x as shown in the table:

| x = number of non-code compliance cases | P(x)·n | Expected no. |
|---|---|---|
| 0 | (0.6065)·380 | 230.470 |
| 1 | (0.3033)·380 | 115.254 |
| 2 | (0.0758)·380 | 28.804 |
| 3 | (0.0126)·380 | 4.788 |
| 4 | (0.0016)·380 | 0.608 |
| 5 or more | (0.0002)·380 | 0.076 |
| Total | (1.000)·380 | 380 |

The last three categories have expected frequencies that are less than 5, which do not meet one of the requirements for using the test (as stated above). Hence, these will be combined into a new category called “3 or more cases” which will have an expected frequency of 4.788+0.608+0.076 = 5.472. The following statistic is calculated first:

$$\chi^2 = \frac{(242 - 230.470)^2}{230.470} + \frac{(94 - 115.254)^2}{115.254} + \frac{(38 - 28.804)^2}{28.804} + \frac{(6 - 5.472)^2}{5.472} = 7.483$$

Since there are only 4 groups, the degrees of freedom $\nu$ = 4-1 = 3, and from Table A5, the critical value at the 0.05 significance level is $\chi^2_{critical}$ = 7.815. Hence, the null hypothesis cannot be rejected at the 0.05 significance level; this is, however, marginal.
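The goodness-of-fit calculation can be sketched as follows. The function names and the `tail_from` argument (the count at which classes are merged into an "or more" class) are assumptions made for this illustration. Because the Poisson probabilities are not rounded to four decimals here, the statistic differs slightly in the third decimal from the hand calculation.

```python
from math import exp, factorial

def poisson_pmf(x, lam):
    """Poisson probability of observing exactly x events."""
    return lam**x * exp(-lam) / factorial(x)

def chi_square_poisson(observed, tail_from):
    """Chi-square statistic (eq. 4.17) for a Poisson fit.
    observed[i] is the number of homes with i infringements; classes with
    tail_from or more infringements are merged into a single class."""
    n = sum(observed)
    lam = sum(i * f for i, f in enumerate(observed)) / n   # sample mean
    expected = [n * poisson_pmf(i, lam) for i in range(tail_from)]
    expected.append(n - sum(expected))                     # merged upper class
    obs = observed[:tail_from] + [sum(observed[tail_from:])]
    chi2 = sum((o - e) ** 2 / e for o, e in zip(obs, expected))
    return lam, expected, chi2

lam, expected, chi2 = chi_square_poisson([242, 94, 38, 4, 2], tail_from=3)
print(lam, [round(e, 2) for e in expected], round(chi2, 2))
```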


Recall the concept of Correlation Coefficient

Example 3.4.2. Extension of a spring under different loads:

Standard deviations of load and extension are 3.742 and 18.298 respectively, while the correlation coefficient = 0.998. This indicates a very strong positive correlation between the two variables as one should expect.

$$\text{cov}(xy) = \frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})$$

| | Load (Newtons) | Extension (mm) | x-xbar | y-ybar | Product |
|---|---|---|---|---|---|
| | 2 | 10.4 | -5 | -24.57 | 122.85 |
| | 4 | 19.6 | -3 | -15.37 | 46.11 |
| | 6 | 29.9 | -1 | -5.07 | 5.07 |
| | 8 | 42.2 | 1 | 7.23 | 7.23 |
| | 10 | 49.2 | 3 | 14.23 | 42.69 |
| | 12 | 58.5 | 5 | 23.53 | 117.65 |
| Mean | 7.000 | 34.967 | | | |
| stdev | 3.742 | 18.298 | | | |
| sum | | | | | 341.600 |
| cov(xy) | | | | | 68.320 |
| corr | | | | | 0.998 |
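The tabulated covariance and correlation can be recomputed from the spring data. This is a minimal sketch with assumed variable and function names; it uses the same n-1 divisor as the covariance formula above.

```python
from statistics import mean, stdev

load = [2, 4, 6, 8, 10, 12]                        # Newtons
extension = [10.4, 19.6, 29.9, 42.2, 49.2, 58.5]   # mm

def covariance(x, y):
    """Sample covariance with the n-1 divisor."""
    x_bar, y_bar = mean(x), mean(y)
    return sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (len(x) - 1)

cov_xy = covariance(load, extension)
corr = cov_xy / (stdev(load) * stdev(extension))   # Pearson correlation coefficient
print(round(cov_xy, 3), round(corr, 3))
```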

Chap 3-Data Analysis-Reddy


4.2.7 Tests on the Pearson Correlation Coefficient

Making inferences about the population correlation coefficient $\rho$ from knowledge of the sample correlation coefficient r.

Assumption: both the variables are normally distributed (bivariate normal population).

Fig. 4.8 provides a convenient way of ascertaining the 95% CL of the population correlation coefficient for different sample sizes. Say, r = 0.6 for a sample of n = 10 pairs of observations, then the 95% CL for the population correlation coefficient are (-0.05 < $\rho$ < 0.87), which are very wide. Notice how increasing the sample size shrinks these bounds. For n = 100, the intervals are (0.47 < $\rho$ < 0.71).

Table A7 lists the critical values of the sample correlation coefficient r for testing whether the population correlation coefficient is statistically significant (i.e., $\rho \neq 0$) at the 0.05 and 0.01 significance levels for one- and two-tailed tests. The interpretation of these values is of some importance in many cases, especially when dealing with small data sets.

Say, analysis of the 12 monthly bills of a residence revealed a linear correlation of r = 0.6 with degree-days at the location. Assume that a two-tailed test applies. The sample correlation barely suggests the presence of a correlation at a significance level $\alpha$ = 0.05 (the critical value from Table A7 is $r_c$ = 0.576) while none at $\alpha$ = 0.01 (for which $r_c$ = 0.708).
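Critical values of r such as those quoted above can be related to Student-t critical values. The sketch below relies on the standard relation $r_c = t_c/(t_c^2 + n - 2)^{1/2}$ for testing $\rho$ = 0, which is not stated in the text and is introduced here as an assumption; the t values 2.228 and 3.169 are the usual two-tailed critical values for d.f. = 10 and are likewise supplied by hand, as is the function name.

```python
from math import sqrt

def critical_r(t_crit, n):
    """Critical sample correlation for n pairs, given the Student-t critical
    value for d.f. = n - 2 (standard relation, assumed here)."""
    return t_crit / sqrt(t_crit**2 + (n - 2))

r_c_05 = critical_r(2.228, 12)   # two-tailed, significance level 0.05
r_c_01 = critical_r(3.169, 12)   # two-tailed, significance level 0.01
r = 0.6
print(round(r_c_05, 3), round(r_c_01, 3), r > r_c_05, r > r_c_01)
```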

Fig. 4.8 Plot depicting 95% confidence bands for population correlation in a bivariate normal population for various sample sizes n. The bold vertical line defines the lower and upper limits of $\rho$ when r = 0.6 from a data set of 10 pairs of observations (from Wonnacott and Wonnacott, 1985 by permission of John Wiley and Sons)

4.3 ANOVA test for multi-samples

The statistical methods known as ANOVA (analysis of variance) are a

broad set of widely used and powerful techniques meant to identify and measure

sources of variation within a data set. This is done by partitioning the total

variation in the data into its component parts. Specifically, ANOVA uses

variance information from several samples in order to make inferences about the

means of the populations from which these samples were drawn (and, hence, the

appellation).

Fig. 4.9 Conceptual explanation of the basis of an ANOVA test


ANOVA methods test the null hypothesis of the form:

$$H_0: \mu_1 = \mu_2 = \dots = \mu_k \qquad H_a: \text{at least two of the } \mu_i\text{'s are different} \qquad (4.18)$$

Adopting the following notation:

Sample sizes: $n_1, n_2, \dots, n_k$

Sample means: $\bar{x}_1, \bar{x}_2, \dots, \bar{x}_k$

Sample standard deviations: $s_1, s_2, \dots, s_k$

Total sample size: $n = n_1 + n_2 + \dots + n_k$

Grand average: $\bar{\bar{x}}$ = weighted average of all n responses

Then, one defines the between-sample variation, called the “treatment sum of squares” (SSTr), as:

$$SSTr = \sum_{i=1}^{k} n_i (\bar{x}_i - \bar{\bar{x}})^2 \quad \text{with d.f.} = k - 1 \qquad (4.19)$$

and the within-samples variation or “error sum of squares” (SSE) as:

$$SSE = \sum_{i=1}^{k} (n_i - 1) s_i^2 \quad \text{with d.f.} = n - k \qquad (4.20)$$

Together these two sources of variation form the “total sum of squares” (SST):

$$SST = SSTr + SSE = \sum_{i=1}^{k} \sum_{j=1}^{n_i} (x_{ij} - \bar{\bar{x}})^2 \quad \text{with d.f.} = n - 1 \qquad (4.21)$$

SST is simply (n-1) times the sample variance of the combined set of n data points, i.e., SST = $(n - 1)s^2$, where s is the standard deviation of all the n data points.

The statistic defined below as the ratio of two variances is said to follow the F-distribution:

$$F = \frac{MSTr}{MSE} \qquad (4.22)$$

where MSTr is the mean between-sample variation = SSTr/(k-1)

and MSE is the mean error sum of squares = SSE/(n-k)

Recall that the p-value is the area of the F curve for (k-1, n-k) degrees of freedom to the right of the F value. If p-value ≤ $\alpha$ (the selected significance level), then the null hypothesis can be rejected. Note that the test is meant to be used for normal populations and equal population variances.

Example 4.3.1. Comparing mean life of five motor bearings

A motor manufacturer wishes to evaluate five different motor bearings for motor vibration (which reduces motor life). Each type of bearing is installed on a different random sample of six motors. The amount of vibration (in microns) is recorded when each of the 30 motors is running.

Sample      Brand 1   Brand 2   Brand 3   Brand 4   Brand 5
1           13.1      16.3      13.7      15.7      13.5
2           15.0      15.7      13.9      13.7      13.4
3           14.0      17.2      12.4      14.4      13.2
4           14.4      14.9      13.8      16.0      12.7
5           14.0      14.4      14.9      13.9      13.4
6           11.6      17.2      13.3      14.7      12.3
Mean        13.68     15.95     13.67     14.73     13.08
Std. dev.   1.194     1.167     0.816     0.940     0.479

Determine whether the bearing brands have an effect on motor vibration at the α = 0.05 significance level.

In this example, k=5 and n=30. The one-way ANOVA table is first generated:

Source   d.f.      Sum of Squares   Mean Square   F-value
Factor   5-1=4     SSTr=30.855      MSTr=7.714    8.44
Error    30-5=25   SSE=22.838       MSE=0.9135
Total    30-1=29   SST=53.694

From the F tables (Table A6) and for α = 0.05, the critical F value for d.f. = (4,25) is Fc = 2.76, which is less than F = 8.44 computed from the data. Hence, one is compelled to reject the null hypothesis that all five means are equal, and conclude that the type of motor bearing does have a significant effect on motor vibration. In fact, this conclusion can be reached even at the more stringent significance level of α = 0.001.
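The sums of squares and the F value of Example 4.3.1 can be reproduced from the raw data with a few lines of Python. This is only a minimal sketch of eqs. 4.19 to 4.22; the function name `one_way_anova` and the dictionary layout are illustrative assumptions, not part of the original material.

```python
from statistics import mean, variance

# Vibration data (microns) from Example 4.3.1, one list per bearing brand
brands = {
    "Brand 1": [13.1, 15.0, 14.0, 14.4, 14.0, 11.6],
    "Brand 2": [16.3, 15.7, 17.2, 14.9, 14.4, 17.2],
    "Brand 3": [13.7, 13.9, 12.4, 13.8, 14.9, 13.3],
    "Brand 4": [15.7, 13.7, 14.4, 16.0, 13.9, 14.7],
    "Brand 5": [13.5, 13.4, 13.2, 12.7, 13.4, 12.3],
}


def one_way_anova(samples):
    """Return (SSTr, SSE, F) for a list of samples (hypothetical helper)."""
    k = len(samples)
    n = sum(len(s) for s in samples)
    grand_avg = sum(sum(s) for s in samples) / n
    # Between-sample variation, eq. 4.19
    sstr = sum(len(s) * (mean(s) - grand_avg) ** 2 for s in samples)
    # Within-sample variation, eq. 4.20 (variance() uses the n-1 divisor)
    sse = sum((len(s) - 1) * variance(s) for s in samples)
    # Ratio of mean squares, eq. 4.22
    f_value = (sstr / (k - 1)) / (sse / (n - k))
    return sstr, sse, f_value


sstr, sse, f_value = one_way_anova(list(brands.values()))
print(f"SSTr={sstr:.3f}  SSE={sse:.3f}  F={f_value:.2f}")
```

The computed F value is then compared with the critical value read from the F table, exactly as done in the example.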

The results of the ANOVA analysis can be conveniently illustrated by generating an effects plot, or a means plot which includes 95% CL intervals.

Fig. 4.10 (a) Effect plot. (b) Means plot showing the 95% CL intervals

A limitation of the ANOVA method is that the null hypothesis is rejected even if only one motor bearing is different from the others, without indicating which one. In order to pinpoint the cause for this rejection, different methods have been developed.

One could adopt a paired comparison approach. With 5 sets, 10 paired tests are needed:

- Tedious

- More importantly, sensitivity decreases, i.e., the overall Type I error increases

The Tukey method is widely used (applies only when sample sizes are equal).

The Student t-test is used, and the approach allows a clear visual representation.

Tukey’s procedure is based on comparing the distance (or absolute value) between any two sample means $|\bar{x}_i - \bar{x}_j|$ to a threshold value T that depends on the significance level α as well as on the mean square error (MSE) from the ANOVA test. The T value is calculated as:

$$T = q_\alpha \left(\frac{MSE}{n_i}\right)^{1/2} \tag{4.3.8}$$

where $n_i$ is the size of the sample drawn from each population, and the $q_\alpha$ values are called the studentized range distribution values (Table A8 for α = 0.05 and d.f. = (k, n-k)).

If $|\bar{x}_i - \bar{x}_j| > T$, then one concludes that $\mu_i \ne \mu_j$ at the corresponding significance level. Otherwise, one concludes that there is no difference between the two means.

Example 4.3.2. Using the same data as that in Example 4.3.1, conduct a multiple comparison procedure to distinguish which of the motor bearing brands are superior to the rest.

Following Tukey’s procedure given by eq. 4.3.8, the critical distance between sample means at α = 0.05 is:

$$T = q_\alpha \left(\frac{MSE}{n_i}\right)^{1/2} = 4.15 \left(\frac{0.913}{6}\right)^{1/2} = 1.62$$

where $q_\alpha$ is found by interpolation from Table A8 based on d.f. = (k, n-k) = (5, 25).

The pairwise distances between the five sample means:

Samples   Distance                  Conclusion*
1,2       |13.68 - 15.95| = 2.27    $\mu_1 \ne \mu_2$
1,3       |13.68 - 13.67| = 0.01
1,4       |13.68 - 14.73| = 1.05
1,5       |13.68 - 13.08| = 0.60
2,3       |15.95 - 13.67| = 2.28    $\mu_2 \ne \mu_3$
2,4       |15.95 - 14.73| = 1.22
2,5       |15.95 - 13.08| = 2.87    $\mu_2 \ne \mu_5$
3,4       |13.67 - 14.73| = 1.06
3,5       |13.67 - 13.08| = 0.59
4,5       |14.73 - 13.08| = 1.65    $\mu_4 \ne \mu_5$

* Only if distance > critical value of 1.62

Fig. 4.11 Graphical depiction summarizing the ten pairwise comparisons following Tukey’s procedure. Brand 2 is significantly different from Brands 1, 3 and 5, and so is Brand 4 from Brand 5 (Example 4.3.2) (bars drawn to correspond to a specified confidence level based on t-tests)
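The ten pairwise comparisons can be generated mechanically. The sketch below assumes the rounded sample means, the MSE and the value q = 4.15 quoted in the example (q is read from a table, not computed here); the variable names are illustrative only.

```python
from itertools import combinations

# Sample means from Example 4.3.1; MSE, sample size and q as quoted in
# Example 4.3.2 (q = 4.15 is taken from the table, an input to this sketch).
means = {1: 13.68, 2: 15.95, 3: 13.67, 4: 14.73, 5: 13.08}
mse, n_i, q = 0.913, 6, 4.15

T = q * (mse / n_i) ** 0.5  # threshold distance of eq. 4.3.8

different = []  # pairs whose means are judged to differ
for i, j in combinations(sorted(means), 2):
    distance = abs(means[i] - means[j])
    if distance > T:
        different.append((i, j))
    flag = "different" if distance > T else ""
    print(f"{i},{j}: distance = {distance:.2f} {flag}")

print(f"T = {T:.2f}; differing pairs: {different}")
```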

4.4 Tests of Significance of Multivariate Data (not covered)

- Multivariate analysis (also called multifactor analysis) deals with statistical inference and model building as applied to multiple measurements made from one or several samples taken from one or several populations.

- These methods can be used to make inferences about sample means and variances. Rather than treating each measure separately as done in t-tests and single-factor ANOVA, they allow the analysis of multiple measures simultaneously as a system of measurements (which results in sounder inferences).

Underlying assumptions of distributions are important: distortion due to correlated variables.

Fig. 4.12 Two bivariate normal distributions and associated 50% and 90% contours assuming equal standard deviations for both variables. However, the left hand side plots presume the two variables to be uncorrelated, while those on the right have a correlation coefficient of 0.75 which results in elliptical contours.

4.5 Non-Parametric Tests

Parametric tests have implicit built-in assumptions regarding the distributions from which the samples are taken. Comparison of populations using the t-test and F-test can yield misleading results when the random variables being measured are not normally distributed or do not have equal variances.

Clearly, the fewer the assumptions, the broader the potential applications of the test. One would like the significance tests used to lead to sound conclusions, or the risk of coming to wrong conclusions to be minimized. Two concepts relate to the latter aspect:

- robustness of a test is inversely proportional to the sensitivity of the test to violations of the underlying assumptions.

- power of a test, i.e., the probability of correctly rejecting a false null hypothesis, is a measure of the extent to which the cost of experimentation is reduced.

There are instances when the random variables are not quantifiable measurements but can only be ranked in order of magnitude (as in surveys). Rather than use actual numbers, nonparametric tests usually use relative ranks by sorting the data by rank (or magnitude), and discarding their specific numerical values.

Nonparametric tests are generally less powerful than parametric ones, but on the other hand, are more robust and less sensitive to outlier points.

4.5.1 Spearman Rank Coefficient Method

Example 4.5.1. Non-parametric testing of correlation between the sizes of faculty research grants and teaching evaluations

The provost of a major university wants to determine whether a statistically significant correlation exists between the research grants and the teaching evaluation ratings of its senior faculty. Data over three years has been collected and assembled in Table 4.8, which also shows the manner in which ranks have been generated and the quantities $d_i = u_i - v_i$ computed.

Table 4.8

Faculty   Research      Teaching     Research     Teaching     Diff    Diff sq.
          grants ($)    evaluation   rank (u_i)   rank (v_i)   d_i     d_i^2
1         1,480,000     7.05         5            7            -2      4
2         890,000       7.87         1            8            -7      49
3         3,360,000     3.90         10           2            8       64
4         2,210,000     5.41         8            5            3       9
5         1,820,000     9.02         7            9            -2      4
6         1,370,000     6.07         4            6            -2      4
7         3,180,000     3.20         9            1            8       64
8         930,000       5.25         2            4            -2      4
9         1,270,000     9.50         3            10           -7      49
10        1,610,000     4.45         6            3            3       9
TOTAL                                                                  260

Spearman Rank Correlation Coefficient:

$$r_s = 1 - \frac{6 \sum d_i^2}{n(n^2 - 1)} \tag{4.34}$$

where n is the number of paired measurements, and $d_i = u_i - v_i$ is the difference between the ranks for the ith measurement of the ranked variables u and v.

Using eq. 4.34 with n=10:

$$r_s = 1 - \frac{6(260)}{10(100 - 1)} = -0.576$$

Thus, one notes that there exists a negative correlation in the sample data. However, whether this is significant for the population correlation coefficient $\rho_s$ can be ascertained by means of a statistical test:

$H_0: \rho_s = 0$ (there is no significant correlation)

$H_a: \rho_s \ne 0$ (there is significant correlation)

Table A10 in Appendix A gives the absolute cutoff values for different significance levels. For n=10, the critical value for α = 0.05 is 0.564, which suggests that the correlation can be deemed to be significant at the 0.05 significance level, but not at the 0.025 level, for which the critical value is 0.648.
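The rank generation and eq. 4.34 can be checked numerically. The sketch below is a plain-Python illustration that assumes no tied values (true for the Table 4.8 data); the helper names `ranks` and `spearman_rs` are hypothetical.

```python
def ranks(values):
    """Rank values from 1 (smallest) upward; assumes there are no ties."""
    ordered = sorted(values)
    return [ordered.index(v) + 1 for v in values]


def spearman_rs(x, y):
    """Spearman rank correlation coefficient from the rank differences."""
    n = len(x)
    sum_d2 = sum((u - v) ** 2 for u, v in zip(ranks(x), ranks(y)))
    return 1 - 6 * sum_d2 / (n * (n ** 2 - 1))


# Data of Table 4.8: research grants ($) and teaching evaluations
grants = [1480000, 890000, 3360000, 2210000, 1820000,
          1370000, 3180000, 930000, 1270000, 1610000]
evaluations = [7.05, 7.87, 3.90, 5.41, 9.02, 6.07, 3.20, 5.25, 9.50, 4.45]

rs = spearman_rs(grants, evaluations)
print(f"r_s = {rs:.3f}")
```

The absolute value of the result is then compared with the tabulated cutoff for the chosen significance level.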

Critical Values of Spearman’s Rank Correlation Coefficient

4.5.2 Wilcoxon Rank Tests

Rather than compare specific parameters (such as the mean and the variance), the non-parametric tests evaluate whether the probability distributions of the sampled populations are different or not. The test is nonparametric and no restriction is placed on the distribution other than it needs to be continuous and symmetric.

(a) The Wilcoxon rank sum test is meant for independent samples where the individual observations can be ranked by magnitude. The following example illustrates the approach.

Example 4.5.2. Ascertaining whether oil company researchers and academics differ in their predictions of future atmospheric carbon dioxide levels

The intent is to compare the predictions of the change in atmospheric carbon dioxide levels between researchers who are employed by oil companies and those who are in academia. Table 4.9 shows the gathered data, expressed as the predicted percentage increase in carbon dioxide from the current level over the next 10 years, from six oil company researchers and seven academics. Perform a statistical test at the 0.05 significance level in order to evaluate the following hypotheses:

(a) Predictions made by oil company researchers differ from those made by academics.

(b) Predictions made by oil company researchers tend to be lower than those made by academics.

(not treated in the slides)

Table 4.9 Wilcoxon rank test calculation for paired independent samples

       Oil Company Researchers     Academics
       Prediction (%)   Rank       Prediction (%)   Rank
1      3.5              4          4.7              6
2      5.2              7          5.8              9
3      2.5              2          3.6              5
4      5.6              8          6.2              11
5      2.0              1          6.1              10
6      3.0              3          6.3              12
7                                  6.5              13
SUM                     25                          66

Ranks are assigned as shown for the two groups of individuals combined. Since there are 13 predictions, the ranks run from 1 through 13 as shown in the table. The test statistic is based on the rank sum totals of each group (hence the name of the test). If they are close, the implication is that there is no evidence that the probability distributions of both groups are different; and vice versa.

Let $T_A$ and $T_B$ be the rank sums of the two groups. Then

$$T_A + T_B = \frac{n(n+1)}{2} = \frac{13(13+1)}{2} = 91 \tag{4.36}$$

where $n = n_1 + n_2$ with $n_1 = 6$ and $n_2 = 7$. Note that $n_1$ should be selected as the group with fewer observations. A small value of $T_A$ implies a large value of $T_B$, and vice versa. Hence, the greater the difference between the two rank sums, the greater the evidence that the samples come from different populations. Since one is testing whether the predictions by both groups are different or not, the two-tailed significance test is appropriate. Table A11 provides the lower and upper cutoff values for different values of $n_1$ and $n_2$ for both the one-tailed and the two-tailed tests. Note that the lower and upper cutoff values are (28, 56) at the 0.05 significance level for the two-tailed test. Since the computed statistics $T_A = 25$ and $T_B = 66$ are outside this range, the null hypothesis is rejected, and one would conclude that the predictions from the two groups are different.
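The ranking and rank-sum bookkeeping of Example 4.5.2 is easy to automate. The following sketch assumes no tied predictions (true for Table 4.9) and takes the cutoff values (28, 56) as quoted in the text rather than computing them; variable names are illustrative.

```python
# Predicted % increase in CO2 (Table 4.9)
oil = [3.5, 5.2, 2.5, 5.6, 2.0, 3.0]
academics = [4.7, 5.8, 3.6, 6.2, 6.1, 6.3, 6.5]

# Rank all 13 predictions together, 1 = smallest (no ties assumed)
combined = sorted(oil + academics)
rank_of = {value: position + 1 for position, value in enumerate(combined)}

t_a = sum(rank_of[v] for v in oil)        # rank sum of the smaller group
t_b = sum(rank_of[v] for v in academics)  # rank sum of the larger group
n = len(combined)

# Two-tailed cutoffs at the 0.05 level, as quoted from the table in the text
lower, upper = 28, 56
reject_null = not (lower <= t_a <= upper)

print(f"T_A = {t_a}, T_B = {t_b}, check sum = {n * (n + 1) // 2}")
print("Reject H0" if reject_null else "Cannot reject H0")
```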

4.6 Bayesian Inferences

The strength of Bayes’ theorem lies in the fact that it provides a framework for including prior information in a two-stage experiment whereby one could draw stronger conclusions.

It is especially advantageous for small data sets. It can be shown that its predictions converge with those of the classical method:

(i) as the data set of observations gets larger; and

(ii) if the prior distribution is modeled as a uniform distribution.

It was pointed out that advocates of the Bayesian approach view probability as a degree of belief held by a person about an uncertain issue, as compared to the objective view of long run relative frequency held by traditionalists.

We will discuss how the Bayesian approach can also be used to make statistical inferences from samples about an uncertain quantity, and how it can be used for hypothesis testing problems.

4.6.2 Inference about one uncertain quantity

Consider the case when the population mean μ is to be estimated (point and interval estimates) from the sample mean $\bar{x}$ with the population assumed to be Gaussian with a known standard deviation σ. The probability P of a two-tailed distribution at significance level α can be expressed as:

$$P\left(\bar{x} - z_{\alpha/2}\,\frac{\sigma}{n^{1/2}} < \mu < \bar{x} + z_{\alpha/2}\,\frac{\sigma}{n^{1/2}}\right) = 1 - \alpha \tag{4.37}$$

where n is the sample size and z is the value from the standard normal tables.

The traditional interpretation is that one can be (1-α) confident that the above interval contains the true population mean. However, the interval itself should not be interpreted as a probability interval for the parameter.

The Bayesian approach uses the same formula but the mean and standard deviation are modified since the posterior distribution is now used, which includes the sample data as well as the prior belief. The resulting interval is usually narrower than the traditional one and is referred to as the credible interval or the Bayesian confidence interval. The interpretation of this credible interval is somewhat different from the traditional confidence interval: there is a (1-α) probability that the population mean falls within the interval.

Thus, the traditional approach leads to a probability statement about the interval, while the Bayesian approach leads to one about the population parameter.

The relevant procedure to calculate the credible intervals for the case of a Gaussian population and a Gaussian prior is presented without proof below. Let the prior distribution, assumed normal, be characterized by a mean $\mu_0$ and variance $\sigma_0^2$, while the sample values are $\bar{x}$ and $s_x$. Selecting a prior distribution is equivalent to having a quasi-sample whose size $n_0$ is given by:

$$n_0 = \frac{s_x^2}{\sigma_0^2} \tag{4.38}$$

The posterior mean and standard deviation $\mu^*$ and $\sigma^*$ are then given by:

$$\mu^* = \frac{n_0 \mu_0 + n \bar{x}}{n_0 + n} \quad \text{and} \quad \sigma^* = \frac{s_x}{(n_0 + n)^{1/2}} \tag{4.39}$$

Note that the expression for the posterior mean is simply the weighted average of the sample and the prior mean, and is likely to be less biased than the sample mean alone. Similarly, the standard deviation is divided by the square root of the total (quasi-sample plus actual sample) size, which results in increased precision. However, had a different prior rather than the normal distribution been assumed above, a slightly different interval would have resulted, which is another reason why traditional statisticians are uneasy about fully endorsing the Bayesian approach.

Example 4.6.1. Comparison of classical and Bayesian confidence intervals

A certain solar PV module is rated at 60 W with a standard deviation of 2 W. Since the rating varies somewhat from one shipment to the next, a sample of 12 modules has been selected from a shipment and tested to yield a mean of 65 W and a standard deviation of 2.8 W. Assuming a Gaussian distribution, determine the 95% confidence intervals by both the traditional and the Bayesian approaches.

(a) Traditional approach:

$$\bar{x} \pm 1.96\,\frac{s_x}{n^{1/2}} = 65 \pm 1.96\,\frac{2.8}{12^{1/2}} = 65 \pm 1.58$$

(b) Bayesian approach. Using eq. 4.38 to calculate the quasi-sample size inherent in the prior:

$$n_0 = \frac{2.8^2}{2^2} = 1.96 \approx 2$$

i.e., the prior is equivalent to information from an additional 2 modules tested.

Next, eq. 4.39 is used to determine the posterior mean and standard deviation:

$$\mu^* = \frac{2(60) + 12(65)}{2 + 12} = 64.29 \quad \text{and} \quad \sigma^* = \frac{2.8}{(2 + 12)^{1/2}} = 0.748$$

The Bayesian 95% confidence interval is then:

$$\mu^* \pm 1.96\,\sigma^* = 64.29 \pm 1.96(0.748) = 64.29 \pm 1.47$$

Since prior information has been used, the Bayesian interval is likely to be centered better and be more precise (with a narrower interval) than the classical interval.
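Eqs. 4.38 and 4.39 translate directly into a small helper. The sketch below reproduces the numbers of Example 4.6.1; the function name `gaussian_posterior` and the `round_n0` switch (which mimics the rounding of the quasi-sample size to a whole number of modules) are assumptions made for illustration.

```python
def gaussian_posterior(prior_mean, prior_sd, sample_mean, sample_sd, n,
                       round_n0=True):
    """Posterior mean and standard deviation for a Gaussian prior.

    Hypothetical helper following eqs. 4.38 and 4.39.
    """
    n0 = sample_sd ** 2 / prior_sd ** 2            # quasi-sample size
    if round_n0:
        n0 = round(n0)                             # as done in the example
    post_mean = (n0 * prior_mean + n * sample_mean) / (n0 + n)
    post_sd = sample_sd / (n0 + n) ** 0.5
    return n0, post_mean, post_sd


# PV module data: prior 60 W +/- 2 W, sample of 12 with mean 65 W, s = 2.8 W
n0, post_mean, post_sd = gaussian_posterior(60, 2, 65, 2.8, 12)

classical_half_width = 1.96 * 2.8 / 12 ** 0.5
bayes_half_width = 1.96 * post_sd

print(f"classical: 65 +/- {classical_half_width:.2f}")
print(f"Bayesian:  {post_mean:.2f} +/- {bayes_half_width:.2f} (n0 = {n0})")
```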

4.6.3 Hypothesis Testing

The traditional or frequentist approach to hypothesis testing is to divide the sample space into an acceptance region and a rejection region, and posit that the null hypothesis can be rejected only if the test statistic lies in the rejection region, i.e., only if the observed result cannot reasonably be ascribed to chance or randomness at the preselected significance level α.

Advocates of the Bayesian approach have several objections to this line of thinking (Phillips, 1973):

• the null hypothesis is rarely of much interest. The precise specification of, say, the population mean is of limited value; rather, ascertaining a range would be more useful;

• the null hypothesis is only one of many possible values of the uncertain variable, and undue importance being placed on this value is unjustified;

• as additional data is collected, the inherent randomness in the collection process would lead to the null hypothesis being rejected in most cases;

• erroneous inferences from a sample may result if prior knowledge is not considered.

4.6.3 Hypothesis Testing (contd.)

The Bayesian approach to hypothesis testing is not to base the conclusions on a traditional significance level like p<0.05. Instead it makes use of the posterior credible interval introduced in the previous section. The procedure is summarized below for the instance when one wishes to test the population mean μ of the sample collected against a prior mean value $\mu_0$ (Bolstad, 2004).

(a) One sided hypothesis test: Let the posterior distribution of the mean value be given by $g(\mu \mid x_1, \dots, x_n)$. The hypothesis test is set up as:

$$H_0: \mu \le \mu_0 \quad \text{versus} \quad H_1: \mu > \mu_0 \tag{4.40}$$

Let α be the significance level assumed (usually 0.10, 0.05 or 0.01). Then, the posterior probability of the null hypothesis, for the special case when the posterior distribution is Gaussian, is:

$$P(H_0: \mu \le \mu_0 \mid x_1, \dots, x_n) = P\left(z \le \frac{\mu_0 - \mu^*}{\sigma^*}\right) \tag{4.41}$$

where z is the standard normal variable with $\mu^*$ and $\sigma^*$ given by eq. 4.39. If the probability is less than the selected value of α, the null hypothesis is rejected, and one concludes that $\mu > \mu_0$.

Example 4.6.2. Traditional and Bayesian approaches to determining CLs

The life of a certain type of smoke detector battery (assumed normal) is specified as:

- mean of 32 months and a standard deviation of 0.5 months.

A building owner decides to test this claim at a significance level of 0.05. He tests a sample of 9 batteries and finds:

- mean of 31 months and a sample standard deviation of 1 month.

Note that this is a one-sided hypothesis test case.

(a) The traditional approach would entail testing $H_0: \mu = 32$ versus $H_1: \mu < 32$. The Student t value is:

$$t = \frac{31 - 32}{1/\sqrt{9}} = -3.0$$

From Table A4, the critical value for d.f. = 8 is $t_{0.05} = 1.86$. Since the magnitude of t exceeds this value, he can reject the null hypothesis, and state that the claim of the manufacturer is incorrect.

(b) The Bayesian approach, on the other hand, would require calculating the posterior probability of the null hypothesis. The prior distribution has a mean $\mu_0 = 32$ and variance $\sigma_0^2 = 0.5^2$.

First, use eq. 4.38, and determine

$$n_0 = \frac{1^2}{0.5^2} = 4$$

i.e., the prior information is “equivalent” to increasing the sample size by 4. Next, use eq. 4.39 to determine the posterior mean and standard deviation:

$$\mu^* = \frac{4(32) + 9(31)}{4 + 9} = 31.3 \quad \text{and} \quad \sigma^* = \frac{1.0}{(4 + 9)^{1/2}} = 0.277$$

From here:

$$t = \frac{32.0 - 31.3}{0.277} = 2.53$$

From the Student t table (Table A4) for d.f. = (9+4-1) = 12, this value lies between the one-tailed critical values for 0.025 and 0.01, i.e., it corresponds to a confidence level of somewhat less than 99%. Since the associated probability is lower than the selected significance level α = 0.05, he can reject the null hypothesis.

In this case, both approaches gave the same result, but sometimes one would reach different conclusions, especially when sample sizes are small.
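As a cross-check of part (b), the posterior probability that the manufacturer's claim holds can be evaluated directly from a Gaussian posterior. Note the assumption: this sketch uses the standard normal distribution of eq. 4.41 instead of the Student t table used in the example, so the probability it returns is somewhat smaller; variable names are illustrative.

```python
from statistics import NormalDist

# Smoke detector battery data of Example 4.6.2
prior_mean, prior_sd = 32.0, 0.5             # manufacturer's claim (months)
sample_mean, sample_sd, n = 31.0, 1.0, 9     # building owner's test

n0 = sample_sd ** 2 / prior_sd ** 2                        # eq. 4.38
post_mean = (n0 * prior_mean + n * sample_mean) / (n0 + n)  # eq. 4.39
post_sd = sample_sd / (n0 + n) ** 0.5

# Posterior probability that the true mean is at least the claimed 32 months,
# assuming a Gaussian posterior (an assumption of this sketch)
prob_claim = 1 - NormalDist(post_mean, post_sd).cdf(prior_mean)
reject_claim = prob_claim < 0.05

print(f"posterior mean = {post_mean:.2f}, posterior sd = {post_sd:.3f}")
print(f"P(mean >= 32 | data) = {prob_claim:.4f}, reject claim: {reject_claim}")
```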

4.7 Sampling Methods

There are different ways by which one could draw samples; this aspect falls under the purview of sampling design.

There are three general rules of sampling design:

• the more representative the sample, the better the results;

• all else being equal, larger samples yield better results, i.e., the results are more precise;

• larger samples cannot compensate for a poor sampling design plan or a poorly executed plan.

Some of the common sampling methods are described below:

(a) random sampling (also called simple random sampling) is the simplest conceptually, and is most widely used. It involves selecting the sample of n elements in such a way that all possible samples of n elements have the same chance of being selected. Two important strategies of random sampling involve:

• sampling with replacement, in which the object selected is put back into the population pool and has the possibility to be selected again in subsequent picks, and

• sampling without replacement, where the object picked is not put back into the population pool prior to picking the next item.

(b) non-random sampling: Often occurs unintentionally or unwittingly. Bias or skewness is introduced, which leads to misleading confidence limits. In some cases, the experimenter intentionally selects the samples in a non-random manner and analyzes the data accordingly.

Types of nonrandom sampling:

• stratified sampling involves partitioning the population into disjoint subsets or strata based on some criterion. This improves the efficiency of the sampling process in some instances;

• cluster sampling, in which strata/clusters are first generated, then random sampling is done to identify a subset of clusters, and finally all the elements in the picked clusters are analyzed;

• sequential sampling is a quality control procedure where a decision on the acceptability of a batch of products is made from tests done on a sample of the batch. Tests are done on a preliminary sample, and depending on the results, either the batch is accepted or further sampling tests are performed. This procedure usually requires fewer samples to be tested to meet a pre-stipulated accuracy;

• composite sampling, where elements from different samples are combined together;

• multistage or nested sampling, which involves selecting a sample in stages. A larger sample is first selected, and then subsequently smaller ones (IAQ testing in buildings);

• convenience sampling, also called opportunity sampling, is a method of choosing samples arbitrarily following the manner in which they are acquired. Though impossible to treat rigorously, it is commonly encountered in many practical situations.

Stratified Sampling

Example 4.7.2 Stratified sampling for variance reduction (better estimate of

mean)

A home improvement center wishes to estimate the mean annual expenditure

of its local residents in the hardware section and the drapery section.

- Men visit the store more frequently and spend annually

approximately $50; expenditures of as much as $100 or as little as

$25 per year are found occasionally.

- Annual expenditures by women can vary from nothing to over $500

(variance much greater)

- Assume that 80% of the customers are men and that sample size is 15

If simple random sampling were employed, one would expect the sample to

consist of approximately 12 men (80% of 15) and 3 women.

Stratified sampling: 5 men and 10 women selected instead (more women

have been preferentially selected because their expenditures are more

variable).


Stratified Sampling Example contd.

Suppose the annual expenditures of the members of the sample turned out to be:

- Men: 45, 50, 55, 40, 90

- Women: 80, 50, 120, 80, 200, 180, 90, 500, 320, 75

The appropriate weights must be applied to the original sample data if one wishes to deduce the overall mean. Thus, if $M_i$ and $W_i$ are used to designate the ith sample of men and women, respectively,

$$\bar{X} = \frac{1}{15}\left[\frac{0.80}{0.33}\sum_{i=1}^{5} M_i + \frac{0.20}{0.67}\sum_{i=1}^{10} W_i\right] = \frac{1}{15}\left[\frac{0.80}{0.33}(280) + \frac{0.20}{0.67}(1695)\right] \approx \$79$$

where 0.80 and 0.20 are the original weights in the population, and 0.33 and 0.67 the sample weights respectively.
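The reweighting of a stratified sample can be sketched as follows. The code uses the exact sample shares 5/15 and 10/15 rather than the rounded 0.33 and 0.67, so its result differs slightly from a hand calculation before rounding to the nearest dollar; variable names are illustrative.

```python
men = [45, 50, 55, 40, 90]
women = [80, 50, 120, 80, 200, 180, 90, 500, 320, 75]

pop_share = {"men": 0.80, "women": 0.20}   # shares in the customer population
n_total = len(men) + len(women)
sample_share = {"men": len(men) / n_total, "women": len(women) / n_total}

# Each stratum total is scaled by (population share / sample share)
weighted_total = (pop_share["men"] / sample_share["men"]) * sum(men) \
    + (pop_share["women"] / sample_share["women"]) * sum(women)
overall_mean = weighted_total / n_total

# Equivalent form: population-weighted average of the stratum means
check = pop_share["men"] * sum(men) / len(men) \
    + pop_share["women"] * sum(women) / len(women)

print(f"overall mean = ${overall_mean:.1f}")
```

The second expression shows why the weights work: the estimate is simply the stratum means combined in proportion to their true population shares.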

4.7.2 Desirable Properties of Estimators

Fig. 4.14 Concept of biased and unbiased estimators (probability distributions of an unbiased and a biased estimator shown relative to the actual value)

Fig. 4.15 Concept of efficiency of estimators (related to distribution, allows stronger inferential statements to be made); probability distributions of an efficient and an inefficient estimator shown relative to the actual value

Fig. 4.16 Concept of mean square error which includes bias and efficiency of estimators (probability distributions of an unbiased estimator and a minimum mean square error estimator shown relative to the actual value)

Fig. 4.17 A consistent estimator is one whose distribution becomes gradually peaked as the sample size n is increased (normal distribution densities shown for n = 5, 10, 50 and 200 around the true value)

4.7.3 Determining Sample Size during Random Surveys

Assume the underlying probability distribution to be normal. Let RE be the relative error (also called the margin of error or bound on error of estimation) of the population mean μ at a confidence level (1-α), which for a two-tailed distribution is defined as:

$$RE_{1-\alpha} = z_{\alpha/2}\,\frac{\sigma_{\bar{x}}}{\mu} \tag{4.45}$$

where $\sigma_{\bar{x}}$ is the standard error of the mean. A measure of variability in the population is the CV, defined as:

$$CV = \frac{\text{std. dev.}}{\text{true mean}} = \frac{s_x}{\bar{x}}$$

where $s_x$ is the sample standard deviation. The maximum value of $s_x$ at a stipulated CL is:

$$s_{x,1-\alpha} = z_{\alpha/2} \cdot CV_{1-\alpha} \cdot \bar{x} \tag{4.46}$$

First, a simplifying assumption is made by replacing (N-1) by N in eq. 4.3, which is the expression for the standard error of the mean for small samples. Then

$$\sigma_{\bar{x}}^2 = \frac{s_x^2}{n}\left(\frac{N - n}{N}\right) = \frac{s_x^2}{n} - \frac{s_x^2}{N} \tag{4.47}$$

Finally, using the definitions of RE and CV stated above, the required sample size is:

$$n = \frac{1}{\dfrac{\sigma_{\bar{x}}^2}{s_x^2} + \dfrac{1}{N}} = \frac{1}{\left(\dfrac{RE_{1-\alpha}}{z_{\alpha/2} \cdot CV_{1-\alpha}}\right)^2 + \dfrac{1}{N}} \tag{4.48}$$

Example 4.7.1. Determination of random sample size needed to verify peak reduction in residences at preset confidence levels

An electric utility has provided financial incentives to a large number of its customers to replace their existing air-conditioners with high efficiency ones. This rebate program was initiated in an effort to reduce the aggregated electric peak during hot summer afternoons, which is dangerously close to the peak generation capacity of the utility. The utility analyst would like to determine the sample size necessary to assess whether the program has reduced the peak as projected such that the relative error RE ≤ 10% at 90% CL.

The following information is given:

The total number of customers: N = 20,000

Estimate of the mean peak saving: 2 kW (from engineering calculations)

Estimate of the standard deviation: $s_x$ = 1 kW (from engineering calculations)

This is a two-tailed distribution problem with 90% CL, which corresponds to a one-tailed significance level of α/2 = (100-90)/2/100 = 0.05. Then, from Table A4, $z_{0.05} = 1.65$. Inserting values of RE = 0.1 and CV = $s_x/\bar{x}$ = 1/2 = 0.5 in eq. 4.48, the required sample size is:

$$n = \frac{1}{\left[\dfrac{0.1}{(1.65)(0.5)}\right]^2 + \dfrac{1}{20{,}000}} = 67.8 \approx 68$$
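The sample size expression of eq. 4.48 is convenient to wrap in a function so that different relative errors and CV values can be tried. This is a minimal sketch; the function and argument names are hypothetical, and the z value is passed in as read from the normal table.

```python
def required_sample_size(rel_error, cv, z, population):
    """Sample size for a stipulated relative error (sketch of eq. 4.48).

    rel_error  : relative error RE as a fraction (0.10 for 10%)
    cv         : coefficient of variation (std. dev. / mean)
    z          : standard normal value for the chosen confidence level
    population : population size N
    """
    return 1.0 / ((rel_error / (z * cv)) ** 2 + 1.0 / population)


# Inputs of Example 4.7.1: RE = 10%, CV = 1 kW / 2 kW, z = 1.65, N = 20,000
n_required = required_sample_size(rel_error=0.10, cv=0.5, z=1.65,
                                  population=20000)
print(f"required sample size = {n_required:.1f}")

# Halving the relative error roughly quadruples the required sample size
n_tighter = required_sample_size(rel_error=0.05, cv=0.5, z=1.65,
                                 population=20000)
print(f"for RE = 5%: {n_tighter:.1f}")
```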

Fig. 4.18 Size of random sample needed to achieve different relative errors of the population mean for two different values of population variability (CV of 25% and 50%), for a population size of 20,000 and a 90% confidence level. Axes: relative error (%) versus sample size n. (Example 4.7.1)

4.8 Resampling Methods

The rationale behind resampling methods is to draw one sample, treat this original sample as a surrogate for the population, and generate numerous sub-samples by simply resampling the sample itself.

Thus, resampling refers to the use of given data, or a data generating mechanism, to produce new samples from which the required estimator can be deduced numerically. It is obvious that the sample must be unbiased and be reflective of the population (which it will be if the sample is drawn randomly), otherwise the precision of the method is severely compromised.

Efron and Tibshirani (1982) have argued that given the available power of computing, one should move away from the constraints of traditional parametric theory with its over-reliance on a small set of standard models for which theoretical solutions are available, and substitute computational power for theoretical analysis. This parallels the manner in which numerical methods have in large part replaced closed form solution techniques in almost all fields of engineering mathematics.

• Resampling is much more intuitive and provides a way of simulating the physical process without having to deal with the statistical constraints of the analytic methods.

• A big virtue of resampling methods is that they extend classical statistical evaluation to cases which cannot be dealt with mathematically.

• The downside to the use of these methods is that they require large computing resources (of the order of 1000 and more samples), which is not an issue now.

The creation of multiple sub-samples from the original sample can be done in several ways. The three most common resampling methods are:

• The permutation method (or randomization method) is one where all possible subsets of r items (which is the sub-sample size) out of the total n items (the sample size) are generated, and used to deduce the population estimator and its confidence levels or its percentiles.

• The jackknife method creates subsamples by leaving out observations, so that no observation appears more than once in a subsample. There are several numerical schemes for implementing the jackknife. A widespread method of implementation is to simply create n subsamples with (n-1) data points, wherein a single different observation is omitted in each subsample.

• The bootstrap method is similar but differs in that no groups are formed; the different sets of data sequences are generated by simply sampling with replacement from the observational data set. Individual estimators deduced from such samples permit estimates and confidence intervals to be determined. The method would appear to be circular, i.e., how can one acquire more insight by resampling the same sample? The simple explanation is that “the population is to the sample as the sample is to the bootstrap sample”.
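The leave-one-out form of the jackknife described above can be sketched in a few lines. The data values below are hypothetical and serve only as an illustration; the function names are likewise assumptions. For the sample mean, the jackknife standard error coincides with the familiar s/n^(1/2).

```python
from statistics import mean


def jackknife_means(data):
    """Means of the n leave-one-out subsamples, each with n-1 points."""
    return [mean(data[:i] + data[i + 1:]) for i in range(len(data))]


def jackknife_std_error(data):
    """Jackknife estimate of the standard error of the sample mean."""
    n = len(data)
    estimates = jackknife_means(data)
    center = mean(estimates)
    return ((n - 1) / n * sum((e - center) ** 2 for e in estimates)) ** 0.5


# Hypothetical data, for illustration only
sample = [2.0, 4.0, 4.0, 5.0, 7.0]
print(jackknife_means(sample))
print(f"jackknife standard error = {jackknife_std_error(sample):.4f}")
```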

Example 4.8.1 Using the bootstrap method for deducing 95% CL of mean

The data corresponds to the breakdown voltage (in kV) of an insulating liquid which is indicative of its dielectric strength. Determine the 95% CL.

62  59  54  46  57  53  50  64  55  55  48  52
53  50  57  53  63  50  57  53  50  54  57  55
41  64  55  52  57  60  53  62  50  47  55  50
55  50  56  47  53  56  61  68  55  55  59  58

First, use the large sample confidence interval formula to estimate the 95% CL intervals of the mean. Summary quantities are: sample size n = 48, $\sum x_i = 2626$ and $\sum x_i^2 = 144{,}950$, from which $\bar{x} = 54.7$ and standard deviation s = 5.23. The 95% CL interval is then:

$$54.7 \pm 1.96\,\frac{5.23}{\sqrt{48}} = 54.7 \pm 1.5 = (53.2, 56.2)$$

Bootstrap: The 95% confidence intervals, which correspond to the two-tailed 0.05 significance level, are found from a bootstrap with 1000 samples to be (53.2, 56.1), which is very close to the classical parametric range.
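A percentile bootstrap of the mean for the breakdown voltage data can be sketched with the Python standard library alone. The function name, the seed and the simple percentile rule are assumptions of this sketch; the exact interval limits change slightly with the random seed and with the number of bootstrap samples.

```python
import random
from statistics import mean

# Breakdown voltages (kV) of Example 4.8.1
voltages = [62, 59, 54, 46, 57, 53, 50, 64, 55, 55, 48, 52,
            53, 50, 57, 53, 63, 50, 57, 53, 50, 54, 57, 55,
            41, 64, 55, 52, 57, 60, 53, 62, 50, 47, 55, 50,
            55, 50, 56, 47, 53, 56, 61, 68, 55, 55, 59, 58]


def bootstrap_ci_mean(data, n_boot=1000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval of the mean (sketch)."""
    rng = random.Random(seed)            # fixed seed for repeatability
    n = len(data)
    # Each bootstrap sample draws n values with replacement from the data
    boot_means = sorted(mean(rng.choices(data, k=n)) for _ in range(n_boot))
    tail = (1 - level) / 2
    lower = boot_means[int(tail * n_boot)]
    upper = boot_means[int((1 - tail) * n_boot) - 1]
    return lower, upper


lower, upper = bootstrap_ci_mean(voltages)
print(f"sample mean = {mean(voltages):.1f}")
print(f"95% bootstrap interval = ({lower:.1f}, {upper:.1f})")
```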

Example 4.8.2. Using the bootstrap method with a nonparametric test to ascertain correlation of two variables

One wishes to determine whether there exists a correlation between athletic ability and intelligence level. A sample of 10 high school athletes was obtained involving their athletic and I.Q. scores. The data is listed in descending order of athletic scores in the first two columns.

Athletic score   I.Q. score   Athletic rank   I.Q. rank
97               114          1               3
94               120          2               1
93               107          3               7
90               113          4               4
87               118          5               2
86               101          6               8
86               109          7               6
85               110          8               5
81               100          9               9
76               99           10              10

- The athletic scores and the I.Q. scores are rank ordered from 1 to 10 as shown in the last two columns of the table.

- The table is split into two groups of five “high” and five “low”. An even split of the group is advocated since it uses the available information better and usually leads to better “efficiency”.

- The sum of the observed I.Q. ranks of the five top athletes is (3+1+7+4+2) = 17.

The resampling scheme will involve numerous trials where a subset of 5 numbers is drawn randomly from the set {1..10}. One then adds these five numbers for each individual trial. If the sum obtained across trials is consistently higher than 17, this will indicate that the best athletes are unlikely to have earned the observed I.Q. ranks purely by chance. The probability can be directly estimated from the proportion of trials whose sum exceeded 17.

Fig. 4.20 Histogram (frequency versus sum of 5 ranks) based on 100 trials of the sum of 5 random ranks from the sample of 10. Note that in only 2% of the trials was the sum equal to 17 or lower. Hence, with 98% CL, there is a correlation between athletic ability and IQ.
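The resampling scheme of Example 4.8.2 can be simulated directly, and because the set {1..10} is small, the exact proportion can also be enumerated for comparison. The sketch below assumes 10,000 trials and a fixed seed purely for illustration (the example itself uses 100 trials).

```python
import random
from itertools import combinations

observed_sum = 17  # sum of the I.Q. ranks of the five top athletes

# Exact enumeration: all subsets of 5 ranks out of {1..10}
all_subsets = list(combinations(range(1, 11), 5))
exact_p = sum(1 for s in all_subsets if sum(s) <= observed_sum) / len(all_subsets)

# Resampling: draw 5 ranks without replacement in each trial
rng = random.Random(1)
trials = 10000
hits = sum(1 for _ in range(trials)
           if sum(rng.sample(range(1, 11), 5)) <= observed_sum)
simulated_p = hits / trials

print(f"exact proportion with sum <= 17:     {exact_p:.4f}")
print(f"simulated proportion with sum <= 17: {simulated_p:.4f}")
```

Only 4 of the 252 possible subsets have a sum of 17 or lower (about 1.6%), which is in line with the 2% found from the 100 trials behind Fig. 4.20.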