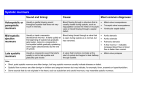

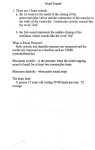

CHAPTER 4

CLASSIFICATION OF HEART MURMURS USING WAVELETS AND NEURAL NETWORKS

4.1 INTRODUCTION

Heart auscultation is the process of interpreting sounds produced by the turbulent flow of blood into and out of the heart and by the movement of the mechanical structures that control this flow. This non-invasive, low-cost screening technique is used as a primary tool in the diagnosis of certain heart disorders, especially valvular problems. The conventional method of auscultation with a stethoscope has many limitations. The skills required for interpretation are acquired by listening to the heart sounds of many different patients. It is therefore a very subjective process that depends largely on the physician’s experience and ability to differentiate between sound patterns. For an objective assessment of heart sounds in the diagnosis of certain cardiac disorders, digital recording and subsequent analysis of these acoustic signals is the only reliable method. Digital sounds can be analysed efficiently using a computer.

4.2 LITERATURE SURVEY

Faizan et al (2006) developed a heart murmur classification system with features extracted using the spectrogram. Seven types of murmurs are classified using a Multilayer Perceptron (MLP) Neural Network based on their timing within the cardiac cycle, using the Smoothed Pseudo Wigner-Ville distribution. Zhou et al (2008) proposed a heart sound recognition system based on features extracted from the normalized average Shannon energy in the wavelet domain. These features are used with Full Bayesian Neural Networks (FBNN) to classify six types of heart sounds. Yadi et al (2008) report a classification method based on statistical features extracted using wavelet decomposition and an MLP Neural Network to classify five murmurs.

Many new techniques have been introduced for efficient heart disease diagnosis.
However, they are expensive and require skilled technicians to operate the equipment and experienced cardiologists to interpret the results (Faizan et al 2006). These facilities are usually available only in advanced hospitals, not in rural hospitals or in most urban hospitals. Furthermore, the wavelet transform has demonstrated the ability to analyse heart murmurs more accurately than techniques such as the Short-Time Fourier Transform (STFT) or the Wigner-Ville Distribution (WVD) cited in the literature. The WVD can separate signals in both time and frequency. One advantage of the WVD over the STFT is that it does not suffer from the time-frequency trade-off problem. On the other hand, the WVD has the disadvantage that it shows cross terms in its response, and attempts to smooth these cross terms result in decreased resolution in both time and frequency (Debbal and Bereksi-Reguig 2008).

Considering these constraints, a new approach based on the wavelet transform and artificial neural networks has been proposed for classifying heart murmurs into eight types, namely, normal, early systolic, mid systolic, late systolic, holo systolic, early diastolic, mid diastolic and late diastolic. Classifying heart murmurs into a larger number of types leads to enhanced accuracy of diagnosis.

4.3 HEART MURMURS

4.3.1 Categories

In healthy adults, there are two normal heart sounds, often described as a lub and a dub, that occur in sequence with each heart beat. These are the first heart sound (S1) and the second heart sound (S2), produced by the turbulent flow against the closed atrioventricular and semilunar valves respectively. The period between S1 and S2 is referred to as systole and the period between S2 and the subsequent S1 as diastole. Murmurs are abnormal sounds: those that occur between S1 and S2 are called systolic murmurs and those that occur between S2 and S1 are called diastolic murmurs. The former occur during ventricular contraction (systole) and the latter occur during ventricular filling (diastole).
These heart sounds and murmurs are shown in Figure 4.1.

[Figure 4.1 Heart sounds and murmurs: (a) Normal heart sounds S1 and S2, (b) Systolic murmurs between S1 and S2, (c) Diastolic murmurs between S2 and S1]

The systolic murmurs are further sub classified into early, mid, late and holo systolic murmurs depending on their position in the systolic period. Similarly, the diastolic murmurs are further sub classified into early, mid and late diastolic murmurs. These pathological types of heart murmurs and their associated abnormalities are shown in Table 4.1.

Table 4.1 Heart murmurs and associated abnormalities

| Category | Sub category |
|---|---|
| Systolic | Early systolic, Mid systolic, Late systolic, Holo systolic |
| Diastolic | Early diastolic, Mid diastolic, Late diastolic |

Associated abnormalities (in table order): Mitral regurgitation, Aortic stenosis, Atrial septal defect, Mitral valve prolapse, Tricuspid valve prolapse, Papillary muscle dysfunction, Tricuspid insufficiency, Ventricular septal defect, Aortic regurgitation, Pulmonary regurgitation, Left anterior descending artery stenosis, Mitral stenosis, Tricuspid stenosis, Atrial myxoma, Complete heart block.

4.3.2 Characteristics

Murmurs can be classified based on seven different characteristics: timing, shape, location, radiation, intensity, pitch and quality. Timing refers to whether the murmur is a systolic or diastolic murmur. Shape refers to the intensity over time; murmurs can be crescendo, decrescendo or crescendo-decrescendo. Location refers to where the heart murmur is auscultated best. There are six places on the anterior chest to listen for heart murmurs; each of these locations roughly corresponds to a specific part of the heart. Radiation refers to where the sound of the murmur radiates. The general rule of thumb is that the sound radiates in the direction of the blood flow. Intensity refers to the loudness of the murmur, and is graded on a scale from 0-6/6.
The pitch of the murmur is low, medium or high and is determined by whether it can be auscultated best with the bell or the diaphragm of a stethoscope. The quality of a murmur may be blowing, harsh, rumbling or musical. In the proposed work, classification is done with respect to the timing characteristic.

4.4 HEART MURMUR CLASSIFICATION

4.4.1 Data Collection and Pre-Processing

Heart murmur files, both normal and pathological, were collected from the Johns Hopkins Cardiac Auscultatory Recording Database (CARD), an excellent resource for research purposes, and from other internet sites, and were used in this classification work (CARD 2006; Raymond 2006). The pathological types include early systolic, mid systolic, late systolic, holo systolic, early diastolic, mid diastolic and late diastolic murmurs. The murmur files obtained in mp3 format were converted to wav format for further processing. The sampling frequency chosen was 8000 Hz in all cases to cover the range for analysing murmurs (Zhou et al 2008). Signals contaminated with noise might lead to erroneous classification. To remove the noise that may overlap with the heart sounds, SureShrink, a wavelet shrinkage denoising technique based on the Discrete Wavelet Transform (DWT), was used (Donoho and Johnstone 1995; Cai and Harrington 1998).

4.4.2 Feature Extraction

The main objective of the feature extraction process is to derive a set of features that best represents the signal. The selection of features is therefore an important criterion for proper classification of different heart murmurs. In this work, a set of 15 statistical features was extracted from the murmur files. The steps involved in the feature extraction process are illustrated in Figure 4.2.
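The shrinkage step behind the denoising in Section 4.4.1 can be sketched as follows. This is a simplified illustration, not the procedure used in this work: it assumes the wavelet coefficients of one subband are already available and scaled to unit noise variance, it picks the threshold by minimising Stein's Unbiased Risk Estimate, and it omits the hybrid fallback of the full SureShrink method. The function names `soft_threshold` and `sure_threshold` are hypothetical.

```python
import math


def soft_threshold(coeffs, t):
    """Shrink every coefficient toward zero by t; |c| <= t becomes zero."""
    return [math.copysign(max(abs(c) - t, 0.0), c) for c in coeffs]


def sure_threshold(coeffs):
    """Return the threshold minimising Stein's Unbiased Risk Estimate.

    Assumption: coefficients are scaled so the noise has unit variance.
    SURE(t) = n - 2 * #{|c| <= t} + sum(min(|c|, t)^2),
    evaluated for every candidate t taken from the magnitudes |c_i|.
    """
    n = len(coeffs)
    mags = sorted(abs(c) for c in coeffs)
    best_t, best_risk = 0.0, float("inf")
    for t in mags:
        below = sum(1 for m in mags if m <= t)
        risk = n - 2 * below + sum(min(m, t) ** 2 for m in mags)
        if risk < best_risk:
            best_t, best_risk = t, risk
    return best_t


# Hypothetical detail coefficients: three small (noise-like), one large.
detail = [0.1, -0.2, 0.05, 5.0]
t = sure_threshold(detail)
denoised = soft_threshold(detail, t)
print(t, denoised)
```

In a complete denoiser this step would be applied to each detail subband of the DWT before the signal is reconstructed.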
[Figure 4.2 Feature extraction process: digitized heart sound sampled at 8000 Hz, 16 bits/sample; extraction of a single cycle of the heart murmur; normalization to absolute maximum; wavelet decomposition (db6, level 5) into 3rd level detail (500-1000 Hz), 4th level detail (250-500 Hz), 5th level detail (125-250 Hz) and 5th level approximation (0-125 Hz); then, for each sub band, the mean of the absolute values of the coefficients, the standard deviation of the wavelet coefficients and the average power of the wavelet coefficients, followed by the ratio of the absolute mean values of adjacent sub bands]

The denoised signal was segmented into cardiac cycles. A single cycle containing the first heart sound S1, the systolic period, the second heart sound S2 and the diastolic period was extracted for analysis. To nullify the effect of input gain variations, the original signal was normalized to its absolute maximum.

Heart murmurs are non-stationary signals exhibiting marked changes with time and frequency (Debbal and Bereksi-Reguig 2008). Hence the Wavelet Transform, a proven tool for time-frequency analysis, was used. The most important aspect of feature extraction is the selection of a suitable wavelet and of the number of levels of decomposition. Usually, the signal is visually inspected first; if it is discontinuous, the Haar or other sharp wavelet functions are used, and otherwise a smoother wavelet can be employed. Another method is to perform tests with different types of wavelets and select the one that gives maximum efficiency for the particular application.

The number of levels of decomposition is chosen based on the dominant frequency components of the signal. The levels are chosen such that those parts of the signal that correlate well with the frequencies required for classification are retained in the wavelet coefficients. Since the frequency range of heart murmurs is 0 to 1000 Hz (Zhou et al 2008), the number of levels was chosen to be 5.
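The link between sampling rate, number of levels and subband frequency ranges can be illustrated with a short sketch. It assumes an ideal dyadic split, in which each level halves the remaining band; real wavelet filters such as db6 overlap at the band edges. The function name `dwt_subband_ranges` is hypothetical.

```python
def dwt_subband_ranges(fs_hz, levels):
    """Nominal frequency range (Hz) of each DWT subband for an ideal dyadic split."""
    bands = {}
    upper = fs_hz / 2.0  # Nyquist frequency
    for level in range(1, levels + 1):
        lower = upper / 2.0
        bands[f"D{level}"] = (lower, upper)  # detail at this level
        upper = lower
    bands[f"A{levels}"] = (0.0, upper)  # final approximation
    return bands


# Sampling rate and depth used in this chapter: 8000 Hz, 5 levels.
for name, (low, high) in dwt_subband_ranges(8000, 5).items():
    print(f"{name}: {low:g}-{high:g} Hz")
```

With these settings the 0 to 1000 Hz murmur range falls entirely within D3, D4, D5 and A5, which is why a depth of 5 is sufficient here.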
The normalized signal was decomposed to five levels using the Daubechies db6 wavelet, giving six subbands (D1 to D5 and A5). The DWT decomposition of the input signal x[n] is shown schematically in Figure 4.3.

[Figure 4.3 Level-5 wavelet decomposition to obtain wavelet coefficients: x[n] passes through a cascade of filters h[n] and g[n], each followed by downsampling by 2, yielding the details D1 to D5 and the level-5 approximation A5]

The decomposition of the signal into different frequency bands is obtained by successive highpass and lowpass filtering of the signal (Kandaswamy et al 2003; Sadik and Mustafa 2007). The decomposed subbands with their corresponding frequency ranges are shown in Table 4.2. The resulting wavelet coefficients provide a compact representation of the energy distribution of the signal in each subband. As the frequency spectrum of heart murmurs ranges from 0 to 1000 Hz, and the observed wavelet coefficients in D1 and D2 were also very close to zero, the higher subbands D1 and D2 were discarded from further processing.

Table 4.2 Frequency range of sub bands

| Decomposed sub band | Frequency range (Hz) |
|---|---|
| D1 | 2000-4000 |
| D2 | 1000-2000 |
| D3 | 500-1000 |
| D4 | 250-500 |
| D5 | 125-250 |
| A5 | 0-125 |

The time domain representation of the subbands for a normal heart sound file is shown in Figure 4.4. From the wavelet coefficients in each retained subband (D3, D4, D5 and A5), a set of 15 statistical parameters was derived to represent the time-frequency characteristics of the heart murmurs. These are shown in Table 4.3.
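A minimal sketch of how the 15 statistical parameters could be computed from the four retained subbands is given below. It assumes the wavelet coefficients have already been obtained (for example, with a wavelet library) and are passed in as plain lists. The direction of each ratio (higher band over the next lower band), the use of the sample standard deviation, and the name `extract_features` are assumptions for illustration; the text does not specify them.

```python
import statistics

BANDS = ("D3", "D4", "D5", "A5")  # subbands retained for feature extraction


def extract_features(coeffs_by_band):
    """Return 15 features: 4 mean-abs, 4 std, 4 average power, 3 adjacent ratios."""
    mean_abs = [statistics.fmean(abs(c) for c in coeffs_by_band[b]) for b in BANDS]
    # Sample standard deviation (N - 1 denominator); an assumption.
    std_dev = [statistics.stdev(coeffs_by_band[b]) for b in BANDS]
    # Average power taken as the mean of the squared coefficients.
    avg_power = [statistics.fmean(c * c for c in coeffs_by_band[b]) for b in BANDS]
    # Ratios D3/D4, D4/D5, D5/A5; fails if a band's mean-abs is zero.
    ratios = [mean_abs[i] / mean_abs[i + 1] for i in range(len(BANDS) - 1)]
    return mean_abs + std_dev + avg_power + ratios


# Hypothetical coefficients, only to show the shape of the output.
demo = {
    "D3": [1.0, -1.0, 2.0, -2.0],
    "D4": [0.5, -0.5, 0.5, -0.5],
    "D5": [2.0, 2.0, -2.0, -2.0],
    "A5": [4.0, 0.0, -4.0, 0.0],
}
features = extract_features(demo)
print(len(features))  # 15
```

Each murmur file would contribute one such 15-element vector, paired with its murmur type, to the dataset.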
[Figure 4.4 Decomposition of normal heart sound with db6 wavelet]

Table 4.3 Features derived for classification of heart murmurs

| Sl No | Description of feature | No. of features |
|---|---|---|
| 1 | Mean of the absolute values of the coefficients in each sub band | 4 |
| 2 | Standard deviation of the wavelet coefficients in each sub band | 4 |
| 3 | Average power of the wavelet coefficients in each sub band | 4 |
| 4 | Ratio of the absolute mean values of adjacent sub bands | 3 |
| | Total | 15 |

To automate the whole feature extraction process, Matlab was linked with Microsoft Excel, and the features were exported directly to the worksheet. These 15 parameters, along with the corresponding output (type of murmur), form the input feature vector for classification (Criley et al 2000; January and Zahra 2007). The dataset used in this work has 441 feature vectors or instances.

4.5 CLASSIFICATION USING ARTIFICIAL NEURAL NETWORKS

4.5.1 Neural Network Classifier

Artificial Neural Networks (ANN) are computational models patterned after the structure of the human brain. They have the ability to ‘learn’ mathematical relationships between a series of input (independent) variables and the corresponding output (dependent) variables. This is achieved by training the network with a training dataset consisting of input variables and the known or associated outcomes. Networks are programmed to adjust their internal weights based on the mathematical relationships identified between the inputs and outputs in a dataset. The knowledge gained through training is stored in the form of connection weights, which are used to make decisions on test inputs. Once a network has been trained, it can be used for classification tasks, with a separate test dataset for validation (Rajendra et al 2003; Jack 1996).
[Figure 4.5 Feedforward neural network to classify heart murmurs: inputs X1 to X15 feed input layer nodes I1 to I15, followed by hidden layer nodes H1 to Hn and output layer nodes O1 to O8 producing outputs Y1 to Y8]

Among the several existing neural network architectures, the feedforward neural network trained using supervised learning with the backpropagation algorithm has been chosen for this work. This model, which is considered the most useful learning model, is widely used in the biomedical field (William 1995; Maurice and Donna 2006). The feedforward neural network used in this classification work is shown in Figure 4.5. The input layer has 15 nodes representing the 15 input features, and the output layer has 8 nodes representing normal and the seven different classes of murmurs.

4.5.2 Encoding of Data for ANN

The 1-of-C coding scheme has been used for classifying the heart murmurs into one of the eight output types. Each type of heart murmur is associated with a corresponding output class. The feature vector set $x$ represents the ANN inputs, and the corresponding class, once coded, constitutes the ANN outputs. To make the neural network training more efficient, the input feature vectors were normalized so that all values fall in the range between 0 and 1 (Jack 1996; Richard and Michael 2005). The encoding of the output is shown in Table 4.4.

Table 4.4 Encoding of output for classification of heart murmurs

| Sl No. | Output vector | Classification |
|---|---|---|
| 1 | 1 0 0 0 0 0 0 0 | Normal |
| 2 | 0 1 0 0 0 0 0 0 | Early systolic |
| 3 | 0 0 1 0 0 0 0 0 | Mid systolic |
| 4 | 0 0 0 1 0 0 0 0 | Late systolic |
| 5 | 0 0 0 0 1 0 0 0 | Holo systolic |
| 6 | 0 0 0 0 0 1 0 0 | Early diastolic |
| 7 | 0 0 0 0 0 0 1 0 | Mid diastolic |
| 8 | 0 0 0 0 0 0 0 1 | Late diastolic |

The value corresponding to the correct output class is entered as 1 and the other values are entered as 0. The modified input vector is $x_k$, with $k = 1, 2, \ldots, K$, where $K$ is the number of heart murmur signals. The output vector associated with $x_k$ is denoted by $y_k$.
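The encoding described in this section can be sketched in a few lines. The class order follows Table 4.4. The text states only that inputs were scaled to the range 0 to 1; column-wise min-max scaling is assumed here as one common way to do that, and the helper names `one_of_c` and `min_max_normalize` are hypothetical.

```python
CLASSES = [
    "Normal", "Early systolic", "Mid systolic", "Late systolic",
    "Holo systolic", "Early diastolic", "Mid diastolic", "Late diastolic",
]


def one_of_c(label):
    """1-of-C target vector: 1 at the position of the correct class, 0 elsewhere."""
    return [1 if c == label else 0 for c in CLASSES]


def min_max_normalize(rows):
    """Scale each feature column to [0, 1]; constant columns map to 0.0."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]


print(one_of_c("Mid systolic"))  # [0, 0, 1, 0, 0, 0, 0, 0]
# Two hypothetical features for three instances.
print(min_max_normalize([[1.0, 10.0], [3.0, 20.0], [2.0, 10.0]]))
```

In practice the minimum and maximum of each column would be taken from the training data and reused unchanged for the test data.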
4.5.3 Training with Backpropagation Learning

As the inputs and desired outputs are known, the training is done in a supervised fashion. The basic backpropagation (BP) training algorithm updates the network weights and biases in the direction in which the performance function decreases most rapidly, that is, the negative of the gradient. A single iteration of this algorithm can be written as

$$w_{k+1} = w_k - \alpha_k g_k \qquad (4.1)$$

where $w_k$ is a vector of current weights and biases, $g_k$ is the current gradient, and $\alpha_k$ is the learning rate.

Convergence is sometimes faster if a momentum term is added to the weight update formula. With momentum, the network proceeds not in the direction of the gradient alone, but in the direction of a combination of the current gradient and the previous direction of weight correction. In backpropagation learning with momentum, the weights for training step t+1 are based on the weights at training steps t and t-1.

The newff function in Matlab’s neural network toolbox was used to generate the feedforward backpropagation neural network architecture. newff has ‘learngdm’ as its default learning function and uses the ‘initnw’ initialization function to initialize each layer’s weights and biases according to the Nguyen-Widrow initialization algorithm. Learning occurs according to learngdm’s learning parameters, whose default values are 0.01 for the learning rate and 0.9 for the momentum constant.

4.5.4 Activation Function

There are different types of activation functions. The most commonly used are: (i) hyperbolic tangent sigmoid, (ii) log sigmoid and (iii) linear. The first criterion of an activation function is that the function must output values in the interval [0,1]. A second criterion is that the function should output a value close to 1 when sufficiently excited. The log sigmoid function meets both criteria.
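For reference, the three activation functions listed above can be written out as follows. This is a sketch using their standard mathematical definitions; the names mirror the Matlab toolbox functions logsig, tansig and purelin, but the code itself is an illustration and not toolbox code.

```python
import math


def logsig(n):
    """Log sigmoid: output lies strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-n))


def tansig(n):
    """Hyperbolic tangent sigmoid: output lies between -1 and 1."""
    return math.tanh(n)


def purelin(n):
    """Linear: output equals the net input and is unbounded."""
    return n


# Compare the three functions on a few net-input values.
for n in (-5.0, 0.0, 5.0):
    print(f"n={n:+.1f}  logsig={logsig(n):.4f}  "
          f"tansig={tansig(n):+.4f}  purelin={purelin(n):+.1f}")
```

Only the log sigmoid stays within [0, 1]; the hyperbolic tangent sigmoid is bounded between -1 and 1 and the linear function is unbounded.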
The performance of these three activation functions was evaluated experimentally in the neural network models constructed.

4.5.5 Stopping Criteria

Network training continues until a specific terminating condition is satisfied. The terminating condition chosen for network convergence is the minimum mean squared error (MSE), defined as

$$\mathrm{MSE} = \frac{1}{p} \sum_{m=1}^{p} (d_m - y_m)^2 \qquad (4.2)$$

where $p$ is the number of training instances, $d_m$ is the desired output and $y_m$ is the output obtained from the neural network for instance $m$.

The target for the mean squared error set in this work is 0.001. Training stopped when the value of the MSE dropped below 0.001.

4.6 EXPERIMENTAL RESULTS AND DISCUSSIONS

The training algorithm, activation function and number of neurons in the hidden layer that provide the best results were determined empirically. For this purpose, several feedforward backpropagation neural networks were constructed using various combinations of training algorithms, activation functions and numbers of neurons in the hidden layer. The training algorithms used are Levenberg-Marquardt (LM), Gradient Descent with Adaptive learning rate (GDA), Resilient Backpropagation (RP) and Scaled Conjugate Gradient (SCG). The activation functions used are logsigmoid, tansigmoid and purelin. Each network was trained with 331 instances (75% of the dataset, with uniform distribution of all classes) and tested with 110 instances (25% of the dataset, with uniform distribution of all classes). The performance of these neural network models was measured through accuracy, sensitivity and specificity. The results obtained are presented in Table 4.5.
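As a rough illustration of the three performance measures, the sketch below computes them from the four confusion counts using their standard definitions, expressed as percentages. The counts in the example are hypothetical, and how positive and negative cases were assigned across the eight classes in this work is not specified here, so the function takes the counts as given. The name `classification_metrics` is hypothetical.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, sensitivity and specificity (in percent) from confusion counts."""
    total = tp + tn + fp + fn
    return {
        "accuracy": 100.0 * (tp + tn) / total,
        "sensitivity": 100.0 * tp / (tp + fn),  # share of positives detected
        "specificity": 100.0 * tn / (tn + fp),  # share of negatives rejected
    }


# Hypothetical counts, not taken from the experiments in this chapter.
print(classification_metrics(tp=40, tn=50, fp=0, fn=10))
```

Sensitivity and specificity are reported alongside accuracy because a model can score well on accuracy while missing many positive cases.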
Accuracy, sensitivity and specificity were calculated using the following formulae (Sadik and Mustafa 2007):

$$\text{Accuracy} = \frac{TP + TN}{\text{Total}} \qquad (4.3)$$

$$\text{Sensitivity} = \frac{TP}{TP + FN} \qquad (4.4)$$

$$\text{Specificity} = \frac{TN}{TN + FP} \qquad (4.5)$$

where
TP = True positive, positive cases classified as positive
TN = True negative, negative cases classified as negative
FN = False negative, positive cases classified as negative
FP = False positive, negative cases classified as positive

Table 4.5 Performance of different neural network models

| Model number | Training algorithm | Activation function | Number of epochs | Number of neurons in hidden layer | Accuracy % | Sensitivity % | Specificity % |
|---|---|---|---|---|---|---|---|
| 1 | LM | Logsig | 8 | 21 | 91.4892 | 91.7584 | 91.4494 |
| 2 | LM | Logsig | 10 | 26 | 93.6419 | 83.4166 | 95.1568 |
| 3 | LM | Logsig | 6 | 31 | 97.9473 | 91.7584 | 98.8642 |
| 4 | LM | Logsig | 10 | 35 | 95.9768 | 96.5837 | 91.9587 |
| 5 | LM | Purelin | 6 | 21 | 85.0311 | 75.0750 | 86.5062 |
| 6 | LM | Purelin | 5 | 26 | 85.0311 | 75.0750 | 86.5062 |
| 7 | LM | Purelin | 4 | 31 | 85.0311 | 75.0750 | 86.5062 |
| 8 | LM | Purelin | 5 | 35 | 86.1174 | 87.7788 | 75.0898 |
| 9 | LM | Tansig | 19 | 21 | 97.9473 | 91.7584 | 98.8642 |
| 10 | LM | Tansig | 15 | 26 | 99.0236 | 100.000 | 98.8642 |
| 11 | LM | Tansig | 18 | 31 | 99.0236 | 91.7584 | 100.000 |
| 12 | LM | Tansig | 16 | 35 | 99.0943 | 100.000 | 91.8272 |
| 13 | GDA | Logsig | 1734 | 21 | 94.7183 | 75.0750 | 97.6284 |
| 14 | GDA | Logsig | 1400 | 26 | 96.8710 | 83.4166 | 98.8642 |
| 15 | GDA | Logsig | 1472 | 31 | 94.7183 | 91.7584 | 95.1568 |
| 16 | GDA | Logsig | 1371 | 35 | 92.7117 | 95.3390 | 75.1152 |
| 17 | GDA | Purelin | 2000 | 21 | 88.2602 | 75.0750 | 90.2136 |
| 18 | GDA | Purelin | 2000 | 26 | 86.1075 | 75.0750 | 87.7420 |
| 19 | GDA | Purelin | 2000 | 31 | 85.0311 | 75.0750 | 86.5062 |
| 20 | GDA | Purelin | 2000 | 35 | 81.8838 | 81.5821 | 83.3919 |
| 21 | GDA | Tansig | 2000 | 21 | 95.7946 | 75.0750 | 98.8642 |
| 22 | GDA | Tansig | 2000 | 26 | 96.8710 | 91.7584 | 97.6284 |
| 23 | GDA | Tansig | 2000 | 31 | 95.7946 | 91.7584 | 96.3926 |
| 24 | GDA | Tansig | 2000 | 35 | 94.7916 | 95.3834 | 91.8117 |
| 25 | RP | Logsig | 147 | 21 | 95.7946 | 83.4166 | 97.6284 |
| 26 | RP | Logsig | 73 | 26 | 95.7946 | 91.7584 | 96.3926 |
| 27 | RP | Logsig | 140 | 31 | 94.7183 | 91.7584 | 95.1568 |
| 28 | RP | Logsig | 110 | 35 | 95.9117 | 98.9725 | 75.2631 |
| 29 | RP | Purelin | 2000 | 21 | 85.0311 | 75.0750 | 86.5062 |
| 30 | RP | Purelin | 2000 | 26 | 85.0311 | 75.0750 | 86.5062 |
| 31 | RP | Purelin | 2000 | 31 | 85.0311 | 75.0750 | 86.5062 |
| 32 | RP | Purelin | 2000 | 35 | 86.1025 | 87.8596 | 75.2463 |
| 33 | RP | Tansig | 2000 | 21 | 99.0236 | 91.7584 | 100.000 |
| 34 | RP | Tansig | 2000 | 26 | 99.0236 | 91.7584 | 100.000 |
| 35 | RP | Tansig | 2000 | 31 | 99.0236 | 91.7584 | 100.000 |
| 36 | RP | Tansig | 2000 | 35 | 95.9419 | 96.5213 | 91.8808 |
| 37 | SCG | Logsig | 141 | 21 | 97.9473 | 91.7584 | 98.8642 |
| 38 | SCG | Logsig | 113 | 26 | 97.9473 | 91.7584 | 98.8642 |
| 39 | SCG | Logsig | 143 | 31 | 88.2602 | 91.7584 | 87.7420 |
| 40 | SCG | Logsig | 116 | 35 | 96.9502 | 97.6854 | 91.8179 |
| 41 | SCG | Purelin | 713 | 21 | 85.0311 | 75.0750 | 86.5062 |
| 42 | SCG | Purelin | 756 | 26 | 85.0311 | 75.0750 | 86.5062 |
| 43 | SCG | Purelin | 827 | 31 | 85.0311 | 75.0750 | 86.5062 |
| 44 | SCG | Purelin | 538 | 35 | 86.1234 | 87.7144 | 75.0984 |
| 45 | SCG | Tansig | 931 | 21 | 97.9473 | 83.4166 | 100.000 |
| 46 | SCG | Tansig | 773 | 26 | 96.8710 | 83.4166 | 98.8642 |
| 47 | SCG | Tansig | 633 | 31 | 99.0236 | 91.7584 | 100.000 |
| 48 | SCG | Tansig | 659 | 35 | 96.9289 | 98.9593 | 83.3982 |

The network models that performed best with each of the four training algorithms were compared. The comparison results are presented in Table 4.6 and illustrated in Figure 4.6.

Table 4.6 Performance comparison of training algorithms

| Training algorithm | Activation function | No. of neurons in hidden layer | Number of epochs | Accuracy % | Sensitivity % | Specificity % |
|---|---|---|---|---|---|---|
| LM | Tansig | 26 | 15 | 99.02 | 100.00 | 98.86 |
| SCG | Tansig | 31 | 633 | 99.02 | 91.76 | 100.00 |
| GDA | Tansig | 26 | 2000 | 96.87 | 91.76 | 97.63 |
| RP | Tansig | 21 | 2000 | 99.02 | 91.76 | 100.00 |

[Figure 4.6 Performance comparison of training algorithms: accuracy, sensitivity and specificity (percentage) for the LM, SCG, GDA and RP training algorithms]

It was observed that the neural network model with the LM training algorithm and 26 neurons in the hidden layer showed better performance than the models with other training algorithms. It was also noted that the tansigmoid activation function outperforms the other two activation functions. In addition, since the speed of convergence of the network during training is indicated by the number of epochs, the networks trained with the LM algorithm were observed to converge faster than those trained with SCG, GDA and RP.
Hence, the neural network model (Sl. No. 10 in Table 4.5) with 26 neurons in the hidden layer, trained with the Levenberg-Marquardt (LM) algorithm and using the tansigmoid activation function for both the hidden and output layers, was found to yield the best results for the dataset used.

The performance of the proposed neural network classifier is compared with other classifiers cited in the literature in Table 4.7.

Table 4.7 Performance comparison of different classifiers

| Reference | Classifier | No. of types of murmurs classified | Average classification accuracy (%) |
|---|---|---|---|
| Faizan et al (2006) | MLP-ANN | 7 | 86.40 |
| Zhou et al (2008) | FBNN | 6 | 95.83 |
| Yadi et al (2008) | MLP-ANN | 5 | 92.00 |
| Proposed | MLP-ANN | 8 | 99.02 |

4.7 SUMMARY

In this chapter, the development of a heart murmur classifier was presented. The Discrete Wavelet Transform was used to extract a set of 15 features from the heart murmur signals. Feedforward neural network models with backpropagation learning were developed with different combinations of training algorithms and activation functions to classify the heart murmurs into one of eight types, namely normal, early systolic, mid systolic, late systolic, holo systolic, early diastolic, mid diastolic and late diastolic. The neural network model with the Levenberg-Marquardt training algorithm and the tansigmoid activation function was found to yield the best performance. The results demonstrate the capability of the developed system as a support tool for physicians in the diagnosis of heart murmurs.