
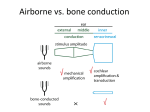

Psychoacoustics: The Biology and Psychology of Hearing
by Charles Feilding

Introduction

Whether you seek a career as a creator of music or a producer of musical content, or simply want to enrich your life with the pleasure of playing, you will inevitably want consistent and predictable results. Live performers practice so that they can play a piece of music with predictable results. Composers with home studios invest a lot of money to create an acoustic environment that will yield consistent results. Builders of concert halls invest hundreds of millions to create halls that allow live players to approach a concert confident of getting the sound they have developed.

Paradoxically, the one thing that the ear will almost never provide is consistent results. Nature designed the ear to be a highly flexible device, capable of protecting itself against sudden extremes of volume and constantly adapting to best interpret the streams of audio with which it is bombarded. Some understanding of what the ear is doing is therefore necessary in order to guarantee consistent results in the music world. This study is called psychoacoustics.

Psychoacoustics is not the study of sound. It is the study of how we perceive sound. It is a complex analysis of both the biology of the ear (by now quite well understood) and the psychology of sound perception, which is an ongoing study.

1. The biology of hearing

Sound pressure waves are funneled through the acoustic meatus of the external ear (E) to the tympanic membrane (in green), and mechanically transduced through the middle ear (M) into a fluid-filled chamber called the vestibule. The inner ear (I) organs branch off from this chamber. The inner ear is the most complex portion of the peripheral auditory system. It hosts sensory hair cells that signal head accelerations and sound-induced vibrations to the brain.

The auditory system of mammals is housed in a labyrinthine foramen deeply embedded in the temporal bone, the hardest and most complex bone of the skull. The temporal bone hosts a large central chamber permeated by physiological fluids, called the vestibule. All of the inner ear organs branch off from this chamber. On one side is the snail-shaped cochlea, where the acoustic interface of the nervous system is found; on the other are the semicircular canals, which host the sensors of head acceleration and are not relevant to the auditory function.

Soon after the discovery of the hearing organ by Alfonso Corti in 1851, other anatomists, among them Reissner, Deiters, Lowenberg, Hensen and Hasse, contributed to the clarification of the cochlear structure. The first relevant contribution to the understanding of the processes underlying sound transduction by the ear is due to Hermann von Helmholtz. The eminent German scientist pointed out the function of the ossicle chain in the middle ear. In an exemplary description, von Helmholtz explained that the mechanical coupling provided by the middle ear optimizes the transfer of energy from the air that sets the tympanic membrane into motion to the fluid filling the cochlea.

[Animation not available in this transcript.]

Von Helmholtz also provided the first mechanical model of the cochlea. Ignoring the hydrodynamical effects of the fluid, he represented the basilar membrane as an elastic strip radially clamped across the cochlear duct, with different and graded tension coefficients in the radial direction. Assuming a negligible tension in the longitudinal direction, this basilar membrane model is equivalent to a set of harmonic oscillators tuned to different frequencies.
Imagining that each portion of the basilar membrane senses a force proportional to the stapedial input, the basilar membrane response to a tone of given frequency (say 2 kHz) should be similar to that shown in the movie above, although with a much smaller amplitude. Accordingly, the cochlea was thought of as a sort of spectrum analyzer providing a frequency-position map of the Fourier components of sound. The idea that the frequency analysis of sound is provided by such a simple mechanism survived until 1927.

Realizing the importance of hydrodynamic interactions, Georg von Békésy built a brass and glass frame with two uniformly tapered chambers, filled with water and separated by a strip having graded elastic properties. Cochlear travelling waves were discovered! Von Békésy’s experiments on freshly dissected human temporal bones confirmed the observations performed on his physical model.

[Animation not available in this transcript.]

The movie above illustrates, with exaggerated amplitude, the basilar membrane response to a tone of about 2 kHz. In contrast to the resonator-bank model imagined by von Helmholtz, the inertial effects of the comoving fluid impart a phase delay of a few cycles to the basilar membrane oscillation.

Von Békésy’s findings stimulated the production of numerous cochlear models that reproduced the observed wave shapes but conflicted with psychophysical data on the frequency selectivity of the cochlea. Beginning in the 1970s with Rhode’s results, and more definitively in the 1980s with the improved measurements by Sellick, Patuzzi and Johnstone (figure at right), it was realized that the vibration of the basilar membrane was non-linear and more sharply tuned, at low sound pressure levels, than previously thought.

In 1983, this prompted Davis to state that it was necessary to accept a revolutionary new hypothesis concerning the action of the cochlea, namely that an active process within the organ of Corti increases the vibration of the basilar membrane. Between 1985 and 1986 William Brownell, Bechara Kachar and their collaborators used video microscopy to demonstrate low-frequency electromotile responses in isolated outer hair cells. In 1987 Jonathan Ashmore used the patch-clamp technique to elicit electromotile responses from outer hair cells (movie below) at kHz frequencies and measured the fractional change in cell length as a function of transmembrane potential.

[Animation not available in this transcript.]

2. Wave Form Characteristics

Before we begin talking about sound we need to define some commonly accepted terms for the discussion of sound. Every waveform has characteristics that distinguish it from other waveforms. These are:

1. Frequency
2. Amplitude
3. Velocity
4. Wavelength
5. Shape
6. Phase
7. Harmonic content
8. Envelope

Frequency

In the diagram below the value of the waveform starts at 0 at time t=0, increases to a maximum value in the positive direction, decreases through zero to a maximum value in the negative direction, returns to zero at t=2 and then repeats the process. One completion of this path is called one cycle of the waveform. A cycle can begin at any point on the waveform, but to be complete it must pass through the zero line and end at a point that has the same value as the starting point and is moving in the same direction (positive or negative). Thus the wave from t=0 to t=2 constitutes a cycle, and the wave from t=1 to t=3 is also a cycle. The number of cycles which occur end to end in one second (that is, cycle two begins at the end point of cycle one) is called the frequency of the waveform and is measured in hertz (Hz). The term hertz is equivalent to “cycles per second”.
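The link between cycles and frequency described above can be checked with a short sketch. It is only an illustration: the function names, the rate of 1000 samples per second and the 5 Hz test tone are assumptions made for this example, not values from the text. Cycles are counted the way the text defines them, by looking for points where the wave passes upward through the zero line.

```python
import math

def frequency_from_period(period_s):
    """Frequency in hertz is the number of cycles per second, i.e. 1 / period."""
    return 1.0 / period_s

def count_cycles(samples):
    """Count cycles by counting upward crossings of the zero line."""
    crossings = 0
    for prev, cur in zip(samples, samples[1:]):
        if prev < 0.0 <= cur:
            crossings += 1
    return crossings

# Hypothetical test signal: one second of a 5 Hz sine wave.
# Sample times are shifted back by half a sample so that no sample
# lands exactly on the zero line.
sample_rate = 1000
tone_hz = 5
one_second = [
    math.sin(2 * math.pi * tone_hz * (n - 0.5) / sample_rate)
    for n in range(sample_rate)
]

print("cycles counted in one second:", count_cycles(one_second))
print("frequency for a period of 2 time units:", frequency_from_period(2.0))
```

If the time axis of the diagram were in seconds (the text does not say), the cycle running from t=0 to t=2 would have a period of 2 seconds and therefore a frequency of half a hertz.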

Amplitude

In the case of sound waves the positive and negative excursions of the diagram represent increases and decreases in the atmospheric pressure of the air, caused by the sound source and perceived by the listener’s ear, with the zero line representing normal atmospheric pressure. The distance above or below the line is called the amplitude of the waveform at that particular instant of time. The maximum positive and negative excursions are called the positive and negative peaks respectively.

Velocity

The velocity of a wave is the speed with which it travels through a medium and is given by the equation:

V = d / (t2 - t1)

where V is the velocity of propagation in the medium, d is the distance from the source, and t2 - t1 is the time in seconds taken to cover that distance.

In the case of sound waves the medium is commonly air molecules; for electricity the medium is commonly electrons. The wave velocity determines how fast a particular cycle of a waveform will travel a certain distance. At 70 degrees Fahrenheit the speed of sound waves in air is approximately 1130 feet per second. This speed is temperature dependent and increases at a rate of about 1.1 feet per second for each degree Fahrenheit of temperature increase.

Wavelength

The wavelength (λ) of a wave is the actual distance in the medium between the beginning and the end of a cycle and is equal to:

λ = V / f

where λ is the wavelength in the medium, V is the velocity in the medium, and f is the frequency in hertz.

Thus the wavelength of one cycle of a 30 Hz sound wave is 1130 ft/sec divided by 30 Hz, or about 37.67 feet.

Although we speak of wave velocity, the particles of the wave medium do not move far. Sound travels as a compression wave. The air in one spot is compressed by the sound source, and it compresses the air next to it as it expands back to normal. The location of the compression moves at the speed of sound, but the air molecules only move to the extent that they are pushed together and then returned to their normal spacing.
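As a rough check of the velocity and wavelength formulas in this section, the sketch below uses only the figures quoted in the text (1130 ft/s at 70 degrees Fahrenheit, about 1.1 ft/s more per degree). The function names are invented for this example, and the straight-line temperature rule is the text's approximation rather than an exact physical law.

```python
def velocity(distance, t1, t2):
    """V = d / (t2 - t1): distance covered divided by the time taken."""
    return distance / (t2 - t1)

def speed_of_sound_ft_per_s(temp_f=70.0):
    """Approximate speed of sound in air from the figures quoted in the text."""
    return 1130.0 + 1.1 * (temp_f - 70.0)

def wavelength_ft(frequency_hz, temp_f=70.0):
    """Wavelength = velocity / frequency."""
    return speed_of_sound_ft_per_s(temp_f) / frequency_hz

# The 30 Hz example from the text, plus a warmer room for comparison.
print("30 Hz at 70 F:", round(wavelength_ft(30.0), 2), "ft")
print("30 Hz at 90 F:", round(wavelength_ft(30.0, temp_f=90.0), 2), "ft")
print("1 kHz at 70 F:", round(wavelength_ft(1000.0), 2), "ft")
```

The same 30 Hz note is slightly longer in a warm room than in a cool one, because the velocity rises while the frequency stays fixed.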
The molecules do not travel with the wave. The action of electrical waves in a wire is the same, except that electrons rather than air molecules are compressed.

Shape

The diagrams up to this point have shown only one shape of waveform, the sine wave, but the eight characteristics apply to simple waveforms of all shapes. These are called simple waveforms because they are continuous and repetitive. One cycle of a square wave looks exactly like the next, and they are all symmetrical around the zero line. Complex waves are waves that do not necessarily repeat and are not necessarily symmetrical around the zero line. An example of a complex waveform would be that created by speaking a word. Since complex waveforms often do not repeat, it is difficult to divide them into cycles or categorize them as having a frequency. Fortunately all possible simple and complex waveforms can be constructed through the use of combinations of sine waves of different frequency, phase and amplitude, plus noise. The discussion here focuses on sine waves.

Phase

Since a cycle can begin at any point on a waveform, it is possible to have two wave generators producing waveforms of the same shape, frequency and peak amplitude which have different amplitudes at any given point in time. These waves are said to be out of phase with respect to each other. A cycle can be divided into 360 degrees, and the sine wave (so named because its amplitude follows the trigonometric sine function) is usually considered to begin at 0 degrees with zero amplitude, increase to a positive maximum at 90 degrees, decrease to 0 at 180 degrees, decrease to a negative maximum at 270 degrees and return to 0 at 360 degrees. In the following diagram the first wave, A, can be considered in phase with the ideal sine curve because their amplitudes match at every point on the X axis.
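Because the diagram is not reproduced here, the four waves it shows (A, and the waves B, C and D that lead A by 90, 180 and 270 degrees) can be tabulated instead. This is only a sketch: the function name and the choice of sample angles are assumptions for illustration.

```python
import math

def sine_with_lead(angle_deg, lead_deg=0.0):
    """Amplitude of a unit sine wave at angle_deg that leads the reference by lead_deg."""
    return math.sin(math.radians(angle_deg + lead_deg))

# Phase lead of each wave relative to wave A, in degrees.
leads = {"A": 0, "B": 90, "C": 180, "D": 270}
angles = (0, 90, 180, 270, 360)

# Adding 0.0 after rounding turns a possible -0.0 into a plain 0.0.
table = {
    name: [round(sine_with_lead(a, lead), 3) + 0.0 for a in angles]
    for name, lead in leads.items()
}

print("angle", list(angles))
for name, row in table.items():
    print(name, row)
```

Reading across a row shows where each wave sits at the reference angles: a wave that leads by 90 degrees is already at its positive peak when wave A is just leaving zero.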
The second wave, B, reaches its maximum positive amplitude 90 degrees before the first and is considered to be 90 degrees out of phase with the first because it leads it by 90 degrees. The third wave, C, begins decreasing from zero 180 degrees before the first and is 180 degrees out of phase. The fourth, D, leads the first wave by 270 degrees and is also out of phase.

Phase cancellation

Waveforms can be added by adding their signed amplitudes at each instant of time. When two waveforms which are completely in phase (0 degrees phase difference) and of the same frequency, shape and peak amplitude are added, the resulting waveform is of the same frequency, phase and shape but has twice the original peak amplitude. If two waves are the same as the ones described except that they are completely out of phase (phase difference = 180 degrees), they will completely cancel each other out when added, resulting in a straight line of zero amplitude. If the second wave is only partially out of phase (not exactly 180 degrees, or (2n - 1) x 180 degrees, out of phase), it interferes constructively at some points, resulting in a more positive amplitude than in the first wave, and destructively at other points, resulting in a more negative amplitude at those points in time than in the first wave.

Harmonics

A waveform having a frequency that is an integral multiple of the frequency of a second waveform is called a harmonic of that second waveform. For example, a 1000 Hz wave is a harmonic of a 500 Hz wave because it is two times the 500 Hz frequency. In this case the 500 Hz wave is called the fundamental (or first harmonic) and the 1000 Hz wave is called the second harmonic because it is the result of multiplying the fundamental frequency by two. The third harmonic would be 1500 Hz.

Envelopes

The envelope of a waveform describes the way its intensity varies over time. For a sound wave it describes changes in loudness.
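Before going further into envelopes, the phase cancellation rule described earlier in this section can be checked numerically. The sketch below adds two unit sine waves of the same frequency, sample by sample, and reports the peak of the sum for a given phase difference. The function name and the 360-step sampling are assumptions chosen for illustration.

```python
import math

def peak_of_sum(phase_deg, steps=360):
    """Peak absolute amplitude of sin(x) + sin(x + phase) over one full cycle."""
    peak = 0.0
    for k in range(steps):
        x = 2 * math.pi * k / steps
        # Signed amplitudes are simply added at each instant.
        total = math.sin(x) + math.sin(x + math.radians(phase_deg))
        peak = max(peak, abs(total))
    return peak

for phase in (0, 90, 180):
    print(phase, "degrees ->", round(peak_of_sum(phase), 3))
```

In phase, the peak doubles; at 180 degrees the sum collapses to essentially zero; at intermediate phase differences the peak falls somewhere in between.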
The envelope is composed of three or four sections, depending on whether the sound sustains or not.

Attack: the time necessary for a sound to rise from silence to its maximum amplitude.
Decay: the time required for a sound to drop from its maximum amplitude to its sustaining level (if there is one), or to silence if there is not.
Sustain: the level at which a sound sustains (if it does sustain).
Release: the time required for a sound to drop from its sustaining level to silence after the sustaining energy is discontinued.

3. Characteristics of ears

1. Perception of related frequencies
   Beats
   Combination tones
   Masking
2. Perception of dynamic range
3. The hot spot
4. Perception of spectral content
5. Perception of sound pressure levels
6. Perception of direction
7. Threshold of hearing
8. Threshold of feeling
9. Threshold of pain
10. The phon
11. The sone

3.1 Perception of related frequencies

The ear perceives frequencies that are related by a doubling (a ratio of 2:1) to be specially related, and this relationship is the basis of the musical octave. For example, since concert A is 440 Hz, the ear hears 880 Hz as having a special relationship to concert A. It is the next tone higher than concert A which sounds most like concert A. The next note above 880 Hz that sounds most like concert A would be 1760 Hz. Therefore 880 Hz is said to be one octave above concert A and 1760 Hz is said to be two octaves above concert A. In all probability the ear is able to recognize octaves due to the absence of beats when they sound together.

Beats

Two tones which differ only slightly in frequency and have approximately the same amplitude will produce beats (periodic changes in amplitude) at the ear, at a rate equal to the difference between the two frequencies. Beats are the result of the ear’s inability to separate two closely pitched notes.
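The beat rule just stated can be made concrete with a small sketch that adds two sine tones and measures how loud the sum is in short windows of time. The function names, the 8000 Hz sample rate and the 10 ms window are assumptions for illustration. The pair 440 Hz and 442 Hz is the same pair used in the worked example in the text.

```python
import math

def beat_summary(f1_hz, f2_hz):
    """Return (average pitch, beat rate) as described in the text."""
    return (f1_hz + f2_hz) / 2.0, abs(f1_hz - f2_hz)

def two_tone_sample(f1_hz, f2_hz, t):
    """Sum of two unit sine tones at time t (seconds)."""
    return math.sin(2 * math.pi * f1_hz * t) + math.sin(2 * math.pi * f2_hz * t)

def window_peak(f1_hz, f2_hz, start_s, length_s=0.01, sample_rate=8000):
    """Largest absolute amplitude of the summed tones in a short window."""
    first = int(start_s * sample_rate)
    count = int(length_s * sample_rate)
    return max(
        abs(two_tone_sample(f1_hz, f2_hz, (first + n) / sample_rate))
        for n in range(count)
    )

pitch, rate = beat_summary(440.0, 442.0)
print("perceived pitch (Hz):", pitch, "beats per second:", rate)
print("peak near t = 0.000 s:", round(window_peak(440.0, 442.0, 0.0), 2))
print("peak near t = 0.245 s:", round(window_peak(440.0, 442.0, 0.245), 2))
```

With a 2 Hz beat rate the summed signal swells and fades twice per second, so a window near the start is close to full strength while one a quarter of a second later is close to silent.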
For example, if A440 and A442 are sounded together the ear will hear a pitch which is the average of the two pitches, (440 + 442)/2 = 441 Hz, and the beating will modulate at a rate equal to the difference between the two frequencies (442 - 440 = 2 Hz).

Combination tones

Another important psychoacoustic phenomenon associated with related frequencies is the production of combination tones. Whenever two waves whose frequencies differ by more than 50 Hz are sounded together, two additional frequencies are generated by our hearing mechanism: one which is the sum of the two frequencies and one which is the difference between them. For example, a 2,000 Hz tone sounded with a 2,500 Hz tone will additionally produce:

1) a sum tone at 4,500 Hz
2) a difference tone at 500 Hz

Masking

Masking is a phenomenon by which loud sounds prevent the ear from hearing soft sounds. The greatest masking effect occurs when the frequency of the sound and the frequency of the masking noise are close to each other. For example, a 4 kHz tone will mask a softer 3.5 kHz tone but have little effect on a 100 Hz tone. Masking can also be caused by harmonics of a masking tone, so a 1 kHz tone with a strong second harmonic could mask a 1900 Hz tone.

3.2 Perception of dynamic range

The ear operates over an energy range of 10^12:1 (1,000,000,000,000:1) and compresses the perceived intensity level in order to protect itself. The loudness of a sound is perceived by the ear as varying approximately in proportion to the logarithm of its energy. As a result, increasing the power output of an amplifier by 10 watts, from 10 to 20 watts, gives a significantly greater volume increase than increasing power output from 60 to 70 watts. To get the same increase in loudness, the 60 watt output would have to be doubled to 120 watts. When we compare this information with the measurements by Sellick we can easily see why it is folly to mix at high volume.
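The amplifier example above can be worked through with the decibel, the standard logarithmic measure of a power ratio. The sketch below is only an illustration of the logarithmic rule the text describes. The function name is made up for this example, and perceived loudness follows the logarithm only approximately.

```python
import math

def power_change_db(from_watts, to_watts):
    """Level change in decibels for a change in power: 10 * log10(ratio)."""
    return 10.0 * math.log10(to_watts / from_watts)

for start, end in ((10, 20), (60, 70), (60, 120)):
    print(start, "W ->", end, "W:", round(power_change_db(start, end), 2), "dB")

# The full energy range of the ear quoted in the text, 10^12 : 1.
print("range of the ear:", round(power_change_db(1, 10**12)), "dB")
```

Going from 10 to 20 watts and from 60 to 120 watts are the same step on this scale because both double the power, while the extra 10 watts added to 60 is a much smaller step.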
The ear’s sensitivity to changes in sound level decreases logarithmically as the gain increases.

3.3 Hot spots and distortions

The ear has its greatest sensitivity in the range 1 to 4 kHz. This means that a 1 kHz sine wave that produces a given sound pressure will sound louder than a 10 kHz sine wave which produces the same sound pressure. In addition, the nature of the ear causes it to produce harmonic distortion of sound waves above a certain volume level. Harmonic distortion is the production of harmonics of a waveform which do not exist in the original sound. Thus the ear can cause a loud 1 kHz tone to be heard as a combination of 1 kHz, 2 kHz, 3 kHz, etc. tones.

3.4 Perception of Harmonic Content

Harmonics are very important with respect to musical instruments because their presence and relative intensities in the sound waves produced enable the ear to differentiate between instruments playing the same fundamental tone. For example, a violin has a set of harmonics differing in degree and intensity from that of a viola. The overtone structure is called the timbre of an instrument.

According to Hamm (1973): The primary color characteristic of an instrument is determined by the strength of the first few harmonics. Each of the lower harmonics produces its own characteristic effect when it is dominant, or it can modify the effect of another dominant harmonic if it is prominent. In the simplest classification the lower harmonics are divided into two tonal groups. The odd harmonics (3rd and 5th) produce a stopped or covered sound. The even harmonics (2nd, 4th and 6th) produce choral or singing sounds. Musically the second is an octave above the fundamental, making the sound fuller. The third is termed a quint or musical twelfth. It produces a sound that many musicians refer to as blanketed. Instead of making the tone fuller, a strong third actually makes the tone softer. Adding a fifth to a strong third gives the sound a metallic quality that gets annoying in character as its amplitude increases.
A strong second with a strong third tends to open the covered effect. Adding the 4th and 5th to this changes the sound to an open, horn-like character. The higher harmonics, above the 7th, give the tone edge or bite. Provided the bite is balanced to the basic musical tone, it tends to reinforce the fundamental, giving the sound a sharp attack quality. Many of the edge harmonics are musically unrelated pitches such as the 7th, 9th and 11th. Therefore too much edge can produce a raspy, dissonant quality. Since the ear seems very sensitive to the edge harmonics, controlling their amplitude is of paramount importance. The study of a trumpet tone shows that the edge effect is directly related to the loudness of the tone. Playing the same trumpet note loud or soft makes very little difference in the amplitude of the fundamental and lower harmonics. However, harmonics above the 6th increase and decrease in amplitude in almost direct proportion to the loudness. The edge balance is a critically important loudness signal for the human ear.

Although the ear may receive the overtone structure of a violin, if the listening level is loud enough the ear will provide additional harmonics and change the perceived timbre of the instrument. This means that sound monitored at very loud levels may sound quite different when played back at very low levels. To make things even more difficult, the frequency response of the ear changes with the loudness of perceived signals. The loudness compensation switch found on many hi-fi preamps is an attempt to compensate for the decrease in the ear’s sensitivity to low frequency sound at low levels.

Sound Pressure Levels

The curves in the following diagram are the Fletcher-Munson equal-loudness contours, and they indicate average ear response to different frequencies at different levels. The horizontal curves indicate the sound pressure levels (SPLs) at different frequencies that are required to produce the same perceived loudness.
Thus to equal the loudness of a 1.5 kHz tone at a level of 110 dB SPL (the typical level created by a trumpet-type car horn at a distance of three feet), a 40 Hz tone has to be 2 dB greater in sound pressure level. A 10 kHz tone must be 8 dB greater in sound pressure level than the 1.5 kHz tone to be perceived as being as loud. At 50 dB SPL (the noise level present in the average private business office), the level of a 30 Hz tone must be 30 dB greater and a 10 kHz tone must be 14 dB greater than a 1.5 kHz tone to be perceived at the same volume. Thus if a piece of music is monitored so that the signals produce a sound pressure level of 110 dB and it sounds well balanced, it will sound both bass and treble deficient when played back at 50 dB SPL.

Loudness and pitch

The loudness level of a tone can affect the pitch the ear perceives. For example, if the intensity of a 100 Hz tone is increased from 40 to 100 dB SPL the pitch will decrease by about ten percent. You have only to listen to recordings of a ship’s horn as it leaves harbour to hear the flattening induced by huge sound pressure levels. At 500 Hz the pitch changes about 2% for the same increase in sound pressure level.

Important features to note when looking at Fletcher-Munson equal-loudness curves:

Notice that the curves flatten out at higher loudness levels. For example, in the graph above, the range from highest to lowest in physical decibel level is about 70 dB for the lowest loudness level, while for the medium loudness level the range is 37 dB, and for the highest level, 28 dB.

Notice that there is an increase in sensitivity around 3500 - 4000 Hz. This is due in part to the first resonance of the ear canal (which is about an inch long). There is a second resonance at twice the first that can be seen in the medium loudness level curve at around 8000 Hz.

Perception of Direction

One ear cannot discern the direction from which a sound comes, but two ears can.
This is called the binaural effect and is made possible by four cues received by the ears:

1) relative intensity
2) time of incidence of the sound
3) phase
4) complexity of the waveform

Relative intensity

This refers to the fact that a sound coming from the right will reach the right ear at a higher intensity level than the left ear. There are two reasons for this:

1) The intensity of a sound wave is inversely proportional to the square of the distance between the source and the listener. The intensity 6 feet from the source is one fourth that at 3 feet. Since the left ear is farther away from the source than the right, it receives a lower intensity signal.
2) The left ear is in an acoustic shadow cast by the head. The head blocks the direct sound waves and allows only reflected sound to reach the left ear at the blocked frequencies, thus reducing the intensity of the sound perceived at the left ear. This effect is insignificant for low tones because they bend around the head easily, but it is considerable for tones above 500 Hz because the effect increases as the frequency rises.

Time of incidence

Because the path length to the right ear is shorter than the path length to the left ear with respect to a sound source on the right, sudden changes in sound pressure from the source will be sensed earlier by the right ear. Thus if a wave begins, changes intensity, changes frequency, changes wave shape or stops, the right ear detects it before the left ear.

Phase

For waves which are continuous, the ears can detect the phase difference between them resulting from the time needed for a particular portion of the wave to travel from one side of the head to the other. The phase cue is most accurate in localizing low frequency tones, where the path length between the ears is a wavelength or less.
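The limit on the phase cue can be put into rough numbers. The sketch below assumes a hypothetical ear-to-ear path of 0.75 feet, a figure chosen purely for illustration and not taken from the text, together with the 1130 ft/s speed of sound quoted earlier. It reports how many cycles the far ear lags behind the near ear at a given frequency.

```python
SPEED_OF_SOUND_FT_PER_S = 1130.0  # figure quoted earlier in the text (70 degrees F)
EAR_PATH_FT = 0.75                # hypothetical ear-to-ear path, for illustration only

def wavelength_ft(frequency_hz):
    """Wavelength = velocity / frequency."""
    return SPEED_OF_SOUND_FT_PER_S / frequency_hz

def lag_in_cycles(frequency_hz, path_ft=EAR_PATH_FT):
    """Number of cycles by which the far ear lags the near ear."""
    return path_ft / wavelength_ft(frequency_hz)

def phase_cue_limit_hz(path_ft=EAR_PATH_FT):
    """Frequency at which one wavelength equals the ear-to-ear path."""
    return SPEED_OF_SOUND_FT_PER_S / path_ft

print("phase cue limit (Hz):", round(phase_cue_limit_hz()))
for f in (200, 1000, 6000):
    print(f, "Hz lag:", round(lag_in_cycles(f), 2), "cycles")
```

Under these assumptions a low tone lags by a small fraction of a cycle, which the ears can interpret unambiguously, while a tone of several kilohertz lags by several whole cycles.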
As the frequency increases the wavelength decreases, and it is possible that the wave may appear in phase at both ears, with the far ear hearing the wave several complete cycles after the near ear. Thus phase cues are less effective at high frequencies.

Complexity of the waveform

The complexity of the waveform acts as a cue for the localization of compound tones and noises. The sound shadow created by the head attenuates high frequencies, while low frequencies reach the far ear by bending around the head. As a result the two ears hear a difference in the timbre of the waveform. The farther ear hears fewer high frequency components.

To summarize: the accuracy of cues for localization varies with the frequency and complexity of the perceived tone. High frequency tones are located by intensity, low frequency tones by phase, and complex tones and noise by a combination of intensity, time of incidence and timbre. If there is no difference between what the left ear and the right ear hear, the source appears to be the same distance from each ear, and the only places a single source could fulfill this requirement are directly in front of or behind us. Since the shape of our ears favors sound from the front, the brain generally assumes that any unidentified source is in front of the listener.

This phenomenon allows the recording engineer to place sound not only in the left and right speakers of a stereo system but also between the speakers. By feeding the same sound to both speakers, the ear hears the sound identically in both ears and the sound appears to be directly in front of the listener. By changing the proportions fed to the two speakers, the engineer can create the illusion that the sound source is anywhere between the two speakers. This is called panning.

Conclusions for Musicians

The ear is a highly adaptive and self-protecting organ, and that makes it a less than 100% reliable witness.
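Before turning to the conclusions in detail, the panning technique described above can be sketched in a few lines. The text only says that the proportions fed to the two speakers are changed. The constant-power (sine/cosine) rule used below is one common way of choosing those proportions and is an assumption of this example, as are the function name and the -1 to +1 position scale.

```python
import math

def pan_gains(position):
    """Return (left_gain, right_gain) for a pan position.

    position: -1.0 is hard left, 0.0 is centre, +1.0 is hard right.
    Uses a constant-power law (an assumption, not the text's method),
    so that left**2 + right**2 always equals 1.
    """
    if not -1.0 <= position <= 1.0:
        raise ValueError("position must be between -1.0 and 1.0")
    angle = (position + 1.0) * math.pi / 4.0  # 0 .. pi/2
    return math.cos(angle), math.sin(angle)

for pos in (-1.0, -0.5, 0.0, 0.5, 1.0):
    left, right = pan_gains(pos)
    print(pos, round(left, 3), round(right, 3))
```

At the centre position both speakers receive the same gain, which matches the text's description of a sound that appears directly in front of the listener.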
Of the eight characteristics of waveforms only three are not affected by psychoacoustic factors: velocity, wavelength and shape. The remaining five are very much influenced by psychoacoustic factors.

Frequency: sounds appear flat at high volumes.
Amplitude: depends on playback volume, differently at different frequencies.
Phase: although the phase of a sound is fixed at the point of origin, the perception of phase by the ear is frequency and distance dependent.
Harmonic content: high volume can add harmonics that do not exist.
Envelope: envelopes played at high volume will become compressed by the natural logarithmic compression of the ear.

Most musicians first encounter these variables when they discover that a mix they had spent most of the night working on sounds terrible in the morning. This “next day test” can be massively disappointing, but it can be avoided using the following rules.

The Golden Rule

Learn to mix at low to medium volume levels unless you are specifically checking a timbre or trying to impress a client with a finished mix, in which case crank it to 75 dB or more.

Gain matching

Match the gains between instruments using the lowest possible gain. For example, if you are trying to match the level of a bass drum against a bass, slowly turn the master gain down to silence and see which one disappears first. Adjust their respective levels until turning the gain to zero causes them both to disappear at the same time.

Avoid masking and difference tones

This is basically done at the arrangement level. Arrange the parts in your music in such a way that instruments with similar harmonic content (like guitar and piano, for example) play in different octaves. Unless you are seeking a special effect, do not double chord voicings between similar instruments.

Timbre matching

If you are making critical adjustments to timbre, do so at a reasonably high volume (around 60-70 dB). Listening to timbre at low volume will cause you to boost the lows and highs too much.

Avoid working too long on a single sound

Do not work on a single sound for long or your ear will adapt. Alternate between percussive and sustaining sounds when possible. Do not spend more than 10-15 minutes on any one sound. If you do, your ear will rapidly begin “protecting” itself from the sound. Take frequent breaks and listen to something different. If you are working on pop, listen to classical, and vice versa. If you have been working on decaying sounds (percussion), listen to strings. Avoid over-tiredness.