* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download What is Operating System (OS)

Survey

Document related concepts

Transcript

What is Operating System (OS) ?

Operating System is a program (in general sense – in fact there are loads of programs

included in OS) working as a linker between computer hardware and user. It is started

as a first program and it is the last program that is turned off when we switch off

computer. Old definition of OS says that it’s “the software that controls the

hardware”. The most well known OS is famous M$ Windows. It’s also known from

its weak stability and low security level. But its simplicity won over other features (at

least for home applications).Other know operating systems are various distributions of

LINUX, UNIX.

We can divide OSes by the way user communicates with it:

text mode – like DOS or UNIX/LINUX console (we can run several consoles)

graphical mode – Windows, MacOS

We can also distinct systems that can run one or several programs at the same time.

That feature made computer multimedial.

What parts are included and why?

There are several levels of controlling the machine:

kernel services – supports processes by communicating them with peripheral

devices. It responds and reacts on interrupts from devices – OS is being

steered by interrupts. Kernel is the core of OS.

library services – programmers may use some already written functions.

Programs don’t have to be written each time from the lowest point.

application level services

If you build an OS what things must be considered?

Operating System has to have do certain things.Computer should be useable, which

means avarage person should be albo to use it,not only specially educated people.

Hence, we have abstraction. Virtual world is created. Through OS we can imagine

that catalogues are shelves where our papers (files) are stored. From the success of

M$ Windows we may conclude that creating nice and intuitive virtual world is almost

crucial in developing a system.

Abstracting have other reasons:

to control computers devices OS has device drivers that communicates with

programmes.

It enables new functions. Eg. File abstraction – programs don’t have to worry about

disks

To run several programs virtual computers are being run.

Operating Systems supports the following components:

process management – OS manages various activities which ‘forms’

processes. Process in not a program – few processes may run one program.

main memory management – OS maneges which program needs

memory,which data (on which address) may be deleted (freed).

file management – they may be stored on secondary storage-disks.Files are not

deleted when the power is turned off. Creation,deletion,moving of files and

directories is supported.

Input/Output management – user don’t know how those devices specificly

works – it’s hidden from him. Driver knows the details.

secondary storage management – it consists of disks, tapes etc. Data in main

memory is almost all the time being changed – all data cannot be stored

there.So its written down as backup on secondary storage. But this space also

have to be managed, allocated.

protection system – multiple user computer’s processes has to be protected

from other processes. They cannot fall in conflict.

command interpreter system – gets and runs users commands.It not a part of

kernel.

Process synchronization

A cooperating process is one that can affect or be affected by other processes

executing in the system. Concurrent execution of cooperating processes requires

mechanisms that allow these processes to communicate with one another and also to

synchronize their actions.

Inorder, for the processes to communicate, an operating system must adopt one of the

following schemes:

Shared memory model

Message passing model.

Shared memory model and semaphores

In this model, processes share memory or the address space. Therefore, they could

very easily share data between them without the need for explicit messages to be

exchanged inbetween them. But, this shared model could lead to problems as well. If

two processes try to modify a shared resource (eg: a variable) at the same time, then

this leads to inconsistencies. This condition is called a race condition. To guard

against race condition, we need process synchronization and coordination. One of the

ways to achieve process synchronization is to use semaphores. Other solutions

include the use of monitors.

Semaphores

Each process has a segment of code, called a critical section, in which the process

may be changing some common variables, updating a table, writing a file, and so on.

Therefore, it is essential that at any time, if one process is executing in its critical

section, then no other process is executing in its critical section. This can be achieved

by using a synchronization tool, semaphore. A semaphore S is an integer that can be

accessed only through two standard atomic operations: wait and signal.

wait(S) {

while(S<=0); // no operation

S--;

signal(S) {

S++;

}

}

Message passing and mailboxes

If two or more processes do not share memory, then we need to use message

passing. It is an Inter-Processs Communication (IPC) mechanism to allow processes

to communicate and to synchronize their actions without sharing the same address

space.

Processes communicate by sending/receiving message using send/receive system calls

or primitives:

send(destination, &message);

receive(source, &message);

The problem with above system calls is, how to identify a sender/receiver? The

solution is either to use a direct communication model wherein each process (sender

or receiver) is identified by a unique id. The other solution will be to use a mailbox.

A mailbox can be viewed abstractly as an object into which messages can be placed

by processes and from which messages can be removed. Each mailbox has a unique

identification. In this scheme, a process can communicate with some other processes

via a number of different mailboxes. Two processes can communicate only if they

share a mailbox.

Semaphores vs. Mailboxes

Semaphores are low-level and efficient. But, they are at the same time, hard to use

right. It is very easy to mix up wait() and signal() or to omit a signal(). Such bugs may

be hard to find, because they might show up only when during race conditions.

Message passing using mailboxes on the other hand, is relatively easy to implement

unlike semaphores. But, message passing can be highly inefficient because message

passing involves context switches after every send/receive call.

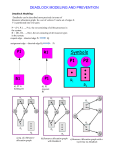

Deadlocks

What is a deadlock

Deadlocks are something that may occur in a system where several processes are

competing for the resources available. If, for example, process 1 (P1) is holding

resource A but needs resource B to complete its work, and process 2 (P2) is holding

resource B but needs resource A to complete its work, a deadlock has occurred.

About resources

There are several types of resources in a system. Files, memory space and

input/output devices are some examples on resources. One type of resource may have

several instances. For example, if you have two printers, and you don’t care which

printer prints what, you have two instances of the printer resource. If, however, one of

the printers is a colour printer and the other prints in black and white, or if the printers

are located far away from each other, you probably want to make a difference

between them. In that case, the printers are regarded as individual resource types

rather than instances of the same resource type. In other words, if a process requests a

resource, any instance of that resource type should suffice.

How to handle deadlocks

Since deadlocks occur quite seldom (a small number of times a year), most operating

systems don’t handle deadlocks at all. Instead, if a deadlock occurs, they rely on

manual restart of the system. There are, however, ways to handle deadlocks, but they

have negative effects on performance.

There are three major ways to handle deadlocks: deadlock prevention, deadlock

avoidance and deadlock detection. There are 4 conditions that must be fulfilled for a

deadlock to occur, and with deadlock prevention, you make sure that one of them can

never happen. If you’re using deadlock avoidance, there are several algorithms to

choose from. For example, in one of the algorithms, the safe state algorithm, you

make sure that the system is in a ‘safe state’, i.e. a state where deadlocks cannot

occur. If a process makes a request that would put the system in an unsafe state, it is

not allocated to the resource, even if it is available at that time. Deadlock detection

uses an algorithm to examine the state of the system to see if a deadlock has occurred,

and if it has, it uses another algorithm to recover from it.

Memory management

Programs that are to be executed needs to be brought into main memory and placed in

a process. When it executes, it loads instructions and data from memory. Memory also

needs to be shared between the processes currently residing in main memory. Because

a process can be placed almost anywhere in memory in most systems, it needs some

way to reach the information in the memory regardless of where it resides. If you

know at compile time where the process will be, you can generate absolute code,

which statically points at a memory location. If you don’t, you can delay the statically

pointing until execution time, making memory locations unique for each time you

load it. Or, you can use point dynamically to memory, and the pointers may change

during execution. This is the most common way to do it. The actual memory

addresses are called physical addresses, while the addresses generated by the CPU are

called logical addresses. In compile time and load time, the logical addresses are the

physical addresses, while the logical addresses generated at execution time differs

from the actual physical addresses. The logical addresses are then translated into

physical addresses by a memory-management unit (MMU), which is done at run-time.

There is also a relocation register that relocates the addresses spawned by the user to

the actual address asked for. For example, if the process starts at memory location

500, all calls to location 0 (the start of the process from the users eye) is relocated to

500 and so on (location 5 is relocated to location 505).

If you’re running many processes, you might have troubles fitting them into the

memory. This is where dynamic loading comes in handy. The main program is loaded

into memory, but all the routines remain on the disk until they are needed. When a

routine is called, you first check if it is in memory, if it isn’t you load it from disk.

When the routine isn’t needed anymore, you free the memory it used, and you can use

it for other routines. This is done without any help from the operating system, it is up

to the user to design the program so it can take advantage of dynamic loading.

Similar to dynamic loading, dynamic linking waits until, for example, language

libraries are used until it links them. With dynamic linking, you can also update the

libraries used and only need to recompile the program linked to them in order for it to

use the new, updated library instead of relinking it. Dynamic linking, however,

requires help from the operating system. Some operating systems only support static

linking.

Another way to more efficiently use main memory is swapping. With swapping, you

can swap a process to disk in order to give space for another process, for example one

with higher priority than the one currently in memory. When the other process

finishes, you swap it back from disk to memory and continue the execution. One must

be careful to not swap a process that is waiting for an I/O device to become available,

since usually when you swap a process, you use the same address space for the new

process. If the I/O device then becomes available, it can try to allocate itself with the

new process. Also, since disk access is slow, you want to make sure you have enough

work to do while the processes are being swapped, you don’t want the CPU just to ‘sit

there’, waiting for orders.

I’m running out of time and space, I will not cover this subject any more, even though

there are much more to say about it, like memory allocation and such.

Lessons Learned

Andrew wrote:

Personally I’m not happy with the amount of interaction amongst the group at

the current time. I believe this has resulted from a lack of face-to-face

interaction and dedicated project discussion time. Perhaps with the next

deliverable we could schedule a meeting early on in the piece to thrash out an

outline or plan of what everyone is going to do and how it relates to

everything else. As many pieces of the project are related to each other this

could be very helpful. To date it has been hard to get this interaction

happening as most of the group are only just settling into new residences in

Sweden (and Cezary hasn’t even arrived in the country yet) but in future this

would be easy to remedy.

Just a note on the labs – I’ve been having a little difficulty getting process

lists, particularly via SSH. It makes it hard to work out which processes are

yours when you are returned a list of 400 processes that are

running…particularly finding the defunct processes is hard. I don’t know if

there is an easy solution to this but I expect that using the grep command to

filter the list could help. Unfortunately, it’s almost a year since I learned grep

and I’m a little rusty (and can’t seem to get it to output anything when I try the

command ps –e | grep ‘*defunct*’ so perhaps a little help here would be in

order? Perhaps Magnus is a better person to ask about this point so I’ll forward

it to him as well…

Rickard wrote:

I agree with Andrew on the part regarding the group. Most likely we will be

much more better off with the next GD, since the communication will work

better. Also, since I have been the one delaying this work, I must become

better at organizing my time, so that the fact that I have no internet access the

day before the deadline doesn’t make me unable to make it.