Linear Combinations and Linear Independence – Chapter 2 of DeFranza

Dr. Doreen De Leon

Math 152, Fall 2016

1 Vectors in $\mathbb{R}^n$ - Section 2.1 of DeFranza

Recall: A matrix with one column is called a (column) vector.

We will now study sets of vectors and look at their properties.

Euclidean 2-space, denoted $\mathbb{R}^2$, is the set of all vectors with two real-valued entries:

\[
\mathbb{R}^2 = \left\{ \begin{bmatrix} x_1 \\ x_2 \end{bmatrix} \;\middle|\; x_1, x_2 \in \mathbb{R} \right\}.
\]

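Similarly, Euclidean 3-space, denoted $\mathbb{R}^3$, is the set of all vectors with three real-valued entries:

\[
\mathbb{R}^3 = \left\{ \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} \;\middle|\; x_1, x_2, x_3 \in \mathbb{R} \right\}.
\]

Definition (Vectors in $\mathbb{R}^n$). For any positive integer $n$, Euclidean $n$-space, denoted by $\mathbb{R}^n$, is the set of all vectors with $n$ real-valued entries:

\[
\mathbb{R}^n = \left\{ \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix} \;\middle|\; x_1, x_2, \ldots, x_n \in \mathbb{R} \right\}.
\]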

The entries of a vector are called the components of the vector.

Geometrically, in $\mathbb{R}^2$ and $\mathbb{R}^3$, a vector is a directed line segment from the origin to the point whose coordinates are given by the components of the vector.

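Example: The vector $\begin{bmatrix} 4 \\ 3 \end{bmatrix}$ in the plane.

[Figure: the vector $\begin{bmatrix} 4 \\ 3 \end{bmatrix}$ drawn from the origin to the point $(4, 3)$.]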

Geometrically, two vectors can be added using the parallelogram rule.

The origin $(0, 0)$ is called the initial point and the point $(4, 3)$ is the terminal point. The length of a vector is the length of the segment from the initial point to the terminal point. So, the length of $\mathbf{v} = \begin{bmatrix} 4 \\ 3 \end{bmatrix}$ is

\[
\sqrt{4^2 + 3^2} = 5.
\]

For a vector $\mathbf{v} \in \mathbb{R}^n$, its length, denoted $\|\mathbf{v}\|$, is given by

\[
\|\mathbf{v}\| = \sqrt{v_1^2 + v_2^2 + \cdots + v_n^2}.
\]
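The length formula can be checked numerically. The following is a minimal sketch in Python, assuming a vector is stored as a plain list of numbers; the function name `norm` is chosen here for illustration and is not part of the notes.

```python
import math

def norm(v):
    """Euclidean length of a vector given as a list of numbers."""
    # Square each component, add the squares, then take the square root.
    return math.sqrt(sum(x * x for x in v))

print(norm([4, 3]))  # 5.0
```

The same function works for any number of components, since the sum simply runs over every entry of the list.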

Vector Algebra

• The vector whose entries are all zero is the zero vector, 0.

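• Equality. Two vectors in $\mathbb{R}^n$ are equal provided their corresponding components are equal.

• Addition. Given $\mathbf{u}, \mathbf{v} \in \mathbb{R}^n$, their sum is determined by adding corresponding entries:

\[
\mathbf{u} + \mathbf{v} = \begin{bmatrix} u_1 \\ u_2 \\ \vdots \\ u_n \end{bmatrix} + \begin{bmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{bmatrix} = \begin{bmatrix} u_1 + v_1 \\ u_2 + v_2 \\ \vdots \\ u_n + v_n \end{bmatrix}.
\]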

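• Scalar multiplication. Given $\mathbf{u} \in \mathbb{R}^n$ and $c \in \mathbb{R}$, the scalar multiple of $\mathbf{u}$ by $c$ is found by multiplying each entry in $\mathbf{u}$ by $c$:

\[
c\mathbf{u} = c \begin{bmatrix} u_1 \\ u_2 \\ \vdots \\ u_n \end{bmatrix} = \begin{bmatrix} cu_1 \\ cu_2 \\ \vdots \\ cu_n \end{bmatrix}.
\]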

Note: The product $c\mathbf{u}$ represents a scaling of the vector $\mathbf{u}$. If $0 < |c| < 1$, then the scaling is a contraction; if $|c| > 1$, the scaling is a dilation. If $c > 0$, then $c\mathbf{u}$ has the same direction as $\mathbf{u}$; if $c < 0$, it has the opposite direction.

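Examples: Let $\mathbf{u} = \begin{bmatrix} 3 \\ -2 \\ 4 \\ 0 \end{bmatrix}$, $\mathbf{v} = \begin{bmatrix} -1 \\ 1 \\ 0 \\ -1 \end{bmatrix}$, $c = 2$. Find $\mathbf{u} + \mathbf{v}$ and $c\mathbf{u} - \mathbf{v}$.

Solution:

\[
\mathbf{u} + \mathbf{v} = \begin{bmatrix} 3 + (-1) \\ -2 + 1 \\ 4 + 0 \\ 0 + (-1) \end{bmatrix} = \begin{bmatrix} 2 \\ -1 \\ 4 \\ -1 \end{bmatrix}
\]

\[
c\mathbf{u} - \mathbf{v} = \begin{bmatrix} 2(3) \\ 2(-2) \\ 2(4) \\ 2(0) \end{bmatrix} - \begin{bmatrix} -1 \\ 1 \\ 0 \\ -1 \end{bmatrix} = \begin{bmatrix} 7 \\ -5 \\ 8 \\ 1 \end{bmatrix}.
\]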
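The two operations in this example can be reproduced in a few lines of code. This is a minimal sketch, assuming vectors are stored as Python lists; the helper names `add` and `scale` are hypothetical and chosen only for illustration.

```python
def add(u, v):
    """Entrywise sum of two vectors with the same number of components."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same number of components")
    return [a + b for a, b in zip(u, v)]

def scale(c, u):
    """Scalar multiple c*u: multiply every entry of u by c."""
    return [c * a for a in u]

u = [3, -2, 4, 0]
v = [-1, 1, 0, -1]
c = 2

print(add(u, v))                        # [2, -1, 4, -1]
# cu - v is computed as cu + (-1)v
print(add(scale(c, u), scale(-1, v)))   # [7, -5, 8, 1]
```

Writing the difference as $c\mathbf{u} + (-1)\mathbf{v}$ means only the two defined operations, addition and scalar multiplication, are needed.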

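Algebraic Properties of $\mathbb{R}^n$

For all $\mathbf{u}, \mathbf{v}, \mathbf{w} \in \mathbb{R}^n$ and all scalars $c$ and $d$,

(i) $\mathbf{u} + \mathbf{v} = \mathbf{v} + \mathbf{u}$ (Commutativity of Addition)

(ii) $(\mathbf{u} + \mathbf{v}) + \mathbf{w} = \mathbf{u} + (\mathbf{v} + \mathbf{w})$ (Associativity of Addition)

(iii) $\mathbf{u} + \mathbf{0} = \mathbf{0} + \mathbf{u} = \mathbf{u}$ (Additive Identity)

(iv) $\mathbf{u} + (-\mathbf{u}) = -\mathbf{u} + \mathbf{u} = \mathbf{0}$ (Additive Inverse)

(v) $c(\mathbf{u} + \mathbf{v}) = c\mathbf{u} + c\mathbf{v}$ (Right Distributivity)

(vi) $(c + d)\mathbf{u} = c\mathbf{u} + d\mathbf{u}$ (Left Distributivity)

(vii) $c(d\mathbf{u}) = (cd)\mathbf{u}$ (Associativity of Scalar Multiplication)

(viii) $1 \cdot \mathbf{u} = \mathbf{u}$ (Scalar Identity)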

2 Linear Combinations - Section 2.2 of DeFranza

Definition (Linear Combinations). Let $S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\}$ be a set of vectors in $\mathbb{R}^n$ and let $c_1, c_2, \ldots, c_p$ be scalars. An expression of the form

\[
\mathbf{y} = c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \cdots + c_p\mathbf{v}_p = \sum_{i=1}^{p} c_i\mathbf{v}_i
\]

is a linear combination of the vectors of $S$ with weights $c_1, c_2, \ldots, c_p$.

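Example: Can $\mathbf{b} = \begin{bmatrix} 3 \\ -6 \\ 2 \end{bmatrix}$ be written as a linear combination of $\mathbf{a}_1 = \begin{bmatrix} 1 \\ -5 \\ 2 \end{bmatrix}$ and $\mathbf{a}_2 = \begin{bmatrix} 2 \\ 3 \\ 2 \end{bmatrix}$?

In other words, we want to know if we can find scalars $c_1$ and $c_2$ so that $c_1\mathbf{a}_1 + c_2\mathbf{a}_2 = \mathbf{b}$.

\[
c_1 \begin{bmatrix} 1 \\ -5 \\ 2 \end{bmatrix} + c_2 \begin{bmatrix} 2 \\ 3 \\ 2 \end{bmatrix} = \begin{bmatrix} 3 \\ -6 \\ 2 \end{bmatrix}
\]

\[
\begin{bmatrix} c_1 \\ -5c_1 \\ 2c_1 \end{bmatrix} + \begin{bmatrix} 2c_2 \\ 3c_2 \\ 2c_2 \end{bmatrix} = \begin{bmatrix} 3 \\ -6 \\ 2 \end{bmatrix}
\]

\[
\begin{bmatrix} c_1 + 2c_2 \\ -5c_1 + 3c_2 \\ 2c_1 + 2c_2 \end{bmatrix} = \begin{bmatrix} 3 \\ -6 \\ 2 \end{bmatrix},
\]

which gives the linear system of equations

\[
\begin{aligned}
c_1 + 2c_2 &= 3 \\
-5c_1 + 3c_2 &= -6 \\
2c_1 + 2c_2 &= 2
\end{aligned}
\]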

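We may solve using Gaussian elimination.

\[
\left[\begin{array}{rr|r} 1 & 2 & 3 \\ -5 & 3 & -6 \\ 2 & 2 & 2 \end{array}\right]
\xrightarrow[r_3 \to r_3 - 2r_1]{r_2 \to r_2 + 5r_1}
\left[\begin{array}{rr|r} 1 & 2 & 3 \\ 0 & 13 & 9 \\ 0 & -2 & -4 \end{array}\right]
\xrightarrow{r_3 \to -\frac{1}{2} r_3}
\left[\begin{array}{rr|r} 1 & 2 & 3 \\ 0 & 13 & 9 \\ 0 & 1 & 2 \end{array}\right]
\]

\[
\xrightarrow{r_2 \leftrightarrow r_3}
\left[\begin{array}{rr|r} 1 & 2 & 3 \\ 0 & 1 & 2 \\ 0 & 13 & 9 \end{array}\right]
\xrightarrow{r_3 \to r_3 - 13r_2}
\left[\begin{array}{rr|r} 1 & 2 & 3 \\ 0 & 1 & 2 \\ 0 & 0 & -17 \end{array}\right]
\]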

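giving the system of equations

\[
\begin{aligned}
c_1 + 2c_2 &= 3 \\
c_2 &= 2 \\
0 &= -17 \quad \longleftarrow \text{false statement}
\end{aligned}
\]

$\implies$ no solution.

Since there is no solution, $\mathbf{b}$ cannot be written as a linear combination of $\mathbf{a}_1$ and $\mathbf{a}_2$.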
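The consistency check in this example can be automated. The sketch below assumes the augmented matrix is given as a list of rows and uses exact fractions to avoid rounding; the function name `is_consistent` is hypothetical. It performs forward elimination only and may choose different row operations than the hand computation, so the intermediate rows can differ even though the conclusion is the same.

```python
from fractions import Fraction

def is_consistent(augmented):
    """Return True if the system with this augmented matrix has a solution."""
    rows = [[Fraction(x) for x in row] for row in augmented]
    n_rows, n_cols = len(rows), len(rows[0])
    pivot_row = 0
    for col in range(n_cols - 1):  # the last column is the right-hand side
        # Look for a nonzero entry in this column at or below pivot_row.
        pivot = next((r for r in range(pivot_row, n_rows) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[pivot_row], rows[pivot] = rows[pivot], rows[pivot_row]
        # Eliminate the entries below the pivot.
        for r in range(pivot_row + 1, n_rows):
            factor = rows[r][col] / rows[pivot_row][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivot_row])]
        pivot_row += 1
    # The system is inconsistent exactly when some row reads 0 = nonzero.
    return not any(all(x == 0 for x in row[:-1]) and row[-1] != 0 for row in rows)

# Columns are a1, a2 and b from the example.
print(is_consistent([[1, 2, 3], [-5, 3, -6], [2, 2, 2]]))  # False
# Here b = a1 + a2, so a solution exists.
print(is_consistent([[1, 2, 3], [-5, 3, -2], [2, 2, 4]]))  # True
```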

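Note: The columns of the augmented matrix are $\mathbf{a}_1$, $\mathbf{a}_2$, $\mathbf{b}$:

\[
\left[\begin{array}{cc|c} \mathbf{a}_1 & \mathbf{a}_2 & \mathbf{b} \end{array}\right].
\]

In general, a vector equation

\[
x_1\mathbf{a}_1 + x_2\mathbf{a}_2 + \cdots + x_p\mathbf{a}_p = \mathbf{b}
\]

has the same solution set as the linear system whose augmented matrix is

\[
\left[\begin{array}{cccc|c} \mathbf{a}_1 & \mathbf{a}_2 & \cdots & \mathbf{a}_p & \mathbf{b} \end{array}\right] \tag{1}
\]

So, $\mathbf{b}$ can be generated by a linear combination of $\mathbf{a}_1, \mathbf{a}_2, \ldots, \mathbf{a}_p$ if there exists a solution to the linear system corresponding to the matrix (1).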

Theorem 1. The linear system Ax = b is consistent if and only if the vector b can be written

as a linear combination of the column vectors of A.

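Definition. If $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p \in \mathbb{R}^n$, then the set of all linear combinations of $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p$ is denoted by $\operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\}$ and is the subset of $\mathbb{R}^n$ spanned by $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p$. (So, $\operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\}$ is the set of all vectors of the form $c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \cdots + c_p\mathbf{v}_p$, with $c_1, c_2, \ldots, c_p$ scalars.)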

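Example: Let $\mathbf{v}_1 = \begin{bmatrix} 1 \\ 3 \\ -1 \end{bmatrix}$ and $\mathbf{v}_2 = \begin{bmatrix} -2 \\ 1 \\ 7 \end{bmatrix}$.

(a) Give a geometric description of $\operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2\}$.

(b) For what value(s) of $h$ (if any) is $\mathbf{b} = \begin{bmatrix} 1 \\ 4 \\ h \end{bmatrix} \in \operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2\}$?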

Solution:

(a) A plane through the origin containing v1 and v2 .

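(b) $\mathbf{b} \in \operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2\}$ if there exist $c_1, c_2$ such that $c_1\mathbf{v}_1 + c_2\mathbf{v}_2 = \mathbf{b}$, which is true if the system of equations whose augmented matrix is $\left[\begin{array}{cc|c} \mathbf{v}_1 & \mathbf{v}_2 & \mathbf{b} \end{array}\right]$ is consistent.

\[
\left[\begin{array}{rr|c} 1 & -2 & 1 \\ 3 & 1 & 4 \\ -1 & 7 & h \end{array}\right]
\xrightarrow[r_3 \to r_3 + r_1]{r_2 \to r_2 - 3r_1}
\left[\begin{array}{rr|c} 1 & -2 & 1 \\ 0 & 7 & 1 \\ 0 & 5 & h + 1 \end{array}\right]
\xrightarrow{r_3 \to r_3 - \frac{5}{7} r_2}
\left[\begin{array}{rr|c} 1 & -2 & 1 \\ 0 & 7 & 1 \\ 0 & 0 & h + \frac{2}{7} \end{array}\right]
\]

This system is consistent if $h + \frac{2}{7} = 0$, or $h = -\frac{2}{7}$.

In other words, $\mathbf{b} \in \operatorname{span}\{\mathbf{v}_1, \mathbf{v}_2\}$ if and only if $h = -\frac{2}{7}$.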
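The value of $h$ can be cross-checked by a different route than row reduction. The sketch below is an assumption-laden alternative, not the method used in the notes: it solves the first two equations for $c_1$ and $c_2$ with Cramer's rule (a swapped-in technique) and then reads off the value the third equation forces on $h$. The variable name `b_top` is hypothetical.

```python
from fractions import Fraction

v1 = [1, 3, -1]
v2 = [-2, 1, 7]
b_top = [1, 4]  # the first two entries of b; the third entry is h

# First two rows: c1*v1[0] + c2*v2[0] = b_top[0] and c1*v1[1] + c2*v2[1] = b_top[1].
det = Fraction(v1[0] * v2[1] - v2[0] * v1[1])
c1 = (b_top[0] * v2[1] - v2[0] * b_top[1]) / det
c2 = (v1[0] * b_top[1] - b_top[0] * v1[1]) / det

# The third row then determines h = c1*v1[2] + c2*v2[2].
h = c1 * v1[2] + c2 * v2[2]
print(c1, c2, h)  # 9/7 1/7 -2/7
```

Because the first two equations already pin down $c_1$ and $c_2$ uniquely, there is exactly one value of $h$ for which the third equation also holds.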

Matrix-Vector Products Revisited

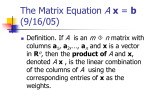

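Definition. If $A$ is an $m \times n$ matrix with columns $\mathbf{a}_1, \mathbf{a}_2, \ldots, \mathbf{a}_n \in \mathbb{R}^m$ and if $\mathbf{x} \in \mathbb{R}^n$, then the product of $A$ and $\mathbf{x}$ is the linear combination of the columns in $A$ using the corresponding entries in $\mathbf{x}$ as weights; i.e.,

\[
A\mathbf{x} = \begin{bmatrix} \mathbf{a}_1 & \mathbf{a}_2 & \cdots & \mathbf{a}_n \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix} = x_1\mathbf{a}_1 + x_2\mathbf{a}_2 + \cdots + x_n\mathbf{a}_n.
\]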

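Example:

(1) Find $A\mathbf{x}$ if $A = \begin{bmatrix} 1 & -4 \\ 2 & 0 \\ 3 & 6 \end{bmatrix}$ and $\mathbf{x} = \begin{bmatrix} 2 \\ -1 \end{bmatrix}$.

\[
A\mathbf{x} = 2 \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} + (-1) \begin{bmatrix} -4 \\ 0 \\ 6 \end{bmatrix}
= \begin{bmatrix} 2 \\ 4 \\ 6 \end{bmatrix} + \begin{bmatrix} 4 \\ 0 \\ -6 \end{bmatrix}
= \begin{bmatrix} 6 \\ 4 \\ 0 \end{bmatrix}.
\]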
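The column-by-column view of the product translates directly into code. This is a minimal sketch, assuming the matrix is stored as a list of rows; the function name `matvec_by_columns` is hypothetical.

```python
def matvec_by_columns(A, x):
    """Compute Ax as x1*a1 + x2*a2 + ... + xn*an, where a_j is column j of A."""
    m, n = len(A), len(A[0])
    if len(x) != n:
        raise ValueError("x must have one entry per column of A")
    result = [0] * m
    for j in range(n):
        column = [A[i][j] for i in range(m)]
        # Add the weighted column x_j * a_j to the running total.
        result = [r + x[j] * a for r, a in zip(result, column)]
    return result

A = [[1, -4], [2, 0], [3, 6]]
x = [2, -1]
print(matvec_by_columns(A, x))  # [6, 4, 0]
```

The loop runs over columns rather than rows, which mirrors the definition: each pass adds one weighted column of $A$.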

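(2) Let $\mathbf{v}_1, \mathbf{v}_2, \mathbf{v}_3 \in \mathbb{R}^n$. Write $3\mathbf{v}_1 + 6\mathbf{v}_2 - 2\mathbf{v}_3$ as the product of a matrix and a vector.

\[
3\mathbf{v}_1 + 6\mathbf{v}_2 - 2\mathbf{v}_3 = \begin{bmatrix} \mathbf{v}_1 & \mathbf{v}_2 & \mathbf{v}_3 \end{bmatrix} \begin{bmatrix} 3 \\ 6 \\ -2 \end{bmatrix}.
\]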

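Theorem 2. If $A$ is an $m \times n$ matrix with columns $\mathbf{a}_1, \mathbf{a}_2, \ldots, \mathbf{a}_n \in \mathbb{R}^m$, $\mathbf{x} \in \mathbb{R}^n$, and $\mathbf{b} \in \mathbb{R}^m$, then the matrix equation $A\mathbf{x} = \mathbf{b}$ has the same solution set as the vector equation $x_1\mathbf{a}_1 + x_2\mathbf{a}_2 + \cdots + x_n\mathbf{a}_n = \mathbf{b}$, which has the same solution set as the system of linear equations whose augmented matrix is $\left[\begin{array}{cccc|c} \mathbf{a}_1 & \mathbf{a}_2 & \cdots & \mathbf{a}_n & \mathbf{b} \end{array}\right]$.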

Because of the above, we see that the equation Ax = b has a solution if and only if b is a linear

combination of the columns of A (i.e., b ∈ span{a1 , a2 , . . . , an }).

Theorem 3. Let $A$ be an $m \times n$ matrix. The following statements are logically equivalent (i.e., either all are true or none are true).

(a) For each $\mathbf{b} \in \mathbb{R}^m$, $A\mathbf{x} = \mathbf{b}$ has a solution.

(b) Each $\mathbf{b} \in \mathbb{R}^m$ is a linear combination of the columns of $A$.

(c) The columns of $A$ span $\mathbb{R}^m$ (i.e., $\operatorname{span}\{\mathbf{a}_1, \mathbf{a}_2, \ldots, \mathbf{a}_n\} = \mathbb{R}^m$).

(d) $A$ has a pivot in every row.

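Example: Let $A = \begin{bmatrix} 1 & 3 & 2 \\ 2 & 4 & -1 \\ 5 & 11 & 0 \end{bmatrix}$. For what values of $\mathbf{b}$ does $A\mathbf{x} = \mathbf{b}$ have a solution?

Solution:

\[
\left[\begin{array}{rrr|c} 1 & 3 & 2 & b_1 \\ 2 & 4 & -1 & b_2 \\ 5 & 11 & 0 & b_3 \end{array}\right]
\xrightarrow[R_3 - 5R_1 \to R_3]{R_2 - 2R_1 \to R_2}
\left[\begin{array}{rrr|c} 1 & 3 & 2 & b_1 \\ 0 & -2 & -5 & b_2 - 2b_1 \\ 0 & -4 & -10 & b_3 - 5b_1 \end{array}\right]
\]

\[
\xrightarrow{R_3 - 2R_2 \to R_3}
\left[\begin{array}{rrr|c} 1 & 3 & 2 & b_1 \\ 0 & -2 & -5 & b_2 - 2b_1 \\ 0 & 0 & 0 & b_3 - 2b_2 - b_1 \end{array}\right]
\]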

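Therefore, $A\mathbf{x} = \mathbf{b}$ has a solution exactly when $b_3 - 2b_2 - b_1 = 0$. Do the columns of $A$ span $\mathbb{R}^3$? No, because any $\mathbf{b}$ that fails this condition is not a linear combination of the columns.

Note: There is no pivot in row 3.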
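The condition $b_3 - 2b_2 - b_1 = 0$ can be spot-checked numerically. The sketch below assumes the matrix is stored as a list of rows; the helper names `matvec` and `satisfies_condition` are hypothetical. It confirms that every tested product $A\mathbf{x}$ meets the condition, and that a vector violating it (so it cannot equal any $A\mathbf{x}$) is easy to write down.

```python
def matvec(A, x):
    """Row-by-row matrix-vector product."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def satisfies_condition(b):
    """Consistency condition from the row reduction: b3 - 2*b2 - b1 == 0."""
    return b[2] - 2 * b[1] - b[0] == 0

A = [[1, 3, 2], [2, 4, -1], [5, 11, 0]]

# Each column of A (x = a standard basis vector) and a further sample x.
for x in ([1, 0, 0], [0, 1, 0], [0, 0, 1], [2, -3, 5]):
    b = matvec(A, x)
    print(x, b, satisfies_condition(b))  # the condition holds each time

# This b violates the condition, so it is not in the span of the columns.
print(satisfies_condition([0, 0, 1]))  # False
```

Since each column of $A$ satisfies the condition and the condition is linear in $\mathbf{b}$, every linear combination of the columns satisfies it as well.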

3 Linear Independence – Section 2.3 of DeFranza

Consider the homogeneous system of equations Ax = 0 by writing it as a vector equation.

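Example: Write $\begin{bmatrix} 2 & 0 & 9 \\ 3 & -1 & 2 \\ 4 & -5 & 4 \end{bmatrix} \mathbf{x} = \mathbf{0}$ as a vector equation.

Solution: $x_1 \begin{bmatrix} 2 \\ 3 \\ 4 \end{bmatrix} + x_2 \begin{bmatrix} 0 \\ -1 \\ -5 \end{bmatrix} + x_3 \begin{bmatrix} 9 \\ 2 \\ 4 \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}$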

The question we want to consider is whether the trivial solution (x1 = x2 = x3 = 0) is the only

solution.

Definition (Linearly Independent). A set of vectors $S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\}$ in $\mathbb{R}^n$ is linearly independent (LI) if the vector equation

\[
x_1\mathbf{v}_1 + x_2\mathbf{v}_2 + \cdots + x_p\mathbf{v}_p = \mathbf{0}
\]

has only the trivial solution. Otherwise, $S$ is linearly dependent (LD). (In other words, $S$ is linearly dependent if there exist constants $c_1, c_2, \ldots, c_p$, not all zero, so that

\[
c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \cdots + c_p\mathbf{v}_p = \mathbf{0},
\]

which is known as a linear dependence relation among $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p$.)

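Example: Are $\mathbf{v}_1 = \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix}$, $\mathbf{v}_2 = \begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix}$, and $\mathbf{v}_3 = \begin{bmatrix} -1 \\ 2 \\ 1 \end{bmatrix}$ linearly independent? If not, find a linear dependence relation among the vectors.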

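Solution: Solve $x_1\mathbf{v}_1 + x_2\mathbf{v}_2 + x_3\mathbf{v}_3 = \mathbf{0}$.

\[
\left[\begin{array}{rrr|r} 1 & 0 & -1 & 0 \\ 2 & 1 & 2 & 0 \\ 3 & 1 & 1 & 0 \end{array}\right]
\xrightarrow[R_3 - 3R_1 \to R_3]{R_2 - 2R_1 \to R_2}
\left[\begin{array}{rrr|r} 1 & 0 & -1 & 0 \\ 0 & 1 & 4 & 0 \\ 0 & 1 & 4 & 0 \end{array}\right]
\xrightarrow{R_3 - R_2 \to R_3}
\left[\begin{array}{rrr|r} 1 & 0 & -1 & 0 \\ 0 & 1 & 4 & 0 \\ 0 & 0 & 0 & 0 \end{array}\right]
\]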

We see that $x_1$ and $x_2$ are basic variables, and $x_3$ is a free variable. Therefore, the system has infinitely many solutions, and $\mathbf{v}_1$, $\mathbf{v}_2$, and $\mathbf{v}_3$ are not linearly independent.

Next, we must find a linear dependence relation among the vectors. Solving the system, we obtain

\[
\begin{aligned}
x_1 - x_3 = 0 &\implies x_1 = x_3 \\
x_2 + 4x_3 = 0 &\implies x_2 = -4x_3 \\
x_3 &\text{ is free.}
\end{aligned}
\]

Then, we choose a nonzero value for the free variable, $x_3$. For example, let $x_3 = 1$. Then $x_1 = 1$ and $x_2 = -4$, so $\mathbf{v}_1 - 4\mathbf{v}_2 + \mathbf{v}_3 = \mathbf{0}$ is a linear dependence relation.

Q: How many linear dependence relations are there?

A: Infinitely many.
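Each nonzero choice of the free variable $x_3 = t$ gives weights $(t, -4t, t)$ and hence another dependence relation. The sketch below checks a few such choices directly; it assumes vectors are stored as Python lists, and the function name `linear_combination` is hypothetical.

```python
def linear_combination(weights, vectors):
    """Return c1*v1 + ... + cp*vp for vectors given as equal-length lists."""
    n = len(vectors[0])
    return [sum(c * v[i] for c, v in zip(weights, vectors)) for i in range(n)]

v1, v2, v3 = [1, 2, 3], [0, 1, 1], [-1, 2, 1]

for t in (1, -2, 5):
    weights = [t, -4 * t, t]  # x1 = x3 = t, x2 = -4t
    # Every such combination is the zero vector [0, 0, 0].
    print(weights, linear_combination(weights, [v1, v2, v3]))
```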

Linear Independence of Matrix Columns

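The columns of a matrix $A$ are linearly independent if and only if $A\mathbf{x} = \mathbf{0}$ has only the trivial solution.

Why? Write $A$ as $A = \begin{bmatrix} \mathbf{a}_1 & \mathbf{a}_2 & \cdots & \mathbf{a}_n \end{bmatrix}$. Then, $A\mathbf{x} = \mathbf{0}$ is equivalent to

\[
x_1\mathbf{a}_1 + x_2\mathbf{a}_2 + \cdots + x_n\mathbf{a}_n = \mathbf{0}.
\]

By definition, $\mathbf{a}_i$, for $i = 1, 2, \ldots, n$, are linearly independent if and only if the equation has only the trivial solution.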

Sets of Vectors

One Vector

A set containing one nonzero vector $\mathbf{v}$ is linearly independent.

Why? $x_1\mathbf{v} = \mathbf{0}$ has only the trivial solution if $\mathbf{v} \neq \mathbf{0}$.

Two Vectors

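Consider the set $\{\mathbf{v}_1, \mathbf{v}_2\}$ where $\mathbf{v}_1 \neq \mathbf{0}$ and $\mathbf{v}_2 \neq \mathbf{0}$. Then

\[
x_1\mathbf{v}_1 + x_2\mathbf{v}_2 = \mathbf{0}
\]

has a nontrivial solution only if $x_1$ and $x_2$ are both nonzero (if $x_1 = 0$, then $x_2\mathbf{v}_2 = \mathbf{0}$ forces $x_2 = 0$, and similarly if $x_2 = 0$). So, we can write

\[
x_1\mathbf{v}_1 = -x_2\mathbf{v}_2 \implies \mathbf{v}_1 = -\frac{x_2}{x_1}\mathbf{v}_2.
\]

So, the set $\{\mathbf{v}_1, \mathbf{v}_2\}$ is linearly dependent if and only if one of the vectors is a multiple of the other.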

Two or More Vectors

Theorem 4. A set S = {v1 , v2 , . . . , vp } of two or more vectors is linearly dependent if and

only if at least one of the vectors in S is a linear combination of the other vectors.

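Example: Consider our previous example, in which $\mathbf{v}_1 = \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix}$, $\mathbf{v}_2 = \begin{bmatrix} 0 \\ 1 \\ 1 \end{bmatrix}$, $\mathbf{v}_3 = \begin{bmatrix} -1 \\ 2 \\ 1 \end{bmatrix}$. We saw that

\[
\mathbf{v}_1 - 4\mathbf{v}_2 + \mathbf{v}_3 = \mathbf{0} \implies \mathbf{v}_1 = 4\mathbf{v}_2 - \mathbf{v}_3.
\]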

Theorem 5. If a set contains more vectors than there are entries in each vector, then the set is linearly dependent.

Proof. Let $S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\} \subset \mathbb{R}^n$. Let $A = \begin{bmatrix} \mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_p \end{bmatrix}$. Then $A$ is $n \times p$ and the equation $A\mathbf{x} = \mathbf{0}$ corresponds to a system of $n$ equations in $p$ unknowns. If $p > n$, there are more variables than equations, so there must be a free variable. This implies that $A\mathbf{x} = \mathbf{0}$ has a nontrivial solution, and therefore, the columns of $A$ are linearly dependent and so $S$ is linearly dependent.

Theorem 6. If a set $S = \{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_p\}$ in $\mathbb{R}^n$ contains the zero vector, then $S$ is linearly dependent.

Proof. Without loss of generality (WLOG), let $\mathbf{v}_1 = \mathbf{0}$. Then, $1\mathbf{v}_1 + 0\mathbf{v}_2 + \cdots + 0\mathbf{v}_p = \mathbf{0}$ is a linear dependence relation, so $S$ is linearly dependent.

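Example: Determine if the sets are linearly dependent.

(1) $\left\{ \begin{bmatrix} 1 \\ -4 \\ 5 \end{bmatrix}, \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}, \begin{bmatrix} 2 \\ 0 \\ 1 \end{bmatrix} \right\}$

(2) $\left\{ \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix}, \begin{bmatrix} 4 \\ 5 \\ 6 \end{bmatrix}, \begin{bmatrix} 7 \\ 8 \\ 9 \end{bmatrix}, \begin{bmatrix} 0 \\ 1 \\ 2 \end{bmatrix} \right\}$

(3) $\left\{ \begin{bmatrix} 1 \\ -2 \\ 3 \\ -4 \end{bmatrix}, \begin{bmatrix} -2 \\ 4 \\ -6 \\ 8 \end{bmatrix} \right\}$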

Solution:

(1) Linearly dependent, because it contains the zero vector.

(2) Linearly dependent, because there are four vectors in $\mathbb{R}^3$.

(3) Linearly dependent, because $\mathbf{v}_2 = -2\mathbf{v}_1$.

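Theorem 7. A set $\{\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n\}$ of $n$ vectors in $\mathbb{R}^n$ is linearly independent if and only if

\[
\det \begin{bmatrix} \mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_n \end{bmatrix} \neq 0.
\]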

Notes:

1. The proof of this theorem is a straightforward application of the Invertible Matrix Theorem.

2. It should be emphasized that this theorem only applies to sets of $n$ vectors in $\mathbb{R}^n$.
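The determinant test can be tried on small examples. The sketch below is one possible implementation, assuming integer or exact entries and small $n$: it computes the determinant by cofactor expansion along the first row, which is simple but slow for large matrices. The function names `det` and `independent` are hypothetical.

```python
def det(M):
    """Determinant of a square matrix (list of rows) by cofactor expansion."""
    n = len(M)
    if n == 1:
        return M[0][0]
    total = 0
    for j in range(n):
        # Minor: delete row 0 and column j.
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * M[0][j] * det(minor)
    return total

def independent(vectors):
    """Test n vectors in R^n: independent iff det [v1 v2 ... vn] != 0."""
    n = len(vectors)
    if any(len(v) != n for v in vectors):
        raise ValueError("need exactly n vectors with n entries each")
    # Build the matrix whose columns are the given vectors.
    matrix = [[vectors[j][i] for j in range(n)] for i in range(n)]
    return det(matrix) != 0

# The dependent set from the earlier example: v1 - 4*v2 + v3 = 0.
print(independent([[1, 2, 3], [0, 1, 1], [-1, 2, 1]]))   # False
# The standard basis of R^3.
print(independent([[1, 0, 0], [0, 1, 0], [0, 0, 1]]))    # True
```

The length check reflects the second note: the test is only defined when the number of vectors equals the number of entries in each vector.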
