* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download View - OhioLINK ETD

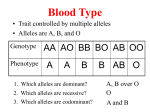

Quantitative trait locus wikipedia , lookup

Genealogical DNA test wikipedia , lookup

Pharmacogenomics wikipedia , lookup

Population genetics wikipedia , lookup

Genetic drift wikipedia , lookup

Microevolution wikipedia , lookup

Species distribution wikipedia , lookup

Inbreeding avoidance wikipedia , lookup

UNIVERSITY OF CINCINNATI

Date: August 6th, 2007

I, Ran He, hereby submit this work as part of the requirements for the degree of:

DOCTOR OF PHILOSOPHY

in:

ENVIRONMENTAL HEALTH

It is entitled:

Some Statistical Aspects of Association Studies in Genetics

and Tests of the Hardy-Weinberg Equilibrium

This work and its defense approved by:

Chair: Dr. Marepalli Rao

Dr. Ranajit Chakraborty

Dr. Ranjan Deka

Dr. Ning Wang

Some Statistical Aspects of Association Studies in Genetics and

Tests of the Hardy-Weinberg Equilibrium

A dissertation submitted to the

Division of Research and Advanced Studies

of the University of Cincinnati

In partial fulfillment of the

requirements for the degree of

DOCTOR OF PHILOSOPHY (Ph.D.)

in the Division of Epidemiology and Biostatistics

of the Department of Environmental Health

of the College of Medicine

2007

By

Ran He

B. S., Sichuan University, China, 2001

Committee Chair: Dr. Marepalli Rao

Abstract

The applicability of a statistical method hinges on how far the assumptions required for its validity are met. Some statistical tests are robust when assumptions are relaxed, while others are not. In the first part of the dissertation, we focus on exploring assumption

violations in some statistical methods for genetic association studies and use simulations

to test the robustness of these methods. In genetic studies, one of the major objectives is

to apply statistical models to identify genes contributing to variations in specific

quantitative traits. In order to correlate such quantitative phenotypes with underlying

genotypes, the method of analysis of variance (ANOVA) is most commonly used. Rejection of the null hypothesis of equality of means implies that the gene under investigation is associated with the phenotype. However, we show that this method raises a paradox by

violating the assumptions of its validity. An alternative method, namely Bartlett’s test, is

available to overcome the paradox. We compare the performances of the ANOVA test

and Bartlett’s test to answer the underlying question. Our study indicates that the ANOVA test works even though the assumptions required for its validity are violated.

In the second part of the dissertation, we focus on tests of the Hardy-Weinberg

Equilibrium (HWE). In population genetics, HWE states that, under certain conditions,

after one generation of random mating genotype frequencies at a single gene locus will

attain a particular set of equilibrium values. The most commonly used method for testing

HWE is the goodness-of-fit Chi-squared statistic, which does not discriminate

homozygote excess from heterozygote deficiency because of its two-sided nature. We

propose alternative methods and use simulations to assess their power. The proposed

methods are amenable to sample size calculations. We compare our sample size

calculations with those available in the literature and find that ours are smaller. For more

than two alleles, testing the HWE is computationally complex. We propose a new method

of testing the HWE for multi-allelic cases by reducing the dimensionality of the problem.

Mathematical, statistical, and computational aspects of the new method are set out in

detail.

Acknowledgments

I would like to express my sincere gratitude to Dr. Marepalli Rao, who is my

advisor, and Dr. Ranajit Chakraborty, who is the director of the Center for Genome Information, for their inspiration, professional guidance and support for my dissertation. I have greatly profited from their solid knowledge and great personalities. I am indebted to them for their constant encouragement and mentoring throughout my graduate studies.

I would also like to thank Dr. Ranjan Deka and Dr. Ning Wang for serving on my

committee and providing many insightful suggestions and comments and discussing with

me some of the difficult points in the dissertation. I would also like to express my appreciation to the faculty and staff in the Department of Environmental Health with whom I have had the good fortune to interact. Finally, I want to thank my family who always

encouraged me to succeed in achieving high goals.

Table of contents

1 Introduction
2 Purpose, Hypotheses, Specific Aims, and Significance
    2.1 Purpose
    2.2 Research Hypotheses
    2.3 Specific Aims
    2.4 Significance
3 On testing that genotypes at a marker locus are associated with a given phenotype
    3.1 Background: Traditional Approach
    3.2 Statistical Methods
        3.2.1 Analysis of Variance (ANOVA)
        3.2.2 Bartlett’s Test
        3.2.3 Linkage Disequilibrium Coefficient and Joint distribution
        3.2.4 Joint distribution of the Phenotype and Genotypes of G and G′
        3.2.5 Conditional Expectations and Variances
    3.3 Some Facts and Paradox
        3.3.1 Some Facts
        3.3.2 The Paradox
    3.4 Efficacy of ANOVA
    3.5 Power Comparison of ANOVA and Bartlett’s test
        3.5.1 Different choices of mean (λ)
        3.5.2 Conclusion
4 Hardy-Weinberg Equilibrium in the case of two alleles
    4.1 Introduction
        4.1.1 What is Hardy-Weinberg Equilibrium?
        4.1.2 Assumption of HWE
        4.1.3 Departures from the Equilibrium
        4.1.4 Inbreeding Coefficient θ
    4.2 Properties of Inbreeding coefficient θ
        4.2.1 Formulation of the problem
        4.2.2 Bounds on θ
        4.2.3 Homozygote excesses and Heterozygote deficiencies
    4.3 Maximum Likelihood estimates
    4.4 Testing the validity of HWE
        4.4.1 Hypothesis Testing on θ
        4.4.2 A likelihood test of the null hypothesis
        4.4.3 Siegmund’s T-Test
        4.4.4 χ²-Test
        4.4.5 Relationship between θ̂, Wald’s Z-test, Siegmund’s T-test and χ²-test
    4.5 Advantages of the Wald’s Z-test or Siegmund’s T-Test
    4.6 Sample size calculation
        4.6.1 Sample size calculation based on Z-test or Siegmund’s T-test
        4.6.2 Sample size calculation based on Ward and Sing’s χ²-test
        4.6.3 Power comparison between T and χ² tests via simulations
    4.7 Conclusion
5 Hardy-Weinberg Equilibrium in the case of three alleles
    5.1 Introduction
    5.2 Joint distribution of genotypes
        5.2.1 Parameter spaces
        5.2.2 Bounds on θ
        5.2.3 Biological scenario
    5.3 Structure of the case of 3 alleles: data and Likelihood
        5.3.1 Structure of the case of 3 alleles: data
        5.3.2 Maximum Likelihood estimators
    5.4 Joint distribution of the type Ωθ and Connection to lower dimensional joint distributions
        5.4.1 The case of A1 vs. (not A1)
        5.4.2 The case of A2 vs. (not A2)
        5.4.3 The case of A3 vs. (not A3)
    5.5 Estimation of inbreeding coefficient and hypotheses testing
        5.5.1 Estimation of inbreeding coefficient in a model of the type Ωθ
        5.5.2 Testing that the joint distribution of the alleles is of the type Ωθ
    5.6 Conclusions
6 Generalization to multiple alleles
    6.1 Formulation of the problem
    6.2 Data and Likelihood
        6.2.1 Data Structure
        6.2.2 Maximum Likelihood estimators
    6.3 Lower dimensional joint distributions
7 Conclusions and Future Research
Bibliographic references

List of Appendices

Appendix 1: Derivation of Conditional Expectations and Variances
Appendix 2: SAS code of different scenarios of ANOVA test
Appendix 3: SAS code of Power comparison of ANOVA and Bartlett test
Appendix 4: Derivation of Expectation and Variance of Siegmund’s T-test
Appendix 5: SAS code of sample size calculation of Wald’s Z test
Appendix 6: SAS code of power comparison of Ward and Sing’s χ² Test and Wald’s Z test
Appendix 7: SAS code of Rao’s Homogeneity Test
Appendix 8: Derivatives of θ̂s with respect to frequencies
Appendix 9: Mathematica code for power and size computations of the Q-test

List of tables

Table 3-1 Joint distribution of the genotypes of G and G′
Table 3-2 Conditional distributions under Scenario 1
Table 3-3 Conditional distributions under Scenario 2
Table 3-4 Summarized results of simulations
Table 4-1 Punnett square for Hardy-Weinberg Equilibrium
Table 4-2 Genotype frequencies in the population
Table 4-3 Joint distribution with inbreeding coefficient θ
Table 4-4 Sample size n to achieve a specified power, 1−β, using Wald’s Z-test for various values of allele frequencies q, true inbreeding coefficient θ, and level α
Table 5-1 Joint distribution of genotypes
Table 5-2 Joint distribution is of type Ωθ
Table 5-3 Joint distribution for Genotypes under Equilibrium (Ω0)
Table 5-4 Example: A distribution in Ω but not in Ω*
Table 5-5 Population subdivision with respect to tri-alleles
Table 5-6 Data on Genotypes
Table 5-7 Joint distribution: A1 vs. (not A1)
Table 5-8 Joint distribution: A1 vs. (not A1) with inbreeding coefficient θ1
Table 5-9 Joint distribution: A2 vs. (not A2)
Table 5-10 Joint distribution: A2 vs. (not A2) with inbreeding coefficient θ2
Table 5-11 Joint distribution: A3 vs. (not A3)
Table 5-12 Joint distribution: A3 vs. (not A3) with inbreeding coefficient θ3
Table 6-1 General Joint distribution of genotypes
Table 6-2 A joint distribution of type Ω*
Table 6-3 Joint distribution of genotypes under Equilibrium (Ω0)
Table 6-4 Data collected for any multiple alleles
Table 6-5 2x2 distribution required for a test about inbreeding coefficient

List of figures

Figure 3-1 Common conditional pdf of P | G′ under Scenario 1
Figure 3-2 Common conditional pdf of P | G under Scenario 1
Figure 3-3 Conditional pdf of P | G′ under Scenario 2
Figure 3-4 Conditional pdf of P | G′ under Scenario 3
Figure 3-5 Conditional pdf of P | G′ under Scenario 4
Figure 3-6 Power comparison of ANOVA and Bartlett’s test, when λ = 1
Figure 3-7 Power comparison of ANOVA and Bartlett’s test, when λ = 50
Figure 4-1 Power comparison of Z and χ², when p = 0.5
Figure 4-2 Power comparison of Z and χ², when p = 0.2
Figure 4-3 Power comparison of Z and χ², when p = 0.05
Figure 4-4 Histogram and Normal Q-Q Plot for Z’s, when p = 0.5
Figure 4-5 Histogram and Normal Q-Q Plot for Z’s, when p = 0.2
Figure 4-6 Histogram and Normal Q-Q Plot for Z’s, when p = 0.05
Figure 5-1 Empirical power of χ² Test Q for testing H0: θ1 = θ2 = θ3 = θ = 0
Figure 5-2 Empirical size of χ² Test Q for testing H0: θ1 = θ2 = θ3 = θ (θ unknown)

1 Introduction

One of the important problems in genetic studies is to explore association between a gene

and a quantitative phenotype, such as blood pressure, body mass index (BMI), and lipid levels in

blood. A standard additive model is generally postulated exemplifying the connection between the

genotypes and phenotype. The model assumes a normal distribution for the phenotype under each

genotype with additive effects. The relevant question is whether a gene of interest is associated

with the phenotype. Data collected on the phenotype of a random sample of subjects are classified

according to the genotypes, and the ANOVA method is most commonly used to analyze them. The mean phenotypic values are compared across the different genotypic groups, and the proportion of variance explained by the marker locus is examined. Rejection of the null hypothesis leads us to believe that there is a statistically significant difference among these groups, which implies that the genotypes are correlated with the given quantitative phenotype.

This test is reasonable and the method is easy to use. However, we note that the method of

analysis of variance raises a paradox by violating the assumptions of its validity. For the validity

of the ANOVA method, homogeneity of variances of the genotype populations is needed. We

observe that homogeneity holds if and only if the population means are equal, which the ANOVA

method is purporting to test. This is a paradoxical situation. The motivation for this part of our work is the concern that, if the test assumptions are violated, the test results may not be valid.

Bartlett’s test can be used to test homogeneity of variances. In Chapter 3, we compare

the performance of the ANOVA method and Bartlett’s test via simulations. Our conclusion is that

the ANOVA method works despite violation of the assumptions of its validity.

In Chapters 4 through 6, we focus on the assumption of the Hardy-Weinberg Equilibrium

(HWE) to describe genotype frequencies at autosomal codominant loci. In population genetics, the

HWE or Hardy–Weinberg law, named after G. H. Hardy and W. Weinberg, states that, under

certain conditions, after one generation of random mating, the genotype frequencies at a single

gene locus will attain a particular set of equilibrium values. It also specifies that those equilibrium

frequencies can be represented as a simple function of allele frequencies at that locus.
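As a brief worked illustration of this "simple function" in the bi-allelic case, suppose a locus has alleles $A$ and $a$ with frequencies $p$ and $q = 1 - p$ (the symbols $A$, $a$, $p$, $q$ are chosen here for illustration). Under the HWE the genotype frequencies are

$$
P(AA) = p^2, \qquad P(Aa) = 2pq, \qquad P(aa) = q^2, \qquad p^2 + 2pq + q^2 = (p + q)^2 = 1 .
$$

For example, $p = 0.7$ gives equilibrium frequencies $0.49$, $0.42$, and $0.09$.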

In Chapter 4, we focus on testing the HWE in the bi-allelic case. The most commonly used

tool for testing is the goodness-of-fit Chi-squared statistic, which does not discriminate homozygote excess from heterozygote deficiency because of its two-sided nature. We propose alternative methods for testing the HWE against a one-sided alternative. We use simulations to compare the power of the new test and the goodness-of-fit Chi-squared test. We also compare the sample sizes required to achieve a given power. Sample sizes calculated with the new method are lower than those available in the literature.
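The two-sided nature of the goodness-of-fit statistic can be seen in a small sketch. The function name `hwe_chi_square` and the genotype counts below are hypothetical, and the signed quantity `theta_hat` (one minus the ratio of observed to expected heterozygotes) is a commonly used estimator of the inbreeding coefficient, included only as an assumption for illustration; it is not necessarily the estimator developed in Chapter 4.

```python
def hwe_chi_square(n_AA, n_Aa, n_aa):
    """Goodness-of-fit chi-squared statistic for HWE at a bi-allelic locus,
    together with a signed estimate of the departure from equilibrium."""
    n = n_AA + n_Aa + n_aa
    p = (2 * n_AA + n_Aa) / (2 * n)      # estimated frequency of allele A
    q = 1.0 - p
    expected = (n * p * p, 2 * n * p * q, n * q * q)
    observed = (n_AA, n_Aa, n_aa)
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    # Positive when heterozygotes are fewer than expected, negative when more.
    theta_hat = 1.0 - n_Aa / (2 * n * p * q)
    return chi2, theta_hat


# Two hypothetical samples of 100 individuals with the same allele frequency.
fewer_heterozygotes = hwe_chi_square(30, 40, 30)
more_heterozygotes = hwe_chi_square(20, 60, 20)
print(fewer_heterozygotes, more_heterozygotes)
```

Both samples give the same chi-squared value, while the signed estimate is positive for the first sample and negative for the second, which is the directional information a one-sided test can use.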

For more than two alleles, testing the HWE raises severe computational problems. In

Chapter 5, we propose a new method of testing the HWE following the dimensionality reduction

principle. We set out in detail the execution of the new method and its computational routines, and demonstrate its feasibility and effectiveness by simulations.

In Chapter 6, results and methods are extended to multi-allelic cases. Computational complexity grows with the number of alleles, but the computational power available is adequate to cover cases with a reasonable number of alleles.

In Chapter 7, we draw conclusions from the work presented. We also outline research problems that we wish to pursue in the future.

2 Purpose, Hypotheses, Specific Aims, and Significance

2.1 Purpose

There are two main goals pursued in this dissertation:

1. First, we establish that the assumptions needed for the commonly used ANOVA method for testing whether a gene, or several genes, is associated with a given phenotype are not met, giving rise to a paradox. This motivates one goal of this dissertation: to scrutinize the violations and check the appropriateness of using ANOVA.

Bartlett’s test seems to be more appropriate to use in this context, even though the (marker)

genotypic group populations are not normal. In fact, the populations are mixed normal. We

compare the performances of Bartlett’s test and ANOVA procedure, using simulations

under different choices of parameters (i.e., allele frequencies, allelic effect, linkage

disequilibrium, type I error) to examine whether the ANOVA procedure still works, even when the assumptions are violated, and how robust it is compared to Bartlett’s test.

2. The second aim is to propose a new method to test Hardy-Weinberg Equilibrium, since the commonly used Chi-squared statistic cannot discriminate homozygote excess from

heterozygote deficiency. The new method is simple to use in the bi-allelic case. For more

than two alleles, testing the HWE raises severe computational problems. We detail a new

technique reducing the multi-allelic problem to several bi-allelic problems. The

mathematical and computational details have been set out.

2.2 Research Hypotheses

Research hypotheses to be examined in this dissertation are the following:

1. The goal is to test the hypothesis that genotypes are associated with a given quantitative phenotype. The method commonly used is ANOVA. We show that the assumptions for the validity of ANOVA are violated, giving rise to a paradox. Bartlett’s test seems more appropriate. We hypothesize that the ANOVA procedure still works despite the violations.

2. On testing the Hardy-Weinberg Equilibrium, in the case of bi-allelic genes, the chi-squared

test cannot be used for one-sided alternatives. We propose a new method of testing which

can accommodate one-sided alternatives. We hypothesize that the new method provides

lower sample sizes than the chi-squared method.

3. Computational problems are insurmountable when testing the HWE in cases with more

than two alleles. In the tri-allelic case, we propose a new method of testing the HWE. The

new method reduces the tri-allelic problem to several bi-allelic problems. The research

hypothesis is that it will work. We use simulations to examine the power of the new

method.

4. The new proposed method can be generalized to any case of multiple alleles.

2.3 Specific Aims

The specific aims of this dissertation are outlined below:

1. Under the additive model of allelic effects on a quantitative phenotype, the ANOVA

method is commonly used to check the influence of the underlying gene on the phenotype.

We want to examine whether the assumptions for the validity of the ANOVA method are

met. If they are not, we propose an alternative method to answer the question of interest and compare its performance with that of the ANOVA method. This is pursued in Chapter 3.

2. For testing the Hardy-Weinberg equilibrium in the two-allelic case, the goodness-of-fit Chi-squared statistic is commonly used. The Chi-squared statistic cannot be used to test one-sided alternatives. We want to explore whether a new test can be developed to achieve the

objective. We propose such a new test. We use the new test for power and sample size

calculations. This is pursued in Chapter 4.

3. Testing for HWE in the tri-allelic case is computationally intractable. We want to explore

ways of overcoming computational complexities. We propose a new method for testing the

HWE in this case. We want to detail the new procedure for practical implementation. This

is pursued in Chapter 5.

4. Generalize the testing procedure developed to any multi-allelic case. This is pursued in Chapter 6.

2.4 Significance

In genetic studies, one of the major objectives is to apply statistical models to assist in

the identification of genes contributing to specific quantitative traits. The appropriateness of these methods depends on the validity of the assumptions needed to carry them out. The difficulty is that the violation is often not readily apparent (i.e., it is deeply buried and not detectable without extensive algebraic computations). Geneticists sometimes pick the most commonly used statistical method without checking its validity, which may bias or even jeopardize the integrity of the research. Some methods are robust when the assumptions are violated, while others are not. Therefore, checking the assumptions is critical for assuring the validity of the method used.

In order to correlate a given quantitative phenotype with a gene, the method of analysis of

variance (ANOVA) is most commonly used. However, we have observed a paradox when

applying the ANOVA method to test the null hypothesis H0: the genotypes of a gene G′ do not discriminate the phenotype P, which is equivalent to H0: Δ = 0, where Δ is the linkage disequilibrium between G′ and the causative gene locus (G) of the phenotype P. For the applicability of ANOVA for testing H0: Δ = 0, we need to assume homogeneity of variances of the phenotype P across all genotypes of G′ (i.e., the groups formed by the genotypes of G′ have to have the same variance). However, ANOVA tests equality of means, and the assumption of homogeneity of variances holds only if Δ = 0, under which the means are also all equal, which is what we are testing. This is a paradoxical situation. Using simulations to compare the power of ANOVA with that of an alternative method, Bartlett’s test, gives scientists a better idea of the performance of ANOVA. Research on this problem gives quantitative geneticists a good understanding of the ANOVA method vis-à-vis Bartlett’s test in this context.

The second half of the dissertation focuses on Tests of the Hardy-Weinberg Equilibrium

(HWE). HWE is one of the most important assumptions to be checked in genetic analysis. In

population genetics, the HWE states that, under certain conditions, after one generation of random

mating, the genotype frequencies at a single gene locus will attain a set of specific equilibrium values. The most commonly used method for testing HWE is the Chi-squared statistic, which does not discriminate homozygote excess from heterozygote deficiency because of its two-sided nature. Motivated by this limitation, we propose an alternative method and use simulations to assess its power. For more than two alleles, testing the HWE is computationally complex. We propose a new method of testing the HWE for multi-allelic cases by reducing it to several bi-allelic cases. We expect quantitative geneticists to use our routines when examining issues surrounding the HWE.

3 On testing that genotypes at a marker locus are associated with a given phenotype

3.1 Background: Traditional Approach

Suppose that we are investigating association between a particular quantitative phenotype

and a gene. The focus of genotype-phenotype mapping is to determine whether the candidate gene or genes have some bearing on the given phenotype. Let P denote the given phenotype, which will be measured for each participant. In addition, a blood sample will be collected to determine the genotype of each participant in a randomly selected sample. It is believed that there is a gene G,

bi-allelic with alleles M and m, which impacts the phenotype. The genotypes MM, Mm, and mm

of the gene G influence the phenotype in the following sense:

$$
\begin{aligned}
P \mid G = MM &\sim N(\lambda, \sigma^2), \\
P \mid G = Mm &\sim N(0, \sigma^2), \\
P \mid G = mm &\sim N(-\lambda, \sigma^2),
\end{aligned}
$$

for some λ ≠ 0, where G stands for ‘Genotype.’ In the subpopulation of those with genotype MM, the phenotype P is normally distributed with mean λ and variance σ²; in the subpopulation of those with genotype Mm, the phenotype P is normally distributed with mean 0 and variance σ²; and in the subpopulation of those with genotype mm, the phenotype is normally distributed with mean −λ and variance σ². This is essentially an additive model of allelic effects of the causative gene (G) on the phenotype P.

Let $P_M^2$, $2 P_M P_m$, and $P_m^2$ be the relative frequencies of the genotypes MM, Mm, and mm, respectively, in the population, where the probabilities (allele frequencies) $P_M$ and $P_m$ satisfy the condition $P_M + P_m = 1$. We are assuming the gene G is in Hardy–Weinberg equilibrium, with $P_M$ and $P_m$ representing the frequencies of alleles M and m at the causative locus G in the entire population (Li, 1976).

Unconditionally, P has a distribution which is a mixture of normal distributions. More

precisely,

$$
P \sim P_M^2\, N(\lambda, \sigma^2) + 2 P_M P_m\, N(0, \sigma^2) + P_m^2\, N(-\lambda, \sigma^2).
$$
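As a short worked consequence of this mixture (a derivation added for illustration, using only the definitions above and $P_M + P_m = 1$), the unconditional mean and variance of the phenotype are

$$
\begin{aligned}
E[P] &= \lambda P_M^2 + 0 \cdot 2 P_M P_m - \lambda P_m^2 = \lambda (P_M - P_m), \\
E[P^2] &= \sigma^2 + \lambda^2 (P_M^2 + P_m^2), \\
\operatorname{Var}(P) &= E[P^2] - (E[P])^2 = \sigma^2 + 2 \lambda^2 P_M P_m .
\end{aligned}
$$

The overall phenotypic variance therefore exceeds the within-genotype variance $\sigma^2$ whenever $\lambda \neq 0$ and both alleles are present.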

The joint distribution of P and G is:

$$
\begin{aligned}
f(P, MM) &= P_M^2\, N(\lambda, \sigma^2), \\
f(P, Mm) &= 2 P_M P_m\, N(0, \sigma^2), \\
f(P, mm) &= P_m^2\, N(-\lambda, \sigma^2).
\end{aligned}
$$
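A minimal simulation sketch of this joint distribution is given below, using only Python's standard `random` module. The function name `simulate_phenotypes`, the seed, and the parameter values are illustrative assumptions; this is not the SAS code of the appendices.

```python
import random


def simulate_phenotypes(n, p_M, lam, sigma, seed=0):
    """Draw n (genotype, phenotype) pairs under HWE and the additive model."""
    rng = random.Random(seed)
    means = {"MM": lam, "Mm": 0.0, "mm": -lam}
    labels = {2: "MM", 1: "Mm", 0: "mm"}
    sample = []
    for _ in range(n):
        # Under random mating each of the two alleles is M with probability p_M,
        # which yields genotype frequencies P_M^2, 2 P_M P_m, P_m^2.
        n_M = sum(rng.random() < p_M for _ in range(2))
        genotype = labels[n_M]
        # Phenotype is normal with genotype-specific mean and common sd sigma.
        sample.append((genotype, rng.gauss(means[genotype], sigma)))
    return sample


# Illustrative parameter values (assumptions, not taken from the dissertation).
data = simulate_phenotypes(n=20000, p_M=0.7, lam=1.0, sigma=1.0)
mean_P = sum(p for _, p in data) / len(data)
freq_MM = sum(g == "MM" for g, _ in data) / len(data)
print(round(mean_P, 3), round(freq_MM, 3))
```

With a large sample, the empirical mean of P should be close to λ(P_M − P_m) and the MM frequency close to P_M².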

Suppose G′ is another gene at a chosen site of the genome. Suppose G′ is also a bi-allelic

gene with alleles A and a. Since we do not know where G is truly located yet, we choose some

gene that is probably located close to the true gene; we call this gene the “Marker.” The question

of interest is whether or not the genotypes AA, Aa, and aa of the Marker locus discriminate the

phenotype. If we succeed in finding an association between the marker and the phenotype, we can do further analysis to locate the true gene more precisely. For this purpose, a sample of n individuals is selected, their phenotypes are measured, and their genotypes are determined. The phenotype data are classified according to genotypes. In the literature (Chakraborty, 1986), the ANOVA method on the one-way classified data is used to answer the question raised above. Accepting the null hypothesis of equal means is tantamount to declaring that G′ is not the gene that discriminates the phenotype. We notice that the assumptions needed for the validity of ANOVA are not met, giving rise to a paradox. One of the goals of this dissertation is to check, via simulations, the power of the traditional ANOVA method when it is used to answer this question. We also compare the ANOVA method with Bartlett’s test by checking their powers via simulations. A broad conclusion of the investigation is that the ANOVA method still works.

3.2

Statistical Methods

3.2.1

Analysis of Variance (ANOVA)

In general, experimenters assume that markers segregate randomly. Once the data are

collected on each individual, statistical associations between the markers and quantitative traits are

established through statistical approaches that range from simple techniques, such as analysis of

variance (ANOVA), to models that include multiple markers and interactions. The simpler

statistical approaches tend to be methods of Quantitative Trait Locus (QTL) detection that assess

differences in the phenotypic means for single-marker genotypic classes. The actual location of

QTL involves an estimated genetic map with known distances between markers and the evaluation

of a likelihood function that is maximized over the established parameter space.

Typically, the null hypothesis tested is that the mean of the trait value is independent of the

genotype at a particular marker. The null hypothesis is rejected when the test statistic is larger than

a critical value, and the implication is that the QTL is linked to the marker under investigation.

Single-marker analyses investigate individual markers independently without reference to their

position or order.

The data classified according to the genotypes of G′ have the following structure (the Pij denote phenotypic values):

Phenotypic values by genotype

AA          Aa          aa
P11         P21         P31
P12         P22         P32
...         ...         ...
P1,n1       P2,n2       P3,n3

n = total sample size = n1 + n2 + n3

The ANOVA technique can be used to test the equality of the group means corresponding to the

genotypes.
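As an illustration of the test on this one-way layout, the sketch below computes the ANOVA F statistic by hand. The data are made-up toy values, and the helper names are hypothetical. The p-value helper relies on a closed form for the upper tail of the F distribution that holds only when the numerator degrees of freedom equal 2, which is the case for three genotype groups; for general layouts a statistical library routine would normally be used instead.

```python
def anova_f(groups):
    """One-way ANOVA F statistic with its degrees of freedom."""
    n = sum(len(g) for g in groups)
    k = len(groups)
    grand_mean = sum(sum(g) for g in groups) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    f_stat = (ss_between / (k - 1)) / (ss_within / (n - k))
    return f_stat, k - 1, n - k

def f_pvalue_df1_2(f_stat, df2):
    """Upper-tail p-value of F(2, df2); this closed form is valid only for df1 = 2."""
    return (1.0 + 2.0 * f_stat / df2) ** (-df2 / 2.0)

# Hypothetical phenotype values for the marker genotypes AA, Aa, aa.
group_AA = [1.2, 0.4, 0.9, 1.5]
group_Aa = [0.1, -0.3, 0.5, 0.2, -0.1]
group_aa = [-0.8, -1.1, -0.2, -0.9]

f_stat, df1, df2 = anova_f([group_AA, group_Aa, group_aa])
p_value = f_pvalue_df1_2(f_stat, df2)
print(f_stat, df1, df2, p_value)
```

The null hypothesis of equal group means is rejected at level α when `p_value` is below α.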

3.2.2

Bartlett’s Test

As we shall see later, Bartlett’s test can also be used to test homogeneity of means for the

data set-up of Section 3.2.1. In this section, we provide a brief introduction to the Bartlett’s test.

3.2.2.1

Introduction

Bartlett's test (Snedecor and Cochran, 1983) is used to test if k normal populations have

equal variances. Equal variances across samples is called homoscedasticity or homogeneity of

variances. Some statistical tests, for example the analysis of variance (ANOVA), assume that

variances are equal across groups or samples. The Bartlett’s test can be used to verify that

assumption.

Bartlett's test is sensitive to departures from normality. That is, if the samples come from

non-normal distributions, then Bartlett's test may simply be testing for non-normality. The Levene

test (Milliken and Johnson, 1989) is an alternative to the Bartlett’s test that is less sensitive to

departures from normality. Here, we are investigating mixed normally distributed data and

focusing only on the Bartlett’s test.

3.2.2.2

Definition

The Bartlett’s test statistic is designed to test for equality of variances across groups

against the alternative that variances are unequal for at least two groups.

The hypotheses are:

$$H_0: \sigma_1^2 = \sigma_2^2 = \cdots = \sigma_k^2$$

$$H_a: \sigma_i^2 \neq \sigma_j^2 \text{ for at least one pair } (i, j)$$

The test statistic is given by:

$$T = \frac{(N-k)\ln s_p^2 - \sum_{i=1}^{k}(N_i-1)\ln s_i^2}{1 + \dfrac{1}{3(k-1)}\left(\left(\sum_{i=1}^{k}\dfrac{1}{N_i-1}\right) - \dfrac{1}{N-k}\right)}$$

In the above, $s_i^2$ is the sample variance of the ith group (i = 1, 2, …, k), N is the total sample size, $N_i$ is the sample size of the ith group, k is the number of groups, and $s_p^2$ is the pooled variance. The pooled variance is a weighted average of the group variances and is defined as:

$$s_p^2 = \sum_{i=1}^{k}(N_i-1)\,s_i^2/(N-k)$$

The variances are judged to be unequal if $T > \chi^2_{\alpha, k-1}$, where $\chi^2_{\alpha, k-1}$ is the upper $100\alpha$ percent critical value of the chi-squared distribution with $k-1$ degrees of freedom at the significance level of $\alpha$.

The above formula for the critical region follows the convention that $\chi^2_{\alpha}$ is the upper critical value from the chi-squared distribution and $\chi^2_{1-\alpha}$ is the lower critical value from the chi-squared distribution.
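The statistic T and its critical region can be sketched directly from the definitions above. The code below is an illustrative implementation with hypothetical names and made-up toy data. The critical-value helper uses the fact that a chi-squared variable with 2 degrees of freedom is exponential with mean 2, so it applies only to k = 3 groups, which is the case of the three marker genotypes.

```python
import math

def bartlett_statistic(groups):
    """Bartlett's T computed from the pooled and per-group sample variances."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    variances = []
    for g in groups:
        m = sum(g) / len(g)
        variances.append(sum((x - m) ** 2 for x in g) / (len(g) - 1))
    pooled = sum((n_i - 1) * s2 for n_i, s2 in zip(sizes, variances)) / (n_total - k)
    numerator = (n_total - k) * math.log(pooled) - sum(
        (n_i - 1) * math.log(s2) for n_i, s2 in zip(sizes, variances))
    correction = 1 + (sum(1 / (n_i - 1) for n_i in sizes) - 1 / (n_total - k)) / (3 * (k - 1))
    return numerator / correction

def chi2_upper_critical_df2(alpha):
    """Upper critical value of chi-squared with 2 df (valid only for k = 3 groups)."""
    return -2.0 * math.log(alpha)

# Hypothetical phenotype values for three genotype groups.
groups = [[1.2, 0.4, 0.9, 1.5], [0.1, -0.3, 0.5, 0.2, -0.1], [-0.8, -1.1, -0.2, -0.9]]
T = bartlett_statistic(groups)
critical = chi2_upper_critical_df2(0.05)
print(T, critical, T > critical)
```

Equality of variances is rejected when `T` exceeds `critical`.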

3.2.3

Linkage Disequilibrium Coefficient and Joint distribution

Let Δ be the linkage disequilibrium coefficient between the genes G and G′. Since G is

unknown, Δ is unknown. The joint distribution of the genotypes of G and G′ is given in the

following table:

Table 3-1

Joint distribution of the genotypes of G and G′

                                                G′
G                        AA             Aa                           aa             Marginal frequencies
MM                       PMA²           2PMA PMa                     PMa²           PM²
Mm                       2PMA PmA       2PMA Pma + 2PMa PmA          2PMa Pma       2PM Pm
mm                       PmA²           2PmA Pma                     Pma²           Pm²
Marginal frequencies     PA²            2PA Pa                       Pa²            1

Using the concept of haplotype frequencies, the entities in the joint distribution are defined by:

PMA = PMPA + Δ;

PMa = PMPa − Δ;

PmA = PmPA − Δ;

Pma = PmPa + Δ,

where PA and Pa are the frequencies of alleles A and a of the marker locus (G′) in the entire population. The conditional distribution of P given the genotypes of G and G′ depends only on the genotype of the true gene G. More precisely,

P | G = MM, G′ = AA ~ N(λ, σ²);

P | G = MM, G′ = Aa ~ N(λ, σ²);

P | G = MM, G′ = aa ~ N(λ, σ²);

P | G = Mm, G′ = AA ~ N(0, σ²);

P | G = Mm, G′ = Aa ~ N(0, σ²);

P | G = Mm, G′ = aa ~ N(0, σ²);

P | G = mm, G′ = AA ~ N(−λ, σ²);

P | G = mm, G′ = Aa ~ N(−λ, σ²);

P | G = mm, G′ = aa ~ N(−λ, σ²).
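The haplotype frequencies and the joint genotype table (Table 3-1) can be checked numerically. The sketch below, with hypothetical function names, builds the 3 × 3 table from PM, PA, and Δ and confirms that its row and column sums reproduce the Hardy-Weinberg marginals. The parameter values PM = PA = 0.5 and Δ = 0.1875 are those used for Scenario 2 in Section 3.4.

```python
def haplotype_freqs(p_M, p_A, delta):
    """Haplotype frequencies P_MA, P_Ma, P_mA, P_ma for disequilibrium delta."""
    p_m, p_a = 1 - p_M, 1 - p_A
    return {"MA": p_M * p_A + delta, "Ma": p_M * p_a - delta,
            "mA": p_m * p_A - delta, "ma": p_m * p_a + delta}

def joint_genotype_table(h):
    """Rows: G in (MM, Mm, mm); columns: G' in (AA, Aa, aa), as in Table 3-1."""
    return {
        "MM": [h["MA"] ** 2, 2 * h["MA"] * h["Ma"], h["Ma"] ** 2],
        "Mm": [2 * h["MA"] * h["mA"],
               2 * h["MA"] * h["ma"] + 2 * h["Ma"] * h["mA"],
               2 * h["Ma"] * h["ma"]],
        "mm": [h["mA"] ** 2, 2 * h["mA"] * h["ma"], h["ma"] ** 2],
    }

h = haplotype_freqs(0.5, 0.5, 0.1875)
table = joint_genotype_table(h)
row_sums = {g: sum(row) for g, row in table.items()}               # marginals of G
col_sums = [sum(table[g][j] for g in table) for j in range(3)]     # marginals of G'
print(h)
print(row_sums)
print(col_sums)
```

The row sums should equal PM², 2PM Pm, Pm² and the column sums PA², 2PA Pa, Pa², whatever the value of Δ, as long as all four haplotype frequencies are non-negative.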

3.2.4

Joint distribution of the Phenotype and Genotypes of G and G′

The joint distribution of the Phenotype, genotypes of G and G′ is given as follows:

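$$\begin{aligned}
f(P, MM, AA) &= P_{MA}^2\, N(\lambda, \sigma^2) \\
f(P, MM, Aa) &= 2P_{MA}P_{Ma}\, N(\lambda, \sigma^2) \\
f(P, MM, aa) &= P_{Ma}^2\, N(\lambda, \sigma^2) \\
f(P, Mm, AA) &= 2P_{MA}P_{mA}\, N(0, \sigma^2) \\
f(P, Mm, Aa) &= (2P_{MA}P_{ma} + 2P_{Ma}P_{mA})\, N(0, \sigma^2) \\
f(P, Mm, aa) &= 2P_{Ma}P_{ma}\, N(0, \sigma^2) \\
f(P, mm, AA) &= P_{mA}^2\, N(-\lambda, \sigma^2) \\
f(P, mm, Aa) &= 2P_{mA}P_{ma}\, N(-\lambda, \sigma^2) \\
f(P, mm, aa) &= P_{ma}^2\, N(-\lambda, \sigma^2)
\end{aligned}$$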

The joint pdf of phenotype and marker G′ is given by:

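$$\begin{aligned}
f(P, AA) &= P_{MA}^2\, N(\lambda, \sigma^2) + 2P_{MA}P_{mA}\, N(0, \sigma^2) + P_{mA}^2\, N(-\lambda, \sigma^2) \\
f(P, Aa) &= 2P_{MA}P_{Ma}\, N(\lambda, \sigma^2) + (2P_{MA}P_{ma} + 2P_{Ma}P_{mA})\, N(0, \sigma^2) + 2P_{mA}P_{ma}\, N(-\lambda, \sigma^2) \\
f(P, aa) &= P_{Ma}^2\, N(\lambda, \sigma^2) + 2P_{Ma}P_{ma}\, N(0, \sigma^2) + P_{ma}^2\, N(-\lambda, \sigma^2)
\end{aligned}$$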

3.2.5

Conditional Expectations and Variances

From these joint distributions, the following conditional distributions are derived:

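$$P \mid G' = AA \;\sim\; \frac{P_{MA}^2\, N(\lambda, \sigma^2) + 2P_{MA}P_{mA}\, N(0, \sigma^2) + P_{mA}^2\, N(-\lambda, \sigma^2)}{P_A^2}$$

$$P \mid G' = Aa \;\sim\; \frac{P_{MA}P_{Ma}\, N(\lambda, \sigma^2) + (P_{MA}P_{ma} + P_{Ma}P_{mA})\, N(0, \sigma^2) + P_{mA}P_{ma}\, N(-\lambda, \sigma^2)}{P_AP_a}$$

$$P \mid G' = aa \;\sim\; \frac{P_{Ma}^2\, N(\lambda, \sigma^2) + 2P_{Ma}P_{ma}\, N(0, \sigma^2) + P_{ma}^2\, N(-\lambda, \sigma^2)}{P_a^2}$$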

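The conditional expectations and variances are summarized below (derivations are given in Appendix 1):

$$E(P \mid G' = AA) = \lambda(P_M - P_m) + \frac{2\Delta\lambda}{P_A}$$

$$\mathrm{Var}(P \mid G' = AA) = \sigma^2 + 2\lambda^2P_MP_m + \frac{2\Delta\lambda^2(P_m - P_M)}{P_A} - \frac{2\Delta^2\lambda^2}{P_A^2}$$

$$E(P \mid G' = Aa) = \lambda(P_M - P_m) - \frac{\Delta\lambda(P_A - P_a)}{P_AP_a}$$

$$\mathrm{Var}(P \mid G' = Aa) = \sigma^2 + 2\lambda^2P_MP_m + \frac{\Delta\lambda^2(P_M - P_m)(P_A - P_a)}{P_AP_a} - \frac{\Delta^2\lambda^2(P_A^2 + P_a^2)}{P_A^2P_a^2}$$

$$E(P \mid G' = aa) = \lambda(P_M - P_m) - \frac{2\Delta\lambda}{P_a}$$

$$\mathrm{Var}(P \mid G' = aa) = \sigma^2 + 2\lambda^2P_MP_m + \frac{2\Delta\lambda^2(P_M - P_m)}{P_a} - \frac{2\Delta^2\lambda^2}{P_a^2}$$

3.3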

Some Facts and Paradox

3.3.1

Some Facts

1. If Δ ≠ 0, the conditional means differ and, in general, so do the conditional variances. The

genotypes of G′ do discriminate the phenotype P.

2. If Δ = 0, all the conditional expectations and all the conditional variances are equal. The

genotypes of G′ do not discriminate the phenotype P.

3. If PA = 0.5 and PM = 0.5, all the conditional variances are equal.

4. Each conditional distribution of P | Genotype of G′ is a mixed normal.
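These facts can be checked numerically. The sketch below evaluates the closed-form conditional means and variances of Section 3.2.5 and compares them with moments computed directly from the mixture weights. The function names and the test parameter values are illustrative assumptions.

```python
def conditional_moments(p_M, p_A, delta, lam, sigma2):
    """(mean, variance) of P given each marker genotype, from the closed forms."""
    p_m, p_a = 1 - p_M, 1 - p_A
    base_mean = lam * (p_M - p_m)
    base_var = sigma2 + 2 * lam ** 2 * p_M * p_m
    return {
        "AA": (base_mean + 2 * delta * lam / p_A,
               base_var + 2 * delta * lam ** 2 * (p_m - p_M) / p_A
               - 2 * delta ** 2 * lam ** 2 / p_A ** 2),
        "Aa": (base_mean - delta * lam * (p_A - p_a) / (p_A * p_a),
               base_var + delta * lam ** 2 * (p_M - p_m) * (p_A - p_a) / (p_A * p_a)
               - delta ** 2 * lam ** 2 * (p_A ** 2 + p_a ** 2) / (p_A ** 2 * p_a ** 2)),
        "aa": (base_mean - 2 * delta * lam / p_a,
               base_var + 2 * delta * lam ** 2 * (p_M - p_m) / p_a
               - 2 * delta ** 2 * lam ** 2 / p_a ** 2),
    }

def mixture_moments(p_M, p_A, delta, lam, sigma2):
    """Same quantities computed directly from the mixture weights."""
    p_a = 1 - p_A
    q_A = (p_M * p_A + delta) / p_A      # P(M | haplotype carries A)
    q_a = (p_M * p_a - delta) / p_a      # P(M | haplotype carries a)
    out = {}
    for label, (q1, q2) in {"AA": (q_A, q_A), "Aa": (q_A, q_a), "aa": (q_a, q_a)}.items():
        w_MM, w_mm = q1 * q2, (1 - q1) * (1 - q2)   # weights of N(lam,.) and N(-lam,.)
        mean = lam * (w_MM - w_mm)
        second_moment = sigma2 + lam ** 2 * (w_MM + w_mm)
        out[label] = (mean, second_moment - mean ** 2)
    return out

null_case = conditional_moments(0.3, 0.6, 0.0, 1.0, 1.0)        # Fact 2: delta = 0
symmetric = conditional_moments(0.5, 0.5, 0.1875, 1.0, 1.0)     # Fact 3: P_A = P_M = 0.5
general = conditional_moments(0.3, 0.6, 0.05, 2.0, 1.5)         # Fact 1: delta != 0
print(null_case)
print(symmetric)
print(general)
```

With Δ = 0 the three (mean, variance) pairs coincide; with PA = PM = 0.5 the variances coincide while the means differ; in the general case both differ.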

3.3.2

The Paradox

We will formulate the null hypothesis H0: the genotypes of G′ do not discriminate the

phenotype P, which is equivalent to H0: Δ = 0. For applicability of ANOVA for testing H0: Δ = 0,

we need to assume homogeneity of variances (i.e., the groups formed by the genotypes of G′ have

to have the same variance). The assumption of homogeneity of variances holds if Δ = 0, but Δ = 0

also implies that the means are all equal, which is precisely the hypothesis being tested with the ANOVA method.

This is a paradoxical situation.

3.4

Efficacy of ANOVA

Despite the paradoxical situation outlined above, in this section, we examine how much an

application of ANOVA to the one-way classified data achieves the objectives. This study will be

conducted by resorting to simulations.

The SAS code of simulations is presented in Appendix 2.

Scenario 1. The first situation we considered has the following specifications for the parameters

involved:

%sim (Δ=0, λ=1, σ2=1, Pm=0.5, Pa=0.5, sample=200, alphalevel=0.05);

Under this scenario, the conditional distributions are tabulated below:

Table 3-2

Conditional distributions under Scenario 1

Conditional distribution                                 mean    variance
1. P | G′ = AA    ¼ N(1,1) + ½ N(0,1) + ¼ N(−1,1)       0       1.5
2. P | G′ = Aa    ¼ N(1,1) + ½ N(0,1) + ¼ N(−1,1)       0       1.5
3. P | G′ = aa    ¼ N(1,1) + ½ N(0,1) + ¼ N(−1,1)       0       1.5
4. P | G = MM     N(1,1)                                 1       1
5. P | G = Mm     N(0,1)                                 0       1
6. P | G = mm     N(−1,1)                                −1      1

The common conditional probability density function under distributions 1, 2, and 3 above is

plotted in Figure 3-1:

Figure 3-1

Common conditional pdf of P | G′ under Scenario 1

[Plot of the density for P between −4 and 4.]

Distribution: ¼ N(−1,1) + ½ N(0,1) + ¼ N(1,1)

This plot does not show the trimodality one might expect because the assumed variance is as large as the allelic effect (i.e., σ2 = 1 and λ = 1). In consequence, the three modes are smoothed out. If we assume a smaller variance, for example 0.4, and keep λ the same (λ = 1), the conditional distribution with three modes is shown as follows:

[Plot of the density for P between −2 and 2.]

Distribution: ¼ N(−1,0.4) + ½ N(0,0.4) + ¼ N(1,0.4)

For comparison, when we choose σ2 = 0.5, the modes are smoothed out as follows:

[Plot of the density for P between −2 and 2.]

Distribution: ¼ N(−1,0.5) + ½ N(0,0.5) + ¼ N(1,0.5)

The conditional probability density functions under Distributions 4, 5, and 6 of Table 3-2 are plotted in Figure 3-2:

Figure 3-2

Common conditional pdf of P | G under Scenario 1

[Plot of the three densities for P between −4 and 4.]

Distributions: N(−1,1); N(0,1); N(1,1)

We now focus on testing the hypothesis H0: Δ = 0, which is true under Scenario 1. We generate a

random sample of size n = 200 from the following joint distribution of P and G′.

f (P, AA) = 1/16 N(1,1) + 1/8 N(0,1) + 1/16 N(−1,1)

f (P, Aa) = 1/8 N(1,1) + 1/4 N(0,1) + 1/8 N(−1,1)

f (P, aa) = 1/16 N(1,1) + 1/8 N(0,1) + 1/16 N(−1,1)

−∞ < P < ∞

Simulations are conducted according to the following steps.

Step 1. Draw a random sample of size 200 from the following trinomial distribution:

G′:    AA     Aa     aa
Pr:    1/4    1/2    1/4

Let n1 , n2 , n3 be the observed frequencies of the genotypes. Obviously, n1 + n2 + n3 = 200 .

Step 2. If G′ = AA, Aa or aa, simulate the mixed normal distribution

¼ N(1,1) + ½ N(0,1) + ¼ N(−1,1).

Step 3. Arrange the Genotype-Phenotype data generated in Steps 1 and 2 in the following way.

Phenotypic values by genotype

AA          Aa          aa
P11         P21         P31
P12         P22         P32
...         ...         ...
P1,n1       P2,n2       P3,n3

n = total sample size = n1 + n2 + n3

Step 4. Carry out Analysis of Variance and Bartlett’s test on the phenotype data of Step 3. Use

level α = 0.05 . Note down whether or not H0 is rejected under each test.

Step 5. Repeat steps 1, 2, 3, and 4 10,000 times.
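The five steps above can be sketched in Python as follows. This is not the SAS macro of Appendix 2, and it is not expected to reproduce the reported figures digit for digit. All function names are hypothetical. The sketch draws two haplotypes per individual from the haplotype frequencies of Section 3.2.3, which yields the joint distribution of Table 3-1 and hence the same distribution of (P, G′) as Steps 1 and 2. The closed-form F and chi-squared tail expressions are valid only for three groups, and the run uses 2,000 rather than 10,000 replications to keep the run time short.

```python
import math
import random

def draw_sample(n, p_M, p_A, delta, lam, sigma2, rng):
    """Steps 1-3: phenotypes grouped by marker genotype (AA, Aa, aa)."""
    p_m, p_a = 1 - p_M, 1 - p_A
    haplotypes = [(1, 1), (1, 0), (0, 1), (0, 0)]          # (carries M, carries A)
    weights = [p_M * p_A + delta, p_M * p_a - delta, p_m * p_A - delta, p_m * p_a + delta]
    groups = {2: [], 1: [], 0: []}                          # keyed by number of A alleles
    sd = math.sqrt(sigma2)
    for _ in range(n):
        (m1, a1), (m2, a2) = rng.choices(haplotypes, weights=weights, k=2)
        groups[a1 + a2].append(rng.gauss(lam * (m1 + m2 - 1), sd))
    return [groups[2], groups[1], groups[0]]

def anova_rejects(groups, alpha):
    n, k = sum(map(len, groups)), len(groups)
    grand = sum(map(sum, groups)) / n
    means = [sum(g) / len(g) for g in groups]
    ssb = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ssw = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    f_stat = (ssb / (k - 1)) / (ssw / (n - k))
    p_value = (1 + 2 * f_stat / (n - k)) ** (-(n - k) / 2)   # exact only for k = 3
    return p_value < alpha

def bartlett_rejects(groups, alpha):
    n, k = sum(map(len, groups)), len(groups)
    variances = []
    for g in groups:
        m = sum(g) / len(g)
        variances.append(sum((x - m) ** 2 for x in g) / (len(g) - 1))
    pooled = sum((len(g) - 1) * v for g, v in zip(groups, variances)) / (n - k)
    num = (n - k) * math.log(pooled) - sum(
        (len(g) - 1) * math.log(v) for g, v in zip(groups, variances))
    corr = 1 + (sum(1 / (len(g) - 1) for g in groups) - 1 / (n - k)) / (3 * (k - 1))
    return num / corr > -2 * math.log(alpha)                 # chi-squared(2) critical value

def rejection_rates(delta, n_reps, n=200, p_M=0.5, p_A=0.5, lam=1.0,
                    sigma2=1.0, alpha=0.05, seed=0):
    """Steps 4-5: proportion of replications in which each test rejects H0."""
    rng = random.Random(seed)
    anova = bartlett = used = 0
    for _ in range(n_reps):
        groups = draw_sample(n, p_M, p_A, delta, lam, sigma2, rng)
        if min(map(len, groups)) < 2:        # variance undefined; skip this replication
            continue
        used += 1
        anova += anova_rejects(groups, alpha)
        bartlett += bartlett_rejects(groups, alpha)
    return anova / used, bartlett / used

size_anova, size_bartlett = rejection_rates(delta=0.0, n_reps=2000)
print(size_anova, size_bartlett)
```

Calling `rejection_rates` with a non-zero `delta` gives the corresponding empirical powers for the other scenarios.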

Summary statistics of simulations:

1. Empirical size under the ANOVA test = (No. of times H0 is rejected) / 10,000 = 0.052

2. Empirical size under the Bartlett’s test = (No. of times H0 is rejected) / 10,000 = 0.059

The observed size under the Bartlett’s test is slightly larger than that under the ANOVA method. However, the observed sizes are not significantly different from the nominal size 0.05.

Scenario 2. We now look at scenarios when the null hypothesis H0: Δ = 0 is not true.

We have the following specifications for the parameters involved:

%sim (Δ=0.1875, λ=1, σ2=1, Pm=0.5, Pa=0.5, n=200, alphalevel=0.05);

Note that

PMA = PMPA + Δ = 0.4375

PMa = PMPa − Δ = 0.0625

PmA = PmPA − Δ = 0.0625

Pma = PmPa + Δ = 0.4375

The conditional distributions of P | G′ are given by

$$P \mid G' = AA \;\sim\; \frac{P_{MA}^2\, N(1,1) + 2P_{MA}P_{mA}\, N(0,1) + P_{mA}^2\, N(-1,1)}{P_A^2}$$

$$P \mid G' = Aa \;\sim\; \frac{P_{MA}P_{Ma}\, N(1,1) + (P_{MA}P_{ma} + P_{Ma}P_{mA})\, N(0,1) + P_{mA}P_{ma}\, N(-1,1)}{P_AP_a}$$

$$P \mid G' = aa \;\sim\; \frac{P_{Ma}^2\, N(1,1) + 2P_{Ma}P_{ma}\, N(0,1) + P_{ma}^2\, N(-1,1)}{P_a^2}$$

The conditional means and variances are given by:

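$$E(P \mid G' = AA) = \lambda(P_M - P_m) + \frac{2\Delta\lambda}{P_A} = 0.75$$

$$\mathrm{Var}(P \mid G' = AA) = \sigma^2 + 2\lambda^2P_MP_m + \frac{2\Delta\lambda^2(P_m - P_M)}{P_A} - \frac{2\Delta^2\lambda^2}{P_A^2} = 1.21875$$

$$E(P \mid G' = Aa) = \lambda(P_M - P_m) - \frac{\Delta\lambda(P_A - P_a)}{P_AP_a} = 0$$

$$\mathrm{Var}(P \mid G' = Aa) = \sigma^2 + 2\lambda^2P_MP_m + \frac{\Delta\lambda^2(P_M - P_m)(P_A - P_a)}{P_AP_a} - \frac{\Delta^2\lambda^2(P_A^2 + P_a^2)}{P_A^2P_a^2} = 1.21875$$

$$E(P \mid G' = aa) = \lambda(P_M - P_m) - \frac{2\Delta\lambda}{P_a} = -0.75$$

$$\mathrm{Var}(P \mid G' = aa) = \sigma^2 + 2\lambda^2P_MP_m + \frac{2\Delta\lambda^2(P_M - P_m)}{P_a} - \frac{2\Delta^2\lambda^2}{P_a^2} = 1.21875$$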

Under this scenario, the conditional distributions are tabulated below:

Table 3-3

Conditional distributions under Scenario 2

Conditional distribution                                                  mean     variance
1. P | G′ = AA    0.765625N(1,1) + 0.21875N(0,1) + 0.015625N(−1,1)       0.75     1.21875
2. P | G′ = Aa    0.109375N(1,1) + 0.78125N(0,1) + 0.109375N(−1,1)       0        1.21875
3. P | G′ = aa    0.015625N(1,1) + 0.21875N(0,1) + 0.765625N(−1,1)       −0.75    1.21875
4. P | G = MM     N(1,1)                                                  1        1
5. P | G = Mm     N(0,1)                                                  0        1
6. P | G = mm     N(−1,1)                                                 −1       1

These three conditional densities 1, 2, and 3 of Table 3-3 are plotted in Figure 3-3.

Figure 3-3

Conditional pdf of P | G′ under Scenario 2

[Plot of the three conditional densities for P between −4 and 4.]

Distributions: 0.015625N(1,1) + 0.21875N(0,1) + 0.765625N(−1,1)

0.109375N(1,1) + 0.78125N(0,1) + 0.109375N(−1,1)

0.765625N(1,1) + 0.21875N(0,1) + 0.015625N(−1,1)

In contrast, the conditional densities 4, 5, and 6 of P | G were plotted in Figure 3-2.

In this scenario, the null hypothesis H0: Δ = 0 is not true. A random sample of size n = 200 is generated from the joint distribution of P and G′ given by

$$\begin{aligned}
f(P, AA) &= P_{MA}^2\, N(1,1) + 2P_{MA}P_{mA}\, N(0,1) + P_{mA}^2\, N(-1,1) \\
f(P, Aa) &= 2P_{MA}P_{Ma}\, N(1,1) + (2P_{MA}P_{ma} + 2P_{Ma}P_{mA})\, N(0,1) + 2P_{mA}P_{ma}\, N(-1,1) \\
f(P, aa) &= P_{Ma}^2\, N(1,1) + 2P_{Ma}P_{ma}\, N(0,1) + P_{ma}^2\, N(-1,1)
\end{aligned}$$

With the protocol outlined under Scenario 1, we calculate the following entities:

1. Empirical power under the ANOVA test = (No. of times H0 is rejected) / 10,000 = 0.27

2. Empirical power under the Bartlett’s test = (No. of times H0 is rejected) / 10,000 = 0.14

Scenario 3.

We consider the following specifications for the parameters involved:

%sim (Δ=0.125, λ=1, σ2=1, Pm=0.5, Pa=0.5, n=200, alphalevel=0.05);

Under this scenario, the conditional distributions are tabulated below:

Conditional distribution                                            mean    variance
1. P | G′ = AA    0.5625N(1,1) + 0.375N(0,1) + 0.0625N(−1,1)       0.5     1.375
2. P | G′ = Aa    0.1875N(1,1) + 0.625N(0,1) + 0.1875N(−1,1)       0       1.375
3. P | G′ = aa    0.0625N(1,1) + 0.375N(0,1) + 0.5625N(−1,1)       −0.5    1.375

The graphs of the conditional distributions of P | G′ are given in Figure 3-4.

Figure 3-4

Conditional pdf of P | G′ under Scenario 3

[Plot of the three conditional densities for P between −4 and 4.]

Distributions: 0.0625N(1,1) + 0.375N(0,1) + 0.5625N(−1,1)

0.1875N(1,1) + 0.625N(0,1) + 0.1875N(−1,1)

0.5625N(1,1) + 0.375N(0,1) + 0.0625N(−1,1)

Simulations are conducted to calculate empirical powers under ANOVA and Bartlett’s test.

1. Empirical power under the ANOVA test = (No. of times H0 is rejected) / 10,000 = 0.225

2. Empirical power under the Bartlett’s test = (No. of times H0 is rejected) / 10,000 = 0.10

Scenario 4.

We consider the following specifications for the parameters involved:

%sim (Δ=0.0625, λ=1, σ2=1, Pm=0.5, Pa=0.5, n=200, alphalevel=0.05);

Under this scenario, the conditional distributions are tabulated below:

Conditional distribution                                                  mean     variance
1. P | G′ = AA    0.390625N(1,1) + 0.46875N(0,1) + 0.140625N(−1,1)       0.25     1.46875
2. P | G′ = Aa    0.234375N(1,1) + 0.53125N(0,1) + 0.234375N(−1,1)       0        1.46875
3. P | G′ = aa    0.140625N(1,1) + 0.46875N(0,1) + 0.390625N(−1,1)       −0.25    1.46875

The graphs of the conditional distributions of P | G′ are given in Figure 3-5.

Figure 3-5

Conditional pdf of P | G′ under Scenario 4

[Plot of the three conditional densities for P between −4 and 4.]

Distributions: 0.140625N(1,1) + 0.46875N(0,1) + 0.390625N(−1,1)

0.234375N(1,1) + 0.53125N(0,1) + 0.234375N(−1,1)

0.390625N(1,1) + 0.46875N(0,1) + 0.140625N(−1,1)

Simulations are conducted to calculate empirical powers under ANOVA and Bartlett’s test.

1. Empirical power under the ANOVA test = (No. of times H0 is rejected) / 10,000 = 0.194

2. Empirical power under the Bartlett’s test = (No. of times H0 is rejected) / 10,000 = 0.086

The results of the simulations under each of Scenarios 1, 2, 3, and 4 are summarized below

(10,000 simulations).

Specifications (common parameters):

λ=1, σ2=1,Pm=0.5,Pa=0.5,n=200,alphalevel=0.05

Table 3-4

Summarized results of simulations

            Empirical Power
Δ           ANOVA     Bartlett
0           0.059     0.052
0.0625      0.194     0.086
0.125       0.225     0.101
0.1875      0.273     0.142

Conclusions: We have considered four sets of parameter values involved in the simulations. In

one set of simulations, the observed sizes are not significantly different from the nominal size of

0.05. In all other cases, the observed powers are significantly different and the ANOVA test is

superior to the Bartlett’s test. In all the scenarios, homogeneity of variances holds. The genotypic

distributions are not normal but mixed normal. The ANOVA procedure is robust to violation of

the normality assumption but the Bartlett’s test is not.

3.5

Power Comparison of ANOVA and Bartlett’s test

Checking the conditional expectations and variances, we know that the assumption of homogeneity of variances needed to carry out ANOVA is violated. The normality assumption, needed for both the ANOVA and Bartlett tests, is also violated. We used simulations (10,000 replications) to compare the power of these two tests in general. Please refer to Appendix 3 for the SAS code.

Selection of Parameter values for the simulations:

• Allele frequencies PM and PA are randomly selected: PM ∈ [0, 1], PA ∈ [0, 1]

• Disequilibrium values (Δ) are randomly selected: Δ ∈ [0, 0.2]

• Significance level = 5%

In Section 3.4, PM and PA are fixed at 0.5. Here we randomly select their frequencies from

0 to 1. We know that the genotypes MM, Mm, and mm of the gene G discriminate the phenotype

in the following sense:

P | G = MM ~ N(λ, σ2);

P | G = Mm ~ N(0, σ2);

P | G = mm ~ N(-λ, σ2).

In addition, we need to choose λ, σ2 , and n in the simulations.

We simulated many scenarios under different choices of parameter values for a

comparison of powers. More specifically, we look at:

• Same mean (λ), different variance (σ2), same sample size (n);

• Different mean (λ), same variance (σ2), same sample size (n);

• Same mean (λ), same variance (σ2), but different sample size (n).

The simulations showed that the power of both tests increases as the sample size (n) increases, especially when Δ is low (Δ ∈ [0, 0.2]), and that the power of both tests depends little on the variance (σ2 ∈ [1, 50]). The factor that matters most is the mean (λ). The performances of these two tests are different under different choices of mean (λ). We report two special cases.

3.5.1

Different choices of mean (λ)

1. Let λ = 1, n = 200 and σ 2 = 1.

The power of both tests is low (< 30%), but the ANOVA method has better power than

the Bartlett’s test. See Figure 3-6.

Figure 3-6

Power comparison of ANOVA and Bartlett’s test, when λ = 1

2. Let λ = 50, n = 200 and σ 2 = 1.

When the mean (λ) gets larger, both tests have power between 0.6 and 0.75 for Δ ≥ 0.06. The

ANOVA method is still better than the Bartlett’s test, but the difference is not that wide. See

Figure 3-7.

Figure 3-7

Power comparison of ANOVA and Bartlett’s test, when λ = 50

3.5.2

Conclusion

In testing whether genotypes at a marker locus are associated with a certain quantitative phenotype, the ANOVA method is widely used. However, we showed that the assumptions required for the validity of the ANOVA method are violated: the conditional distribution is a mixed normal distribution instead of normal, and the homogeneity of variances is violated. Homogeneity of variances holds if the disequilibrium (Δ) is zero or if the alleles are equi-probable at both the marker locus and the causative locus (PA = PM = 0.5). If Δ = 0, all conditional means are equal, which is the null hypothesis we are testing.

Such a paradox triggered our interest to question the appropriateness of using ANOVA.

An alternative procedure for testing Δ = 0 is the Bartlett’s test. The assumptions needed for the validity of the Bartlett’s test are not met either, since the underlying genotypic populations are not normal but mixed normal. We therefore also want to examine the appropriateness of using the Bartlett’s test.

With 10,000 replications of simulations, we compared the power of both tests under a variety of specifications of parameter values. We found that, generally speaking, ANOVA performed better than the Bartlett’s test. With higher values of the allelic effect (i.e., λ, the mean of the underlying normal distribution), the power of the Bartlett’s test gradually approached that of ANOVA. However, the observed size of the Bartlett’s test is higher than the nominal level and this bias increases as λ increases. Even though the homogeneity of variances is violated, the normality assumption does not hold, and the application of ANOVA is not completely correct from a statistical point of view, the ANOVA method is still robust, and the power of ANOVA is better than that of the Bartlett’s test.

Besides, Bartlett’s test is sensitive to departures from normality, and each conditional

distribution here is mixed normal instead of normal distribution. If Δ is small, we need a very large

sample for the ANOVA test to have decent power.

Is ANOVA intrinsically more powerful than the Bartlett’s test? The answer is yes, because ANOVA is a test for centrality based on the first moment and Bartlett’s test is a test for equality of variances, which concerns the second moment. The sampling variance of the first moment is smaller than the sampling variance of the second moment. However, as our simulations show, for smaller Δ, Bartlett’s test appears to have a higher power than ANOVA. This is so because with smaller Δ, the distributions remain mixed normals, but the means of the component distributions are closer to one another, making their differences less detectable by ANOVA. Nevertheless, for such small Δ, the absolute power of either of these two procedures is small.

From Figure 3-6 and Figure 3-7, it appears that the Bartlett test is biased, i.e., it fails to

achieve the nominal size. When simulations are conducted with PM ∈ [0, 1], PA ∈ [0, 1], and Δ

∈ [0, 0.2] randomly generated, certain configurations of PMA, PMa, PmA, and Pma could lead to

negative haplotype frequencies. In such situations, data cannot be generated. In the program, whenever such

a configuration arises, it is ignored. This may have some bearing on the bias of the Bartlett’s test.
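The admissibility check described above can be sketched as follows. The function names are hypothetical and the code is not taken from the SAS program of Appendix 3. A configuration is valid only when all four haplotype frequencies are non-negative, which is equivalent to Δ lying between max(−PM PA, −Pm Pa) and min(PM Pa, Pm PA).

```python
import random

def is_valid_configuration(p_M, p_A, delta):
    """True when all four haplotype frequencies are non-negative."""
    p_m, p_a = 1 - p_M, 1 - p_A
    freqs = (p_M * p_A + delta, p_M * p_a - delta, p_m * p_A - delta, p_m * p_a + delta)
    return all(f >= 0 for f in freqs)

def delta_bounds(p_M, p_A):
    """Smallest and largest admissible delta for given allele frequencies."""
    p_m, p_a = 1 - p_M, 1 - p_A
    return max(-p_M * p_A, -p_m * p_a), min(p_M * p_a, p_m * p_A)

rng = random.Random(0)                    # fixed seed, chosen arbitrarily
draws = [(rng.random(), rng.random(), rng.uniform(0.0, 0.2)) for _ in range(10000)]
kept = [d for d in draws if is_valid_configuration(*d)]
print(len(kept) / len(draws))             # share of random configurations retained
```

Because discarded configurations are not spread evenly over the parameter ranges, the retained draws are no longer uniform in PM, PA, and Δ, which is one way the filtering could influence the reported results.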

4

Hardy-Weinberg Equilibrium in the case of two alleles

4.1

Introduction

4.1.1

What is Hardy-Weinberg Equilibrium?

Hardy-Weinberg Equilibrium is one of the most important assumptions to be checked in

genetic analysis. In population genetics, the Hardy–Weinberg equilibrium (HWE), or Hardy–

Weinberg law, named after G. H. Hardy and W. Weinberg, states that, under certain conditions,

after one generation of random mating, the genotype frequencies at a single gene locus will attain

a set of particular equilibrium values. It also specifies that those equilibrium frequencies can be

represented as simple functions of the allele frequencies at that locus.

We focus on a diploid organism and a specific gene locus with alleles A and a. The entity

A is the dominant allele and a is the recessive allele for a certain trait. An intuitive question would

be “Will the dominant character eventually dominate the whole population?” Hardy and Weinberg

discovered independently that dominance will not happen from random mating.

Let the population of organisms be infinite or very large. Let the frequencies (first

generation) of the genotypes be given by

AA      Aa      aa
r       2s      t

with r, s, t ≥ 0 and r + 2s + t = 1. Suppose the population undergoes random mating. The frequencies of the genotypes of the offspring population (second generation) are given by

AA            Aa                   aa
(r + s)²      2(r + s)(s + t)      (s + t)²

An important question is when the genotype frequencies of the two generations are the same, i.e.,

r = (r + s)², 2s = 2(r + s)(s + t), and t = (s + t)².

Note that r = (r + s)² ⇔ r = r² + 2rs + s²

⇔ r = r(r + 2s) + s²

⇔ r = r(1 − t) + s²

⇔ s² = rt

Thus the two sets of genotype frequencies are equal if and only if s² = rt.

Interestingly, if we denote the genotype frequencies of the second generation by

r1 = (r + s)², 2s1 = 2(r + s)(s + t), and t1 = (s + t)²,

it follows that s1² = r1 t1. If random mating occurs in the second generation, the genotype

frequencies in the third generation are identical to those in the second generation. Under random

mating, subsequent generations will have genotype frequencies identical to those of the second

generation. This is essentially the gist of the Hardy-Weinberg Law.
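A small numerical illustration of this law: starting from arbitrary genotype frequencies that are not in equilibrium, one round of random mating produces frequencies that no longer change. The function name and the starting values below are illustrative assumptions.

```python
def next_generation(r, two_s, t):
    """Genotype frequencies (AA, Aa, aa) after one round of random mating."""
    p = r + two_s / 2          # frequency of allele A, i.e. r + s
    q = t + two_s / 2          # frequency of allele a, i.e. s + t
    return p * p, 2 * p * q, q * q

first = (0.5, 0.2, 0.3)        # hypothetical r, 2s, t with s^2 != r*t
second = next_generation(*first)
third = next_generation(*second)
print(second)
print(third)
```

The second and third generations agree, and the second generation satisfies s1² = r1 t1 even though the first generation does not.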

It should be noted that for the second generation, or for that matter, in any subsequent generation, the allele frequencies are given by:

A          a
r + s      s + t

In view of this observation, one can now say that the population is in Hardy-Weinberg equilibrium (HWE). More informally, if the allele frequencies are p and q, then the genotype frequencies are p², 2pq, and q², provided the population is in HWE.

4.1.2

Assumption of HWE

The assumptions governing the Hardy–Weinberg equilibrium (HWE) are that the organism

under consideration:

• is diploid and the trait under consideration is not on a chromosome that has different copy numbers for different sexes, such as the X chromosome in humans (i.e., the trait is autosomal);

• is sexually reproducing, either monoecious or dioecious;

• has discrete generations;

in addition, the population under consideration is idealised, that is:

• random mating within a single population

• infinite population size (or sufficiently large so as to minimize the effect of genetic drift)

and experiences:

• no selection;

• no mutation;

• no migration (gene flow).

The first group of assumptions are required for the mathematics involved. It is relatively

easy to expand the definition of HWE to include modifications of these, such as for sex-linked

traits. The other assumptions are inherent in the Hardy-Weinberg principle. A Hardy-Weinberg

population is used as a reference population when discussing various genetic issues.

4.1.3

Departures from the Equilibrium

To analyze departures, we can look at populations to see if they conform to these

numerical patterns. If they differ, we seek the reasons for the difference in some violation of the

Hardy-Weinberg assumptions. Two processes, natural selection and genetic drift, are the most

common and important factors at work in most populations that are not at equilibrium. Inbreeding

and nonrandom mating are also forces of departure.

For example, suppose we find a population in which the recessive allele frequency is

declining over time. We might then investigate whether homozygous recessives are dying earlier.

(Many genetic diseases, such as cystic fibrosis, are due to recessive alleles.) This could be due to

natural selection, in which those that are better adapted to the environment survive longer and

reproduce more frequently.

Suppose we find a population in which there is a smaller-than-expected number of

homozygotes of both types, and a larger number of heterozygotes. This could be due to

heterozygote superiority, where the heterozygote is more fit than either homozygote. In humans,

this is the case for the allele causing sickle cell disease, a type of hemoglobinopathy.

Nonrandom mating is another potential source of departure from the Hardy-Weinberg

equilibrium. Imagine that two alleles give rise to two very different appearances. Individuals may

choose to mate with those whose appearance is closest to theirs. This may lead the two groups

to diverge over time and perhaps ultimately to separate into two distinct subpopulations.

In very small populations, allele frequencies may change dramatically from one generation to the next, due to the vagaries of mate choice or other random events. For instance, half a dozen individuals with the dominant allele may, by chance, have fewer offspring than half a dozen with the recessive allele. This would have comparatively little effect in a population of one thousand, but it could have a dramatic effect in a population of twenty. Such changes are known as genetic drift.

In this dissertation, we assume inbreeding is the only force for a departure from the Hardy-Weinberg Equilibrium.

4.1.4

Inbreeding Coefficient θ

Inbreeding depression was recognized early by plant and animal breeders (Wright 1977) and in zoo populations (Ralls, Brugger and Ballou 1979; Senner 1980) and in the management and restocking of endangered populations in the wild.

Inbreeding

Inbreeding is defined as mating between related individuals. It is also called consanguinity,

meaning "mixing of the blood." Although some plants successfully self-fertilize (the most extreme

case of inbreeding), biological mechanisms are in place in many organisms, from fungi to humans,

to encourage cross-fertilization. In human populations, customs and laws in many countries have

been developed to prevent marriages between closely related individuals (e.g., siblings and first

cousins). Despite these proscriptions, genetic counselors are frequently presented with the question

"If I marry my cousin, what are the chances that we will have a baby who has a disease?" The

answer is that when two partners are related their chance to have a baby with a disease or birth

defect is higher than the background risk in the general population.

Increased Disease Risk

Many genetic diseases are recessive, meaning only people who inherit two disease alleles

develop the disease. Many of us carry several single alleles for genetic diseases. Since close

relatives have more genes in common than unrelated individuals, there is an increased chance that

parents who are closely related will have the same disease alleles and thus have a child who is

homozygous for a recessive disease.

For instance, cousins share approximately one-eighth or 12.5 percent of their alleles. So, at

any locus the chance that cousins share an allele inherited from a common parent is one-eighth.

The chance that their offspring will inherit this allele from both parents, if each parent has one

copy of the allele, is one-fourth. Thus, the risk the offspring will inherit two copies of the same

allele is 1/8 × 1/4, or 1/32, about 3 percent. If this allele is deleterious, then the homozygous child

will be affected by the disease. Overall, the risk associated with having a child affected with a

recessive disease as a result of a first cousin mating is approximately 3 percent, in addition to the

background risk of 3 to 4 percent that all couples face.

Detect Inbreeding

Unfortunately, inbreeding cannot be detected by pedigrees directly, because pedigrees are not usually available for individuals within these populations. However, the inbreeding coefficient (θ) can be measured indirectly from genotypic data.

The inbreeding coefficient is the probability that two genes at any locus in one individual are identical by

descent (have been inherited from a common ancestor). The more closely related the parents are,

the larger the value of θ is. For example, the coefficient of inbreeding for an offspring of two

siblings is one-fourth (0.25), for an offspring of two half-siblings it is one-eighth (0.125), and for

an offspring of two first cousins it is one-sixteenth (0.0625). (This is a different calculation than

the calculation of shared alleles between cousins, above.)
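The three values quoted above agree with the standard path-counting formula, F = Σ (1/2)^(n1 + n2 + 1), summed over the common ancestors of the two parents. That formula is not stated in the text and is used here as an assumption, together with the assumption that the common ancestors are not themselves inbred. The function name and the `paths` argument are hypothetical.

```python
from fractions import Fraction

def inbreeding_coefficient(paths):
    """Path-counting sketch of the inbreeding coefficient.

    `paths` holds one (n1, n2) pair per common ancestor, where n1 and n2
    are the numbers of generations from each parent back to that ancestor.
    Common ancestors are assumed to be non-inbred.
    """
    return sum(Fraction(1, 2) ** (n1 + n2 + 1) for n1, n2 in paths)

# Offspring of full siblings: two common ancestors, one generation back.
full_sibs = inbreeding_coefficient([(1, 1), (1, 1)])

# Offspring of half-siblings: a single common ancestor.
half_sibs = inbreeding_coefficient([(1, 1)])

# Offspring of first cousins: two common grandparents, two generations back.
first_cousins = inbreeding_coefficient([(2, 2), (2, 2)])

print(full_sibs, half_sibs, first_cousins)  # 1/4 1/8 1/16
```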

In general, inbreeding in human populations is rare. The average inbreeding coefficient is

0.03 for the Dunker population in Pennsylvania and 0.04 for islanders on Tristan da Cunha.

Inbreeding occurs in both those populations. Some isolated populations actively avoid inbreeding

and have maintained low average inbreeding coefficients even though they are small. For example,

polar Eskimos have an average inbreeding coefficient that is less than 0.003.

Beneficial changes can also come from inbreeding, and inbreeding is practiced routinely in

animal breeding to enhance specific characteristics, such as milk production or low fat-to-muscle

ratios in cows. However, there can often be deleterious effects of such selective breeding when

genes controlling unselected traits are influenced too. Generations of inbreeding decrease genetic

diversity, and this can be problematic for a species. Some endangered species, which have had

their mating groups reduced to very small numbers, are losing important diversity as a result of

inbreeding.

4.2 Properties of Inbreeding coefficient θ

4.2.1 Formulation of the problem

We first consider the case of a single locus with two alleles and then consider a single

locus with three alleles. We cannot examine the entire population to check the equilibrium law.

We take a random sample of n individuals from the population. At a single autosomal locus with

two alleles, a diploid can be one of three genotypes: AA, Aa and aa. Let n1, n2, and n3 be the

frequencies of the genotypes of AA, Aa and aa in the sample. We need to formulate the

equilibrium law as a hypothesis to be tested using the data collected.

Consider two alleles, A and a, and let p and q, respectively, be the allele frequencies,

which are unknown in the population. A Punnett square (Table 4-1) can be used to formulate the

problem, where the fraction in each cell is equal to the product of the row and column

probabilities.

Table 4-1 Punnett square for Hardy-Weinberg Equilibrium

| Alleles | A | a | Marginal frequencies |
|---|---|---|---|
| A | $p^2$ | $pq$ | $p$ |
| a | $pq$ | $q^2$ | $q$ |
| Marginal frequencies | $p$ | $q$ | 1 |

In general, let $p_1$, $2q_1$, and $r_1$ be the unknown frequencies of the genotypes AA, Aa, and aa in the population. The genotype frequencies can be written in the form of a bivariate frequency table (Table 4-2) as follows:

Table 4-2 Genotype frequencies in the population

| Alleles | A | a | Marginal frequencies |
|---|---|---|---|
| A | $p_1$ | $q_1$ | $p$ |
| a | $q_1$ | $r_1$ | $q$ |
| Marginal frequencies | $p$ | $q$ | 1 |

Thus the joint distribution of the alleles is symmetric with identical marginal frequencies.

Theorem: Given frequencies $p_1$, $2q_1$, and $r_1$, there exists a number θ such that

$$p_1 = p^2 + \theta pq,$$

$$q_1 = pq - \theta pq,$$

and

$$r_1 = q^2 + \theta pq.$$

Proof

Let $\theta = \dfrac{p_1 - p^2}{pq}$. This readily implies that $p_1 = p^2 + \theta pq$. Likewise,

$$q_1 = p - p_1 = p - (p^2 + \theta pq) = p(1 - p) - \theta pq = pq - \theta pq.$$

In a similar vein, one can show that $r_1 = q^2 + \theta pq$. The number θ is called the inbreeding coefficient.
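The construction in the proof can be checked numerically. The sketch below recovers p and θ from a set of genotype frequencies and confirms that the other two identities hold; the function name and the example frequencies are hypothetical and chosen only for illustration.

```python
def inbreeding_from_genotypes(p1, two_q1, r1):
    """Return (p, theta) for genotype frequencies of AA, Aa, and aa.

    Follows the proof: p is the marginal frequency of allele A and
    theta = (p1 - p^2) / (p * q).
    """
    q1 = two_q1 / 2.0
    p = p1 + q1   # marginal frequency of allele A
    q = q1 + r1   # marginal frequency of allele a
    theta = (p1 - p ** 2) / (p * q)
    return p, theta

# Hypothetical genotype frequencies for AA, Aa, aa (they sum to 1).
p, theta = inbreeding_from_genotypes(0.40, 0.40, 0.20)
q = 1.0 - p

# The remaining identities of the theorem should reproduce q1 and r1.
q1_check = p * q - theta * p * q   # expected to be close to 0.20
r1_check = q * q + theta * p * q   # expected to be close to 0.20
print(p, theta, q1_check, r1_check)
```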

If θ = 0, the joint distribution matches the one specified under the Hardy-Weinberg Equilibrium. The inbreeding coefficient θ measures the extent of departure from the Hardy-Weinberg Equilibrium. Consequently, the joint distribution can be put in the following Table 4-3:

Table 4-3 Joint distribution with inbreeding coefficient θ

| Alleles | A | a | Marginal frequencies |
|---|---|---|---|
| A | $p^2 + \theta pq$ | $pq - \theta pq$ | $p$ |
| a | $pq - \theta pq$ | $q^2 + \theta pq$ | $q$ |
| Marginal frequencies | $p$ | $q$ | 1 |

4.2.2 Bounds on θ

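Since each entry in Table 4-3 is a frequency, each entry has to be larger than or equal to zero. It is easy to find the bounds on θ from

$$\begin{cases} p^2 + \theta pq \ge 0 \;\Rightarrow\; \theta \ge -\dfrac{p}{q} \\[6pt] q^2 + \theta pq \ge 0 \;\Rightarrow\; \theta \ge -\dfrac{q}{p} \\[6pt] pq - \theta pq \ge 0 \;\Rightarrow\; \theta \le 1 \end{cases}$$

Therefore, θ has to satisfy:

$$-\min\left\{\frac{p}{q}, \frac{q}{p}\right\} \le \theta \le 1$$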
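The bounds can be illustrated with a short sketch that computes the admissible range of θ for a given p and rejects values outside it. The function names are hypothetical, and the endpoints are treated as admissible, which is an assumption made for this illustration.

```python
def theta_bounds(p):
    """Return (lower, upper) bounds on theta for allele frequency p."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    q = 1.0 - p
    return -min(p / q, q / p), 1.0

def genotype_frequencies(p, theta):
    """Frequencies of AA, Aa, aa from the joint distribution with theta."""
    lower, upper = theta_bounds(p)
    if not lower <= theta <= upper:
        raise ValueError("theta is outside its admissible range")
    q = 1.0 - p
    return (p * p + theta * p * q,
            2.0 * p * q * (1.0 - theta),
            q * q + theta * p * q)

# With p = 0.5 the range is symmetric: theta can run from -1 to 1.
print(theta_bounds(0.5))                # (-1.0, 1.0)

# At the lower bound every individual is heterozygous.
print(genotype_frequencies(0.5, -1.0))  # (0.0, 1.0, 0.0)
```

The lower bound is tightest when the two allele frequencies are very unequal: a rare allele leaves little room for a heterozygote excess.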

4.2.3 Homozygote excesses and heterozygote deficiencies

Genotypes can be classified as homozygous or heterozygous. Phenotypic traits are determined by the genotypes, and it is therefore important to know whether a specific locus shows a homozygote or a heterozygote excess. The homozygous genotypes are, say, AA and aa, and the heterozygous genotype is Aa. The question is: when do homozygotes outnumber heterozygotes?

• Homozygote proportion:

$$p^2 + q^2 + 2pq\theta = (p + q)^2 - 2pq + 2pq\theta = 1 - 2pq(1 - \theta)$$

• Heterozygote proportion: $2pq(1 - \theta)$

1) Thus homozygotes outnumber heterozygotes if

$$1 - 2pq(1 - \theta) > 2pq(1 - \theta) \;\Rightarrow\; \theta > \underbrace{1 - \frac{1}{4pq}}_{\le 0}$$

2) Heterozygotes outnumber homozygotes if

$$1 - 2pq(1 - \theta) < 2pq(1 - \theta) \;\Rightarrow\; \theta < \underbrace{1 - \frac{1}{4pq}}_{\le 0}$$
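Because 4pq is at most 1, the threshold 1 − 1/(4pq) is never positive, so any θ > 0 already implies that homozygotes outnumber heterozygotes. The sketch below evaluates both proportions and the threshold; the function names and the example values of p and θ are hypothetical.

```python
def homozygote_proportion(p, theta):
    """Proportion of AA and aa genotypes: 1 - 2pq(1 - theta)."""
    q = 1.0 - p
    return 1.0 - 2.0 * p * q * (1.0 - theta)

def heterozygote_proportion(p, theta):
    """Proportion of Aa genotypes: 2pq(1 - theta)."""
    q = 1.0 - p
    return 2.0 * p * q * (1.0 - theta)

def excess_threshold(p):
    """Value of theta above which homozygotes outnumber heterozygotes."""
    q = 1.0 - p
    return 1.0 - 1.0 / (4.0 * p * q)

p = 0.3
print(excess_threshold(p))  # negative, since 4pq < 1 when p differs from 0.5

# theta = 0 lies above the threshold: homozygotes are in the majority.
print(homozygote_proportion(p, 0.0), heterozygote_proportion(p, 0.0))

# theta = -0.3 lies below the threshold: heterozygotes are in the majority.
print(homozygote_proportion(p, -0.3), heterozygote_proportion(p, -0.3))
```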

Homozygote excesses and heterozygote deficiencies have important genetic meanings, but the tests of the Hardy-Weinberg proportions in wide current use, such as the Chi-squared test and the exact test (Guo and Thompson 1992) for small sample sizes and large numbers of alleles, do not take homozygote excesses and heterozygote deficiencies into account, because the hypotheses they test are two-sided.

4.3 Maximum Likelihood estimates

Theoretically, the frequencies $(n_1, n_2, n_3)$ of the genotypes AA, Aa, and aa have a multinomial distribution: $\text{Multinomial}(n,\ p^2 + \theta pq,\ 2pq(1 - \theta),\ q^2 + \theta pq)$. The parameters of the model are p and θ, which are unknown. We employ the Maximum Likelihood method to get the estimates:

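The likelihood, omitting the multinomial coefficient (which does not depend on p or θ), can be written as:

$$L = (p^2 + \theta pq)^{n_1}\,[2pq(1 - \theta)]^{n_2}\,(q^2 + \theta pq)^{n_3}$$

$$\ln L(p, \theta) = n_1 \ln(p^2 + \theta pq) + n_2 \ln(2pq(1 - \theta)) + n_3 \ln(q^2 + \theta pq)$$

$$\frac{\partial \ln L(p, \theta)}{\partial p} = \frac{n_1}{p^2 + \theta pq}\,(2p + \theta(1 - 2p)) + \frac{n_2}{2pq(1 - \theta)}\,(2(1 - \theta)(1 - 2p)) + \frac{n_3}{q^2 + \theta pq}\,(-2q + \theta(1 - 2p))$$

$$\frac{\partial \ln L(p, \theta)}{\partial \theta} = \frac{n_1}{p^2 + \theta pq}\,(pq) + \frac{n_2}{2pq(1 - \theta)}\,(-2pq) + \frac{n_3}{q^2 + \theta pq}\,(pq)$$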

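Solve the following equations for p and θ:

$$\begin{cases} \dfrac{\partial \ln L(p, \theta)}{\partial p} = 0 \\[8pt] \dfrac{\partial \ln L(p, \theta)}{\partial \theta} = 0 \end{cases} \;\Rightarrow\; \begin{cases} \hat{p} = \dfrac{2n_1 + n_2}{2n} \\[8pt] \hat{\theta} = \dfrac{4 n_1 n_3 - n_2^2}{(2n_1 + n_2)(n_2 + 2n_3)} \end{cases}$$

4.4 Testing the validity of HWE

4.4.1 Hypothesis Testing on θ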
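Since the tests in this section are built on the estimate of θ, it may help to see the closed-form estimates of Section 4.3 evaluated on a small example. The sketch below computes the estimates of p and θ from genotype counts and checks that the log-likelihood is lower at nearby parameter values. The function names and the genotype counts are hypothetical and serve only as an illustration.

```python
import math

def mle(n1, n2, n3):
    """Closed-form maximum likelihood estimates (p_hat, theta_hat)."""
    n = n1 + n2 + n3
    p_hat = (2 * n1 + n2) / (2 * n)
    theta_hat = (4 * n1 * n3 - n2 ** 2) / ((2 * n1 + n2) * (n2 + 2 * n3))
    return p_hat, theta_hat

def log_likelihood(p, theta, n1, n2, n3):
    """Log-likelihood of (p, theta), without the multinomial coefficient."""
    q = 1.0 - p
    return (n1 * math.log(p * p + theta * p * q)
            + n2 * math.log(2.0 * p * q * (1.0 - theta))
            + n3 * math.log(q * q + theta * p * q))

# Hypothetical counts of AA, Aa, aa in a sample of n = 100 individuals.
n1, n2, n3 = 30, 40, 30
p_hat, theta_hat = mle(n1, n2, n3)
print(p_hat, theta_hat)  # 0.5 0.2

# The log-likelihood at the estimates should exceed that at nearby points.
best = log_likelihood(p_hat, theta_hat, n1, n2, n3)
print(best > log_likelihood(p_hat, 0.1, n1, n2, n3))       # True
print(best > log_likelihood(0.45, theta_hat, n1, n2, n3))  # True
```

In this example the fitted genotype frequencies (0.30, 0.40, 0.30) coincide with the observed proportions, which is expected because the model has two free parameters and the multinomial has two degrees of freedom.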

In reality, departure from the Hardy-Weinberg law is caused not only by

consanguinity (inbreeding) but also by selection, genetic drift, assortative mating, and other

evolutionary forces (Cockerham 1973), which are beyond the scope of this dissertation. Here we

assume that the departure is caused by inbreeding alone, so that we can concentrate on studying and

interpreting inbreeding. As a first step toward an accurate and efficient measure of inbreeding

in a small population, it is important to initially resolve the single locus measure of inbreeding,

determine its sampling variance, and also build hypotheses and test their validity. In this