Lecture 8 - UCSB Department of Economics

Oct. 24, 2002 LEC #8

ECON 240A-1

Correlation and Analysis of Variance

L. Phillips

I. Introduction

Pursuing the results from the ordinary least squares estimates of the linear model

from the previous lecture, we investigate correlation, measures of goodness of fit, and

analysis of variance. Then we turn to issues of central tendency and dispersion for the

parameter estimates of the intercept and slope.

II. Correlation

Recall that in Lectures Four and Six we introduced the covariance between y and

x as a measure of the relationship between these variables,

$$E[(y - Ey)(x - Ex)] = \mathrm{Cov}(y, x). \tag{1}$$

The covariance depends on units of measurement, so it is not a relative measure. We can

go back to the linear model, Eq. (4), from the previous lecture and see the relationship

between the variance of y and the covariance of y and x:

$$y_i = a + b\,x_i + u_i. \tag{2}$$

Taking expectations,

$$E y = a + b\,E x + E u. \tag{3}$$

The mean of the error term, E u, is zero by assumption, and we have seen that the sample mean of the estimated error is zero, i.e.

$$\sum_i \hat{u}_i / n = 0.$$

Subtract Eq. (3) from Eq. (2) to write in deviation form:

$$(y - Ey) = b\,(x - Ex) + u. \tag{4}$$

Multiply both sides by (x – Ex) and take expectations. If the residual u is independent of

the explanatory variable, which is another assumption of regression analysis, then

$$E[(y - Ey)(x - Ex)] = b\,E(x - Ex)^2 + E[(x - Ex)u] = b\,\mathrm{Var}\,x. \tag{5}$$

Solving for the slope b,

$$b = \mathrm{Cov}(y, x)/\mathrm{Var}\,x. \tag{6}$$

We can estimate the parameter b using the method of moments, which substitutes the sample estimates for the population quantities Cov yx and Var x:

$$\hat{b} = \frac{\sum_i \left(y_i - \sum_i y_i/n\right)\left(x_i - \sum_i x_i/n\right)}{\sum_i \left(x_i - \sum_i x_i/n\right)\left(x_i - \sum_i x_i/n\right)}. \tag{7}$$

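This is the same formula as the ordinary least squares solution given by Eq. (15) in the previous lecture. To see this, rewrite Eq. (7) above as:

$$\hat{b} = \frac{\sum_i (y_i - \bar{y})(x_i - \bar{x})}{\sum_i (x_i - \bar{x})^2}, \tag{8}$$

and expanding,

$$\hat{b} = \frac{\sum_i (y_i x_i - \bar{y} x_i - \bar{x} y_i + \bar{y}\bar{x})}{\sum_i [x_i^2 - 2\bar{x} x_i + \bar{x}^2]}, \tag{9}$$

and taking summations,

$$\hat{b} = \frac{\sum_i y_i x_i - \bar{y}\sum_i x_i - \bar{x}\sum_i y_i + n\bar{y}\bar{x}}{\sum_i x_i^2 - 2\bar{x}\sum_i x_i + n\bar{x}^2}, \tag{10}$$

and collecting terms,

$$\hat{b} = \frac{\sum_i y_i x_i - n\bar{y}\bar{x}}{\sum_i x_i^2 - n\bar{x}^2}, \tag{11}$$

and multiplying top and bottom by n/n:

$$\hat{b} = \frac{n\sum_i y_i x_i - \left(\sum_i y_i\right)\left(\sum_i x_i\right)}{n\sum_i x_i^2 - \left(\sum_i x_i\right)^2}, \tag{12}$$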

the same as Eq. (15), as promised.
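
The equivalence of the deviation form, Eq. (8), and the raw-sum form, Eq. (12), can be checked numerically. The following is a minimal sketch in Python; the data values and the function names are hypothetical, chosen only for illustration, and are not from the lecture's data set.

```python
# Hypothetical sample data, chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]


def slope_deviation_form(x, y):
    """Eq. (8): sum of cross-deviations over sum of squared x-deviations."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    num = sum((yi - y_bar) * (xi - x_bar) for xi, yi in zip(x, y))
    den = sum((xi - x_bar) ** 2 for xi in x)
    return num / den


def slope_raw_sums(x, y):
    """Eq. (12): the same slope written with raw sums only."""
    n = len(x)
    sum_x = sum(x)
    sum_y = sum(y)
    sum_xy = sum(xi * yi for xi, yi in zip(x, y))
    sum_xx = sum(xi * xi for xi in x)
    return (n * sum_xy - sum_y * sum_x) / (n * sum_xx - sum_x ** 2)


b_dev = slope_deviation_form(x, y)
b_raw = slope_raw_sums(x, y)
print(b_dev, b_raw)  # the two forms agree up to floating-point error
```

The deviation form is usually the safer one to compute with, since the raw-sum form subtracts two large numbers when the data are far from zero.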

We can use Eq. (4) to pursue the relationship between variances of y, x, and u.

Square both sides of Eq. (4) and take expectations:

$$E(y - Ey)^2 = b^2 E(x - Ex)^2 + 2b\,E[(x - Ex)u] + E u^2, \tag{13}$$

or

$$\mathrm{Var}\,y = b^2\,\mathrm{Var}\,x + \mathrm{Var}\,u, \tag{14}$$

since another assumption of least squares, as discussed above, is that the explanatory variable, x, is independent of the error u, so their covariance is zero, i.e. $E[(x - Ex)u] = 0$. Thus the total variance in y can be decomposed into two parts, the variance explained by y's dependence on x, $b^2\,\mathrm{Var}\,x$, called the signal, and the unexplained variance, $\mathrm{Var}\,u$, called the noise.

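Combining Eqs. (6) and (14),

$$\mathrm{Var}\,y = [\mathrm{Cov}(y, x)]^2/\mathrm{Var}\,x + \mathrm{Var}\,u, \tag{15}$$

and dividing by the variance of y:

$$1 = \frac{[\mathrm{Cov}(y, x)]^2}{\mathrm{Var}\,x\,\mathrm{Var}\,y} + \frac{\mathrm{Var}\,u}{\mathrm{Var}\,y}, \tag{16}$$

where 1 - Var u/Var y is one minus the ratio of the unexplained variance to the total variance, i.e.

$$1 - \mathrm{Var}\,u/\mathrm{Var}\,y \equiv 1 - \text{unexplained variance}/\text{total variance}, \tag{17}$$

$$\equiv (\text{total variance} - \text{unexplained variance})/\text{total variance}, \tag{18}$$

$$\equiv \text{explained variance}/\text{total variance}, \tag{19}$$

and from Eq. (16), this fraction of the total variance that is explained is:

$$\text{explained variance}/\text{total variance} = \frac{[\mathrm{Cov}(y, x)]^2}{\mathrm{Var}\,x\,\mathrm{Var}\,y}. \tag{20}$$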

Note that the covariance squared divided by the variance of y and the variance of x cancels out the units of measurement, leaving a relative measure called $R^2$, the coefficient of determination. This coefficient, which measures the fraction of the variance in y explained by dependence on x, is consequently a measure of goodness of fit.

In bivariate regression of y on x, the coefficient of determination, $R^2$, is just the square of the correlation coefficient, r, between y and x, which is a relative (unitless) measure of the interdependence of y and x:

$$r = \frac{\mathrm{Cov}(y, x)}{\sqrt{\mathrm{Var}\,y}\,\sqrt{\mathrm{Var}\,x}}, \qquad r^2 = R^2. \tag{21}$$

The sample correlation coefficient, $\hat{r}$, can be estimated by the method of moments, substituting sample estimates of sums of squares for the covariance and variances:

$$\hat{r} = \frac{\sum_i [y(i) - \bar{y}][x(i) - \bar{x}]/(n-1)}{\sqrt{\sum_i [y(i) - \bar{y}]^2/(n-1)}\,\sqrt{\sum_i [x(i) - \bar{x}]^2/(n-1)}},$$

or

$$\hat{r} = \frac{\sum_i [y(i) - \bar{y}][x(i) - \bar{x}]}{\sqrt{\sum_i [y(i) - \bar{y}]^2}\,\sqrt{\sum_i [x(i) - \bar{x}]^2}}. \tag{22}$$

The correlation coefficient ranges between minus one, if the correlation is negative and perfect, through zero for no correlation, and up to one if the correlation is positive and perfect, i.e. $-1 \le r \le 1$.
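
To illustrate why the covariance is not a relative measure while the correlation coefficient is, the sketch below computes both for a small hypothetical data set and then rescales y (for example from dollars to cents). The data and function names are assumptions made for illustration only.

```python
import math


def sample_cov(x, y):
    """Sample covariance with the (n - 1) divisor."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    return sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)


def sample_corr(x, y):
    """Eq. (22): the (n - 1) factors cancel, leaving sums of squares."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data, for illustration only.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
y_rescaled = [100.0 * yi for yi in y]  # same series in different units

cov_original = sample_cov(x, y)
cov_rescaled = sample_cov(x, y_rescaled)    # scales with the units
r_original = sample_corr(x, y)
r_rescaled = sample_corr(x, y_rescaled)     # unchanged by the rescaling

# Perfect positive and perfect negative linear relations hit the bounds.
r_plus = sample_corr(x, [1.0 + 2.0 * xi for xi in x])
r_minus = sample_corr(x, [1.0 - 2.0 * xi for xi in x])
print(cov_original, cov_rescaled, r_original, r_rescaled, r_plus, r_minus)
```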

In multivariate regression, where y depends on two or more explanatory variables or regressors, the estimated coefficient of determination, $\hat{R}^2$, can be calculated from the sum of squared residuals and the sum of squared deviations of y around its mean:

$$\hat{R}^2 = 1 - \frac{\sum_i [\hat{u}(i)]^2}{\sum_i (y_i - \bar{y})^2}. \tag{23}$$

III. Analysis of Variance

The results of a regression analysis can be summarized in a table of analysis of

variance, or ANOVA, as depicted in Table 1.

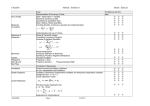

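Table 1: Table of Analysis of Variance: Bivariate Regression

| Source of Variation | Sum of Squares | Degrees of Freedom | Mean Square |
|---|---|---|---|
| Explained by x | $\hat{b}^2 \sum_i (x_i - \bar{x})^2$ | 1 | $\hat{b}^2 \sum_i (x_i - \bar{x})^2 / 1$ |
| Unexplained | $\sum_i \hat{u}_i^2$ | n-2 | $\sum_i \hat{u}_i^2 / (n-2)$ |
| Total | $\sum_i (y_i - \bar{y})^2$ | n-1 | $\sum_i (y_i - \bar{y})^2 / (n-1)$ |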

A key to understanding ANOVA is that the total sum of squared deviations of the dependent variable, y, from its mean can be partitioned into the explained sum of squares and the unexplained sum of squares, in a fashion parallel to how we partitioned the population variance. To see this, combine Eq. (6), $\hat{u}(i) = y(i) - \hat{y}(i)$, with Eq. (10), $\hat{y}(i) = \hat{a} + \hat{b}x(i)$, both from the previous lecture, and subtract the term $[\bar{y} - \hat{a} - \hat{b}\bar{x}]$, which is zero because the fitted line passes through the sample means, to obtain:

$$\hat{u}(i) = y(i) - [\hat{a} + \hat{b}x(i)] - [\bar{y} - \hat{a} - \hat{b}\bar{x}], \tag{24}$$

$$\hat{u}(i) = [y(i) - \bar{y}] - \hat{b}[x(i) - \bar{x}]. \tag{25}$$

Squaring the observed residual and summing,

$$\sum_i [\hat{u}(i)]^2 = \sum_i \{[y(i) - \bar{y}]^2 + \hat{b}^2 [x(i) - \bar{x}]^2 - 2\hat{b}[x(i) - \bar{x}][y(i) - \bar{y}]\}. \tag{26}$$

Note from Eq. (8) that

$$\hat{b} \sum_i [x(i) - \bar{x}]^2 = \sum_i [y(i) - \bar{y}][x(i) - \bar{x}], \tag{27}$$

and substituting the left hand side of Eq. (27) for the right hand side, as it appears in Eq. (26), we obtain

$$\sum_i [\hat{u}(i)]^2 = \sum_i \{[y(i) - \bar{y}]^2 - \hat{b}^2 [x(i) - \bar{x}]^2\}, \tag{28}$$

i.e. the residual sum is the difference between the total sum of squares for y, and that

explained by the dependence of y on x, which is depicted in the second column of Table

1.
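
The partition in Eq. (28) can be verified on any sample. The sketch below fits the line by OLS on hypothetical data (the values and names are assumptions for illustration) and checks that the total sum of squares equals the explained plus the unexplained sum of squares, and that the residuals sum to zero.

```python
# Hypothetical data, for illustration only.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
n = len(x)

x_bar = sum(x) / n
y_bar = sum(y) / n

# OLS slope in deviation form, Eq. (8), and the implied intercept.
sxx = sum((xi - x_bar) ** 2 for xi in x)
sxy = sum((yi - y_bar) * (xi - x_bar) for xi, yi in zip(x, y))
b_hat = sxy / sxx
a_hat = y_bar - b_hat * x_bar

# Observed residuals: u_hat(i) = y(i) - [a_hat + b_hat * x(i)].
residuals = [yi - (a_hat + b_hat * xi) for xi, yi in zip(x, y)]

total_ss = sum((yi - y_bar) ** 2 for yi in y)        # total
explained_ss = b_hat ** 2 * sxx                      # explained by x
unexplained_ss = sum(u ** 2 for u in residuals)      # residual

print(total_ss, explained_ss + unexplained_ss)
print(sum(residuals))  # zero up to floating-point error
```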

One degree of freedom is lost in a sample of size n in calculating the sample mean, which is used to calculate the total sum of squares for the dependent variable, leaving n - 1. Two degrees of freedom are lost in estimating the two regression parameters, $\hat{a}$ and $\hat{b}$, necessary to calculate $\hat{y}$ and the sum of observed squared residuals for a sample of size n, leaving n - 2. That leaves (n - 1) - (n - 2) = 1 degree of freedom for the explained sum of squares, as indicated in the third column of Table 1.

The mean squares are just the sums of squares divided by their respective degrees of freedom, i.e. sums in column two divided by degrees of freedom in column three, and are listed in column four. Under the null hypothesis that x has no explanatory power, the ratio of the explained mean square to the unexplained mean square has the F-distribution with 1 and n-2 degrees of freedom, 1 in the numerator and n-2 in the denominator:

$$F_{1,\,n-2} = \frac{\hat{b}^2 \sum_i (x_i - \bar{x})^2 / 1}{\sum_i \hat{u}_i^2 / (n-2)}. \tag{29}$$

This F test can be used to determine whether x significantly explains the variance in y, or equivalently, whether the goodness of fit is statistically significant at some critical level, $\alpha$, say 5%.

This F test can also be conducted with the coefficient of determination. Recall that $R^2$ is the ratio of explained variance to total variance, or in terms of the estimated coefficient of determination, $\hat{R}^2$, the ratio of the explained sum of squares to the total sum of squares. As expressed in Eq. (23), $1 - \hat{R}^2$ is the ratio of the unexplained sum of squares to the total sum of squares. Thus the ratio $\hat{R}^2/(1 - \hat{R}^2)$ is the ratio of the explained sum of squares to the unexplained sum of squares, and multiplying by (n - 2)/1, we have the F statistic:

$$F_{1,\,n-2} = \frac{\hat{R}^2/1}{(1 - \hat{R}^2)/(n-2)} = \frac{n-2}{1} \cdot \frac{\hat{R}^2}{1 - \hat{R}^2}. \tag{30}$$

As an example of a Table of ANOVA, we use our data set from Table 1 in lecture

seven, and the estimation of the capital asset pricing model as illustrated in Figure 4 of

that lecture.

Table 2: Table of Analysis of Variance: Bivariate Regression, CAPM

| Source of Variation | Sum of Squares | Degrees of Freedom | Mean Square |
|---|---|---|---|
| Explained by x | 129.4126 | 1 | 129.4126 |
| Unexplained | 40.63589 | 10 | 4.0636 |
| Total | 170.0485 | 11 | 15.4590 |

The sum of squared residuals from the regression is 40.63589, and divided by ten, the unexplained mean square is 4.0636. The standard deviation in the dependent variable is 3.931788, and squaring and multiplying by eleven, the total sum of squares is 170.0485. The explained sum of squares can be calculated by difference as 129.4126. The F statistic with one degree of freedom in the numerator and ten degrees of freedom in the denominator is 129.4126/4.0636 = 31.847. The critical value of $F_{1,10}$ for a level of significance of $\alpha$ = 5% is 4.96, so we can reject the null hypothesis that the market has no explanatory power for the UC stock index fund. The coefficient of determination is 0.761, and gives an equivalent F statistic. Three fourths of the variation in the UC stock index fund is explained by variation in the market, with the remainder of the volatility being specific to the UC stock index fund.
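
The arithmetic behind Table 2 can be reproduced from the three numbers quoted in the text. The sketch below is a minimal illustration: the sample size n = 12 is an assumption inferred from the 11 total degrees of freedom, and the variable names are hypothetical.

```python
import math

# Figures quoted in the text for the CAPM regression.
n = 12                     # assumption: implied by n - 1 = 11 degrees of freedom
unexplained_ss = 40.63589  # sum of squared residuals
sd_y = 3.931788            # standard deviation of the dependent variable
f_critical = 4.96          # critical F(1, 10) at the 5% level, from the text

# Sums of squares: total from the standard deviation, explained by difference.
total_ss = sd_y ** 2 * (n - 1)
explained_ss = total_ss - unexplained_ss

# Mean squares: sums of squares divided by their degrees of freedom.
ms_explained = explained_ss / 1
ms_unexplained = unexplained_ss / (n - 2)
ms_total = total_ss / (n - 1)

# F statistic two ways: Eq. (29) from mean squares, Eq. (30) from R-squared.
f_stat = ms_explained / ms_unexplained
r_squared = explained_ss / total_ss
f_from_r2 = (n - 2) * r_squared / (1.0 - r_squared)

# Standard error of the regression: root of the unexplained mean square.
se_regression = math.sqrt(ms_unexplained)

print(total_ss, explained_ss, ms_unexplained)
print(f_stat, f_from_r2, r_squared, se_regression)
print("reject H0:", f_stat > f_critical)
```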

The square root of the unexplained mean square, 2.0158, is an estimate of the square root of the variance of the error u for the regression, $\hat{\sigma}_u$, and is called the standard error of the regression.

IV. The Mean and Variance of the OLS Slope Estimate

It is useful to express the linear model in deviation form. For the sake of notation,

start with Eq. (2):

$$y(i) = a + b\,x(i) + u(i), \tag{31}$$

and sum over i,

$$\sum_i y(i) = na + b\sum_i x(i) + \sum_i u(i), \tag{32}$$

and divide by n:

$$\bar{y} = a + b\bar{x} + \bar{u}. \tag{33}$$

Subtract Eq. (33) from Eq. (31) to obtain the linear model in deviation form:

$$[y(i) - \bar{y}] = b[x(i) - \bar{x}] + [u(i) - \bar{u}]. \tag{34}$$

Use the right hand side of Eq. (34) to substitute for $[y(i) - \bar{y}]$ in the expression for the estimate of the slope given by Eq. (8):

$$\hat{b} = \frac{\sum_i \{b[x(i) - \bar{x}] + [u(i) - \bar{u}]\}[x(i) - \bar{x}]}{\sum_i [x(i) - \bar{x}]^2}, \tag{35}$$

$$\hat{b} = b + \frac{\sum_i [u(i) - \bar{u}][x(i) - \bar{x}]}{\sum_i [x(i) - \bar{x}]^2}. \tag{36}$$

Take expectations of both sides of Eq. (36):

$$E(\hat{b}) = b + \frac{\sum_i [x(i) - \bar{x}]\,E[u(i) - \bar{u}]}{\sum_i [x(i) - \bar{x}]^2}. \tag{37}$$

Note that $E\,u(i) = 0$ for all observations i, and $E\,\bar{u} = (1/n)\sum_i E[u(i)] = 0$ as well, so the expected value of the estimated slope, $\hat{b}$, is the model slope parameter, b, and as a consequence we say the estimate is unbiased.

Use Eq. (36) to subtract b from $\hat{b}$, and separate the summation in the numerator into two terms:

$$(\hat{b} - b) = \frac{1}{\sum_i [x(i) - \bar{x}]^2}\Big\{\sum_i u(i)[x(i) - \bar{x}] - \bar{u}\sum_i [x(i) - \bar{x}]\Big\}.$$

Since $\sum_i [x(i) - \bar{x}] = 0$, we obtain:

$$(\hat{b} - b) = \frac{1}{\sum_i [x(i) - \bar{x}]^2}\sum_i u(i)[x(i) - \bar{x}], \tag{38}$$

and squaring both sides and taking expectations, we obtain the variance of the estimated slope parameter:

$$E(\hat{b} - b)^2 = \Big\{\frac{1}{\sum_i [x(i) - \bar{x}]^2}\Big\}^2 \Big\{(x_1 - \bar{x})^2 E[u(1)^2] + (x_2 - \bar{x})^2 E[u(2)^2] + \dots + 2(x_1 - \bar{x})(x_2 - \bar{x}) E[u(1)u(2)] + \dots\Big\}. \tag{39}$$

An assumption of OLS is that the error terms are independent, so $E[u(i)u(j)] = 0$ for all $i \ne j$, and that the variance of the error is homoskedastic, i.e. the same for all observations, so $E[u(1)^2] = E[u(2)^2] = \dots = \sigma^2$, so that Eq. (39) simplifies to:

$$E(\hat{b} - b)^2 = \Big\{\frac{1}{\sum_i [x(i) - \bar{x}]^2}\Big\}^2 \sigma^2 \sum_i [x(i) - \bar{x}]^2 = \frac{\sigma^2}{\sum_i [x(i) - \bar{x}]^2}. \tag{40}$$

Since $\sigma^2$ is unknown we use the unexplained mean square as an estimate.
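
Unbiasedness and the variance formula in Eq. (40) can be illustrated by simulation. The sketch below is a Monte Carlo check under stated assumptions: the true parameters, the fixed regressor values, the normal error distribution, and the number of replications are all hypothetical choices, not part of the lecture.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical model: y(i) = a + b x(i) + u(i), with u(i) ~ N(0, sigma^2).
a_true, b_true, sigma = 1.0, 0.5, 1.0
x = [float(i) for i in range(20)]   # regressor held fixed across samples
n = len(x)
x_bar = sum(x) / n
sxx = sum((xi - x_bar) ** 2 for xi in x)


def simulate_slope():
    """Draw one sample of errors and return the OLS slope, Eq. (8)."""
    y = [a_true + b_true * xi + random.gauss(0.0, sigma) for xi in x]
    y_bar = sum(y) / n
    sxy = sum((yi - y_bar) * (xi - x_bar) for xi, yi in zip(x, y))
    return sxy / sxx


draws = [simulate_slope() for _ in range(5000)]
mean_b = sum(draws) / len(draws)
var_b = sum((d - mean_b) ** 2 for d in draws) / (len(draws) - 1)

theoretical_var = sigma ** 2 / sxx  # Eq. (40)

print("average slope estimate:", mean_b, "true slope:", b_true)
print("simulated variance:", var_b, "Eq. (40):", theoretical_var)
```

With independent, homoskedastic errors the average of the simulated slopes should settle near b and their variance near $\sigma^2/\sum_i [x(i) - \bar{x}]^2$, up to sampling noise.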

The estimate of the variance for the estimated slope, $\hat{b}$, for the CAPM is:

$$\mathrm{Var}(\hat{b}) = E(\hat{b} - b)^2 = \hat{\sigma}^2 / \sum_i [x(i) - \bar{x}]^2 = 4.0636/252.8868 = 0.016068, \tag{41}$$

and taking the square root, the estimated standard deviation for $\hat{b}$ is 0.1268.

V. Hypothesis Tests About the Slope

Using the estimated CAPM as an example, the null hypothesis that the UC stock

index fund does not depend on the market can be tested by the conjecture that the slope is

zero, i.e.

$$H_0: b = 0,$$

versus the alternative hypothesis that the slope is not zero,

$$H_a: b \ne 0.$$

Forming a t-statistic with 10 degrees of freedom,

$$t = (\hat{b} - b)/\hat{\sigma}(\hat{b}) = (0.715 - 0)/0.1268 = 5.64,$$

and for 10 degrees of freedom, and using a significance level of $\alpha$ = 5% for the probability of a type I error, $t_{0.025} = 2.23$ in a two-tailed test, so we reject the null hypothesis. Most regression software packages provide not only the OLS parameter estimates, but the estimates of their standard deviations as well, to facilitate tests of hypotheses about economic models.

The square of this t-statistic testing the significance of the explanatory variable in a bivariate regression equals, up to rounding, the $F_{1,10}$ statistic from Table 2 that tests whether the regression has explanatory power. In a simple bivariate regression, all of your hopes for explanation rest on a single independent variable, so the t-statistic and the F statistic are linked.
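
The link between the t-statistic and the F statistic can be checked with the figures quoted in this lecture. The sketch below is illustrative only; the variable names are hypothetical, and the small gap between $t^2$ and F comes from using the rounded slope estimate 0.715.

```python
import math

# Figures quoted in the text for the CAPM regression.
ms_unexplained = 4.0636   # estimate of the error variance
sxx = 252.8868            # sum of squared deviations of x from its mean
b_hat = 0.715             # estimated slope (rounded)
t_critical = 2.23         # two-tailed 5% critical value, 10 d.f., from the text

# Eq. (41): estimated variance and standard deviation of the slope.
var_b_hat = ms_unexplained / sxx
se_b_hat = math.sqrt(var_b_hat)

# t-statistic for H0: b = 0.
t_stat = (b_hat - 0.0) / se_b_hat
reject_null = abs(t_stat) > t_critical

# F statistic rebuilt from the sums of squares in Table 2.
total_ss, unexplained_ss = 170.0485, 40.63589
f_stat = (total_ss - unexplained_ss) / (unexplained_ss / 10)

print("se(b_hat):", se_b_hat)
print("t:", t_stat, "t squared:", t_stat ** 2, "F:", f_stat)
print("reject H0: b = 0:", reject_null)
```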

The hypothesis that the UC stock index fund is equal in volatility to the market, as measured by the S&P500, can be tested, as we saw in the previous lecture, by testing whether the slope, or beta, is equal to one:

$$H_0: b = 1,$$

versus the alternative hypothesis,

$$H_a: b \ne 1.$$

The test using the Student's t-distribution statistic is:

$$t = (\hat{b} - b)/\hat{\sigma}(\hat{b}) = (0.715 - 1)/0.1268 = -0.285/0.127 = -2.24.$$

Selecting a level of significance of $\alpha$ = 5%, $t_{0.025} = 2.23$ using a two-tailed test. We reject the null, barely, at this level of significance.
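
The same test can be written as a small reusable helper. The sketch below is a minimal illustration with hypothetical function names; it uses the standard deviation 0.1268 without further rounding, so the statistic comes out slightly larger in magnitude than the figure obtained with 0.127. The interval computed at the end is an equivalent way of viewing the two-tailed test and is added here only for illustration.

```python
# Figures quoted in the text for the CAPM regression.
b_hat = 0.715       # estimated slope (beta)
se_b_hat = 0.1268   # estimated standard deviation of the slope
t_critical = 2.23   # two-tailed 5% critical value, 10 d.f., from the text


def t_statistic(estimate, hypothesized, std_error):
    """Distance of the estimate from the hypothesized value, in standard errors."""
    return (estimate - hypothesized) / std_error


def rejects_two_tailed(t_value, critical_value):
    """Reject the null when the statistic falls in either tail."""
    return abs(t_value) > critical_value


t_beta_one = t_statistic(b_hat, 1.0, se_b_hat)
reject_beta_one = rejects_two_tailed(t_beta_one, t_critical)

# Equivalent view: slope values NOT rejected at the 5% level.
interval_low = b_hat - t_critical * se_b_hat
interval_high = b_hat + t_critical * se_b_hat

print("t for H0: b = 1:", t_beta_one, "reject:", reject_beta_one)
print("non-rejected slopes:", interval_low, "to", interval_high)
```

The upper end of the interval falls just below one, which is another way of seeing that the null hypothesis is rejected only narrowly.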