Reference - Department of Statistics, Yale

... An Introduction to Probability Theory and Its Applications, Volume II A classic. If you are serious about probability theory you need to own this book (and the companion volume I). Covers lots of material not found in other texts. Very good on characteristic functions; very little on martingales. Un ...

Inf2D-Reasoning and Agents Spring 2017

... Uncertainty and rational decisions • A general theory of rational decision making ...

Lecture Notes

... The concept of entropy originated in thermodynamics in the 19th century, where it was intimately related to heat flow and central to the second law of the subject. Later the concept played a central role in the physical illumination of thermodynamics by statistical mechanics. This was due to the effo ...

return interval - University of Colorado Boulder

... • Adjustment (or Adaptation; we’ll use the terms interchangeably, though some authors distinguish them more sharply, usually as short- vs. long-term) • Cultural adaptations have long been a part of human development, e.g., the way traditional farmers grew crops or built their homes. • Adjustment: can be purpo ...

Belief-Function Formalism

... • The impact of each distinct piece of evidence on the subsets of S is represented as a function known as the basic probability assignment (bpa). • It is a generalization of the traditional probability density function. • For example… ...
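
A minimal sketch of how a bpa is usually handled, assuming the standard Dempster-Shafer definitions: masses are assigned to subsets of the frame S, the empty set gets mass 0, all masses sum to 1, belief Bel(A) sums the masses of nonempty subsets of A, and plausibility Pl(A) sums the masses of subsets that intersect A. The frame {"x", "y", "z"} and the mass values are hypothetical. When every mass sits on a singleton, Bel and Pl coincide and the bpa reduces to an ordinary probability distribution, which is the sense in which it generalizes one.

```python
def is_valid_bpa(m, frame):
    """m maps frozensets (subsets of frame) to masses."""
    if any(not s <= frame for s in m):
        return False
    if m.get(frozenset(), 0.0) != 0.0:      # no mass on the empty set
        return False
    if any(v < 0 for v in m.values()):
        return False
    return abs(sum(m.values()) - 1.0) < 1e-9

def belief(m, a):
    """Bel(A): total mass committed to nonempty subsets of A."""
    return sum(v for s, v in m.items() if s and s <= a)

def plausibility(m, a):
    """Pl(A): total mass not ruling A out (subsets that intersect A)."""
    return sum(v for s, v in m.items() if s & a)

# Hypothetical frame and evidence
frame = frozenset({"x", "y", "z"})
m = {frozenset({"x"}): 0.5, frozenset({"x", "y"}): 0.3, frame: 0.2}
print(belief(m, frozenset({"x", "y"})), plausibility(m, frozenset({"y"})))
```

Mass on the whole frame (0.2 here) expresses ignorance: it raises the plausibility of every nonempty subset without raising the belief in any proper one.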

Consider Exercise 3.52. We define two events as follows: H = the

... By Definition 3.8, events F and H are not mutually exclusive because ______________________. We now calculate the following conditional probabilities. The probability of F given H, denoted by P(F | H), is _____ . We could use the conditional probability formula on page 138 of our text. Note that P(F ...

2-2 Distributive Property

... McCutchen’s next hit is a 2B. In other words, we want P(2B | Hit). P(2B | Hit) = P(Hit and 2B) / P(Hit). As of 5/11/17, his P(Hit and 2B) is .0413 and his P(Hit) is .215, so .0413 ÷ .215 ≈ .1921, or about a 19% chance that his next hit is a 2B ...
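
The conditional-probability calculation above can be checked with a few lines of Python. This is a small sketch; the function name `conditional_probability` is hypothetical, and the two inputs are the values quoted in the snippet.

```python
def conditional_probability(p_a_and_b, p_b):
    """P(A | B) = P(A and B) / P(B); undefined when P(B) is 0."""
    if p_b <= 0:
        raise ValueError("P(B) must be positive")
    if not 0 <= p_a_and_b <= p_b:
        raise ValueError("need 0 <= P(A and B) <= P(B)")
    return p_a_and_b / p_b

# Values quoted above: P(Hit and 2B) = .0413, P(Hit) = .215
p = conditional_probability(0.0413, 0.215)
print(f"P(2B | Hit) = {p:.4f}")  # P(2B | Hit) = 0.1921
```

Because every double is also a hit, P(Hit and 2B) here is simply P(2B); the check `p_a_and_b <= p_b` encodes that an intersection can never be more likely than either event alone.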

PROBABILITY IS SYMMETRY

... uncertainty (ii) Given new information (data), update your opinion using Bayes’ theorem (iii) Make decisions that maximize the expected gain (utility) None of the above ideas was invented by von Mises or de Finetti. ...
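
Steps (ii) and (iii) can be illustrated together in a short sketch. Everything in the setup is hypothetical: two candidate coins (fair, or biased with P(heads) = 0.8), equal prior weights, an observation of 8 heads in 10 tosses, and a utility table for an even-money $1 bet on the next toss. The code updates the prior with Bayes’ theorem and then picks the action with the highest expected utility under the posterior.

```python
from math import comb

def posterior(prior, likelihood):
    """Bayes' theorem: P(h | data) is proportional to P(data | h) * P(h)."""
    joint = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(joint.values())          # P(data), by total probability
    return {h: joint[h] / evidence for h in joint}

def best_action(belief, utility):
    """Return the action with the highest expected utility under `belief`."""
    expected = {a: sum(belief[h] * gains[h] for h in belief)
                for a, gains in utility.items()}
    return max(expected, key=expected.get), expected

# Hypothetical hypotheses and prior
prior = {"fair": 0.5, "biased": 0.5}
p_heads = {"fair": 0.5, "biased": 0.8}
heads, tosses = 8, 10                        # hypothetical observed data
likelihood = {h: comb(tosses, heads) * p**heads * (1 - p)**(tosses - heads)
              for h, p in p_heads.items()}
post = posterior(prior, likelihood)

# Hypothetical utilities: expected gain of a $1 even-money bet on heads
utility = {"bet_heads": {"fair": 0.0, "biased": 0.6},
           "decline": {"fair": 0.0, "biased": 0.0}}
action, expected = best_action(post, utility)
print(post)
print(action)  # bet_heads
```

The normalizing constant `evidence` is the law of total probability applied to the data, so the posterior always sums to 1 regardless of the prior weights chosen.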

F2006

... law of total probability and Bayes’ rule; independence of events (Sections 1.1-1.4, 1.6, 1.7, 2.1-2.4, 3.1-3.5 of text). • Discrete Random Variables: random variables; distribution functions; expectation, variance, and moments of a discrete random variable; uniform, Bernoulli, binomial, Poisson, and ...
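
For the discrete-random-variable topics listed above, expectation and variance follow directly from the probability mass function: E[X] = Σ x·p(x) and Var(X) = E[(X − E[X])²]. The sketch below uses exact fractions; the two example distributions (a fair six-sided die and a Bernoulli variable with p = 1/3) are illustrative choices, not taken from the syllabus.

```python
from fractions import Fraction

def expectation(pmf, g=lambda x: x):
    """E[g(X)] for a pmf given as a dict {value: probability}."""
    return sum(p * g(x) for x, p in pmf.items())

def variance(pmf):
    """Var(X) = E[(X - mu)^2]."""
    mu = expectation(pmf)
    return expectation(pmf, lambda x: (x - mu) ** 2)

# Discrete uniform on {1, ..., 6}: a fair die
die = {k: Fraction(1, 6) for k in range(1, 7)}
print(expectation(die), variance(die))              # 7/2 35/12

# Bernoulli(p): mean p, variance p(1 - p)
p = Fraction(1, 3)
bernoulli = {0: 1 - p, 1: p}
print(expectation(bernoulli), variance(bernoulli))  # 1/3 2/9
```

Using `Fraction` keeps the results exact, so they can be compared directly with textbook formulas such as (n² − 1)/12 for the discrete uniform and p(1 − p) for the Bernoulli.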

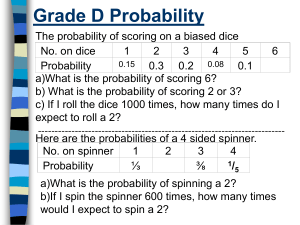

Grade D Probability

... Grade D Probability-answers The probability of scoring on a biased dice No. on dice ...

Word

... this result purely by deductive reasoning. The result does not require that any coin be tossed (after all, it’s common sense, right?). Nothing is said, however, about how one can determine whether or not a particular coin is true/fair. Unfortunately, there are some rather troublesome defects in the c ...

Formal fallacies and fallacies of language

... Judging the argument “by the man” rather than on the actual argument. ...

Probability - s3.amazonaws.com

... The probability of getting exactly three heads when I toss a coin six times is ½. False – and there are a large number of possible outcomes. The actual probabilities are shown on the next page. ...
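
The claim can be tested by brute force: list all 2⁶ = 64 equally likely head/tail sequences and count those with exactly three heads. This is a small enumeration sketch; the function name is hypothetical.

```python
from fractions import Fraction
from itertools import product

def prob_exactly_k_heads(n, k):
    """Classical probability: favourable outcomes / all equally likely outcomes."""
    outcomes = list(product("HT", repeat=n))      # all 2**n sequences
    favourable = sum(1 for seq in outcomes if seq.count("H") == k)
    return Fraction(favourable, len(outcomes))

print(prob_exactly_k_heads(6, 3))  # 5/16
for k in range(7):
    print(k, prob_exactly_k_heads(6, k))
```

Exactly three heads occurs in 20 of the 64 sequences, so the probability is 5/16 = 0.3125, well short of ½. Enumeration is only practical for small n; for larger n the count is the binomial coefficient C(n, k).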

Converses to the Strong Law of Large Numbers

... In the special case that the {X_n} are independent random variables, the tail events have a simple structure which is described by Kolmogorov’s Zero-One Law: In this case if E is a tail event, then P(E) is either zero or one. The proof consists of showing that every tail event E is independent of ...
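
In the usual proof, the argument concludes by showing that a tail event E is independent of itself. The zero-one conclusion then follows from a one-line computation:

```latex
P(E) = P(E \cap E) = P(E)\,P(E) = P(E)^2
\quad\Longrightarrow\quad P(E)\bigl(1 - P(E)\bigr) = 0
\quad\Longrightarrow\quad P(E) \in \{0, 1\}.
```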

PROBABILISTIC ALGORITHMIC RANDOMNESS §1. Introduction

... sequences, including Martin-Löf randomness (1-randomness), partial computable randomness, computable randomness, and Schnorr randomness, among others. A particularly attractive aspect of these, and other, notions of randomness is that they have equivalent definitions in all three paradigms. Martin-L ...

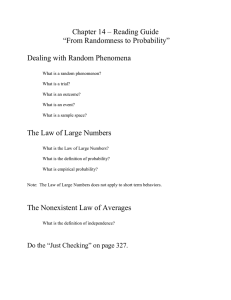

Chapter.14.Reading.Guide

... always occurs. A probability is a number between 0 and 1. For any event A, 0 ≤ P(A) ≤ 1. 2.) If a random phenomenon has only one possible outcome, it’s not very interesting (or very random). So we need to distribute the probabilities among all the outcomes a trial can have. The Probability Assignmen ...

6.3 Calculator Examples

... • Our calculator can also directly calculate binomial probabilities • binompdf(n,p,k) computes the probability that X = k • binomcdf(n,p,k) computes the probability that X ≤ k – Remember, n is the number of trials – p is the probability of success in any given trial ...
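
The two calculator functions have straightforward Python counterparts, using P(X = k) = C(n, k)·pᵏ·(1 − p)ⁿ⁻ᵏ and summing it for the cumulative version. This is a sketch that mirrors the argument order (n, p, k) described above; it is not the calculator's own implementation, and the function names are simply borrowed from it.

```python
from math import comb

def binompdf(n, p, k):
    """P(X = k) for X ~ Binomial(n, p)."""
    if not 0 <= k <= n:
        return 0.0
    return comb(n, k) * p**k * (1 - p)**(n - k)

def binomcdf(n, p, k):
    """P(X <= k): sum of binompdf over 0..k (0 if k < 0)."""
    return sum(binompdf(n, p, i) for i in range(0, min(k, n) + 1))

print(binompdf(6, 0.5, 3))   # 0.3125
print(binomcdf(6, 0.5, 3))   # 0.65625
```

For "at least k" questions, the usual trick carries over unchanged: P(X ≥ k) = 1 − binomcdf(n, p, k − 1).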

Chapter 7 Lesson 8 - Mrs.Lemons Geometry

... Chapter 7 Lesson 8 Objective: To use segment and area models to find the probabilities of events. ...
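
In a segment model the probability is a ratio of lengths, and in an area model it is a ratio of areas, assuming the point is chosen uniformly at random. The sketch below computes both ratios and, for the area case, checks the answer with a seeded Monte Carlo simulation. The dimensions (a segment of length 10 with a favourable piece of length 4, and a unit circle inside a 2 × 2 square) are hypothetical examples, not taken from the lesson.

```python
import math
import random

def segment_probability(total_length, favourable_length):
    """Uniform point on a segment: P = favourable length / total length."""
    return favourable_length / total_length

def area_probability(total_area, favourable_area):
    """Uniform point in a region: P = favourable area / total area."""
    return favourable_area / total_area

def monte_carlo_circle_in_square(trials, seed=0):
    """Estimate P(point in unit circle) for points uniform in [-1, 1] x [-1, 1]."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        x, y = rng.uniform(-1, 1), rng.uniform(-1, 1)
        if x * x + y * y <= 1:
            hits += 1
    return hits / trials

print(segment_probability(10, 4))                # 0.4
print(area_probability(2 * 2, math.pi * 1**2))   # pi/4, about 0.785
print(monte_carlo_circle_in_square(100_000))     # close to pi/4
```

The simulation is not needed to get the answer; it is there to show that the area ratio really is the long-run fraction of random points landing in the favourable region.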

Using Curve Fitting as an Example to Discuss Major Issues in ML

... Experiment: Given a function, create N training examples. • What M should we choose? (Model selection) • Given M, what w’s should we choose? (Parameter selection) ...
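
The slide's two questions can be made concrete with a small polynomial curve-fitting experiment: for each candidate order M, least squares chooses the weights w (parameter selection), and comparing error on held-out data chooses M (model selection). This is a sketch under assumptions: the target sin(2πx), the noise level 0.3, N = 10 training points, orders M = 0..5, and picking the lowest validation RMS error are all illustrative choices, not taken from the slides. It is written in pure Python (normal equations solved by Gaussian elimination) to avoid dependencies.

```python
import math
import random

def fit_polynomial(xs, ys, order):
    """Least-squares weights w_0..w_M for y ~ sum_j w_j * x**j (normal equations)."""
    k = order + 1
    phi = [[x ** j for j in range(k)] for x in xs]      # design matrix rows
    # Augmented system (Phi^T Phi | Phi^T y)
    a = [[sum(row[i] * row[j] for row in phi) for j in range(k)]
         + [sum(row[i] * y for row, y in zip(phi, ys))] for i in range(k)]
    for col in range(k):                                 # elimination with partial pivoting
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, k):
            factor = a[r][col] / a[col][col]
            for c in range(col, k + 1):
                a[r][c] -= factor * a[col][c]
    w = [0.0] * k
    for r in range(k - 1, -1, -1):                       # back substitution
        w[r] = (a[r][k] - sum(a[r][c] * w[c] for c in range(r + 1, k))) / a[r][r]
    return w

def rms_error(w, xs, ys):
    preds = [sum(wj * x ** j for j, wj in enumerate(w)) for x in xs]
    return math.sqrt(sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs))

def make_data(n, seed, noise=0.3):
    """Hypothetical target: sin(2*pi*x) plus Gaussian noise, x evenly spaced in [0, 1]."""
    rng = random.Random(seed)
    xs = [i / (n - 1) for i in range(n)]
    ys = [math.sin(2 * math.pi * x) + rng.gauss(0, noise) for x in xs]
    return xs, ys

train_x, train_y = make_data(10, seed=1)
valid_x, valid_y = make_data(25, seed=2)

results = []
for m in range(6):                                   # model selection: try each M
    w = fit_polynomial(train_x, train_y, m)          # parameter selection for this M
    results.append((m, rms_error(w, train_x, train_y), rms_error(w, valid_x, valid_y)))

best_m = min(results, key=lambda r: r[2])[0]
for m, tr, va in results:
    print(f"M={m}: train RMS={tr:.3f}, validation RMS={va:.3f}")
print("chosen M:", best_m)
```

Training error can only fall (or stay flat) as M grows, because each larger model contains the smaller ones; that is why M is chosen on separate validation data rather than on the training fit.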

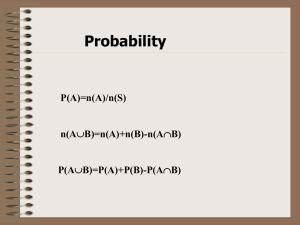

Probability

... Discuss the procedure you followed, and your findings for each color. Describe any similarities and differences between the probabilities. Describe which color is most likely to be picked at random (“closer to one”), and which color is least likely. Justify why you think this. What questions do you ...

Random Variables - University of Arizona

... • The probability distribution can be written as a table, or as a histogram (called a probability histogram). • In order to be a legitimate probability distribution, the probabilities must fall between 0 and 1 and sum to 1. ...
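
The two conditions for a legitimate distribution translate directly into a check, and a crude text version of a probability histogram can be drawn from the same table. This is a sketch; the function names, the tolerance for floating-point sums, and the example distributions (the number of heads in two fair coin tosses, plus two invalid tables) are illustrative assumptions.

```python
def is_legitimate(dist, tol=1e-9):
    """Every probability lies in [0, 1] and the probabilities sum to 1."""
    probs = list(dist.values())
    return all(0 <= p <= 1 for p in probs) and abs(sum(probs) - 1) <= tol

def text_histogram(dist, width=40):
    """One bar per value; bar length is proportional to its probability."""
    lines = []
    for x in sorted(dist):
        bar = "#" * round(dist[x] * width)
        lines.append(f"{x:>3} | {bar} {dist[x]:.3f}")
    return "\n".join(lines)

two_tosses = {0: 0.25, 1: 0.5, 2: 0.25}      # number of heads in two tosses
print(is_legitimate(two_tosses))              # True
print(is_legitimate({0: 0.6, 1: 0.6}))        # False: sums to 1.2
print(is_legitimate({0: -0.1, 1: 1.1}))       # False: negative entry
print(text_histogram(two_tosses))
```

Both conditions are needed: the last example sums to 1 but still fails because one entry falls outside [0, 1].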

INTRODUCTION TO PROBABILITY & STATISTICS I MATH 4740/8746

... INTRODUCTION TO PROBABILITY & STATISTICS I MATH 4740/8746 ...

History of randomness

In ancient history, the concepts of chance and randomness were intertwined with that of fate. Many ancient peoples threw dice to determine fate, and this later evolved into games of chance. At the same time, most ancient cultures used various methods of divination to attempt to circumvent randomness and fate.

The Chinese were perhaps the earliest people to formalize odds and chance, some 3,000 years ago. The Greek philosophers discussed randomness at length, but only in non-quantitative forms. It was only in the sixteenth century that Italian mathematicians began to formalize the odds associated with various games of chance. The invention of modern calculus had a positive impact on the formal study of randomness. In the 19th century, the concept of entropy was introduced in physics.

The early part of the twentieth century saw a rapid growth in the formal analysis of randomness, and mathematical foundations for probability were introduced, leading to its axiomatization by Andrey Kolmogorov in 1933. At the same time, the advent of quantum mechanics changed the scientific perspective on determinacy. In the mid-to-late 20th century, ideas of algorithmic information theory introduced new dimensions to the field via the concept of algorithmic randomness.

Although randomness had often been viewed as an obstacle and a nuisance for many centuries, in the twentieth century computer scientists began to realize that the deliberate introduction of randomness into computations can be an effective tool for designing better algorithms. In some cases, such randomized algorithms are able to outperform the best deterministic methods.