Sum of Squares seminar- Homework 0.

... they are not always tight. It is often easier to compute tr(Ak )1/k than trying to compute kAk directly, and as k grows this yields a better and better estimate. Exercise 3. Let A be an n × n symmetric matrix. Prove that the following are equivalent: n ...

... they are not always tight. It is often easier to compute tr(Ak )1/k than trying to compute kAk directly, and as k grows this yields a better and better estimate. Exercise 3. Let A be an n × n symmetric matrix. Prove that the following are equivalent: n ...

5. Continuity of eigenvalues Suppose we drop the mean zero

... should be either zero or at least two (to get a non-zero expectation). Hence, the number of distinct indices that occur in i can be atmost q. From a vector of indices i, we make a graph G as follows. Scan i from the left, and for each new index that occurs in i, introduce a new vertex named v1 , v2 ...

... should be either zero or at least two (to get a non-zero expectation). Hence, the number of distinct indices that occur in i can be atmost q. From a vector of indices i, we make a graph G as follows. Scan i from the left, and for each new index that occurs in i, introduce a new vertex named v1 , v2 ...

5.6 Using the inverse matrix to solve equations

... AX = B we can multiply both sides by the inverse of A, provided this exists, to give A−1 AX = A−1 B But A−1 A = I, the identity matrix. Furthermore, IX = X, because multiplying any matrix by an identity matrix of the appropriate size leaves the matrix unaltered. So X = A−1 B www.mathcentre.ac.uk ...

... AX = B we can multiply both sides by the inverse of A, provided this exists, to give A−1 AX = A−1 B But A−1 A = I, the identity matrix. Furthermore, IX = X, because multiplying any matrix by an identity matrix of the appropriate size leaves the matrix unaltered. So X = A−1 B www.mathcentre.ac.uk ...

Question 1 ......... Answer

... a2 x2 + . . . + an xn = 0, where at least one of the coefficients ai is nonzero. (a) [3 points] How many of the variables xi are free? What is the dimension of a hyperplane in Rn ? (b) [4 points] Explain what a hyperplane in R2 looks like, and give a basis for the hyperplane in R2 given by the equat ...

... a2 x2 + . . . + an xn = 0, where at least one of the coefficients ai is nonzero. (a) [3 points] How many of the variables xi are free? What is the dimension of a hyperplane in Rn ? (b) [4 points] Explain what a hyperplane in R2 looks like, and give a basis for the hyperplane in R2 given by the equat ...

3.5 Perform Basic Matrix Operations

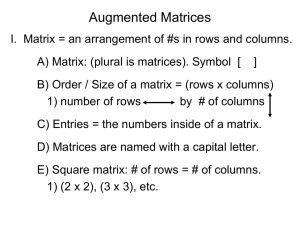

... Augmented Matrices II..Augment = to enhance, to make something bigger. A) Augmented matrix = a linear system written as a single matrix. 1) ax + by = # a b # a b # cx + dy = # ...

... Augmented Matrices II..Augment = to enhance, to make something bigger. A) Augmented matrix = a linear system written as a single matrix. 1) ax + by = # a b # a b # cx + dy = # ...

EET 465 LAB #2 - Pui Chor Wong

... From linear algebra, the basis vectors, g1, g2, …..gk, can be represented as a matrix G defined as: g ...

... From linear algebra, the basis vectors, g1, g2, …..gk, can be represented as a matrix G defined as: g ...

Orthogonal matrices, SVD, low rank

... A = U ΣV ∗ where U and V ∗ are orthogonal matrices and Σ is a diagonal matrix with non-negative diagonal entries that — according to convention — appear in descending order. The rank of a matrix A is given by the number of nonzero singular values. In computational practice, we would say that a matri ...

... A = U ΣV ∗ where U and V ∗ are orthogonal matrices and Σ is a diagonal matrix with non-negative diagonal entries that — according to convention — appear in descending order. The rank of a matrix A is given by the number of nonzero singular values. In computational practice, we would say that a matri ...

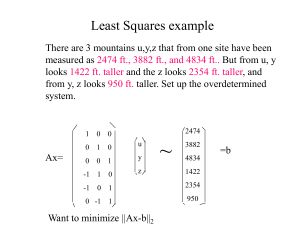

leastsquares

... •Does not require decomposition of matrix •Good for large sparse problem-like PET •Iterative method that requires matrix vector multiplication by A and AT each iteration •In exact arithmetic for n variables guaranteed to converge in n iterations- so 2 iterations for the exponential fit and 3 iterati ...

... •Does not require decomposition of matrix •Good for large sparse problem-like PET •Iterative method that requires matrix vector multiplication by A and AT each iteration •In exact arithmetic for n variables guaranteed to converge in n iterations- so 2 iterations for the exponential fit and 3 iterati ...

Jordan normal form

In linear algebra, a Jordan normal form (often called Jordan canonical form)of a linear operator on a finite-dimensional vector space is an upper triangular matrix of a particular form called a Jordan matrix, representing the operator with respect to some basis. Such matrix has each non-zero off-diagonal entry equal to 1, immediately above the main diagonal (on the superdiagonal), and with identical diagonal entries to the left and below them. If the vector space is over a field K, then a basis with respect to which the matrix has the required form exists if and only if all eigenvalues of the matrix lie in K, or equivalently if the characteristic polynomial of the operator splits into linear factors over K. This condition is always satisfied if K is the field of complex numbers. The diagonal entries of the normal form are the eigenvalues of the operator, with the number of times each one occurs being given by its algebraic multiplicity.If the operator is originally given by a square matrix M, then its Jordan normal form is also called the Jordan normal form of M. Any square matrix has a Jordan normal form if the field of coefficients is extended to one containing all the eigenvalues of the matrix. In spite of its name, the normal form for a given M is not entirely unique, as it is a block diagonal matrix formed of Jordan blocks, the order of which is not fixed; it is conventional to group blocks for the same eigenvalue together, but no ordering is imposed among the eigenvalues, nor among the blocks for a given eigenvalue, although the latter could for instance be ordered by weakly decreasing size.The Jordan–Chevalley decomposition is particularly simple with respect to a basis for which the operator takes its Jordan normal form. The diagonal form for diagonalizable matrices, for instance normal matrices, is a special case of the Jordan normal form.The Jordan normal form is named after Camille Jordan.