Why Question Machine Learning Evaluation Methods? (An

... data set using 10-fold cross-validation together with accuracy. 10-fold cross-validation consists of dividing the data set into 10 non-overlapping subsets (folds) of equal size and running 10 series of training/testing experiments, combining 9 of the subsets into a single training set and using the ...

... data set using 10-fold cross-validation together with accuracy. 10-fold cross-validation consists of dividing the data set into 10 non-overlapping subsets (folds) of equal size and running 10 series of training/testing experiments, combining 9 of the subsets into a single training set and using the ...

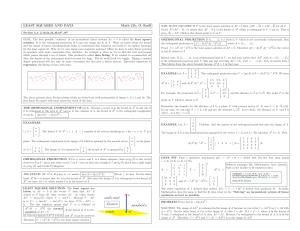

Linear fit

... GOAL. The best possible ”solution” of an inconsistent linear systems Ax = b is called the least square solution. It is the orthogonal projection of b onto the image im(A) of A. What we know about the kernel and the image of linear transformations helps to understand this situation and leads to an ex ...

... GOAL. The best possible ”solution” of an inconsistent linear systems Ax = b is called the least square solution. It is the orthogonal projection of b onto the image im(A) of A. What we know about the kernel and the image of linear transformations helps to understand this situation and leads to an ex ...

Improving the Accuracy and Efficiency of the k-means

... heuristic method to improve the efficiency. During the iteration, the data-points may get redistributed to different clusters. The method involves keeping track of the distance between each data-point and the centroid of its present nearest cluster. At the beginning of the iteration, the distance of ...

... heuristic method to improve the efficiency. During the iteration, the data-points may get redistributed to different clusters. The method involves keeping track of the distance between each data-point and the centroid of its present nearest cluster. At the beginning of the iteration, the distance of ...

Association Rule Generation using Attribute Information Gain and

... has become one of the core data mining tasks, and has attracted tremendous interest among data mining researchers and practitioners. It has an elegantly simple problem statement, that is, to find the set of all subsets of items (called itemsets) that frequently occur in many database records or tran ...

... has become one of the core data mining tasks, and has attracted tremendous interest among data mining researchers and practitioners. It has an elegantly simple problem statement, that is, to find the set of all subsets of items (called itemsets) that frequently occur in many database records or tran ...

Privacy-Preserving Databases and Data Mining

... Probabilistic learning: Calculate explicit probabilities for hypothesis, among the most practical approaches to certain types of learning problems Incremental: Each training example can incrementally increase/decrease the probability that a hypothesis is correct. Prior knowledge can be combined with ...

... Probabilistic learning: Calculate explicit probabilities for hypothesis, among the most practical approaches to certain types of learning problems Incremental: Each training example can incrementally increase/decrease the probability that a hypothesis is correct. Prior knowledge can be combined with ...

Statistical Data Analysis - Faoza Hafiz Saragih, SP, M.Sc

... Partial Least Square (PLS) PLS is an alternative method of settlement of a complex multilevel models that do not require a big size samples PLS regression is particularly useful when we need to predict a set of dependent variables from a (very) large set of independent variables (predictors) ...

... Partial Least Square (PLS) PLS is an alternative method of settlement of a complex multilevel models that do not require a big size samples PLS regression is particularly useful when we need to predict a set of dependent variables from a (very) large set of independent variables (predictors) ...

Normality distribution testing for levelling data obtained

... Abstract. Normal distribution of data is of crucial importance in data processing and hypothesis testing in geodesy. Models of geodetic measurements adjustment assume that data are normally distributed. However, results of measurements could be affected by different influences because geodetic data ...

... Abstract. Normal distribution of data is of crucial importance in data processing and hypothesis testing in geodesy. Models of geodetic measurements adjustment assume that data are normally distributed. However, results of measurements could be affected by different influences because geodetic data ...

Survey on Remotely Sensed Image Classification

... maps algorithm. All these algorithms are evaluated as per the following factors: number of clusters, size of dataset, type of dataset and type of software used. FSVM is used to enhance the SVM in reducing the effect of outliers and noises in data points and is suitable for applications, in which dat ...

... maps algorithm. All these algorithms are evaluated as per the following factors: number of clusters, size of dataset, type of dataset and type of software used. FSVM is used to enhance the SVM in reducing the effect of outliers and noises in data points and is suitable for applications, in which dat ...

A Multiobjective Genetic Algorithm for Attribute Selection

... A genetic algorithm (GA) is a search algorithm inspired by the principle of natural selection. The basic idea is to evolve a population of individuals, where each individual is a candidate solution to a given problem. Each individual is evaluated by a fitness function, which measures the quality of ...

... A genetic algorithm (GA) is a search algorithm inspired by the principle of natural selection. The basic idea is to evolve a population of individuals, where each individual is a candidate solution to a given problem. Each individual is evaluated by a fitness function, which measures the quality of ...

Object Oriented Model Classification of Satellite Image Dharamvir

... IRS Landsat satellite image is one of the main sources of information. One frame of Landsat MSS imagery consists of three digital images of the same scene in different spectral bands. Two of these are in the visible region (corresponding approximately to green and red regions of the visible spectrum ...

... IRS Landsat satellite image is one of the main sources of information. One frame of Landsat MSS imagery consists of three digital images of the same scene in different spectral bands. Two of these are in the visible region (corresponding approximately to green and red regions of the visible spectrum ...

Decision Trees Using the Minimum Entropy-of-Error Principle

... Decision trees are mathematical devices largely applied to data classification tasks, namely in data mining. The main advantageous features of decision trees are the semantic interpretation that is often possible to assign to decision rules at each tree node (a relevant aspect e.g. in medical applic ...

... Decision trees are mathematical devices largely applied to data classification tasks, namely in data mining. The main advantageous features of decision trees are the semantic interpretation that is often possible to assign to decision rules at each tree node (a relevant aspect e.g. in medical applic ...

The Distance Between two Rational Numbers

... numbers? • A hiker starts hiking at the beginning of a trail at a point which is 200 feet below sea level. He hikes to a location on the trail that is 580 feet above sea level and stops for lunch. 1. What is the vertical distance between 200 feet below sea level and 580 feet above sea level? ...

... numbers? • A hiker starts hiking at the beginning of a trail at a point which is 200 feet below sea level. He hikes to a location on the trail that is 580 feet above sea level and stops for lunch. 1. What is the vertical distance between 200 feet below sea level and 580 feet above sea level? ...

Knowledge Discovery in Databases II Lecture 5: Stream

... • Consider a real-valued random variable r whose range is R – e.g., for a probability the range is one, – for an information gain the range is log2(c), where c is the number of classes ...

... • Consider a real-valued random variable r whose range is R – e.g., for a probability the range is one, – for an information gain the range is log2(c), where c is the number of classes ...

Paper Title (use style: paper title)

... Depending on the attribute values, it creates a decision tree. The decision tree approach is most helpful in classification problem. With this system, a tree is built to model the classification method. Once the tree is built, it's applied to every tuple within the database which results in classifi ...

... Depending on the attribute values, it creates a decision tree. The decision tree approach is most helpful in classification problem. With this system, a tree is built to model the classification method. Once the tree is built, it's applied to every tuple within the database which results in classifi ...

K-nearest neighbors algorithm

In pattern recognition, the k-Nearest Neighbors algorithm (or k-NN for short) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression: In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, then the object is simply assigned to the class of that single nearest neighbor. In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.Both for classification and regression, it can be useful to assign weight to the contributions of the neighbors, so that the nearer neighbors contribute more to the average than the more distant ones. For example, a common weighting scheme consists in giving each neighbor a weight of 1/d, where d is the distance to the neighbor.The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm has nothing to do with and is not to be confused with k-means, another popular machine learning technique.