Outlier Mining in Large High-Dimensional Data Sets

... remaining data” [12]. Many data mining algorithms consider outliers as noise that must be eliminated because it degrades their predictive accuracy. For example, in classification algorithms mislabelled instances are considered outliers and thus they are removed from the training set to improve the a ...

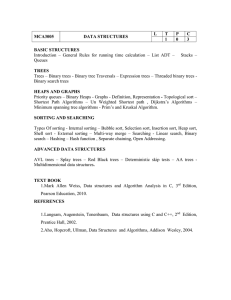

Course Plan

... *. Suppose Davis is to be inserted into the array. How many names must be moved to new locations? *. Suppose Gupta is to be deleted from the array. How many names must be moved to new locations? ...

DTU: Decision Tree for Uncertain Data

... The core issue in a decision tree induction algorithm is to decide the method of records being split. Each step of the tree-grow process needs to select an attribute test condition to divide the records into smaller subsets. A decision tree algorithm must provide a method for specifying the test con ...

ELKI: A Software System for Evaluation of Subspace Clustering

... A wealth of data-mining approaches is provided by the almost “classical” open source machine learning framework Weka [1]. We consider Weka as the most prominent and popular environment for data mining algorithms. However, the focus and strength of Weka is mainly located in the area of classification, ...

A Novel Density based improved k

... "nearest-neighbour" queries for the points in the dataset. If the data is ‘d’-dimensional and there are ‘N’ points in the dataset, the cost of a single iteration is O(kdN). As one would have to run several iterations, it is generally not feasible to run the naïve k-means algorithm for large number of ...

APPLICATION OF ARTIFICIAL INTELLIGENCE BASED

... the training vectors in high dimensional feature space. Each vector is labeled by its class. A SVM views a classification problem as a quadratic optimization problem. It avoids the “curse of dimensionality” by placing an upper bound on the margin between the different classes. This makes it easy to ...

Feature Selection Algorithm with Discretization and PSO

... combinatorial optimization problems to continuous optimization problems, single and multi-objective problems, etc. In this study, we propose a new discretization method that is applied for continuous attributes to convert the discrete values after applied feature subset selection based on PSO techni ...

Defense Presentation

... Issues for Object Detection • What semi-supervised approaches are applicable? – Ability to handle object detection problem uniqueness. – Compatibility with existing detector implementations. ...

Snap-drift ADaptive FUnction Neural Network (SADFUNN) for Optical and Pen-Based Handwritten Digit Recognition

... case, although 7494 patterns need to be classified, but every 250 samples are from the same writer, many similar samples exist), the learned dSDNN is ready to supply ADFUNN for pattern recognition. The training patterns are introduced to dSDNN again but without learning. The winning F2 nodes, whose ...

C - delab-auth

... Instead of using the Euclidean distance we can use the Squared Euclidean distance ...
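
One reason the substitution in the snippet above works for nearest-neighbour style comparisons is that squaring is monotonic on non-negative values, so ranking points by squared distance gives the same order as ranking by Euclidean distance while skipping the square root. The following is a minimal sketch of that idea; the function names `squared_euclidean` and `nearest_index` are illustrative and do not come from the source.

```python
import math


def squared_euclidean(p, q):
    """Sum of squared coordinate differences (no square root taken)."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def nearest_index(points, query):
    """Index of the point closest to query, compared by squared distance."""
    return min(range(len(points)),
               key=lambda i: squared_euclidean(points[i], query))


# Hypothetical sample data, for illustration only.
points = [(0.0, 0.0), (3.0, 4.0), (1.0, 1.0)]
query = (2.0, 2.0)

by_squared = nearest_index(points, query)
by_euclidean = min(range(len(points)),
                   key=lambda i: math.dist(points[i], query))
```

Both `by_squared` and `by_euclidean` select the same point here. The squared values themselves are not distances in the original units, so they should only be used for comparisons, not reported as lengths.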

K-Means and K-Medoids Data Mining Algorithms

... the dimension of the data. Several methods have been proposed in the literature for improving performance of the kmeans clustering algorithm. In this research, the most representative algorithms K-Means and K-Medoids were examined and analyzed based on their basic approach. The best algorithm in eac ...

Fuzzy-rough data mining - Aberystwyth University Users Site

... Harmony search approach • R. Diao and Q. Shen. A harmony search based approach to hybrid fuzzy-rough rule induction, Proceedings of the 21st International Conference on Fuzzy Systems, 2012. ...

CSIS 5420 Mid-term Exam

... a tiny proportion of the population certain parts of the population may be misrepresented. For instance, if 25/1000 known instances are used for training there is a good chance that the few outliers that exist may be missed. Despite their small numbers these outliers have great value and should not ...

3 Conventional Churn Prediction

... A typical approach to the problem of churn prediction is as follows: a sufficiently large data set containing churning and non-churning customers is used to construct a classifier. This classifier is an algorithm that is able to decide, given a customer data set, whet ...

Semi-supervised Learning for SVM-KNN

... A. The motivation of the methodology In many pattern classification problems, if there is plenty of unlabeled data while only a small number of labeled data is available, then we should adopt semisupervised learning strategy, the existing methods have different kinds of restrictions at present, so h ...

OCARA AS METHOD OF CLASSIFICATION AND ASSOCIATION

... This article focuses on how to integrate the two major data mining techniques namely, classification and association rules to come up with An Optimal Class Association Rule Algorithm (OCARA) as Method of Classification and Association Rules Obtaining. Classification and association rule mining algor ...

A Correlation Framework for Continuous User Authentication Using

... The methodology used to analyse the raw keystroke data using DM followed a similar principle to that described in section 2. For the purpose of this work, the data sets were split into a ratio of 9:1. The algorithm or classifier is subjected initially to the training set and then the classification ...

K-nearest neighbors algorithm

In pattern recognition, the k-Nearest Neighbors algorithm (or k-NN for short) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression:

In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, then the object is simply assigned to the class of that single nearest neighbor.

In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.

k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.

Both for classification and regression, it can be useful to assign weight to the contributions of the neighbors, so that the nearer neighbors contribute more to the average than the more distant ones. For example, a common weighting scheme consists in giving each neighbor a weight of 1/d, where d is the distance to the neighbor.

The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.

A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm has nothing to do with and is not to be confused with k-means, another popular machine learning technique.
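
The description above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes Euclidean distance, a brute-force scan over the training set, and the 1/d weighting mentioned above. The function names (`k_nearest`, `knn_classify`, `knn_regress`) and the sample data are hypothetical. When a neighbor lies at distance zero, the weighted variants simply return that neighbor's label or value, which is one possible convention for avoiding division by zero.

```python
import math
from collections import defaultdict


def k_nearest(train, query, k):
    """Return the k (distance, target) pairs closest to query.

    train is a list of (point, target) pairs; no training step is needed.
    """
    scored = [(math.dist(point, query), target) for point, target in train]
    scored.sort(key=lambda pair: pair[0])
    return scored[:k]


def knn_classify(train, query, k=3, weighted=False):
    """Majority vote among the k nearest neighbors (optionally 1/d weighted)."""
    votes = defaultdict(float)
    for d, label in k_nearest(train, query, k):
        if weighted:
            if d == 0:
                return label  # exact match: assumed convention
            votes[label] += 1.0 / d
        else:
            votes[label] += 1.0
    return max(votes, key=votes.get)


def knn_regress(train, query, k=3, weighted=False):
    """Average of the k nearest neighbors' values (optionally 1/d weighted)."""
    neighbors = k_nearest(train, query, k)
    if weighted:
        for d, value in neighbors:
            if d == 0:
                return value  # exact match: assumed convention
        weights = [1.0 / d for d, _ in neighbors]
    else:
        weights = [1.0] * len(neighbors)
    total = sum(w * value for w, (_, value) in zip(weights, neighbors))
    return total / sum(weights)


# Hypothetical 1-D data: two "a" points and one "b" point near the query.
labelled = [((0.0,), "a"), ((1.0,), "a"), ((2.1,), "b")]
plain_vote = knn_classify(labelled, (2.0,), k=3)
weighted_vote = knn_classify(labelled, (2.0,), k=3, weighted=True)

# Hypothetical regression data where the value is 10 times the coordinate.
valued = [((0.0,), 0.0), ((1.0,), 10.0), ((3.0,), 30.0)]
plain_mean = knn_regress(valued, (1.0,), k=2)
weighted_mean = knn_regress(valued, (2.0,), k=3, weighted=True)
```

With k = 3 the unweighted vote favors the two "a" points, while the 1/d weighting lets the single very close "b" point dominate, which illustrates why weighting nearer neighbors more heavily can change the outcome.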