Artificial Neural Networks - Introduction -

... Learning = learning by adaptation For example: Animals learn that the green fruits are sour and the yellowish/reddish ones are sweet. The learning happens by adapting the fruit picking behavior. Learning can be perceived as an optimisation process. When an ANN is in its SUPERVISED training or lear ...

Decision making

... • The strength of each connection is calculated from the product of the pre- and postsynaptic activities, scaled by a “learning rate” a (which determines how fast connection weights change). Δwij = a * g[i] * f[j]. • The linear associator stores associations between a pattern of neural activations in ...
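
The update rule quoted in this snippet, Δwij = a * g[i] * f[j], can be illustrated with a minimal sketch. The function name `hebbian_update`, the example activity values, and the reading of `f` as the presynaptic (input) pattern and `g` as the postsynaptic (output) pattern are assumptions made for illustration; the truncated snippet does not define them.

```python
def hebbian_update(weights, f, g, a=0.1):
    """Return new weights after one step of w[i][j] += a * g[i] * f[j].

    weights: list of rows, one row per postsynaptic unit i (assumed layout)
    f: presynaptic activities, indexed by j (assumed role)
    g: postsynaptic activities, indexed by i (assumed role)
    a: learning rate, which scales how fast the weights change
    """
    return [
        [w_ij + a * g_i * f_j for w_ij, f_j in zip(row, f)]
        for row, g_i in zip(weights, g)
    ]


# Hypothetical activity patterns, chosen only to show the arithmetic.
f = [1.0, 0.0, -1.0]
g = [0.5, 2.0]
w = [[0.0] * len(f) for _ in g]  # start with all connections at zero
w = hebbian_update(w, f, g, a=0.1)
print(w)
```

Each weight grows in proportion to the product of the two activities it connects, so a connection whose presynaptic unit is silent (`f[j] == 0`) does not change.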

Artificial neural networks – how to open the black boxes?

... Another explanation of the high model capacity of neural networks is surprising as well. Let us imagine that all the patterns, namely input and output data, can be transformed into an n-dimensional feature space H. Its dimension n is significantly higher than the number m of the input-output channels ...

Topic 4

... During training, input weights of the best matching node and its neighbours are adjusted to make them resemble the input pattern even more closely. At the completion of training, the best matching node ends up with its input weight values aligned with the input pattern and produces the strongest ...
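
The adjustment described in this snippet (move the best matching node and its neighbours toward the input pattern) can be sketched as a single training step. This is a minimal illustration, not the method of the listed document: the one-dimensional node layout, the squared Euclidean distance, the fixed `radius`, the learning rate `lr`, and the name `som_step` are all assumptions.

```python
def som_step(weights, x, lr=0.5, radius=1):
    """One self-organising-map style update on a 1-D chain of nodes.

    weights: list of weight vectors, one per node (assumed 1-D topology)
    x: input pattern
    lr: learning rate (assumed constant here)
    radius: how many positions away from the winner still get updated
    """
    def sq_dist(w):
        return sum((wi - xi) ** 2 for wi, xi in zip(w, x))

    # The best matching node is the one whose weights are closest to x.
    bmu = min(range(len(weights)), key=lambda k: sq_dist(weights[k]))

    updated = []
    for k, w in enumerate(weights):
        if abs(k - bmu) <= radius:
            # Pull the winner and its neighbours toward the input pattern.
            w = [wi + lr * (xi - wi) for wi, xi in zip(w, x)]
        updated.append(list(w))
    return bmu, updated


# Hypothetical node weights and input, chosen only for illustration.
nodes = [[0.0, 0.0], [1.0, 1.0], [4.0, 4.0], [9.0, 9.0]]
bmu, nodes_after = som_step(nodes, x=[2.0, 2.0])
print(bmu, nodes_after)
```

Practical implementations usually shrink both the learning rate and the neighbourhood radius over time; both are held constant here to keep the step easy to follow.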

Black Box Methods – Neural Networks and Support Vector

... biological neural network works, allows extremely complex patterns to be learned. The addition of a short term memory (labeled Delay in the following figure) increases the power of recurrent networks immensely. Notably, this includes the capability to understand sequences of events over a period of ...

The Evolutionary Emergence of Socially Intelligent Agents

... The use of coevolutionary models is fast becoming a dominant approach in the adaptive behavior field. This is essentially a response to the problems encountered when trying to use artificial selection to evolve complex behaviors. However, artificial selection has kept its hold so far − most systems ...

Yuste-Banbury-2006 - The Swartz Foundation

... We assessed the pathways by which excitatory and inhibitory neurotransmitters elicit postsynaptic changes in [Ca2+]i in brain slices of developing rat and cat neocortex, using fura 2. Glutamate, NMDA, and quisqualate transiently elevated [Ca2+]i in all neurons. While the quisqualate response relied ...

... I. INTRODUCTION Artificial Intelligence (AI) techniques, such as expert system (ES), fuzzy logic (FL), artificial neural network (ANN), genetic algorithm (GA), particle swarm optimization (PSO) and biologically inspired (BI) have recently been applied widely in power electronics and motor drives. Th ...

Deep Machine Learning—A New Frontier in Artificial Intelligence

... stronger mapping of their architecture to biologically-inspired models. In particular, they attempt to solve problems of learning and invariance through diverse mechanisms such as temporal analysis, in which time is considered an inseparable element of the learning process. One prominent example is ...

Artificial Spiking Neural Networks

... Artificial Spiking Neuron • The “state” (= membrane potential) is a weighted sum of impinging spikes – spike generated when potential crosses threshold, reset ...
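
The neuron described in this snippet can be sketched as a simple integrate-and-fire loop. This is an illustration under stated assumptions: the potential is accumulated across discrete time steps with no leak, the reset value is zero, and the names `simulate`, `trains`, and `weights` are hypothetical. The snippet itself only says that the state is a weighted sum of incoming spikes with a threshold and a reset.

```python
def simulate(spike_trains, weights, threshold=1.0):
    """Return the output spike train of one threshold-and-reset neuron.

    spike_trains: one list of 0/1 values per input, all of equal length
    weights: one synaptic weight per input
    threshold: potential at which the neuron emits a spike
    """
    potential = 0.0
    output = []
    for inputs in zip(*spike_trains):  # iterate over time steps
        # The state is a weighted sum of the spikes arriving at this step.
        potential += sum(w * s for w, s in zip(weights, inputs))
        if potential >= threshold:
            output.append(1)
            potential = 0.0  # reset after firing (assumed reset to zero)
        else:
            output.append(0)
    return output


# Two hypothetical input spike trains over four time steps.
trains = [[1, 0, 1, 0],
          [0, 1, 1, 0]]
weights = [0.5, 0.5]
out = simulate(trains, weights)
print(out)
```

With these weights a single incoming spike is not enough to fire the neuron; it fires once enough weighted input has accumulated to reach the threshold.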

Com1005: Machines and Intelligence

... special memories….The same neurons which retain memory traces of one experience must also participate in countless other activities” ...

Computability and Learnability of Weightless Neural Networks

... where the abbreviated notation means: WRL is the set of weighted regular languages, RL is the set of regular languages, CFL is the set of context-free languages, CSL is the set of context-sensitive languages and TZL is the set of type zero languages. In order to show that the computational power of ...

Learning with Perceptrons and Neural Networks

... Backpropagation Observations • Procedure is (relatively) efficient – All computations are local • Use inputs and outputs of current node ...

Norges teknisk-naturvitenskapelige universitet

... a) What is the Turing Test, and how is it related to the concept of Artificial Intelligence? b) What is a state space? List four important components of a state space, and explain the role of each of them. c) Use the predicates animal, dog, cat, owns, animal-lover, kills, and the constants Ole, Pusu ...

ling411-13 - Rice University

... “If neurons in the functional web are strongly linked, they should show similar response properties in neurophysiological experiments. “If the neurons of the functional web are necessary for the optimal processing of the represented entity, lesion of a significant portion of the network neurons must ...

Words in the Brain - Rice University -

... structural representation in the cortex • Answer: Yes, language is different, but – The differences are a consequence not of different (local) structure but differences of connectivity – The network does not have different kinds of structure for different kinds of information • Rather, different con ...

Neural Nets

... as noise. For example: the NN reaches convergence when weights change within the magnitude of the noise: Δwij <= noise Or, weights oscillate between two or more states. If a NN learns from too many input-output training samples, it can memorize the data. This process is called overfitting or overtra ...
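
The stopping criterion mentioned in this snippet (Δwij <= noise) can be written as a small check between two successive weight matrices. This is a sketch under assumptions: the absolute value of each change is compared against the noise level, and the name `has_converged` and the default `noise` value are hypothetical.

```python
def has_converged(old_weights, new_weights, noise=1e-3):
    """Return True when every weight changed by no more than `noise`.

    old_weights, new_weights: weight matrices (lists of rows) of equal shape
    noise: assumed noise magnitude used as the convergence tolerance
    """
    return all(
        abs(new - old) <= noise
        for old_row, new_row in zip(old_weights, new_weights)
        for old, new in zip(old_row, new_row)
    )


# Hypothetical weights before and after one training pass.
small_change = has_converged([[0.5, 0.2]], [[0.5004, 0.2]])
large_change = has_converged([[0.5, 0.2]], [[0.6, 0.2]])
print(small_change, large_change)
```

A check like this does not detect the oscillation case the snippet also mentions, where weights keep switching between two or more states without settling.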

Lecture 2

... net systematically (or in some cases, not so systematically), examining nodes, looking for a goal node. • Clearly following a cyclic path through the net is pointless because following A,B,C,D,A will not lead to any solution that could not be reached just by starting from A. • We can represent the p ...

History of Neural Computing

... History of Neural Computing • McCulloch - Pitts 1943 - showed that a “neural network” with simple logical units computes any computable function - beginning of Neural Computing, Artificial Intelligence, and Automaton Theory • Wiener 1948 - Cybernetics, first time statistical mechanics model for comp ...

IAI : Biological Intelligence and Neural Networks

... They are very noise tolerant – so they can cope with situations where normal symbolic systems would have difficulty. ...

Pattern recognition with Spiking Neural Networks: a simple training

... classifies neural networks according to their computational units into three generations: the first generation being perceptrons based on McCulloch-Pitts neurons [4], the second generation being networks such as feedforward networks where neurons apply an “activation function”, and the third generati ...

ppt - Computer Science Department

... Machine learning is programming computers to optimize a performance criterion using example data or past experience. -- Ethem Alpaydin ...

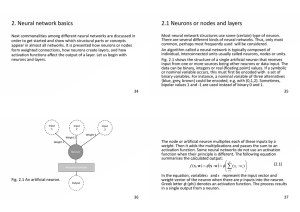

2. Neural network basics 2.1 Neurons or nodes and layers

... and output layer. Normally, all nodes of a single layer have the same properties like activation function and type like input, hidden or output. Note that these node types are used in feedforward networks, that is multilayer perceptrons. Still, virtually always the nodes of the same layer are of the ...

Hierarchical temporal memory

Hierarchical temporal memory (HTM) is an online machine learning model developed by Jeff Hawkins and Dileep George of Numenta, Inc. that models some of the structural and algorithmic properties of the neocortex. HTM is a biomimetic model based on the memory-prediction theory of brain function described by Jeff Hawkins in his book On Intelligence. HTM is a method for discovering and inferring the high-level causes of observed input patterns and sequences, thus building an increasingly complex model of the world. Jeff Hawkins states that HTM does not present any new idea or theory, but combines existing ideas to mimic the neocortex with a simple design that provides a large range of capabilities. HTM combines and extends approaches used in sparse distributed memory, Bayesian networks, and spatial and temporal clustering algorithms, while using a tree-shaped hierarchy of nodes that is common in neural networks.