Stats ch03.s03-3

... applications is that different values of X can be set and there will be a corresponding mean value of Y that results because of the underlying linear process being studied. The linear equation model computes the mean of Y for every value of X. This idea is the basis for obtaining many economic and b ...

Identifying Input Distributions

... R2 is the proportion of variation in Y that is explained by the regression model fitted on X. It will be a number between 0 and 1, where 0 represents none of the variation being explained and 1 represents 100% of the variation being explained. The standard error of the model is the average amount of sc ...

Linear Regression t

... response variable varies normally. We have not seen this assumption before. We have in the past needed to establish that data is derived from a normal distribution. We will follow the same approach here. ...

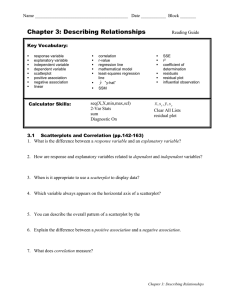

The Practice of Statistics

... independent variable dependent variable scatterplot positive association negative association linear ...

The Linear Regression Model

... Least squares prediction: formal terms • population equation for a linear model: Y = α + βX + ε • equation for a given observation: Yi = a + bXi + ei, where Yi and Xi are observed values of Y and X, and ei is the error in observation Yi. • prediction for a given value of X, based on a sample: • Yh ...

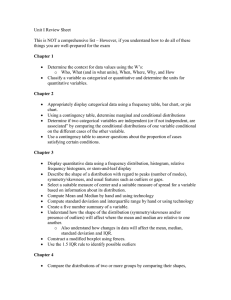

Section 10

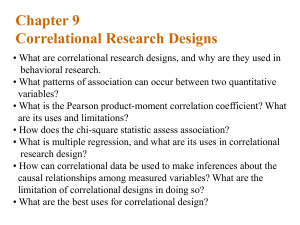

... Chapter 4 – Describing the Relation between Two Variables Section 4.1 – Scatter Diagrams and Correlation Correlation – There is a correlation between two variables when one of them is related to the other in some way. It is a measure of the strength of an association. The response variable (y value) ...

estimated t

... (X) and sales (Y), selling price (P), and disposable income (M). If we look only at Y and X, the result is Y = 120.755 + 0.434 X. One use of a regression is to make predictions. If a region had promotional expenditures of 185, the prediction is Y = 201.045, obtained by substituting 185 for X. The regression output ...
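
The substitution in the excerpt above can be checked in a few lines. This is only a sketch: the function name `predict_sales` is hypothetical, and the intercept and slope are the two coefficients quoted in the excerpt.

```python
def predict_sales(promo, intercept=120.755, slope=0.434):
    """Predicted sales Y for promotional expenditure X, using the
    fitted line Y = 120.755 + 0.434 X quoted in the excerpt."""
    return intercept + slope * promo


# Substitute X = 185, as in the excerpt.
prediction = predict_sales(185)
print(round(prediction, 3))
```

The rounded result agrees with the 201.045 stated in the excerpt.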

e388_08_Spr_Final

... c. Which equation do you prefer, if you only want to include statistically significant regressors? (Show your reasoning, including any necessary derivations. You can assume that absolute t values greater than 2 are significant, and chi-square and F-statistic values greater than 3 are significant und ...

Multiple Regression Lecture

... and are left with 60% residual variability. Ideally, we would like to explain most if not all of the original variability. Therefore, the r2 value is an indicator of how well the model fits the data (e.g., an r2 close to 1.0 indicates that we have accounted for almost all of the variability with the var ...
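
As a rough illustration of the idea in this excerpt, r2 can be computed as one minus the ratio of residual variability to total variability, so 60% residual variability corresponds to r2 = 0.40. The sketch below assumes NumPy; the function name `r_squared` and the five data points are made up for illustration and do not come from the lecture.

```python
import numpy as np


def r_squared(y, y_hat):
    """r2 = 1 - SS_res / SS_tot: the share of variability in y
    accounted for by the fitted values y_hat."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    ss_res = np.sum((y - y_hat) ** 2)      # residual (unexplained) variability
    ss_tot = np.sum((y - y.mean()) ** 2)   # total variability around the mean
    return 1.0 - ss_res / ss_tot


# Illustrative (made-up) data that lie close to a straight line.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.1])

# Least-squares line; np.polyfit returns the slope first for degree 1.
slope, intercept = np.polyfit(x, y, 1)
r2 = r_squared(y, intercept + slope * x)
print(r2)
```

Predicting the mean of y for every observation leaves all of the variability unexplained, so `r_squared` returns 0 in that case.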

Regression Towards the Mean

... Collinearity: Very often independent variables are intercorrelated (related to one another); e.g., lung cancer can be predicted from smoking, but smoking is intercorrelated with other factors such as diet, exercise, social class, medical care, etc. ...

Advantages of Multivariate Analysis

... Y increases or decreases linearly with an increase or decrease in X. For any given value of X, the values of Y are distributed Normally. The variance of Y at any given value of X is the same for all values of X. The deviation in any one value of Y has no effect on other values of Y for any given X ...

Linear Models

... predicted increases, may indicate nonconstant variance; may indicate need to log the dependent variable; may need to use weighted regression ...

Lecture12

... • Sometimes, variable transformations can bring the connection between variables to a linear form • Other times, this is not possible: The relationship describes the dependent variable as some function of independent variables and some random error. • The model may still be estimated by minimizing t ...

Linear regression

In statistics, linear regression is an approach for modeling the relationship between a scalar dependent variable y and one or more explanatory variables (or independent variables) denoted X. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, the process is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable.)

In linear regression, data are modeled using linear predictor functions, and unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, linear regression refers to a model in which the conditional mean of y given the value of X is an affine function of X. Less commonly, linear regression could refer to a model in which the median, or some other quantile of the conditional distribution of y given X, is expressed as a linear function of X. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of y given X, rather than on the joint probability distribution of y and X, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously, and to be used extensively in practical applications. This is because models which depend linearly on their unknown parameters are easier to fit than models which are non-linearly related to their parameters, and because the statistical properties of the resulting estimators are easier to determine.

Linear regression has many practical uses. Most applications fall into one of the following two broad categories:

• If the goal is prediction, or forecasting, or error reduction, linear regression can be used to fit a predictive model to an observed data set of y and X values. After developing such a model, if an additional value of X is then given without its accompanying value of y, the fitted model can be used to make a prediction of the value of y.

• Given a variable y and a number of variables X1, ..., Xp that may be related to y, linear regression analysis can be applied to quantify the strength of the relationship between y and the Xj, to assess which Xj may have no relationship with y at all, and to identify which subsets of the Xj contain redundant information about y.

Linear regression models are often fitted using the least squares approach, but they may also be fitted in other ways, such as by minimizing the "lack of fit" in some other norm (as with least absolute deviations regression), or by minimizing a penalized version of the least squares loss function as in ridge regression (L2-norm penalty) and lasso (L1-norm penalty). Conversely, the least squares approach can be used to fit models that are not linear models. Thus, although the terms "least squares" and "linear model" are closely linked, they are not synonymous.
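
To make the fitting approaches described above more concrete, the following sketch fits the same synthetic data by ordinary least squares and by ridge regression, then predicts y for a new X. It assumes NumPy; the function names (`fit_ols`, `fit_ridge`, `predict`), the penalty weight `lam`, and the simulated data are illustrative choices, not part of the text. Leaving the intercept unpenalized in the ridge fit is one common convention, assumed here.

```python
import numpy as np


def design(X):
    """Prepend a column of ones so the model includes an intercept."""
    return np.column_stack([np.ones(len(X)), X])


def fit_ols(X, y):
    """Ordinary least squares: minimize ||y - A @ coef||^2."""
    coef, *_ = np.linalg.lstsq(design(X), y, rcond=None)
    return coef  # [intercept, slope_1, ..., slope_p]


def fit_ridge(X, y, lam):
    """Ridge regression: least squares plus lam * ||slopes||^2.
    The intercept is left unpenalized (an assumed convention)."""
    A = design(X)
    penalty = lam * np.eye(A.shape[1])
    penalty[0, 0] = 0.0
    # Solve the penalized normal equations (A'A + penalty) coef = A'y.
    return np.linalg.solve(A.T @ A + penalty, A.T @ y)


def predict(coef, X_new):
    """Predicted conditional mean of y for new rows of X."""
    return design(X_new) @ coef


# Synthetic data: y = 1 + 2*X1 - 3*X2 + small Gaussian noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.1, size=200)

ols_coef = fit_ols(X, y)
ridge_coef = fit_ridge(X, y, lam=10.0)

# Predict y for a new X value given without its accompanying y.
y_hat = predict(ols_coef, np.array([[0.5, -1.0]]))
print(ols_coef, ridge_coef, y_hat)
```

With this setup the least-squares coefficients should land close to the values used to simulate the data, while the ridge slopes are shrunk toward zero. A lasso fit would need an iterative solver and is not sketched here.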