Mathematical Tools for Image Collections

Paulo Carvalho

IMPA

© Paulo Carvalho

Summer 2006

Outline

• Problems to solve

• Mathematical models

• Probability and Statistics

• Graph Cuts

Problems to solve

• Registration

• Segmentation

• Model Estimation

• Fusion

• Re-Synthesis

Problems to solve

• Basic tasks:

– Classify data according to similarities and dissimilarities

– Generate new data according to observed patterns

Example: matting

• Chuang et al. A Bayesian Approach to Digital Matting

[Figure: matting example; labels: classify, generate]

Mathematical Tools

• Probability

– Mathematical models for uncertainty

• Statistics

– Inference on probabilistic models

• Optimization

– Formulating goals as functions to be minimized (and doing it...)

Probability

• Random vs deterministic phenomena

• Uncertainty expressed by means of probability distributions.

• Describe relative likelihood.

Statistics

• Basic problem: infer attributes of probability models from samples

(Statistics: probability models + samples)
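The basic problem of statistics, inferring attributes of a probability model from samples, can be illustrated with a minimal sketch. It assumes a Normal model; the "true" parameters, the sample size and the seed are made up for the illustration and do not come from the slides.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical "true" parameters, unknown to whoever analyzes the samples.
TRUE_MU, TRUE_SIGMA = 2.0, 0.5

# Samples generated by the probability model Normal(mu, sigma^2).
samples = [random.gauss(TRUE_MU, TRUE_SIGMA) for _ in range(10_000)]

# Inference: recover the model attributes from the samples alone.
mu_hat = statistics.fmean(samples)         # sample mean estimates mu
sigma2_hat = statistics.variance(samples)  # unbiased sample variance estimates sigma^2

print(f"estimated mu      = {mu_hat:.3f}")
print(f"estimated sigma^2 = {sigma2_hat:.3f}")
```

With this many samples both estimates should land close to the values used to generate the data.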

Statistics

• Classical vs Bayesian

– Parametric models: distributions known,

except for a finite number of parameters

(e.g., Normal(µ, σ 2))

– Otherwise, non-parametric (e.g.,

distribution symmetric about µ).

Summer 2006

– Classical statistics: parameters are

unknown constants (states of Nature) and

inference is purely objective: based only on

observed data.

– Bayesian statistics: parameters are random

themselves and inference is based on

observed data and on prior (subjective)

belief (probability distribution).

9

© Paulo Carvalho

Bayesian Inference

•

•

•

•

•

•

– Start with previous (imprecise) knowledge

– Observe new data

– Revise knowledge (still imprecise, but

better informed)

Summer 2006

Summer 2006

10

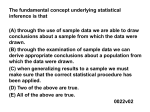

Types of inference problems

• Appropriate for learning models

• Mimics learning process

© Paulo Carvalho

8

Statistics

• Parametric vs non-parametric

© Paulo Carvalho

Summer 2006

11

Classification

Regression

Density estimation

Dimension reduction

Clustering

Model selection

© Paulo Carvalho

Summer 2006

12

2

Bayesian Inference

• Good starting point:

– A. Hertzmann. Machine Learning for Computer Graphics: A Manifesto and a Tutorial

– Provides basic references on machine learning

– Relates techniques to papers in Computer Graphics.

Bayesian Inference

• Given

– prior distribution π(θ)

– conditional distribution p(x|θ)

– data x

• Compute

– posterior distribution

$$p(\theta \mid x) = \frac{p(\theta, x)}{p(x)} = \frac{\pi(\theta)\, p(x \mid \theta)}{\int \pi(\theta)\, p(x \mid \theta)\, d\theta}$$

(Bayes’ theorem; π(θ) is the prior, p(x|θ) the likelihood, and x the observation)
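Bayes' theorem can be evaluated numerically by discretizing θ, so that the integral in the denominator becomes a sum. The sketch below assumes a Normal prior on an unknown mean θ and Normal observations with known noise; the prior, the noise level and the three data values are all made up for the illustration. The closed-form check uses the standard result for this conjugate pair.

```python
import math

def normal_pdf(x, mean, std):
    """Density of Normal(mean, std^2) at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

# Hypothetical setup: theta is an unknown mean, observations are Normal(theta, NOISE_STD^2).
PRIOR_MEAN, PRIOR_STD = 0.0, 2.0   # prior pi(theta)
NOISE_STD = 1.0                    # conditional distribution p(x | theta)
data = [1.8, 2.4, 2.1]             # observed data x (invented values)

# Grid over theta; wide enough that the posterior mass outside is negligible.
step = 0.001
grid = [-10.0 + i * step for i in range(20001)]

def likelihood(theta):
    """p(x | theta) for independent observations."""
    p = 1.0
    for x in data:
        p *= normal_pdf(x, theta, NOISE_STD)
    return p

# Numerator of Bayes' theorem: prior times likelihood.
unnormalized = [normal_pdf(t, PRIOR_MEAN, PRIOR_STD) * likelihood(t) for t in grid]
evidence = sum(unnormalized) * step            # Riemann sum approximating p(x)
posterior = [u / evidence for u in unnormalized]

# Mean of the gridded posterior, compared with the conjugate closed form.
grid_mean = sum(t * p for t, p in zip(grid, posterior)) * step
precision = 1 / PRIOR_STD ** 2 + len(data) / NOISE_STD ** 2
closed_form_mean = (PRIOR_MEAN / PRIOR_STD ** 2 + sum(data) / NOISE_STD ** 2) / precision

print(f"grid posterior mean        = {grid_mean:.4f}")
print(f"closed-form posterior mean = {closed_form_mean:.4f}")
```

The grid approach is only practical for low-dimensional θ, but it makes the roles of prior, likelihood and normalizing integral explicit.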

Bayesian Inference

• Use the posterior distribution p(θ|x) to make inferences on θ

• Classification: MAP estimate (maximum a posteriori)

$$\hat{\theta} = \arg\max_{\theta}\, p(\theta \mid x)$$

• Estimation (minimize expected quadratic error):

$$\hat{\theta} = E(\theta \mid x) = \int \theta\, p(\theta \mid x)\, d\theta$$

Segmentation/classification

• Easy to use (Bayesian or other) inference methods for each pixel.

– Local solution

– Bad overall results

• How to produce spatially-integrated results?
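A small experiment shows why per-pixel inference gives poor overall results. The sketch below labels each pixel of a noisy one-dimensional "image" by its MAP class, independently of its neighbors. The two class intensities, the noise level, the equal priors and the seed are assumptions chosen for the illustration.

```python
import math
import random

random.seed(1)  # fixed seed so the run is repeatable

# Hypothetical classes: 0 = background, 1 = foreground, with assumed mean intensities.
MEANS = {0: 0.2, 1: 0.8}
PRIOR = {0: 0.5, 1: 0.5}
NOISE_STD = 0.25

def normal_pdf(x, mean, std):
    """Density of Normal(mean, std^2) at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

# Ground truth: one background region followed by one foreground region.
truth = [0] * 100 + [1] * 100
image = [random.gauss(MEANS[k], NOISE_STD) for k in truth]

def map_label(value):
    """MAP class of a single pixel; p(x) cancels, so compare prior * likelihood."""
    return max(PRIOR, key=lambda k: PRIOR[k] * normal_pdf(value, MEANS[k], NOISE_STD))

# Local solution: every pixel is classified on its own.
labels = [map_label(v) for v in image]

errors = sum(a != b for a, b in zip(labels, truth))
transitions = sum(a != b for a, b in zip(labels, labels[1:]))

print(f"misclassified pixels: {errors} of {len(truth)}")
print(f"label changes along the scanline: {transitions} (the ground truth has 1)")
```

The ground truth changes label once, while the per-pixel result flips many times: nothing in the local rule asks neighboring pixels to agree.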

Example [Zabih et al.]

Depth segmentation

• Stereo

– Classify pixels according to depths (disparity)

– Neighboring pixels should be at similar depths

• Except at the borders of objects!

[Figures: ground truth; local method (maximum correlation)]
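A local stereo method can be sketched on a single scanline: for each pixel, try every candidate disparity and keep the one whose window matches best. This is a minimal illustration, not the method behind the figures. It scores windows with a sum of absolute differences rather than correlation, uses a synthetic scanline with one constant disparity, and wraps around at the borders; all constants are made up.

```python
import random

random.seed(2)  # fixed seed so the run is repeatable

N = 100              # pixels in the scanline
TRUE_DISPARITY = 3   # shift used to synthesize the second view
MAX_DISPARITY = 8    # largest candidate label
HALF_WINDOW = 2      # matching window covers 2 * HALF_WINDOW + 1 pixels

# Synthetic left scanline with random texture.
left = [random.random() for _ in range(N)]
# Right scanline: the left one shifted by the true disparity, plus a little noise.
right = [left[(x + TRUE_DISPARITY) % N] + random.gauss(0, 0.05) for x in range(N)]

def window_cost(x, d):
    """Sum of absolute differences between windows, for pixel x and disparity d."""
    return sum(
        abs(left[(x + k + d) % N] - right[(x + k) % N])
        for k in range(-HALF_WINDOW, HALF_WINDOW + 1)
    )

# Each pixel picks its best disparity independently of its neighbors.
disparity = [
    min(range(MAX_DISPARITY + 1), key=lambda d: window_cost(x, d))
    for x in range(N)
]

correct = sum(d == TRUE_DISPARITY for d in disparity)
print(f"pixels with the correct disparity: {correct} of {N}")
```

On richly textured synthetic data the local choice works well; on real images, with low-texture regions and occlusions, independent choices of this kind are where errors tend to appear.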

Pixel labeling problem

• Global formulation

– Attribute labels f_p ∈ {1, 2, ..., m} to pixels

– Assignment cost D_p(f_p) for assigning label f_p to pixel p.

– Separation cost V(f_p, f_q) for assigning labels f_p, f_q to neighboring pixels p, q

– Minimize total cost (energy function):

$$\min_{f} \; \sum_{p \in I} D_p(f_p) + \sum_{\text{neigh. } p,q} V(f_p, f_q)$$

Example: segmentation

• Labels are 0-1 (background-foreground)

• D_p(f_p) measures the individual likelihood to belong to the background or the foreground (e.g., a posteriori probability)

• If f_p ≠ f_q,

$$V(f_p, f_q) = \begin{cases} 2K, & \text{if } |I_p - I_q| < C \\ K, & \text{otherwise} \end{cases}$$

(discontinuity preserving)

Example: stereo

• Labels are disparity between corresponding pixels

• D_p(f_p) is difference in intensity for each depth (disparity)

• If f_p ≠ f_q,

$$V(f_p, f_q) = \begin{cases} 2K, & \text{if } |I_p - I_q| < C \\ K, & \text{otherwise} \end{cases}$$

Pixel labeling problem

• NP-Hard

• General purpose optimization methods

– Simulated annealing, or some such

– Bad answers, slowly

• Local methods

– Each pixel chooses a label independently

– Bad answers, fast

Efficient solution: min cuts

[Figure: graph with terminal nodes s and t]

Max flow – min cut theorem

• Maximum flow problem

Given a directed graph, with distinguished nodes s, t and edge capacities c_a, find the maximum flow from s to t

• [Ford & Fulkerson] The maximum s-t flow in a network equals the capacity of the minimum s-t cut

[Figure: network from s to t with edge capacities c_a]
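The maximum flow problem and the theorem can be checked on a small network. The sketch below repeatedly pushes flow along augmenting paths found by breadth-first search (the Edmonds-Karp variant of Ford and Fulkerson's method, a choice made here for brevity). The network and its capacities are hypothetical and are not the ones drawn on the slide.

```python
from collections import deque

def max_flow(capacity, s, t):
    """Return (max flow value, nodes on the source side of a minimum cut)."""
    # residual[u][v] is the capacity still available on arc u -> v;
    # reverse arcs are created with capacity 0 so flow can be undone.
    residual = {u: dict(nbrs) for u, nbrs in capacity.items()}
    for u, nbrs in capacity.items():
        for v in nbrs:
            residual.setdefault(v, {}).setdefault(u, 0)

    flow = 0
    while True:
        # Breadth-first search for a path from s to t with spare capacity.
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            # No augmenting path left: the nodes reached form the source side of a min cut.
            return flow, set(parent)

        # Recover the path, find its bottleneck, and push that much flow.
        path = []
        v = t
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        bottleneck = min(residual[u][v] for u, v in path)
        for u, v in path:
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
        flow += bottleneck

# Hypothetical network: capacity[u][v] is the capacity of arc u -> v.
capacity = {
    "s": {"a": 3, "b": 2},
    "a": {"b": 1, "t": 2},
    "b": {"t": 3},
    "t": {},
}

flow, source_side = max_flow(capacity, "s", "t")
cut_capacity = sum(
    c for u in source_side for v, c in capacity[u].items() if v not in source_side
)
print("max flow     =", flow)
print("min cut side =", sorted(source_side))
print("cut capacity =", cut_capacity)
```

The cut found when no augmenting path remains has capacity equal to the flow value, which is the equality stated by the theorem.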

Max flow – min cut theorem

• [Ford & Fulkerson] The maximum s-t flow in a network equals the capacity of the minimum s-t cut

[Figure: example network from s to t with edge capacities; max flow = 5, min cut = 5]

Max flow – min cut theorem

• Inequality easy.

• Equality shown by the flow augmenting path algorithm.

• Provides the basis for building efficient algorithms for max flow (min cut)

• State of the art: low-degree polynomial complexity, with small constants (i.e., fast)

Pixel labeling and min cuts

• Pixel labeling problems can be efficiently solved by a sequence of min cut problems.

• Each min-cut problem represents a labeling problem with only two labels (label expansion).

• Cuts are labelings, cut costs are energy functions.

• Local minima, but global for certain energy functions.

Pixel labeling and min cuts

[Figures: pixels p, q, r, s]
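The statement "cuts are labelings, cut costs are energy functions" can be made concrete for two labels on a row of four pixels p, q, r, s. The sketch below builds a graph in which every s-t cut corresponds to a 0-1 labeling with capacity equal to its energy, solves it with a small augmenting-path max flow routine, and compares the result with exhaustive search. The intensities, the data costs and the constants K and C are hypothetical; the graph construction shown is one common choice, assumed here rather than taken from the slides.

```python
from collections import deque
from itertools import product

def min_cut(capacity, source, sink):
    """Return (max flow value, nodes on the source side of a minimum cut)."""
    residual = {u: dict(nbrs) for u, nbrs in capacity.items()}
    for u, nbrs in capacity.items():
        for v in nbrs:
            residual.setdefault(v, {}).setdefault(u, 0)  # reverse arcs
    flow = 0
    while True:
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:  # breadth-first search
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return flow, set(parent)
        path, v = [], sink
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        bottleneck = min(residual[u][v] for u, v in path)
        for u, v in path:
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
        flow += bottleneck

pixels = ["p", "q", "r", "s"]
intensity = {"p": 2, "q": 7, "r": 5, "s": 9}  # made-up values on a 0..10 scale
K, C = 2, 3                                   # made-up constants

def D(pixel, label):
    """Assumed assignment cost: dark pixels prefer label 0, bright ones label 1."""
    return intensity[pixel] if label == 0 else 10 - intensity[pixel]

def weight(a, b):
    """Separation cost paid when neighbors a and b receive different labels."""
    return 2 * K if abs(intensity[a] - intensity[b]) < C else K

def energy(f):
    data = sum(D(x, f[x]) for x in pixels)
    smooth = sum(weight(a, b) for a, b in zip(pixels, pixels[1:]) if f[a] != f[b])
    return data + smooth

# Graph: a pixel left on the source side gets label 0 and cuts its arc to SINK;
# a pixel on the sink side gets label 1 and cuts its arc from SOURCE.
capacity = {"SOURCE": {}, "SINK": {}}
for x in pixels:
    capacity["SOURCE"][x] = D(x, 1)
    capacity[x] = {"SINK": D(x, 0)}
for a, b in zip(pixels, pixels[1:]):
    capacity[a][b] = weight(a, b)
    capacity[b][a] = weight(a, b)

flow, source_side = min_cut(capacity, "SOURCE", "SINK")
labeling = {x: 0 if x in source_side else 1 for x in pixels}
brute_force = min(
    energy(dict(zip(pixels, bits))) for bits in product([0, 1], repeat=len(pixels))
)

print("labeling from min cut:", labeling)
print("its energy:", energy(labeling), "| max flow:", flow, "| exhaustive minimum:", brute_force)
```

For this two-label energy the minimum cut gives a global minimum, which the exhaustive search over all 16 labelings confirms.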

Energy minimization via min cut

• For each move we choose the expansion that gives the largest decrease in the energy: a binary energy minimization subproblem

[Figure: initial solution followed by a sequence of α-expansion moves]

Example - Stereo [Zabih]

• Zabih’s web page:

http://www.cs.cornell.edu/~rdz/graphcuts.html
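The expansion moves described under "Energy minimization via min cut" can be sketched on a tiny scanline with three labels. In an expansion move for a label α, every pixel either keeps its current label or switches to α, which is a binary decision per pixel. To keep the example short, the binary subproblem is solved here by exhaustive enumeration instead of a min cut, which is only feasible for a handful of pixels. The intensities, label means and constants are hypothetical.

```python
from itertools import product

intensity = [1, 2, 6, 5, 9, 8]  # made-up scanline
means = [1, 5, 9]               # assumed typical intensity of labels 0, 1, 2
K, C = 2, 3                     # made-up constants

def D(p, label):
    """Assumed assignment cost: distance between the pixel and the label's mean."""
    return abs(intensity[p] - means[label])

def V(p, q, fp, fq):
    """Separation cost for neighboring pixels p, q with labels fp, fq."""
    if fp == fq:
        return 0
    return 2 * K if abs(intensity[p] - intensity[q]) < C else K

def energy(f):
    data = sum(D(p, f[p]) for p in range(len(f)))
    smooth = sum(V(p, p + 1, f[p], f[p + 1]) for p in range(len(f) - 1))
    return data + smooth

def best_expansion(f, alpha):
    """Best labeling reachable from f by letting any subset of pixels switch to alpha."""
    best = tuple(f)
    for switch in product([False, True], repeat=len(f)):
        g = tuple(alpha if s else old for s, old in zip(switch, f))
        if energy(g) < energy(best):
            best = g
    return best

def alpha_expansion(f, labels):
    """Apply the expansion with the largest energy decrease until none helps."""
    f = tuple(f)
    while True:
        candidates = [best_expansion(f, alpha) for alpha in labels]
        g = min(candidates, key=energy)
        if energy(g) >= energy(f):
            return f  # no expansion move lowers the energy
        f = g

initial = (0,) * len(intensity)
final = alpha_expansion(initial, range(len(means)))
global_min = min(
    energy(f) for f in product(range(len(means)), repeat=len(intensity))
)

print("initial labeling:", initial, "energy", energy(initial))
print("final labeling:  ", final, "energy", energy(final))
print("global minimum energy (exhaustive):", global_min)
```

The result is a labeling that no single expansion move can improve. With more than two labels that is a local minimum in general, so the comparison with the exhaustive global minimum is printed rather than assumed to match.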