
Review Notes - Wharton Statistics

Psychometrics wikipedia , lookup

Taylor's law wikipedia , lookup

History of statistics wikipedia , lookup

Bootstrapping (statistics) wikipedia , lookup

Foundations of statistics wikipedia , lookup

Resampling (statistics) wikipedia , lookup

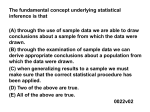

Statistical inference wikipedia , lookup

Stat 112, D. Small
Review of Ideas from Lectures 1-10

I. Basic Concepts of Statistical Inference

1. Types of Inference Problems in Statistics
A. Population inference: The goal is to make inferences about characteristics of the population distribution (e.g., mean, standard deviation) based on a sample.
B. Causal inference: The goal is to make inferences about what would have happened if subjects had been treated differently than they actually were (e.g., what would have happened if a subject had been given a drug instead of a placebo).

2. Sampling Distributions
A. Population: The collection of all items of interest to the researcher.
B. Statistic: Any quantity that can be calculated from the data.
C. Sampling distribution of a statistic: The proportion of times that a statistic will take on each possible value in repeated trials of the data collection process (randomized experiment or random sample).
D. Population distribution: The sampling distribution of a randomly chosen observation (sample of size one) from the population.
E. Measures of central tendency of a population/sampling distribution: mean, median.
F. Measure of variability of a population/sampling distribution: standard deviation (square root of the average squared deviation from the mean).
G. Standard error of a statistic: An estimate of the standard deviation of a statistic, based on a sample.

3. Hypothesis Testing
A. The goal is to decide, based on the data, which of two hypotheses is true: $H_0$ (null hypothesis) or $H_a$ (alternative hypothesis).
B. Errors in hypothesis testing: Type I error: reject $H_0$ when it is true. Type II error: accept $H_0$ when it is false. Statistical hypothesis testing controls the probability of making a Type I error, usually setting it to 0.05. It treats a Type I error as the more serious error.
C. Test statistic: A summary of the data. We rank values of the test statistic by the degree to which they are “plausible” under the null hypothesis.
D.
p-value: The probability, if the null hypothesis were true, of observing a test statistic at least as implausible as the one we actually observed. It is a measure of evidence against the null hypothesis.
E. Interpreting the p-value: If the p-value is > .10, we consider the data to provide no evidence against $H_0$; if the p-value is between .05 and .10, we consider the data to provide suggestive but inconclusive evidence against $H_0$; if the p-value is between .01 and .05, we consider the data to provide moderate evidence against $H_0$ and “reject” $H_0$; if the p-value is < .01, we consider the data to provide strong evidence against $H_0$.
F. Logic of hypothesis testing: Statistical hypothesis testing places the burden of proof on the alternative hypothesis, so we should choose the alternative hypothesis to be the hypothesis that we want to prove. Because we typically reject $H_0$ only if the p-value is < .05, i.e., only if $H_0$ is found to be implausible, we establish a strong case for $H_a$ when we reject $H_0$. If we do not reject $H_0$, however, we do not establish a strong case that $H_0$ is true. (This is analogous to a criminal trial in which a defendant is convicted only if there is strong evidence of guilt; a failure to convict does not mean that the jury thinks that the defendant is innocent.)
G. Practical vs. statistical significance: A small p-value only means that there is strong evidence that $H_0$ is not true; it does not mean that the study’s finding is of practical importance. Always accompany p-values for tests of hypotheses with confidence intervals. Confidence intervals provide information about the practical importance of the study’s findings.

4. Confidence Intervals
A. Point estimate: A single number used as the best estimate of a parameter, e.g., the sample mean is a point estimate of the population mean.
B. Confidence interval: A range of values used as an estimate of a parameter; the range of values for the parameter that are “plausible” given the data.
C.
Form of confidence interval: point estimate ± (multiplier for degree of confidence) × (standard error of point estimate). The standard error of the point estimate is an estimate of the standard deviation of the sampling distribution of the point estimate. For a 95% confidence interval, the multiplier for degree of confidence is about 2.
D. Interpretation of confidence intervals: A 95% confidence interval will contain the true parameter 95% of the time if repeated random samples are taken. It is impossible to say whether our particular confidence interval from a sample contains the true parameter. The parameter is “likely” to be in the confidence interval.

II. Study Design

1. Statistical Inferences Permitted by Study Designs
Statistical inferences (p-values, confidence intervals) are based on knowing the probability model by which the sampling distribution is generated from the population.
A. Population inference: Statistical inferences are based on the sample being randomly selected (i.e., the units are selected through a chance mechanism, with each unit having a known, nonzero chance of being selected).
B. Causal inference: Statistical inferences are based on the assumption that subjects were assigned to groups at random.
C. Observational study: A study in which the researcher observes but does not control the group membership of a subject.
D. Confounding (lurking) variables: Variables that are related to both group membership and outcome in an observational study; their presence makes it hard to establish the outcome as being a direct consequence of group membership.
E. Inference based on observational studies and non-random sampling: Statistical inference for observational studies is often made on the assumption that, even though a randomized experiment was not actually performed, the division of subjects into groups was “as good as random.” If there are confounding variables that are not controlled for, then statistical inferences for observational studies are not valid.
Similarly, statistical inference for non-random sampling is often made on the assumption that the sample was “as good as random.” If there is selection bias, then statistical inferences for non-random sampling are not valid.

2. Principles of Experimental Design
A. Randomization: Use of impersonal chance to assign experimental units to treatments produces groups that should be similar in all respects before the treatment is applied.
B. Control: Make sure all factors other than the intended treatments are kept the same in the different groups. Examples of control: use of a placebo in the control group, double blinding.
C. Replication: Use enough experimental units to reduce chance variation in the results. The law of large numbers guarantees that if enough units are used, the groups will be similar with respect to any variable.
D. Design of observational studies: Seek to choose a control group that is as similar as possible to the treatment group with respect to any known confounding variables.

III. Inference for two-sample problems

1. Causal inference for 2-group randomized experiment
A. Additive treatment effect model: Each subject $i$ has two potential outcomes: what would have happened if subject $i$ was in group 1, $Y_i$, and what would have happened if subject $i$ was in group 2, $Y_i^*$. The additive treatment effect model says that for all $i$, $Y_i^* = Y_i + \delta$.
B. An exact p-value for the additive treatment effect model is computed using the test statistic $T = \bar{Y}_2 - \bar{Y}_1$ and looking at the proportion of all regroupings of the subjects into two groups that give a test statistic at least as far from zero as the observed one.
C. An approximate p-value and confidence interval can be computed using the two-sample t-tools if either (i) the numbers of subjects in each group, $n_1, n_2$, are large ($n_1, n_2 \geq 30$) or (ii) the distribution of responses in each group is approximately normal.

2.
Inference for mean of one population based on random sample (same as inference for difference among matched pairs based on random sample of pairs)
A. Standard error of sample mean. For sampling with replacement, $SE(\bar{Y}) = \frac{s}{\sqrt{n}}$. For sampling without replacement, $SE(\bar{Y}) = \frac{s}{\sqrt{n}} \sqrt{\frac{N-n}{N-1}}$, where $n$ is the sample size and $N$ is the population size.
B. If the population is normally distributed and the sampling is done with replacement, the one-sample t-tools apply:
a. Hypothesis test: The test statistic is $t = \frac{\bar{Y} - \mu^*}{SE(\bar{Y})}$. For the two-sided test $H_0: \mu = \mu^*$ vs. $H_a: \mu \neq \mu^*$, values of $t$ that are far away from zero are considered more implausible under $H_0$. Under $H_0$, $t$ has a t distribution with $n-1$ degrees of freedom.
b. 95% confidence interval: $\bar{Y} \pm t_{n-1}(.975) \frac{s}{\sqrt{n}}$.
C. If $n \geq 30$, the one-sample t-tools are approximately valid even if the population does not appear normally distributed.

3. Inference for difference in mean of two populations based on two independent samples
A. Under the assumption that the two populations are normally distributed and have the same standard deviation, the two-sample t-tools can be used.
a. $SE(\bar{Y}_1 - \bar{Y}_2) = s_p \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}$, where $s_p$ is the pooled estimate of the standard deviation, available in JMP.
b. Hypothesis test: For testing $H_0: \mu_1 - \mu_2 = \delta^*$ vs. $H_a: \mu_1 - \mu_2 \neq \delta^*$, where $\delta^*$ is the hypothesized difference, the test statistic is $t = \frac{(\bar{Y}_1 - \bar{Y}_2) - \delta^*}{SE(\bar{Y}_1 - \bar{Y}_2)}$. Values of $t$ that are farther away from zero are considered more implausible under $H_0$.
c. 95% confidence interval for $\mu_1 - \mu_2$: $(\bar{Y}_1 - \bar{Y}_2) \pm t_{n_1+n_2-2}(.975)\, SE(\bar{Y}_1 - \bar{Y}_2)$.
B. If the two populations do not have the same standard deviation but are still normally distributed, Welch’s t test applies (Section 4.3.2).
C. Rules of thumb for validity of t-tools: The two-sample t-tools assume (i) normal populations; (ii) equal standard deviations of the two populations; (iii) the two samples are independent. Rules of thumb for approximate validity:
a. Normality: Look for gross skewness. The t-tools are approximately valid if the data are nonnormal but $n_1, n_2 \geq 30$.
b.
Equal standard deviations: Validity is okay if either (i) $n_1 \approx n_2$ or (ii) the ratio of the larger sample standard deviation to the smaller sample standard deviation is less than 2.
c. Outliers: Look for outliers in box plots, in particular very extreme points that are more than 3 box lengths away from the box. Apply the outlier examination strategy in Display 3.6.
d. Independence: Look for serial and cluster effects. Matched-pairs t-tools can be used instead of the two-sample independent t-tools if the only dependence is among members of a pair.
D. Wilcoxon Rank Sum Test: Let $F$ and $G$ denote the population distributions of group 1 and group 2 respectively. The Wilcoxon Rank Sum Test tests $H_0: F = G$ vs. $H_a: F \neq G$. The test statistic $T$ is the sum of the ranks of the observations in group 1. An approximate p-value is computed by considering $z = \frac{T - \text{mean}(T)}{SD(T)}$, where mean($T$) and SD($T$) refer to the mean and SD of $T$ under $H_0: F = G$. Under $H_0$, $z$ has approximately a standard normal distribution. Values of $z$ far away from zero are considered implausible under the null hypothesis. The Wilcoxon Rank Sum Test is valid for all population distributions but is most useful when comparing two populations with the same spread and shape, in which case rejection of $H_0$ indicates a difference in the means of the two populations.
E. Levene’s test of equality of variances: Tests $H_0: \sigma_1^2 = \sigma_2^2$, where $\sigma_1^2, \sigma_2^2$ are the variances of group 1 and group 2 respectively.

4. Outliers
A. Outliers: Observations relatively far from their estimated mean.
B. Resistance: A statistical procedure is resistant if one or a few outliers cannot have an undue influence on the result.
C. The t-tools are not resistant because they are based on means. The Wilcoxon rank sum test is resistant because it is based on ranks.
D. The outlier examination strategy of Display 3.6 should be applied when using the t-tools.

5. Log transformation
A.
Transformations: Transforming the response variables, e.g., by taking their logarithm, square root or reciprocal, is useful if the two samples have different spreads on the original scale or are nonnormal. If the two samples have approximately the same spread and are approximately normal on the transformed scale, the t-tools can be used on the transformed scale.
B. Log transformation: $Z_1 = \log(Y_1)$, $Z_2 = \log(Y_2)$, where log denotes the logarithm to base $e$.
C. If the two populations have different spreads, with the population with the larger mean having the larger spread, the log transformation can be tried. If $Z_1$ and $Z_2$ have similar standard deviations and are approximately normal (or $n_1, n_2 \geq 30$), then the t-tools can be used to analyze the difference between the two populations on the log scale.
D. Interpretation for causal inference: Suppose that $Z_1$ and $Z_2$ have approximately the same standard deviation and shape. Then an additive treatment effect model holds on the log scale and a multiplicative treatment effect model holds on the original scale. Let the additive treatment effect on the log scale be $\delta$, i.e., $Z_{2i} = Z_{1i} + \delta$. Then on the original scale, $Y_{2i} = Y_{1i} e^{\delta}$, so there is a multiplicative treatment effect of $e^{\delta}$. A point estimate for the multiplicative treatment effect is $e^{\bar{Z}_2 - \bar{Z}_1}$, and a 95% confidence interval for the multiplicative treatment effect is $(e^L, e^U)$, where $(L, U) = (\bar{Z}_2 - \bar{Z}_1) \pm t_{.025,\, n_1+n_2-2}\, SE(\bar{Z}_2 - \bar{Z}_1)$. [Note: The confidence interval is only valid if $Z_1, Z_2$ are approximately normal or if the sample sizes are large, $n_1, n_2 \geq 30$.]
E. Interpretation for population inference: Suppose that $Z_1$ and $Z_2$ are symmetric. Then $e^{\bar{Z}_2 - \bar{Z}_1}$ is an estimate of the ratio of the population median of group 2 to the population median of group 1, and a 95% confidence interval for this ratio is $(e^L, e^U)$, where $(L, U) = (\bar{Z}_2 - \bar{Z}_1) \pm t_{.025,\, n_1+n_2-2}\, SE(\bar{Z}_2 - \bar{Z}_1)$.
[Note: The confidence interval is only valid if (i) $Z_1, Z_2$ are approximately normal or the sample sizes are large, $n_1, n_2 \geq 30$, and (ii) $Z_1, Z_2$ have approximately the same standard deviation or $n_1 \approx n_2$.]
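
As a rough illustration of the log-scale interval described in III.5, the sketch below takes logs of two samples, forms the pooled-standard-deviation t interval for the difference in log-scale means, and exponentiates the endpoints. The function name, the example data, and the `t_mult` parameter are assumptions made for this illustration and are not part of the notes. The pooled standard deviation is computed with the usual formula $s_p^2 = \frac{(n_1-1)s_1^2 + (n_2-1)s_2^2}{n_1+n_2-2}$, which the notes leave to JMP. The t multiplier for $n_1 + n_2 - 2$ degrees of freedom is passed in by the caller (from a table or software) rather than computed here.

```python
import math
from statistics import mean, variance


def multiplicative_effect_ci(y1, y2, t_mult):
    """Back-transform a pooled two-sample t interval computed on the log scale.

    y1, y2 : positive responses for group 1 and group 2.
    t_mult : t multiplier for n1 + n2 - 2 degrees of freedom, supplied by
             the caller (an assumption of this sketch; it is not computed here).
    Returns (point_estimate, (lower, upper)) on the original scale.
    """
    z1 = [math.log(y) for y in y1]  # natural log, as in the notes
    z2 = [math.log(y) for y in y2]
    n1, n2 = len(z1), len(z2)

    diff = mean(z2) - mean(z1)  # difference in log-scale sample means

    # Pooled standard deviation on the log scale (standard formula).
    sp = math.sqrt(
        ((n1 - 1) * variance(z1) + (n2 - 1) * variance(z2)) / (n1 + n2 - 2)
    )
    se = sp * math.sqrt(1 / n1 + 1 / n2)

    lower, upper = diff - t_mult * se, diff + t_mult * se
    return math.exp(diff), (math.exp(lower), math.exp(upper))


# Hypothetical data: group 2 is exactly twice group 1, so the estimate is 2.
group1 = [10.0, 12.0, 15.0, 20.0]
group2 = [2 * y for y in group1]
estimate, (ci_low, ci_high) = multiplicative_effect_ci(group1, group2, t_mult=2.447)
print(estimate, ci_low, ci_high)
```

The multiplier 2.447 used in the example is the .975 quantile of the t distribution with 6 degrees of freedom. Under the causal interpretation the result estimates the multiplicative treatment effect; under the population interpretation it estimates the ratio of population medians, subject to the validity conditions in the note above.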
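
Returning to the exact p-value of III.1.B, the following is a minimal sketch of the regrouping calculation, assuming the null hypothesis is that the treatment effect is zero and that the test is two-sided. It enumerates every way of splitting the pooled subjects into groups of the original sizes and reports the proportion of regroupings whose difference in means is at least as far from zero as the observed one. The function name and the example data are hypothetical, and full enumeration is only practical for small samples.

```python
from itertools import combinations
from statistics import mean


def exact_randomization_p_value(group1, group2):
    """Two-sided exact p-value for T = mean(group 2) - mean(group 1).

    Enumerates all regroupings of the pooled subjects into groups of
    sizes len(group1) and len(group2).
    """
    pooled = list(group1) + list(group2)
    n1 = len(group1)
    observed = mean(group2) - mean(group1)

    indices = range(len(pooled))
    total = 0
    at_least_as_extreme = 0
    for idx1 in combinations(indices, n1):
        chosen = set(idx1)
        g1 = [pooled[i] for i in idx1]
        g2 = [pooled[i] for i in indices if i not in chosen]
        t = mean(g2) - mean(g1)
        total += 1
        # Small tolerance so regroupings that tie the observed value are
        # counted despite floating-point rounding.
        if abs(t) >= abs(observed) - 1e-12:
            at_least_as_extreme += 1
    return at_least_as_extreme / total


# Hypothetical data: 3 subjects per group gives 20 possible regroupings.
p = exact_randomization_p_value([1, 2, 3], [4, 5, 6])
print(p)
```

With three subjects per group there are only 20 regroupings, so the smallest attainable two-sided p-value is 2/20; this is one reason the approximate t-tools in III.1.C are used when groups are larger and enumeration becomes expensive.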