Audiology Primer

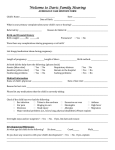

Survey
