Transcript

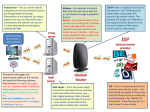

Wide Area Network Performance Analysis Methodology

Wenji Wu, Phil DeMar, Mark Bowden
Fermilab
ESCC/Internet2 Joint Techs Workshop 2007
[email protected], [email protected], [email protected]

Topics

- Problems
- End-to-End Network Performance Analysis
  - TCP transfer throughput
  - TCP throughput is network-end-system limited
  - TCP throughput is network-limited
- Network Performance Analysis Methodology
  - Performance Analysis Network Architecture
  - Performance Analysis Steps

1. Problems

- What are the performance bottlenecks of network applications in wide area networks, where do they occur, and how do they arise?
- How can network/application performance be diagnosed quickly and efficiently?

Network Application Performance Factors

[Figure: two end systems (applications, CPU, memory, disks, operating system, NIC) connected through LAN routers/switches (R/S), cables, routers, and the WAN; the components along the end-to-end path are numbered.]

- End system factors
  - CPU speed
  - Memory size
  - System load
  - Disk I/O speed
  - Operating system: R/W buffer size, disk cache size
  - NIC speed
- Network factors
  - Network delay
  - Bandwidth
  - Packet drop rate

2. End-to-End Network/Application Performance Analysis

2.1 TCP transfer throughput

- An end-to-end TCP connection can be separated into the sender, the network, and the receiver.
- TCP's adaptive windowing scheme consists of a send window ($W_S$), a congestion window ($CWND$), and a receive window ($W_R$).
  - Congestion control: congestion window
  - Flow control: receive window
- The overall end-to-end TCP throughput is determined by the sender, the network, and the receiver, which are modeled at the sender as $W_S$, $CWND$, and $W_R$, respectively.
- Given the round-trip time $RTT$, the instantaneous TCP throughput at time $t$ is:

$$\mathrm{Throughput}(t) = \frac{\min\{W_S(t),\ CWND(t),\ W_R(t)\}}{RTT(t)}$$

2.1 TCP transfer throughput (cont)

- If any of the three windows is small, especially when that condition lasts for a relatively long period of time, the overall TCP throughput is seriously degraded.
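
The throughput relation above can be tried out numerically. The following is a minimal sketch, not part of the original slides: the function names `tcp_throughput` and `limiting_window` and the sample window sizes are illustrative assumptions. Windows are in bytes and the RTT is in seconds.

```python
def tcp_throughput(w_s, cwnd, w_r, rtt):
    """Instantaneous throughput in bytes/s: min(W_S, CWND, W_R) / RTT."""
    if rtt <= 0:
        raise ValueError("rtt must be positive")
    return min(w_s, cwnd, w_r) / rtt


def limiting_window(w_s, cwnd, w_r):
    """Name of the smallest window, i.e. the one that caps throughput."""
    windows = {"W_S": w_s, "CWND": cwnd, "W_R": w_r}
    return min(windows, key=windows.get)


# Hypothetical sample: 1 MiB send and congestion windows, 64 KiB receive
# window, 100 ms round-trip time.
sample = dict(w_s=1_048_576, cwnd=1_048_576, w_r=65_536)
rate = tcp_throughput(rtt=0.1, **sample)
print(f"{rate * 8 / 1e6:.2f} Mbit/s, limited by {limiting_window(**sample)}")
# prints "5.24 Mbit/s, limited by W_R"
```

In this hypothetical case the small receive window alone holds the connection to about 5 Mbit/s, regardless of how large the other two windows are.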
- The TCP throughput is network-end-system-limited for the duration $T$ if:

$$\int_0^T W_S(t)\,dt < \int_0^T CWND(t)\,dt \quad \text{or} \quad \int_0^T W_R(t)\,dt < \int_0^T CWND(t)\,dt$$

- The TCP throughput is network-limited for the duration $T$ if:

$$\int_0^T CWND(t)\,dt < \int_0^T W_S(t)\,dt \quad \text{and} \quad \int_0^T CWND(t)\,dt < \int_0^T W_R(t)\,dt$$

2.2 TCP throughput is network-end-system limited

[Figure: (a) TCP sender: the network application in user space writes to the socket; TCP in kernel space sends from the send buffer ($B_S$). (b) TCP receiver: TCP in kernel space receives into the receive buffer ($B_R$); the network application in user space reads from the socket.]

- User/kernel space split
  - Network application in user space, in process context
  - Protocol processing in kernel, in the interrupt context
- Interrupt-driven operating system
  - Hardware interrupt -> software interrupt -> process

2.2 TCP throughput is network-end-system limited (cont)

Factors leading to relatively small windows $W_S(t)$ and $W_R(t)$:

- Poorly designed network application
- Performance-limited hardware
  - CPU, disk I/O subsystem, system buses, memory
- Heavily loaded network end systems
  - System interrupt loads are too high
    - Interrupt coalescing, jumbo frames
  - System process loads are too high
- Poorly configured TCP protocol parameters
  - TCP send/receive buffer size
  - TCP window scaling in high-speed, long-distance networks

2.3 TCP throughput is network-limited

Two facts:

- The TCP sender tries to estimate the available bandwidth in the network and represents it as $CWND$, using congestion control algorithms.
- TCP assumes packet drops are caused by network congestion. Any packet drop leads to a reduction in $CWND$.
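
To illustrate the second fact, here is a minimal sketch of how a Reno-style sender reacts to a drop during congestion avoidance: additive increase of one segment per round trip, multiplicative decrease (halving) on loss. It is a deliberate simplification, not the slides' own model: slow start, timeouts, and fast recovery are omitted, and the names `aimd_step` and `simulate`, the 1460-byte segment size, and the two-segment floor are assumptions made for illustration.

```python
def aimd_step(cwnd, mss, packet_dropped):
    """Advance the congestion window (bytes) by one round trip.

    Simplified congestion avoidance: on a drop, halve cwnd but keep
    an assumed floor of two segments; otherwise add one segment.
    """
    if packet_dropped:
        return max(cwnd // 2, 2 * mss)
    return cwnd + mss


def simulate(rounds, mss=1460, drop_rounds=frozenset()):
    """Return cwnd after each round trip; drops occur in drop_rounds."""
    cwnd = mss  # hypothetical starting window of one segment
    trace = []
    for i in range(rounds):
        cwnd = aimd_step(cwnd, mss, i in drop_rounds)
        trace.append(cwnd)
    return trace


# One drop in round 5 of an 8-round transfer, shown in units of segments.
trace = simulate(rounds=8, drop_rounds={5})
print([c // 1460 for c in trace])
# prints [2, 3, 4, 5, 6, 3, 4, 5]
```

A single drop costs several round trips of growth, which is why even a low drop rate keeps the window small on long-RTT paths.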
Two determining factors for $CWND$:

- Congestion control algorithm
- Network conditions (packet drops)

2.3 TCP throughput is network-limited (cont)

The TCP congestion control algorithm is evolving:

- Standard TCP congestion control (Reno/NewReno)
  - Slow start, congestion avoidance, retransmission timeouts, fast retransmit, and fast recovery
  - AIMD scheme for congestion avoidance
  - Performs well in traditional networks
  - Under-utilizes high-speed, long-distance networks
- High-speed TCP variants: FAST TCP, HTCP, HSTCP, BIC, and CUBIC
  - Modify the AIMD congestion avoidance scheme of standard TCP to be more aggressive
  - Keep the same fast retransmit and fast recovery algorithms
  - Solve the under-utilization problem in high-speed, long-distance networks

2.3 TCP throughput is network-limited (cont)

- With high-speed TCP variants, it is mainly packet drops that lead to a relatively small $CWND$.
- The following conditions could lead to packet drops:
  - Network congestion
  - Network infrastructure failures
  - Network end systems
    - Packet drops in Layer 2 queues due to limited queue size
    - Packet drops in the ring buffer due to system memory pressure
  - Routing changes
    - When a route changes, the interaction of routing policies, iBGP, and the MRAI timer may lead to transient disconnectivity.
  - Packet reordering
    - Packet reordering causes duplicate ACKs to be sent to the sender.
    - RFC 2581 suggests that a TCP sender should consider three or more dupACKs as an indication of packet loss.
    - With severe packet reordering, TCP might misinterpret reordering as packet loss.

2.3 TCP throughput is network-limited (cont)

- The congestion window is manipulated in units of Maximum Segment Size (MSS).
- A larger MSS entails higher TCP throughput.
- A larger MSS is more efficient for both networks and network end systems.

3. Network Performance Analysis Methodology

Network Application Performance Factors
[Figure: network application performance factors, repeated from Section 1.]

Network Performance Analysis Methodology

- End-to-end network/application performance problems are viewed as:
  - Application-related problems
    - Beyond the scope of any standardized problem analysis
  - Network end system problems
  - Network path problems
- Network performance analysis methodology
  - Analyze and appropriately tune the network end systems
  - Analyze the network path, with remediation of detected problems where feasible
  - If network end system and network path analysis do not uncover significant problems or concerns, packet trace analysis is conducted. Any performance bottleneck will manifest itself in the packet traces.

3.1 Network Performance Analysis Network Architecture

[Figure: end-to-end path between network end systems (NES) across LAN routers/switches (R/S), border routers (BR), routers, and the WAN, with the diagnosis servers attached. Legend: NESDS = Network End System Diagnosis Server; NPDS = Network Path Diagnosis Server; PTDS = Packet Trace Diagnosis Server; NES = Network End System; BR = Border Router.]

3.1 Network Performance Analysis Network Architecture (cont)

- Network end system diagnosis server
  - We use the Network Diagnostic Tool (NDT).
  - Collects various TCP network parameters in the network end systems and identifies their configuration problems
  - Identifies local network infrastructure problems such as faulty Ethernet connections, malfunctioning NICs, and Ethernet duplex mismatch
- Network path diagnosis server
  - We use OWAMP applications to collect and diagnose one-way network path statistics.
    - The forward and reverse paths might not be symmetric.
    - The forward and reverse path traffic loads are likely not symmetric.
    - The forward and reverse paths might have different QoS schemes.
  - Other tools such as ping, traceroute, pathneck, iperf, and perfSONAR could also be used.

3.1 Network Performance Analysis Network Architecture (cont)

- Packet trace diagnosis server
  - Directly connected to the border router; can port-mirror any port on the border router
  - TCPDump, used to record packet traces
  - TCPTrace, used to analyze the recorded packet traces
  - Xplot, used to examine the recorded traces visually

3.2 Network/Application Performance Analysis Steps

- Step 1: Definition of the problem space
- Step 2: Collection of network end system information and network path characteristics
- Step 3: Network end system diagnosis
- Step 4: Network path performance analysis
  - Route changes frequently?
  - Network congestion: delay variance large? Bottleneck location?
  - Infrastructure failures: examine the counters one by one
  - Packet reordering: load balancing? Parallel processing?
- Step 5: Evaluate packet trace pattern

Collection of network end system information

| Category | Component | Information to collect |
| --- | --- | --- |
| Hardware | CPU | CPU speed; CPU numbers |
| Hardware | Memory | Memory size; memory latency |
| Hardware | Bus | Maximum bus bandwidth |
| Hardware | Disk | Maximum disk I/O bandwidth |
| Hardware | NIC | Maximum bandwidth; Interrupt coalescing supported? TCP offloading supported? Jumbo frame supported? |
| Software | Operating system | Operating system type, versions; 32bit/64bit? For Linux, identify the kernel version |
| Software | System loads | Network applications running context; Maximum system background loads |
| Software | Network applications | Network application traffic generation pattern; Storage system involved? |
| Software | TCP parameters | Send/Receive (socket) buffer size; Timestamp option enabled? Window scaling option enabled? Window scaling parameter; TCP reordering threshold; Congestion control algorithm; Total TCP memory size; Maximum Segment Size; SACK enabled? D-SACK enabled? ECN enabled? |
| Software | NIC driver parameters | Device driver send/recv queue size; TCP offloading enabled? Interrupt coalescing enabled? Jumbo Frame enabled? |

Table 1 Network End Systems Information

Collection of network path characteristics

- Round-trip time (ping)
- Sequence of routers along the paths (traceroute)
- One-way delay, delay variance (owamp)
- One-way packet drop rate (owamp)
- Packet reordering (owamp)
- Current achievable throughput (iperf)
- Bandwidth bottleneck location (pathneck)

Traffic trace from Fermi to OEAW

- What happened?

Traffic trace from Fermi to Brazil

- What happened?

Conclusion

- Fermilab is developing a performance analysis methodology.
  - The objective is to put structure into troubleshooting network performance problems.
  - The project is in the early stages of development.
- We welcome collaboration and feedback.
  - Biweekly Wide-Area-Working-Group (WAWG) meeting on alternate Friday mornings
  - Send email to [email protected]