* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download LES07

Expense and cost recovery system (ECRS) wikipedia , lookup

Clusterpoint wikipedia , lookup

Data Protection Act, 2012 wikipedia , lookup

Data center wikipedia , lookup

Forecasting wikipedia , lookup

Data analysis wikipedia , lookup

3D optical data storage wikipedia , lookup

Database model wikipedia , lookup

Information privacy law wikipedia , lookup

Operational transformation wikipedia , lookup

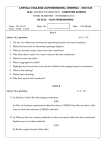

Strategies for Extracting, Transforming, and Loading Extraction, Transformation, and Loading Processes (ETL) • Extract source data • Transform and cleanse data • Index and summarize • Load data into warehouse • Detect changes • Refresh data ETL Programs Gateways Tools Operational systems Warehouse Data Staging Area • The construction site for the warehouse • Required by most implementations • Composed of ODS, flat files, or relational server tables • Frequently configured as multitier staging Operational environment Staging environment Extract Transform Transport Transform Warehouse environment Transport (Load) Preferred Traditional Staging Model Remote staging: Data staging area in its own environment, avoiding negative impact on the warehouse environment Extracting Data • • • • Routines developed to select fields from source Various data formats Rules, audit trails, error correction facilities Various techniques Examining Source Systems • Production – Legacy systems – Database systems – Vertical applications • Archive – Historical (for initial load) – Used for query analysis – May require transformations Mapping • Defines which operational attributes to use • Defines how to transform the attributes for the warehouse • Defines where the attributes exist in the warehouse Designing Extraction Processes • Analysis – Sources, technologies – Data types, quality, owners • Design options – Manual, custom, gateway, third-party – Replication, full, or delta refresh • Design issues – Batch window, volumes, data currency – Automation, skills needed, resources • Maintenance of metadata trail Importance of Data Quality • • • • Business user confidence Query and reporting accuracy Standardization Data integration Benefits of Data Quality Cleansed data is critical for: • Standardization within the warehouse • High quality matching on names and addresses • Creation of accurate rules and constraints • Prediction and analysis • Creation of a solid infrastructure to support customer-centric business intelligence • Reduction of project risk • Reduction of long term costs Guidelines for Data Quality • Operational data should not be used directly in the warehouse. • Operational data must be cleaned for each increment. • Operational data is not simply fixed by modifying applications. Transformation Transformation eliminates operational data anomalies: • Cleans • Standardizes • Presents subject-oriented data Consolidate Cleanse Extract Transform Transport Restructure Transform Transport (Load) Transformation Routines • • • • • • Cleansing data Eliminating inconsistencies Adding elements Merging data Integrating data Transforming data before load Why Transform? • • • • In-house system development Multipart keys Multiple encoding Multiple local standards Why Transform? • • • • Multiple files Missing values Duplicate values Element names Why Transform? • Element meaning • Input format • Referential integrity Why Transform? Name and address: • • • • • • • • • No unique key Missing data values (NULLs) Personal and commercial names mixed Different addresses for the same member Different names and spelling for the same member Many names on one line One name on two lines The data may be in a single field of no fixed format Each component of an address is in a specific field Integration (Match and Merge) Match and Merge schema Source Target Transformation Techniques • Merging data – Operational transactions do not usually map one-to-one with warehouse data. – Data for the warehouse is merged to provide information for analysis. • Adding keys to data Transformation Techniques Time Transformation Techniques Adding a date stamp: • Fact table – Add triggers – Recode applications – Compare tables • Dimension table • Time representation – Point in time – Time span Transformation Techniques Creating summary data: • During extraction on staging area • After loading onto the warehouse server Transformation Techniques Creating artificial keys: • Use generalized or derived keys • Maintain the uniqueness of a row • Use an administrative process to assign the key • Concatenate operational key with number • Easy to maintain • Cumbersome keys • No clean value for retrieval 109908 109908 01 Where to Transform? Choose wisely where the transformation takes place: • Operational platform • Staging area • Warehouse server When to Transform? Choose the transformation point wisely: • Workload • Environment impact • CPU use • Disk space • Network bandwidth • Parallel execution • Load window time • User information needs Designing Transformation Processes • Analysis – Sources and target mappings, business rules – Key users, metadata, grain, verify integrity of data • Design options – Programming, Tools • Design issues – Performance – Size of the staging area – Exception handling, integrity maintenance Loading Data into the Warehouse • Loading moves the data into the warehouse. • Subsequent refresh moves smaller volumes. • Business determines the cycle. Operational environment Staging environment Extract Transform Transport Transform Warehouse environment Transport (Load) Extract versus Warehouse Processing Environment • Extract processing builds a new database after each time interval. Operational databases T1 T2 T3 Operational databases T1 T2 T3 • Warehouse processing adds changes to the database after each time interval. First-Time Load • Single event that populates the database with historical data • Involves a large volume of data • Uses distinct ETL tasks • Involves large amounts of processing after load Refresh • • • • • Performed according to a business cycle Simpler task Less data to load than first-time load Less complex ETL Smaller amounts of postload processing Building the Transportation Process Specification: • Techniques and tools • File transfer methods • The load window • Time window for other tasks • First-time and refresh volumes • Frequency of the refresh cycle • Connectivity bandwidth Building the Transportation Process • • • • • • Test the proposed technique Document proposed load Gain agreement on the process Monitor Review Revise Granularity • Important design and operational issue • Low-level grain: Expensive, high level of processing, more disk, detail • High-level grain: Cheaper, less processing, less disk, little detail • Space requirements – – – – – Storage Backup Recovery Partitioning Load Post-Processing of Loaded Data Summarize Extract Transform Transport Index