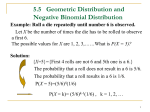

MTH/STA 561 BERNOULLI AND BINOMIAL DISTRIBUTION

A simple experiment is one that may result in either of two possible outcomes. We can think of many examples of such experiments: a toss of a coin (head or tail), the performance of a student in a course (pass or fail), the sex of a yet-to-be-born child (male or female), placing a satellite in orbit around the earth (success or failure). We will call an experiment with two possible outcomes a Bernoulli trial and will label the two outcomes success and failure.

Definition 1. A Bernoulli trial is an experiment with two possible outcomes, success or failure. The sample space for a Bernoulli trial is $S = \{\text{success}, \text{failure}\}$.

The probability distribution for a Bernoulli trial is easily specified. It depends only on the single parameter $p$, the probability of success; the probability of failure is then $q = 1 - p$. In order that either outcome can occur, the parameter $p$ must lie strictly between 0 and 1.

Any experiment can be used to define a Bernoulli trial simply by labeling some event $A$ as "success" and calling its complement $\bar{A}$ a "failure". In this case, $p = P(A)$ and $q = P(\bar{A})$. A Bernoulli random variable can be defined on a Bernoulli trial or on any more complicated sample space.

Definition 2. Let $S$ be the sample space for an experiment, let $A \subset S$ be any event with $p = P(A)$, $0 < p < 1$, and define

$$Y = \begin{cases} 1 & \text{if event } A \text{ occurs} \\ 0 & \text{if event } \bar{A} \text{ occurs.} \end{cases}$$

Then the random variable $Y$ is called the Bernoulli random variable with parameter $p$. If the experiment is actually a Bernoulli trial, we simply let $A = \{\text{success}\}$.

The probability distribution for a Bernoulli random variable follows directly from the probability distribution for $S$. Since $Y = 1$ if and only if event $A$ occurs, we have $P\{Y = 1\} = P(A) = p$. Similarly, since $Y = 0$ if and only if event $\bar{A}$ occurs, we have $P\{Y = 0\} = P(\bar{A}) = 1 - p = q$. No other value can occur for $Y$.

Bernoulli Distribution.
The probability distribution for a Bernoulli random variable with parameter $p$ is called the Bernoulli distribution and is given by

$$p(y) = \begin{cases} p^y q^{1-y} & \text{if } y = 0, 1 \\ 0 & \text{elsewhere.} \end{cases}$$

The mean and variance of the Bernoulli distribution can be readily obtained, as illustrated in the following theorem.

Theorem 1. Let $Y$ be a Bernoulli random variable with success probability $p$. Then

$$\mu = E(Y) = p \quad \text{and} \quad \sigma^2 = \operatorname{Var}(Y) = pq,$$

where $q = 1 - p$.

Proof. By definition,

$$\mu = E(Y) = 1 \cdot p + 0 \cdot q = p \quad \text{and} \quad E(Y^2) = 1^2 \cdot p + 0^2 \cdot q = p.$$

Thus,

$$\sigma^2 = E(Y^2) - \mu^2 = p - p^2 = p(1 - p) = pq.$$

Example 1. Suppose that a radar has a probability 0.98 of detecting an intruding aircraft. Let

$$Y = \begin{cases} 1 & \text{if the radar detects the intruding aircraft} \\ 0 & \text{if the radar fails to detect the intruding aircraft.} \end{cases}$$

Clearly, $Y$ is a Bernoulli random variable with parameter $p = 0.98$, and has probability distribution

$$p(y) = \begin{cases} (0.98)^y (0.02)^{1-y} & \text{if } y = 0, 1 \\ 0 & \text{elsewhere.} \end{cases}$$

That is, $P\{Y = 1\} = 0.98$ and $P\{Y = 0\} = 0.02$. We also find that $\mu = 0.98$ and $\sigma^2 = (0.98)(0.02) = 0.0196$.

If a Bernoulli trial is repeated $n$ times independently, then the resulting experiment is referred to as a binomial experiment. For example, tossing a coin once is a Bernoulli trial, whereas tossing a coin 10 times is a binomial experiment.

Definition 3. An experiment that consists of $n$ (fixed) repeated independent Bernoulli trials, each with probability of success $p$, is called a binomial experiment. The number of successes observed during the $n$ trials is called the binomial random variable with $n$ trials and parameter $p$.

More precisely, a binomial experiment is one that possesses the following properties:

1. The experiment consists of $n$ repeated identical trials.
2. Each trial results in one of two outcomes. We shall call one outcome a success and the other a failure.
3. The probability of success on a single trial is equal to $p$ and remains the same from trial to trial. The probability of a failure is equal to $q = 1 - p$.
4. The repeated trials are independent.
5.
The random variable of interest is $Y$, the number of successes observed during the $n$ trials.

Example 2. The following are typical binomial experiments:

1. Toss a fair coin ten times and observe the number, $Y$, of heads. Then $Y$ is a binomial random variable with $n = 10$ and $p = 1/2$.
2. An early warning detection system for aircraft consists of four identical radar units operating independently of one another. Suppose that each unit has a probability of 0.98 of detecting an intruding aircraft. When an intruding aircraft enters the scene, the random variable of interest is $Y$, the number of radar units that detect the aircraft. Clearly, $Y$ is a binomial random variable with $n = 4$ and $p = 0.98$.
3. Suppose that 40% of a large population of registered voters favor candidate Mr. Jones. A random sample of fifteen voters will be selected and the number, $Y$, of voters favoring Mr. Jones is to be observed. Then $Y$ is a binomial random variable with $n = 15$ and $p = 0.40$.

The natural sample space for a binomial experiment is the Cartesian product of the Bernoulli trial sample space with itself $n$ times. That is, the binomial sample space is

$$S = S_1 \times S_2 \times \cdots \times S_n,$$

where $S_i = \{\text{success}, \text{failure}\}$ for $i = 1, 2, \ldots, n$. Each element of $S$ is an $n$-tuple $(\omega_1, \omega_2, \ldots, \omega_n)$, where $\omega_i$ represents success or failure on the $i$th trial for $i = 1, 2, \ldots, n$. That is,

$$S = \{(\omega_1, \omega_2, \ldots, \omega_n) \mid \omega_i \text{ is success or failure for } i = 1, 2, \ldots, n\}.$$

Since, for each Bernoulli trial,

$$p = \text{probability of success}, \qquad q = 1 - p = \text{probability of failure},$$

and the trials are independent, we compute the probability of occurrence of a single outcome (a single $n$-tuple) of $S$ by multiplying the probabilities of occurrence of the appropriate outcomes for the given trials. For example, the probability of occurrence of the outcome (success, success, $\ldots$, success) is equal to $p \cdot p \cdots p = p^n$, whereas the probability of occurrence of the outcome (failure, failure, $\ldots$, failure) is equal to $q \cdot q \cdots q = q^n$.

Example 3.
For a binomial experiment with $n = 3$ trials and probability of success $p$ for each trial, the probabilities of occurrence of the outcomes are given as follows.

| Outcome | Probability |
| --- | --- |
| (success, success, success) | $p \cdot p \cdot p = p^3$ |
| (success, success, failure) | $p \cdot p \cdot q = p^2 q$ |
| (success, failure, success) | $p \cdot q \cdot p = p^2 q$ |
| (success, failure, failure) | $p \cdot q \cdot q = p q^2$ |
| (failure, success, success) | $q \cdot p \cdot p = p^2 q$ |
| (failure, success, failure) | $q \cdot p \cdot q = p q^2$ |
| (failure, failure, success) | $q \cdot q \cdot p = p q^2$ |
| (failure, failure, failure) | $q \cdot q \cdot q = q^3$ |

It can be easily verified that the probabilities assigned to the outcomes add to one, as they must: $p^3 + 3p^2 q + 3pq^2 + q^3 = (p + q)^3 = 1$.

Now let $Y$ be the binomial random variable that counts the number of successes observed in a binomial experiment with $n$ trials. Each sample point in the sample space can be characterized by an $n$-tuple involving the letters $S$ and $F$, corresponding to success and failure. A typical sample point would thus appear as

$$SSFSFFFSFS \cdots FS,$$

where the letter in the $i$th position (proceeding from left to right) indicates the outcome of the $i$th trial. Now consider a typical sample point with $y$ successes and $n - y$ failures, which is hence contained in the event $\{Y = y\}$. This sample point is the intersection of $n$ independent events, $y$ successes and $n - y$ failures, and hence its probability is

$$\underbrace{p \cdot p \cdots p}_{y \text{ factors}} \; \underbrace{q \cdot q \cdots q}_{n - y \text{ factors}} = p^y q^{n-y}.$$

Every other sample point in the event $\{Y = y\}$ will appear as a rearrangement of the $S$'s and $F$'s in the sample point described above and will therefore contain $y$ $S$'s and $n - y$ $F$'s and be assigned the same probability. Since the number of distinct arrangements of $y$ $S$'s and $n - y$ $F$'s is

$$\binom{n}{y} = \frac{n!}{y!\,(n - y)!},$$

it follows that the probability distribution for $Y$ is given by the following formula.

Binomial Distribution. Consider a binomial experiment with $n$ trials and parameter $p$. The probability distribution of the binomial random variable $Y$, the number of successes in $n$ independent trials, is

$$p(y) = \begin{cases} \dbinom{n}{y} p^y q^{n-y} & \text{for } y = 0, 1, 2, \ldots, n \\ 0 & \text{elsewhere.} \end{cases}$$

Example 4.
Suppose that a student is given a test with 10 true-false questions. Also assume that the student is totally unprepared for the test and guesses at the answer to every question. Then the probability that the student answers all questions correctly is

$$p(10) = \binom{10}{10} \left(\frac{1}{2}\right)^{10} \left(\frac{1}{2}\right)^{0} = \left(\frac{1}{2}\right)^{10} = \frac{1}{1024},$$

whereas the probability that exactly four questions are answered correctly is

$$p(4) = \binom{10}{4} \left(\frac{1}{2}\right)^{4} \left(\frac{1}{2}\right)^{6} = 210 \left(\frac{1}{2}\right)^{10} = \frac{105}{512}.$$

Example 5. Suppose that a huge lot of electrical components contains 5% defectives. If a random sample of five components is tested, then the probability of finding at least one defective is

$$P(\text{at least one defective}) = 1 - P(\text{no defectives}) = 1 - p(0) = 1 - \binom{5}{0} (0.05)^0 (0.95)^{5} = 1 - 0.774 = 0.226.$$

Note. The term "binomial distribution" derives from the fact that the probabilities $\binom{n}{y} p^y q^{n-y}$, $y = 0, 1, 2, \ldots, n$, are the terms of the binomial expansion

$$(q + p)^n = \sum_{y=0}^{n} \binom{n}{y} p^y q^{n-y} = \binom{n}{0} q^n + \binom{n}{1} p q^{n-1} + \binom{n}{2} p^2 q^{n-2} + \cdots + \binom{n}{n} p^n.$$

Observe that

$$p(0) = \binom{n}{0} q^n, \quad p(1) = \binom{n}{1} p q^{n-1}, \quad p(2) = \binom{n}{2} p^2 q^{n-2}, \quad \ldots, \quad p(n) = \binom{n}{n} p^n.$$

Since $q + p = 1$, we conclude that

$$\sum_{y=0}^{n} p(y) = \sum_{y=0}^{n} \binom{n}{y} p^y q^{n-y} = (q + p)^n = 1.$$

A tabulation of binomial probabilities in the cumulative form

$$\sum_{y=0}^{a} p(y) = \sum_{y=0}^{a} \binom{n}{y} p^y q^{n-y},$$

presented in Table 1 of the Appendix, will greatly reduce the computations associated with some of the exercises. We illustrate the use of this table in the following examples.

Example 6. It is known from experience that 40% of all orders received by a shipping company are placed by the Mallon Company.
If ten orders are received by the shipping company, then from Table 1 of the Appendix, we find the probability that

(1) no more than six are received from the Mallon Company is

$$P(Y \le 6) = \sum_{y=0}^{6} p(y) = \sum_{y=0}^{6} \binom{10}{y} (0.4)^y (0.6)^{10-y} = 0.9452;$$

(2) at least five are received from the Mallon Company is

$$P(Y \ge 5) = 1 - P(Y \le 4) = 1 - \sum_{y=0}^{4} p(y) = 1 - \sum_{y=0}^{4} \binom{10}{y} (0.4)^y (0.6)^{10-y} = 1 - 0.6331 = 0.3669;$$

(3) more than three but fewer than eight are received from the Mallon Company is

$$P(4 \le Y \le 7) = P(Y \le 7) - P(Y \le 3) = \sum_{y=0}^{7} p(y) - \sum_{y=0}^{3} p(y) = 0.9877 - 0.3823 = 0.6054;$$

(4) exactly six are received from the Mallon Company is

$$P(Y = 6) = P(Y \le 6) - P(Y \le 5) = \sum_{y=0}^{6} p(y) - \sum_{y=0}^{5} p(y) = 0.9452 - 0.8338 = 0.1114.$$

In each case $p(y) = \binom{10}{y} (0.4)^y (0.6)^{10-y}$.

Example 7. Suppose that each electronic component from a large lot fails to survive a shock test with probability 0.4. If fifteen components are selected at random from the lot, then from Table 1 of the Appendix, we find the probability that

(1) no more than 8 components fail to survive is

$$P(Y \le 8) = \sum_{y=0}^{8} p(y) = \sum_{y=0}^{8} \binom{15}{y} (0.4)^y (0.6)^{15-y} = 0.9050;$$

(2) at least 10 components fail to survive is

$$P(Y \ge 10) = 1 - P(Y \le 9) = 1 - \sum_{y=0}^{9} p(y) = 1 - \sum_{y=0}^{9} \binom{15}{y} (0.4)^y (0.6)^{15-y} = 1 - 0.9662 = 0.0338;$$

(3) from 3 to 8 components fail to survive is

$$P(3 \le Y \le 8) = P(Y \le 8) - P(Y \le 2) = \sum_{y=0}^{8} p(y) - \sum_{y=0}^{2} p(y) = 0.9050 - 0.0271 = 0.8779;$$

(4) exactly 5 components fail to survive is

$$P(Y = 5) = P(Y \le 5) - P(Y \le 4) = \sum_{y=0}^{5} p(y) - \sum_{y=0}^{4} p(y) = 0.4032 - 0.2173 = 0.1859.$$

In each case $p(y) = \binom{15}{y} (0.4)^y (0.6)^{15-y}$.

The mean and variance of the binomial distribution are presented in the following theorem.

Theorem 2. Let $Y$ be a binomial random variable based on $n$ trials and success probability $p$. Then

$$\mu = E(Y) = np \quad \text{and} \quad \sigma^2 = \operatorname{Var}(Y) = npq.$$

Proof.
Recall that a binomial random variable with $n$ trials and parameter $p$ is in fact the sum of $n$ independent Bernoulli random variables. Suppose that $n$ independent Bernoulli trials, each with probability $p$ of success, are to be performed. Define

$$X_i = \begin{cases} 1 & \text{if the } i\text{th trial results in a success (with probability } p) \\ 0 & \text{if the } i\text{th trial results in a failure (with probability } q) \end{cases}$$

for $i = 1, 2, \ldots, n$, where $q = 1 - p$. Then

$$Y = \sum_{i=1}^{n} X_i = X_1 + X_2 + \cdots + X_n$$

gives the total number of successes, and thus it is a binomial random variable with parameters $n$ and $p$. In view of Theorem 1, $E(X_i) = p$ and $\operatorname{Var}(X_i) = pq$ for $i = 1, 2, \ldots, n$. Therefore,

$$\mu = E(Y) = E(X_1) + E(X_2) + \cdots + E(X_n) = np.$$

It will be shown in Chapter 5 that if $X_1, X_2, \ldots, X_n$ are independent random variables, then

$$\operatorname{Var}(X_1 + X_2 + \cdots + X_n) = \operatorname{Var}(X_1) + \operatorname{Var}(X_2) + \cdots + \operatorname{Var}(X_n).$$

Consequently,

$$\sigma^2 = \operatorname{Var}(Y) = \operatorname{Var}(X_1) + \operatorname{Var}(X_2) + \cdots + \operatorname{Var}(X_n) = npq.$$

Example 8. Referring to Example 7, the mean and variance are

$$\mu = E(Y) = np = (15)(0.4) = 6 \quad \text{and} \quad \sigma^2 = \operatorname{Var}(Y) = npq = (15)(0.4)(0.6) = 3.6.$$
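The binomial formulas in these notes can be checked numerically. The Python sketch below is only an illustration: the function names `binom_pmf`, `binom_cdf`, and `binom_mean_var` are hypothetical and not part of the notes, and the cumulative probabilities are computed by direct summation, since Table 1 of the Appendix is not reproduced here.

```python
from math import comb


def binom_pmf(y, n, p):
    """P(Y = y) = C(n, y) p^y q^(n - y) for y = 0, 1, ..., n, and 0 elsewhere."""
    if not isinstance(y, int) or y < 0 or y > n:
        return 0.0
    q = 1.0 - p
    return comb(n, y) * p**y * q ** (n - y)


def binom_cdf(a, n, p):
    """Cumulative probability P(Y <= a), obtained by summing p(0), ..., p(a)."""
    return sum(binom_pmf(y, n, p) for y in range(0, min(a, n) + 1))


def binom_mean_var(n, p):
    """Mean np and variance npq of a binomial random variable (Theorem 2)."""
    return n * p, n * p * (1.0 - p)


if __name__ == "__main__":
    # Example 4: ten true-false questions answered by guessing.
    print("p(10) =", binom_pmf(10, 10, 0.5))
    print("p(4)  =", binom_pmf(4, 10, 0.5))

    # Example 5: at least one defective in a sample of five.
    print("P(at least one defective) =", 1 - binom_pmf(0, 5, 0.05))

    # Example 6, part (3): P(4 <= Y <= 7) with n = 10, p = 0.4.
    print("P(4 <= Y <= 7) =", binom_cdf(7, 10, 0.4) - binom_cdf(3, 10, 0.4))

    # Example 7, part (2): P(Y >= 10) with n = 15, p = 0.4.
    print("P(Y >= 10) =", 1 - binom_cdf(9, 15, 0.4))

    # Example 8: mean and variance, compared with the mean computed from the pmf.
    mean, var = binom_mean_var(15, 0.4)
    mean_from_pmf = sum(y * binom_pmf(y, 15, 0.4) for y in range(16))
    print("mean =", mean, "variance =", var, "mean from pmf =", mean_from_pmf)
```

Because of floating-point rounding, the printed values should match the worked examples only to about four decimal places, which is the precision of the tabulated values quoted above.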