* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Mining Quantitative Association Rules on Overlapped Intervals

Survey

Document related concepts

Transcript

Mining Quantitative Association Rules on

Overlapped Intervals

Qiang Tong1,3 , Baoping Yan2 , and Yuanchun Zhou1,3

3

1

Institute of Computing Technology,

Chinese Academy of Sciences, Beijing, China

{tongqiang, yczhou}@sdb.cnic.cn

2

Computer Network Information Center,

Chinese Academy of Sciences, Beijing, China

[email protected]

Graduate School of the Chinese Academy of Sciences, Beijing, China

Abstract. Mining association rules is an important problem in data

mining. Algorithms for mining boolean data have been well studied and

documented, but they cannot deal with quantitative and categorical data

directly. For quantitative attributes, the general idea is partitioning the

domain of a quantitative attribute into intervals, and applying boolean

algorithms to the intervals. But, there is a conflict between the minimum

support problem and the minimum confidence problem, while existing

partitioning methods cannot avoid the conflict. Moreover, we expect the

intervals to be meaningful. Clustering in data mining is a discovery process which groups a set of data such that the intracluster similarity is

maximized and the intercluster similarity is minimized. The discovered

clusters are used to explain the characteristics of the data distribution.

The present paper will propose a novel method to find quantitative association rules by clustering the transactions of a database into clusters and

projecting the clusters into the domains of the quantitative attributes to

form meaningful intervals which may be overlapped. Experimental results show that our approach can efficiently find quantitative association

rules, and can find important association rules which may be missed by

the previous algorithms.

1

Introduction

Mining association rules is a key data mining problem and has been widely

studied [1]. Finding association rules in binary data has been well investigated

and documented [2, 3, 4]. Finding association rules in numeric or categorical

data is not as easy as in binary data. However, many real world databases

contain quantitative attributes and current solutions to this case are so far

inadequate.

An association rule is a rule of the form X ⇒ Y , where X and Y are sets

of items. It states that when X occurs in a database so does Y with a certain

probability. X is called the antecedent of the rule and Y the consequent. There

X. Li, S. Wang, and Z.Y. Dong (Eds.): ADMA 2005, LNAI 3584, pp. 43–50, 2005.

c Springer-Verlag Berlin Heidelberg 2005

°

44

Q. Tong, B. Yan, and Y. Zhou

are two important parameters associated with an association rule: support and

confidence. Support describes the importance of the rule, while confidence determines the occurrence probability of the rule. The most difficult part of an

association rule mining algorithm is to find the frequent itemsets. The process

is affected by the support parameter designated by the user.

A well known application of association rules is in market basket data analysis, which was introduced by Agrawal in 1993 [2]. In the problem of market

basket data analysis, the data are boolean, which have values of “1” or “0”. The

classical association rule mining algorithms are designed for boolean data. However, quantitative and categorical attributes widely exist in current databases.

In [5], Srikant and Agrawal proposed an algorithm dealing with quantitative attributes by dividing quantitative attributes into equi-depth intervals and then

combining adjacent partitions when necessary. In other words, for a depth d, the

first d values of the attribute are placed in one interval, the next d in a second

interval, and so on. There are two problems with the current methods of partitioning intervals: MinSup and MinConf [5]. If a quantitative attribute is divided

into too many intervals, the support for a single interval can be low. When the

support of an interval is below the minimum support, some rules involving the

attribute may not be found. This is the minimum support problem. Some rules

may have minimum confidence only when a small interval is in the antecedent,

and the information loss increases as the interval size becomes larger. This is the

minimum confidence problem.

The critical part of mining quantitative association rules is to divide the domains of the quantitative attributes into intervals. There are several classical

dividing methods. The equi-width method divides the domain of a quantitative

attribute into n intervals, and each interval has the same length. In the equidepth method, there are equal number of items contained in each interval. The

equi-width method and the equi-depth method are so straightforward that the

partitions of quantitative attributes may not be meaningful, and cannot deal

with the minimum confidence problem. In [5], Srikant and Agrawal introduced

a measure of partial completeness which quantified the information lost due to

partitioning, and developed an algorithm to partition quantitative attributes.

In [6], Miller and Yang pointed out the pitfalls of the equi-depth method, and

presented several guiding principles for quantitative attribute partitioning. In

selecting intervals or groups of data to consider, they wanted to have a measure of interval quality to reflect the distance among data points. They took the

distance among data into account, since they believed that putting closer data

together was more meaningful. To achieve this goal, they presented a more general form of an association rule, and used clustering to find subsets that made

sense by containing a set of attributes that were close enough. They proposed

an algorithm which used birch [7] to find clusters in the quantitative attributes

and used the discovered clusters to form “items”, then fed the items into the

classical boolean algorithm apriori [3]. In their algorithm, clustering was used

to determine sets of dense values in a single attribute or over a set of attributes

that were to be treated as a whole.

Mining Quantitative Association Rules on Overlapped Intervals

45

Although Miller and Yang took the distance among data into account and

used a clustering method to make the intervals of quantitative attributes more

meaningful, they did not take the relations among other attributes into account

by clustering a quantitative attribute or a set of quantitative attributes alone.

We believe that their technique still falls shot of a desirable goal.

Based on the above analysis, we find that the partitioning method can be

further improved. On the one hand, since clustering an attribute or a set of

attributes alone is not good enough, we believe that the relations among attributes should be taken into account. We tend to cluster all attributes together,

and project the clusters into the domains of the quantitative attributes. On the

other hand, the projection of the clusters on a specific attribute can be overlapped. We think this is reasonable. Moreover, this is a good resolution to the

conflict between the minimum support problem and the minimum confidence

problem. A small interval may result in the minimum support problem, while a

large interval may lead to the minimum confidence problem. When several overlapped intervals coexist in the domain of a quantitative attribute, and different

intervals are used for different rules, the conflict between the two problems which

confuses the quantitative attributes partitioning does not exist. In this paper, we

propose an approach which first applys a clustering algorithm to all attributes,

and projects the discovered clusters into the domains of all attributes to form

intervals (the intervals may be overlapped), then uses a boolean association rule

mining algorithm to find association rules.

The rest of the paper is organized as follows. In Section 2, we introduce some

definitions of the quantitative association rule mining problem and review the

background in brief. In Section 3, we present our approach and our algorithm. In

Section 4, we give the experimental results and our analysis. Finally, in Section

5, we give the conclusions and the future work.

2

Problem Description

Now, we give a formal statement of the problem of mining quantitative association rules and introduce some definitions.

Let I = {i1 , i2 , . . . , in } be a set of attributes, and R be the set of real numbers,

IR = X × R × R , that is IR = {(x, l, u)|x ∈ I, l ∈ R, u ∈ R, l ≤ x ≤ u}. A triple

(x, l, u) ∈ IR denotes either a quantitative attribute x with a value interval [l, u],

or a categorical attribute with a value l (l = u) . Let D be a set of transactions,

where each transaction T is a set of attribute values. X ⊂ IR , if ∀(x, l, u) ∈

X, ∃(x, v) ∈ T, l ≤ v ≤ u, we say transaction T supports X. A quantitative

association rule isTan implication of the form X ⇒ Y , where X ∈ IR , Y ∈ IR ,

and

S attribute(X) attribute(Y ) = ∅. If s percent of transactions in D support

X Y , and c percent of transactions which support X also support Y , we say

that the association rule has support s and confidence c respectively. The problem of mining quantitative association rules is the process of finding association

rules which meet the minimum support and the minimum confidence at a given

transaction database which contains quantitative and/or categorical attributes.

46

Q. Tong, B. Yan, and Y. Zhou

Clustering can be considered the most important unsupervised learning technique, which deals with finding a structure in a collection of unlabeled data. A

cluster is therefore a collection of objects which are “similar” to each other and

are “dissimilar” to the objects belonging to other clusters [8]. In this paper, a

cluster is a set of transactions. An important component of a clustering algorithm

is the distance measure among data points. In [6], Miller and Yang defined two

thresholds on the cluster size and the diameter. First, the diameter of a cluster

should be less than a specific value to ensure that the cluster is sufficiently dense.

Second, the number of transactions contained in a cluster should be greater than

the minimum support to ensure that the cluster is frequent. Since our clustering

approach is different, our definition of the diameter of a cluster is also different.

Definition 1. We adopt Euclidean distance as the distance metric, and the distance between two transactions is defined as

v

u n

uX

(1)

d(Ii , Ij ) = t (Iik − Ijk )2

k=1

Definition 2. A cluster C = {I1 , I2 , . . . , Im } is a set of transactions, and the

gravity center of C is defined as

m

1 X

Ii

Cg =

m i=1

(2)

Definition 3. The diameter of a cluster C = {I1 , I2 , . . . , Im } is defined as

m

Dg (C) =

1 X

(Ii − Cg )T (Ii − Cg )

m i=1

(3)

Definition 4. The number of transactions contained in a cluster C is denoted

as |C|, d0 and s0 are thresholds for association rule mining, |C|and Dg (C) should

satisfy the following formula

|C| ≥ s0 , Dg (C) ≤ d0

(4)

The first criterion ensures that the cluster contains enough number of transactions to be frequent. The second criterion ensures that the cluster is dense

enough. To ensure that clusters are isolated from each other, we will rely on a

clustering algorithm to discover clusters which are as isolated as possible.

3

The Proposed Approach

In this section, we describe our approach of mining quantitative association rules.

We divide the problem of mining quantitative association rules into several steps:

Mining Quantitative Association Rules on Overlapped Intervals

47

1. Map the attributes of the given database to IR = I × R × R. For ordered

categorical attributes, map the values of the attribute to a set of consecutive

integers, such that the order of the attributes is preserved. For unordered

categorical attributes, we define the distance between two different attributes

as a constant value. For boolean attributes, map the values of the attributes

to “0” and “1”. For quantitative attributes, we keep the original values or

transform the values to a standard form, such as Z-Score. We adopt various

mapping methods to fit the clustering algorithm. For different data sets, we

may use different mapping methods.

2. Apply a clustering algorithm to the new database produced by the first

step. In the clustering algorithm, by dealing with the transactions as ndimension vectors, we take all attributes into account. In this paper, we adopt

a common clustering algorithm k-means to identify transaction groups that

are compact (the distance among transactions within a cluster is small) and

isolated (relatively separable from other groups). By clustering all attributes

together, the relations among all attributes are considered, and the clusters

may be more meaningful. Besides, we also use Definition 4 as the principle

for evaluating the quality of the discovered clusters.

3. Project the clusters into the domains of the quantitative attributes. The

projections of the clusters will form overlapped intervals. We make an interval x ∈ [l, u] a new boolean attribute. The two-dimension example of the

projection is shown in Figure 1.

4. Mine association rules by using a classical boolean algorithm. Since the quantitative attributes have been booleanized, we can use a boolean algorithm

(such as apriori) to find frequent itemsets, and then use the frequent itemsets

to generate association rules.

I2

C1

u1

u3

C3

l1

u2

l3

C2

l2

l1

l2

u1

u2 l3

u3

I1

Fig. 1. Projecting the clusters into the domains of quantitative attributes to form

intervals, which may be overlapped

48

4

Q. Tong, B. Yan, and Y. Zhou

Experimental Results

Our experimental environment is an IBM Netfinity 5600 server with dual PIII

866 CPUs and 512M memory, which runs Linux operating system. The experiment has been done over a real data set of bodyfat [10]. The attributes in the

bodyfat dataset are: density, age, weight, height, neck, chest, abdomen, hip,

thigh, knee, ankle, biceps, forearm and wrist. All of the attributes are quantitative attributes. There are 252 records of various people in the dataset. Our

purpose is to find association rules over all attributes.

For our algorithm, the parameters needed from the user are the minimum

support, the minimum confidence, and the number of clusters. In our experiment,

we use minimum support of 10%, minimum confidence of 60%, and the clusters of

six. We first use a common clustering algorithm (k-means) to find clusters, then

project the clusters into the domains of the quantitative attributes, and finally

use a boolean association rule mining algorithm (apriori) to find association

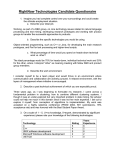

rules. Some of the rules which we have found are listed in Figure 2.

From the above rules, we can see that the intervals are overlapped, which

cannot be discovered by the previous partitioning methods. The equi-width

method cannot divide some quantitative attributes properly (such as density),

because the attributes range only in a very small domain, while the equi-depth

method may put far apart transactions into the same interval. As shown in

Figure 1, our partitioning method projects the clusters into the domains of

the quantitative attributes, and forms overlapped intervals. Our method considers both the distance among transactions and the relations among attributes.

For previous methods, if an interval is small, it may not meet the minimum

support; if an interval is large, it may not meet the minimum confidence. In

our method, since the intervals can be overlapped, we can avoid the conflict

between the minimum support problem and the minimum confidence problem. Moreover, since our intervals tend to be less than those of the previous methods, the boolean association rule mining algorithm works more

efficiently.

ID

1

2

3

4

5

6

7

8

Rules

Age[40, 74]&Weight[178, 216] Abdomen[88.7, 113.1]

Age[34, 42]&Weight[195.75, 224.75] Chest[99.6, 115.6]

Weight[219, 363.15] Hip[105.5, 147.7]&Chest[108.3, 136.2]

Weight[154, 191]&Height[65.5, 77.5] Density[1.025, 1.09]

Abdomen[88.6, 111.2]&Hip[101.8, 115.5] Weight[196,224]

Biceps[24.8, 38.5] & Forearm[22, 34.9] Wrist[15.8, 18.5]

Thigh[54.7, 69]&Knee[34.2, 42.2] Ankle[21.4, 33.9]

Weight[118.5, 159.75]&Height[64, 73.5] Density[1.047, 1.11]

Fig. 2. Some of the rules discovered by our algorithm with the parameters (minimum

support = 10%, minimum confidence = 60%, and the number of clusters k = 6)

Mining Quantitative Association Rules on Overlapped Intervals

5

49

Conclusions and Future Work

In this paper, we have proposed a novel approach to efficiently find quantitative association rules. The critical part of quantitative association rule mining is

to partition the domains of quantitative attributes into intervals. The previous

algorithms dealt with this problem by dividing the domains of quantitative attributes into equi-depth or equi-width intervals, or using a clustering algorithm

on a single attribute (or a set of attributes) alone. They cannot avoid the conflict

between the minimum support problem and the minimum confidence problem,

and risk missing some important rules. In our approach, we treat a transaction

as an n-dimension vector, and apply a common clustering algorithm to the vectors, then project the clusters into the domains of the quantitative attributes to

form overlapped intervals. We finally use a classical boolean algorithm to find

association rules. Our approach takes the relations and the distances among

attributes into account, and can resolve the conflict between the minimum support problem and the minimum confidence problem by allowing intervals to be

overlapped. Experimental results show that our approach can efficiently find

quantitative association rules, and can find important association rules which

may be missed by the previous algorithms.

Since our approach adopts a common clustering algorithm and a classical

boolean association rule mining algorithm rather than integrates the two algorithms together, we believe that our approach can be further improved by

integrating the clustering algorithm and the association rule mining algorithm

tightly in our future work.

Acknowledgement

This work is partially supported by the National Hi-Tech Development 863 Program of China under grant No. 2002AA104240, and the Informatization Project

under the Tenth Five-Year Plan of the Chinese Academy of Sciences under grant

No. INF105-SDB. We thank Mr. Longshe Huo and Miss Hong Pan for their helpful suggestions.

References

1. Han, J., Kamber, M.: Data Mining Concepts and Techniques. China Machine Press

and Morgan Kaufmann Publishers (2001)

2. Agrawal, R., Imielinski, T. and Swami, A.: Mining Association Rules between Sets

of Items in Large Databases. In Proc. of the 1993 ACM SIGMOD International

Conf. on Management of Data, Washington, D.C., May (1993) 207–216

3. Agrawal, R. and Srikant, R.: Fast Algorithms for Mining Association Rules in Large

Databases. In Proc. of 20th International Conf. on Very Large Data Bases, Santiago,

Chile, September (1994) 487–499

4. Han, J., Pei, J., Yin, Y. and Mao, R.: Mining Frequent Patterns without Candidate Generation: A Frequent-Pattern Tree Approach. Data Mining and Knowledge

Discovery (2004) 8, 53–87

50

Q. Tong, B. Yan, and Y. Zhou

5. Srikant, R. and Agrawal, R.: Mining Quantitative Association Rules in Large Relational Tables. In Proc. of the 1996 ACM SIGMOD International Conf. on Management of Data, Montreal, Canada, June (1996) 1–12

6. Miller, R. J. and Yang, Y.: Association Rules over Interval Data. In Proc. of the

1997 ACM SIGMOD International Conf. on Management of Data, Tucson, Arizona,

United States, May (1997) 452–461

7. Zhang, R., Ramakrishnan, R. and Livny, M.: BIRCH: An Efficient Data Clustering

Method for Very Large Databases. In Proc. of the 1996 ACM SIGMOD International

Conf. on Management of Data, Montreal, Canada, June (1996) 103–114

8. Jain, A. K., Dubes, R. C.: Algorithms for Clustering Data. Prentice Hall, Englewood

Cliffs, New Jersey (1988)

9. Kaufman, L. and Rousseeuw, P. J.: Finding Groups in Data: An Introduction to

Cluster Analysis. New York: John Wiley and Sons (1990)

10. Bailey, C.: Smart Exercise: Burning Fat, Getting Fit. Houghton-Mifflin Co., Boston

(1994) 179–186