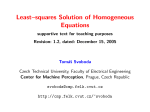

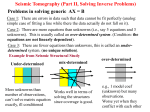

Solving a Homogeneous Linear Equation System

A standard problem in computer vision (and in engineering in general) is to solve a set of homogeneous linear equations. A homogeneous linear equation system is given by the expression

$$A x = 0, \tag{1}$$

where $x$ is the vector of $N$ unknowns and $A$ is the $M \times N$ matrix of coefficients. We will assume here that there are enough equations to solve for the unknowns, i.e. $M \geq N$.

The equations are linear in all unknowns, and the right-hand side is 0. Hence every homogeneous linear equation system admits the trivial solution $x = 0$. This is normally not the desired solution. The standard way to avoid it is to constrain the vector $x$ to a fixed length, for example

$$|x|^2 = 1. \tag{2}$$

The constraint is not linear but quadratic in the elements of $x$, because the squared vector norm is $|x|^2 = \sum_i x_i^2$.

The numerically best way to solve the equations (1) subject to the constraint (2) is to perform singular value decomposition on the matrix $A$. Singular Value Decomposition (SVD) factors the matrix into a diagonal matrix $D$ and two orthogonal matrices $U$, $V$, such that

$$A = U D V^T. \tag{3}$$

The diagonal entries $d_i$ of $D$, the singular values, are the non-negative square roots of the eigenvalues of $A^T A$.

If the system has an exact non-trivial solution that is unique up to scale, i.e. $A$ has rank $N - 1$ (for example $M = N$ with exactly one redundant equation), then $D$ will have exactly one diagonal entry $d_i = 0$. The matrices are often reordered such that the diagonal entries of $D$ are in descending order ("ordered SVD"); then this is the last (rightmost) entry. The exact solution of the equation system is given by the column of $V$ that corresponds to the diagonal entry $d_i = 0$ (in ordered SVD, this is the last column of $V$).

If $M > N$, i.e. the equation system is over-constrained, and the coefficients are corrupted by noise, then in general there is no exact solution and no diagonal entry $d_i = 0$. Usually one is then interested in the solution that minimizes the sum of squared errors over all equations, $|Ax|^2$ (under Gaussian white noise, this is the most likely solution).
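The minimization just described can be written out explicitly. The short derivation below is a sketch that uses only the decomposition (3) and the constraint (2). The symbols $E$, $y$ and $d_{\min}$ are introduced here for illustration and do not appear in the original text. It assumes that the columns of $U$ are orthonormal, so that $U^T U = I$.

```latex
\begin{align*}
E(x) &= |Ax|^2 = x^T A^T A x = x^T V D\, U^T U\, D V^T x = x^T V D^2 V^T x \\
     &= y^T D^2 y = \sum_{i=1}^{N} d_i^2\, y_i^2,
        \qquad y = V^T x, \quad |y| = |x| = 1, \\
E(x) &\geq d_{\min}^2 \sum_{i=1}^{N} y_i^2 = d_{\min}^2 .
\end{align*}
```

Equality holds when $y$ is the unit vector that selects the smallest $d_i$, which means that $x = V y$ is the corresponding column of $V$. The remaining residual is $|Ax| = d_{\min}$, which is zero in the exact case.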
The least-squares solution is given by the column of $V$ that corresponds to the smallest diagonal entry of $D$; in ordered SVD this is again the last column.

In MATLAB, ordered singular value decomposition is available as a built-in routine (the second argument 0 requests the economy-size decomposition). The equation system is solved using the commands

    [U,D,V] = svd(A,0);
    x = V(:,end);
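As a quick check of both cases, here is a minimal MATLAB sketch. The matrices `A` and `B` are hypothetical examples chosen for illustration, not data from the original text: `A` has linearly dependent rows, so it has rank 2, and `B` adds one inconsistent equation to make the system over-constrained.

```matlab
% Exact case: the rows of this (hypothetical) matrix are linearly
% dependent, so A has rank 2 and one singular value is zero.
A = [1 2 3; 4 5 6; 7 8 9];
[U, D, V] = svd(A, 0);      % singular values are returned in descending order
x = V(:, end);              % column belonging to the smallest singular value
d = diag(D);
fprintf('smallest singular value: %g\n', d(end));      % zero up to rounding
fprintf('residual |A*x|:          %g\n', norm(A * x));
disp(x' * sqrt(6));         % proportional to [1 -2 1]; the sign is arbitrary

% Over-constrained case: one extra, inconsistent equation makes the
% matrix full rank, so no exact non-trivial solution exists.
B = [A; 1 0 0];
[U2, D2, V2] = svd(B, 0);
y = V2(:, end);             % least-squares solution with |y| = 1
d2 = diag(D2);
fprintf('smallest singular value: %g\n', d2(end));     % strictly positive
fprintf('residual |B*y|:          %g\n', norm(B * y)); % equals d2(end)
```

The solution is only determined up to sign, since both `x` and `-x` satisfy the constraint (2). In the over-constrained case the residual equals the smallest singular value, so that value is a direct measure of how well the equations can be satisfied.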