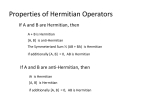

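SECTION 5.5 REDUCTION TO HESSENBERG AND TRIDIAGONAL FORMS

This section is concerned with an essential "pre-processing" step in computing the eigenvalues and eigenvectors of a matrix with the QR algorithm. This preliminary step is the reduction of $A$ to upper Hessenberg form $H$ by a similarity transformation:

$$
U^*AU = H =
\begin{bmatrix}
\times & \times & \cdots & \cdots & \times \\
\times & \times & & & \vdots \\
 & \times & \ddots & & \vdots \\
 & & \ddots & \times & \times \\
O & & & \times & \times
\end{bmatrix}
$$

Every entry of $H$ below the first subdiagonal is zero. This step is necessary to make the QR algorithm efficient.

If $A$ is Hermitian (that is, $A^* = A$), then

$$
H^* = (U^*AU)^* = U^*A^*U = U^*AU = H,
$$

so $H$ is Hermitian as well. Its entries above the first superdiagonal are the conjugates of the zero entries below the first subdiagonal, which implies that $H$ is tridiagonal. That is, a Hermitian, upper Hessenberg matrix must be tridiagonal.

UNITARY REDUCTION to upper Hessenberg form (page 350): uses reflectors

Use the basic theorem regarding reflectors: if $x$ is not a scalar multiple of the unit vector $e_1$, then let $\sigma = \operatorname{sgn}(x_1)\,\|x\|_2$ and let $u = x + \sigma e_1$. Then

$$
\left( I - \frac{2uu^T}{u^Tu} \right) x = -\sigma e_1 .
$$

FIRST STEP of the reduction: partition $A$ as

$$
A = \begin{bmatrix} a_{11} & c^T \\ b & \hat{A} \end{bmatrix}
$$

and choose a reflector $\hat{Q}_1$ such that $\hat{Q}_1 b = -\sigma_1 e_1$. Then

$$
\begin{bmatrix} 1 & 0 \\ 0 & \hat{Q}_1 \end{bmatrix}
A
\begin{bmatrix} 1 & 0 \\ 0 & \hat{Q}_1 \end{bmatrix}
=
\left[ \begin{array}{c|c}
a_{11} & * \\ \hline
-\sigma_1 & \\
0 & * \\
\vdots & \\
0 &
\end{array} \right] .
$$

The remaining steps are similar, using

$$
\begin{bmatrix} 1 & & \\ & 1 & \\ & & \hat{Q}_2 \end{bmatrix},
\qquad
\begin{bmatrix} 1 & & & \\ & 1 & & \\ & & 1 & \\ & & & \hat{Q}_3 \end{bmatrix},
$$

and so on. More details are on page 351, and an algorithm is given on page 352. The flop count is $\approx \frac{10n^3}{3}$.

If $A$ is Hermitian, then one can exploit symmetry and reduce the flop count to $\approx \frac{4n^3}{3}$. See pages 353-355.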
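The reduction described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the algorithm printed on page 352: the function names `reflector` and `hessenberg_reduce` are hypothetical, the conjugate transpose $u^*$ is used in place of $u^T$ so that complex matrices are handled, $\operatorname{sgn}(0)$ is taken to be 1, and no attempt is made to exploit Hermitian symmetry to save flops.

```python
import numpy as np

def reflector(x):
    """Return (u, sigma) such that (I - 2 u u*/(u* u)) x = -sigma e1.

    Hypothetical helper: sigma = sgn(x1) * ||x||_2 and u = x + sigma e1,
    with sgn(x1) = x1/|x1| and the assumed convention sgn(0) = 1.
    Returns (None, 0.0) when x is the zero vector.
    """
    x = np.asarray(x, dtype=complex)
    norm = np.linalg.norm(x)
    if norm == 0.0:
        return None, 0.0
    sgn = x[0] / abs(x[0]) if x[0] != 0 else 1.0
    sigma = sgn * norm
    u = x.copy()
    u[0] += sigma
    return u, sigma

def hessenberg_reduce(A):
    """Return (H, U) with U unitary and U* A U = H upper Hessenberg."""
    H = np.array(A, dtype=complex)   # working copy, overwritten step by step
    n = H.shape[0]
    U = np.eye(n, dtype=complex)     # accumulates Q_1 Q_2 ... Q_{n-2}
    for k in range(n - 2):
        # Reflector built from the part of column k below the diagonal.
        u, sigma = reflector(H[k + 1:, k])
        if u is None:
            continue                 # column already has the required zeros
        beta = 2.0 / np.vdot(u, u).real
        # Left multiplication by Q_k: only rows k+1.. change.
        H[k + 1:, :] -= beta * np.outer(u, u.conj() @ H[k + 1:, :])
        # Right multiplication by Q_k: only columns k+1.. change.
        H[:, k + 1:] -= beta * np.outer(H[:, k + 1:] @ u, u.conj())
        U[:, k + 1:] -= beta * np.outer(U[:, k + 1:] @ u, u.conj())
        # Entries below the subdiagonal are zero in exact arithmetic.
        H[k + 2:, k] = 0.0
    return H, U
```

Calling `H, U = hessenberg_reduce(A)` on a square array `A` should give `U.conj().T @ A @ U` equal to `H` up to rounding. When `A` is Hermitian, the entries of `H` above the first superdiagonal come out at rounding level, which matches the tridiagonal structure derived above.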