Data Errors, Model Errors, and Estimation Errors

Frontiers of Geophysical Inversion Workshop

Waterways Experiment Station

Vicksburg, MS 17-19 February 2002

P.B. Stark

Department of Statistics

University of California

Berkeley CA

www.stat.berkeley.edu/~stark

Acknowledgements

Excerpted from Evans, S.N. and Stark, P.B., 2001.

“Inverse Problems as Statistics,” Technical report 609,

Department of Statistics, University of California,

Berkeley.

Theory and Practice

In Theory, there is no difference between Theory

and Practice, but in Practice, there is.+

Reality. What a concept!++

Challenge: accept the complexity and unpredictability of

practice; develop useful (& computable) methodology.

+Jan L.A. de Snepscheut.

++Robin Williams

Forward Problems in Statistics

• Measurable space X of possible data.

• Set Θ of possible descriptions of the world: models.

• Family P = {Pθ : θ ∈ Θ} of probability distributions on X, indexed by models θ.

• Forward operator θ ↦ Pθ maps model θ into a probability measure on X.

Data X are a sample from Pθ.

Pθ is the whole story: stochastic variability in the “truth,” contamination by measurement error, systematic error, censoring, etc.

Models

Index set Θ usually has special structure.

For example, Θ could be a convex subset of a separable Banach space T. (geomag, seismo, grav, MT, …)

The forward mapping θ ↦ Pθ maps the index of the model to a probability distribution for the data.

The physical significance of Θ generally gives θ ↦ Pθ reasonable analytic properties, e.g., continuity.

Example: Function estimation w/ systematic and random error

Observe

Xj = f(tj) + ρj + εj, j = 1, 2, …, n,

with

• f ∈ C, a set of smooth functions on [0, 1]

• tj ∈ [0, 1]

• |ρj| ≤ 1, j = 1, 2, …, n

• εj iid N(0, 1)

Example, cont’d

Let Θ = C × [-1, 1]ⁿ, X = Rⁿ, θ = (f, ρ1, ρ2, …, ρn).

Pθ is the probability distribution on Rⁿ with density

$$p_\theta(x) = (2\pi)^{-n/2} \exp\Big\{ -\tfrac{1}{2} \sum_{j=1}^{n} \big( x_j - f(t_j) - \rho_j \big)^2 \Big\}.$$
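The example can be sketched numerically. The following is a minimal illustration, not part of the original talk: the particular f, design points tj, and systematic errors ρj are hypothetical choices. It draws data from Pθ, evaluates the log of the density above, and checks that a second model with the same values of f(tj) + ρj gives the same density, so the data alone cannot tell the two models apart.

```python
import math
import random

def simulate(f, t, rho, rng):
    """Draw X_j = f(t_j) + rho_j + eps_j with eps_j iid N(0, 1)."""
    return [f(tj) + rj + rng.gauss(0.0, 1.0) for tj, rj in zip(t, rho)]

def log_density(x, f, t, rho):
    """Log of the density of P_theta at x, for theta = (f, rho_1, ..., rho_n)."""
    n = len(x)
    ss = sum((xj - f(tj) - rj) ** 2 for xj, tj, rj in zip(x, t, rho))
    return -0.5 * n * math.log(2.0 * math.pi) - 0.5 * ss

# Hypothetical choices, for illustration only.
n = 5
t = [j / (n - 1) for j in range(n)]            # design points in [0, 1]
f = lambda s: math.sin(2.0 * math.pi * s)      # a smooth f
rho = [0.5, -0.5, 0.25, 0.0, -0.25]            # systematic errors, |rho_j| <= 1

rng = random.Random(0)
x = simulate(f, t, rho, rng)

# A different model with the same values of f(t_j) + rho_j.
f_shift = lambda s: f(s) + 0.25
rho_shift = [r - 0.25 for r in rho]            # still within [-1, 1]

print(log_density(x, f, t, rho))
print(log_density(x, f_shift, t, rho_shift))   # agrees up to rounding
```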

Forward Problems in Geophysics

Composition of steps:

– transform idealized description of Earth into perfect, noise-free,

infinite-dimensional data (“approximate physics”)

– censor perfect data to retain only a finite list of numbers, because

can only measure, record, and compute with such lists

– possibly corrupt the list with measurement error.

Equivalent to single-step procedure with corruption on par with

physics, and mapping incorporating the censoring.

Geophysical v. Statistical Forward Problems

Statistical framework for forward problems more

general:

Forward problems of Applied Math and Geophysics are

instances of statistical forward problems.

Parameters

A parameter of a model θ is the value g(θ) at θ of a continuous G-valued function g defined on Θ.

(g can be the identity.)

Inverse Problems

Observe data X drawn from distribution Pθ for some unknown θ ∈ Θ. (Assume Θ contains at least two points; otherwise, data superfluous.)

Use data X and the knowledge that θ ∈ Θ to learn about θ; for example, to estimate a parameter g(θ).

Applied Math and Statistical Perspectives

• Applied Math: recover a parameter of a pde or the solution of

an integral equation from infinitely many data, noise-free or

with deterministic error.

– Common issues: existence, uniqueness, construction, stability for

deterministic noise.

• Statistics: estimate or draw inference about parameter from

finitely many noisy data

– Common issues: identifiability, consistency, bias, variance,

efficiency, MSE, etc.

Many Connections

Identifiability—distinct parameter values yield distinct

probability distributions for the observables—similar to

uniqueness—forward operator maps at most one model into the

observed data.

Consistency—parameter can be estimated with arbitrary

accuracy as the number of data grows—related to stability of a

recovery algorithm—small changes in the data produce small

changes in the recovered model.

There are quantitative connections, too.

Geophysical Inverse Problems

• Inverse problems in geophysics often “solved” using applied math methods for ill-posed problems (e.g., Tikhonov regularization, analytic inversions)

• Those methods are designed to answer different questions; can behave poorly with data (e.g., bad bias & variance)

• Inference ≠ construction: statistical viewpoint more appropriate for interpreting geophysical data.

Elements of the Statistical View

Distinguish between characteristics of the problem, and

characteristics of methods used to draw inferences

One fundamental property of a parameter:

g is identifiable if for all η, υ ∈ Θ,

{g(η) ≠ g(υ)} ⇒ {Pη ≠ Pυ}.

In most inverse problems, g(θ) = θ is not identifiable, and few linear functionals of θ are identifiable.

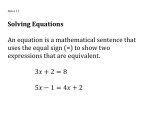

Decision Rules

A (randomized) decision rule

δ: X → M1(A)

x ↦ δx(·),

is a measurable mapping from the space X of possible data to the collection M1(A) of probability distributions on a separable metric space A of actions.

A non-randomized decision rule is a randomized decision rule that, to each x ∈ X, assigns a unit point mass at some value a = a(x) ∈ A.

Estimators

An estimator of a parameter g(θ) is a decision rule for

which the space A of possible actions is the space G of

possible parameter values.

ĝ=ĝ(X) is common notation for an estimator of g(θ).

Usually write a non-randomized estimator as a G-valued function of x instead of an M1(G)-valued function.

Comparing Decision Rules

Infinitely many decision rules and estimators.

Which one to use?

The best one!

But what does best mean?

Loss and Risk

• Formulate as 2-player game: Nature v. Statistician.

• Nature picks θ from Θ. θ is secret, but statistician knows Θ.

• Statistician picks δ from a set D of decision rules. δ is secret.

• Generate data X from Pθ, apply δ.

• Statistician pays loss l (θ, δ(X)). l should be dictated by

scientific context, but…

• Risk is expected loss: r(θ, δ) = Eθl (θ, δ(X))

• Good decision rule has small risk, but what does small mean?
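A risk function can be approximated by simulation. The sketch below is an illustration under assumptions that are not in the talk: a single observation X ~ N(θ, 1), squared-error loss, and two hypothetical non-randomized rules (report x, or report x/2). For these choices the exact risks are 1 and 1/4 + θ²/4, so neither rule has smaller risk at every θ.

```python
import random

def mc_risk(theta, rule, loss, n_rep=20000, seed=0):
    """Monte Carlo estimate of r(theta, rule) = E_theta loss(theta, rule(X)),
    assuming X ~ N(theta, 1)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_rep):
        x = rng.gauss(theta, 1.0)      # generate data from P_theta
        total += loss(theta, rule(x))  # statistician pays the loss
    return total / n_rep

squared_error = lambda theta, a: (a - theta) ** 2
identity_rule = lambda x: x            # hypothetical rule: report the datum
shrink_rule = lambda x: 0.5 * x        # hypothetical rule: shrink toward 0

for theta in (0.0, 1.0, 3.0):
    print(theta,
          mc_risk(theta, identity_rule, squared_error),
          mc_risk(theta, shrink_rule, squared_error))
```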

Strategy

Rare that a single decision rule has smallest risk for every θ ∈ Θ.

• Decision is admissible if not dominated by another.

• Minimax decision minimizes supθ∈Θ r(θ, δ) over δ ∈ D

• Bayes decision minimizes ∫Θ r(θ, δ) π(dθ) over δ ∈ D for a given prior probability distribution π on Θ.

Minimax is Bayes for least favorable prior

Pretty generally for convex Θ, D, concave-convexlike r,

$$\inf_{\delta \in D} \sup_{\theta \in \Theta} r(\theta, \delta) = \sup_{\pi} \inf_{\delta \in D} \int_{\Theta} r(\theta, \delta)\, d\pi(\theta).$$

If minimax risk >> Bayes risk, prior π controls the

apparent uncertainty of the Bayes estimate.
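A toy calculation makes the comparison concrete. The setting is an assumption chosen for illustration, not taken from the talk: X ~ N(θ, 1), Θ = [-τ, τ] with τ = 2, squared-error loss, and D restricted to the linear rules x ↦ cx with 0 ≤ c ≤ 1, whose exact risk is c² + (1 - c)²θ². Within this restricted class, the minimax risk coincides with the Bayes risk for a two-point prior at ±τ, while the Bayes risk for a uniform prior is noticeably smaller.

```python
def risk(theta, c):
    """Exact squared-error risk of the rule x -> c * x when X ~ N(theta, 1)."""
    return c ** 2 + (1.0 - c) ** 2 * theta ** 2

tau = 2.0                                                   # Theta = [-tau, tau]
rules = [i / 1000 for i in range(1001)]                     # D = {x -> c x : 0 <= c <= 1}
thetas = [-tau + 2.0 * tau * i / 400 for i in range(401)]   # grid on Theta

def worst_case_risk(c):
    """Supremum of the risk over the grid on Theta."""
    return max(risk(th, c) for th in thetas)

def bayes_risk(c, prior):
    """Average risk; prior is a list of (theta, weight) pairs with weights summing to 1."""
    return sum(w * risk(th, c) for th, w in prior)

uniform_prior = [(th, 1.0 / len(thetas)) for th in thetas]  # discretized uniform prior
two_point_prior = [(-tau, 0.5), (tau, 0.5)]                 # mass at the endpoints

minimax_risk = min(worst_case_risk(c) for c in rules)
bayes_uniform = min(bayes_risk(c, uniform_prior) for c in rules)
bayes_two_point = min(bayes_risk(c, two_point_prior) for c in rules)

print(minimax_risk, bayes_uniform, bayes_two_point)
```

Restricting D to linear rules keeps the risk available in closed form; the minimax rule over all measurable rules for this problem is different, so the numbers here only illustrate the relationship between the two criteria.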

Common Risk: Mean Distance Error (MDE)

Let dG denote the metric on G.

MDE at θ of estimator ĝ of g is

MDEθ(ĝ, g) = Eθ [dG(ĝ, g(θ))].

When metric derives from norm, MDE is called mean

norm error (MNE).

When the norm is Hilbertian, the expected squared norm error, Eθ ‖ĝ − g(θ)‖², is called mean squared error (MSE).

Bias

When G is a Banach space, can define bias at θ of ĝ:

biasθ(ĝ, g) = Eθ [ĝ - g(θ)]

(when the expectation is well-defined).

• If biasθ(ĝ, g) = 0, say ĝ is unbiased at θ (for g).

• If ĝ is unbiased at θ for g for every θ, say ĝ is unbiased for

g. If such ĝ exists, g is unbiasedly estimable.

• If g is unbiasedly estimable then g is identifiable.
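The last implication follows in one step from the definitions of unbiasedness and identifiability. A short derivation, written in the notation of these slides:

```latex
\begin{align*}
&\text{Suppose } \hat g \text{ is unbiased for } g:\quad
  E_\theta[\hat g(X)] = g(\theta) \quad \text{for all } \theta \in \Theta. \\
&\text{If } P_\eta = P_\upsilon, \text{ then }
  g(\eta) = E_\eta[\hat g(X)] = E_\upsilon[\hat g(X)] = g(\upsilon). \\
&\text{Contrapositive: } g(\eta) \neq g(\upsilon) \;\Rightarrow\; P_\eta \neq P_\upsilon,
  \text{ i.e., } g \text{ is identifiable.}
\end{align*}
```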

More Notation

Let T be a separable Banach space, T* its normed dual.

Write the pairing between T and T*

<•, •>: T* × T → R.

Linear Forward Problems

A forward problem is linear if

• Θ is a subset of a separable Banach space T

• For some fixed sequence (κj), j = 1, …, n, of elements of T*, X = (Xj), j = 1, …, n, where

Xj = <κj, θ> + εj, θ ∈ Θ, and ε = (εj), j = 1, …, n, is a vector of stochastic errors whose distribution does not depend on θ (so X = Rⁿ).

Linear Forward Problems, contd.

• The linear functionals {κj} are the “representers”

• The distribution Pθ is the probability distribution of X.

Typically, dim(Θ) = ∞; at the very least, n < dim(Θ), so estimating θ is an underdetermined problem.

Define

K : T → Rⁿ

θ ↦ (<κj, θ>), j = 1, …, n.

Abbreviate forward problem by X = Kθ + ε, θ ∈ Θ.
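A minimal sketch of a linear forward problem, under assumptions that are not in the talk: models are functions on [0, 1], the pairing <κj, θ> is the integral of κj·θ over [0, 1] (approximated by the midpoint rule), the representers are hypothetical boxcar averages, and the errors are independent N(0, 0.1²).

```python
import random

def boxcar(j, n):
    """Hypothetical representer kappa_j: local average over [j/n, (j+1)/n)."""
    return lambda s: float(n) if j / n <= s < (j + 1) / n else 0.0

def make_forward_operator(kernels, m=1000):
    """K: theta -> (<kappa_j, theta>)_j, with the pairing taken to be the
    integral of kappa_j * theta over [0, 1], by the midpoint rule on m cells."""
    grid = [(i + 0.5) / m for i in range(m)]
    def K(theta):
        return [sum(k(s) * theta(s) for s in grid) / m for k in kernels]
    return K

n = 4
K = make_forward_operator([boxcar(j, n) for j in range(n)])
theta = lambda s: s                      # hypothetical model: theta(s) = s
noise_free = K(theta)                    # local averages of theta: (j + 0.5) / n

rng = random.Random(0)
x = [v + rng.gauss(0.0, 0.1) for v in noise_free]   # X = K theta + eps
print(noise_free)
print(x)
```

Only n = 4 numbers are recorded about a function on [0, 1], which is the sense in which estimating θ itself is underdetermined.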

Linear Inverse Problems

Use X = Kθ + ε, and the knowledge θ ∈ Θ to estimate or draw inferences about g(θ).

Probability distribution of X depends on θ only through Kθ, so if there are two points θ1, θ2 ∈ Θ such that Kθ1 = Kθ2 but g(θ1) ≠ g(θ2), then g(θ) is not identifiable.
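A finite-dimensional toy case shows the argument at work. The matrix K, the models, and the two parameters below are hypothetical, with Θ = R³ and n = 2 data: two models that differ by a vector in the null space of K give the same Kθ, so a parameter that separates them cannot be identifiable, while a linear combination of the rows of K takes the same value on both.

```python
def apply(K, theta):
    """Matrix-vector product K theta, with K given as a list of rows."""
    return [sum(k * th for k, th in zip(row, theta)) for row in K]

# Hypothetical forward operator: 2 data, 3 unknowns.
K = [[1.0, 0.0, 1.0],
     [0.0, 1.0, 1.0]]

theta1 = [1.0, 2.0, 3.0]
null_vec = [1.0, 1.0, -1.0]                      # K null_vec = 0
theta2 = [a + b for a, b in zip(theta1, null_vec)]

g_first = lambda th: th[0]                       # first component of theta
g_diff = lambda th: th[0] - th[1]                # (row 1 of K minus row 2 of K) applied to theta

print(apply(K, theta1), apply(K, theta2))        # same noise-free data
print(g_first(theta1), g_first(theta2))          # differ: g_first is not identifiable
print(g_diff(theta1), g_diff(theta2))            # agree, as they must for a function of K theta
```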

Backus-Gilbert++: Necessary conditions

Let g be an identifiable real-valued parameter. Suppose ∃ θ0 ∈ Θ, a symmetric convex set Ť ⊂ T, c ∈ R, and ğ: Ť → R such that:

1. θ0 + Ť ⊂ Θ

2. For t ∈ Ť, g(θ0 + t) = c + ğ(t), and ğ(-t) = -ğ(t)

3. ğ(a1t1 + a2t2) = a1ğ(t1) + a2ğ(t2), t1, t2 ∈ Ť, a1, a2 ≥ 0, a1 + a2 = 1, and

4. supt∈Ť |ğ(t)| < ∞.

Then ∃ 1×n matrix Λ s.t. the restriction of ğ to Ť is the restriction of Λ·K to Ť.

Backus-Gilbert++: Sufficient Conditions

Suppose g = (gi), i = 1, …, m, is an Rᵐ-valued parameter that can be written as the restriction to Θ of Λ·K for some m×n matrix Λ.

Then

1. g is identifiable.

2. If E[ε] = 0, Λ·X is an unbiased estimator of g.

3. If, in addition, ε has covariance matrix Σ = E[εεᵀ], the covariance matrix of Λ·X is Λ·Σ·Λᵀ whatever be Pθ.
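Items 2 and 3 can be checked by simulation in a small case. Everything specific here is an assumption made for illustration: a 2×3 matrix K, a 1×2 matrix Λ (so m = 1), independent Gaussian errors with standard deviations 0.5 and 2, and one fixed θ. The sample mean of Λ·X should be close to g(θ) = Λ·Kθ, and its sample variance close to Λ·Σ·Λᵀ.

```python
import random

# Hypothetical problem: 2 data, 3 unknowns, one linear parameter.
K = [[1.0, 0.0, 1.0],
     [0.0, 1.0, 1.0]]
Lam = [1.0, -1.0]            # g = Lam . K, i.e. g(theta) = theta[0] - theta[1]
sigma = [0.5, 2.0]           # error standard deviations; Sigma = diag(sigma^2)
theta = [1.0, 2.0, 3.0]

def forward(theta, rng):
    """One draw of X = K theta + eps with independent zero-mean Gaussian errors."""
    return [sum(k * th for k, th in zip(row, theta)) + s * rng.gauss(0.0, 1.0)
            for row, s in zip(K, sigma)]

def estimate(x):
    """The estimator Lam . X."""
    return sum(l * xj for l, xj in zip(Lam, x))

rng = random.Random(1)
draws = [estimate(forward(theta, rng)) for _ in range(50000)]
mean = sum(draws) / len(draws)
var = sum((d - mean) ** 2 for d in draws) / (len(draws) - 1)

g_true = theta[0] - theta[1]                                  # Lam . K theta
var_theory = sum((l * s) ** 2 for l, s in zip(Lam, sigma))    # Lam Sigma Lam^T
print(mean, g_true)
print(var, var_theory)
```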

Corollary: Backus-Gilbert Theory

Let T be a Hilbert space; let Θ = T; let g ∈ T = T* be a linear parameter; and let {κj}, j = 1, …, n, ⊂ T*.

The parameter g(θ) is identifiable iff g = Λ.K for some

1×n matrix Λ.

In that case, if E[ε] = 0, then ĝ = Λ.X is unbiased for g.

If, in addition, ε has covariance matrix Σ = E[εεT], then

the MSE of ĝ is Λ.Σ.ΛT.

Consistency in Linear Inverse Problems

• Xi = <κi, θ> + εi, i = 1, 2, 3, …

θ ∈ Θ, a subset of separable Banach space T

{κi} ⊂ T*, linear, bounded on Θ

{εi} iid

• θ consistently estimable w.r.t. weak topology iff ∃ {Tk}, Tk Borel function of X1, . . . , Xk, s.t. ∀ θ ∈ Θ, ∀ ε > 0, ∀ τ ∈ T*,

limk Pθ{|<τ, Tk> − <τ, θ>| > ε} = 0

Importance of the Error Distribution

• µ a prob. measure on R; µa(B) = µ(B − a), a ∈ R

• Hellinger distance

$$\delta(a, b) = \Big\{ \tfrac{1}{2} \int \big( \sqrt{d\mu_a} - \sqrt{d\mu_b} \big)^2 \Big\}^{1/2}$$

• Pseudo-metric on T**:

$$D_k(t_1, t_2) = \Big\{ \frac{1}{k} \sum_{i=1}^{k} \delta^2(t_1 \kappa_i, t_2 \kappa_i) \Big\}^{1/2}$$

• If restriction to Θ converges to metric compatible with weak topology, can estimate θ consistently in weak topology.

• For given sequence of functionals {κi}, µ rougher ⇒ consistent estimation easier.
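The role of the error distribution can be seen by computing the Hellinger distance between two shifts of the same density. The sketch below is an illustration with assumed error laws that are not named in the talk: a standard normal density (smooth) and a uniform density on [0, 1) (discontinuous, so "rougher"). For the normal, δ²(a, b) = 1 − exp(−(a − b)²/8), so δ shrinks linearly in small shifts; for the uniform, δ²(a, b) = |a − b| when |a − b| ≤ 1, so δ shrinks only like the square root, and small shifts are easier to tell apart.

```python
import math

def hellinger(pdf, a, b, lo=-10.0, hi=10.0, m=20000):
    """Midpoint-rule approximation of the Hellinger distance between
    the shifts of the density pdf by a and by b."""
    h = (hi - lo) / m
    total = 0.0
    for i in range(m):
        x = lo + (i + 0.5) * h
        total += (math.sqrt(pdf(x - a)) - math.sqrt(pdf(x - b))) ** 2
    return math.sqrt(0.5 * total * h)

# Assumed error densities, for illustration only.
normal_pdf = lambda x: math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)
uniform_pdf = lambda x: 1.0 if 0.0 <= x < 1.0 else 0.0

for shift in (0.1, 0.01):
    print(shift,
          hellinger(normal_pdf, 0.0, shift),
          hellinger(uniform_pdf, 0.0, shift))
```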

Summary

• Statistical viewpoint is useful abstraction. Physics in map θ ↦ Pθ; prior information in constraint θ ∈ Θ. Represents systematic and stochastic errors, censoring, etc.

• Separating “model” from parameters of interest is useful:

Sabatier’s “well posed questions.”

• “Solving” inverse problem means different things to different

audiences. Thinking about measures of performance is useful.

• Difficulty of problem ≠ performance of specific method.