GroveX10Overview - The X10 Programming Language

... Flash forward a few years… Big Data and Commercial HPC ...

CGL Requirements Definition V5.0

... In the time since the fourth major version of the Carrier Grade Linux Specification has been published there has been a great shift in both the telecommunication industry and the open source community. Most consumers of mobile communications devices see them as conduits for communication, be that vo ...

Lecture 5 Socket Programming CSE524, Fall 2002

... copies nbytes of data into buf; returns the address of the client (cli); returns the length of cli (cli_len); don’t worry about flags ...

Hyper Converged 250 System for VMware vSphere Installation Guide

... Certificate error displays during login to vCenter Web Client; Deployment process hangs during system configuration; Deployment process stalls with 57 seconds remaining and then times ...

Clustered Data ONTAP 8.3 Network Management Guide

... How to use VLANs for tagged and untagged network traffic; Creating a VLAN; Deleting a VLAN ...

CS2504, Spring 2007 ©Dimitris Nikolopoulos

... Each layer provides an interface to access the computer and hides implementation details from the layers above it. Examples: binary code, instruction set, high-level programming language, component programming. Layering simplifies computing tasks such as programming, compiling, and running programs. CS has been ra ...

client server computing

... – Run own copy of an operating system. – Run one or more applications using the client machine's CPU, memory. – Application communicates with DBMS server running on server machine through a Database Driver – Database driver (middleware) makes a connection to the DBMS server over a network. www.notes ...

Parallel Programming with MPI

... a subroutine library with all MPI functions include files for the calling application program some startup script (usually called mpirun, but not standardized) ...

Chapter 19 Java Data Structures

... Each node contains the element and a data field named next that points to the next element. If the node is the last in the list, its pointer data field next contains the value null. You can use this property to detect the last node. For example, you may write the following loop to traverse all the n ...
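
The traversal idea in that excerpt can be sketched as follows. This is a minimal illustration in JavaScript rather than the chapter's Java, and the names `Node`, `element`, `next`, and `toArray` are assumptions chosen for the example: each node stores an element and a `next` reference, and the loop stops as soon as `next` is `null`, which marks the last node.

```javascript
// Illustrative singly linked list node: holds an element and a
// reference to the next node (null marks the last node).
class Node {
  constructor(element, next = null) {
    this.element = element;
    this.next = next;
  }
}

// Hypothetical helper: walk the list from head until current
// becomes null, collecting each element in order.
function toArray(head) {
  const result = [];
  let current = head;
  while (current !== null) {
    result.push(current.element);
    current = current.next;
  }
  return result;
}

// Build the list 1 -> 2 -> 3 and traverse it.
const head = new Node(1, new Node(2, new Node(3)));
console.log(toArray(head)); // [ 1, 2, 3 ]
```

An empty list is represented by a `null` head; in that case the loop body never runs and `toArray(null)` returns an empty array.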

MapReduce on Multi-core

... this programming model is that it allows programmers to define the computational problem using a functional-style algorithm. The runtime system automatically parallelises this algorithm by distributing it across a large cluster of scalable nodes. The main advantage of using this model is the simplicit ...
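
To make the model concrete, here is a hedged, single-process sketch of a word count written in map/reduce style. The names `mapFn`, `reduceFn`, and `mapReduce` are illustrative assumptions, not the API of any particular MapReduce runtime. A real runtime would distribute the map and reduce calls across nodes or cores and perform the grouping step itself; here everything runs sequentially, purely to show the data flow.

```javascript
// map: one input record -> list of [key, value] pairs
function mapFn(line) {
  return line
    .toLowerCase()
    .split(/\s+/)
    .filter((word) => word.length > 0)
    .map((word) => [word, 1]);
}

// reduce: one key and all of its values -> a single result
// (the key itself is not needed for a word count)
function reduceFn(word, values) {
  return values.reduce((sum, v) => sum + v, 0);
}

// Driver: map every record, group values by key (the "shuffle"),
// then reduce each group independently.
function mapReduce(records, map, reduce) {
  const groups = new Map();
  for (const record of records) {
    for (const [key, value] of map(record)) {
      if (!groups.has(key)) groups.set(key, []);
      groups.get(key).push(value);
    }
  }
  const output = new Map();
  for (const [key, values] of groups) {
    output.set(key, reduce(key, values));
  }
  return output;
}

const counts = mapReduce(["the cat sat", "the cat ran"], mapFn, reduceFn);
console.log(counts.get("the"), counts.get("sat")); // 2 1
```

Because each `reduce` call sees only one key's values, the groups can be processed independently, which is what allows a runtime to parallelise them.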

PDF - 4up

... Each node contains the element and a data field named next that points to the next element. If the node is the last in the list, its pointer data field next contains the value null. You can use this property to detect the last node. For example, you may write the following loop to traverse all the n ...

Lecture 1 - DePaul University

... It’s not enough to just learn how to use Windows or browse the web (that’s all fun, but that’s not Computer Science!) Computer Science involves all the work needed to make things happen with a computer: developing algorithms, and how to make machines perform them. CSC255-702/703 - CTI/DePaul ...

Document

... array of RISC microprocessors with the following communication mechanisms: – A low-latency, high-bandwidth communications mechanism that allows each processor to access data stored in other processors – A fast global synchronization mechanism that allows the entire machine to be brought to a defined sta ...

Programming High Performance Applications using Components

... High-Performance applications and code coupling The CORBA Component Model CCM in the context of HPC GridCCM: Encapsulation of SPMD parallel codes into components Runtime support for GridCCM components ...

Characteristics of virtualized environment

... CPU installed on the host is only one set, but each VM that runs on the host ...

PowerPoint - UBC Department of Computer Science

... MPI Messages Using Same Context, Two Processes Process X ...

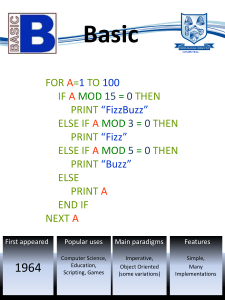

int i = 1

... console.log("FizzBuzz"); } else if ( a % 3 === 0 ) { console.log("Fizz"); } else if (a % 5 === 0 ) { console.log("Buzz"); ...
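
A complete, runnable version of the FizzBuzz logic excerpted above might look like the sketch below. The condition guarding the `"FizzBuzz"` branch is cut off in the excerpt, so this sketch assumes the usual divisible-by-both check (`a % 15 === 0`). The helper name `fizzBuzz` and the upper bound of 15 are also assumptions. Returning a string instead of logging inside each branch keeps the logic testable, while the loop still prints one line per number starting from `i = 1`.

```javascript
// Return the FizzBuzz word (or the number itself) for one integer.
function fizzBuzz(a) {
  if (a % 15 === 0) {
    return "FizzBuzz"; // divisible by both 3 and 5
  } else if (a % 3 === 0) {
    return "Fizz";
  } else if (a % 5 === 0) {
    return "Buzz";
  }
  return String(a);
}

// Print the sequence for 1..15.
for (let i = 1; i <= 15; i++) {
  console.log(fizzBuzz(i));
}
```

Checking 15 first matters: if the `% 3` test came first, multiples of 15 would print `"Fizz"` and never reach the combined case.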

Network Load Balancing - Security Audit Systems

... widely available for most client/server applications. Instead, Network Load Balancing provides the mechanisms needed by application monitors to control cluster operations—for example, to remove a host from the cluster if an application fails or displays erratic behavior. When an application failure ...
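
The split described there, where the cluster mechanism hands out work and a separate application monitor decides when a host must leave, can be illustrated with a hedged sketch. `RoundRobinBalancer`, `nextHost`, and `removeHost` are hypothetical names, not part of any Network Load Balancing API. Central round-robin selection is an assumption made for simplicity and does not model how Network Load Balancing itself assigns client traffic to hosts.

```javascript
// Hypothetical round-robin balancer over a set of cluster hosts.
// An external application monitor (not shown) calls removeHost()
// when the application on a host fails, taking it out of rotation.
class RoundRobinBalancer {
  constructor(hosts) {
    this.hosts = [...hosts];
    this.index = 0; // position of the next host to hand out
  }

  // Return the next host in rotation; throw if none remain.
  nextHost() {
    if (this.hosts.length === 0) {
      throw new Error("no hosts available");
    }
    const host = this.hosts[this.index % this.hosts.length];
    this.index = (this.index + 1) % this.hosts.length;
    return host;
  }

  // Called by the monitor: drop a failed host from the cluster.
  removeHost(host) {
    const pos = this.hosts.indexOf(host);
    if (pos === -1) return;
    this.hosts.splice(pos, 1);
    // Shift the cursor so the rotation continues where it left off.
    if (pos < this.index) this.index -= 1;
  }
}

const balancer = new RoundRobinBalancer(["A", "B", "C"]);
console.log(balancer.nextHost(), balancer.nextHost()); // A B
balancer.removeHost("B"); // monitor detected an application failure on B
console.log(balancer.nextHost(), balancer.nextHost()); // C A
```

Keeping failure detection outside the balancer mirrors the excerpt's point: the balancing layer only provides the removal mechanism, while deciding that an application has failed is left to the monitor.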

VMWARE PUBLIC CLOUD INFRASTRUCTURE – Development environment design and implementation

... Storage connectivity is configured to use Internet Small Computer System Interface, more commonly known as iSCSI. iSCSI is an IP-based storage networking standard that allows servers to use a common, shared storage area network (SAN). All virtual machines are stored on shared storage to allow any hy ...

Oracle RAC From Dream To Production

... This approach is very powerful but complex. You can create very complex environments – multiple NICs, switches, disks etc. ...

PPT

... when rate of information transmission is low enough that the overhead of explicit route discovery/maintenance incurred by other protocols is relatively higher ...

OCR Computer Science -PLC – the big one

... AO1: Demonstrate knowledge and understanding of the key concepts and principles of Computer Science. AO2: Apply knowledge and understanding of key concepts and principles of Computer Science. AO3: Analyse problems in computational terms: • to make reasoned judgements • to design, program, evaluate a ...

Video Surveillance EMC Storage with Honeywell Digital Video Manager Sizing Guide

... configuration. Server and network hardware can also affect performance. Performance varies depending on the specific hardware and software and might be different from what is outlined here. If your environment uses similar hardware and network topology, performance results should be similar. ...

Computer cluster

A computer cluster consists of a set of loosely or tightly connected computers that work together so that, in many respects, they can be viewed as a single system. Unlike grid computers, computer clusters have each node set to perform the same task, controlled and scheduled by software. The components of a cluster are usually connected to each other through fast local area networks (LANs), with each node (a computer used as a server) running its own instance of an operating system. In most circumstances, all of the nodes use the same hardware and the same operating system, although in some setups (e.g. using Open Source Cluster Application Resources (OSCAR)), different operating systems and/or different hardware can be used on each computer.

Clusters are usually deployed to improve performance and availability over that of a single computer, while typically being much more cost-effective than single computers of comparable speed or availability.

Computer clusters emerged from the convergence of a number of computing trends, including the availability of low-cost microprocessors, high-speed networks, and software for high-performance distributed computing. They have a wide range of applicability and deployment, ranging from small business clusters with a handful of nodes to some of the fastest supercomputers in the world, such as IBM's Sequoia. The range of suitable applications is nonetheless limited, since the software must be purpose-built for each task; as a result, clusters cannot be used for casual computing tasks.