Lecture 8 - HMC Math

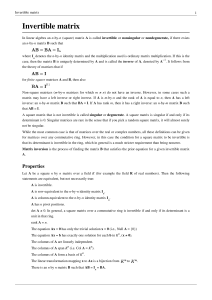

... Since $A\vec{x} = \vec{0}$ has only the trivial solution $\vec{x} = \vec{0}$, by the Fundamental Thm of Inverses, we have that $A$ is invertible, i.e., $A^{-1}$ exists. Thus, $(BA)A^{-1} = IA^{-1} \implies B\underbrace{(AA^{-1})}_{I} = A^{-1} \implies B = A^{-1}$. ...

Chapter 18. Introduction to Four Dimensions Linear algebra in four

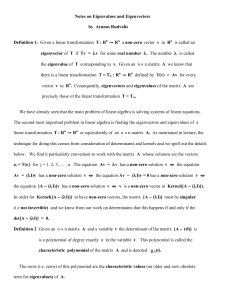

... Cramer’s formula involves sixteen $3 \times 3$ determinants and one $4 \times 4$ determinant. Usually one asks a computer to work this out. However, if the matrix has a lot of zeros or has some pattern, then the determinants $\det A_{ij}$, hence $A^{-1}$, may be computed by hand without too much trouble. Eigenvalues and Eigen ...
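The count in this snippet (sixteen $3 \times 3$ determinants and one $4 \times 4$ determinant) can be illustrated with a minimal sketch of the cofactor formula for the inverse. It assumes that $A_{ij}$ denotes the matrix with row $i$ and column $j$ deleted, which the snippet does not state. The function names `minor`, `det`, and `inverse_by_cofactors` are made up for this illustration.

```python
from fractions import Fraction


def minor(m, i, j):
    """Return a copy of m with row i and column j deleted."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(m) if k != i]


def det(m):
    """Determinant by cofactor expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    return sum((-1) ** j * m[0][j] * det(minor(m, 0, j)) for j in range(len(m)))


def inverse_by_cofactors(m):
    """Inverse as the transposed cofactor matrix divided by det(m).

    For a 4 x 4 input this evaluates one 4 x 4 determinant (d below)
    and sixteen 3 x 3 determinants (one per entry of the inverse).
    """
    n = len(m)
    d = det(m)
    if d == 0:
        raise ValueError("matrix is singular")
    # Entry (i, j) of the inverse uses the minor with row j and column i removed.
    return [
        [Fraction((-1) ** (i + j) * det(minor(m, j, i))) / d for j in range(n)]
        for i in range(n)
    ]


# A matrix with many zeros, the case the snippet says is manageable by hand.
A = [
    [2, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 4],
]
A_inv = inverse_by_cofactors(A)
```

Exact fractions are used so that the product of `A` and `A_inv` can be compared with the identity matrix without rounding error. The recursive determinant is only practical for small matrices.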

Properties of Matrices

... To find the ith row, jth column element of AB, multiply each element in the ith row of A by the corresponding element in the jth column of B. (Note the shaded areas in the matrices below.) The sum of these products will give the row i, column j element of AB. ...
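The row-by-column rule described in this snippet can be sketched in a few lines. This is a minimal illustration using plain nested lists with 0-based indices; the names `matmul_entry` and `matmul` are not from the source.

```python
def matmul_entry(a, b, i, j):
    """Row i, column j element of AB: the sum of products of row i of A
    with the corresponding elements of column j of B."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a, b):
    """Full product AB, built one entry at a time."""
    if len(a[0]) != len(b):
        raise ValueError("columns of A must match rows of B")
    return [
        [matmul_entry(a, b, i, j) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
AB = matmul(A, B)  # [[19, 22], [43, 50]]
```

For example, the top-left entry is 1·5 + 2·7 = 19, which pairs the first row of `A` with the first column of `B`.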

Principles of Scientific Computing Linear Algebra II, Algorithms

... The right side requires $n$ multiplies and about $n$ adds for each $j$ and $k$. That makes $n \times n^2 = n^3$ multiplies and adds in total. The formulas (3) have (almost) exactly $2n^3$ flops in this sense. More generally, suppose $A$ is $n \times m$ and $B$ is $m \times p$. Then $AB$ is $n \times p$, and it takes (approximately) $m$ flops to ca ...
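The operation count in this snippet can be checked with a small sketch that tallies multiplies and adds while forming the product. The formulas (3) are not shown in the snippet, so the loop below is an assumed standard triple loop, and `matmul_with_counts` is a name invented for this illustration. Each entry is started from its first product, so it costs $m$ multiplies and $m - 1$ adds, which is why the total is "almost" rather than exactly $2nmp$.

```python
def matmul_with_counts(a, b):
    """Multiply an n x m matrix by an m x p matrix and count the flops.

    Returns (product, multiplies, adds).
    """
    n, m, p = len(a), len(b), len(b[0])
    multiplies = 0
    adds = 0
    c = [[0] * p for _ in range(n)]
    for j in range(n):
        for k in range(p):
            # Start the sum with the first product: one multiply, no add.
            total = a[j][0] * b[0][k]
            multiplies += 1
            for l in range(1, m):
                total += a[j][l] * b[l][k]
                multiplies += 1
                adds += 1
            c[j][k] = total
    return c, multiplies, adds


n = 3
ones = [[1] * n for _ in range(n)]
_, mults, adds = matmul_with_counts(ones, ones)
# mults == n**3 and adds == n**2 * (n - 1), just under 2 * n**3 in total
```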

EppDm4_10_03

... abstract settings, the definition for matrices whose entries are real numbers will be sufficient for our applications. The product of two matrices is built up of scalar or dot products of their individual rows and columns. ...

Matrix completion

In mathematics, matrix completion is the process of adding entries to a matrix which has some unknown or missing values. In general, given no assumptions about the nature of the entries, matrix completion is theoretically impossible, because the missing entries could be anything. However, given a few assumptions about the nature of the matrix, various algorithms allow it to be reconstructed. Some of the most common assumptions are that the matrix is low-rank, that the observed entries are sampled uniformly at random, and that the singular vectors are separated from the canonical vectors. A well-known method for reconstructing low-rank matrices, based on convex optimization of the nuclear norm, was introduced by Emmanuel Candès and Benjamin Recht.
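To make the role of the low-rank assumption concrete, here is a toy sketch for the simplest case. It is not the nuclear-norm method mentioned above. It assumes the matrix is exactly rank 1 with no zero entries, so that every entry factors as $M_{ij} = u_i v_j$, and it recovers the factors by propagating through the observed entries. The function name `complete_rank_one` and the use of `None` for missing values are choices made for this illustration.

```python
from fractions import Fraction


def complete_rank_one(m):
    """Fill the None entries of a matrix assumed to be exactly rank 1
    with nonzero entries, writing M[i][j] = u[i] * v[j].

    Observed entries are assumed to be consistent with a rank-1 matrix;
    this sketch does not check that.
    """
    rows, cols = len(m), len(m[0])
    u = [None] * rows
    v = [None] * cols
    u[0] = Fraction(1)  # fix the scale: u and v are only defined up to a factor
    changed = True
    while changed:
        changed = False
        for i in range(rows):
            for j in range(cols):
                if m[i][j] is None:
                    continue
                if u[i] is not None and v[j] is None:
                    v[j] = Fraction(m[i][j]) / u[i]
                    changed = True
                elif v[j] is not None and u[i] is None:
                    u[i] = Fraction(m[i][j]) / v[j]
                    changed = True
    if any(x is None for x in u + v):
        raise ValueError("observed entries do not determine the completion")
    return [[u[i] * v[j] for j in range(cols)] for i in range(rows)]


# Three of nine entries are missing; the rank-1 assumption pins them down.
observed = [
    [4, 5, None],
    [None, 10, 12],
    [12, None, 18],
]
completed = complete_rank_one(observed)
# completed equals [[4, 5, 6], [8, 10, 12], [12, 15, 18]]
```

Without the rank-1 assumption the three missing entries could be anything, which is the impossibility the paragraph above describes. If too few entries are observed (for instance, an entire row is missing), the sketch raises an error instead of guessing.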