Lesson 96 – Discrete Random Variables

... (b) The probability of getting a ‘double’ with two of these dice. Compare with the ‘normal’ probability of getting a double ...

A Survey of Probability Concepts

... are defective. What is the probability of getting a defective roll the first time we draw, followed by a defective roll the second time we draw (assuming there are no replacements)? 1st film being defective: P(D1)=3/10 (3 out of 10 are defective) 2nd film being defective: P(D2|D1)= 2/9 (now 2 out of ...
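
The multiplication rule used in this excerpt can be checked with exact fractions. The following is a minimal sketch that assumes only the counts quoted above (10 rolls, 3 defective, drawing without replacement); the variable names are illustrative and do not come from the source.

```python
from fractions import Fraction

# Counts taken from the excerpt: 10 rolls of film, 3 of them defective.
total_rolls = 10
defective_rolls = 3

# P(D1): the first roll drawn is defective.
p_d1 = Fraction(defective_rolls, total_rolls)

# P(D2 | D1): one defective roll is already gone, so 2 of the remaining 9 are defective.
p_d2_given_d1 = Fraction(defective_rolls - 1, total_rolls - 1)

# Multiplication rule for dependent events: P(D1 and D2) = P(D1) * P(D2 | D1).
p_both = p_d1 * p_d2_given_d1
print(p_both)  # 1/15
```

Using `Fraction` rather than floats keeps the result exact, which makes it easy to compare with a hand calculation.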

Math 7 (Holt)

... 1. A bag contains five yellow disks, eight red disks, four orange disks, and two blue disks. All the disks are the same size. A. Would you be more likely to pull an orange or a yellow disk? ____________ B. What color disk are you least likely to pull? ...

Unit 4 Review Packet

... 4. If P(A) = 0.65 and P(B) = 0.23 and P(A∩B) = 0.15, find the following: a. P(A U B) = b. P(B|A) = c. Are A and B disjoint events? Why or why not? d. Are A and B independent? Why or why not? ...
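
One way to work through the four parts of this question is with the addition rule, the definition of conditional probability, and the product test for independence. The sketch below uses only the three probabilities given in the excerpt; the variable names are illustrative, and exact fractions are used so the independence check is not affected by floating-point rounding.

```python
from fractions import Fraction

# Values given in the excerpt.
p_a = Fraction(65, 100)
p_b = Fraction(23, 100)
p_a_and_b = Fraction(15, 100)

# a. Addition rule: P(A U B) = P(A) + P(B) - P(A ∩ B).
p_a_or_b = p_a + p_b - p_a_and_b

# b. Conditional probability: P(B | A) = P(A ∩ B) / P(A).
p_b_given_a = p_a_and_b / p_a

# c. Disjoint events cannot occur together, so P(A ∩ B) would have to be 0.
disjoint = p_a_and_b == 0

# d. Independent events satisfy P(A ∩ B) = P(A) * P(B).
independent = p_a_and_b == p_a * p_b

print(p_a_or_b, p_b_given_a, disjoint, independent)
```

Here P(A)·P(B) = 0.1495, which is close to but not equal to 0.15, so under the exact product test the events are not independent.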

Convergence in Mean Square Tidsserieanalys SF2945 Timo Koski

... then we say that (6.5) is a causal linear process. The condition (6.6) guarantees (cf. (5.6)) that the infinite sum in (6.5) converges in mean square. By causality we mean that the current value $X_t$ is influenced only by values of the white noise in the past, i.e., $Z_{t-1}, Z_{t-2}, \ldots$, and its curr ...

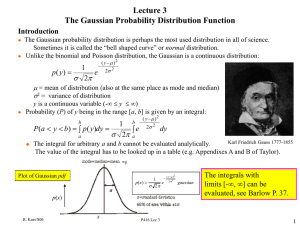

Random Variables. Probability Distributions

... • Similarly, a probability distribution or, briefly, a distribution, shows the probabilities of events in an experiment. • The quantity that we observe in an experiment will be denoted by X and called a random variable (or stochastic variable) because the value it will assume in the next trial depend ...

Probability

... • Suppose the retention rate for a school indicates that the probability a freshman returns for their sophomore year is 0.65. Among 12 randomly selected freshmen, what is the probability that 8 of them return to school next year? Each student either returns or doesn’t. Think of each selected student as a trial ...
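
The setup in this excerpt matches a binomial model. The sketch below assumes that the question asks for exactly 8 returning students and that the 12 students return independently of one another, each with probability 0.65; neither assumption is confirmed by the truncated excerpt, and the variable names are illustrative.

```python
from math import comb

# Values from the excerpt: 12 trials, success probability 0.65, 8 successes.
n = 12
k = 8
p = 0.65

# Binomial probability: P(X = k) = C(n, k) * p^k * (1 - p)^(n - k).
# Assumes "8 of them return" means exactly 8, with independent trials.
prob_exactly_8 = comb(n, k) * p**k * (1 - p) ** (n - k)
print(round(prob_exactly_8, 4))
```

Under these assumptions the probability comes out at roughly 0.24.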

Chapter 13. What Are the Chances?

... Now we will turn our discussion to probability. Above we defined probability as the measure of the likelihood that a given event will occur. In some instances the words chance and probability are interchangeable. For example, one could say the chance of winning or the probability of winning, in whic ...

Probability - Mrs A's Weebly

... Say whether each of these events is ‘certain’, ‘likely’, ‘unlikely’ or ‘impossible’ to occur. a.) You will live in the same house for the rest of your life b.) You will toss a die and roll a ‘six’ c.) The sun will set in the west tonight d.) It will be colder where in February than in August e.) The ...

Typical Test Problems (with solutions)

... This time the difference is significant. In addition, we can now understand the source of the problem: the binomial distribution can be used only when the probabilities of the two outcomes do not depend on the number of previous trials. This condition is not fulfilled in our example. The probability of ...

Infinite monkey theorem

The infinite monkey theorem states that a monkey hitting keys at random on a typewriter keyboard for an infinite amount of time will almost surely type a given text, such as the complete works of William Shakespeare. In this context, "almost surely" is a mathematical term with a precise meaning, and the "monkey" is not an actual monkey, but a metaphor for an abstract device that produces an endless random sequence of letters and symbols. One of the earliest instances of the use of the "monkey metaphor" is that of French mathematician Émile Borel in 1913, but the first instance may be even earlier. The relevance of the theorem is questionable: the probability of a universe full of monkeys typing a complete work such as Shakespeare's Hamlet is so tiny that the chance of it occurring during a period of time hundreds of thousands of orders of magnitude longer than the age of the universe is extremely low (but technically not zero). Real monkeys also don't produce uniformly random output, which means that an actual monkey hitting keys for an infinite amount of time has no statistical certainty of ever producing any given text.

Variants of the theorem include multiple and even infinitely many typists, and the target text varies between an entire library and a single sentence. The history of these statements can be traced back to Aristotle's On Generation and Corruption and Cicero's De natura deorum (On the Nature of the Gods), through Blaise Pascal and Jonathan Swift, and finally to modern statements with their iconic simians and typewriters. In the early 20th century, Émile Borel and Arthur Eddington used the theorem to illustrate the timescales implicit in the foundations of statistical mechanics.
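
The "almost surely" claim can be illustrated numerically. The sketch below is not from the text: it assumes a hypothetical keyboard of 50 equally likely keys and a 6-character target, and it splits the keystrokes into independent blocks of 6. The probability that every block misses the target shrinks towards zero as the number of blocks grows, which is the core of the argument.

```python
import math

# Hypothetical setup (assumptions, not from the text):
# 50 equally likely keys and a target string of 6 characters.
keys = 50
target_length = 6

# Chance that one block of 6 uniformly random keystrokes spells the target.
p_block = (1 / keys) ** target_length


def miss_probability(blocks: int) -> float:
    """Chance that none of `blocks` independent blocks spells the target.

    Computed as exp(blocks * log(1 - p_block)) for numerical stability.
    """
    return math.exp(blocks * math.log1p(-p_block))


for blocks in (10**6, 10**9, 10**12):
    print(blocks, miss_probability(blocks))
```

For a realistic target such as a full play, the per-block probability is so much smaller that the number of blocks needed becomes astronomically large, which matches the timescale caveat above.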
