Week 3

... M. Vlachos, G. Kollios, and D. Gunopulos, “Discovering Similar Multidimensional Trajectories,” Proc. Int’l Conf. Data Eng., pp. 673-684, 2002. (cited by 631) Lei Chen, M. Tamer Özsu, and Vincent Oria. 2005. Robust and fast similarity search for moving object trajectories. In Proc. of the 2005 ACM S ...
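The trajectory papers cited above compare moving-object paths with edit-style distances that tolerate noise. As a minimal sketch, the function below computes EDR (Edit Distance on Real sequences), the measure associated with the Chen, Özsu, and Oria paper, for 2-D trajectories. The function name `edr` and the matching threshold `eps` are illustrative assumptions, and the paper's pruning and indexing techniques are not shown.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

def edr(r: Sequence[Point], s: Sequence[Point], eps: float) -> int:
    """Edit Distance on Real sequences between two 2-D trajectories (sketch).

    Two points match when they differ by at most eps in every coordinate.
    A matched pair costs 0; a substitution, insertion or deletion costs 1.
    """
    n, m = len(r), len(s)
    # dp[i][j] = EDR between the first i points of r and the first j points of s
    dp: List[List[int]] = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        dp[i][0] = i  # delete all i points
    for j in range(m + 1):
        dp[0][j] = j  # insert all j points
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            (rx, ry), (sx, sy) = r[i - 1], s[j - 1]
            match = abs(rx - sx) <= eps and abs(ry - sy) <= eps
            subcost = 0 if match else 1
            dp[i][j] = min(dp[i - 1][j - 1] + subcost,  # match or substitute
                           dp[i - 1][j] + 1,            # skip a point of r
                           dp[i][j - 1] + 1)            # skip a point of s
    return dp[n][m]
```

A single outlier such as `(5, 5)` inserted into an otherwise matching path costs only 1, which is the robustness to noise these measures aim for.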

clustering

... Hierarchical approach: Create a hierarchical decomposition of the set of data (or objects) ...
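A bottom-up (agglomerative) version of the hierarchical approach can be sketched as below. It assumes Euclidean distance and single linkage (the distance between two clusters is that of their closest members), neither of which the snippet specifies, and it returns the sequence of merges, which is the hierarchical decomposition in list form. The function name is hypothetical and the implementation is deliberately naive (roughly cubic time), so it is for illustration only.

```python
import math
from typing import FrozenSet, List, Sequence, Tuple

Point = Tuple[float, ...]
Merge = Tuple[FrozenSet[int], FrozenSet[int], float]

def single_linkage(points: Sequence[Point]) -> List[Merge]:
    """Merge history of agglomerative clustering with single linkage."""
    # start with every point (by index) in its own cluster
    clusters: List[FrozenSet[int]] = [frozenset([i]) for i in range(len(points))]
    merges: List[Merge] = []

    def link(a: FrozenSet[int], b: FrozenSet[int]) -> float:
        # single linkage: distance between the closest pair of members
        return min(math.dist(points[i], points[j]) for i in a for j in b)

    while len(clusters) > 1:
        best = None  # (distance, index_x, index_y) of the closest pair of clusters
        for x in range(len(clusters)):
            for y in range(x + 1, len(clusters)):
                d = link(clusters[x], clusters[y])
                if best is None or d < best[0]:
                    best = (d, x, y)
        d, x, y = best
        a, b = clusters[x], clusters[y]
        merges.append((a, b, d))
        clusters = [c for idx, c in enumerate(clusters) if idx not in (x, y)]
        clusters.append(a | b)  # replace the two clusters by their union
    return merges
```

Cutting the merge list at a chosen distance threshold yields a flat clustering, which corresponds to cutting the dendrogram at that height.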

Classification_Feigelson

... • Density-based clustering. Parametric clustering algorithms: • Mixture models. Nonparametric unsupervised clustering is a very uncertain enterprise: outcomes depend on the algorithm, and there is no likelihood to maximize. Parametric unsupervised clustering rests on a stronger foundation (MLE, BIC). But it assumes th ...
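The snippet lists density-based clustering among the nonparametric methods. DBSCAN is one well-known density-based algorithm, swapped in here for illustration rather than taken from the slides. The sketch below is a minimal O(n²) version under stated assumptions: `eps` is the neighborhood radius, `min_pts` is the minimum neighborhood size (counting the point itself) for a core point, distances are Euclidean, and noise points receive the label -1.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]
NOISE = -1

def dbscan(points: Sequence[Point], eps: float, min_pts: int) -> List[int]:
    """Label each point with a cluster id (0, 1, ...) or NOISE (-1)."""
    n = len(points)
    labels: List[Optional[int]] = [None] * n  # None means "not visited yet"

    def neighbors(i: int) -> List[int]:
        # all points within eps of point i, including i itself
        return [j for j in range(n) if math.dist(points[i], points[j]) <= eps]

    cluster = 0
    for i in range(n):
        if labels[i] is not None:
            continue
        nbrs = neighbors(i)
        if len(nbrs) < min_pts:
            labels[i] = NOISE  # may later be relabeled as a border point
            continue
        labels[i] = cluster  # i is a core point: start a new cluster
        queue = [j for j in nbrs if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: joins, but is not expanded
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_nbrs = neighbors(j)
            if len(j_nbrs) >= min_pts:
                queue.extend(j_nbrs)  # j is also a core point: keep growing
        cluster += 1
    return [lab if lab is not None else NOISE for lab in labels]
```

Unlike a mixture model, this procedure has no likelihood to maximize; its output depends directly on the choice of `eps` and `min_pts`, which illustrates the snippet's point about nonparametric methods.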

Unsupervised Learning: Clustering

... Supervised learning used labeled data pairs (x, y) to learn a function f : X→Y. But, what if we don’t have labels? ...

Unsupervised Learning - Bryn Mawr Computer Science

... Supervised learning used labeled data pairs (x, y) to learn a function f : X→Y. But, what if we don’t have labels? ...
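Without labels, a clustering algorithm has only the inputs x to work with. As one standard illustration (k-means with Lloyd's iterations, not taken from the slides), the sketch below alternates between assigning each point to its nearest center and moving each center to the mean of its assigned points. The number of clusters `k`, the seeded random initialization and the iteration cap are assumptions, and the result can depend on the initial centers.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def kmeans(points: Sequence[Point], k: int, max_iter: int = 100,
           seed: int = 0) -> Tuple[List[Point], List[int]]:
    """Lloyd's k-means: returns (centers, cluster label per point)."""
    rng = random.Random(seed)
    # initialize centers with k distinct input points chosen at random
    centers: List[Point] = [tuple(map(float, p)) for p in rng.sample(list(points), k)]
    labels: List[int] = []
    for _ in range(max_iter):
        # assignment step: nearest center for each point (no labels needed)
        labels = [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points]
        # update step: move each center to the mean of its assigned points
        new_centers: List[Point] = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centers.append(tuple(sum(xs) / len(members) for xs in zip(*members)))
            else:
                new_centers.append(centers[c])  # keep an empty cluster's center
        if new_centers == centers:
            break  # converged: assignments will not change
        centers = new_centers
    return centers, labels
```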

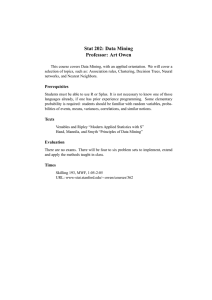

Stat 202: Data Mining Professor: Art Owen

... selection of topics, such as: Association rules, Clustering, Decision Trees, Neural networks, and Nearest Neighbors. ...

Overview of Data Mining Methods (MS PPT)

... majors coming from a particular exclusive school who tend to get high grades ...

Clustering Example

... “goodness” of a cluster. • The definitions of distance functions are usually very different for interval-scaled, boolean, categorical, ordinal and ratio variables. • Weights should be associated with different variables based on applications and data semantics. • It is hard to define “similar enough ...
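To make the point about variable types and weights concrete, here is a hypothetical Gower-style dissimilarity: each variable contributes a per-type distance in [0, 1], and the contributions are averaged with user-chosen weights. The function name, the type labels and the simple equal/unequal rule for boolean and categorical variables are assumptions. Ordinal values are assumed to be encoded as integer ranks, and ratio-scaled variables are treated like interval-scaled ones here.

```python
from typing import Any, Optional, Sequence

def mixed_distance(a: Sequence[Any], b: Sequence[Any], kinds: Sequence[str],
                   weights: Sequence[float],
                   ranges: Sequence[Optional[float]]) -> float:
    """Weighted average of per-variable dissimilarities, each in [0, 1]."""
    total = 0.0
    weight_sum = 0.0
    for k, kind in enumerate(kinds):
        if kind in ("interval", "ratio", "ordinal"):
            # numeric difference scaled by the variable's observed range;
            # ordinal values are assumed to be integer ranks
            span = ranges[k]
            d = abs(a[k] - b[k]) / span if span else 0.0
        elif kind in ("boolean", "categorical"):
            d = 0.0 if a[k] == b[k] else 1.0  # simple matching
        else:
            raise ValueError(f"unknown variable kind: {kind!r}")
        total += weights[k] * d
        weight_sum += weights[k]
    return total / weight_sum
```

Setting a variable's weight to 0 removes it from the comparison entirely, which is one way to encode the "application and data semantics" the slide mentions.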

... Effect of Copper on Carbon Diffusivity in PremoMet® Alloy. Problem Statement: Determine the mechanism behind PremoMet® alloys’ mechanical properties. ...

Clustering Example

... • Find representative objects, called medoids, in clusters • PAM (Partitioning Around Medoids, 1987) – starts from an initial set of medoids and iteratively replaces one of the medoids by one of the non-medoids if it improves the total distance of the resulting clustering – PAM works effectively for ...
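The swap step described for PAM can be sketched as follows. This is a simplified, unoptimized version under stated assumptions: the first `k` points serve as the initial medoids (PAM proper uses a greedy BUILD phase), distances are Euclidean, and each iteration applies the single medoid/non-medoid swap that most reduces the total distance, stopping when no swap helps. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def total_distance(points: Sequence[Point], medoids: Sequence[int]) -> float:
    # each point is charged the distance to its nearest medoid
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def pam(points: Sequence[Point], k: int) -> Tuple[List[int], float]:
    """Return (sorted medoid indices, total distance) after the swap phase."""
    medoids = list(range(k))  # assumption: naive initial medoids
    cost = total_distance(points, medoids)
    while True:
        best = None  # (cost, medoids) of the best improving swap found
        for pos in range(k):
            for o in range(len(points)):
                if o in medoids:
                    continue  # only non-medoids can be swapped in
                candidate = medoids[:pos] + [o] + medoids[pos + 1:]
                c = total_distance(points, candidate)
                if c < cost and (best is None or c < best[0]):
                    best = (c, candidate)
        if best is None:
            break  # no swap improves the total distance
        cost, medoids = best
    return sorted(medoids), cost
```

Because medoids are actual data points and the cost uses plain (not squared) distances, the result is less sensitive to outliers than a centroid-based method.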

What is a cluster

... Cluster point or centroid = exemplar. Variance = mushiness of the concept of the centroid. Within-group = intra-cluster; between-groups = inter-cluster. The issue here is "similarity". How do we measure similarity? This is not easy to answer. Secondly, if there are "hidden" patterns, does the clustering ...
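The within-group and between-group ideas can be quantified with sums of squared distances. The sketch below (function names are our own) computes the within-cluster scatter of points around their centroids, the between-cluster scatter of the centroids around the overall mean (weighted by cluster size), and the total scatter. For squared Euclidean distance the total always equals within plus between, so a good clustering makes the within part small relative to the total.

```python
from typing import Dict, Hashable, List, Sequence, Tuple

Point = Tuple[float, ...]

def centroid(pts: Sequence[Point]) -> Point:
    # coordinate-wise mean of the points
    return tuple(sum(coord) / len(pts) for coord in zip(*pts))

def sq_dist(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))

def scatter(points: Sequence[Point],
            labels: Sequence[Hashable]) -> Tuple[float, float, float]:
    """Return (within, between, total) sums of squares."""
    groups: Dict[Hashable, List[Point]] = {}
    for p, lab in zip(points, labels):
        groups.setdefault(lab, []).append(p)
    grand = centroid(points)
    within = sum(sq_dist(p, centroid(g)) for g in groups.values() for p in g)
    between = sum(len(g) * sq_dist(centroid(g), grand) for g in groups.values())
    total = sum(sq_dist(p, grand) for p in points)
    return within, between, total
```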

Clustering on Wavelet and Meta

... Special characteristics (1) It is a high-dimensional basis for some high-dimensional data. For 2 dimensions, if the wavelet set is given by $\psi_{j,k}(t)$ for indices $j, k = 1, 2, \ldots$, a linear expansion would be $f(t) = \sum_{k} \sum_{j} a_{j,k}\, \psi_{j,k}(t)$ ...
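As a concrete instance of such a linear expansion, the sketch below uses the Haar wavelet, the simplest orthonormal choice (an assumption; the snippet does not name a wavelet family). `haar_forward` computes the expansion coefficients of a 1-D signal whose length is a power of two, and `haar_inverse` rebuilds the signal as a linear combination of the basis functions, so the round trip recovers the input. Both function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

SQRT2 = math.sqrt(2.0)

def haar_forward(signal: Sequence[float]) -> Tuple[float, List[List[float]]]:
    """Orthonormal Haar decomposition; len(signal) must be a power of two."""
    n = len(signal)
    if n == 0 or n & (n - 1):
        raise ValueError("signal length must be a power of two")
    approx = [float(x) for x in signal]
    details: List[List[float]] = []  # finest level first
    while len(approx) > 1:
        pairs = list(zip(approx[0::2], approx[1::2]))
        details.append([(x - y) / SQRT2 for x, y in pairs])  # wavelet coefficients
        approx = [(x + y) / SQRT2 for x, y in pairs]          # coarser averages
    return approx[0], details

def haar_inverse(a0: float, details: List[List[float]]) -> List[float]:
    """Rebuild the signal as a linear combination of Haar basis functions."""
    approx = [a0]
    for d in reversed(details):  # coarsest level first
        nxt: List[float] = []
        for s, w in zip(approx, d):
            nxt.extend([(s + w) / SQRT2, (s - w) / SQRT2])
        approx = nxt
    return approx
```

Because the basis is orthonormal, the sum of squared coefficients equals the signal's energy; clustering on a few leading coefficients instead of the raw samples is one way such a basis can reduce dimensionality.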

Data Mining - Cluster Analysis

... Clustering also helps in identifying areas of similar land use in an earth observation database. It also helps in identifying groups of houses in a city according to house type, value, and geographic location. ...

Basic clustering concepts and clustering using Genetic Algorithm

... • Some engineering sciences, such as pattern recognition and artificial intelligence, have been using the concepts of cluster analysis. Typical examples to which clustering has been applied include handwritten characters, samples of speech, fingerprints, and pictures. • In the life sciences (biology, bot ...

Ontology Engineering and Feature Construction for Predicting

... • These two papers use a bag-of-words (BoW) approach to describe instances (web pages), so each instance is represented as a fixed-length array. • The approach in [14] does not allow instances to belong to several concepts. • The incremental nature of the unsupervised learning approach us ...
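A bag-of-words representation can be sketched as follows, assuming whitespace tokenization, lowercasing and raw term counts (the cited papers may use different preprocessing or weighting). The shared vocabulary fixes the array length, and each document becomes a vector of word counts of that length. The function name is hypothetical.

```python
from collections import Counter
from typing import List, Sequence, Tuple

def bag_of_words(docs: Sequence[str]) -> Tuple[List[str], List[List[int]]]:
    """Return (vocabulary, one count vector per document)."""
    tokenized = [doc.lower().split() for doc in docs]
    vocab = sorted({w for toks in tokenized for w in toks})
    index = {w: i for i, w in enumerate(vocab)}  # word -> array position
    vectors: List[List[int]] = []
    for toks in tokenized:
        vec = [0] * len(vocab)  # same length for every document
        for w, count in Counter(toks).items():
            vec[index[w]] = count
        vectors.append(vec)
    return vocab, vectors
```

Word order is discarded, which is what lets every instance fit into one fixed-length array that standard distance-based clustering methods can consume.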

Fabio D'Andrea LMD – 4th floor “in the greenhouses” 01 44 32 22 31

... How do we estimate how good the clustering is, or rather how well the data can be described as a group of clusters? What is the optimal number of clusters K, or, where should a dendrogram be cut? Are the clusters statistically significant? ...
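One common, though by no means definitive, answer to the first two questions is the silhouette coefficient (Rousseeuw, 1987), which the snippet does not mention. For each point it compares the mean distance a to the other members of its own cluster with the mean distance b to the nearest other cluster, s = (b - a) / max(a, b). Averaging s over all points gives a score in [-1, 1], and one heuristic for choosing K is to pick the value with the highest average. The sketch assumes Euclidean distance and scores singleton clusters as 0; it says nothing about statistical significance.

```python
import math
from typing import Dict, Hashable, List, Sequence, Tuple

Point = Tuple[float, ...]

def silhouette(points: Sequence[Point], labels: Sequence[Hashable]) -> float:
    """Mean silhouette coefficient; needs at least two clusters."""
    if len(set(labels)) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores: List[float] = []
    for i, p in enumerate(points):
        own: List[float] = []
        other: Dict[Hashable, List[float]] = {}
        for j, q in enumerate(points):
            if j == i:
                continue
            d = math.dist(p, q)
            if labels[j] == labels[i]:
                own.append(d)
            else:
                other.setdefault(labels[j], []).append(d)
        if not own:
            scores.append(0.0)  # common convention for singleton clusters
            continue
        a = sum(own) / len(own)                              # cohesion
        b = min(sum(ds) / len(ds) for ds in other.values())  # separation
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)
```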

Cluster analysis

Cluster analysis or clustering is the task of grouping a set of objects in such a way that objects in the same group (called a cluster) are more similar (in some sense or another) to each other than to those in other groups (clusters). It is a main task of exploratory data mining, and a common technique for statistical data analysis, used in many fields, including machine learning, pattern recognition, image analysis, information retrieval, and bioinformatics.

Cluster analysis itself is not one specific algorithm, but the general task to be solved. It can be achieved by various algorithms that differ significantly in their notion of what constitutes a cluster and how to efficiently find them. Popular notions of clusters include groups with small distances among the cluster members, dense areas of the data space, intervals or particular statistical distributions. Clustering can therefore be formulated as a multi-objective optimization problem. The appropriate clustering algorithm and parameter settings (including values such as the distance function to use, a density threshold or the number of expected clusters) depend on the individual data set and intended use of the results. Cluster analysis as such is not an automatic task, but an iterative process of knowledge discovery or interactive multi-objective optimization that involves trial and failure. It will often be necessary to modify data preprocessing and model parameters until the result achieves the desired properties.

Besides the term clustering, there are a number of terms with similar meanings, including automatic classification, numerical taxonomy, botryology (from Greek βότρυς, “grape”) and typological analysis. The subtle differences are often in the usage of the results: while in data mining the resulting groups are the matter of interest, in automatic classification the resulting discriminative power is of interest. This often leads to misunderstandings between researchers coming from the fields of data mining and machine learning, since they use the same terms and often the same algorithms, but have different goals.

Cluster analysis originated in anthropology with Driver and Kroeber in 1932, was introduced to psychology by Zubin in 1938 and Robert Tryon in 1939, and was famously used by Cattell beginning in 1943 for trait theory classification in personality psychology.