Part II. Testing the assumptions for ANOVA

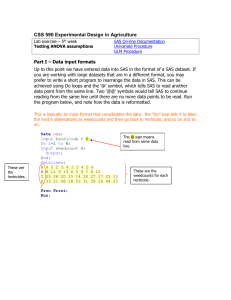

... Up to this point we have entered data into SAS in the format of a SAS dataset. If you are working with large datasets that are in a different format, you may prefer to write a short program to rearrange the data in SAS. This can be achieved using Do loops and the ‘@’ symbol, which tells SAS to read ...

Sample Size and Power in Clinical Trials

... tests using 0.80 (80%) as a standard for adequacy. This convention implies a four‐to‐one trade‐off between β‐risk and α‐risk. (β is the probability of a Type II error and α is the probability of a Type I error; β = 1 ‐ 0.8 = 0.2 and α = 0.05 are the conventional values.) However, there will be time ...

Comparing Means in Two Populations

... • When reporting the results of a nonparametric test, it’s usual to only report the p-value. • In the above report, there are two p-values, one for the z-test and one using a chi-square value. • The p-values will rarely be different. • For large samples report the p-value from the normal ...

Anne_Ryan_Short_Course 2-4

... Researchers are only interested in whether the True Value is above the hypothesized value. Example— Administrators of a health care center want to know if the mean time spent by patients in the waiting room is greater than 20 ...

Two-sample hypothesis testing, II

... • Sometimes, either theoretically or from the data, it may be clear that this is not a good assumption. • Note: the equal-variance t-test is actually pretty robust to reasonable differences in the variances if the sample sizes, n1 and n2, are (nearly) equal. – When in doubt about the variances of y ...

BIMM18 * Lab 2

... Analog), and culture day. Decide if t-tests would be suitable for testing the difference between treatments on days 2, 3 and 4. 4. Irrespective of the answer to task 3 (for practice), for each of days 2, 3 and 4, perform t-tests comparing the cell counts between controls and SAL, controls and Analog, and SAL and Analog. ...

Significance Tests

... significantly. This assumption can now be tested. The standard deviations were 0.28 and 0.31 (each obtained from five measurements on a specimen of a particular plant). • Calculating F so that it is greater than 1, F = 0.31²/0.28² = 1.23 • In this case, however, we have no reason to expect in advanc ...
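
The variance-ratio arithmetic in the snippet above can be checked with a short script. This is only a minimal sketch: the function name `variance_ratio` and the variable names are illustrative assumptions, and the only figures taken from the snippet are the two standard deviations and the five measurements per specimen.

```python
# Worked check of the F (variance ratio) calculation quoted in the snippet.
# Names below are illustrative; only the numbers come from the snippet.

def variance_ratio(s_a, s_b):
    """Return F = larger variance / smaller variance, so that F >= 1."""
    var_a, var_b = s_a ** 2, s_b ** 2
    return max(var_a, var_b) / min(var_a, var_b)

s_first = 0.28   # standard deviation of the first specimen
s_second = 0.31  # standard deviation of the second specimen
n_each = 5       # five measurements per specimen

f_stat = variance_ratio(s_first, s_second)
df_num = n_each - 1  # degrees of freedom for the numerator variance
df_den = n_each - 1  # degrees of freedom for the denominator variance

print(f"F = {f_stat:.2f} with ({df_num}, {df_den}) degrees of freedom")
```

The ratio rounds to 1.23, matching the snippet. Whether that is significant still requires comparison with a tabulated critical value of F for (4, 4) degrees of freedom, which is not reproduced here.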

ANOVAchapter

... null hypothesis, but what I really care about are the two contrasts that I discussed above. And of these two contrasts, the one least likely to be significant is the contrast between SIT and PE. What I really want to know is what power I have to find a significant difference for that contrast. We ca ...

Mod17-A Statistics for Water Science

... The probability of detecting a significant difference is influenced by: the magnitude of the effect (a big difference is more likely to be significant) ...

Theories - the Department of Psychology at Illinois State University

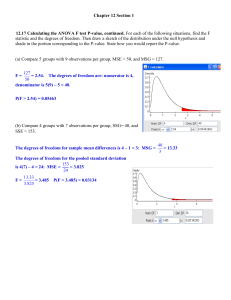

... Reporting your results: the observed differences; the kind of test; the computed F-ratio; the degrees of freedom for the test; the “p-value” of the test; any post-hoc or planned comparison results. “The mean score of Group A was 12, Group B was 25, and Group C was 27. A 1-way ANOVA was conducted and the resu ...

Lecture Notes #2: Introduction to Analysis of Variance 2-1

... Obviously, if we don’t know how much sleep deprivation a particular subject had, our best prediction of his or her reaction time would be the grand mean. This is ...

Balanced Design Analysis of Variance

... there are no numerical restrictions on these coefficients. They do not even have to sum to zero; however, this is recommended. If the coefficients do sum to zero, the comparison is called a “contrast.” The significance tests anticipate that only one or two of these comparisons are to be run. If you ...

Running Head: Normality and Outliers in ANOVA and MANOVA

... results to make inferences regarding the general population. To ensure the components of the test are compatible with each other, there are assumptions that must be met within each multivariate analysis (Stevens, 2009). Once you have collected your data and before moving forward with statistical ana ...

Analysis of variance

Analysis of variance (ANOVA) is a collection of statistical models used to analyze the differences among group means and their associated procedures (such as "variation" among and between groups), developed by statistician and evolutionary biologist Ronald Fisher. In the ANOVA setting, the observed variance in a particular variable is partitioned into components attributable to different sources of variation. In its simplest form, ANOVA provides a statistical test of whether or not the means of several groups are equal, and therefore generalizes the t-test to more than two groups. As doing multiple two-sample t-tests would result in an increased chance of committing a statistical type I error, ANOVAs are useful for comparing (testing) three or more means (groups or variables) for statistical significance.
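
The partition of variance described above can be sketched for the simplest case, a one-way layout. The function `one_way_anova` and the three small groups below are made up purely for illustration; they do not come from any of the listed documents. The sketch assumes at least two groups and some within-group variation.

```python
from statistics import mean

def one_way_anova(groups):
    """Partition total variation into between- and within-group parts.

    Returns (ss_between, ss_within, f_stat) for a list of groups,
    where each group is a list of numeric observations.
    """
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)

    # F is the ratio of the two mean squares.
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return ss_between, ss_within, f_stat

# Hypothetical data: three groups of three observations each.
groups = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]
ss_between, ss_within, f_stat = one_way_anova(groups)
print(f"SS between = {ss_between:.2f}, SS within = {ss_within:.2f}, F = {f_stat:.2f}")
```

The two sums of squares add up to the total sum of squares around the grand mean, which is the partition the paragraph refers to. A large F suggests that the group means differ by more than the within-group scatter would explain.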

![[MSM04]](http://s1.studyres.com/store/data/008529088_2-3ce1cce4ea32dddeeb96e74b738216a4-300x300.png)