
Shortest Paths in Directed Planar Graphs with Negative Lengths: a Linear-Space $O(n \log^2 n)$-Time Algorithm

PHILIP N. KLEIN and SHAY MOZES

Brown University

and

OREN WEIMANN

Massachusetts Institute of Technology

We give an $O(n \log^2 n)$-time, linear-space algorithm that, given a directed planar graph with positive and negative arc-lengths, and given a node s, finds the distances from s to all nodes.

Categories and Subject Descriptors: G.2.2 [Graph Theory]: Graph algorithms; Path and circuit problems; F.2.2 [Analysis

of Algorithms and Problem Complexity]: Nonnumerical Algorithms and Problems—Computations on discrete structures

General Terms: Theory, Algorithms

Additional Key Words and Phrases: planar graphs, Monge, shortest paths, replacement paths

1. INTRODUCTION

The problem of directed shortest paths with negative lengths is as follows: Given a directed graph G with positive and negative arc-lengths containing no negative cycles,¹ and given a source node s, find the distances from s to all the nodes in the graph. This is a classical problem in combinatorial optimization. For general graphs, the Bellman-Ford algorithm solves the problem in $O(mn)$ time, where m is the number of arcs and n is the number of nodes. For integer lengths whose absolute values are bounded by N, the algorithm of Gabow and Tarjan [1989] takes $O(\sqrt{n}\, m \log(nN))$ time. For integer lengths exceeding −N, the algorithm of Goldberg [1995] takes $O(\sqrt{n}\, m \log N)$ time. For non-negative lengths, the problem is easier and can be solved using Dijkstra’s algorithm in $O((n + m) \log n)$ time if elementary data structures are used [Johnson 1977], and in $O(n \log n + m)$ time when implemented with Fibonacci heaps [Fredman and Tarjan 1987].

For planar graphs, there has been a series of results yielding progressively better bounds. The first algorithm that exploited planarity was due to Lipton, Rose, and Tarjan [1979], who gave an $O(n^{3/2})$ algorithm. Henzinger et al. [1997] gave an $O(n^{4/3} \log^{2/3} D)$ algorithm where D is the sum of the absolute values of the lengths. Fakcharoenphol and Rao [2006] gave an algorithm requiring $O(n \log^3 n)$ time and $O(n \log n)$ space.

Our result is as follows:

Theorem 1.1. There is an $O(n \log^2 n)$-time, linear-space algorithm to find shortest paths in planar directed graphs with negative lengths.

Applications.

In addition to being a fundamental problem in combinatorial optimization, the problem of shortest paths in planar graphs with negative lengths arises in solving other problems. Miller and Naor [1995] show that, by using planar duality, the following problem can be reduced to shortest paths in a planar directed graph:

¹ Algorithms for this problem can also be used to detect negative cycles.

Author’s address: P.N. Klein, S. Mozes, Department of Computer Science, Brown University, Providence RI 02912-1910,

USA. {klein,shay}@cs.brown.edu. O. Weimann, Massachusetts Institute of Technology, Cambridge, MA 02139, USA.

[email protected].

Klein and Mozes supported by NSF Grant CCF-0635089. Work done while Klein was visiting MIT.

Permission to make digital/hard copy of all or part of this material without fee for personal or classroom use provided that

the copies are not made or distributed for profit or commercial advantage, the ACM copyright/server notice, the title of the

publication, and its date appear, and notice is given that copying is by permission of the ACM, Inc. To copy otherwise, to

republish, to post on servers, or to redistribute to lists requires prior specific permission and/or a fee.



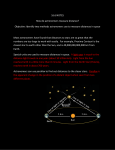

Fig. 1. A graph G and a decomposition using a Jordan curve into an external subgraph $G_0$ (in gray) and an internal subgraph $G_1$ (in white). Only boundary nodes are shown. r and v are boundary nodes. The double-lined blue path is an r-to-v shortest path in $G_1$. The dashed red path is an r-to-v shortest path in $G_0$.

Fig. 2. The solid blue path is an r-to-v shortest path in G. It can be decomposed into four subpaths. The subpaths $P_1$ and $P_3$ ($P_2$ and $P_4$) are shortest paths in $G_1$ ($G_0$) between boundary nodes. The r-to-v shortest paths in $G_0$ and $G_1$ are shown in gray in the background.

Feasible circulation: Given a directed planar graph with upper and lower arc-capacities, find

an assignment of flow to the arcs so that each arc’s flow is between the arc’s lower and upper

capacities, and, for each node, the flow into the node equals the flow out.

They further show that the following problem can in turn be reduced to feasible circulation:

Feasible flow: Given a directed planar graph with arc-capacities and node-demands, find an

assignment of flow that respects the arc-capacities and such that, for each node, the flow into the

node minus the flow out equals the node’s demand.

For integer-valued capacities and demands, the solutions obtained to the above problems are integer-valued.

Consequently, as Miller and Naor point out, the problem of finding a perfect matching in a bipartite planar

graph can be solved using an algorithm for feasible flow.

Our new shortest-path algorithm thus gives $O(n \log^2 n)$ algorithms for bipartite planar perfect matching, feasible flow, and feasible circulation.

Several techniques for computer vision, including image segmentation algorithms by Cox, Rao, and

Zhong [1996] and by Jermyn and Ishikawa [2001; 2001], and a stereo matching technique due to Veksler [2002], involve finding negative-length cycles in graphs that are essentially planar. Thus our algorithm

can be used to implement these techniques.

Summary of the Algorithm.

Like the other planarity-exploiting algorithms for this problem, our algorithm uses planar separators [Lipton and Tarjan 1979; Miller 1986]. Given an n-node planar embedded directed graph G with arc-lengths, and given a source node s, the algorithm first finds a Jordan curve C that passes through $O(\sqrt{n})$ nodes (and no arcs) such that between n/3 and 2n/3 nodes are enclosed by C.

A node through which C passes is called a boundary node. Cutting the planar embedding along C and

duplicating boundary nodes yields two subgraphs G0 and G1 such that, for i = 0, 1, in Gi the boundary

nodes lie on the boundary of a single face Fi . Refer to Fig. 1 for an illustration. Let r be an arbitrary

boundary node.

Our algorithm consists of five stages. The first four stages alternate between working with negative lengths

and working with only positive lengths.

Recursive call. The first stage recursively computes the distances from r within $G_i$ for i = 0, 1. The remaining stages use these distances in the computation of the distances in G.

Intra-part boundary distances. For each graph $G_i$ we use a method due to Klein [2005] to compute all distances in $G_i$ between boundary nodes. This takes $O(n \log n)$ time.

Single-source inter-part boundary distances. A shortest path in G passes back and forth between $G_0$ and $G_1$. Refer to Fig. 1 and Fig. 2 for an illustration. We use a variant of Bellman-Ford to compute the distances in G from r to all the boundary nodes. Alternating iterations use the all-boundary-distances in $G_0$ and $G_1$. Because the distances have a Monge property [Monge 1781] (discussed later), each iteration can be implemented by two executions of an algorithm due to Klawe and Kleitman [1990] for finding row-minima in a special kind of matrix. Each iteration is performed in $O(\sqrt{n}\,\alpha(n))$ time, where $\alpha(n)$ is the inverse Ackermann function. The number of iterations is $O(\sqrt{n})$, so the overall time for this stage is $O(n\,\alpha(n))$.

Single-source inter-part distances. The distances computed in the previous stages are used, together with a Dijkstra computation within a modified version of each $G_i$, to compute the distances in G from r to all the nodes. Dijkstra’s algorithm requires the lengths in $G_i$ to be non-negative, but we can use the recursively computed distances to transform the lengths in $G_i$ into non-negative lengths without changing the shortest paths. This stage takes $O(n \log n)$ time.²

Rerooting single-source distances. The algorithm has obtained distances in G from r. In the last stage these distances are used to transform the lengths in G into nonnegative lengths, and Dijkstra’s algorithm is run again, this time to compute distances from s. This stage also requires $O(n \log n)$ time.²

Relation to Previous Work.

All known planarity-exploiting algorithms for this problem, starting with that of Lipton, Rose, and Tarjan [1979], use planar separators, and use Bellman-Ford on a dense graph whose nodes are those comprising

a planar separator. The algorithm of Henzinger et al. [1997] achieved an improvement by using a multi-part

decomposition based on planar separators. Fakcharoenphol and Rao’s algorithm [2006] introduced several

innovations. Among these is the exploitation of a Monge property of the boundary-to-boundary distances

to enable fast implementation of an iteration of Bellman-Ford. We exploit the Monge property to the same

end, although we do so using a different technique. Another key ingredient of Fakcharoenphol and Rao is

an ingenious data structure to implement a version of Dijkstra’s algorithm, where each node is processed

O(log n) times (rather than once, as in Dijkstra’s algorithm) and many arcs can be relaxed at once.

A central concept of the algorithm of Fakcharoenphol and Rao is the dense distance graph. This consists of a recursive decomposition of a graph using separators, together with a table for each subgraph arising in the decomposition giving the distances between all boundary nodes for that subgraph. This structure has size $\Omega(n \log n)$ for an n-node graph. The first phase of their algorithm computes this structure in $O(n \log^3 n)$ time. The second phase uses the structure to compute distances from a node to all other nodes, also in $O(n \log^3 n)$ time.

The structure of our algorithm is different—it is a simple divide-and-conquer, in which the recursive

problem is the same as the original problem, single-source shortest-path distances. In addition, we require

no data structures aside from the dynamic-tree data structure used in [Klein 2005] and a basic priority queue

for implementing Dijkstra’s algorithm.

The Replacement-Paths Problem.

We note that finding row-minima based on a Monge property can be used for other problems in planar graphs.

Consider the replacement-paths problem: we are given a directed graph with non-negative arc lengths and

two nodes s and t. We are required to compute, for every arc e in the shortest path between s and t, the

length of an s-to-t shortest path that avoids e.

Emek et al. [2008] give an $O(n \log^3 n)$-time algorithm for solving the replacement-paths problem in a directed planar graph. Procedure District in Section 4 of their paper solves a problem that can be viewed as finding the row-minima of a certain matrix which has a Monge property. By further exploiting the Monge property, we obtain an $O(n \log^2 n)$-time algorithm. Details appear in Section 7.

Theorem 1.2. There is an $O(n \log^2 n)$-time algorithm for solving the replacement-paths problem in a directed planar graph.

² This stage can actually be implemented in $O(n)$ time using the algorithm of Henzinger et al. [1997]. This, however, does not change the overall running time of the algorithm.

2. PRELIMINARIES

2.1 Embedded Planar Graphs

A planar embedding of a graph assigns each node to a distinct point on the plane, and assigns each edge to a

simple arc between the points corresponding to its endpoints, with the property that no arc-arc or arc-point

intersections occur except for those corresponding to edge-node incidence in the graph. A graph is planar

if it has a planar embedding. Consider the set of points on the plane that are not assigned to any node or

edge; each connected component of this set is a face of the embedding.

2.2 Jordan Separators for Embedded Planar Graphs

Miller [1986] gave a linear-time algorithm that, given a triangulated two-connected n-node planar embedded graph, finds a simple cycle separator consisting of at most $2\sqrt{2}\sqrt{n}$ nodes, such that at most 2n/3 nodes are strictly enclosed by the cycle, and at most 2n/3 nodes are not enclosed.

For an n-node planar embedded graph G that is not necessarily triangulated or two-connected, we define a Jordan separator to be a Jordan curve C that intersects the embedding of the graph only at nodes such that at most 2n/3 nodes are strictly enclosed by the curve and at most 2n/3 nodes are not enclosed. The nodes intersected by the curve are called boundary nodes and denoted $V_c$. To find a Jordan separator with at most $2\sqrt{2}\sqrt{n}$ boundary nodes, add artificial edges with sufficiently large lengths to triangulate the graph and make it two-connected without changing the distances in the graph. Now apply Miller’s algorithm.

The internal part of G with respect to C is the embedded subgraph consisting of the nodes and edges

enclosed by C, i.e. including the nodes intersected by C. Similarly, the external part of G with respect to C

is the subgraph consisting of the nodes and edges not strictly enclosed by C, i.e. again including the nodes

intersected by C.

Let G1 (G0 ) denote the internal (external) part of G with respect to C. Since C is a Jordan curve, the set

of points of the plane strictly exterior to C forms a connected region. Furthermore, it contains no point or arc

corresponding to a node or edge of G1 . Therefore, the region remains connected when these points and arcs

are removed, so the region is a subset of some face of G1 . Since every boundary node is intersected by C,

it follows that all boundary nodes lie on the boundary of a single face of G1 . Similarly, in G0 , all boundary

nodes lie on the boundary of a single face.

2.3 Monotonicity, Monge and Matrix Searching

A matrix $M = (M_{ij})$ is totally monotone if for every $i, i', j, j'$ such that $i < i'$, $j < j'$ and $M_{ij} \le M_{ij'}$, we also have $M_{i'j} \le M_{i'j'}$. Totally monotone matrices were introduced by Aggarwal et al. [1987], who showed that a wide variety of problems in computational geometry could be reduced to the problem of finding row-maxima or row-minima in totally monotone matrices. Aggarwal et al. also give an algorithm, nicknamed SMAWK, that, given a totally monotone $n \times m$ matrix M, finds all row-maxima of M in just $O(n + m)$ time. It is easy to see that by negating each element of M and reversing the order of its columns, SMAWK can be used to find the row-minima of M as well.

A matrix $M = (M_{ij})$ is convex Monge (concave Monge) if for every $i, i', j, j'$ such that $i < i'$, $j < j'$, we have $M_{ij} + M_{i'j'} \ge M_{ij'} + M_{i'j}$ ($M_{ij} + M_{i'j'} \le M_{ij'} + M_{i'j}$). It is immediate that if M is convex Monge then it is totally monotone. It is also easy to see that the matrix obtained by transposing M is also totally monotone. Thus SMAWK can also be used to find the column minima and maxima of a convex Monge matrix.
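The two definitions above can be checked mechanically on small matrices. The following is a minimal sketch, not part of the paper: the function names and the example matrix $M_{ij} = i \cdot j$ are illustrative assumptions. It tests the convex Monge inequality on adjacent rows and columns (which suffices, because the adjacent inequalities sum to the general one) and tests total monotonicity by brute force.

```python
from itertools import combinations


def is_convex_monge(M):
    """Check M[i][j] + M[i+1][j+1] >= M[i][j+1] + M[i+1][j] for adjacent pairs.

    Adjacent pairs suffice: summing these inequalities over a block of
    cells yields the inequality for any i < i', j < j'.
    """
    rows, cols = len(M), len(M[0])
    return all(
        M[i][j] + M[i + 1][j + 1] >= M[i][j + 1] + M[i + 1][j]
        for i in range(rows - 1)
        for j in range(cols - 1)
    )


def is_totally_monotone(M):
    """Brute-force check of the definition: for i < i', j < j',
    M[i][j] <= M[i][j'] must imply M[i'][j] <= M[i'][j']."""
    rows, cols = len(M), len(M[0])
    for i, i2 in combinations(range(rows), 2):
        for j, j2 in combinations(range(cols), 2):
            if M[i][j] <= M[i][j2] and not (M[i2][j] <= M[i2][j2]):
                return False
    return True


# Illustrative example (an assumption, not from the paper): M[i][j] = i * j.
# It is convex Monge because
# M[i][j] + M[i'][j'] - M[i][j'] - M[i'][j] = (i' - i) * (j' - j) >= 0.
convex = [[i * j for j in range(5)] for i in range(4)]
# Negating every entry flips the inequality, giving a concave Monge matrix.
concave = [[-x for x in row] for row in convex]
transposed = [list(col) for col in zip(*convex)]

print(is_convex_monge(convex), is_totally_monotone(convex))
print(is_totally_monotone(transposed))
print(is_convex_monge(concave), is_totally_monotone(concave))
```

The brute-force monotonicity check takes time quadratic in both dimensions, so it is only meant for sanity checks on small inputs.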

A falling staircase matrix is defined in [Aggarwal and Klawe 1990] and [Klawe and Kleitman 1990] to be a lower triangular fragment of a totally monotone matrix. More precisely, $(M, \{f(i)\}_{0 \le i \le n+1})$ is an $n \times m$ falling staircase matrix if

(1) for $i = 0, \ldots, n+1$, $f(i)$ is an integer with $0 = f(0) < f(1) \le f(2) \le \cdots \le f(n) < f(n+1) = m+1$.

(2) $M_{ij}$ is a real number if and only if $1 \le i \le n$ and $1 \le j \le f(i)$. Otherwise, $M_{ij}$ is blank.

(3) (total monotonicity) for $i < k$ and $j < l \le f(i)$, and $M_{ij} \le M_{il}$, we have $M_{kj} \le M_{kl}$.

Finding the row-maxima in a falling staircase matrix can be easily done using SMAWK in O(n + m)

time after replacing the blanks with sufficiently small numbers so that the resulting matrix is totally monotone. However, this trick does not work for finding the row-minima. Aggarwal and Klawe [1990] give an

O(m log log n) time algorithm for finding row-minima in falling staircase matrices of size n × m. Klawe and

Kleitman give in [Klawe and Kleitman 1990] a more complicated algorithm that computes the row-minima

of an n × m falling staircase matrix in O(mα(n) + n) time, where α(n) is the inverse Ackermann function.

If M satisfies the above conditions with total monotonicity replaced by the convex Monge property then

M and the matrix obtained by transposing M and reversing the order of rows and of columns are falling

staircase. In this case both algorithms can be used to find the column-minima as well as the row-minima.

2.4 Price Functions and Reduced Lengths

For a directed graph G with arc-lengths $\ell(\cdot)$, a price function is a function $\phi$ from the nodes of G to the reals. For an arc uv, the reduced length with respect to $\phi$ is $\ell_\phi(uv) = \ell(uv) + \phi(u) - \phi(v)$. A feasible price function is a price function that induces nonnegative reduced lengths on all arcs of G.

Feasible price functions are useful in transforming a shortest-path problem involving positive and negative lengths into one involving only nonnegative lengths, which can then be solved using Dijkstra’s algorithm. For any nodes s and t, for any s-to-t path P, $\ell_\phi(P) = \ell(P) + \phi(s) - \phi(t)$. This shows that an s-to-t path is shortest with respect to $\ell_\phi(\cdot)$ iff it is shortest with respect to $\ell(\cdot)$. Moreover, the s-to-t distance with respect to the original lengths $\ell(\cdot)$ can be recovered by adding $\phi(t) - \phi(s)$ to the s-to-t distance with respect to $\ell_\phi(\cdot)$.

Suppose φ is a feasible price function. Running Dijkstra’s algorithm with the reduced lengths and modifying the distances thereby computed to obtain distances with respect to the original lengths will be called

running Dijkstra’s algorithm with φ.

An example of a feasible price function comes from single-source distances. Suppose that, for some node r of G, for every node v of G, $\phi(v)$ is the r-to-v distance in G with respect to $\ell(\cdot)$. Then for every arc uv, $\phi(v) \le \phi(u) + \ell(uv)$, so $\ell_\phi(uv) \ge 0$. Here we assume, without loss of generality, that all distances are finite (i.e., that all nodes are reachable from r) since we can always add arcs with sufficiently large lengths to make all nodes reachable without affecting the shortest paths in the graph.
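As an illustration of running Dijkstra’s algorithm with a price function, here is a minimal sketch. It assumes a graph given as a list of (u, v, length) triples, obtains the from-r distances with plain Bellman-Ford (a stand-in; the paper obtains them differently), and uses them as the price function. The function names and the small example graph are assumptions made for this sketch.

```python
import heapq


def bellman_ford(nodes, arcs, r):
    """Distances from r; arcs is a list of (u, v, length). Assumes no negative cycle."""
    dist = {v: float("inf") for v in nodes}
    dist[r] = 0
    for _ in range(len(nodes) - 1):
        for u, v, length in arcs:
            if dist[u] + length < dist[v]:
                dist[v] = dist[u] + length
    return dist


def dijkstra_with_price(nodes, arcs, phi, s):
    """Run Dijkstra on reduced lengths l(uv) + phi[u] - phi[v], then map
    the results back to distances with respect to the original lengths."""
    adj = {v: [] for v in nodes}
    for u, v, length in arcs:
        reduced = length + phi[u] - phi[v]
        assert reduced >= 0, "phi is not a feasible price function"
        adj[u].append((v, reduced))
    dist = {v: float("inf") for v in nodes}
    dist[s] = 0
    heap = [(0, s)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale queue entry
        for v, w in adj[u]:
            if d + w < dist[v]:
                dist[v] = d + w
                heapq.heappush(heap, (d + w, v))
    # Undo the reduction: add phi(t) - phi(s) to each s-to-t distance.
    return {t: dist[t] + phi[t] - phi[s] for t in nodes}


# Hypothetical example: every node is reachable from r, no negative cycle.
nodes = ["r", "a", "b", "s"]
arcs = [("r", "a", 2), ("a", "b", -3), ("b", "s", 1), ("s", "a", 4), ("r", "s", 5)]
phi = bellman_ford(nodes, arcs, "r")        # from-r distances as prices
from_s = dijkstra_with_price(nodes, arcs, phi, "s")
print(from_s)
```

In this example r is not reachable from s, so its entry stays infinite; the other entries are distances with respect to the original, partly negative, lengths.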

2.5 Multiple-Source Shortest Paths: Computing Boundary-to-Boundary Distances

Klein [2005] gives a multiple-source shortest-path algorithm with the following properties. The input consists

of a directed planar embedded graph G with non-negative arc-lengths, and a face f . For each node u in turn

on the boundary of f , the algorithm computes (an implicit representation of) the shortest-path tree rooted

at u. The basic algorithm takes a total of O(n log n) time and O(n) space on an n-node input graph. In

addition, given a set of pairs (u, v) of nodes of G where u is on the boundary of f, the algorithm computes the u-to-v distances. The time per distance computed is $O(\log n)$. In particular, given a set S of $O(\sqrt{n})$ nodes on the boundary of a single face, the algorithm can compute all S-to-S distances in $O(n \log n)$ time.

In fact, the multiple-source shortest-path algorithm does not require that the arc-lengths be nonnegative

if the input also includes a table of distances to all nodes from some node on the face f . This observation

follows from careful inspection of the algorithm [Klein 2005] itself. Alternatively, it also follows from the

price-function technique of Section 2.4; the table of distances can be used to obtain nonnegative reduced

lengths, and these lengths can be supplied as input to Klein’s algorithm.

3. THE ALGORITHM

The high-level description of the algorithm appears in Figure 3. After finding a Jordan separator and

selecting a boundary node as a temporary source node, the algorithm consists of five major steps. The

recursive call step is straightforward. Computing intra-part boundary distances uses the algorithm described

in Section 2.5. Computing single-source inter-part boundary distances is described in Section 4; it is based

on the Bellman-Ford algorithm. Single-source inter-part distances is described in Section 5, and is based

on Dijkstra’s algorithm. It yields distances to all nodes from the temporary source node. These distances

constitute a feasible price function, as described in Section 2.4, that enables us, in rerooting single-source

distances, to use Dijkstra’s algorithm once more to finally compute distances from the given source.

procedure shortest-paths(G, s)
input: a directed embedded planar graph G with arc-lengths, and a node s of G
output: a table d giving distances in G from s to all nodes of G

0   if G has ≤ 2 nodes, the problem is trivial; return the result
1   find a Jordan separator C of G with $O(\sqrt{n})$ boundary nodes
2   let $G_0$, $G_1$ be the external and internal parts of G with respect to C
3   let r be a boundary node
    (recursive call)
4   for i = 0, 1: let $d_i$ = shortest-paths($G_i$, r)
    (intra-part boundary distances)
5   for i = 0, 1: use $d_i$ as input to the multiple-source shortest-path algorithm to compute a table $\delta_i$ such that $\delta_i[u, v]$ is the u-to-v distance in $G_i$ for every pair u, v of boundary nodes
    (single-source inter-part boundary distances)
6   use $\delta_0$ and $\delta_1$ to compute a table B such that B[v] is the r-to-v distance in G for every boundary node v
    (single-source inter-part distances)
7   for i = 0, 1: use tables $d_i$ and B, and Dijkstra’s algorithm to compute a table $d'_i$ such that $d'_i[v]$ is the r-to-v distance in G for every node v of $G_i$
    (rerooting single-source distances)
8   define a price function $\phi$ for G such that $\phi[v]$ is the r-to-v distance in G: $\phi[v] = d'_0[v]$ if v belongs to $G_0$, and $\phi[v] = d'_1[v]$ otherwise
9   use Dijkstra’s algorithm with price function $\phi$ to compute a table d such that d[v] is the s-to-v distance in G for every node v of G
10  return d

Fig. 3. The shortest-path algorithm

4. COMPUTING SINGLE-SOURCE INTER-PART BOUNDARY DISTANCES

In this section we describe how to efficiently compute the distances in G from r to all boundary nodes (i.e.,

the nodes of Vc ). This is done using δ0 and δ1 , the all-pairs distances in G0 and in G1 between nodes in Vc

which were computed in the previous stage.

Theorem 4.1. Let G be a directed graph with arbitrary arc-lengths. Let C be a Jordan separator in G and let $G_0$ and $G_1$ be the external and internal parts of G with respect to C. Let $\delta_0$ and $\delta_1$ be the all-pairs distances between nodes in $V_c$ in $G_0$ and in $G_1$ respectively. Let $r \in V_c$ be an arbitrary node on the boundary. There exists an algorithm that, given $\delta_0$ and $\delta_1$, computes the from-r distances in G to all nodes in $V_c$ in $O(|V_c|^2\,\alpha(|V_c|))$ time and $O(|V_c|)$ space.

The rest of this section describes the algorithm, thus proving Theorem 4.1. The following structural lemma lies at the core of the computation. The same lemma has been implicitly used by previous planarity-exploiting algorithms.

Lemma 4.2. Let P be a simple r-to-v shortest path in G, where $v \in V_c$. Then P can be decomposed into at most $|V_c|$ subpaths $P = P_1 P_2 P_3 \ldots$, where the endpoints of each subpath $P_i$ are boundary nodes, and $P_i$ is a shortest path in $G_{i \bmod 2}$.

Proof. Consider a decomposition of $P = P_1 P_2 P_3 \ldots$ into maximal subpaths such that the subpath $P_i$ consists of nodes of $G_{i \bmod 2}$. Since r and v are boundary nodes, and since the boundary nodes are the only nodes common to both $G_0$ and $G_1$, each subpath $P_i$ starts and ends on a boundary node. If $P_i$ were not a shortest path in $G_{i \bmod 2}$ between its endpoints, replacing $P_i$ in P with a shorter path would yield a shorter r-to-v path, a contradiction.

It remains to show that there are at most $|V_c|$ subpaths in the decomposition of P. Since P is simple, each node, and in particular each boundary node, appears in P at most once. Hence there can be at most $|V_c| - 1$ non-empty subpaths in the decomposition of P. Note, however, that if P starts with an arc of $G_0$ then $P_1$ is a trivial empty path from r to r. Hence, P can be decomposed into at most $|V_c|$ subpaths.

1: $e_0[v] = \infty$ for all $v \in V_c$
2: $e_0[r] = 0$
3: for $j = 1, 2, 3, \ldots, |V_c|$
4:     $e_j[v] = \begin{cases} \min_{w \in V_c} \{ e_{j-1}[w] + \delta_1[w, v] \} & \text{if } j \text{ is odd} \\ \min_{w \in V_c} \{ e_{j-1}[w] + \delta_0[w, v] \} & \text{if } j \text{ is even} \end{cases}$ for all $v \in V_c$
5: $B[v] \leftarrow e_{|V_c|}[v]$ for all $v \in V_c$

Fig. 4. Pseudocode for the single-source inter-part boundary distances stage for calculating shortest-path distances in G from r to all nodes in $V_c$ using just $\delta_0$ and $\delta_1$.

Lemma 4.2 gives rise to a dynamic-programming solution for calculating the from-r distances to nodes

of C, which resembles the Bellman-Ford algorithm. The pseudocode is given in Fig. 4. Note that, at this

level of abstraction, there is nothing novel about this dynamic program. Our contribution is in an efficient

implementation of Step 4.

The algorithm consists of |Vc | iterations. On odd iterations, it uses the boundary-to-boundary distances

in G1 , and on even iterations it uses the boundary-to-boundary distances in G0 .
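The dynamic program of Fig. 4 can be sketched directly, with Step 4 done by a naive scan rather than the fast matrix-searching implementation developed in this section. The representation below (boundary nodes numbered 0..k−1, distance tables as nested lists with 0 on the diagonal and infinity for unreachable pairs) and the small example tables are assumptions made for illustration.

```python
INF = float("inf")


def boundary_distances(delta0, delta1, r):
    """Compute B[v], the r-to-v distance in G, for every boundary node v.

    delta0[w][v] / delta1[w][v]: w-to-v distance inside G0 / G1
    (INF if unreachable, 0 on the diagonal). Boundary nodes are 0..k-1.
    """
    k = len(delta0)
    e = [INF] * k          # Step 1
    e[r] = 0               # Step 2
    for j in range(1, k + 1):                      # Step 3
        delta = delta1 if j % 2 == 1 else delta0   # odd: G1, even: G0
        # Step 4, done naively in O(k^2) time per iteration.
        e = [min(e[w] + delta[w][v] for w in range(k)) for v in range(k)]
    return e               # Step 5


# Hypothetical tables for three boundary nodes, with r = 0.
delta1 = [[0, 5, 10],
          [INF, 0, 5],
          [INF, INF, 0]]
delta0 = [[0, INF, INF],
          [INF, 0, -3],
          [INF, INF, 0]]
B = boundary_distances(delta0, delta1, 0)
print(B)  # [0, 5, 2]
```

Here the shortest path from node 0 to node 2 uses a subpath in G1 (length 5) followed by a subpath in G0 (length −3). The naive scan costs O(|Vc|^2) per iteration and O(|Vc|^3) overall.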

Lemma 4.3. After the table $e_j$ is updated by the algorithm, $e_j[v]$ is the length of a shortest path in G from r to v that can be decomposed into at most j subpaths $P = P_1 P_2 P_3 \ldots P_j$, where the endpoints of each subpath $P_i$ are boundary nodes, and $P_i$ is a shortest path in $G_{i \bmod 2}$.

Proof. By induction on j. For the base case, $e_0$ is initialized to be infinity for all nodes other than r, trivially satisfying the lemma. For j > 0, assume that the lemma holds for j − 1, and let P be a shortest path in G that can be decomposed into $P_1 P_2 \ldots P_j$ as above. Consider the prefix $P' = P_1 P_2 \ldots P_{j-1}$. $P'$ is a shortest r-to-w path in G that can be decomposed into at most j − 1 subpaths as above for some boundary node w. Hence, by the inductive hypothesis, when $e_j$ is updated in Step 4, $e_{j-1}[w]$ already stores the length of $P'$. Thus $e_j[v]$ is updated in Step 4 to be at most $e_{j-1}[w] + \delta_{j \bmod 2}[w, v]$. Since, by definition, $\delta_{j \bmod 2}[w, v]$ is the length of the shortest path in $G_{j \bmod 2}$ from w to v, it follows that $e_j[v]$ is at most the length of P. For the opposite direction, since for any boundary node w, $e_{j-1}[w]$ is the length of some path that can be decomposed into at most j − 1 subpaths as above, $e_j[v]$ is updated in Step 4 to the length of some path that can be decomposed into at most j subpaths as above. Hence, since P is the shortest such path, $e_j[v]$ is at least the length of P.

From Lemma 4.2 and Lemma 4.3, it immediately follows that the table $e_{|V_c|}$ stores the from-r shortest path distances in G, so the assignment in Step 5 is justified, and the table B also stores these distances.

We now show how to perform all the minimizations in the j-th iteration of Step 4 in $O(|V_c|\,\alpha(|V_c|))$ time. Let $i = j \bmod 2$, so this iteration uses distances in $G_i$. Since all boundary nodes lie on the boundary of a single face of $G_i$, there is a natural cyclic clockwise order $v_1, v_2, \ldots, v_{|V_c|}$ on the nodes in $V_c$. Define a $|V_c| \times |V_c|$ matrix A with elements $A_{k\ell} = e_{j-1}(v_k) + \delta_i(v_k, v_\ell)$. Note that computing all minima in Step 4 is equivalent to finding the column-minima of A. We define the upper triangle of A to be the elements of A on or above the main diagonal. More precisely, the upper triangle of A is the portion $\{A_{k\ell} : k \le \ell\}$ of A. Similarly, the lower triangle of A consists of all the elements on or below the main diagonal of A.

Lemma 4.4. For any four indices $k, k', \ell, \ell'$ such that either $A_{k\ell}$, $A_{k\ell'}$, $A_{k'\ell}$ and $A_{k'\ell'}$ are all in A’s upper triangle, or are all in A’s lower triangle (i.e., either $1 \le k \le k' \le \ell \le \ell' \le |V_c|$ or $1 \le \ell \le \ell' \le k \le k' \le |V_c|$), the convex Monge property holds:

$$A_{k\ell} + A_{k'\ell'} \ge A_{k\ell'} + A_{k'\ell}.$$

Proof. Consider the case $1 \le k \le k' \le \ell \le \ell' \le |V_c|$, as in Fig. 5. Since $G_i$ is planar, any pair of paths in $G_i$ from $v_k$ to $v_\ell$ and from $v_{k'}$ to $v_{\ell'}$ must cross at some node of $G_i$. Let w be a node at which a shortest $v_k$-to-$v_\ell$ path and a shortest $v_{k'}$-to-$v_{\ell'}$ path cross. Let $b_k = e_{j-1}(v_k)$ and let $b_{k'} = e_{j-1}(v_{k'})$. Let $\Delta(u, v)$ denote the u-to-v distance in $G_i$ for any nodes u, v of $G_i$. Note that $\Delta(u, v) = \delta_i(u, v)$ for $u, v \in V_c$. We have

\begin{align*}
A_{k\ell} + A_{k'\ell'} &= (b_k + \Delta(v_k, w) + \Delta(w, v_\ell)) + (b_{k'} + \Delta(v_{k'}, w) + \Delta(w, v_{\ell'})) \\
&= (b_k + \Delta(v_k, w) + \Delta(w, v_{\ell'})) + (b_{k'} + \Delta(v_{k'}, w) + \Delta(w, v_\ell)) \\
&\ge (b_k + \Delta(v_k, v_{\ell'})) + (b_{k'} + \Delta(v_{k'}, v_\ell)) \\
&= (b_k + \delta_i(v_k, v_{\ell'})) + (b_{k'} + \delta_i(v_{k'}, v_\ell)) \\
&= A_{k\ell'} + A_{k'\ell}.
\end{align*}

The case $1 \le \ell \le \ell' \le k \le k' \le |V_c|$ is similar.

Fig. 5. Nodes $k < k' < \ell < \ell'$ in clockwise order on the boundary nodes. Paths from k to $\ell$ and from $k'$ to $\ell'$ must cross at some node w. This is true both in the internal and the external subgraphs of G.
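Lemma 4.4 can be checked numerically on a toy instance. The sketch below assumes, purely for illustration, that $G_i$ is a bidirected cycle on six nodes with unit arc-lengths, so that all nodes lie on a single face in clockwise order 0, 1, ..., 5 and the distance between two nodes is the shorter way around the cycle. The values in `e` are arbitrary stand-ins for $e_{j-1}$. The check confirms the convex Monge inequality inside each triangle and exhibits a violation for a quadruple that straddles the diagonal, which is why the two triangles are handled separately.

```python
from itertools import combinations_with_replacement


def cycle_distance(n, u, v):
    """Distance between nodes u and v of a bidirected n-cycle with unit lengths."""
    d = abs(u - v)
    return min(d, n - d)


def build_A(n, e):
    """A[k][l] = e[k] + delta(v_k, v_l), nodes indexed in clockwise order."""
    return [[e[k] + cycle_distance(n, k, l) for l in range(n)] for k in range(n)]


def monge_holds(A, quads):
    """Check A[k][l] + A[k'][l'] >= A[k][l'] + A[k'][l] on index quadruples (k, k', l, l')."""
    return all(A[k][l] + A[k2][l2] >= A[k][l2] + A[k2][l] for k, k2, l, l2 in quads)


n = 6
e = [3, -1, 4, 0, 7, 2]  # arbitrary values standing in for e_{j-1}
A = build_A(n, e)

# All nondecreasing 4-tuples a <= b <= c <= d over the index range.
sorted_quads = list(combinations_with_replacement(range(n), 4))
upper = [(a, b, c, d) for a, b, c, d in sorted_quads]  # k <= k' <= l <= l'
lower = [(c, d, a, b) for a, b, c, d in sorted_quads]  # l <= l' <= k <= k'

print(monge_holds(A, upper), monge_holds(A, lower))
# A quadruple straddling the diagonal (k=0, k'=4, l=1, l'=5) violates the inequality.
print(monge_holds(A, [(0, 4, 1, 5)]))
```

The values of `e` cancel in the inequality, since $e_{j-1}(v_k) + e_{j-1}(v_{k'})$ appears on both sides; only the boundary-to-boundary distances matter.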

Lemma 4.5. A single iteration of Step 4 of the algorithm in Fig. 4 can be computed in $O(|V_c|\,\alpha(|V_c|))$ time.

Proof. We need to show how to find the column-minima of the matrix A. We compute the column-minima of A’s lower and upper triangles separately, and obtain A’s column-minima by comparing the two values obtained for each column.

It follows directly from Lemma 4.4 that replacing the upper triangle of A with blanks yields a falling staircase matrix. By [Klawe and Kleitman 1990], the column-minima of this falling staircase matrix can be computed in $O(|V_c|\,\alpha(|V_c|))$ time. Another consequence of Lemma 4.4 is that the column-minima of the upper triangle of A may also be computed using the algorithm in [Klawe and Kleitman 1990]. To see this, consider a counterclockwise ordering $v'_1, v'_2, \ldots, v'_{|V_c|}$ of the nodes of $V_c$ such that $v'_k = v_{|V_c|+1-k}$. This reverses the order of both the rows and the columns of A, thus turning its upper triangle into a lower triangle. Again, replacing the upper triangle of this matrix with blanks yields a falling staircase matrix.

We thus conclude that A’s column-minima can be computed in $O(2|V_c| \cdot \alpha(|V_c|) + |V_c|) = O(|V_c| \cdot \alpha(|V_c|))$ time. Note that we never actually compute and store the entire matrix A as this would take $O(|V_c|^2)$ time. We compute the entries necessary for the computation on the fly in O(1) time per element.
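The structure of this proof (handle the lower triangle, flip indices to reuse the same routine for the upper triangle, then combine) can be sketched as follows. This is only an illustration of the decomposition: the triangle routine below is a naive scan standing in for the Klawe and Kleitman algorithm, so it does not achieve the stated running time. The matrix is accessed through a callback `entry(k, l)`, a hypothetical name, so that entries are computed on demand and never stored as a whole.

```python
def lower_triangle_col_minima(entry, n):
    """Column minima over the lower triangle {(k, l): k >= l}.

    Naive scan; a stand-in for the falling-staircase algorithm of
    Klawe and Kleitman, which this sketch does not implement.
    """
    return [min(entry(k, l) for k in range(l, n)) for l in range(n)]


def column_minima(entry, n):
    """Column minima of the n x n matrix given implicitly by entry(k, l)."""
    lower = lower_triangle_col_minima(entry, n)

    # Reversing the order of rows and columns turns the upper triangle
    # of the original matrix into the lower triangle of the flipped one.
    def flipped(k, l):
        return entry(n - 1 - k, n - 1 - l)

    upper_rev = lower_triangle_col_minima(flipped, n)
    upper = upper_rev[::-1]  # column l of A is column n-1-l of the flipped matrix
    return [min(a, b) for a, b in zip(lower, upper)]


# Hypothetical 3 x 3 matrix, accessed through a callback.
M = [[4, 1, 7],
     [2, 9, 3],
     [8, 5, 6]]
print(column_minima(lambda k, l: M[k][l], 3))  # [2, 1, 3]
```

In the setting of Step 4, the callback would return $e_{j-1}(v_k) + \delta_i(v_k, v_\ell)$, computed in constant time from the two tables.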

Lemma 4.5 shows that the time it takes the algorithm described in Fig. 4 to compute the distances between r and all nodes of $V_c$ is $O(|V_c|^2 \cdot \alpha(|V_c|))$. We have thus proved Theorem 4.1. The choice of separator ensures $|V_c| = O(\sqrt{n})$, so this computation is performed in $O(n\,\alpha(n))$ time.

5. COMPUTING SINGLE-SOURCE INTER-PART DISTANCES

In the previous section we showed how to compute a table B that stores the distances from r to all the boundary nodes in G. In this section we describe how to compute the distances from r to all other nodes of G. We do so by computing tables $d'_0$ and $d'_1$ where $d'_i[v]$ is the r-to-v distance in G for every node v of $G_i$. Recall that we have already computed the table $d_i$ that stores the r-to-v distance in $G_i$ for every node v of $G_i$.

1: let $G'_i$ be the graph obtained from $G_i$ by removing arcs entering r, and adding an arc ru of length B[u] for every boundary node u
2: let $p_i = \max\{d_i[u] - B[u] : u \text{ a boundary node}\}$
3: define a price function $\phi_i$ for $G'_i$: $\phi_i[v] = p_i$ if v = r, and $\phi_i[v] = d_i[v]$ otherwise
4: use Dijkstra’s algorithm with price function $\phi_i$ to compute a table $d'_i$ such that $d'_i[v]$ is the r-to-v distance in $G'_i$ for every node v of $G'_i$

Fig. 6. Pseudocode for the single-source inter-part distances stage for computing the shortest path distances from r to all nodes.

The pseudocode given in Fig. 6 describes how to compute $d'_i$. The idea is to use $d_i$ and B in order to construct a modified version of $G_i$, denoted $G'_i$, so that the from-r distances in $G'_i$ are the same as the from-r distances in G. We then construct a feasible price function $\phi_i$ for $G'_i$ and use Dijkstra’s algorithm on $G'_i$ with the price function $\phi_i$ in order to compute these from-r distances. The following two lemmas motivate the definition of $G'_i$ and show that it captures the true from-r distances in G.

Lemma 5.1. Let P be an r-to-v shortest path in G, where v ∈ Gi . Then P can be expressed as P = P1 P2 ,

where P1 is a (possibly empty) shortest path from r to a node u ∈ Vc , and P2 is a (possibly empty) shortest

path from u to v that only visits nodes of Gi .

Proof. Let u be the last boundary node visited by P . Let P1 be the r-to-u prefix of P , and let P2 be

the u-to-v suffix of P . Since P1 and P2 are subpaths of a shortest path in G, they are each shortest as well.

By choice of u, P2 has no internal boundary nodes, so it is a path in Gi .

Lemma 5.2. Let $G'_i$ be the graph obtained from $G_i$ by removing arcs entering r, and adding an arc ru of length B[u] for every boundary node u. The from-r distances in $G'_i$ are equal to the from-r distances in G.

Proof. Distances in $G'_i$ are not shorter than in G since each arc of $G'_i$ corresponds to some path in G. For the opposite direction, consider an r-to-v shortest path P in G. Let $P_1$, $P_2$, u be as in Lemma 5.1. $P_1$ is a shortest path in G from r to some $u \in V_c$. By definition of $G'_i$, the length of the new arc ru in $G'_i$ is equal to the length of $P_1$ in G. Furthermore, $P_2$ is a path in $G'_i$ since it only consists of arcs in $G_i$. Since the shortest r-to-v path is simple, none of these arcs enters r, and therefore all of them are in $G'_i$. Hence the length of the path in $G'_i$ that consists of the new arc ru followed by $P_2$ equals the length of P in G.

Since $G'_i$ contains arcs not in $G_i$, we cannot use the from-r distances in $d_i$ as a feasible price function. We slightly modify them to ensure non-negativity as shown by the following lemma.

Lemma 5.3. $\phi_i$ defined in Step 3 of Fig. 6 is a feasible price function for $G'_i$.

Proof. First note that $d_i[r] = 0$ and $B[r] = 0$, so $p_i \ge d_i[r]$. Let uv be an arc of $G'_i$. If uv is an arc of $G_i$ then $v \ne r$ since $G'_i$ does not contain any arcs entering r. Since $d_i[v] \le d_i[u] + \ell[uv]$, we have $\ell_{\phi_i}[uv] = \phi_i[u] + \ell[uv] - \phi_i[v] \ge d_i[u] + \ell[uv] - d_i[v] \ge 0$. Otherwise, u = r and v is a boundary node, so

\begin{align*}
\ell_{\phi_i}[rv] &= \phi_i[r] + B[v] - \phi_i[v] \\
&= p_i - (d_i[v] - B[v]) && \text{by definition of } \phi_i \\
&\ge 0 && \text{by definition of } p_i.
\end{align*}

Computing the auxiliary graphs $G'_i$ and the price functions $\phi_i$ can be easily done in linear time. Therefore,

the time required for this stage is dominated by the O(n log n) running time of Dijkstra’s algorithm. We

note that one may use the algorithm of Henzinger et al. [1997] instead of Dijkstra to obtain a linear running

time for this stage. This however does not change the overall running time of our algorithm.
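The four steps of Fig. 6 can be illustrated with a minimal Python sketch. It assumes the part is given as a plain arc list, that every node of the part is reachable from r, and it uses a binary-heap Dijkstra; the function name `inter_part_distances`, the input layout, and the toy instance are hypothetical and not part of the paper. The zero-length self-loop from r to itself is omitted, since it would be an arc entering r.

```python
import heapq

def inter_part_distances(nodes, arcs, r, d, B):
    """Sketch of Fig. 6. d[v]: r-to-v distance inside the part; B[u]: r-to-u distance in G."""
    # Step 1: drop arcs entering r, add an arc r->u of length B[u] per boundary node u.
    adj = {v: [] for v in nodes}
    for u, v, length in arcs:
        if v != r:
            adj[u].append((v, length))
    for u, dist_in_G in B.items():
        if u != r:  # skip the self-loop r->r (assumption of this sketch)
            adj[r].append((u, dist_in_G))
    # Step 2: offset that makes the new arcs non-negative under the price function.
    p = max(d[u] - B[u] for u in B)
    # Step 3: price function phi.
    phi = dict(d)
    phi[r] = p
    # Step 4: Dijkstra on reduced lengths phi[u] + length - phi[v].
    reduced = {v: float("inf") for v in nodes}
    reduced[r] = 0
    heap = [(0, r)]
    while heap:
        dist_u, u = heapq.heappop(heap)
        if dist_u > reduced[u]:
            continue  # stale heap entry
        for v, length in adj[u]:
            red_len = phi[u] + length - phi[v]
            assert red_len >= 0, "price function is not feasible"
            if dist_u + red_len < reduced[v]:
                reduced[v] = dist_u + red_len
                heapq.heappush(heap, (reduced[v], v))
    # Undo the price function to recover true lengths.
    return {v: reduced[v] - phi[r] + phi[v] for v in nodes}

# Toy part: boundary nodes r and a; outside the part there is an r-to-a path of length 2.
nodes = ["r", "a", "b", "c"]
arcs = [("r", "a", 5), ("a", "b", -2), ("b", "c", 1), ("r", "c", 10), ("c", "r", 3)]
d = {"r": 0, "a": 5, "b": 3, "c": 4}
B = {"r": 0, "a": 2}
result = inter_part_distances(nodes, arcs, "r", d, B)
```

The assertion inside the loop checks, arc by arc, the non-negativity that Lemma 5.3 establishes.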

6.

CORRECTNESS AND ANALYSIS

We will show that at each stage of our algorithm, the necessary information has been correctly computed

and stored. The recursive call in Step 4 computes and stores the from-r distances in Gi . The conditions for

applying Klein’s algorithm in Step 5 hold since all boundary nodes lie on the boundary of a single face of

Gi and since the from-r distances in Gi constitute a feasible price function for Gi (see also the discussion at

the end of Section 2.5). The correctness of the single-source inter-part boundary distances stage in Step 6

and of the single-source inter-part distances stage in Step 7 was proved in Sections 4 and 5. Thus, the r-to-v

distances in G for all nodes v of G are stored in $d'_0$ for $v \in G_0$ and in $d'_1$ for $v \in G_1$. Note that $d'_0$ and $d'_1$

agree on distances from r to boundary nodes. Therefore, the price function φ defined in Step 8 is feasible for

G, so the conditions to run Dijkstra’s algorithm in Step 9 hold, and the from-s distances in G are correctly

computed. We have thus established the correctness of our algorithm.

To bound the running time of the algorithm we bound the time it takes to complete one recursive call to

shortest-paths. Let |G| denote the number of nodes in the input graph G, and let |Gi | denote the number

of nodes in each of its subgraphs. Computing the intra-subgraph boundary-to-boundary distances using

Klein’s algorithm takes O(|Gi | log |Gi |) for each of the two subgraphs, which is in O(|G| log |G|). Computing

the single-source distances in G to the boundary nodes is done in O(|G|α(|G|)), as we explain in Section 4.

The extension to all nodes of G is again done in O(|Gi | log |Gi |) for each subgraph. Distances from the given

source are computed in an additional O(|G| log |G|) time. Thus the total running time of one invocation is

O(|G| log |G|). Therefore the running time of the entire algorithm is given by

$$T(|G|) = T(|G_0|) + T(|G_1|) + O(|G| \log |G|) = O(|G| \log^2 |G|).$$

Here we used the properties of the separator, namely that $|G_i| \le 2|G|/3$ for i = 0, 1, and that $|G_0| + |G_1| = |G| + O(\sqrt{|G|})$. The formal proof of this recurrence is given in the following lemma.

Lemma 6.1. Let T(n) satisfy the recurrence $T(n) = T(n_1) + T(n_2) + O(n \log n)$, where $n \le n_1 + n_2 \le n + 4\sqrt{n}$ and $n_i \le 2n/3$. Then $T(n) = O(n \log^2 n)$.

Proof. We show by induction that for any $n \ge N_0$, $T(n) \le Cn \log^2 n$ for some constants $N_0$, C. For a choice of $N_0$ to be specified below, let $C_0$ be such that for any $N_0 \le n \le 3N_0$, $T(n) \le C_0 n \log^2 n$. Let $C_1$ be a constant such that $T(n) \le T(n_1) + T(n_2) + C_1 n \log n$. Let $C = \max\{C_0, C_1/\log(3/2)\}$. Note that this choice serves as the base of the induction since it satisfies the claim for any $N_0 \le n \le 3N_0$. We assume that the claim holds for all $n'$ such that $3N_0 \le n' < n$ and show it holds for n. Since for i = 1, 2, $n_i \le 2n/3$ and $n_1 + n_2 \ge n$, it follows that $n_i \ge n/3 > N_0$. Therefore, we may apply the inductive hypothesis to obtain:

\begin{align*}
T(n) &\le C(n_1 \log^2 n_1 + n_2 \log^2 n_2) + C_1 n \log n \\
&\le C(n_1 + n_2) \log^2(2n/3) + C_1 n \log n \\
&\le C(n + 4\sqrt{n}) (\log(2/3) + \log n)^2 + C_1 n \log n \\
&\le Cn \log^2 n + 4C\sqrt{n} \log^2 n - 2C \log(3/2)\, n \log n \\
&\quad + C(n + 4\sqrt{n}) \log^2(2/3) + C_1 n \log n.
\end{align*}

It therefore suffices to show that

$$4C\sqrt{n} \log^2 n - 2C \log(3/2)\, n \log n + C(n + 4\sqrt{n}) \log^2(2/3) + C_1 n \log n \le 0,$$

or equivalently that

$$\left(1 - \frac{C_1}{2C \log(3/2)}\right) n \log n \ge \frac{2}{\log(3/2)} \sqrt{n} \log^2 n + \frac{\log(3/2)}{2} (n + 4\sqrt{n}).$$

Let $N_0$ be such that the right hand side is at most $\frac{1}{2} n \log n$ for all $n > N_0$. The above inequality holds for every $n > N_0$ since we chose $C \ge C_1/\log(3/2)$, so the coefficient in the left hand side is at least $\frac{1}{2}$.
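As an informal numeric sanity check of Lemma 6.1 (not a substitute for the proof), the following Python sketch evaluates one concrete instance of the recurrence. The balanced split $n_1 = n_2 = \lfloor n/2 + 2\sqrt{n} \rfloor$, the threshold `N0 = 144`, the unit constant in front of n log n, and the linear base cost are all assumptions chosen for illustration.

```python
import math

N0 = 144  # for n >= 144 we have 2*sqrt(n) <= n/6, hence n1 <= 2n/3

def T(n):
    """One instance of T(n) = T(n1) + T(n2) + n*log2(n) with n1 = n2."""
    if n < N0:
        return float(n)  # arbitrary base-case cost (assumption)
    n1 = int(n / 2 + 2 * math.sqrt(n))  # so n <= n1 + n2 <= n + 4*sqrt(n)
    return 2 * T(n1) + n * math.log2(n)

def ratio(n):
    """T(n) divided by n*log2(n)^2; the lemma predicts this stays bounded."""
    return T(n) / (n * math.log2(n) ** 2)

ratios = {n: ratio(n) for n in (10**3, 10**4, 10**5, 10**6, 10**7)}
```

If the bound of the lemma holds for this instance, the values in `ratios` stay below a fixed constant as n grows.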

We have thus proved that the total running time of our algorithm is $O(n \log^2 n)$. We turn to the space bound. The space required for one invocation is O(|G|). Each of the two recursive calls can use the same memory locations one after the other, so the space is given by

$$S(|G|) = \max\{S(|G_0|), S(|G_1|)\} + O(|G|) = O(|G|)$$

because $\max\{|G_0|, |G_1|\} \le 2|G|/3$.
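The step from the space recurrence to the linear bound can be spelled out as a geometric sum. In the sketch below, c is a hypothetical constant bounding the space of one invocation by c|G|, and S is assumed to be non-decreasing.

```latex
S(|G|) \;\le\; c|G| + S\!\left(\tfrac{2}{3}|G|\right)
       \;\le\; c|G| \sum_{k \ge 0} \left(\tfrac{2}{3}\right)^{k}
       \;=\; 3c|G| \;=\; O(|G|).
```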

Fig. 7. The s-t shortest path P is shown in solid blue. Paths of type $Q_2$ (dashed black) do not cross P. Two LL paths (i.e., leaving and entering P from the left) are shown. For i < i' < j < j', the ij path and the i'j' path must cross at some node z.

Fig. 8. The s-t shortest path P is shown in solid blue. Paths of type $Q_2$ (dashed black) do not cross P. Two LR paths (i.e., leaving P from the left and entering P from the right) are shown. For i < i' < j < j', the ij' path and the i'j path must cross at some node z.

We have proved Theorem 1.1.

7.

THE REPLACEMENT-PATHS PROBLEM IN PLANAR GRAPHS

Recall that given a directed planar graph with non-negative arc lengths and two nodes s and t, the

replacement-paths problem asks to compute, for every arc e in the shortest path between s and t, the length

of an s-to-t shortest path that avoids e.

In this section we show how to modify the algorithm of Emek et al. [2008] to obtain an $O(n \log^2 n)$

running time for the replacement-paths problem. This is another example of using a Monge property for

finding minima in a matrix. Similarly to Section 4, we deal with a matrix whose upper triangle satisfies a

Monge property. However, the minima search problem is restricted to rectangular portions of that upper

triangle. Hence, each such rectangular portion is entirely Monge (rather than falling staircase) so the

SMAWK algorithm of Aggarwal et al. [1987] can be used (rather than that of [Klawe and Kleitman 1990]).

Let $P = (u_1, u_2, \ldots, u_{p+1})$ be the shortest path from $s = u_1$ to $t = u_{p+1}$ in the graph G. Consider the replacement s-to-t path Q that avoids the arc e in P. Q can be decomposed as $Q_1 Q_2 Q_3$ where $Q_1$ is a prefix of P, $Q_3$ is a suffix of P, and $Q_2$ is a subpath from some $u_i$ to some $u_j$ that avoids any other vertex in P. If in a clockwise traversal of the arcs incident to some node $u_i$ of P, starting from the arc $(u_i, u_{i+1})$ we encounter an arc e before we encounter the arc $(u_{i-1}, u_i)$, then we say that e is to the right of P. Otherwise, e is to the left of P. The first arc of $Q_2$ can be left or right of P and the last arc of $Q_2$ can be left or right of P. In all four cases $Q_2$ never crosses P (see Fig. 7 and Fig. 8).

For nodes x, y, the x-to-y distance is denoted by δG (x, y). The distances δG (s, ui ) and δG (ui , t) for

i = 1, . . . , p + 1 are computed from P in O(p) time, and stored in a table.

The p × p matrix $\widehat{\mathrm{len}}_{d,d'}$ is defined in [Emek et al. 2008] as follows: for any 1 ≤ i ≤ p and 1 ≤ j ≤ p, let $\widehat{\mathrm{len}}_{d,d'}(i, j)$ be the length of the shortest s-to-t path of the form $Q_1 Q_2 Q_3$ described above where $Q_2$ starts at $u_i$ via a left-going arc if d = L or a right-going arc if d = R, and $Q_2$ ends at $u_{j+1}$ via a left-going arc if d' = L and a right-going arc if d' = R. The length of $Q_2$ is denoted $\text{PAD-query}_{G,d,d'}(i, j)$. It can be computed in O(log n) time by a single query to a data structure that Emek et al. call PADO (Path Avoiding Distance Oracle). Thus, we can write

$$\widehat{\mathrm{len}}_{d,d'}(i, j) = \delta_G(s, u_i) + \text{PAD-query}_{G,d,d'}(i, j) + \delta_G(u_{j+1}, t),$$

and query any entry of $\widehat{\mathrm{len}}_{d,d'}$ in O(log n) time.
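The O(p)-time tables of distances along P, and the way one matrix entry is assembled from them, can be sketched in Python as follows. The function names are hypothetical, and `pad_query` is only a stand-in callable for the PADO query of Emek et al., whose internals are not reproduced here. The sketch relies on the fact that every subpath of the shortest path P is itself shortest.

```python
from itertools import accumulate

def path_distance_tables(arc_lengths):
    """arc_lengths[k] is the length of the (k+1)-th arc of P, for p arcs in total.

    Returns (from_s, to_t), each of length p + 1, with
    from_s[k] = distance from s to u_{k+1} and to_t[k] = distance from u_{k+1} to t.
    """
    from_s = [0] + list(accumulate(arc_lengths))  # prefix sums along P
    total = from_s[-1]
    to_t = [total - x for x in from_s]            # suffix sums along P
    return from_s, to_t

def len_entry(i, j, from_s, to_t, pad_query):
    """Entry (i, j) of the matrix, with 1-indexed i, j as in the text."""
    # u_i is stored at index i - 1, and u_{j+1} at index j.
    return from_s[i - 1] + pad_query(i, j) + to_t[j]

# Toy path with p = 3 arcs of lengths 2, 3, 1.
from_s, to_t = path_distance_tables([2, 3, 1])
```

With these tables, each entry costs one `pad_query` call plus two table lookups.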

The $O(n \log^3 n)$ time-complexity of Emek et al. arises from the recursive calls to the District procedure.

Fig. 9. The upper triangular fragment of the matrix $\widehat{\mathrm{len}}_{d,d'}$. The procedure District(1, p) computes the row and column-minima of the area B ∪ C, and therefore also the minimum element in B ∪ C (i.e. in range(p/2)). Then, District(1, p/2) computes the row and column-minima of the area A. Together, they enable finding the row and column-minima of A ∪ B (i.e. in range(p/4)), and in particular the minimum element in range(p/4).

We next give an alternative description of their algorithm. This new description is slightly simpler and makes

it easy to explain the use of SMAWK.

Let range(i) denote the rectangular portion of the matrix $\widehat{\mathrm{len}}_{d,d'}$ defined by rows 1, . . . , i and columns i, . . . , p. With this definition the length of the replacement s-to-t path that avoids the edge $(u_i, u_{i+1})$ is equal to the minimal element in range(i). Since d ∈ {L, R} and d' ∈ {L, R}, we need to take the minimum among these four rectangular portions corresponding to the four possible $\widehat{\mathrm{len}}$ matrices. The replacement-paths problem thus reduces to computing the minimum element in range(i) for every i = 1, 2, . . . , p, and every d, d' ∈ {L, R}.

Given some 1 ≤ a < b ≤ p and some d, d' ∈ {L, R}, District(a, b) computes the row and column-minima of the rectangular portion of the matrix $\widehat{\mathrm{len}}_{d,d'}$ defined by rows a to ⌊(a + b)/2⌋ and columns ⌊(a + b)/2⌋ to b. Initially, District is called with a = 1 and b = p. This, in particular, computes the minimum of range(⌊p/2⌋). Then, District(a, ⌊(a + b)/2⌋ − 1) and District(⌊(a + b)/2⌋ + 1, b) are called recursively. Notice that the previous call to District(a, b), together with the current call to District(a, ⌊(a + b)/2⌋ − 1) suffice for computing all row and column-minima of range(⌊p/4⌋) (and hence also the global minimum of range(⌊p/4⌋)), as illustrated in Fig. 9. Similarly, District(a, b), together with District(⌊(a + b)/2⌋ + 1, b) suffice for computing all row and column-minima of range(⌊3p/4⌋). The recursion stops when b − a ≤ 1. Therefore, the depth of the recursion for District(1, p) is O(log p), and it computes the minimum of range(i) for all 1 ≤ i ≤ p.

Emek et al. show how to compute District(a, b) in $O((b - a) \log^2 (b - a) \log n)$ time, leading to a total of $O(n \log^3 n)$ time for computing District(1, p). They use a divide and conquer technique to compute the row and column-minima in each of the rectangular areas encountered along the computation. Our contribution is in showing that instead of divide-and-conquer one can use SMAWK to find those minima. This enables computing District(a, b) in $O((b - a) \log(b - a) \log n)$ time, which leads to a total of $O(n \log^2 n)$ time for District(1, p), as shown by the following lemmas.

Lemma 7.1. The upper triangle of $\widehat{\mathrm{len}}_{d,d'}$ satisfies a Monge property.

Proof. Note that adding $\delta_G(s, u_i)$ to all of the elements in the i-th row or $\delta_G(u_{j+1}, t)$ to all elements in the j-th column preserves the Monge property. Therefore, it suffices to show that the upper triangle of $\text{PAD-query}_{G,d,d'}$ satisfies a Monge property.
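The invariance under row and column offsets can be checked in one line. In the sketch below, M stands for any matrix, and $a_i$, $b_j$ are hypothetical names for the row and column offsets (here $a_i = \delta_G(s, u_i)$ and $b_j = \delta_G(u_{j+1}, t)$). Writing $M'(i,j) = a_i + M(i,j) + b_j$, the offsets cancel in the Monge difference for any i < i' and j < j':

```latex
M'(i,j) + M'(i',j') - M'(i,j') - M'(i',j)
  = M(i,j) + M(i',j') - M(i,j') - M(i',j)
  + \underbrace{(a_i + a_{i'} - a_i - a_{i'})}_{=\,0}
  + \underbrace{(b_j + b_{j'} - b_{j'} - b_j)}_{=\,0}.
```

Hence the sign of the difference, and with it the convex or concave Monge property, is the same for M' and M.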

When d = d', the proof is essentially the same as that of Lemma 4.4 because the $Q_2$ paths have the same

crossing property as the paths in Lemma 4.4. This is illustrated in Fig. 7. We thus establish that the convex

Monge property holds.

When $d \ne d'$, Lemma 4.4 applies but with the convex Monge property replaced with the concave Monge property. To see this, consider the crossing paths in Fig. 8. In contrast to Fig. 7, this time the crossing paths are i-to-j' and i'-to-j.

Lemma 7.2. Procedure District(a, b) can be computed in O((b − a) log(b − a) log n) time.

Proof. Recall that, for every pair d, d', District(a, b) first computes the row and column-minima of the rectangular submatrix of $\widehat{\mathrm{len}}_{d,d'}$ defined by rows a to ⌊(a + b)/2⌋ and columns ⌊(a + b)/2⌋ to b. By

Lemma 7.1, this entire submatrix has a Monge property. In the case of the convex Monge property, we can

use SMAWK to find all row and column-minima of the submatrix. In the case of the concave Monge property,

we cannot directly apply SMAWK. By negating all the elements we get a convex Monge matrix but we are

now looking for its row and column maxima. As discussed in Section 2.3, SMAWK can be used to find row

and column-maxima of a convex Monge matrix. Thus, in both cases, we find the row and column-minima

of the submatrix by querying only O(b − a) entries each in O(log n) time for a total of O((b − a) log n) time.

Therefore, T (a, b), the time it takes to compute District(a, b) is given by

T (a, b) = T (a, (a + b)/2) + T ((a + b)/2, b) + O((b − a) log n) = O((b − a) log(b − a) log n).
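To illustrate why a Monge property makes row minima cheap to find, here is a Python sketch of a standard divide-and-conquer search. It is not SMAWK and is not claimed to be the exact procedure of Emek et al.; it uses O((rows + columns) log rows) entry evaluations, whereas SMAWK needs only a linear number. The sketch assumes the convex Monge inequality in the form f(i, j) + f(i', j') ≤ f(i, j') + f(i', j) for i < i' and j < j', under which the leftmost row minima move weakly to the right as the row index grows. The name `monge_row_argmins` and the toy matrix are hypothetical.

```python
def monge_row_argmins(f, n_rows, n_cols):
    """Column of the leftmost minimum in each row of a convex Monge matrix f(i, j)."""
    argmin = [0] * n_rows

    def solve(r_lo, r_hi, c_lo, c_hi):
        if r_lo > r_hi:
            return
        mid = (r_lo + r_hi) // 2
        # Scan the middle row over the allowed columns; min() keeps the leftmost tie.
        best = min(range(c_lo, c_hi + 1), key=lambda c: f(mid, c))
        argmin[mid] = best
        solve(r_lo, mid - 1, c_lo, best)   # rows above: minima at or left of best
        solve(mid + 1, r_hi, best, c_hi)   # rows below: minima at or right of best

    solve(0, n_rows - 1, 0, n_cols - 1)
    return argmin

# Toy convex Monge matrix: (x_i - y_j)^2 with x and y sorted increasingly.
x = [1, 4, 6, 9, 12]
y = [0, 3, 5, 8, 10, 13]
f = lambda i, j: (x[i] - y[j]) ** 2
argmins = monge_row_argmins(f, len(x), len(y))
```

Column minima follow by applying the same routine to the transposed matrix, and in the concave case one can negate the entries and search for maxima, as described in the proof above.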

REFERENCES

Aggarwal, A. and Klawe, M. 1990. Applications of generalized matrix searching to geometric algorithms. Discrete Appl.

Math. 27, 1-2, 3–23.

Aggarwal, A., Klawe, M. M., Moran, S., Shor, P., and Wilber, R. 1987. Geometric applications of a matrix-searching

algorithm. Algorithmica 2, 1, 195–208.

Cox, I., Rao, S., and Zhong, Y. 1996. Ratio regions: A technique for image segmentation. Int. Conf. Pattern Recog. 02, 557.

Emek, Y., Peleg, D., and Roditty, L. 2008. A near-linear time algorithm for computing replacement paths in planar

directed graphs. In Proceedings of the 19th Annual ACM-SIAM Symposium on Discrete Algorithms. Society for Industrial

and Applied Mathematics, Philadelphia, PA, USA, 428–435.

Fakcharoenphol, J. and Rao, S. 2006. Planar graphs, negative weight edges, shortest paths, and near linear time. J. Comput.

Syst. Sci. 72, 5, 868–889.

Fredman, M. L. and Tarjan, R. E. 1987. Fibonacci heaps and their uses in improved network optimization algorithms. J.

ACM 34, 3, 596–615.

Gabow, H. N. and Tarjan, R. E. 1989. Faster scaling algorithms for network problems. SIAM J. Comput. 18, 5, 1013–1036.

Goldberg, A. V. 1995. Scaling algorithms for the shortest paths problem. SIAM J. Comput. 24, 3, 494–504.

Henzinger, M. R., Klein, P. N., Rao, S., and Subramanian, S. 1997. Faster shortest-path algorithms for planar graphs. J.

Comput. Syst. Sci. 55, 1, 3–23.

Ishikawa, H. and Jermyn, I. 2001. Region extraction from multiple images. In 8th IEEE International Conference on

Computer Vision. IEEE Computer Society, Los Alamitos, CA, USA, 509–516.

Jermyn, I. H. and Ishikawa, H. 2001. Globally optimal regions and boundaries as minimum ratio weight cycles. IEEE Trans.

Pattern Anal. Mach. Intell. 23, 10, 1075–1088.

Johnson, D. B. 1977. Efficient algorithms for shortest paths in sparse graphs. J. ACM 24, 1–13.

Klawe, M. M. and Kleitman, D. J. 1990. An almost linear time algorithm for generalized matrix searching. SIAM J. Discret.

Math. 3, 1, 81–97.

Klein, P. N. 2005. Multiple-source shortest paths in planar graphs. In Proceedings of the 16th Annual ACM-SIAM Symposium

on Discrete Algorithms. Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 146–155.

Lipton, R. J., Rose, D. J., and Tarjan, R. E. 1979. Generalized nested dissection. SIAM J. Num. Anal. 16, 346–358.

Lipton, R. J. and Tarjan, R. E. 1979. A separator theorem for planar graphs. SIAM J. Appl. Math. 36, 2, 177–189.

Miller, G. L. 1986. Finding small simple cycle separators for 2-connected planar graphs. J. Comput. Syst. Sci. 32, 3, 265–279.

Miller, G. L. and Naor, J. 1995. Flow in planar graphs with multiple sources and sinks. SIAM J. Comput. 24, 5, 1002–1017.

Monge, G. 1781. Mémoire sur la théorie des déblais et des remblais. Mém. Math. Phys. Acad. Roy. Sci. Paris, 666–704.

Veksler, O. 2002. Stereo correspondence with compact windows via minimum ratio cycle. IEEE Trans. Pattern Anal. Mach.

Intell. 24, 12, 1654–1660.

Received November 2008; revised March 2009; accepted Month Year
