PowerPoint

... With respect to their internal structure: e.g., at the core of a noun phrase is a noun. With respect to other constituents: e.g., noun phrases generally ...

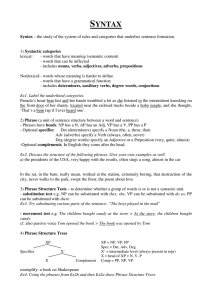

SYNTAX

... Phrase Structure Trees show that a sentence is both a linear string of words and a hierarchical structure with phrases nested in phrases. They show three aspects of speakers’ syntactic knowledge: a. the linear order of the words in the sentence; b. the groupings of words into syntactic categories; c. t ...

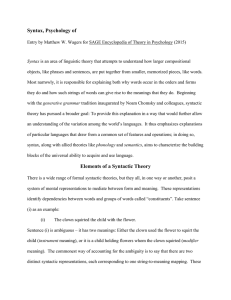

Syntax, Psychology of

... speakers track head-complement combinations closely, they don’t seem to pay as close attention to adjunct combinations. The theory of Construction Grammar illustrates a more-drastic claim about the lexicalization of syntactic processes: Syntactic generalizations are held to be more piecewise than in ...

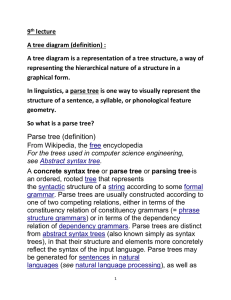

9th lecture A tree diagram (definition) : A tree diagram is a

... see X-bar theory. The parse tree is the entire structure, starting from S and ending in each of the leaf nodes (John, hit, the, ball). The following abbreviations are used in the tree: S for sentence, the top-level structure in this example; NP for noun phrase. The first (leftmost) NP, a single nou ...

University of Prince Salman Ibn Abdelaziz

... Deep & Surface Structures. Two levels of description: surface and deep. Charlie broke the window (active). The window was broken by Charlie (passive). ...

Composing Music with Grammars

... Allows for infinite strings and null strings. Context-sensitive (type 1) – A α B → A β B: α produces β in the context of A and B. α → Ø is forbidden. Context-free (type 2) – very useful with regard to natural language and programming. Good for representing multi-leveled syntactic formations ...

Konsep dalam Teori Otomata dan Pembuktian Formal

... It doesn’t work very well (or tell us much) with various languages: so-called free word order or scrambling languages; languages where the morphology does more of the work ...

LSA.303 Introduction to Computational Linguistics

... For example, it makes sense to say that the following are all noun phrases in English... ...

Lexicalising a robust parser grammar using the WWW

... 1996, Grefenstette 1996, Collins 1996, Aït-Mokhtar and Chanod 1997, Charniak 2000). These parsers assign linguistic structures to text sentences and are used with some success in various applications, such as information extraction, question answering systems, word sense disambiguation, etc. Most of ...

restarting automata: motivations and applications

... M can be viewed as a recognizer and as a (regulated) reduction system, as well. A reduction by M is defined through the rewriting operations performed during one individual cycle by M, in which M distinguishes two alphabets: the input alphabet and the working alphabet. For any input word w, M induces ...

Prague Dependency Treebank 1.0 Functional Generative Description

... - Preposition or a part of compound preposition - Subordinate conjunction - (Superfluously) referring particle or emotional particle - Rhematizer or another node acting to another constituent AuxX - Comma, but not the main coordinating comma AuxG - Other graphical symbols being not classified as Aux ...

Parts of Speech

... words expressed within the same syntactic rule. A non-local dependency is an instance in which two words can be syntactically dependent even though they occur far apart in a sentence (e.g., subject-verb agreement; long-distance dependencies such as wh-extraction). Non-local phenomena are a challenge ...

Dependency grammar

Dependency grammar (DG) is a class of modern syntactic theories that are all based on the dependency relation (as opposed to the constituency relation) and that can be traced back primarily to the work of Lucien Tesnière. Dependency is the notion that linguistic units, e.g. words, are connected to each other by directed links. The (finite) verb is taken to be the structural center of clause structure. All other syntactic units (words) are either directly or indirectly connected to the verb in terms of the directed links, which are called dependencies. DGs are distinct from phrase structure grammars (constituency grammars), since DGs lack phrasal nodes - although they acknowledge phrases. Structure is determined by the relation between a word (a head) and its dependents. Dependency structures are flatter than constituency structures in part because they lack a finite verb phrase constituent, and they are thus well suited for the analysis of languages with free word order, such as Czech, Turkish, and Warlpiri.
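The head-dependent relation described above can be sketched as a small data structure. This is only an illustrative sketch, not a standard treebank format: the `Word` class, the helper names, and the analysis of the sample sentence "John hit the ball" (finite verb as root, determiner depending on the noun) are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Word:
    """One word in a dependency structure (hypothetical representation)."""
    index: int            # position in the sentence (linear order)
    form: str             # the word itself
    head: Optional[int]   # index of the head word; None marks the root


# Assumed analysis: the finite verb "hit" is the structural center.
SENTENCE = [
    Word(0, "John", 1),   # John <- hit
    Word(1, "hit", None),  # root
    Word(2, "the", 3),    # the <- ball
    Word(3, "ball", 1),   # ball <- hit
]


def find_root(words: List[Word]) -> Word:
    """Return the single word that has no head."""
    roots = [w for w in words if w.head is None]
    if len(roots) != 1:
        raise ValueError("expected exactly one root")
    return roots[0]


def dependents(words: List[Word]) -> Dict[int, List[int]]:
    """Map each word index to the indices of its direct dependents."""
    table: Dict[int, List[int]] = {w.index: [] for w in words}
    for w in words:
        if w.head is not None:
            table[w.head].append(w.index)
    return table


def path_to_root(words: List[Word], index: int) -> List[str]:
    """Follow head links from a word up to the root, collecting word forms."""
    by_index = {w.index: w for w in words}
    current = by_index[index]
    seen = {index}
    path = [current.form]
    while current.head is not None:
        if current.head in seen:
            raise ValueError("cycle in dependency links")
        seen.add(current.head)
        current = by_index[current.head]
        path.append(current.form)
    return path


if __name__ == "__main__":
    print(find_root(SENTENCE).form)
    print(dependents(SENTENCE))
    print(path_to_root(SENTENCE, 2))
```

Note that the structure contains only words and directed links: there are no phrasal nodes such as NP or VP, and every word reaches the verb directly or through a chain of heads.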