1 1 1 1 1 1 1 1 1 1 1 0 0 1 1 1 1 1 1 1 1 1 1 1 1 0 0 0 0 0 0

... When a test record is presented to the classifier – It is assigned to the class label of the highest ranked rule it has ...

... When a test record is presented to the classifier – It is assigned to the class label of the highest ranked rule it has ...

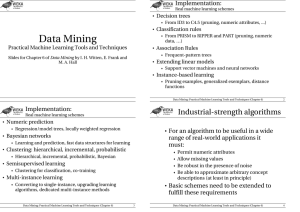

chap5_alternative_classification

... When a test record is presented to the classifier – It is assigned to the class label of the highest ranked rule it has ...

... When a test record is presented to the classifier – It is assigned to the class label of the highest ranked rule it has ...

Studies on Computational Learning via

... This thesis presents cutting-edge studies on computational learning. The key issue throughout the thesis is amalgamation of two processes; discretization of continuous objects and learning from such objects provided by data. Machine learning, or data mining and knowledge discovery, has been rapidly ...

... This thesis presents cutting-edge studies on computational learning. The key issue throughout the thesis is amalgamation of two processes; discretization of continuous objects and learning from such objects provided by data. Machine learning, or data mining and knowledge discovery, has been rapidly ...

Application Of Data Mining Technology To Support Fraud Protection

... 5.3.3 Comparison of J48 Decision Tree and Naïve Bayes Models .................................................................. 83 5.4 Evaluation of the Discovered Knowledge ..................................................................................................... 84 CHAPTER SIX ......... ...

... 5.3.3 Comparison of J48 Decision Tree and Naïve Bayes Models .................................................................. 83 5.4 Evaluation of the Discovered Knowledge ..................................................................................................... 84 CHAPTER SIX ......... ...

MODEL-BASED OUTLIER DETECTION FOR OBJECT

... data. Object-relational data can be viewed as a heterogeneous network with different classes of objects and links. We develop two new approaches to unsupervised outlier detection; both approaches leverage the statistical information obtained from a statistical-relational model. The first method deve ...

... data. Object-relational data can be viewed as a heterogeneous network with different classes of objects and links. We develop two new approaches to unsupervised outlier detection; both approaches leverage the statistical information obtained from a statistical-relational model. The first method deve ...

Chapter 6 A SURVEY OF TEXT CLASSIFICATION

... pLSA and LDA (which is a Bayesian version of pLSA) in terms of their effectiveness in transforming features for text categorization and drawn a similar conclusion and found that pLSA and LDA tend to perform similarly. A number of techniques have also been proposed to perform the feature transformatio ...

... pLSA and LDA (which is a Bayesian version of pLSA) in terms of their effectiveness in transforming features for text categorization and drawn a similar conclusion and found that pLSA and LDA tend to perform similarly. A number of techniques have also been proposed to perform the feature transformatio ...

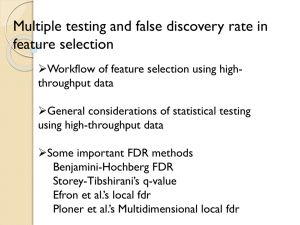

7. Multiple Testing

... Gene/protein/metabolite expression data The simplest strategy: Assume each gene is independent from others. Perform testing between treatment groups for every gene. Select those that are significant. When we do 50,000 t-tests, if the alpha level of 0.05 is used, we expect ~50,000x0.05 = 2,500 false ...

... Gene/protein/metabolite expression data The simplest strategy: Assume each gene is independent from others. Perform testing between treatment groups for every gene. Select those that are significant. When we do 50,000 t-tests, if the alpha level of 0.05 is used, we expect ~50,000x0.05 = 2,500 false ...

DEVELOPING INTELLIGENT SYSTEMS FOR

... like to be referred to as dr. Mues) for helping me to overcome the many acute outbursts of doctoratitis that tortured me during my period as a PhD researcher. Also, the expertise he passed on to me with respect to the basic principles of office soccer and thesis throwing, and the insights gained fro ...

... like to be referred to as dr. Mues) for helping me to overcome the many acute outbursts of doctoratitis that tortured me during my period as a PhD researcher. Also, the expertise he passed on to me with respect to the basic principles of office soccer and thesis throwing, and the insights gained fro ...

TOWARD ACCURATE AND EFFICIENT OUTLIER DETECTION IN

... Advances in computing have led to the generation and storage of extremely large amounts of data every day. Data mining is the process of discovering relationships within data. The identified relationships can be used for scientific discovery, business decision making, or data profiling. Among data m ...

... Advances in computing have led to the generation and storage of extremely large amounts of data every day. Data mining is the process of discovering relationships within data. The identified relationships can be used for scientific discovery, business decision making, or data profiling. Among data m ...

Hierarchical density estimates for data clustering

... contrast, works such as [Fukunaga and Hostetler 1975; Coomans and Massart 1981] make the oversimplistic assumption that each mode of the density f corresponds to a cluster and, then, they simply seek to assign every data object to one of these modes (according to some hill-climbing-like heuristic). ...

... contrast, works such as [Fukunaga and Hostetler 1975; Coomans and Massart 1981] make the oversimplistic assumption that each mode of the density f corresponds to a cluster and, then, they simply seek to assign every data object to one of these modes (according to some hill-climbing-like heuristic). ...

K-nearest neighbors algorithm

In pattern recognition, the k-Nearest Neighbors algorithm (or k-NN for short) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression: In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, then the object is simply assigned to the class of that single nearest neighbor. In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.Both for classification and regression, it can be useful to assign weight to the contributions of the neighbors, so that the nearer neighbors contribute more to the average than the more distant ones. For example, a common weighting scheme consists in giving each neighbor a weight of 1/d, where d is the distance to the neighbor.The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm has nothing to do with and is not to be confused with k-means, another popular machine learning technique.