CAP 4770 Introduction to Data Mining and Machine Intelligence

... Reference materials: Research papers which will be distributed in the class Specific course information: Catalog description: This course deals with the principles of data mining. Topics include machine learning methods, knowledge discovery and representation, clustering, classification and predicti ...

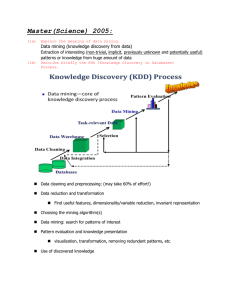

Master(Science) 2005

... characterization and clustering? Between classification and prediction? For each of these pairs of tasks, how are they similar? 4c . ...

Eman B. A. Nashnush

... network, this algorithm has been widely used in real-world applications like medical diagnosis, image recognition, fraud detection, and inference problems. In all of these applications, an evaluation measure such as accuracy is not enough because there are costs involved in each decision. For example, in a frau ...

Classification Under the Relevant Set Correlation Model

... Supervisor: Michael HOULE, Visiting Professor One of the most well-known classification methods in machine learning is that of k-nearest-neighbor (k-NN) classification, a voting strategy in which each object is assigned to the class most common among its k closest neighbors within a training set of e ...

Fast Clustering and Classification using P

... new improvements are described. All algorithms are fundamentally based on kernel-density estimates that can be seen as a unifying concept for much of the work done in classification and clustering. The two classification algorithms in this thesis differ in their approach to handling data with many at ...

CPSC445/545 Introduction to Data Mining Spring 2008

... Compute the centroids of the coordinates of the points labeled 0 and the points labeled 1. Given a new couple (point) to be classified, choose the class whose centroid is closest in the Euclidean sense. Using the entire training set, plot the points and their respective centroids. (c) Divide the tra ...

Abstract - Compassion Software Solutions

... Data Mining has wide applications in many areas such as banking, medicine, scientific research and among government agencies. Classification is one of the commonly used tasks in data mining applications. For the past decade, due to the rise of various privacy issues, many theoretical and practical s ...

Machine Learning

... An abundance of learning algorithms But no guidelines to select a learning algorithm according to the characteristics of the data ...

2.10 Random Forests for Scientific Discovery

... The Data Avalanche We can gather and store larger amounts of data than ever before: Satellite data Web data EPOS Microarrays etc Text mining and image recognition. Who is trying to extract meaningful information from these data? Academic statisticians Machine learning specialists ...

Gene Codes introduces CodeLinker

... analysis and visualization tools for the exploration of gene expression and RNA-seq data. Getting started is easy because CodeLinker supports over 20 different import file types and has excellent data normalization and filtering tools. Once you’ve imported, filtered, and normalized you have numerous ...

Stat 202: Data Mining Professor: Art Owen

... selection of topics, such as: Association rules, Clustering, Decision Trees, Neural networks, and Nearest Neighbors. ...

K-nearest neighbors algorithm

In pattern recognition, the k-Nearest Neighbors algorithm (or k-NN for short) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression.

In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, then the object is simply assigned to the class of that single nearest neighbor.

In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.

k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.

Both for classification and regression, it can be useful to assign weight to the contributions of the neighbors, so that the nearer neighbors contribute more to the average than the more distant ones. For example, a common weighting scheme consists in giving each neighbor a weight of 1/d, where d is the distance to the neighbor.

The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.

A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm has nothing to do with and is not to be confused with k-means, another popular machine learning technique.
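The description above can be illustrated with a minimal Python sketch of k-NN classification and regression, with optional 1/d weighting. It is only an illustration under stated assumptions: the function names (`k_nearest`, `knn_classify`, `knn_regress`), the use of Euclidean distance, the brute-force search, and the small `EPS` constant that avoids division by zero for exact matches are all choices made here and are not prescribed by the text.

```python
import math
from collections import defaultdict

EPS = 1e-12  # assumption: guards against division by zero when d == 0


def k_nearest(train, query, k):
    """Return the k (distance, target) pairs closest to `query`.

    `train` is a list of (feature_tuple, target) pairs. Euclidean
    distance is an assumption; any metric could be substituted.
    """
    pairs = [(math.dist(x, query), y) for x, y in train]
    pairs.sort(key=lambda p: p[0])
    return pairs[:k]


def _weight(d, weighted):
    """Weight of a neighbor at distance d: 1/d if weighted, else 1."""
    return 1.0 / (d + EPS) if weighted else 1.0


def knn_classify(train, query, k, weighted=False):
    """Majority (or distance-weighted) vote among the k nearest neighbors."""
    votes = defaultdict(float)
    for d, label in k_nearest(train, query, k):
        votes[label] += _weight(d, weighted)
    return max(votes, key=votes.get)


def knn_regress(train, query, k, weighted=False):
    """Plain or distance-weighted average of the k nearest target values."""
    neighbors = k_nearest(train, query, k)
    total = sum(_weight(d, weighted) for d, _ in neighbors)
    return sum(_weight(d, weighted) * y for d, y in neighbors) / total


if __name__ == "__main__":
    # Toy data, invented for illustration only.
    labeled = [((1.0, 1.0), "a"), ((1.0, 2.0), "a"), ((2.0, 1.0), "a"),
               ((5.0, 5.0), "b"), ((6.0, 5.0), "b")]
    print(knn_classify(labeled, (1.5, 1.5), k=3))

    valued = [((1.0,), 10.0), ((2.0,), 20.0), ((4.0,), 40.0)]
    print(knn_regress(valued, (3.5,), k=2))
    print(knn_regress(valued, (3.5,), k=2, weighted=True))
```

There is no training step in this sketch: the labeled examples are simply stored, and all distance computation happens at query time, which is the lazy-learning behavior described above. With weighting enabled, the closer of the two neighbors in the regression example pulls the estimate toward its own value.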