... M bits. If any M-bit classifier has error more than 2\epsilon fraction of points of the distribution, then the probability it has error only \epsilon fraction of training set is < 2^{-M}. Hence ...

Document

... by analyzing or learning from a training set made up of database tuples and their associated class labels or values. (supervised learning) – For classification, the classifier is used to classify the test data. Then the classification accuracy is calculated to estimate the classifier. – For predicti ...

Temporal Data Mining. Vera Shalaeva Université Grenoble Alpes

... time series classification. All methods in temporal classification can be divided in the following groups: feature based classification, where first some features are extracted from time series on which conventional classification methods can be applied next; distance based classification. These ...

CPSC445/545 Introduction to Data Mining Spring 2008

... visualize the distribution of the points of each Group. 2. Partition the data into training and classification sets with 600 and 400 observations respectively. How is this best done in an unbiased way? 3. Using R or Weka, compare the performance of: o Logistic Regression o Support Vector Machine (SV ...

Homework 5

... and target). Assign each attribute to either nominal or numeric type. b) Select the first 5000 data points from the data set (it will allow you to perform more experiments). Reformat the data to WEKA format. Run 5-fold cross validation classification experiments using the following algorithms (you c ...

International Journal of Science, Engineering and Technology

... classifier highly vulnerable to noise in the data. The best choice of k depends upon the data; generally, larger values of k reduce the effect of noise on the classification, but make boundaries between classes less distinct. A good k can be selected by various heuristic techniques. In binary (two c ...

1. the technique used for both the preliminary investigation of the

... 1.________________is the technique used for both the preliminary investigation of the data and the final data analysis. ...

Spring 2013 Statistics 702: Data Mining Statistical Methods

... 1. All assignments and solutions (for close-ended problems) will be posted on blackboard. There will be one group project. 2. There will be two in-class exams on Tuesday, March 5 and Thursday, April 18. 3. There will be a final project (in lieu of a final exam) so that you can apply the data mining ...

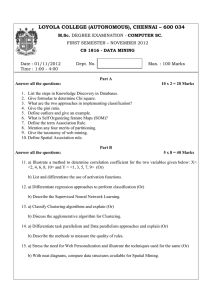

CS 1816 - Loyola College

... 3. What are the two approaches in implementing classification? 4. Give the gini ratio. 5. Define outliers and give an example. 6. What is Self Organizing feature Maps (SOM)? 7. Define the term Association Rule. 8. Mention any four merits of partitioning. 9. Give the taxonomy of web mining. 10. Defin ...

Data Mining Lab

... 2. Proficient in generating association rules. 3. Able to build various classification models and Realise clusters from the available data. Prerequisites: Database systems. List of Programs 1. Basics of WEKA tool a. Investigate the Application interfaces. b. Explore the default datasets. 2. Pre-proc ...

Time Series Classification Challenge Experiments

... In [4] we introduced the notion of time series discord. Simply stated, given a dataset, a discord is the object that has the largest nearest neighbor distance, compared to all other objects in the dataset. Similarly, k-discords are the k objects that have the largest nearest neighbor distances. Disc ...

K-nearest neighbors algorithm

In pattern recognition, the k-Nearest Neighbors algorithm (k-NN for short) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression.

In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, then the object is simply assigned to the class of that single nearest neighbor.

In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.

k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.

For both classification and regression, it can be useful to assign weights to the contributions of the neighbors, so that nearer neighbors contribute more to the average than more distant ones. For example, a common weighting scheme gives each neighbor a weight of 1/d, where d is the distance to the neighbor.

The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.

A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm is unrelated to, and should not be confused with, k-means, another popular machine learning technique.
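The majority vote, the neighbor average, and the 1/d weighting described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names (`k_nearest`, `knn_classify`, `knn_regress`), the choice of Euclidean distance, and the small constant `EPS` that guards against division by zero are all assumptions made for the example.

```python
import math
from collections import defaultdict

# Assumption: a tiny constant so that a neighbor at distance 0 does not
# cause a division by zero under 1/d weighting.
EPS = 1e-12


def k_nearest(train, query, k):
    """Return the k (distance, target) pairs closest to `query`.

    `train` is a list of (point, target) pairs; points are tuples of floats.
    Euclidean distance is an assumption; any metric could be substituted.
    """
    dists = [(math.dist(point, query), target) for point, target in train]
    dists.sort(key=lambda pair: pair[0])
    return dists[:k]


def knn_classify(train, query, k=3, weighted=False):
    """Assign `query` to the class with the most (optionally 1/d-weighted) votes."""
    votes = defaultdict(float)
    for d, label in k_nearest(train, query, k):
        votes[label] += 1.0 / (d + EPS) if weighted else 1.0
    # Ties are broken in favor of the class whose first vote came from the nearer neighbor.
    return max(votes, key=votes.get)


def knn_regress(train, query, k=3, weighted=False):
    """Return the (optionally 1/d-weighted) average target of the k nearest neighbors."""
    neighbors = k_nearest(train, query, k)
    weights = [1.0 / (d + EPS) if weighted else 1.0 for d, _ in neighbors]
    total = sum(w * target for w, (_, target) in zip(weights, neighbors))
    return total / sum(weights)


if __name__ == "__main__":
    # Hypothetical toy data: two well-separated clusters labeled "a" and "b".
    labeled = [((1.0, 1.0), "a"), ((1.2, 0.8), "a"),
               ((4.0, 4.0), "b"), ((4.2, 3.9), "b"), ((3.8, 4.1), "b")]
    print(knn_classify(labeled, (1.1, 1.0), k=3))
    print(knn_classify(labeled, (1.1, 1.0), k=3, weighted=True))
```

Note that there is no training step: the labeled set is stored as-is and all distance computation happens at query time, which is what makes k-NN a lazy learner.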