7_Mini

... o From remaining features, select the one that provides most information gain o Continue until gain below some threshold Many Mini Topics ...
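The greedy procedure named in this snippet can be sketched as follows. This is a minimal illustration, not the lecture's own code: it assumes categorical features, measures gain as the drop in conditional entropy of the label given the features selected so far, and uses a hypothetical `threshold` default of 0.01.

```python
from collections import Counter, defaultdict
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def conditional_entropy(rows, labels, features):
    """Entropy of the labels given the joint value of the chosen feature indices."""
    groups = defaultdict(list)
    for row, y in zip(rows, labels):
        groups[tuple(row[f] for f in features)].append(y)
    n = len(labels)
    return sum(len(g) / n * entropy(g) for g in groups.values())


def greedy_select(rows, labels, threshold=0.01):
    """Repeatedly add the remaining feature with the largest information gain.

    Stops when the best available gain falls below `threshold`
    (an assumed default, not a value from the source).
    """
    remaining = set(range(len(rows[0])))
    selected = []
    current = entropy(labels)  # uncertainty left before any feature is used
    while remaining:
        gains = {
            f: current - conditional_entropy(rows, labels, selected + [f])
            for f in sorted(remaining)
        }
        best = max(gains, key=gains.get)
        if gains[best] < threshold:
            break
        selected.append(best)
        remaining.remove(best)
        current -= gains[best]
    return selected


# Toy data: feature 0 determines the label, features 1 and 2 carry no information.
ROWS = [(0, 0, 1), (0, 1, 0), (1, 0, 1), (1, 1, 0)]
LABELS = [0, 0, 1, 1]
print(greedy_select(ROWS, LABELS))
```

On the toy data only feature 0 is selected, because once it is chosen the remaining features add no further gain.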

6340 Lecture on Object-Similarity and Clustering

... Motivation: Why Clustering? Problem: Identify (a small number of) groups of similar objects in a given (large) set of objects. ...

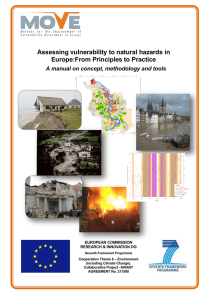

Assessing vulnerability to natural hazards in Europe: From Principles

... 2. Generic conceptual framework for a vulnerability assessment 2.1. Vulnerability Assessments. What is known? What is missing? What are the needs? 2.2. Need for a holistic approach ...

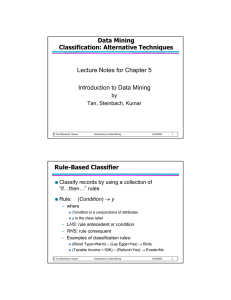

Data Mining Classification: Alternative Techniques

... – If k is too small, sensitive to noise points – If k is too large, neighborhood may include points from other classes ...
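The trade-off in this snippet can be seen on a small hand-made example. The sketch below is illustrative only: the points, labels, and the `knn_predict` helper are assumptions made for the demonstration, and the classifier is a plain majority-vote k-nearest-neighbour rule with squared Euclidean distance.

```python
from collections import Counter


def knn_predict(train, query, k):
    """Majority vote among the k training points closest to `query`."""
    def sq_dist(item):
        (x, y), _ = item
        return (x - query[0]) ** 2 + (y - query[1]) ** 2

    nearest = sorted(train, key=sq_dist)[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical data: a tight class-A cluster containing one mislabelled
# noise point, and a larger class-B cluster far away.
TRAIN = [
    ((0, 0), "A"), ((0, 1), "A"), ((1, 0), "A"), ((1, 1), "A"),
    ((0.4, 0.4), "B"),  # noise point inside the A cluster
    ((5, 5), "B"), ((5, 6), "B"), ((6, 5), "B"),
    ((6, 6), "B"), ((6, 7), "B"), ((7, 6), "B"),
]
QUERY = (0.5, 0.5)

for k in (1, 3, len(TRAIN)):
    print(k, knn_predict(TRAIN, QUERY, k))
```

With k = 1 the single noise point decides the vote, with k = 3 the surrounding A points outvote it, and with k equal to the whole training set the distant B cluster dominates.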

chap5_alternative_classification

... – If k is too small, sensitive to noise points – If k is too large, neighborhood may include points from other classes ...

Rule-Based Classifier

... – If k is too small, sensitive to noise points – If k is too large, neighborhood may include points from other classes ...

Intelligent Miner for Data: Enhance Your

... Intelligent Miner for Data: Enhance Your Business Intelligence ...

PDF

... Using Classification Trees The primary data mining technique used in these analyses was the classification or decision tree. In this type of analysis, the full data set is first randomly partitioned into three subsets. These subsets are termed the training, validation, and test sets. In this analysi ...
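A random three-way partition of the kind described here might look like the sketch below. The 60/20/20 proportions, the fixed seed, and the function name `three_way_split` are assumptions for illustration; the source does not state the proportions it used.

```python
import random


def three_way_split(records, train_frac=0.6, val_frac=0.2, seed=0):
    """Randomly partition records into training, validation, and test sets.

    The fractions are assumed defaults; whatever is left after the
    training and validation sets goes to the test set.
    """
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    validation = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, validation, test


train, validation, test = three_way_split(range(100))
print(len(train), len(validation), len(test))
```

The three subsets are disjoint and together cover the full data set, so the tree can be grown on the training set, tuned on the validation set, and assessed on the untouched test set.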

Characterizing Pattern Preserving Clustering - Hui Xiong

... Ding, Zhang, Kumar and Holbrook, 2005). If these patterns are not respected, then the value of a data analysis is greatly diminished for end users. If our interest is in patterns, such as hyperclique patterns, then we need a clustering approach that preserves these patterns, i.e., puts the objects o ...

Quality Awareness for Managing and Mining Data

... data quality depending on the considered dimension (e.g., a data source may provide very accurate data for a specific domain and low accuracy for another one, another source may offer fresher data but not as accurate as the latter one depending on the domain of interest), - When data interpretations ...

Chapter 5

... – If k is too small, sensitive to noise points – If k is too large, neighborhood may include points from other classes ...

Data Warehousing and Data Mining

... The advantage of using the Bottom Up approach is that they do not require high initial costs and have a faster implementation time; Bottom up approach is more realistic but the complexity of the integration may become a serious obstacle. ...

Problems and Algorithms for Sequence

... aggregation problem that we study in Chapter 6. A preliminary version of this study has appeared in [MTT06]. This problem takes as input many different partitions of the same sequence. For example, different segmentation algorithms produce different partitions of the same underlying data points. In ...

Information-theoretic graph mining - PuSH

... An important paradigm of data mining used in this thesis is the principle of Minimum Description Length (MDL). It follows the assumption: the more knowledge we have learned from the data, the better we are able to compress the data. The MDL principle balances the complexity of the selected model and ...

Integrating Data Mining with Relational DBMS: A Tightly

... analysis. There are obvious advantages in integrating a database system in this process. In addition to such controlling parameters as support and confidence levels, the user has an additional degree of freedom in the choice of the data set to be analyzed, which can be generated as the result of a qu ...

... data streams and for the extraction of closed frequent trees. First, we deal with each of these tasks separately, and then we deal with them together, developing classification methods for data streams containing items that are trees. In the data stream model, data arrive at high speed, and the algo ...

Nonlinear dimensionality reduction

High-dimensional data, meaning data that requires more than two or three dimensions to represent, can be difficult to interpret. One approach to simplification is to assume that the data of interest lie on an embedded non-linear manifold within the higher-dimensional space. If the manifold is of low enough dimension, the data can be visualised in the low-dimensional space.

Below is a summary of some of the important algorithms from the history of manifold learning and nonlinear dimensionality reduction (NLDR). Many of these non-linear dimensionality reduction methods are related to the linear methods listed below. Non-linear methods can be broadly classified into two groups: those that provide a mapping (either from the high-dimensional space to the low-dimensional embedding or vice versa), and those that just give a visualisation. In the context of machine learning, mapping methods may be viewed as a preliminary feature extraction step, after which pattern recognition algorithms are applied. Typically, those that just give a visualisation are based on proximity data, that is, distance measurements.