* Your assessment is very important for improving the workof artificial intelligence, which forms the content of this project

Download Properties of Random Variables

Survey

Document related concepts

Transcript

Chapter 1

Properties of Random

Variables

Random variables are encountered in every discipline in science. In this

chapter we discuss how we may describe the properties random variables,

in particular by using probability distributions, as well as defining the mean

and the standard deviation of random variables. Since random variables

are encountered throughout chemistry and the other natural sciences, this

chapter is rather broad in scope. We do, however, introduce one particular

type of random variable, the normally-distributed random variable. One

of the most important skills you will need to obtain in this chapter is the

ability to use tables of the standard normal cumulative probabilities to solve

problems involving normally-distributed variables. A number of numerical

examples in the chapter will illustrate how to do so.

Chapter topics:

1. Random variables

2. Probability distributions

(especially the normal

distribution)

3. Measures of location and

dispersion

1.1 A First Look at Random Variables

In studying statistics, we are concerned with experiments in which the outcome is subject to some element of chance; these are statistical experiments.

Classical statistical experiments include coin flipping or drawing cards at

random from a deck. Let’s consider a specific experiment: we throw two

dice and add the numbers displayed. The list of possible outcomes of this

would be {2, 3, . . . , 12}. This list comprises the domain of the experiment.

The domain of a variable is defined simply as the list of all possible values

that the variable may assume.

The domain will depend on exactly how a variable is defined. For example, in our dice experiment, we might be interested in whether or not the

sum is odd or even; the domain will then be {even, odd}. Let’s consider a

different experiment: tossing a coin two times. We can think of the domain

as {HH, HT, TH, TT}, where H = heads and T = tails. Alternatively, we can

focus on the total number of heads after the two tosses, in which case the

domain is {0, 1, 2}. In all experiments, the domain associated with the

experiment will contain all the outcomes that are possible, no matter how

unlikely.

Chemical measurements using some instrument are also statistical measurements, with an associated domain. The domain of these measurements

will be all the possible values that can be assumed by the measurement device.

1

The domain contains all the

possible values of a variable

2

1. Properties of Random Variables

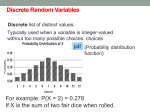

discrete values

continuous values

Figure 1.1: Difference between discrete and continuous random variables. A discrete variable can only assume certain values (e.g., the hash-marks on the number

line), whereas a continuous variable can assume any value within the range of all

possible values.

Random variables are variables

that cannot be predicted with

complete certainty

The outcome of an experiment will vary: in other words, the outcome

is a variable. Furthermore, the outcome of a vast majority of the experiments in science will contain a random component, so that the outcome

is not completely predictable. These types of variables are called random

variables. Since random variables cannot be predicted exactly, they must

be described in the language of probability. For example, we cannot say

for certain that the result of a single coin flip will be ‘heads,’ but we can

say that the probability is 0.5. Every outcome in the domain will have a

probability associated with it.

It is an advantage to be able to express experimental outcomes as numbers; such variables are quantitative random variables (as opposed to an

outcome such as ‘heads,’ which is a “qualitative” random variable). We

will be concerned exclusively with quantitative random variables, of which

there are two types: discrete and continuous variables.

The distinction between these variables is most easily understood by

using a few examples. If our experiment consists of rolling dice or surveying the number of children in households, then the random variable will

always be a whole number; these are discrete variables. A discrete variable

can only assume certain values within the range contained in the domain.

Unlike a discrete variable, a continuous variable is theoretically able to assume any value in an interval contained within its domain. If we wanted

to measure the height or weight of a group of people, then the resulting

values would be continuous variables.

If we think in terms of a number line, a discrete variable can only assume certain values on the line (for example, the values associated with

whole numbers) while continuous variables may assume any value on the

line. Figure 1.1 demonstrates this concept. The number line in the figure represents the entire domain for a variable. A discrete variable would

be constrained to assume only certain values within the interval, while a

continuous variable can assume any value on the number line. Within its

domain there are always an infinite number of possible values for a continuous variable. The number of discrete variables can be either finite or

infinite.

One final note: although the distinction between continuous and discrete variables is important in how we use probability to describe the possible outcomes, as a practical matter there is probably no such thing as a

truly continuous random variable in measurement science. This is because

any measuring device will limit the number of possible outcomes. For example, consider a digital analytical balance that has a range of 0–100 g and

1.2. Probability Distributions of Discrete Variables

3

displays the mass to the nearest 0.1 mg. There are 106 possible values in

this range — a large value, to be sure, but not infinitely large. For most purposes, however, we may treat this measurement as a continuous variable.

1.2 Probability Distributions of Discrete Variables

1.2.1 Introduction

Let’s briefly summarize what we have so far:

• a statistical experiment is one in which there is some element of

chance in the outcome;

• the outcome of the experiment is a random variable;

• the domain is a list (possibly infinite) of all possible outcomes of a

statistical experiment.

Now, although the domain gives us the possible outcomes of an experiment, we haven’t said anything about which of these are the most probable outcomes of the experiment. For example, if we wish to measure the

heights of all the students at the University of Richmond using a 30 ft. tape

measure, then the domain will consist of all the possible readings from

the tape, 0–30 ft. However, even though measurements of 6 in or 20 ft are

contained within the domain, the probability of observing these values is

vanishingly small.

A probability distribution describes the likelihood of the outcomes of

an experiment. Probability distributions are used to describe both discrete

and continuous random variables. Discrete distributions are a little easier

to understand, and so we will discuss them first.

Let’s consider a simple experiment: tossing a coin twice. Our random

variable will be the number of heads that are observed after two tosses.

Thus, the domain is {0 1 2}; no other outcomes are possible. Now, let’s

assign probabilities to each of these possible outcomes. The following table

lists the four possible outcomes along with the value of x associated with

each outcome.

Outcome

TT

TH

HT

HH

Random Variable (x)

0

1

1

2

If we assume that heads or tails is equally probable (probability of 0.5

for both), then each of the four outcomes is equally probable, with a probability of 0.25 each. It seems intuitive, then, that

P(x = 0) = 0.25

P(x = 1) = 0.5

P(x = 2) = 0.25

where P(x = x0 ) is the probability that the random variable x is equal to

the value x0 .

There! We have described the probability distribution of each possible

outcome of our experiment. The set of ordered pairs, [x, P(x = x0 )], where

Since random variables are

inherently unpredictable,

probability distributions must be

used to describe their properties

4

1. Properties of Random Variables

x is a random variable and P(x = x0 ) is the probability that x assumes any

one of the values in its domain, describe the probability distribution of the

random variable x for this experiment. Note that the sum of the probability

of all the outcomes in the domain equals one; this is a requirement for all

discrete probability distributions.

1.2.2 Examples of Discrete Distributions

The Binomial Distribution

A Bernoulli experiment consists

of a series of identical trials,

each of which has two possible

outcomes.

Coin-tossing experiments are an example of a general type of experiment

called a Bernoulli, or binomial, experiment. For example, a biologist may be

testing the effectiveness of a new drug in treating a disease. After infecting,

say, 30 rats, the scientist may then inject the drug into each rat and record

whether the drug is successful or not on a rat by rat basis. Each rat is a

“coin toss,” and the result is an either-or affair, just like heads-tails. Many

other experiments in all areas of science can be described in similar terms.

To generalize, a Bernoulli experiment has the following properties:

1. Each experiment consists of a number of identical trials (“coin flips”).

The random variable, x, is the total number of “successful” trials observed after all the trials are completed.

2. Each trial has only two possible results, “success” and “failure.” The

probability of success is p and the possibility of failure is q. Obviously, p + q = 1.

3. The probabilities p and q remain constant for all the trials in the

experiment.

In our simple coin-tossing example, we could deduce the probability

distribution of the experiment by simple inspection, but there is a more

general method. The probability distribution for any Bernoulli experiment

is given by the following function, p(x),

The binomial distribution

function describes the outcome

of Bernoulli experiments

p(x) =

n!

p x qn−x

x!(n − x)!

(1.1)

where n is the number of trials in the experiment, and n! is the factorial of

n. If we want to find the probability of a particular outcome P(x = x0 ), then

we must evaluate the binomial distribution functions at that value x0 .

Let’s imagine that, in our hypothetical drug-testing experiment (with

n = 30 rats), the probability of a successful drug treatment is p = 0.15.

Figure 1.2 shows the probability distribution of this experiment1 .

There are three common methods used to represent the probability distribution of a random variable:

1. As a table of values, where the probability every of possible outcome

in the domain is given. Obviously, this is only practical when the

number of possible outcomes is fairly small.

2. As a mathematical function. This is the most general format, but it

can be difficult to visualize. In some cases it may not be possible to

represent the probability distribution as a mathematical function.

1 Note that the binomial distribution becomes more difficult to calculate as the number of

trials, n, increases (due to the factorial terms involving n). There some other distribution

functions that can give reasonable approximations to the binomial function in such cases.

1.2. Probability Distributions of Discrete Variables

5

0.25

Probability, P(x)

0.20

0.15

0.10

0.05

0.00

0

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

Number of successes, x

Figure 1.2: Binomial Probability Distribution. A graphical depiction of the binomial

probability distribution as calculated from eqn. 1.1 with n = 30 and p = 0.15.

3. As a graphical plot. This is a common method to examine probability

distributions.

Figure 1.3 on page 6 describes the outcome of an experiment using a plot

and a table, both of which were constructed using equation 1.2.

The Poisson Distribution

Besides binomial experiments, counting experiments are also quite common

in science. Most often, we are interested in counting the number of occurrences of some event within some time interval or region in space. For

example, we might want to characterize a photon detection rate by counting the number of photons detected in a certain time interval, or we might

want to characterize the density of trees in a plot of land by counting the

number of trees that occur in a given acre. The random variable in any

counting experiment is a positive whole number, the number of “counts.”

It is often true that this discrete random variable follows a Poisson distribution.

Let’s say that we are counting alpha particles emitted by a sample of a

radioactive isotope at a rate of 2.5 counts/second. Our experimental measurement is thus “counts detected in one second” and the domain consists

of all positive whole numbers (and zero).

The Poisson probability distribution for this experiment can be determined from the following general formula:

p(x) =

e−λt (λt)x

x!

(1.2)

where λ is the average rate of occurrence of events, and t is the interval of

observation. Thus, for our experiment, the product λt = 2.5 events/second

× 1 second = 2.5 events. Let’s use this formula to calculate the probability

A counting experiment is an

experiment in which events or

objects are enumerated in a

given unit of time or space.

The Poisson distribution function

describes the outcome of many

counting experiments.

1. Properties of Random Variables

Probability

6

Measured alpha counts

Figure 1.3: The Poisson probability distribution, shown here as both a table and

a plot, describes the probability of observing alpha particle counts, as calculated

from eqn. 1.2 with λ = 2.5 counts/second and t = 1 second.

that we will measure 5 counts during one observation period:

P(x = 5) =

e−2.5 (2.5)5

= 0.0668

5!

Figure 1.3 shows the probabilities of measuring zero through 10 counts

during one measurement period for this experiment.

Just like the binomial distribution, the Poisson distribution of discrete

variables has two important properties:

• The probability is never negative: P(x = x0 ) ≥ 0

∞

• The sum of all probabilities is unity: i=0 P(x = xi ) = 1

These properties are shared by all discrete probability distributions.

Advanced Topic: The Boltzmann Distribution

Probability distributions are necessary in order to characterize the outcome of many experiments in science due to the presence of measurement

error, which introduces a random component to experimental measurements. However, probability distributions are also vital in understanding

the nature of matter on a more fundamental level. This is because many

properties of a system, when viewed at the atomic and molecular scale,

are actually random variables. There is an inherent “uncertainty” of matter

and energy that is apparent at small scales; this nature of the universe is

predicted by quantum mechanics. What this means is that we must again

resort to the language of probability (and probability distributions) in order

to describe such systems.

Let us consider the energy of a molecule, which is commonly considered to be partitioned as electronic, vibrational, and rotational energy. As

you should know from introductory chemistry, a molecule’s energy is quantized. In other words, the energy of a molecule is actually a discrete random

1.2. Probability Distributions of Discrete Variables

7

0.7

0.6

298 K

400 K

Probability

0.5

0.4

0.3

0.2

0.1

0.0

0

1

2

3

4

5

6

7

8

9

10

Vibrational quantum number

Figure 1.4: Probability distribution among vibrational energy levels of the I2

molecule at two different temperatures. The actual energy levels are given by

1

Eν = (ν + 2 ) · 214.6 cm−1 , where ν is the vibrational quantum number. The probability distribution assumes evenly spaced vibrational levels (i.e., the harmonic oscillator assumption). Notice that at higher temperatures, there is a greater probability

that a molecule will be in a higher energy level.

variable. The random nature of the energy is an innate property of matter

and not due to random error in any measuring process.

Since molecular energy is a random variable, it must be described by

a probability distribution. If a molecule is in thermal equilibrium with its

environment, the probability that the molecule has a particular energy at

any given time is described by the Boltzmann distribution:

e−βx

p(x) = βx

e

(1.3)

where β = (kT )−1 , T is the temperature in K, and the denominator is a summation over all the possible energy states of the molecule. If the different

states of a molecule have evenly spaced energy levels and no degeneracy,

then the Boltzmann distribution function can be simplified to

p(x) = e−βx 1 − e−β∆E

where ∆E is the separation between energy levels. Figure 1.4 shows the

probability distribution for the vibrational energy of an I2 molecule at two

different temperatures.

We can interpret the Boltzmann distribution in two ways, both of which

are useful:

• The Boltzmann distribution gives the probability distribution of the

energy of a single molecule at any given time. For example, if we measure the vibrational energy of an I2 molecule at 298 K, then according

The Boltzmann probability

distribution function describes

the energy of a molecule in

thermal equilibrium with its

surroundings.

8

1. Properties of Random Variables

to the Boltzmann distribution there is a 64.5% probability that the

molecule is in the ground vibrational level (ν = 0). If we wait for a

time (say 10 seconds), and then measure again, then there is a 22.9%

chance that the molecule has absorbed some heat and is now in the

first excited vibrational energy level (ν = 1). Of course, there is still a

64.5% chance that the molecule is in the ground state.

• The Boltzmann distribution gives the fractional distribution of molecular energy states in a chemical sample. Let’s imagine that we have a

sample of one million I2 molecules at 298K (which is not very many;

remember that one mole is about 1023 molecules). The Boltzmann distribution tells us that at any given time, about 645,000 molecules will

be in the ground vibrational energy level and about 229,000 molecules

will be in the first excited vibrational level. Molecules may be constantly gaining and losing vibrational energy, through collisions and

by absorbing/emitting infrared light, but since there are so many

molecules, the total number of molecules at each energy level will

remain fairly constant. For this reason, the energy probability density

function is sometimes called the Boltzmann distribution of states.

1.3 Important Characteristics of Random

Variables

Two important properties of

random variables are

location (‘central tendency’)

and dispersion (‘variability’).

We have discussed the idea of probability distributions, in particular the

distributions of discrete variables. We will proceed to continuous variables

momentarily, but first we will discuss two important properties by which

we may characterize random variables, irregardless of the probability distribution: location and the dispersion.

Let’s take stock of the situation thus far: for any random variable, the

domain gives all possible values of the variable and the probability distribution gives the likelihood of those values. Together these two pieces of

information provide a complete description of the properties of the random variable. Two important properties of a variable are contained in this

description are:

• Location: the central tendency of the variable, which describes a value

around which the variable tends to cluster, and

• Dispersion: the typical range of values that might be expected to be

observed in experiments. This gives some idea of the spread in values

that might result from our experiment(s).

The probability distribution contains all the information needed to determine these characteristics, as well as still more esoteric descriptors of

the properties of random variables. Since we have discussed the distributions of discrete variables, we will tend to use these in our discussions and

examples; however, the same concepts apply, with very little modification,

to continuous variables.

1.3.1 Central Tendency of a Random Variable

The central tendency, or location, of a variable can be indicated by any (or

all) of the following: the mode, the median, or the mean. Although most

1.3. Important Characteristics of Random Variables

9

people are familiar with means, the other two properties are actually easier

to understand.

Mode

The mode is the most probable value of a discrete variable. More generally,

it is the maximum of the probability distribution function: the value of

xmode such that

p(xmode ) = Pmax

probability

Multi-modal probability distributions have more than one mode — distributions with two modes are bimodal, and so on. Although multi-modal distributions may have several local maxima, there is usually a single global

maximum that is the single most probable value of the random variable.

In the example with the alpha particle measurements (see fig. 1.3), the

mode of the distribution — xmode = 2 — can be determined by glancing at

the bar graph of the Poisson distribution.

Multi-modal Distribution

value

Median

The median is only a little more complicated than the mode: it is the value

Q2 such that

P(x < Q2 ) = P(x > Q2 )

In other words, there is an equal probability of observing a value greater

than or less than the median.

The median is also the second quartile — hence the origin of the symbol

Q2 . Any distribution can be divided into four equal “pieces,” such that:

P(x < Q1 ) = P(Q1 < x < Q2 ) = P(Q2 < x < Q3 ) = P(x > Q3 )

The boundaries Q1 , Q2 (i.e., the median), and Q3 are the quartiles of the

probability distribution.

Mean

Before defining the mean, it is helpful to discuss a mathematical operation called the weighted sum. Most everybody performs weighted sums —

especially students calculating test averages or grade point averages! For

example, let’s imagine that a student has taken two tests and a final, scoring

85 and 80 points on the tests, and 75 points on the final. An “unweighted

average” of these three numbers is 80 points; however, the final is worth

(i.e., weighted) more than the tests. Suppose that the instructor feels that

the final is worth 60% of the test grade, while the other two tests are worth

20% each. The weighted sum would be calculated as follows.

Let w1 = w2 = 0.2, and w3 = 0.6

weighted score =

wi · scor e = w1 · 85 + w2 · 80 + w3 · 75 = 78

The final score, 78, is a weighted sum. Since the final is weighted more

than the test, the weighted sum is closer to the final score (75) than is the

unweighted average (80). Grade point averages are calculated on a similar

principle, where the weights for each grade are determined by the course

credit hours. To choose an example from chemistry, the atomic weights

A distribution is sometimes split

up ten ways, into deciles. The

median is the fifth decile, D5 .

10

1. Properties of Random Variables

listed in the periodic tables are weighted averages of isotope masses; the

weights are determined by the relative abundance of the isotopes.

In general, a weighted sum is represented by the expression

(1.4)

weighted sum =

wi xi

weights.

where xi are the individual values, and wi are the corresponding

When the sum of all the individual weights is one ( wi = 1) then the

weighted sum is often referred to as a weighted average.

The mean of a discrete random variable is simply a weighted average,

using the probabilities as the weights. In this way, the most probable values

have the most “influence” in determining the mean; this is why the mean is

a good indicator of central tendency. The mean, or expected value, E(x),

of a random variable is defined as follows: for a discrete variable, it is

xi p(xi )

(1.5a)

E(x) = µx =

while for a continuous variable, it is

+∞

E(x) = µx =

x p(x) dx

(1.5b)

−∞

where p(x) is a mathematical function that defines the probability distribution of the random variable x.

The means of binomial and Poisson distributions are given by the following general formulas:

µx = n · p

for the binomial distribution, where n is the number of trials and p is the

probability of success for each trial. For a variable described by the Poisson

distribution, the mean can be calculated as

µx = λ · t

where λ is the mean “rate” and t is the measurement interval (usually a

time or distance).

Comparison of Location Measures

We have defined three different indicators of the location of a random variable: the mean, median and mode. Each of these has a slightly different

meaning.

Imagine that you are betting on the outcome of a particular experiment:

• If you choose the mode, you are essentially betting on the single most

likely outcome of the experiment.

• If you choose the median, you are equally likely to be larger or smaller

than the outcome.

• If you choose the mean, you have the best chance of being closest to

the outcome.

A random variable cannot be predicted exactly, but each of the three

indicators gives a sense of the what value the random variable is likely to

mean

11

(a)

median

mode

mode

median

mean

1.3. Important Characteristics of Random Variables

(b)

Figure 1.5: Comparison of values of mean, median and mode for (a) positively

and (b) negatively skewed probability distributions. For symmetrical distributions

(so-called ‘bell-shaped’ curves) the three values are identical.

be near. In most applications, the mean gives the best single description of

the location of the variable.

Just how different are the values of the mean, median and mode? It

turns out that the three values are different only for assymetric distributions, such as the two shown in figure 1.5. If a distribution is skewed to the

right (or positively skewed; fig. 1.5(a)) then

The mean is the most common

descriptor of the location of a

random variable.

µx > Q2 > xmode

while for distributions skewed to the left (negatively skewed; fig. 1.5(b))

µx < Q2 < xmode

For symmetrical (“bell-shaped”) distributions, the mean, median and mode

all have exactly the same value.

1.3.2 Dispersion of a Random Variable

Some variables are more “variable,” more uncertain, than others. Of course,

theoretically speaking, a variable may assume any one of the range of values in the domain. However, when speaking of the variability of a random

variable, we generally mean the range of values that would commonly (i.e.,

most probably) be observed in an experiment. This property is called the

dispersion of the random variable. Dispersion refers to the range of values

that are commonly assumed by the variable.

Experiments that produce outcomes that are highly variable will be more

likely to give values that are farther from the mean than similar experiments that are not as variable. In other words, probability distributions

tend to be broader as the variability increases. Figure 1.6 compares the

probability functions (actually called “probability density functions”) of two

continuous variables.

As with the mean, it is convenient to describe variability with a single

value. Three common ways to do so are:

1. The interquartile range and the semi-interquartile range

2. The mean absolute deviation

Statisticians sometimes use the

term scale instead of dispersion.

12

1. Properties of Random Variables

0.14

Probability density

0.12

0.10

0.08

0.06

0.04

0.02

0.00

0

5

10

15

20

25

30

35

40

Value

Figure 1.6: Comparing the variability of two random variables. The variable described by the broader probability distribution (dotted line) is more likely to be

farther from the mean than the other variable.

3. The variance, and the standard deviation.

These will now be described.

(Semi-)Interquartile Range

The interquartile range, IQR, is the difference between the first and third

quartiles (see figure 1.7):

IQR = Q3 − Q1

This is a measure of dispersion because the “wider” a distribution gets, the

greater the difference between the quartiles.

The semi-interquartile range, QR is probably the more commonly used

measure of dispersion; it is simply half the interquartile range.

QR =

Q3 − Q1

2

(1.6)

Mean Absolute Deviation

The mean absolute deviation is

the expected value of | x − µx |

Since the dispersion describes the spread of a random variable about its

mean, it makes sense to have a quantitative descriptor of this quantity. The

mean absolute deviation, MD, is exactly what it sounds like: the expected

value (i.e., the man) of the absolute deviation of a variable from its mean

value, µx .

MD ≡ E (| x − µx |)

The concept behind the mean absolute deviation is quite simple: it indicates the mean (‘typical’) distance of a variable from its own mean, µx . For

1.3. Important Characteristics of Random Variables

13

Probability

Interquartile Range

Q1

Q2

Q3

Value

Figure 1.7: The interquartile range is a measure of the dispersion of a random

variable. It is the difference between the first and third quartiles of a distribution,

Q3 − Q1 , where the quartiles divide the distribution into four equal parts (see page

9). The semi-interquartile range is also a common measure of dispersion; it is half

the interquartile range.

a discrete variable,

MD =

| xi − µx | p(x)

while for a continuous variable,

+∞

MD =

| x − µx | p(x)

−∞

(1.7a)

(1.7b)

Variance and Standard Deviation

Like the mean absolute deviation, the variance and standard deviation measure the dispersion of a random variable about its mean µx . The variance

of a random variable x, σx2 , is the expected value of (x − µx )2 , which is the

squared deviation of x from its mean value:

σx2 ≡ E (x − µx )2

As you can see, the concept of the variance is very similar to that of the

mean absolute deviation. In fact, the variance is sometimes called the mean

squared deviation. The variance for discrete and continuous variables is

given by

σx2 = (xi − µx )2 p(xi )

(1.8a)

i

∞

σx2 =

(x − µx )2 p(x) dx

−∞

(1.8b)

The variance is the expected

value of (x − µx )2 , and the

standard deviation is the positive

root of the variance.

14

The standard deviation is

calculated

from the variance:

σx = + σx2

The RSD is an alternate way to

present the standard deviation

1. Properties of Random Variables

Look at the discrete variable (eqn. 1.8a): we have another weighted sum!

The values being summed, (xi − µx )2 , are the squared deviations of the

variable from the mean. The squared deviations indicate how far the value

xi is from the mean value µx , and the weights in the sum, as in eqn. 1.5, are

the probabilities of xi . Thus, “broader” probability distributions will tend

to have larger weights for values of x that have larger squared deviations

(x − µx )2 (and hence are more distant from the mean). Such distributions

will give larger values for the variance, σx2 . Higher variance signifies greater

variability of a random variable.

One problem with using the variance to describe the dispersion of a

random variable is that the units of variance are the squared units of the

original variable. For example, if x is a length measured in m, then σx2 has

units of m2 . The standard deviation, σx , has the same units as x, and so

is a little more convenient at times. The standard deviation is simply the

positive square root of the variance.

Sometimes the variability of a random variable is specified by the relative standard deviation, RSD:

σx

σx

RSD =

or RSD =

µx

x

Both of these expressions are commonly used to calculate RSD; which one

is used is usually obvious from the context. The RSD can be expressed as a

fraction or as a percentage. The RSD is sometimes called the coefficient of

variation (CV).

Comparison of Dispersion Measures

The standard deviation, σx , is

the most common measure of

dispersion.

We have described three common ways to measure a random variable’s

dispersion: semi-interquartile range, QR , mean absolute deviation, MD, and

the standard deviation, σ . These measures are all related to each other, so,

in a sense, it makes no difference which we use. In fact, for distributions

that are only moderately skewed, MD ≈ 0.8σ and QR ≈ 0.67σ . For a

variety of reasons (which are beyond the scope of this text), the variance

and standard deviation are the best measures of dispersion of a random

variable.

Aside: Quantum Variability

The term ‘uncertainty’ in

Heisenberg’s principle refers to

the standard deviation of values

used to describe properties at

the atomic/molecular level.

As stated earlier, quantum mechanics asserts that many of the properties

of matter at the atomic/molecular scale are inherently unpredictable (i.e.,

random). The magnitude of the variability of these properties only becomes

apparent on a sufficiently small scale. Hence, these variables must be described by a probability distribution with a certain mean and standard deviation. This ability to interpret system properties such as energy and position as random variables is an example of the broad scope of the concepts

contained in the study of probability and statistics.

One of the most important relationships in quantum mechanics is Heisenberg’s Uncertainty Principle. The Uncertainty Principle states that the product of the standard deviation of certain random variables, called complementary variables, or complementary properties, has a lower limit. For

example, the linear momentum p and the position q of a particle are complementary properties; the Heisenberg Uncertainty Principle states that

σp · σq ≥

h

4π

1.4. Probability Distributions of Continuous Variables

15

As stated in the Uncertainty Principle, the standard deviation of complementary variables are inversely related to one another. In other words, if a

particle such as an electron is constrained to remain confined to a certain

area, then the uncertainty in the linear momentum is great: i.e., if σq is

small (e.g., for a confined electron) then σp is large.

1.4 Probability Distributions of Continuous

Variables

1.4.1 Introduction

Properties such as mass or voltage are typically free to assume any value;

hence, they are continuous variables. There is one fundamental distinction

between discrete and continuous variables: the probability of a continuous

random variable, x, exactly assuming one of the values, x0 , in the domain

is zero! In other words,

P(x = x0 ) = 0

How, then, do we specify the probabilities of continuous random variables? Instead of calculating the probabilities of specific continuous variables, we determine the probability that the outcome is within a given range

of continuous variables. In order to find the probability that the random

variable x will be between two values x1 and x2 , we can use a function p(x)

such that

x2

P(x1 ≤ x ≤ x2 ) =

p(x) dx

(1.9)

x1

The function p(x) is called the probability density function of the continuous random variable x. Figure 1.8 demonstrates the general idea.

Just as the probability of a discrete variable must sum to one over the

entire domain, the area under the probability density function within the

range of possible values for x must be one. For example, if the domain

ranges from −∞ to ∞, then

∞

p(x) dx = 1

−∞

As in the discrete case, the value of the function p(x) must be positive over its entire range. The probability density function allows us to

construct a probability distribution for continuous variables; indeed, sometimes it is called simply a “distribution function,” as with discrete variables.

However, evaluation of the probability density function for a particular

value x0 does not yield the probability that x = x0 — that probability is

zero, after all — as it would for a discrete distribution function.

Probability distributions are thus a little more complicated for continuous variables than for discrete variables. The main difference is that probabilities of continuous variables are defined in terms of ranges of values,

rather than single values. The probability density function, p(x) (if one

exists) can be used to determine these probabilities.

The probability density function,

sometimes called the probability

mass function, is used to

determine probabilities of

continuous random variables.

1. Properties of Random Variables

x1

Probability density

16

x2

Value

Figure 1.8: Probability characteristics of continuous variables. The curve is the

probability density function, and the shaded area is the probability that the random

variable will be between x1 and x2 . The area under the entire curve is one.

1.4.2 Normal (Gaussian) Probability Distributions

The normal probability

distribution describes the

characteristics of many

continuous random variables

encountered in measurement

science.

By far, the most common probability distribution in science is the Gaussian

distribution. In very many situations, it is assumed that continuous random

variables follow this distribution; in fact, it is so common that it is simply

referred to as the normal probability distribution. The probability density

function of this distribution is given by the following equation:

(x − µ)2

1

√

exp −

N(x : µ, σ ) =

(1.10)

2σ 2

σ 2π

where the expression N(x : µ, σ ) conveys the information that x is a

normally-distributed variable with mean µ and standard deviation σ . Figure 1.9 shows a normal probability density function with µx = 50 and

σx = 10. Note that it is a symmetric distribution with the well-known “bellcurve” shape.

Calculating probability distributions of continuous variables using the

probability density function is a little more complicated than with discrete

variables, as shown in example 1.1.

Example 1.1

Johnny Driver is a conscientious driver; on a freeway with a posted speed

limit of 65 mph, he tries to maintain a constant speed of 60 mph. However, the car speed fluctuates during moments of inattention. Assuming

that car speed follows a normal distribution with a mean µx = 60 mph

and standard deviation σx = 3 mph, what is the probability that Johnny

is exceeding the speed limit at any time?

Figure 1.10 shows a sketch of the situation. The car speed is a random

variable that is normally distributed with µx = 60 mph and σx = 3 mph.

1.4. Probability Distributions of Continuous Variables

17

0.05

Probability density

0.04

0.03

0.02

0.01

0.00

0

20

40

60

80

100

Measurement value

Figure 1.9: Plot of the Gaussian (“normal”) probability distribution with µ = 50 and

σ = 10. Note that most of the area under the curve is within 3σ of the mean.

We need to determine the probability that x is greater than 65 mph, which

is the shaded area under the curve in the figure:

∞

P(x > 65) =

65

1

(x − µx )2

√

dx

exp −

2σ

2π σ

where µx and σx are given the appropriate values. When this integral is

evaluated, a value of 0.0478 is obtained. Thus, there is a 4.78% probability

that Johnny is speeding.

1.4.3 The Standard Normal Distribution

In calculating probabilities of continuous variables, it is usually necessary

to evaluate integrals, which can be inconvenient (a computer program is required in the case of normally-distributed variables) and tedious. It would

be preferable if there were tables of integration values available for reference; there are, in fact, many tables available for just this purpose. Of

course, an integration table will only be valid for a specific probability distribution. However, the normal distribution is not a single distribution, but

is actually a family of distributions: changing the mean or variance of the

variable will give a different distribution. It is not practical to formulate

integration tables for all possible values of µ and σ 2 ; fortunately, this is

not necessary, as we will see now.

A special case of the normal distribution (eqn. 1.10) occurs when the

mean is zero (µx = 0) and the variance is unity (σx2 = σx = 1); this particular

normal probability distribution is called the standard normal distribution,

N(z).

1

z2

N(z) = √

(1.11)

exp −

2

2π

The standard normal distribution

is a special version of the normal

distribution. It is useful in

solving problems like

example 1.1.

18

1. Properties of Random Variables

x = 65

0.14

Probability density

0.12

0.10

0.08

0.06

0.04

0.02

0.00

50

52

54

56

58

60

62

64

66

68

70

Car speed, mph

Figure 1.10: Sketch of distribution of the random variable in example 1.1. The

area under the curve is the value we want: P(x > 65) = 0.0478

Other than giving a simplified form of the normal distribution function,

the standard normal distribution is important because integration tables

of this function exist that can be used to calculate probability values for

normally-distributed variables. In order to use these tables, it is necessary

to transform a normal variable, with arbitrary values of µ and σ 2 , to the

standard normal distribution. This transformation is accomplished with

z-transformation, which is usually called standardization.

Taking a variable x, we define z such that

z=

x − µx

σx

(1.12)

The transformed value z is the z-score of the value x. The z-score of a

value is the deviation of the value from its mean µx in units of the standard

deviation, σx , as illustrated in the example 1.2.

Example 1.2

Let’s say we set up an experiment such that the outcome is described by

a normal distribution with µx = 25.0 and σx = 2.0. A single measurement

yields x0 = 26.4; what is the z-score of this measurement?

The value is calculated directly from eqn. 1.12

z0 =

x0 − µx

26.4 − 25.0

=

= 0.7

σx

2.0

Thus, the measurement is +0.7σ from the mean.

The process of standardization of a random variable x yields another

variable z; if x is normally distributed with mean µx and standard deviation

σx , then z is also normally distributed with µ = 0 and σ = 1. This illustrates an important concept: any value calculated using one or more random variables is also a random variable. In other words, the calculations

associated with standardization did not rid x of its innate “randomness.”

1.4. Probability Distributions of Continuous Variables

19

Although there are no integration tables for a normally-distributed variable x with arbitrary mean and standard deviation, we can apply the ztransformation and use the integration tables of the standard normal distribution. Tables of the standard normal distribution usually give cumulative probabilities, which correspond to the areas in one of the “tails” under

the density function. The ‘left tail’ is given by

z0

z0

P(z < z0 ) =

left tail

N(z)dz

(1.13)

−∞

P(z < z0 )

while the ‘right tail’ area is calculated from

right tail

+∞

P(z > z0 ) =

N(z)dz

(1.14)

z0

The next example will show how we can use the z-tables to calculate probabilities of normally-distributed variables.

Example 1.3

In example 1.1 we determined by integration the probability that a car

of variable speed was exceeding the speed limit (65 mph); the mean and

standard deviation of the car speed were 60 mph and 3 mph, respectively. Now solve this problem using z-tables.

The problem can be re-stated as follows: determine the probability

P(x > x0 ) =?

where x is a normally-distributed variable with µx = 60 mph, σx = 3 mph,

and x0 = 65 mph. The only way to solve this problem is by integration. We

can use the z-tables if we first standardize the variables. The z-transformed

problem reduces to

x − µx

x0 − µx

>

σx

σx

= P(z > z0 )

P(x > x0 ) = P

where z is described by the standard normal distribution, and z0 is the

appropriate z-score:

z0 =

x0 − µx

65 − 60

=

≈ 1.67

σx

3

Now we can use the z-table to find the area in the ‘right tail’ of the

z-distribution. From the z-table, we see that

P(x > 65) ≈ P(z > 1.67) = 0.0475

This answer agrees (more or less) with our previous value, 0.0478 (see

example 1.1). The slight difference is due to the fact that 53 does not exactly

equal 1.67.

z0

P(z > z0 )

20

1. Properties of Random Variables

Important Relationships for Standard Normal Distributions

You should become very familiar

with the concepts presented in

this section.

The Appendix presents a number of useful statistical tables, including one

for the standard normal distribution (i.e., a z-table). Since the normal distribution is symmetric, there is no need to list the areas corresponding to

both negative and positive z-score values, so most tables only present half

of the information. The z-table given in this book lists right-tail areas associated with positive z-scores. In order to calculate the areas corresponding

to various ranges of normally-distributed variables, using only right-tail areas, a few important relationships should be learned.

⇒ Calculating left-tail areas: P(z < −z0 )

Since the normal distribution is symmetric, the following relationship is

true:

P(z < −z0 ) = P(z > z0 )

(1.15)

This expression allows one to calculate left-tail areas from right-tail areas,

and vice versa. This symmetric nature of the normal probability distribution is illustrated here:

-z0

z0

=

⇒ Calculating probabilities greater than 0.5: P(z > −z0 )

As mentioned previously, most tables (including the one in this book) only

list the areas for half the normal curve. That is because areas corresponding to the other half — i.e., probabilities larger than 0.5 — can easily be

calculated. Out table only lists the right-tail areas for positive z-scores;

thus, we need a way to calculate right-tail areas for negative z-scores. We

would use the following equation:

P(z > −z0 ) = 1 − P(z > z0 )

(1.16)

A pictorial representation of this equation is:

-z0

=

–

z0

⇒ Calculating ‘middle’ Probabilities: P(z1 < z < z2 )

Instead of “tail” areas (i.e., P(z > z0 ) or P(z < −z0 )), it is often necessary to

calculate the area under the curve between two z-scores. The most general

expression for this situation is

P(z1 < z < z2 ) = P(z > z1 ) − P(z > z2 )

Again, in picture form:

z1

z1

z2

=

z2

–

(1.17)

1.4. Probability Distributions of Continuous Variables

21

It is important to become adept at using z-tables to calculating probabilities of normally-distributed variables. The following two examples illustrate some of the problems you might encounter.

Example 1.4

A soft-drink machine is regulated so that it discharges an average volume of 200. mL per cup. If the volume of drink discharged is normally

distributed with a standard deviation of 15 mL,

(a) what fraction of the cups will contain more than 224 mL of soft

drink?

(b) what is the probability that a 175 mL cup will overflow?

(c) what is the probability that a cup contains between 191 and 209 mL?

(d) below what volume do we get the smallest 25% of the drinks?

In answering these types of questions, it is always helpful to draw a quick

sketch of the desired area, as we do here (in the margins).

(a) This problem is similar to previous ones: we must find a right-tail area

P(x > x0 ), where x0 = 224 mL. To do so, we can use the z-tables if we first

calculate z0 , the z-score of x0 .

224

x − µx

z0 − µx

>

σx

σx

224 − 200

= P(z > 1.6)

=P z>

15

= 0.0548

P(x > x0 ) = P

150

175

200

225

250

P(x > 224 mL)

Looking in the z-tables yields the answer. There is a 5.48% probability that

a 224 mL cup will overflow.

(b) In this case, the z-score is negative, so that we must use eqn. 1.16 to find

the probability using the z-tables in the Appendix.

175 − 200

P(x > 175 mL) = P z >

15

= P(z > −1.67) = 1 − P(z > 1.67)

= 1 − 0.0475 = 0.9525

175

150

175

200

225

250

P(x > 175 mL)

There is a 95.25% probability that the 175 mL cup will overflow. A common

mistake in this type of problem is to calculate P(z > z0 ) (0.0475) instead

of 1 − P(z > z0 ) (0.9525); referring to a sketch helps to catch this problem,

since it is obvious from the sketch that the probability should be greater

than 50%.

191

209

(c) We must find P(x1 > x > x2 ), where x1 = 191 mL and x2 = 209 mL. To do

so using the z-tables, we must find the z-scores for both x1 and x2 , and

then use eqn. 1.17 to calculate the probability.

150

175

200

225

250

P(191 mL < x < 209 mL)

22

1. Properties of Random Variables

191 − 200

209 − 200

<z<

15

15

= P(−0.6 < z < +0.6)

P(191 mL < x < 209 mL) = P

= 1 − P(z < −0.6) − P(z > +0.6)

= 1 − 2 · P(z > +0.6) = 1 − 2 · 0.2743

= 0.4514

So there is a 45.14% probability that a cup contains 191–209 mL.

(d) This question is a little different than the others. We must find a value x0

such that P(x < x0 ) = 0.25. In all of the previous examples, we began with

a value (or a range of values) and then calculated a probability; now we are

doing the reverse — we must calculate the value associated with a stated

probability. In both cases we use the z-tables, but in slightly different ways.

x0

25%

150

175

200

225

250

P(x < x0 ) = 0.25

To begin, from the z-tables we must find a value z0 such that P(z < z0 ) =

0.25. Looking in the z-tables, we see that P(z > 0.67) = 0.2514 and P(z >

0.68) = 0.2483; thus, it appears that a value of 0.675 will give a right-tailed

area of approximately 0.25. Since we are looking for a left-tailed area, we

can state that

P(z < −0.675) ≈ 0.25

Our next task is to translate this z-score into a volume; in other words, we

want to “de-standardize” the value z0 = −0.675 to obtain x0 , the volume

that corresponds to this z-score. From eqn. 1.12 on page 18, we may write

x0 = µx + z0 · σx

= 200 + (−0.675)(15) mL

= 189.9 mL

Thus, we have determined that the drink volume will be less than 189.9 mL

with probability 25%.

Example 1.5

The mean inside diameter of washers produced by a machine is 0.502 in,

and the standard deviation is 0.005 in. The purpose for which these

washers are intended allows a maximum tolerance in the diameter of

0.496–0.508 in; otherwise, the washers are considered defective. Determine the percentage of defective washers produced by the machine, assuming that the diameters are normally distributed.

0.496

0.48

0.49

0.50

0.508

0.51

0.52

P(x < 0.496) + P(x > 0.508)

We are looking for the probability that the washer diameter is either less

than 0.496 in or greater than 0.508 in. In other words, we want to calculate

the sum P(x < 0.496 in) + P(x > 0.508 in).

First we must calculate the z-scores of the two values x1 and x2 , where

x1 = 0.496 in and x2 = 0.508 in. Then we can use the z-table to determine

the desired probability.

x1 − µx

z1 =

σx

0.496 − 0.502

=

= −1.2

0.005

x2 − µx

z2 =

σx

0.508 − 0.502

=

= 1.2

0.005

1.4. Probability Distributions of Continuous Variables

23

We can see that z1 = −z2 ; in other words, the two tails have the same area.

Thus,

P(x < x1 ) + P(x > x2 ) = P(z < z1 ) + P(z > z2 )

= 2 · P(z > 1.2) = 2 · 0.1151

= 0.2302

Remember:

x1 = 0.496 in

x2 = 0.508 in

Thus, 23.02% of the washers produced by this machine are defective.

Aside: Excel Tip

Modern spreadsheet programs, such as MS Excel, contain a number of statistical functions, including functions that will integrate the normal probability distribution. In Excel, the functions NORMDIST and NORMSDIST will

perform these integrations for normal and standard normal distributions,

respectively. These can be especially useful in determining integration values that are not in z-tables, or when the tables are not readily available.

View the on-line help documentation in Excel for more information on

how to use these functions. Note that NORMSDIST was used in generating

the z-table in the Appendix. In fact, all of the statistical tables were generated in Excel — other useful spreadsheet functions will be highlighted

throughout this book.

Further Characteristics of Normally-Distributed Variables

Before leaving this section, consider the following characteristics of all random variables that follow a normal distribution.

• Approximately two-thirds of the time the random variable will be

within one standard deviation of the mean value; to be exact,

P(µx − σx < x < µx + σx ) = 0.6827

• There is approximately a 95% probability that the variable will be

within two standard deviations of the mean:

P(µx − 2σx < x < µx + 2σx ) = 0.9545

You should be able to use the z-tables to obtain these probabilities;

you might want to verify these numbers as an exercise.

These characteristics, which are shown in figure 1.11, are useful rules of

thumb to keep in mind when dealing with normally-distributed variables.

For example, a measurement that is five standard deviations above the

mean is not very likely, unless there is something wrong with the measuring device (or there is some other source of error).

1.4.4 Advanced Topic: Other Continuous Probability

Distributions

At this point, we have described several important probability distributions, along with the types of experiments that might result in these

distributions.

Note that both functions return

left-tail areas rather than the

right-tail areas we use in this

book

24

1. Properties of Random Variables

68%

-4

-3

-2

-1

0

95%

1

2

3

4

-4

-3

-2

-1

0

z-score

z-score

(a)

(b)

1

2

3

4

Figure 1.11: Characteristic of normally-distributed variables: the shaded area represents the probability that a normally-distributed variable will assume a value

within (a) one or (b) two standard deviations of the mean.

• Bernoulli experiments (“coin tossing experiments”) are common and

their outcomes are described by the binomial probability distribution,

which is a discrete probability distribution.

• Counting experiments are also common, and these often result in variables described by a Poisson distribution, which is also a discrete distribution.

• Many continuous variables are adequately described by the Gaussian

(‘normal’) probability distribution.

Still, there are some situations that result in continuous variables that

cannot be described by a normal distribution. We will describe two other

continuous probability functions, but there are many more.

The Exponential Distribution

Let’s go back to counting experiments (see page 5). In this type of experiment, we are interested in counting the number of events that occur in a

unit of time or space. However, let’s say we change things around, as in the

following examples.

• We may count the number of photons detected per unit time (a discrete variable) or we may measure the time between detected photons

(a continuous variable).

• We may count the number of cells in a solution volume (a discrete

variable) or we may measure the distance between cells (a continuous

variable).

• We may count the numbers of molecules that react per unit time (i.e.,

the reaction rate, a discrete variable) or we may be interested in the

time between reactions (a continuous variable).

• We may count the number of cars present on a busy street (a discrete

variable) or we may measure the distance between the cars (a continuous variable).

1.4. Probability Distributions of Continuous Variables

25

Probability density

2.5

2.0

1.5

1.0

0.5

0.0

0.0

0.5

1.0

1.5

2.0

2.5

Time, s

Figure 1.12: Exponential probability distribution with µx = σx = 0.4 s. This distribution describes the time interval between α-particles emitted by a radioisotope;

see page 5 for more details.

And so on. We are essentially “flipping” the variable from events in a

given unit of time (or space) to the time (or space) between events. If the

discrete variable — the number of events — in these examples is described

well by a Poisson distribution, then the continuous variable is described by

the exponential probability density function.

p(x) = ke−kx

(1.18)

If the number of counts follows a

Poisson distribution, then the

interval between counts follows

an exponential distribution.

where k is a characteristic of the experiment. In fact, for an exponentially

distributed variable the mean, median, and standard deviation are given by

µx = k−1

Q2 = ln (2) · k

In certain applications, the mean

of the exponential distribution is

called the lifetime, τ, and the

median is the half-life, t1/2 .

−1

σx = k−1

The mean (and standard deviation) of the exponential distribution is

the inverse of the mean of the corresponding Poisson distribution. For example, we described an experiment on page 5 in which we were counting

α-particles emitted by a sample of a radioactive isotope at a mean rate of

2.5 counts/second. It only stands to reason that the mean time between

1

detected α-particles would be 2.5 = 0.4 seconds. The corresponding exponential distribution is shown in figure 1.12.

Statistical tables for the exponential distribution are not often given

because the integral of the exponential probability density function is easy

to evaluate: the probability that x is between x1 and x2 is given by

P(x1 < x < x2 ) = e−x1 /µx − e−x2 /µx

(1.19)

Exponential probability distributions are common in chemistry, but you

may not be used to thinking of them as probability distributions. Anytime you come across a process that experiences an “exponential decay,”

26

1. Properties of Random Variables

chances are that you can think of the process in terms of a counting experiment. Examples of exponential decays are:

• the decrease in concentration in a chemical reaction (first-order rate

law);

• the decrease in light intensity as photons travel through an absorbing

medium (Beer’s Law);

• the decrease in the population in an excited energy state of an atom

or molecule (lifetime measurements).

All of these processes can be observed in a counting experiment, with characteristic Poisson and exponential distributions.

Atomic and Molecular Orbitals

Atomic and molecular orbitals

are simply probability

distributions describing the

position of electrons in atoms

and molecules, respectively.

Radial probability distribution

function for atomic 1s orbitals.

Atomic and molecular orbitals are probability density functions for the position of an electron in an atom or molecule. Such orbitals are sometimes

called electron density functions. They allows us to determine the probability that the electron will be found in a given position relative to the

nucleus. The different orbitals (e.g., 2s or 3px atomic orbitals) correspond

to different probability density functions.

The electron density functions actually contain three random variables,

since they give the probability that an electron is at any given point in

space. As such they are really examples of joint probability distributions of

the three random variables corresponding to the coordinate axes (e.g., x, y

and z in a Cartesian coordinate system). For spherically-symmetric orbitals,

it is convenient to rewrite the joint probability distribution in terms of a

single variable r , which is the distance of the electron from the nucleus. For

the 1s orbital, this probability density function (called a radial distribution

function) has the following form:

p(r ) = kr 2 e−3r /µr

where µr is the mean electron-nucleus distance and k is a normalization

constant that ensures that the integrated area of the function is one. The

values of µr and k will depend on the identity of the atom. The radial

density function for the hydrogen 1s atomic orbital is shown in figure 1.13.

On page 6 we observed that the energy of a molecule is a random variable that can be described by the Boltzmann probability distribution; now

we have encountered yet another property at the atomic/molecular scale

that must be considered a random variable. Electron position can also only

be described in terms of a probability distribution. Understanding the nature and properties of random variables and their probability distributions

thus has applications beyond statistical data analysis.

1.5 Summary and Skills

The single most important skill

developed in this chapter is the

ability to use z-tables to do

probability calculations involving

normally-distributed random

variables.

A random variable is a variable that cannot be predicted with absolute certainty, and must be described using a probability distribution. The location

of the probability distribution is well described by the mean, µx , of the

variable, and the inherent uncertainty in the variable is usually described

by its standard deviation, σx .

1.5. Summary and Skills

27

52.9 pm

Probability density

0.010

0.008

0.006

0.004

0.002

0.000

0

50

100

150

200

250

Radial distance, pm

Figure 1.13: Radial distribution function of the hydrogen 1s orbital. The dotted

line at the mode indicates the Bohr radius, a0 , of the orbital, where a0 = 52.9 pm.

The mean radial distance µr for this orbital is 79.4 pm.

There are two general types of quantitative random variables: discrete

variables, which can only assume certain values (e.g., integers) and continuous variables. Examples of important discrete probability distributions

include the binomial and Poisson distributions — these functions allow one

to predict the outcome of Bernoulli and counting experiments, respectively.

Both of these types of experiments are quite common in science.

For continuous variables, the probability density function can be used

to find the probability that the variable is within a certain range of values,

P(x1 < x < x2 ). The most important family of probability density functions is the Gaussian, or normal, probability distribution. The standard

normal distribution specifically describes a normally-distributed variable

with µx = 0 and σx = 1; integration tables of the cumulative standard normal distribution (i.e., z-tables) can be used to calculate probabilities for any

normally-distributed variable.

Another important probability density function is the exponential distribution, which describes the interval between successive Poisson events

in a counting experiment.

Finally, properties of matter at the atomic/molecular scale must often

be described using probability distributions. In particular, molecular energy is a discrete random variable that may be described by the Boltzmann

probability distribution, and electron position is a continuous random variable whose probability density function is called an atomic (or molecular)

orbital. The Heisenberg Uncertainty Principle describes the relationship between the standard deviations of certain sets of random variables called

complementary variables.