* Your assessment is very important for improving the workof artificial intelligence, which forms the content of this project

Download Systems of Linear Equations

Rotation matrix wikipedia , lookup

Jordan normal form wikipedia , lookup

Linear least squares (mathematics) wikipedia , lookup

Determinant wikipedia , lookup

Principal component analysis wikipedia , lookup

Eigenvalues and eigenvectors wikipedia , lookup

Matrix (mathematics) wikipedia , lookup

Singular-value decomposition wikipedia , lookup

Non-negative matrix factorization wikipedia , lookup

Perron–Frobenius theorem wikipedia , lookup

Orthogonal matrix wikipedia , lookup

Four-vector wikipedia , lookup

Cayley–Hamilton theorem wikipedia , lookup

Matrix calculus wikipedia , lookup

Matrix multiplication wikipedia , lookup

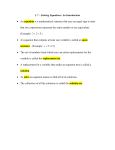

Systems of Linear Equations

Dr. Doreen De Leon

Math 152, Fall 2015

1 Introduction to Systems of Linear Equations

Definition. A linear equation in the variables $x_1, x_2, \ldots, x_n$ is an equation that can be written in the form

\[ a_1 x_1 + a_2 x_2 + \cdots + a_n x_n = b, \]

where $b$ and the coefficients $a_i$, for $i = 1, 2, \ldots, n$, are real or complex numbers and $n > 0$.

In your homework assignments, typically $2 \le n \le 4$. In the real world, $n$ is of the order of hundreds or thousands or more.

Definitions.

• A system of linear equations is a collection of one or more linear equations involving the same variables, say $x_1, x_2, \ldots, x_n$.

• A solution of a system of equations is an ordered list $(s_1, s_2, \ldots, s_n)$ that makes each equation true when the values $s_i$ are substituted for the corresponding $x_i$, for $i = 1, 2, \ldots, n$.

• The set of all possible solutions of a system of linear equations is the solution set.

• Two linear systems are called equivalent if they have the same solution set.

Theorem 1. A system of linear equations has either

(i) no solution (an inconsistent system),

(ii) exactly one solution (a consistent system), or

(iii) infinitely many solutions (a consistent system).

In the case of no solutions, the solution set is the empty set, $\emptyset$.

We can think about this geometrically, for example in terms of a system involving two lines in

the xy-plane. We know that two lines will either

• intersect in one point, giving one solution;

• be the same line, giving infinitely many solutions; or

• be parallel and not intersect, giving no solution.
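The three geometric cases can be checked mechanically for two lines $a_1 x + b_1 y = c_1$ and $a_2 x + b_2 y = c_2$. The following is a minimal sketch, not part of the original notes; the function name `classify_2x2` is hypothetical, and it assumes integer coefficients and that each equation really describes a line (its $x$ and $y$ coefficients are not both zero).

```python
from fractions import Fraction


def classify_2x2(a1, b1, c1, a2, b2, c2):
    """Classify the system a1*x + b1*y = c1, a2*x + b2*y = c2.

    Assumption: (a1, b1) and (a2, b2) are each not (0, 0), so both
    equations describe lines in the xy-plane.
    """
    det = a1 * b2 - a2 * b1
    if det != 0:
        # The lines cross in exactly one point (found by Cramer's rule).
        x = Fraction(c1 * b2 - c2 * b1, det)
        y = Fraction(a1 * c2 - a2 * c1, det)
        return "one solution", (x, y)
    # det == 0: the lines are either identical or parallel.
    if a1 * c2 == a2 * c1 and b1 * c2 == b2 * c1:
        return "infinitely many solutions", None
    return "no solution", None


print(classify_2x2(1, 1, 2, 1, -1, 0))  # lines x + y = 2 and x - y = 0
print(classify_2x2(1, 1, 2, 2, 2, 4))   # the same line written twice
print(classify_2x2(1, 1, 2, 1, 1, 3))   # two parallel lines
```

Exact rational arithmetic (`fractions.Fraction`) is used so that the intersection point is not affected by rounding.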


Matrix and Vector Notation for Systems of Equations

Note: This material is located in Section Reduced Row-Echelon Form of the textbook.

Definition. An $m \times n$ matrix is a rectangular array of elements from $\mathbb{C}$ having $m$ rows and $n$ columns.

Matrices are denoted by upper-case letters and delimited using square brackets. The textbook uses the nonstandard notation $[A]_{ij}$ to refer to the element in row $i$ and column $j$ of matrix $A$. The standard notation, which is what we will use throughout the class, is $a_{ij}$.

Definition. A column vector, or simply vector, is a matrix having only one column.

Vectors are denoted by lower-case letters written in bold. When writing a vector by hand, since we cannot write in bold, an arrow is drawn over the name of the vector. Again, the textbook uses nonstandard notation to denote the $i$th element of a vector $\mathbf{v}$, namely $[\mathbf{v}]_i$. The standard notation, which we will use throughout the course, is $v_i$.

For a system of equations

\[
\begin{aligned}
a_{11} x_1 + a_{12} x_2 + \cdots + a_{1n} x_n &= b_1 \\
a_{21} x_1 + a_{22} x_2 + \cdots + a_{2n} x_n &= b_2 \\
&\;\;\vdots \\
a_{m1} x_1 + a_{m2} x_2 + \cdots + a_{mn} x_n &= b_m,
\end{aligned}
\tag{1}
\]

the coefficient matrix is the m × n matrix that contains the coefficients of the system.

Each row of the coefficient matrix represents an equation and each column contains all of the

coefficients of a given variable. For (1), the coefficient matrix is given by

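\[
A = \begin{bmatrix}
a_{11} & a_{12} & a_{13} & \cdots & a_{1n} \\
a_{21} & a_{22} & a_{23} & \cdots & a_{2n} \\
a_{31} & a_{32} & a_{33} & \cdots & a_{3n} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
a_{m1} & a_{m2} & a_{m3} & \cdots & a_{mn}
\end{bmatrix}.
\]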

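The vector of constants is the vector consisting of the right-hand side entries in the system, so

\[
\mathbf{b} = \begin{bmatrix} b_1 \\ b_2 \\ \vdots \\ b_m \end{bmatrix}.
\]

Finally, the solution vector is the vector containing the values of the variables:

\[
\mathbf{x} = \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix}.
\]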

Using these definitions, the textbook defines the following.


Definition. If A is the coefficient matrix of a system of linear equations and b is the vector

of constants, then we will write LS(A, b) as a shorthand expression for the system of linear

equations, which we will refer to as the matrix representation of the linear system.

To write a system of equations in compact form for solving, we define the augmented matrix, which is a matrix consisting of the coefficient matrix plus an extra column containing the right-hand side values. In other words, given a system of equations $LS(A, \mathbf{b})$, the augmented matrix will be written as $[A \mid \mathbf{b}]$.

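Example. Consider the system of equations

\[
\begin{aligned}
2x_1 - 4x_2 + 5x_3 &= -8 \\
3x_2 - 9x_3 &= 27 \\
5x_1 + x_3 &= 0.
\end{aligned}
\]

The coefficient matrix, whose columns correspond to $x_1$, $x_2$, and $x_3$, is given by

\[
\begin{bmatrix}
2 & -4 & 5 \\
0 & 3 & -9 \\
5 & 0 & 1
\end{bmatrix},
\]

and the augmented matrix is given by

\[
\left[\begin{array}{rrr|r}
2 & -4 & 5 & -8 \\
0 & 3 & -9 & 27 \\
5 & 0 & 1 & 0
\end{array}\right].
\]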
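As a small illustration of how $[A \mid \mathbf{b}]$ is assembled, the sketch below stores a matrix as a list of rows and appends each constant to its row. The helper name `augment` is hypothetical and the list-of-rows representation is an assumption made for this example only.

```python
def augment(A, b):
    """Return the augmented matrix [A | b] as a new list of rows."""
    if len(A) != len(b):
        raise ValueError("A and b must have the same number of rows")
    # Each row of A gets the matching right-hand side appended.
    return [list(row) + [rhs] for row, rhs in zip(A, b)]


# Coefficient matrix and vector of constants from the example above.
A = [[2, -4, 5],
     [0, 3, -9],
     [5, 0, 1]]
b = [-8, 27, 0]

for row in augment(A, b):
    print(row)
```

Note that a variable missing from an equation contributes an explicit 0 to the coefficient matrix.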

2 Solving a Linear System of Equations

What are the basic operations to solve a linear system of equations?

→ multiply an equation by a nonzero constant;

→ interchange two equations;

→ replace one equation by the sum of itself and a multiple of another equation.

Note: These operations do not change the solution set of the system.

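Example. Solve the system

\[
\begin{aligned}
x + 2y - 3z &= -4 \qquad (1) \\
-2y + 3z &= 2 \qquad\;\;\, (2) \\
4x - 2z &= 14 \qquad (3)
\end{aligned}
\]

The augmented matrix for this problem is

\[
\left[\begin{array}{rrr|r}
1 & 2 & -3 & -4 \\
0 & -2 & 3 & 2 \\
4 & 0 & -2 & 14
\end{array}\right].
\]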


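Step 1: eq.(3) − 4·eq.(1) → eq.(3′)

\[
\begin{aligned}
x + 2y - 3z &= -4 \qquad (1') \\
-2y + 3z &= 2 \qquad\;\;\, (2') \\
-8y + 10z &= 30 \qquad (3')
\end{aligned}
\qquad
\left[\begin{array}{rrr|r}
1 & 2 & -3 & -4 \\
0 & -2 & 3 & 2 \\
0 & -8 & 10 & 30
\end{array}\right]
\]

Step 2: eq.(3′) − 4·eq.(2′) → eq.(3″)

\[
\begin{aligned}
x + 2y - 3z &= -4 \qquad (1'') \\
-2y + 3z &= 2 \qquad\;\;\, (2'') \\
-2z &= 22 \qquad (3'')
\end{aligned}
\qquad
\left[\begin{array}{rrr|r}
1 & 2 & -3 & -4 \\
0 & -2 & 3 & 2 \\
0 & 0 & -2 & 22
\end{array}\right]
\]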

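Now we can solve for $z$ in Eq. (3″):

\[ -2z = 22 \implies z = -11. \]

Solve for $y$ in Eq. (2″):

\[
\begin{aligned}
-2y + 3z &= 2 \\
-2y + 3(-11) &= 2 \\
-2y &= 35 \\
y &= -\frac{35}{2}.
\end{aligned}
\]

Solve for $x$ in Eq. (1″):

\[
\begin{aligned}
x + 2y - 3z &= -4 \\
x + 2\left(-\frac{35}{2}\right) - 3(-11) &= -4 \\
x - 2 &= -4 \\
x &= -2.
\end{aligned}
\]

So, $\left(-2, -\frac{35}{2}, -11\right)$ is a solution. (Verify by plugging into (1), (2), (3).)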
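The suggested verification can also be done by machine. The sketch below substitutes a candidate solution into every equation of the original system; the function name `is_solution` is hypothetical, and exact fractions are used so that $-\frac{35}{2}$ is represented without rounding.

```python
from fractions import Fraction


def is_solution(A, b, s):
    """True if substituting s into every equation of the system gives equality."""
    return all(
        sum(coeff * value for coeff, value in zip(row, s)) == rhs
        for row, rhs in zip(A, b)
    )


# Equations (1), (2), (3) of the example, written as coefficients and constants.
A = [[1, 2, -3],
     [0, -2, 3],
     [4, 0, -2]]
b = [-4, 2, 14]
s = (Fraction(-2), Fraction(-35, 2), Fraction(-11))

print(is_solution(A, b, s))
```

Checking against the original equations, rather than the reduced ones, also guards against arithmetic slips made during elimination.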

The operations on the augmented matrix track with the operations on the system of equations.

They are known as elementary row operations.

Elementary Row Operations

1. Replace one row by the sum of itself and a multiple of another row.

2. Interchange two rows.

3. Multiply a row by a nonzero constant (i.e., multiply every entry in the row).

Elementary row operations can be applied to any matrix.
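The three operations translate directly into code. This is a minimal sketch, assuming a matrix stored as a list of rows with 0-based row indices; the function names are hypothetical. The usage lines repeat Steps 1 and 2 of the worked example above.

```python
def replace_row(M, i, j, c):
    """r_i -> r_i + c * r_j, where row j must differ from row i."""
    if i == j:
        raise ValueError("the two rows must be different")
    M[i] = [x + c * y for x, y in zip(M[i], M[j])]


def swap_rows(M, i, j):
    """r_i <-> r_j."""
    M[i], M[j] = M[j], M[i]


def scale_row(M, i, c):
    """r_i -> c * r_i, where c must be nonzero."""
    if c == 0:
        raise ValueError("the constant must be nonzero")
    M[i] = [c * x for x in M[i]]


# Augmented matrix of the worked example (rows are indexed from 0 here).
M = [[1, 2, -3, -4],
     [0, -2, 3, 2],
     [4, 0, -2, 14]]
replace_row(M, 2, 0, -4)  # Step 1: eq.(3) - 4*eq.(1)
replace_row(M, 2, 1, -4)  # Step 2: eq.(3') - 4*eq.(2')
print(M)
```

The guards on `replace_row` and `scale_row` reflect why the operations preserve the solution set: each one can be undone by another operation of the same kind.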


Definition. Two matrices are row equivalent if there is a sequence of elementary row operations that transforms one matrix into the other.

If the augmented matrices of two linear systems are row equivalent, then the two systems have

the same solution set.

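Example: Determine if the following system is consistent.

\[
\begin{aligned}
2x - 3y + 5z &= 5 \\
2y - 3z &= 0 \\
4x + z &= -5
\end{aligned}
\]

\[
\left[\begin{array}{rrr|r}
2 & -3 & 5 & 5 \\
0 & 2 & -3 & 0 \\
4 & 0 & 1 & -5
\end{array}\right]
\xrightarrow{r_3 \to r_3 - 2r_1}
\left[\begin{array}{rrr|r}
2 & -3 & 5 & 5 \\
0 & 2 & -3 & 0 \\
0 & 6 & -9 & -15
\end{array}\right]
\xrightarrow{r_3 \to r_3 - 3r_2}
\left[\begin{array}{rrr|r}
2 & -3 & 5 & 5 \\
0 & 2 & -3 & 0 \\
0 & 0 & 0 & -15
\end{array}\right]
\]

If we write the resulting augmented matrix in equation form, we have

\[
\begin{aligned}
2x - 3y + 5z &= 5 \\
2y - 3z &= 0 \\
0 &= -15 \qquad \text{(false statement)}
\end{aligned}
\]

$\implies$ No solution. Therefore, the system is inconsistent.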

3 Row Reduction and Echelon Forms

We will now formalize the procedure for solving systems of equations.

A nonzero row or column in a matrix is a row or column that contains at least one nonzero

entry.

Definition. A leading entry of a row is the leftmost nonzero entry (in a nonzero row).

Definition. A rectangular matrix is in echelon form if it satisfies the following:

1. All nonzero rows are above any rows of all zeros.

2. Each leading entry of a row is in a column to the right of the leading entry of the row above it.

3. All entries in a column below a leading entry are zero.

Definition. A rectangular matrix is in reduced row echelon form (RREF) if it is in echelon form and it satisfies the following.

1. The leading entry in each nonzero row is 1.

2. Each leading 1 is the only nonzero entry in its column.


Gaussian elimination is the process of using elementary row operations to transform a matrix into echelon form.

Gauss-Jordan elimination is the process of using elementary row operations to transform a

matrix into RREF.

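Example: The following matrices are in echelon form (EF):

\[
\begin{bmatrix} 1 & 0 & 2 & 8 \\ 0 & 2 & 3 & 5 \\ 0 & 0 & 0 & 0 \end{bmatrix},
\quad
\begin{bmatrix} 1 & 3 & 4 & 2 \\ 0 & 0 & 0 & 6 \\ 0 & 0 & 0 & 0 \end{bmatrix},
\quad
\begin{bmatrix} 1 & 2 & 3 & 4 \\ 0 & 5 & 6 & 7 \\ 0 & 0 & 8 & 9 \end{bmatrix}.
\]

The following matrices are in reduced row echelon form (RREF):

\[
\begin{bmatrix} 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \\ 0 & 0 & 1 & 0 \end{bmatrix},
\quad
\begin{bmatrix} 1 & 0 & 2 \\ 0 & 1 & 0 \\ 0 & 0 & 0 \end{bmatrix},
\quad
\begin{bmatrix} 1 & 2 & 3 & 4 \\ 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \end{bmatrix}.
\]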

Theorem 2. Each matrix is row equivalent to one, and only one, reduced row echelon matrix.

Pivot Positions and Free Variables

When row operations on a matrix produce echelon form, further row operations to obtain RREF

do not change the position of leading entries.

Definition. A pivot position in a matrix A is a location in A that corresponds to a leading 1

in the RREF of A. A pivot column is a column of A that contains a pivot position. A pivot

is a nonzero entry in a pivot position.
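Gauss-Jordan elimination and the identification of pivot columns can be sketched in a few lines. The code below is an illustrative implementation, not the textbook's algorithm verbatim: the function name `rref` is hypothetical, matrices are assumed to be lists of rows with integer or rational entries, and column indices are 0-based.

```python
from fractions import Fraction


def rref(M):
    """Return (R, pivots): the RREF of M and its 0-based pivot column indices."""
    R = [[Fraction(x) for x in row] for row in M]
    rows = len(R)
    cols = len(R[0]) if R else 0
    pivots = []
    r = 0  # index of the row that will receive the next pivot
    for c in range(cols):
        if r == rows:
            break
        # Look for a nonzero entry in column c, at or below row r.
        p = next((i for i in range(r, rows) if R[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column
        R[r], R[p] = R[p], R[r]               # interchange two rows
        lead = R[r][c]
        R[r] = [x / lead for x in R[r]]       # scale so the leading entry is 1
        for i in range(rows):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                # Row replacement clears the rest of the pivot column.
                R[i] = [x - f * y for x, y in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
    return R, pivots


# An illustrative 3 x 5 input matrix.
M = [[0, 3, 5, 7, 9],
     [1, 2, 4, 6, 8],
     [3, 0, 5, 9, 2]]
R, pivots = rref(M)
print(pivots)  # [0, 1, 2], i.e. the first three columns are pivot columns
```

Exact fractions avoid the rounding questions that arise with floating-point elimination; stopping after the forward pass (without scaling or clearing above the pivots) would give Gaussian elimination instead.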

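Example. Row reduce the matrix to echelon form and identify the pivot positions in the original matrix and the pivot columns.

\[
\begin{bmatrix}
0 & 3 & 5 & 7 & 9 \\
1 & 2 & 4 & 6 & 8 \\
3 & 0 & 5 & 9 & 2
\end{bmatrix}
\]

Solution:

\[
\begin{aligned}
\begin{bmatrix}
0 & 3 & 5 & 7 & 9 \\
1 & 2 & 4 & 6 & 8 \\
3 & 0 & 5 & 9 & 2
\end{bmatrix}
&\xrightarrow{r_1 \leftrightarrow r_2}
\begin{bmatrix}
1 & 2 & 4 & 6 & 8 \\
0 & 3 & 5 & 7 & 9 \\
3 & 0 & 5 & 9 & 2
\end{bmatrix}
\xrightarrow{r_3 \to r_3 - 3r_1}
\begin{bmatrix}
1 & 2 & 4 & 6 & 8 \\
0 & 3 & 5 & 7 & 9 \\
0 & -6 & -7 & -9 & -22
\end{bmatrix} \\
&\xrightarrow{r_3 \to r_3 + 2r_2}
\begin{bmatrix}
1 & 2 & 4 & 6 & 8 \\
0 & 3 & 5 & 7 & 9 \\
0 & 0 & 3 & 5 & -4
\end{bmatrix}
\end{aligned}
\]

The leading entries of the echelon form lie in rows 1, 2, and 3 of columns 1, 2, and 3, respectively, so these are the pivot positions. The first three columns of the original matrix are therefore the pivot columns:

\[
\begin{bmatrix}
0 & 3 & 5 & 7 & 9 \\
1 & 2 & 4 & 6 & 8 \\
3 & 0 & 5 & 9 & 2
\end{bmatrix}
\qquad \text{(pivot columns: 1, 2, 3)}
\]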

4 Solutions of Linear Systems

Use Gaussian (or Gauss-Jordan) elimination on the augmented matrix, then back substitution

to solve for the variables.
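Back substitution for a triangular system with a unique solution can be sketched as follows. This is an illustrative helper (the name `back_substitute` is hypothetical) that assumes a square upper-triangular coefficient matrix with nonzero diagonal entries; systems with free variables need the more general treatment discussed below.

```python
from fractions import Fraction


def back_substitute(U, c):
    """Solve U x = c for an upper-triangular U with nonzero diagonal entries."""
    n = len(U)
    x = [Fraction(0)] * n
    # Work from the last equation up to the first.
    for i in range(n - 1, -1, -1):
        if U[i][i] == 0:
            raise ValueError("zero on the diagonal: no unique solution")
        known = sum(U[i][j] * x[j] for j in range(i + 1, n))
        x[i] = Fraction(c[i] - known) / U[i][i]
    return x


# Triangular system 2*x1 + 2*x2 + 4*x3 = 5, x2 + 3*x3 = -1, 2*x3 = 6.
U = [[2, 2, 4],
     [0, 1, 3],
     [0, 0, 2]]
c = [5, -1, 6]
print(back_substitute(U, c))
```

Each unknown is computed from the ones already found, which is why the loop runs from the bottom row upward.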

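Example: Find the general solution of the linear system whose augmented matrix has been reduced to:

(1)
\[
\left[\begin{array}{rrr|r}
2 & 2 & 4 & 5 \\
0 & 1 & 3 & -1 \\
0 & 0 & 2 & 6
\end{array}\right]
\]

First, write the system of equations:

\[
\begin{aligned}
2x_1 + 2x_2 + 4x_3 &= 5 \\
x_2 + 3x_3 &= -1 \\
2x_3 &= 6
\end{aligned}
\]

Back substitution:

\[
\begin{aligned}
2x_3 = 6 &\implies x_3 = 3 \\
x_2 + 3x_3 = -1 &\implies x_2 = -1 - 3x_3 = -1 - 3(3) = -10 \\
2x_1 + 2x_2 + 4x_3 = 5 &\implies x_1 = \tfrac{1}{2}(5 - 2x_2 - 4x_3) = \tfrac{1}{2}\bigl(5 - 2(-10) - 4(3)\bigr) = \tfrac{13}{2}
\end{aligned}
\]

So, $x_1 = \frac{13}{2}$, $x_2 = -10$, $x_3 = 3$.

(2)
\[
\left[\begin{array}{rrr|r}
2 & 3 & -1 & 4 \\
0 & 0 & 3 & 6 \\
0 & 0 & 0 & 0
\end{array}\right]
\]

Solution: The second column is not a pivot column, so $x_2$ is a free variable, or an independent variable. The first and third columns are pivot columns, so $x_1$ and $x_3$ are basic variables, or dependent variables.

If we write the system of equations, we have

\[
\begin{aligned}
2x_1 + 3x_2 - x_3 &= 4 \\
3x_3 &= 6 \\
0 &= 0
\end{aligned}
\]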


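Back substituting:

\[
\begin{aligned}
3x_3 = 6 &\implies x_3 = 2 \\
2x_1 + 3x_2 - x_3 = 4 &\implies x_1 = \tfrac{1}{2}(4 - 3x_2 + x_3) = -\tfrac{3}{2}x_2 + 3
\end{aligned}
\]

So, we have

\[
\begin{aligned}
x_1 &= 3 - \tfrac{3}{2}x_2 \\
x_2 &\text{ is free} \\
x_3 &= 2
\end{aligned}
\]

We can write this solution in vector form in one of two ways: either leave $x_2$ as a variable or define $x_2$ as an arbitrary constant. This gives

\[
\mathbf{x} = \begin{bmatrix} 3 - \frac{3}{2}x_2 \\ x_2 \\ 2 \end{bmatrix}.
\]

We can write the solution set for this system as follows:

\[
\left\{ \begin{bmatrix} 3 - \frac{3}{2}x_2 \\ x_2 \\ 2 \end{bmatrix} \;\middle|\; x_2 \in \mathbb{C} \right\}.
\]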

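(3)
\[
\left[\begin{array}{rrr|r}
1 & 0 & 5 & 2 \\
0 & 0 & 3 & 6 \\
0 & 0 & 0 & -1
\end{array}\right]
\]

First, write the system of equations:

\[
\begin{aligned}
x_1 + 5x_3 &= 2 \\
3x_3 &= 6 \\
0 &= -1 \qquad \text{(false)}
\end{aligned}
\]

$\implies$ No solution (inconsistent).

For an inconsistent system, the solution set is empty.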

Theorem 3. A linear system is consistent if and only if the rightmost column of the augmented matrix is not a pivot column; i.e., if and only if an echelon form of the augmented matrix has no row of the form

\[ \left[\begin{array}{ccc|c} 0 & \cdots & 0 & b \end{array}\right], \quad \text{where } b \neq 0. \]

If a linear system is consistent, then the solution set contains either (i) a unique solution,

where there are no free variables, or (ii) infinitely many solutions, where there is at least one

free variable.
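Theorem 3 suggests a simple procedure: find the pivot columns of the augmented matrix, check whether the last column is among them, and otherwise count free variables. The sketch below is one possible rendering under stated assumptions; the names `pivot_columns` and `classify` are hypothetical, the matrix is a nonempty list of rows, and the constants sit in the last column.

```python
from fractions import Fraction


def pivot_columns(M):
    """Forward elimination on M; returns the 0-based pivot column indices."""
    R = [[Fraction(x) for x in row] for row in M]
    pivots, r = [], 0
    for c in range(len(R[0])):
        p = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column
        R[r], R[p] = R[p], R[r]
        for i in range(r + 1, len(R)):
            f = R[i][c] / R[r][c]
            R[i] = [x - f * y for x, y in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
        if r == len(R):
            break  # every row already holds a pivot
    return pivots


def classify(aug):
    """Classify the system whose augmented matrix [A | b] is aug."""
    n = len(aug[0]) - 1  # number of variables
    pivots = pivot_columns(aug)
    if n in pivots:
        return "inconsistent"  # the rightmost column is a pivot column
    if len(pivots) == n:
        return "unique solution"  # no free variables
    return "infinitely many solutions"  # at least one free variable


print(classify([[2, 2, 4, 5], [0, 1, 3, -1], [0, 0, 2, 6]]))
print(classify([[2, 3, -1, 4], [0, 0, 3, 6], [0, 0, 0, 0]]))
print(classify([[1, 0, 5, 2], [0, 0, 3, 6], [0, 0, 0, -1]]))
```

The three calls use the reduced augmented matrices (1), (2), and (3) from the example in this section.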


5 Homogeneous Systems of Equations

Definition. A system of linear equations is homogeneous if the vector of constants is the zero vector; i.e., if $\mathbf{b} = \mathbf{0}$. The solution defined by setting each variable to zero, $x_1 = 0, x_2 = 0, \ldots, x_n = 0$ (i.e., $\mathbf{x} = \mathbf{0}$), is called the trivial solution.

Setting each variable to 0 will always give a solution to a homogeneous system of equations.

Therefore, we obtain the following theorem.

Theorem 4. A homogeneous system of linear equations is consistent, i.e., a homogeneous

system of equations has either one solution or infinitely many solutions.

Note that this also leads us to the following theorem.

Theorem 5. Given a homogeneous system of m equations in n unknowns, if m < n, then the

system has infinitely many solutions.

Proof. Since the system is homogeneous, we know that it is consistent. Therefore, it has at

least one solution. Since there are more variables than equations, the augmented matrix of the

system will be row equivalent to a matrix B in echelon form having at most m nonzero rows,

or at most m dependent variables. Since n > m, there will be at least n − m independent

variables. Therefore, the system has infinitely many solutions.

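Example:

(1) Solve

\[
\begin{aligned}
x_1 + 2x_2 - x_3 &= 0 \\
2x_1 + 2x_2 + x_3 &= 0 \\
3x_1 + 5x_2 - 2x_3 &= 0
\end{aligned}
\]

We will use Gaussian elimination to solve this problem.

\[
\begin{aligned}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
2 & 2 & 1 & 0 \\
3 & 5 & -2 & 0
\end{array}\right]
&\xrightarrow[r_3 \to r_3 - 3r_1]{r_2 \to r_2 - 2r_1}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & -2 & 3 & 0 \\
0 & -1 & 1 & 0
\end{array}\right]
\xrightarrow{r_2 \leftrightarrow r_3}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & -1 & 1 & 0 \\
0 & -2 & 3 & 0
\end{array}\right] \\
&\xrightarrow{r_2 \to -r_2}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & 1 & -1 & 0 \\
0 & -2 & 3 & 0
\end{array}\right]
\xrightarrow{r_3 \to r_3 + 2r_2}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & 1 & -1 & 0 \\
0 & 0 & 1 & 0
\end{array}\right]
\end{aligned}
\]

By back substitution, we obtain

\[
\begin{aligned}
x_3 &= 0 \\
x_2 &= 0 \\
x_1 &= 0
\end{aligned}
\]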


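(2) Solve

\[
\begin{aligned}
x_1 + 2x_2 - x_3 &= 0 \\
2x_1 + 2x_2 &= 0 \\
3x_1 + 5x_2 - 2x_3 &= 0
\end{aligned}
\]

We will use Gauss-Jordan elimination to solve this problem, just to get some practice using it.

\[
\begin{aligned}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
2 & 2 & 0 & 0 \\
3 & 5 & -2 & 0
\end{array}\right]
&\xrightarrow[r_3 \to r_3 - 3r_1]{r_2 \to r_2 - 2r_1}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & -2 & 2 & 0 \\
0 & -1 & 1 & 0
\end{array}\right]
\xrightarrow{r_2 \leftrightarrow r_3}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & -1 & 1 & 0 \\
0 & -2 & 2 & 0
\end{array}\right] \\
&\xrightarrow{r_2 \to -r_2}
\left[\begin{array}{rrr|r}
1 & 2 & -1 & 0 \\
0 & 1 & -1 & 0 \\
0 & -2 & 2 & 0
\end{array}\right]
\xrightarrow[r_1 \to r_1 - 2r_2]{r_3 \to r_3 + 2r_2}
\left[\begin{array}{rrr|r}
1 & 0 & 1 & 0 \\
0 & 1 & -1 & 0 \\
0 & 0 & 0 & 0
\end{array}\right]
\end{aligned}
\]

This matrix is in reduced row-echelon form. Since there is no leading entry for $x_3$, $x_3$ is a free variable and we have the following.

\[
\begin{aligned}
x_2 - x_3 = 0 &\implies x_2 = x_3 \\
x_1 + x_3 = 0 &\implies x_1 = -x_3
\end{aligned}
\]

So,

\[
\mathbf{x} = \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix}
= \begin{bmatrix} -x_3 \\ x_3 \\ x_3 \end{bmatrix}
= x_3 \begin{bmatrix} -1 \\ 1 \\ 1 \end{bmatrix}.
\]

We can write the solution set as

\[
\left\{ \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} \;\middle|\; x_1 = -x_3,\ x_2 = x_3 \right\}
= \left\{ \begin{bmatrix} -x_3 \\ x_3 \\ x_3 \end{bmatrix} \;\middle|\; x_3 \in \mathbb{C} \right\}.
\]

Null Space of a Matrix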

Definition. The null space of a matrix $A$, denoted $N(A)$, is the set of vectors that are solutions to the homogeneous system $LS(A, \mathbf{0})$.

Note that the null space is a property of the matrix A.

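Example: Determine the null space of the matrix

\[
A = \begin{bmatrix}
1 & 2 & -1 \\
2 & 2 & 0 \\
3 & 5 & -2
\end{bmatrix}.
\]

$N(A)$ is the set of solutions of $LS(A, \mathbf{0})$, which we determined in Example (2) above. So, we have that

\[
N(A) = \left\{ \begin{bmatrix} -x_3 \\ x_3 \\ x_3 \end{bmatrix} \;\middle|\; x_3 \in \mathbb{C} \right\}.
\]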
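A description of the null space can be read off the RREF: each free variable contributes one vector, obtained by setting that free variable to 1, the other free variables to 0, and solving for the basic variables. The sketch below follows that recipe; the names `rref` and `null_space_vectors` are hypothetical, and integer or rational entries are assumed.

```python
from fractions import Fraction


def rref(M):
    """Return (R, pivots): the RREF of M and its 0-based pivot column indices."""
    R = [[Fraction(x) for x in row] for row in M]
    pivots, r = [], 0
    for c in range(len(R[0])):
        if r == len(R):
            break
        p = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if p is None:
            continue
        R[r], R[p] = R[p], R[r]
        lead = R[r][c]
        R[r] = [x / lead for x in R[r]]
        for i in range(len(R)):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [x - f * y for x, y in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
    return R, pivots


def null_space_vectors(A):
    """One vector per free variable; N(A) is the set of their linear combinations."""
    R, pivots = rref(A)
    n = len(A[0])
    free = [c for c in range(n) if c not in pivots]
    vectors = []
    for f in free:
        v = [Fraction(0)] * n
        v[f] = Fraction(1)  # set this free variable to 1, the others to 0
        for row, p in zip(R, pivots):
            v[p] = -row[f]  # solve the pivot row for its basic variable
        vectors.append(v)
    return vectors


A = [[1, 2, -1],
     [2, 2, 0],
     [3, 5, -2]]
print(null_space_vectors(A))
```

For the matrix of this example the single vector returned has entries $-1, 1, 1$, matching the solution $x_3(-1, 1, 1)$ found above. An empty list means that the null space contains only the zero vector.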

6 Nonsingular Matrices

Systems of n equations in n unknowns that have only one solution have special attributes,

which we will begin discussing here. First, the coefficient matrix is n × n, which means that

the matrix is a square matrix of size n. Square matrices have many characteristics, which

we will explore in greater detail later in the course. One of the most useful (and important)

characterizations of a coefficient matrix for such a system is defined as follows.

Definition. Suppose A is a square matrix such that the solution set to the homogeneous linear

system of equations LS(A, 0) is {0} (i.e., the system has only the trivial solution). Then A is

said to be a nonsingular matrix. Otherwise, we say that A is a singular matrix.

Using the above definition, we can easily determine if a matrix A is singular or nonsingular by

constructing the homogeneous system of equations having A as its coefficient matrix and then

using elimination to determine the solutions to that system. Thus, we see that the coefficient

matrix in Example (1) above is nonsingular, but the coefficient matrix in Example (2) above

is singular.

To simplify our work in determining if a matrix is nonsingular, we first define the $n \times n$ identity matrix $I_n$ as the matrix whose entries are defined by

\[
i_{kl} = \begin{cases} 1, & \text{if } k = l, \\ 0, & \text{if } k \neq l. \end{cases}
\]

For example, $I_2$ is defined as

\[
I_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}.
\]

Note: The identity matrix is a square matrix in reduced row echelon form. Also, every column

is a pivot column.

Theorem 6. Suppose that $A$ is an $n \times n$ matrix whose row equivalent matrix in reduced row echelon form is $B$. $A$ is nonsingular if and only if $B = I_n$.

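Example: Determine if the matrix

\[
A = \begin{bmatrix}
2 & 1 & -1 \\
1 & 3 & 0 \\
0 & 2 & -4
\end{bmatrix}
\]

is nonsingular.

Solution: We just need to use elimination to get $A$ into RREF.

\[
\begin{aligned}
\begin{bmatrix}
2 & 1 & -1 \\
1 & 3 & 0 \\
0 & 2 & -4
\end{bmatrix}
&\xrightarrow[r_1 \leftrightarrow r_2]{r_3 \to \frac{1}{2} r_3}
\begin{bmatrix}
1 & 3 & 0 \\
2 & 1 & -1 \\
0 & 1 & -2
\end{bmatrix}
\xrightarrow{r_2 \to r_2 - 2r_1}
\begin{bmatrix}
1 & 3 & 0 \\
0 & -5 & -1 \\
0 & 1 & -2
\end{bmatrix}
\xrightarrow{r_2 \leftrightarrow r_3}
\begin{bmatrix}
1 & 3 & 0 \\
0 & 1 & -2 \\
0 & -5 & -1
\end{bmatrix} \\
&\xrightarrow[r_1 \to r_1 - 3r_2]{r_3 \to r_3 + 5r_2}
\begin{bmatrix}
1 & 0 & 6 \\
0 & 1 & -2 \\
0 & 0 & -11
\end{bmatrix}
\xrightarrow{r_3 \to -\frac{1}{11} r_3}
\begin{bmatrix}
1 & 0 & 6 \\
0 & 1 & -2 \\
0 & 0 & 1
\end{bmatrix}
\xrightarrow[r_1 \to r_1 - 6r_3]{r_2 \to r_2 + 2r_3}
\begin{bmatrix}
1 & 0 & 0 \\
0 & 1 & 0 \\
0 & 0 & 1
\end{bmatrix}.
\end{aligned}
\]

Since $A$ is row equivalent to $I_3$, $A$ is nonsingular.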
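A square matrix row reduces to the identity exactly when every column is a pivot column, so the test in Theorem 6 can be carried out by counting pivots during forward elimination. The following is a minimal sketch under that observation; the names `count_pivots` and `is_nonsingular` are hypothetical, and integer or rational entries are assumed.

```python
from fractions import Fraction


def count_pivots(A):
    """Number of pivot positions found by forward elimination on A."""
    R = [[Fraction(x) for x in row] for row in A]
    r = 0
    for c in range(len(R[0])):
        p = next((i for i in range(r, len(R)) if R[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column
        R[r], R[p] = R[p], R[r]
        for i in range(r + 1, len(R)):
            f = R[i][c] / R[r][c]
            R[i] = [x - f * y for x, y in zip(R[i], R[r])]
        r += 1
        if r == len(R):
            break
    return r


def is_nonsingular(A):
    """True if the square matrix A has a pivot in every column."""
    n = len(A)
    if any(len(row) != n for row in A):
        raise ValueError("A must be a square matrix")
    return count_pivots(A) == n


print(is_nonsingular([[2, 1, -1], [1, 3, 0], [0, 2, -4]]))   # matrix of this example
print(is_nonsingular([[1, 2, -1], [2, 2, 0], [3, 5, -2]]))   # matrix with a free variable
```

Counting pivots avoids computing the full RREF; the answer is the same because the later Gauss-Jordan steps do not move the leading entries.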

Notice that if a matrix $A$ is nonsingular, its null space is the set containing only the zero vector; i.e., $N(A) = \{\mathbf{0}\}$. The converse is true, as well.

Theorem 7. A square matrix $A$ is nonsingular if and only if $N(A) = \{\mathbf{0}\}$.

We also have the following characterization of nonsingular matrices.

Theorem 8. A square matrix A is nonsingular if and only if the system LS(A, b) has a unique

solution for every choice of the constant vector b.

We can put all of the above theorems together into a theorem giving a list of equivalent statements.

Theorem 9 (Nonsingular Matrix Equivalences, Round 1). Suppose that A is a square matrix.

Then the following are equivalent.

1. A is nonsingular.

2. A row reduces to the identity matrix.

3. The null space of $A$ contains only the zero vector, i.e., $N(A) = \{\mathbf{0}\}$.

4. The linear system LS(A, b) has a unique solution for every possible choice of b.

Saying that the statements are equivalent means that if one statement is true, then all of the

others are true, as well.

7 Applications of Linear Systems

Balancing Chemical Equations

Chemical equations describe the quantities of substances consumed and produced by chemical

reactions.

Example: Boron sulfide reacts violently with water to form boric acid and hydrogen sulfide gas (which smells of rotten eggs). The unbalanced equation is

\[ \mathrm{B_2S_3} + \mathrm{H_2O} \to \mathrm{H_3BO_3} + \mathrm{H_2S}. \]

To “balance” the equation, we need to find positive integers $x_1$, $x_2$, $x_3$, and $x_4$ so that

\[ x_1\,\mathrm{B_2S_3} + x_2\,\mathrm{H_2O} \to x_3\,\mathrm{H_3BO_3} + x_4\,\mathrm{H_2S} \]

gives an equation in which the total numbers of each type of atom are the same on both sides of the equation.


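\[
\begin{aligned}
\text{(B) boron:} \quad & 2x_1 = x_3 && \to \quad 2x_1 - x_3 = 0 \\
\text{(S) sulfur:} \quad & 3x_1 = x_4 && \to \quad 3x_1 - x_4 = 0 \\
\text{(H) hydrogen:} \quad & 2x_2 = 3x_3 + 2x_4 && \to \quad 2x_2 - 3x_3 - 2x_4 = 0 \\
\text{(O) oxygen:} \quad & x_2 = 3x_3 && \to \quad x_2 - 3x_3 = 0
\end{aligned}
\]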

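We next solve this system.

\[
\begin{aligned}
\left[\begin{array}{rrrr|r}
2 & 0 & -1 & 0 & 0 \\
3 & 0 & 0 & -1 & 0 \\
0 & 2 & -3 & -2 & 0 \\
0 & 1 & -3 & 0 & 0
\end{array}\right]
&\xrightarrow[r_3 \to r_3 - r_4]{r_1 \to r_1 - r_2}
\left[\begin{array}{rrrr|r}
-1 & 0 & -1 & 1 & 0 \\
3 & 0 & 0 & -1 & 0 \\
0 & 1 & 0 & -2 & 0 \\
0 & 1 & -3 & 0 & 0
\end{array}\right]
\xrightarrow{r_2 \to r_2 + 3r_1}
\left[\begin{array}{rrrr|r}
-1 & 0 & -1 & 1 & 0 \\
0 & 0 & -3 & 2 & 0 \\
0 & 1 & 0 & -2 & 0 \\
0 & 1 & -3 & 0 & 0
\end{array}\right] \\
&\xrightarrow{r_4 \to r_4 - r_3}
\left[\begin{array}{rrrr|r}
-1 & 0 & -1 & 1 & 0 \\
0 & 0 & -3 & 2 & 0 \\
0 & 1 & 0 & -2 & 0 \\
0 & 0 & -3 & 2 & 0
\end{array}\right]
\xrightarrow{r_2 \leftrightarrow r_3}
\left[\begin{array}{rrrr|r}
-1 & 0 & -1 & 1 & 0 \\
0 & 1 & 0 & -2 & 0 \\
0 & 0 & -3 & 2 & 0 \\
0 & 0 & -3 & 2 & 0
\end{array}\right] \\
&\xrightarrow{r_4 \to r_4 - r_3}
\left[\begin{array}{rrrr|r}
-1 & 0 & -1 & 1 & 0 \\
0 & 1 & 0 & -2 & 0 \\
0 & 0 & -3 & 2 & 0 \\
0 & 0 & 0 & 0 & 0
\end{array}\right]
\end{aligned}
\]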

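We thus obtain the system

\[
\begin{aligned}
-x_1 - x_3 + x_4 &= 0 \\
x_2 - 2x_4 &= 0 \\
-3x_3 + 2x_4 &= 0.
\end{aligned}
\]

$x_4$ is a free variable. So, we have

\[
\begin{aligned}
x_3 &= \tfrac{2}{3}x_4 \\
x_2 &= 2x_4 \\
x_1 &= -x_3 + x_4 = \tfrac{1}{3}x_4.
\end{aligned}
\]

Choose the smallest positive value of $x_4$ that makes every variable an integer, which is $x_4 = 3$. Then $x_3 = 2$, $x_2 = 6$, and $x_1 = 1$, and the balanced equation takes the form

\[ \mathrm{B_2S_3} + 6\,\mathrm{H_2O} \to 2\,\mathrm{H_3BO_3} + 3\,\mathrm{H_2S}. \]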
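The last step, choosing the free variable so that all coefficients become the smallest possible positive integers, can be automated. The sketch below starts from the parametrized solution $x_4\left(\frac{1}{3}, 2, \frac{2}{3}, 1\right)$ derived above, clears denominators, and then checks the result against the four atom-balance equations. The helper name `smallest_integer_multiple` is hypothetical, and it assumes every entry of the input vector is a positive rational number.

```python
from fractions import Fraction
from functools import reduce
from math import gcd


def smallest_integer_multiple(v):
    """Scale a vector of positive rationals to its smallest integer multiple."""
    fr = [Fraction(x) for x in v]
    # The least common multiple of the denominators clears all fractions.
    lcm_den = reduce(lambda a, b: a * b // gcd(a, b),
                     (f.denominator for f in fr), 1)
    ints = [int(f * lcm_den) for f in fr]
    # Divide out any common factor that remains.
    g = reduce(gcd, ints)
    return [i // g for i in ints]


# Solution direction from the notes: (x1, x2, x3, x4) = x4 * (1/3, 2, 2/3, 1).
direction = [Fraction(1, 3), 2, Fraction(2, 3), 1]
x = smallest_integer_multiple(direction)
print(x)  # [1, 6, 2, 3]

# Atom-balance equations for B, S, H, O as rows of coefficients.
A = [[2, 0, -1, 0],
     [3, 0, 0, -1],
     [0, 2, -3, -2],
     [0, 1, -3, 0]]
print(all(sum(a * xi for a, xi in zip(row, x)) == 0 for row in A))
```

The final check confirms that the integer coefficients satisfy every equation of the homogeneous system, which is the machine analogue of counting atoms on both sides of the reaction.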
