* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Y - Andreas Hess

Survey

Document related concepts

Transcript

Evaluating Predictive Models

Niels Peek

Department of Medical Informatics

Academic Medical Center

University of Amsterdam

Outline

1. Model evaluation basics

2. Performance measures

3. Evaluation tasks

• Model selection

• Performance assessment

• Model comparison

4. Summary

Basic evaluation procedure

1.

Choose performance measure

2. Choose evaluation design

3. Build model

4. Estimate performance

5. Quantify uncertainty

Basic evaluation procedure

1.

Choose performance measure

(e.g. error rate)

2. Choose evaluation design

(e.g. split sample)

3. Build model

(e.g. decision tree)

4. Estimate performance

(e.g. compute test sample error rate)

5. Quantify uncertainty

(e.g. estimate confidence interval)

Notation and terminology

xR

feature vector (stat: covariate pattern)

y {0,1}

class (med: outcome)

p(x)

density (probability mass) of x

P(Y=1| x)

class-conditional probability

h : Rm {0,1}

classifier

f : Rm [0,1]

probabilistic classifier

f (Y=1| x)

estimated class-conditional probability

m

(stat: discriminant model; Mitchell: hypothesis)

(stat: binary regression model)

Error rate

The error rate (misclassification rate, inaccuracy)

of a given classifier h is the probability that h will

misclassify an arbitrary instance x :

error (h)

P(Y h(x) | x)

p(x) dx

xRm

Probability that a given

x is misclassified by h

Error rate

The error rate (misclassification rate, inaccuracy)

of a given classifier h is the probability that h will

misclassify an arbitrary instance x :

error (h)

P(Y h(x) | x)

p(x) dx

xRm

Expectation over

instances x randomly

drawn from Rm

Sample error rate

Let S = { (xi , yi) | i =1,...,n } be a sample of

independent and identically distributed (i.i.d.)

m

instances, randomly drawn from R .

The sample error rate of classifier h in sample S is

the proportion of instances in S misclassified by h:

errorS (h)

n

1 I ( y h( x ) )

i

i

n

i 1

The estimation problem

How well does errors(h) estimate error(h) ?

To answer this question, we must look at some

basic concepts of statistical estimation theory.

Generally speaking, a statistic is a particular

calculation made from a data sample. It describes

a certain aspect of the distribution of the data in

the sample.

Understanding randomness

What can go wrong?

Sources of bias

• Dependence

using data for both training/optimization and

testing purposes

• Population drift

underlying densities have changed

e.g. ageing

• Concept drift

class-conditional distributions have changed

e.g. reduced mortality due to better treatments

Sources of variation

• Sampling of test data

“bad day” (more probable with small samples)

• Sampling of training data

instability of the learning method, e.g. trees

• Internal randomness of learning algorithm

stochastic optimization, e.g. neural networks

• Class inseparability

0 « P(Y=1| x) « 1 for many x Rm

Solutions

• Bias

– is usually be avoided through proper sampling,

i.e. by taking an independent sample

– can sometimes be estimated and then used to

correct a biased errors(h)

• Variance

– can be reduced by increasing the sample size

(if we have enough data ...)

– is usually estimated and then used to quantify

the uncertainty of errors(h)

30

25

20

15

10

5

0

0

5

10

15

20

25

30

Uncertainty = spread

10

15

20

25

30

35

40

10

15

20

25

30

35

We investigate the spread of a distribution by

looking at the average distance to the (estimated)

mean.

40

Quantifying uncertainty (1)

Let e1, ..., en be a sequence of observations, with

average e

1

n

n

e

.

i 1 i

• The variance of e1, ..., en is defined as

s

2

1

n 1

n

i 1

(ei e )

2

• When e1, ..., en are binary, then

s

2

1

n 1

e (1 e )

Quantifying uncertainty (2)

• The standard deviation of e1, ..., en is defined as

s

1

n 1

n

i 1

(ei e )

2

• When the distribution of e1, ..., en is approximately

Normal, a 95% confidence interval of e is

obtained by [e 1.96s, e 1.96s ] .

• Under the same assumption, we can also

compute the probability (p-value) that the true

mean equals a particular value (e.g., 0).

Example

training set

ntrain = 80

test set

ntest = 40

• We split our dataset into a training sample and

a test sample.

• The classifier h is induced from the training

sample, and evaluated on the independent

test sample.

• The estimated error rate is then unbiased.

Example (cont’d)

• Suppose that h misclassifies 12 of the 40

examples in the test sample.

• So errorS (h) 12 40 .30

• Now, with approximately 95% probability,

error(h) lies in the interval

errorS (h)(1 errorS (h))

errorS (h) 1.96

ntest 1

• In this case, the interval ranges from .16 to .44

Basic evaluation procedure

1.

Choose performance measure

(e.g. error rate)

2. Choose evaluation design

(e.g. split sample)

3. Build model

(e.g. decision tree)

4. Estimate performance

(e.g. compute test sample error rate)

5. Quantify uncertainty

(e.g. estimate confidence interval)

Outline

1. Model evaluation basics

2. Performance measures

3. Evaluation tasks

• Model selection

• Performance assessment

• Model comparison

4. Summary

Confusion matrix

A common way to refine the notion of prediction

error is to construct a confusion matrix:

Y=1

h(x)=1

outcome

Y=0

true positives

false positives

false negatives

true negatives

prediction

h(x)=0

Example

Y

h(x)

0

0

1

0

0

0

1

0

0

0

1

1

Y=1

Y=0

h(x)=1

1

0

h(x)=0

2

3

Sensitivity

Y=1

Y=0

h(x)=1

1

0

h(x)=0

2

3

• “hit rate”: correctness among positive instances

• TP / (TP + FN) = 1 / (1 + 2) = 1/3

• Terminology

sensitivity (medical diagnostics)

recall

(information retrieval)

Specificity

Y=1

Y=0

h(x)=1

1

0

h(x)=0

2

3

• correctness among negative instances

• TN / (TN + FP) = 3 / (0 + 3) = 1

• Terminology

specificity (medical diagnostics)

precision (information retrieval)

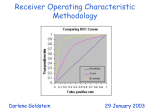

ROC analysis

• When a model yields probabilistic predictions,

e.g. f (Y=1| x) = 0.55, then we can evaluate its

performance for different classification

thresholds [0,1]

• This corresponds to assigning different (relative)

weights to the two types of classification error

• The ROC curve is a plot of sensitivity versus

1-specificity for all 0 1

ROC curve

(0,1): perfect model

sensitivity

=0

=1

each point

corresponds to

a threshold value

1- specificity

sensitivity

Area under ROC curve (AUC)

the area under

the ROC curve is

a good measure

of discrimination

1- specificity

sensitivity

Area under ROC curve (AUC)

when AUC=0.5,

the model does

not predict better

than chance

1- specificity

Area under ROC curve (AUC)

sensitivity

when AUC=1.0,

the model

discriminates

perfectly between

Y=0 and Y=1

1- specificity

Discrimination vs. accuracy

• The AUC value only depends on the ordering of

instances by the model

• The AUC value is insensitive to order-preserving

transformations of the predictions f(Y=1|x),

e.g. f’(Y=1|x) = f(Y=1|x) · 10-4711

In addition to discrimination, we must therefore

investigate the accuracy of probabilistic predictions.

Probabilistic accuracy

x

Y

f(Y=1|x)

P(Y=1|x)

10

0

0.10

0.15

17

0

0.25

0.20

…

…

32

1

0.30

0.25

100

1

0.90

0.75

Quantifying probabilistic error

Let (xi , yi) be an observation, and let f (Y | xi) be

the estimated class-conditional distribution.

• Option 1: i = | yi – f (Y=1| xi) |

Not good: does not lead to the correct mean

• Option 2: i = (yi – f (Y=1| xi))2

(variance-based)

Correct, but mild on severe errors

• Option 3: i = ln( f (Y=yi | xi))

(entropy-based)

Better from a probabilistic viewpoint

Outline

1. Model evaluation basics

2. Performance measures

3. Evaluation tasks

• Model selection

• Performance assessment

• Model comparison

4. Summary

Evaluation tasks

• Model selection

Select the appropriate size (complexity)

of a model

• Performance assessment

Quantify the performance of a given model

for documentation purposes

• Method comparison

Compare the performance of different

learning methods

1

0.024

n=4843

creatinin level

< 169

2

0.020

elective

surgery

n=4738

XI

emergency

procedure

4

age <67

creatinin level

169

0.200

n=105

3

age 67

0.015

0.076

n=4382

good

LVEF

I

0.006

n=356

5

0.027

n=2464

no mitral

valve surgery

n=1918

6

0.023

n=1800

first

cardiac

surgery

8

0.018

age < 81

n=1640

age 81

0.015

n=1293

COPD

10

0.037

BMI 25

age < 67

n=80

n=276

age 67

VII

0.093

VIII

0.026

IX

0.089

n=118

n=153

n=123

VI

0.069

n=160

n=101

n=1539

II

0.011

0.054

V

0.069

9

no COPD

prior

cardiac

surgery

X

0.150

7

mitral valve

surgery

mod./poor

LVEF

n=246

BMI < 25

III

0.014

IV

0.067

n=142

n=104

How far should

we grow a tree?

The model selection problem

• When we build a model, we must decide upon

its size (complexity)

• Simple models are robust but not flexible: they

may neglect important features of the problem

• Complex models are flexible but not robust:

they tend to overfit the data set

Model induction is a statistical estimation problem!

true error rate

optimistic

bias

training sample

error rate

How can we minimize the true error rate?

The split-sample procedure

training set

test set

1. Data set is randomly split into training set

and test set (usually 2/3 vs. 1/3)

2. Models are built on training set

Error rates are measured on test set

• Drawbacks

– data loss

– results are sensitive to split

Cross-validation

fold 1

1.

2.

3.

4.

fold 2

…

fold k

Split data set randomly into k subsets ("folds")

Build model on k-1 folds

Compute error on remaining fold

Repeat k times

• Average error on k test folds approximates

true error on independent data

• Requires automated model building procedure

Estimating the optimistic bias

• We can also estimate the error on the training

set and subtract an estimated bias afterwards.

• Roughly, there exist two methods to estimate

an optimistic bias:

a) Look at the model’s complexity

e.g. the number of parameters in a generalized

linear model (AIC, BIC)

b) Take bootstrap samples

simulate the sampling distribution

(computationally intensive)

Summary: model selection

• In model selection, we trade-off flexibility in the

representation for statistical robustness

• The problem is minimize the true error without

suffering from a data loss

• We are not interested in the true error (or its

uncertainty) itself – we just want to minimize it

• Methods:

– Use independent observations

– Estimate the optimistic bias

Performance assessment

In a performance assessment, we estimate how

well a given model would perform on new data.

The estimated performance should be unbiased

and its uncertainty must be quantified.

Preferrably, the performance measure used should

be easy to interpret (e.g. AUC).

Types of performance

• Internal performance

Performance on patients from the same

population and in the same setting

• Prospective performance

Performance for future patients from the same

population and in the same setting

• External performance

Performance for patients from another

population or another setting

Internal performance

Both the split-sample and cross-validation

procedures can be used to assess a model's

internal performance, but not with the same data

that was used in model selection

A commonly applied procedure looks as follows:

model selection

fold 1

fold 2

…

fold k

validation

Mistakes are frequently made

• Schwarzer et al. (2000) reviewed 43 applications

of artificial neural networks in oncology

• Most applications used a split-sample or crossvalidation procedure to estimate performance

• In 19 articles, an incorrect (optimistic)

performance estimate was presented

– E.g. model selection and validation on a single set

• In 6 articles, the test set contained less than 20

observations

Schwarzer G, et al. Stat Med 2000; 19:541–61.

Outline

1. Model evaluation basics

2. Performance measures

3. Evaluation tasks

• Model selection

• Performance assessment

• Model comparison

4. Summary

Summary

• Both model induction and evaluation are

statistical estimation problems

• In model induction we increase bias to reduce

variation (and avoid overfitting)

• In model evaluation we must avoid bias or

correct for it

• In model selection, we trade-off flexibility for

robustness by optimizing the true performance

• A common pitfall is to use data twice without

correcting for the resulting bias