
Fraction-free matrix factors: new forms for LU and QR factors

Wenqin Zhou, D.J. Jeffrey

University of Western Ontario

London, Ontario, Canada

{wzhou7, djeffrey}@uwo.ca

Abstract

Gaussian elimination and LU factoring have been extensively studied from the algorithmic point of view, but much less from the point of view of the best output format. In this paper, we give new output formats for fraction free LU factoring and for QR factoring. The formats and the algorithms used to obtain them are valid for any matrix system in which the entries are taken from an integral domain, not just for integer matrix systems. After discussing the new output format of LU factoring, we give the complexity analysis for the fraction free algorithm and fraction free output. Our new output format contains smaller entries than previously suggested forms, and it avoids the gcd computations required by some other partially fraction free computations. As applications of our fraction free algorithm and format, we demonstrate how to construct a fraction free QR factorization and how to solve linear systems within a given domain.

1 Introduction

Various applications, including robot control and threat analysis, have led to the development of efficient algorithms for the solution of systems of polynomial and differential equations. This involves significant linear algebra subproblems which are not standard numerical linear algebra problems. The "arithmetic" that is needed is usually algebraic in nature and must be handled exactly [18]. In the standard numerical linear algebra approach, every operation during standard Gaussian elimination is regarded as having the same cost, and the cost of numerical Gaussian elimination is obtained by counting the number of operations. For an $n \times n$ matrix with floating point entries, the cost of standard Gaussian elimination is $O(n^3)$.

However, in exact linear algebra problems, the cost of each operation during standard Gaussian elimination may vary because of the growth of the entries [29]. For instance, in a polynomial ring over the integers, this growth manifests itself both in increased polynomial degrees and in the size of the coefficients. It is easy to modify standard Gaussian elimination to avoid all division operations, but this leads to very rapid growth [22, 13, 14, 28].

One way to reduce this intermediate expression swell is to use fraction free algorithms. Perhaps the first description of such an algorithm was due to Bareiss [1]. Afterwards, efficient methods for alleviating expression swell in Gaussian elimination were given in [2, 22, 8, 10, 17, 24, 25].

Let us shift from Gaussian elimination to LU factoring. Turing's description of Gaussian elimination as a matrix factoring is well established in linear algebra [27]. The factoring takes different forms under the names Cholesky, Doolittle-LU, Crout-LU or Turing factoring. Only relatively recently has there been an attempt to combine LU factoring with fraction free Gaussian elimination [18, 3]. One approach was given by Theorem 2.1 in [3]. In this paper, we use the standard fraction free Gaussian elimination algorithm given by Bareiss [1] and give a new fraction free LU factoring and a new fraction free QR factoring.

A procedure called fraction free LU decomposition already exists in Maple. Here is an example of its use.

Example 1.1. Let $A$ be the $3 \times 3$ matrix with polynomial entries
$$
A := \begin{bmatrix} x & 1 & 3\\ 3 & 4 & 7\\ 8 & 1 & 9 \end{bmatrix}.
$$

If we use Maple to compute the fraction free LU factoring, we have

> LUDecomposition(A, method = FractionFree);

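$$
\begin{bmatrix} 1 & & \\ & 1 & \\ & & 1 \end{bmatrix} A =
\begin{bmatrix} 1 & & \\ 3/x & 1/x & \\ 8/x & \dfrac{x-8}{x(4x-3)} & \dfrac{1}{4x-3} \end{bmatrix}
\begin{bmatrix} x & 1 & 3\\ & 4x-3 & 7x-9\\ & & 29x-58 \end{bmatrix}. \tag{1}
$$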

The upper triangular matrix has polynomial entries. However, there are still fractions in the lower triangular matrix. In this paper, we call this factoring a partially fraction free LU factoring. We recall the corresponding algorithm in Section 3 (Algorithm 3.2).

Section 2 presents a format for LU factoring that is completely fraction free. In Section 3, we give a completely fraction free LU factoring algorithm and its time complexity, and compare it with the time complexity of a partially fraction free LU factoring. Benchmarks follow in Section 4 and illustrate the complexity results from Section 3. The last part of the paper, Section 5, applies the completely fraction free LU factoring to obtain a similar structure for a fraction free QR factoring. In addition, it introduces fraction free forward and backward substitutions that keep the whole computation in one domain when solving a linear system. Section 6 gives our conclusions.

2 Fraction Free LU Factoring

In 1968, Bareiss [1] pointed out that his integer-preserving Gaussian elimination (or fraction free Gaussian elimination) could reduce the magnitudes of the entries in the transformed matrices and considerably increase the computational efficiency in comparison with the corresponding standard Gaussian elimination. We also know that the conventional LU decomposition is used for solving several linear systems with the same coefficient matrix without the need to recompute the full Gaussian elimination. Here we combine these two ideas and give a new fraction free LU factoring.

In 1997, Nakos, Turner and Williams [18] gave an LU factorization that is only partially fraction free. In the same year, Corless and Jeffrey [3] gave the following result on fraction free LU factoring.

Theorem 2.1. [Corless-Jeffrey] Any rectangular matrix $A \in \mathbb{Z}^{n\times m}$ may be written
$$
F_1 P A = L F_2 U, \tag{2}
$$
where $F_1 = \operatorname{diag}(1, p_1, p_1p_2, \ldots, p_1p_2\cdots p_{n-1})$, $P$ is a permutation matrix, $L \in \mathbb{Z}^{n\times n}$ is unit lower triangular, $F_2 = \operatorname{diag}(1, 1, p_1, p_1p_2, \ldots, p_1p_2\cdots p_{n-2})$, and $U \in \mathbb{Z}^{n\times m}$ is upper triangular. The pivots $p_i$ that arise are in $\mathbb{Z}$.

This factoring is modeled on other fraction free definitions, such as pseudo-division, and the idea is to inflate the given object or matrix so that subsequent divisions are guaranteed to be exact. However, although this model is satisfactory for pseudo-division, the above matrix factoring has two unsatisfactory features: first, two inflating matrices are required, and second, the matrices are clumsy, containing entries that increase rapidly in size. If the model of pseudo-division is abandoned, a tidier factoring is possible. This is the first contribution of this paper.

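Theorem 2.2. Let $I$ be an integral domain and let $A = [a_{i,j}]_{i\le n,\, j\le m}$ be a matrix in $I^{n\times m}$ with $n \le m$ such that the submatrix $[a_{i,j}]_{i,j\le n}$ has full rank. Then $A$ may be written
$$
PA = LD^{-1}U,
$$
where
$$
L = \begin{bmatrix}
p_1 & & & & \\
L_{2,1} & p_2 & & & \\
\vdots & \vdots & \ddots & & \\
L_{n-1,1} & L_{n-1,2} & \cdots & p_{n-1} & \\
L_{n,1} & L_{n,2} & \cdots & L_{n,n-1} & 1
\end{bmatrix},
\qquad
D = \begin{bmatrix}
p_1 & & & & \\
& p_1p_2 & & & \\
& & \ddots & & \\
& & & p_{n-2}p_{n-1} & \\
& & & & p_{n-1}
\end{bmatrix},
$$
$$
U = \begin{bmatrix}
p_1 & U_{1,2} & \cdots & U_{1,n-1} & U_{1,n} & \cdots & U_{1,m}\\
& p_2 & \cdots & U_{2,n-1} & U_{2,n} & \cdots & U_{2,m}\\
& & \ddots & \vdots & \vdots & & \vdots\\
& & & p_{n-1} & U_{n-1,n} & \cdots & U_{n-1,m}\\
& & & & p_n & \cdots & U_{n,m}
\end{bmatrix},
$$
$P$ is a permutation matrix, $L$ and $U$ are triangular as shown, and the pivots $p_i$ that arise are in $I$. The pivot $p_n$ is also the determinant of the leading $n \times n$ submatrix of $PA$; in particular, when $A$ is square, $p_n = \det(PA) = \pm\det A$.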

Proof. When the leading $n \times n$ submatrix has full rank, there always exists a permutation matrix such that the diagonal pivots are non-zero during Gaussian elimination. Let $P$ be such a permutation matrix for $A$ (we give details of how to find this permutation matrix in the algorithm of Section 3). Classical Gaussian elimination shows that $PA$ admits the following factorization:

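$$
PA = \begin{bmatrix}
a_{1,1} & a_{1,2} & a_{1,3} & \cdots & a_{1,n} & \cdots & a_{1,m}\\
a_{2,1} & a_{2,2} & a_{2,3} & \cdots & a_{2,n} & \cdots & a_{2,m}\\
a_{3,1} & a_{3,2} & a_{3,3} & \cdots & a_{3,n} & \cdots & a_{3,m}\\
\vdots & \vdots & \vdots & & \vdots & & \vdots\\
a_{n,1} & a_{n,2} & a_{n,3} & \cdots & a_{n,n} & \cdots & a_{n,m}
\end{bmatrix}
$$
$$
= \begin{bmatrix}
1 & & & & \\
\frac{a_{2,1}}{a_{1,1}} & 1 & & & \\
\frac{a_{3,1}}{a_{1,1}} & \frac{a^{(1)}_{3,2}}{a^{(1)}_{2,2}} & 1 & & \\
\vdots & \vdots & \ddots & \ddots & \\
\frac{a_{n,1}}{a_{1,1}} & \frac{a^{(1)}_{n,2}}{a^{(1)}_{2,2}} & \frac{a^{(2)}_{n,3}}{a^{(2)}_{3,3}} & \cdots & 1
\end{bmatrix}
\begin{bmatrix}
a_{1,1} & a_{1,2} & a_{1,3} & \cdots & a_{1,n} & \cdots & a_{1,m}\\
& a^{(1)}_{2,2} & a^{(1)}_{2,3} & \cdots & a^{(1)}_{2,n} & \cdots & a^{(1)}_{2,m}\\
& & a^{(2)}_{3,3} & \cdots & a^{(2)}_{3,n} & \cdots & a^{(2)}_{3,m}\\
& & & \ddots & \vdots & & \vdots\\
& & & & a^{(n-1)}_{n,n} & \cdots & a^{(n-1)}_{n,m}
\end{bmatrix}
$$
$$
= \ell \cdot
\begin{bmatrix}
a_{1,1} & & & & \\
& a^{(1)}_{2,2} & & & \\
& & a^{(2)}_{3,3} & & \\
& & & \ddots & \\
& & & & a^{(n-1)}_{n,n}
\end{bmatrix}
\begin{bmatrix}
1 & \frac{a_{1,2}}{a_{1,1}} & \frac{a_{1,3}}{a_{1,1}} & \cdots & \frac{a_{1,n}}{a_{1,1}} & \cdots & \frac{a_{1,m}}{a_{1,1}}\\
& 1 & \frac{a^{(1)}_{2,3}}{a^{(1)}_{2,2}} & \cdots & \frac{a^{(1)}_{2,n}}{a^{(1)}_{2,2}} & \cdots & \frac{a^{(1)}_{2,m}}{a^{(1)}_{2,2}}\\
& & 1 & \cdots & \frac{a^{(2)}_{3,n}}{a^{(2)}_{3,3}} & \cdots & \frac{a^{(2)}_{3,m}}{a^{(2)}_{3,3}}\\
& & & \ddots & \vdots & & \vdots\\
& & & & 1 & \cdots & \frac{a^{(n-1)}_{n,m}}{a^{(n-1)}_{n,n}}
\end{bmatrix}
= \ell \cdot d \cdot u.
$$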

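The coefficients $a^{(k)}_{i,j}$ are related by the following relations:
$$
\begin{aligned}
& a^{(0)}_{i,j} = a_{i,j};\\
& 2 \le i \le j \le m: && a^{(i-1)}_{i,j} = \frac{1}{a^{(i-2)}_{i-1,i-1}}
\begin{vmatrix} a^{(i-2)}_{i-1,i-1} & a^{(i-2)}_{i-1,j}\\ a^{(i-2)}_{i,i-1} & a^{(i-2)}_{i,j} \end{vmatrix};\\
& n \ge i \ge j \ge 2: && a^{(j-1)}_{i,j} = \frac{1}{a^{(j-2)}_{j-1,j-1}}
\begin{vmatrix} a^{(j-2)}_{j-1,j-1} & a^{(j-2)}_{j-1,j}\\ a^{(j-2)}_{i,j-1} & a^{(j-2)}_{i,j} \end{vmatrix}.
\end{aligned}
$$
Let us define $A^{(k)}_{i,j}$ by
$$
A^{(k)}_{i,j} = \begin{vmatrix}
a_{1,1} & a_{1,2} & \cdots & a_{1,k} & a_{1,j}\\
a_{2,1} & a_{2,2} & \cdots & a_{2,k} & a_{2,j}\\
\vdots & \vdots & & \vdots & \vdots\\
a_{k,1} & a_{k,2} & \cdots & a_{k,k} & a_{k,j}\\
a_{i,1} & a_{i,2} & \cdots & a_{i,k} & a_{i,j}
\end{vmatrix}.
$$
Since these are determinants of submatrices of $A$, they are in $I$. We have the relations
$$
A^{(k)}_{i,j} = \frac{1}{A^{(k-2)}_{k-1,k-1}}
\begin{vmatrix} A^{(k-1)}_{k,k} & A^{(k-1)}_{k,j}\\ A^{(k-1)}_{i,k} & A^{(k-1)}_{i,j} \end{vmatrix},
$$
which were proved in [1] (with the convention $A^{(-1)}_{0,0} = 1$). From the definitions, we have
$$
\begin{aligned}
m \ge j \ge i \ge 1: &\quad a^{(i-1)}_{i,j} = \frac{A^{(i-1)}_{i,j}}{A^{(i-2)}_{i-1,i-1}},\\
n \ge j \ge i \ge 1: &\quad a^{(i-1)}_{j,i} = \frac{A^{(i-1)}_{j,i}}{A^{(i-2)}_{i-1,i-1}}.
\end{aligned}
$$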
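The two-term relation above can be checked numerically. The following Python sketch is an illustration only, assuming integer entries (so $I = \mathbb{Z}$); the helper names `det`, `minor_A` and `check_bareiss_relation` are hypothetical. It computes each $A^{(k)}_{i,j}$ directly as a determinant with exact rational arithmetic, checks that the minors are integers, and checks the relation from [1] multiplied through by $A^{(k-2)}_{k-1,k-1}$, so that no division is needed.

```python
from fractions import Fraction


def det(rows):
    """Determinant of a square matrix by Gaussian elimination over Fraction."""
    a = [[Fraction(v) for v in row] for row in rows]
    n = len(a)
    result = Fraction(1)
    for k in range(n):
        piv = next((r for r in range(k, n) if a[r][k] != 0), None)
        if piv is None:
            return Fraction(0)
        if piv != k:
            a[k], a[piv] = a[piv], a[k]
            result = -result  # a row swap flips the sign
        result *= a[k][k]
        for r in range(k + 1, n):
            f = a[r][k] / a[k][k]
            for c in range(k, n):
                a[r][c] -= f * a[k][c]
    return result


def minor_A(a, k, i, j):
    """A^{(k)}_{i,j}: determinant of rows 1..k, i and columns 1..k, j (1-based)."""
    if k == -1:
        return Fraction(1)  # convention A^{(-1)}_{0,0} = 1
    rows = list(range(k)) + [i - 1]
    cols = list(range(k)) + [j - 1]
    return det([[a[r][c] for c in cols] for r in rows])


def check_bareiss_relation(a):
    """Check A^{(k)}_{i,j} * A^{(k-2)}_{k-1,k-1} == |A_kk A_kj; A_ik A_ij| for i, j > k."""
    n, m = len(a), len(a[0])
    for k in range(1, n):
        for i in range(k + 1, n + 1):
            for j in range(k + 1, m + 1):
                lhs = minor_A(a, k, i, j) * minor_A(a, k - 2, k - 1, k - 1)
                rhs = (minor_A(a, k - 1, k, k) * minor_A(a, k - 1, i, j)
                       - minor_A(a, k - 1, i, k) * minor_A(a, k - 1, k, j))
                if lhs != rhs or minor_A(a, k, i, j).denominator != 1:
                    return False
    return True


A = [[2, 1, 3, 4], [4, 3, 7, 1], [8, 1, 9, 5], [1, 6, 2, 7]]  # arbitrary test matrix
print(check_bareiss_relation(A))
```

The relation is an instance of Sylvester's determinant identity, so it holds for any matrix; the nonzero-pivot assumption is only needed when dividing by $A^{(k-2)}_{k-1,k-1}$.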

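So
$$
\begin{aligned}
\ell_{i,k} &= \frac{a^{(k-1)}_{i,k}}{a^{(k-1)}_{k,k}} = \frac{A^{(k-1)}_{i,k}}{A^{(k-2)}_{k-1,k-1}} \cdot \frac{A^{(k-2)}_{k-1,k-1}}{A^{(k-1)}_{k,k}} = \frac{A^{(k-1)}_{i,k}}{A^{(k-1)}_{k,k}}, \qquad \ell_{k,k} = 1;\\
u_{k,j} &= \frac{a^{(k-1)}_{k,j}}{a^{(k-1)}_{k,k}} = \frac{A^{(k-1)}_{k,j}}{A^{(k-2)}_{k-1,k-1}} \cdot \frac{A^{(k-2)}_{k-1,k-1}}{A^{(k-1)}_{k,k}} = \frac{A^{(k-1)}_{k,j}}{A^{(k-1)}_{k,k}}, \qquad u_{k,k} = 1;\\
d_{k,k} &= a^{(k-1)}_{k,k} = \frac{A^{(k-1)}_{k,k}}{A^{(k-2)}_{k-1,k-1}}.
\end{aligned}
$$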

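Then the fraction free LU form $PA = LD^{-1}U$ can be written as:
$$
\begin{aligned}
L_{i,k} &= A^{(k-1)}_{i,k}, && n \ge i \ge k \ge 1,\\
U_{k,j} &= A^{(k-1)}_{k,j}, && m \ge j \ge k \ge 1,\\
D_{k,k} &= \frac{A^{(k-2)}_{k-1,k-1}}{A^{(k-1)}_{k,k}} \cdot A^{(k-1)}_{k,k} \cdot A^{(k-1)}_{k,k} = A^{(k-2)}_{k-1,k-1}\,A^{(k-1)}_{k,k}, && n \ge k \ge 1,
\end{aligned}
$$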

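which is also equivalent (after dividing the last column of $L$ and the last diagonal entry of $D$ by $A^{(n-1)}_{n,n}$) to $PA = LD^{-1}U$, where
$$
L = \begin{bmatrix}
A^{(0)}_{1,1} & & & & \\
A^{(0)}_{2,1} & A^{(1)}_{2,2} & & & \\
\vdots & \vdots & \ddots & & \\
A^{(0)}_{n-1,1} & A^{(1)}_{n-1,2} & \cdots & A^{(n-2)}_{n-1,n-1} & \\
A^{(0)}_{n,1} & A^{(1)}_{n,2} & \cdots & A^{(n-2)}_{n,n-1} & 1
\end{bmatrix},
\qquad
D = \begin{bmatrix}
A^{(0)}_{1,1} & & & & \\
& A^{(0)}_{1,1}A^{(1)}_{2,2} & & & \\
& & \ddots & & \\
& & & A^{(n-3)}_{n-2,n-2}A^{(n-2)}_{n-1,n-1} & \\
& & & & A^{(n-2)}_{n-1,n-1}
\end{bmatrix},
$$
$$
U = \begin{bmatrix}
A^{(0)}_{1,1} & A^{(0)}_{1,2} & \cdots & A^{(0)}_{1,n-1} & A^{(0)}_{1,n} & \cdots & A^{(0)}_{1,m}\\
& A^{(1)}_{2,2} & \cdots & A^{(1)}_{2,n-1} & A^{(1)}_{2,n} & \cdots & A^{(1)}_{2,m}\\
& & \ddots & \vdots & \vdots & & \vdots\\
& & & A^{(n-2)}_{n-1,n-1} & A^{(n-2)}_{n-1,n} & \cdots & A^{(n-2)}_{n-1,m}\\
& & & & A^{(n-1)}_{n,n} & \cdots & A^{(n-1)}_{n,m}
\end{bmatrix}.
$$
This is the form stated in Theorem 2.2, with $p_k = A^{(k-1)}_{k,k}$.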

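Example 2.3. Let us compute the fraction free LU factoring of the same matrix $A$ as in Example 1.1 (here, we take $P$ to be the identity matrix):
$$
A := \begin{bmatrix} x & 1 & 3\\ 3 & 4 & 7\\ 8 & 1 & 9 \end{bmatrix}; \qquad
P = \begin{bmatrix} 1 & & \\ & 1 & \\ & & 1 \end{bmatrix}.
$$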

In Example 1.1, we found that there were still fractions in the fraction free LU factoring from the Maple computation. With our new LU factoring, no fraction appears in either the upper or the lower triangular matrix:
$$
P \cdot A = \begin{bmatrix} x & & \\ 3 & 4x-3 & \\ 8 & x-8 & 1 \end{bmatrix}
\begin{bmatrix} x & & \\ & x(4x-3) & \\ & & 4x-3 \end{bmatrix}^{-1}
\begin{bmatrix} x & 1 & 3\\ & 4x-3 & 7x-9\\ & & 29x-58 \end{bmatrix}.
$$

This new output is better than the old one in two respects: first, the new LU factoring keeps the computation in the same domain; second, every division used in the new factoring is exact, whereas the fraction free LU factoring of Example 1.1 needs gcd computations to form the lower triangular matrix in Equation (1). We give a more general comparison of the time complexity of these two forms in Theorem 3.9 of Section 3.
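Because the entries of Example 2.3 are polynomials in $x$, a quick sanity check is to substitute integer values for $x$. The Python sketch below does this with exact rational arithmetic from the standard `fractions` module; the function names are hypothetical, and agreement at sample points is a check, not a proof.

```python
from fractions import Fraction


def matmul(X, Y):
    """Plain matrix product of nested lists."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def example_2_3(x):
    """A, L, diag(D), U of Example 2.3 with the symbol x replaced by an integer."""
    A = [[x, 1, 3], [3, 4, 7], [8, 1, 9]]
    L = [[x, 0, 0], [3, 4 * x - 3, 0], [8, x - 8, 1]]
    D = [x, x * (4 * x - 3), 4 * x - 3]
    U = [[x, 1, 3], [0, 4 * x - 3, 7 * x - 9], [0, 0, 29 * x - 58]]
    return A, L, D, U


def factoring_holds(x):
    """True when L * D^(-1) * U reproduces A at this value of x (x must be nonzero)."""
    A, L, D, U = example_2_3(x)
    D_inv = [[Fraction(1, D[i]) if i == j else Fraction(0) for j in range(3)]
             for i in range(3)]
    return matmul(matmul(L, D_inv), U) == A


print(all(factoring_holds(x) for x in range(-5, 6) if x != 0))
```

Since $4x - 3$ never vanishes at an integer, only $x = 0$ has to be skipped.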

3 Completely Fraction Free Algorithm and Time Complexity Analysis

Here we give an algorithm for computing a completely fraction free LU factoring (abbreviated as CFFLU). This is generic code; an actual Maple implementation would make additional optimizations with respect to different input domains.

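Algorithm 3.1. Completely Fraction free LU factoring (CFFLU)

Input: An $n \times m$ matrix $A$, with $m \ge n$.

Output: Four matrices $P, L, D, U$, where $P$ is an $n \times n$ permutation matrix, $L$ is an $n \times n$ lower triangular matrix, $D$ is an $n \times n$ diagonal matrix, $U$ is an $n \times m$ upper triangular matrix and $PA = LD^{-1}U$.

CFFLU := proc(A::Matrix)
    local U, L, DD, P, n, m, k, i, j, kpivot, Notfound, swap, oldpivot, Ukk, Uik;
    U := LinearAlgebra:-Copy(A);
    (n, m) := LinearAlgebra:-Dimension(U);
    oldpivot := 1;
    L := LinearAlgebra:-IdentityMatrix(n, n, 'compact' = false);
    DD := LinearAlgebra:-ZeroVector(n, 'compact' = false);  # diagonal of D (D is protected in Maple)
    P := LinearAlgebra:-IdentityMatrix(n, n, 'compact' = false);
    for k from 1 to n-1 do
        if U[k,k] = 0 then
            # search rows k+1..n for a non-zero pivot in column k
            kpivot := k+1;
            Notfound := true;
            while kpivot < (n+1) and Notfound do
                if U[kpivot, k] <> 0 then
                    Notfound := false;
                else
                    kpivot := kpivot + 1;
                end if;
            end do;
            if kpivot = n+1 then
                error "Matrix is rank deficient";
            else
                # swap columns k..m of U, whole rows of P,
                # and the already computed columns 1..k-1 of L
                swap := U[k, k..m]; U[k, k..m] := U[kpivot, k..m]; U[kpivot, k..m] := swap;
                swap := P[k, 1..n]; P[k, 1..n] := P[kpivot, 1..n]; P[kpivot, 1..n] := swap;
                if k > 1 then
                    swap := L[k, 1..k-1]; L[k, 1..k-1] := L[kpivot, 1..k-1];
                    L[kpivot, 1..k-1] := swap;
                end if;
            end if;
        end if;
        L[k,k] := U[k,k];
        DD[k] := oldpivot * U[k,k];
        Ukk := U[k,k];
        for i from k+1 to n do
            L[i,k] := U[i,k];
            Uik := U[i,k];
            for j from k+1 to m do
                # Bareiss step: the division by oldpivot is exact
                U[i,j] := normal((Ukk*U[i,j] - U[k,j]*Uik)/oldpivot);
            end do;
            U[i,k] := 0;
        end do;
        oldpivot := U[k,k];
    end do;
    DD[n] := oldpivot;
    return P, L, LinearAlgebra:-DiagonalMatrix(DD), U;
end proc: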
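For readers who want to experiment outside Maple, the Python sketch below transcribes Algorithm 3.1 for the special case $I = \mathbb{Z}$ (integer entries only); it is an illustration under that assumption, not the authors' implementation, and the names `cfflu` and `check` are hypothetical. Each Bareiss division is done with `divmod` and asserted to leave no remainder, and `check` confirms $PA = LD^{-1}U$ with exact fractions.

```python
from fractions import Fraction


def cfflu(A):
    """Completely fraction free LU of an integer n x m matrix (n <= m).

    Returns P, L, d, U with P*A = L * diag(d)^(-1) * U and all entries integers.
    """
    n, m = len(A), len(A[0])
    U = [list(row) for row in A]
    L = [[int(i == j) for j in range(n)] for i in range(n)]
    P = [[int(i == j) for j in range(n)] for i in range(n)]
    d = [0] * n
    oldpivot = 1
    for k in range(n - 1):
        if U[k][k] == 0:
            # find a non-zero pivot below row k and swap whole rows
            kpivot = next((r for r in range(k + 1, n) if U[r][k] != 0), None)
            if kpivot is None:
                raise ValueError("Matrix is rank deficient")
            U[k], U[kpivot] = U[kpivot], U[k]
            P[k], P[kpivot] = P[kpivot], P[k]
            # the already computed columns of L follow the row swap
            L[k][:k], L[kpivot][:k] = L[kpivot][:k], L[k][:k]
        L[k][k] = U[k][k]
        d[k] = oldpivot * U[k][k]
        for i in range(k + 1, n):
            L[i][k] = U[i][k]
            for j in range(k + 1, m):
                q, r = divmod(U[k][k] * U[i][j] - U[k][j] * U[i][k], oldpivot)
                assert r == 0, "Bareiss division must be exact"
                U[i][j] = q
            U[i][k] = 0
        oldpivot = U[k][k]
    d[n - 1] = oldpivot
    return P, L, d, U


def check(A):
    """Verify P*A == L * diag(d)^(-1) * U using exact fractions."""
    P, L, d, U = cfflu(A)
    n, m = len(A), len(A[0])
    PA = [[sum(P[i][t] * A[t][j] for t in range(n)) for j in range(m)] for i in range(n)]
    LDinv = [[Fraction(L[i][t], d[t]) for t in range(n)] for i in range(n)]
    LDU = [[sum(LDinv[i][t] * U[t][j] for t in range(n)) for j in range(m)]
           for i in range(n)]
    return PA == LDU


P, L, d, U = cfflu([[5, 1, 3], [3, 4, 7], [8, 1, 9]])  # Example 2.3 with x = 5
print(L, d, U)
```

Run on the matrix of Example 2.3 with $x = 5$, it returns $L$ with rows [5, 0, 0], [3, 17, 0], [8, −3, 1], $d = [5, 85, 17]$ and $U$ with last row [0, 0, 87], which is the factoring of Example 2.3 evaluated at $x = 5$.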

For comparison, we also recall a partially fraction free LU factoring (PFFLU).

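Algorithm 3.2. Partially Fraction free LU factoring (PFFLU)

Input: An $n \times m$ matrix $A$.

Output: Three matrices $P$, $L$ and $U$, where $P$ is an $n \times n$ permutation matrix, $L$ is an $n \times n$ lower triangular matrix, $U$ is an $n \times m$ fraction free upper triangular matrix and $PA = LU$.

PFFLU := proc(A::Matrix)
    local U, L, P, n, m, k, i, j, kpivot, Notfound, swap, oldpivot, Ukk, Uik;
    U := LinearAlgebra:-Copy(A);
    (n, m) := LinearAlgebra:-Dimension(U);
    oldpivot := 1;
    L := LinearAlgebra:-IdentityMatrix(n, n, 'compact' = false);
    P := LinearAlgebra:-IdentityMatrix(n, n, 'compact' = false);
    for k from 1 to n-1 do
        if U[k,k] = 0 then
            # search rows k+1..n for a non-zero pivot in column k
            kpivot := k+1;
            Notfound := true;
            while kpivot < (n+1) and Notfound do
                if U[kpivot, k] <> 0 then
                    Notfound := false;
                else
                    kpivot := kpivot + 1;
                end if;
            end do;
            if kpivot = n+1 then
                error "Matrix is rank deficient";
            else
                # swap columns k..m of U, whole rows of P,
                # and the already computed columns 1..k-1 of L
                swap := U[k, k..m]; U[k, k..m] := U[kpivot, k..m]; U[kpivot, k..m] := swap;
                swap := P[k, 1..n]; P[k, 1..n] := P[kpivot, 1..n]; P[kpivot, 1..n] := swap;
                if k > 1 then
                    swap := L[k, 1..k-1]; L[k, 1..k-1] := L[kpivot, 1..k-1];
                    L[kpivot, 1..k-1] := swap;
                end if;
            end if;
        end if;
        L[k,k] := 1/oldpivot;
        Ukk := U[k,k];
        for i from k+1 to n do
            # non-exact division: normal must cancel common factors (a gcd computation)
            L[i,k] := normal(U[i,k]/(oldpivot * U[k,k]));
            Uik := U[i,k];
            for j from k+1 to m do
                U[i,j] := normal((Ukk*U[i,j] - U[k,j]*Uik)/oldpivot);
            end do;
            U[i,k] := 0;
        end do;
        oldpivot := U[k,k];
    end do;
    L[n,n] := 1/oldpivot;
    return P, L, U;
end proc: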
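A matching Python sketch of Algorithm 3.2, again restricted to integer matrices as an illustrative assumption (the name `pfflu` is hypothetical), makes the contrast visible: $U$ is computed with the same exact Bareiss update, but each entry of $L$ is a `Fraction`, whose constructor reduces numerator and denominator by their gcd, which is precisely the extra work counted in Theorem 3.9.

```python
from fractions import Fraction


def pfflu(A):
    """Partially fraction free LU of an integer n x m matrix: P*A = L*U.

    U has integer entries, but L generally contains fractions.
    """
    n, m = len(A), len(A[0])
    U = [list(row) for row in A]
    L = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    P = [[int(i == j) for j in range(n)] for i in range(n)]
    oldpivot = 1
    for k in range(n - 1):
        if U[k][k] == 0:
            kpivot = next((r for r in range(k + 1, n) if U[r][k] != 0), None)
            if kpivot is None:
                raise ValueError("Matrix is rank deficient")
            U[k], U[kpivot] = U[kpivot], U[k]
            P[k], P[kpivot] = P[kpivot], P[k]
            L[k][:k], L[kpivot][:k] = L[kpivot][:k], L[k][:k]
        L[k][k] = Fraction(1, oldpivot)
        for i in range(k + 1, n):
            # non-exact division: Fraction cancels common factors with a gcd
            L[i][k] = Fraction(U[i][k], oldpivot * U[k][k])
            for j in range(k + 1, m):
                # same exact Bareiss update as in Algorithm 3.1
                U[i][j] = (U[k][k] * U[i][j] - U[k][j] * U[i][k]) // oldpivot
            U[i][k] = 0
        oldpivot = U[k][k]
    L[n - 1][n - 1] = Fraction(1, oldpivot)
    return P, L, U


P, L, U = pfflu([[5, 1, 3], [3, 4, 7], [8, 1, 9]])  # Example 1.1 with x = 5
print(L)
```

At $x = 5$ the computed $L$ has rows [1, 0, 0], [3/5, 1/5, 0], [8/5, −3/85, 1/17], which is Equation (1) evaluated at $x = 5$, while $U$ coincides with the CFFLU result.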

The main difference between Algorithm 3.1 and Algorithm 3.2 is that Algorithm 3.2 uses non-exact divisions when computing the matrix $L$. We give both algorithms in order to show the advantage of a fraction free output format.

Theorem 3.3. Let $A$ be an $n \times m$ matrix of full rank with entries in a domain $I$ and $n \le m$. On input $A$, Algorithm 3.1 outputs four matrices $P, L, D, U$ with entries in $I$ such that $PA = LD^{-1}U$, $P$ is an $n \times n$ permutation matrix, $L$ is an $n \times n$ lower triangular matrix, $D$ is an $n \times n$ diagonal matrix, and $U$ is an $n \times m$ upper triangular matrix. Furthermore, all divisions are exact.

Proof. In Algorithm 3.1, each pass through the main loop starts by finding a non-zero pivot and reorders the rows accordingly. For the sake of the proof, we can suppose that the rows have been permuted from the start, so that no permutation is necessary.

Then we prove by induction that at the end of step $k$, for $k = 1, \ldots, n-1$, we have

• $D[1] = A^{(0)}_{1,1}$ and $D[i] = A^{(i-2)}_{i-1,i-1}A^{(i-1)}_{i,i}$ for $i = 2, \ldots, k$,
• $L[i,j] = A^{(j-1)}_{i,j}$ for $j = 1, \ldots, k$ and $i = j, \ldots, n$,
• $U[i,j] = A^{(i-1)}_{i,j}$ for $i = 1, \ldots, k$ and $j = i, \ldots, m$,
• $U[i,j] = A^{(k)}_{i,j}$ for $i = k+1, \ldots, n$ and $j = k+1, \ldots, m$,
• all other entries of $L$ are those of the identity matrix, and all other entries of $D$ and $U$ are 0.

These equalities are easily checked for $k = 1$. Suppose that they hold at step $k$, and let us prove them at step $k+1$. Then:

• for $i = k+1, \ldots, n$, $L[i,k+1]$ gets the value $U[i,k+1] = A^{(k)}_{i,k+1}$,
• $D[k+1]$ gets the value $A^{(k-1)}_{k,k}A^{(k)}_{k+1,k+1}$,
• for $i = k+2, \ldots, n$ and $j = k+2, \ldots, m$, $U[i,j]$ gets the value
$$
\frac{A^{(k)}_{k+1,k+1}A^{(k)}_{i,j} - A^{(k)}_{k+1,j}A^{(k)}_{i,k+1}}{A^{(k-1)}_{k,k}} = A^{(k+1)}_{i,j},
$$
• $U[i,k+1]$ gets the value 0 for $i = k+2, \ldots, n$.

This proves our statement by induction. In particular, since every quotient computed is one of the minors $A^{(k+1)}_{i,j}$, which lie in $I$, all divisions are exact, as claimed.

In the remainder of this section, we discuss the advantages of the completely fraction free LU factoring and give the time complexity analysis for the fraction free algorithms and fraction free outputs for matrices with univariate polynomial entries and with integer entries. First, let us introduce two definitions, the length of an integer and a multiplication time (see [6, §2.1, §8.3]).

Definition 3.4. For a nonzero integer $a \in \mathbb{Z}$, we define the length $\lambda(a)$ of $a$ as
$$
\lambda(a) = \lfloor \log|a| + 1 \rfloor,
$$
where $\lfloor \cdot \rfloor$ denotes rounding down to the nearest integer.

Definition 3.5. Let $I$ be a ring (commutative, with 1). We call a function $M : \mathbb{N}_{>0} \to \mathbb{R}_{>0}$ a multiplication time for $I[x]$ if polynomials in $I[x]$ of degree less than $n$ can be multiplied using at most $M(n)$ operations in $I$. Similarly, a function $M$ as above is called a multiplication time for $\mathbb{Z}$ if two integers of length $n$ can be multiplied using at most $M(n)$ word operations.

In principle, any multiplication algorithm leads to a multiplication time. Table 1 summarizes the multiplication times for some general algorithms.

| Algorithm | $M(n)$ |
|---|---|
| classical | $O(n^2)$ |
| Karatsuba (Karatsuba & Ofman 1962) | $O(n^{1.59})$ |
| FFT multiplication (provided that $R$ supports the FFT) | $O(n \log n)$ |
| Schönhage & Strassen (1971), Schönhage (1977), Cantor & Kaltofen (1991); FFT based | $O(n \log n \log\log n)$ |

Table 1: Various polynomial multiplication algorithms and their running times.
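As a concrete instance of a subquadratic multiplication time from Table 1, here is a minimal Python sketch of Karatsuba multiplication for polynomials with integer coefficients, stored as coefficient lists with the lowest degree first. The function names and the cutoff of 16 (below which it falls back to schoolbook multiplication) are arbitrary choices for illustration, not part of the paper.

```python
import random


def poly_mul_classical(a, b):
    """Schoolbook product of coefficient lists (lowest degree first): O(n^2)."""
    if not a or not b:
        return []
    c = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            c[i + j] += ai * bj
    return c


def _add(x, y):
    """Coefficient-wise sum of two lists of possibly different lengths."""
    if len(x) < len(y):
        x, y = y, x
    return [v + (y[i] if i < len(y) else 0) for i, v in enumerate(x)]


def karatsuba(a, b, cutoff=16):
    """Karatsuba product: three half-size products instead of four."""
    if not a or not b:
        return []
    n = max(len(a), len(b))
    if n <= cutoff:
        return poly_mul_classical(a, b)
    la, lb = len(a), len(b)
    a = a + [0] * (n - la)
    b = b + [0] * (n - lb)
    h = n // 2
    a0, a1, b0, b1 = a[:h], a[h:], b[:h], b[h:]
    z0 = karatsuba(a0, b0, cutoff)                      # low * low
    z2 = karatsuba(a1, b1, cutoff)                      # high * high
    z1 = karatsuba(_add(a0, a1), _add(b0, b1), cutoff)  # (low+high)*(low+high)
    middle = list(z1)                                   # z1 - z0 - z2 = a0*b1 + a1*b0
    for i, v in enumerate(z0):
        middle[i] -= v
    for i, v in enumerate(z2):
        middle[i] -= v
    c = [0] * (2 * n - 1)
    for i, v in enumerate(z0):
        c[i] += v
    for i, v in enumerate(middle):
        c[i + h] += v
    for i, v in enumerate(z2):
        c[i + 2 * h] += v
    return c[: la + lb - 1]  # drop the zero coefficients introduced by padding


rng = random.Random(1)
a = [rng.randint(-9, 9) for _ in range(50)]
b = [rng.randint(-9, 9) for _ in range(37)]
print(karatsuba(a, b) == poly_mul_classical(a, b))
```

Replacing four half-size products by three gives the recurrence $M(n) = 3M(n/2) + O(n)$, hence $O(n^{\log_2 3}) \approx O(n^{1.59})$ as in Table 1.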

The cost of dividing two integers of length $\ell$ is $O(M(\ell))$ word operations, and the cost of a gcd computation is $O(M(\ell)\log \ell)$ word operations. For two polynomials $a, b \in K[x]$ of degrees less than $d$, where $K$ is an arbitrary field, the cost of division is $O(M(d))$, and the cost of a gcd computation is $O(M(d)\log d)$. For two univariate polynomials $a, b \in \mathbb{Z}[x]$ of degree less than $d$ and coefficient length bounded by $\ell$, if $a$ divides $b$ and the quotient has coefficients of length bounded by $\ell'$, the cost of division is $O(M(d(\max(\ell, \ell') + \log d)))$. If the division is non-exact, i.e. we need to compute the gcd of $a$ and $b$, the cost is
$$
O\Big(M(d)\log d \cdot (d+\ell) \cdot M\big(\log(d(\log d + \ell))\big) \cdot \log\log(d(\log d + \ell)) + d\,M\big((d+\ell)\log(d(\log d + \ell))\big) \cdot (\log d + \log \ell)\Big)
$$
[6].

Lemma 3.6. From [15], for a $k \times k$ matrix $A = [a_{i,j}]$ with $a_{i,j} \in \mathbb{Z}[x_1, \ldots, x_m]$ of degree and length bounded by $d$ and $\ell$ respectively, the degree and length of $\det(A)$ are bounded by $kd$ and $k(\ell + \log k + d\log(m+1))$ respectively.

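Based on the completely fraction free LU factoring Algorithm 3.1, at each $k$th step of fraction free Gaussian elimination, we have to compute
$$
U_{k,j} = A^{(k-1)}_{k,j}, \qquad L_{i,k} = A^{(k-1)}_{i,k}, \qquad D_{k,k} = A^{(k-2)}_{k-1,k-1}A^{(k-1)}_{k,k},
$$
where
$$
A^{(k-1)}_{k,j} = \det\begin{bmatrix}
a_{1,1} & a_{1,2} & \cdots & a_{1,k-1} & a_{1,j}\\
a_{2,1} & a_{2,2} & \cdots & a_{2,k-1} & a_{2,j}\\
\vdots & \vdots & & \vdots & \vdots\\
a_{k-1,1} & a_{k-1,2} & \cdots & a_{k-1,k-1} & a_{k-1,j}\\
a_{k,1} & a_{k,2} & \cdots & a_{k,k-1} & a_{k,j}
\end{bmatrix}_{k\times k}. \tag{3}
$$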

Lemma 3.7. If every entry $a_{i,j}$ of the matrix $A = [a_{i,j}]_{n\times n}$ is a univariate polynomial over a field $K$ with degree less than $d$, we have $\deg(A^{(k)}_{i,j}) \le kd$.

If every entry $a_{i,j}$ of the matrix $A = [a_{i,j}]_{n\times n}$ is in $\mathbb{Z}$ and has length bounded by $\ell$, we have $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k)$.

If every entry of the matrix $A = [a_{i,j}]_{n\times n}$ is a univariate polynomial in $\mathbb{Z}[x]$ with degree less than $d$ and coefficient length bounded by $\ell$, we have $\deg(A^{(k)}_{i,j}) \le kd$ and $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k + d\log 2)$.

Proof. If $a_{i,j} \in K[x]$ has degree less than $d$, then from Lemma 3.6 we have $\deg(A^{(k)}_{i,j}) \le kd$. If $a_{i,j} \in \mathbb{Z}$ has length bounded by $\ell$, then from Equation (3) and Lemma 3.6 with $m = 0$, we have $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k)$. If $a_{i,j} \in \mathbb{Z}[x]$ has degree less than $d$ and coefficient length bounded by $\ell$, then from Equation (3) and Lemma 3.6 with $m = 1$, we have $\deg(A^{(k)}_{i,j}) \le kd$ and $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k + d\log 2)$.

In the remainder of this section, we show that the difference between the partially fraction free LU factoring and our completely fraction free LU factoring lies in the divisions used to compute their lower triangular matrices $L$. We discuss only three cases here. In case 1, we analyze the cost of the two algorithms for $A$ with entries in $K[x]$, where $K$ is a field, i.e. we only consider the growth of degree during the factoring. In case 2, we analyze the cost of the two algorithms for $A$ with entries in $\mathbb{Z}$, i.e. we only consider the growth of length during the factoring. In case 3, we analyze the cost of both algorithms for $A$ with entries in $\mathbb{Z}[x]$. For other cases, such as entries in $\mathbb{Z}[x_1, \ldots, x_m]$, the basic idea is the same as in these three basic cases.

Theorem 3.8. For a matrix $A = [a_{i,j}]_{n\times n}$ with entries in $K[x]$, if every $a_{i,j}$ has degree less than $d$, the time complexity of completely fraction free LU factoring for $A$ is bounded by $O(n^3 M(nd))$ operations in $K$.

For a matrix $A = [a_{i,j}]_{n\times n}$ with entries in $\mathbb{Z}$, if every $a_{i,j}$ has length bounded by $\ell$, the time complexity of completely fraction free LU factoring for $A$ is bounded by $O(n^3 M(n\log n + n\ell))$ word operations.

For a matrix $A = [a_{i,j}]_{n\times n}$ with univariate polynomial entries in $\mathbb{Z}[x]$, if every $a_{i,j}$ has degree less than $d$ and coefficient length bounded by $\ell$, the time complexity of completely fraction free LU factoring for $A$ is bounded by $O(n^3 M(n^2 d\ell + nd^2))$ word operations.

Proof. Let case 1 be the case $a_{i,j} \in K[x]$ with $d = \max_{i,j}\deg(a_{i,j}) + 1$, case 2 the case $a_{i,j} \in \mathbb{Z}$ with $\ell = \max_{i,j}\lambda(a_{i,j})$, and case 3 the case $a_{i,j} \in \mathbb{Z}[x]$ with $d = \max_{i,j}\deg(a_{i,j}) + 1$ and $\ell = \max_{i,j}\lambda(a_{i,j})$. From Lemma 3.7, at each step $k$, $\deg(A^{(k)}_{i,j}) \le kd$ in case 1, $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k)$ in case 2, and $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k + d\log 2)$ and $\deg(A^{(k)}_{i,j}) \le kd$ in case 3.

When we do completely fraction free LU factoring, at the $k$th step we have $(n-k)^2$ entries to compute. For each new entry from step $k-1$ to step $k$, we need to do at most two multiplications, one subtraction and one division. The cost is bounded by $O(M(kd))$ for case 1, by $O(M(k(\ell + \log k)))$ for case 2, and by $O(M(kd((2k\ell + \log(2k) + d\log 2) + \log(kd))))$ for case 3.

Let $c_1$, $c_2$ and $c_3$ be constants such that the previous estimates are bounded by
$$
c_1 \times M(kd), \qquad c_2 \times M(k(\ell + \log k)) \qquad \text{and} \qquad c_3 \times M(2k^2 d\ell + kd\log(2k) + kd^2\log 2 + kd\log(kd)).
$$
For case 1, the total cost for the completely fraction free LU factoring is bounded by
$$
\sum_{k=1}^{n-1} c_1 (n-k)^2 M(kd) = O(n^3 M(nd)).
$$
For case 2, the total cost for the completely fraction free LU factoring is bounded by
$$
\sum_{k=1}^{n-1} c_2 (n-k)^2 M(k(\ell + \log k)) = O(n^3 M(n\log n + n\ell)).
$$
For case 3, the total cost for the completely fraction free LU factoring is bounded by
$$
\sum_{k=1}^{n-1} c_3 (n-k)^2 M(2k^2 d\ell + kd\log(2k) + kd^2\log 2 + kd\log(kd)) = O(n^3 M(n^2 d\ell + nd^2)).
$$

The extra gcd computation in Algorithm 3.2 when computing L[i,k] := U[i,k]/(oldpivot*U[k,k]) makes the partially fraction free LU factoring more expensive, as shown in the following theorem.

Theorem 3.9. For a matrix $A = [a_{i,j}]_{n\times n}$, if every $a_{i,j} \in K[x]$ has degree less than $d$, the time complexity of the partially fraction free LU factoring for $A$ is bounded by $O((n^2\log(nd) + n^3)M(nd))$.

For a matrix $A = [a_{i,j}]_{n\times n}$, if every $a_{i,j} \in \mathbb{Z}$ has length bounded by $\ell$, the time complexity of partially fraction free LU factoring for $A$ is bounded by $O((n^2\log(n\log n + n\ell) + n^3)M(n\log n + n\ell))$.

For a matrix $A = [a_{i,j}]_{n\times n}$, if every $a_{i,j} \in \mathbb{Z}[x]$ has degree less than $d$ and coefficient length bounded by $\ell$, the time complexity of the partially fraction free LU factoring for $A$ is bounded by $O(n^3 M(n^2 d\ell + nd^2) + n^2\delta_M)$, where
$$
\delta_M = M(nd)\log(nd)\,\big(n(\ell + \log n + d)\big)\,M\big(\log(nd(\ell + d))\big)\log\log(nd(\ell + d)) + nd\,M\big(n(\ell + \log n + d)\log(nd(\ell + d))\big)\log(n(\ell + d)).
$$

Proof. As above, case 1 is $a_{i,j} \in K[x]$ with $d = \max_{i,j}\deg(a_{i,j}) + 1$, case 2 is $a_{i,j} \in \mathbb{Z}$ with $\ell = \max_{i,j}\lambda(a_{i,j})$, and case 3 is $a_{i,j} \in \mathbb{Z}[x]$ with $d = \max_{i,j}\deg(a_{i,j}) + 1$ and $\ell = \max_{i,j}\lambda(a_{i,j})$. From Lemma 3.7, at each step $k$, $\deg(A^{(k)}_{i,j}) \le kd$ in case 1, $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k)$ in case 2, and $\lambda(A^{(k)}_{i,j}) \le k(\ell + \log k + d\log 2)$ and $\deg(A^{(k)}_{i,j}) \le kd$ in case 3.

When we do partially fraction free LU factoring, at the $k$th step we need to do the same computation for the matrix $U$ as in Algorithm 3.1. In addition, the $n-k$ entries of the $k$th column of $L$ each require a gcd computation in their division, in order to cancel common factors.

For case 1, the total cost for the partially fraction free LU factoring is bounded by
$$
\sum_{k=1}^{n-1}\big(c_1(n-k)^2 M(kd) + c_2(n-k)M(kd)\log(kd)\big) = O((n^2\log(nd) + n^3)M(nd)),
$$
where $c_1$ and $c_2$ are constants.

For case 2, the total cost for the partially fraction free LU factoring is bounded by
$$
\sum_{k=1}^{n-1}\big(c_3(n-k)^2 M(k(\ell + \log k)) + c_4(n-k)M(k(\ell + \log k))\log(k(\ell + \log k))\big) = O((n^2\log(n\log n + n\ell) + n^3)M(n\log n + n\ell)),
$$
where $c_3$ and $c_4$ are constants.

For case 3, after some simplification, the total cost for the partially fraction free LU factoring is bounded by
$$
O(n^3 M(n^2 d\ell + nd^2) + n^2\delta_M),
$$
where
$$
\delta_M = \big(n(\ell + \log n + d)\big)\log(nd)\log\log(nd(\ell + d))\,M(nd)\,M\big(\log(nd(\ell + d))\big) + nd\log(n(\ell + d))\,M\big(n(\ell + \log n + d)\log(nd(\ell + d))\big).
$$
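The operation counts behind the two proofs can be made concrete. This small Python sketch (function names hypothetical) only evaluates the sums used above for square $n \times n$ matrices: the $(n-k)^2$ entries of $U$ updated at step $k$ by both algorithms, and the $n-k$ extra gcd-based divisions that only Algorithm 3.2 performs for column $k$ of $L$. The first total grows like $n^3/3$ and the second like $n^2/2$, which is why the gcd term enters Theorem 3.9 with an $n^2$ factor.

```python
def cfflu_entry_updates(n):
    """Entries U[i, j] recomputed on an n x n matrix: (n - k)^2 at step k = 1..n-1."""
    return sum((n - k) ** 2 for k in range(1, n))


def pfflu_extra_gcds(n):
    """Extra non-exact divisions in Algorithm 3.2: n - k entries L[i, k] at step k."""
    return sum(n - k for k in range(1, n))


for n in (10, 100, 1000):
    print(n, cfflu_entry_updates(n), pfflu_extra_gcds(n))
```

For $n = 10$ this gives 285 entry updates against 45 extra gcd computations, matching the closed forms $(n-1)n(2n-1)/6$ and $n(n-1)/2$.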

Comparing Theorem 3.8 with Theorem 3.9, we see that partially fraction free LU factoring costs a little more than our completely fraction free LU factoring. More precisely, consider for instance the case of polynomial matrices. For fixed degree $d$, both algorithms have complexity $O(n^3 M(n))$. However, when $n$ is fixed, the partially fraction free LU factoring algorithm has complexity $O(M(d)\log d)$, whereas ours has the better bound $O(M(d))$. The behavior is the same for the other classes of matrices and will be confirmed by our benchmarks.

4 Benchmarks

We compare Algorithm 3.1 and Algorithm 3.2 on matrices with integer entries and univariate polynomial entries. We do not give a benchmark for matrices with multivariate polynomial entries because the running time is too long for matrices of size larger than 10. We also measure the time of the Maple command for fraction free LU. Because the implementation details of this Maple command are not published, we use it only as a reference.

As noted above, the main difference between Algorithm 3.1 and Algorithm 3.2 lies in their divisions. In our code, we use the divide command for exact division in the univariate polynomial case and the iquo command in the integer case, instead of the normal command. All results are obtained using the TTY version of Maple 10, running on a 2.8 GHz Intel P4 with 1024 MB of memory under Linux, with the time limit set to 2000 seconds for each fraction free factoring operation and the garbage collection "frequency" (gcfreq) set to $2 \cdot 10^7$ bytes.

In the following tables, we label the time used by Algorithm 3.1 as CFFLU, the time used by Algorithm 3.2 as PFFLU and the time used by the Maple command LUDecomposition(A, method = FractionFree) as MapleLU. We also denote the size of a matrix by $n$ and the length of an integer entry of a matrix by $\ell$.

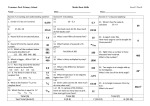

Table 2 gives the times of the three algorithms on matrices of different sizes with integer entries of fixed length (drawn in Figure 1). Table 3 lists the logarithm of the time of completely fraction free LU factoring against the logarithm of the size of the matrix. If we use the Maple command Fit(a + b · t, x, y, t) to fit Table 3, we find a slope equal to 4.335. This tells us that the relation between the time used by completely fraction free LU factoring and the size of the matrix is $t = O(n^{4.335})$. In view of Theorem 3.8, this suggests that $M(n\log n)$ behaves like $O(n^{1.335})$ for integer multiplication in our range. This subquadratic behavior is consistent with the fact that Maple uses the GMP library for long integer multiplication. We could also use block operations instead of explicit loops in our code: this is an efficient method in Matlab, but it is 10 times slower in Maple.
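Assuming that Fit performs an ordinary linear least-squares fit here, the exponent can be cross-checked outside Maple. The Python sketch below (the helper name `ls_slope` is hypothetical) fits a straight line through the points $(\ln n, \ln t)$ built from the CFFLU timings of Table 2; it should give a slope of about 4.33.

```python
import math


def ls_slope(xs, ys):
    """Ordinary least-squares slope b of the model y = a + b*x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# CFFLU column of Table 2 (seconds) for n = 10, 20, ..., 170
sizes = list(range(10, 171, 10))
cfflu_times = [1.00e-02, 0.133, 0.735, 2.617, 6.94, 15.672, 32.003, 56.598, 97.881,
               155.757, 237.916, 351.737, 512.428, 709.42, 975.429, 1298.419, 1714.003]
slope = ls_slope([math.log(n) for n in sizes], [math.log(t) for t in cfflu_times])
print(f"fitted exponent: {slope:.3f}")
```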

| $n$ | CFFLU | PFFLU | MapleLU |
|---|---|---|---|
| 10 | 1.00E-02 | 1.40E-02 | 2.80E-02 |
| 20 | 0.133 | 0.188 | 0.263 |
| 30 | 0.735 | 0.959 | 1.375 |
| 40 | 2.617 | 4.127 | 4.831 |
| 50 | 6.94 | 8.622 | 13.182 |
| 60 | 15.672 | 19.574 | 31.342 |
| 70 | 32.003 | 35.938 | 61.974 |
| 80 | 56.598 | 65.553 | 117.111 |
| 90 | 97.881 | 110.931 | 208.665 |
| 100 | 155.757 | 171.989 | 335.407 |
| 110 | 237.916 | 264.304 | 531.193 |
| 120 | 351.737 | 396.445 | 803.898 |
| 130 | 512.428 | 562.352 | 1184.575 |
| 140 | 709.42 | 775.139 | 1684.698 |
| 150 | 975.429 | 1060.02 | >2000 |
| 160 | 1298.419 | 1410.204 | >2000 |
| 170 | 1714.003 | 1868.879 | >2000 |

Table 2: Timings (in seconds) for fraction free LU factoring of random integer matrices generated by RandomMatrix(n,n, generator = $-10^{100}..10^{100}$).

For fixed-size matrices with integer entries, we have the following two tables. Table 4 gives the times of the three algorithms on matrices with integer entries of different lengths (drawn in Figure 3). Table 5 lists the logarithm of the time of completely fraction free LU factoring against the logarithm of the length of the integer entries. If we use the Maple command Fit(a + b · t, x, y, t) to fit Table 5, we find a slope equal to 1.265. This tells us that the relation between the time used by completely fraction free LU factoring and the length of the integer entries is $t = O(\ell^{1.265})$. This suggests (by Theorem 3.8) that $M(\ell) = O(\ell^{1.265})$ in the considered range.
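The same least-squares fit can be repeated on the CFFLU column of Table 4, again assuming Fit computes an ordinary linear least-squares line. Since these timings are printed only to the nearest millisecond, the slope obtained from them need not reproduce 1.265 to three decimals; the sketch below (redefining the hypothetical helper `ls_slope` so that the block is self-contained) should give an exponent between 1.2 and 1.35.

```python
import math


def ls_slope(xs, ys):
    """Ordinary least-squares slope b of the model y = a + b*x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# CFFLU column of Table 4 (seconds) for entry lengths 400, 800, ..., 6000
lengths = list(range(400, 6001, 400))
cfflu_times = [4.00e-03, 7.00e-03, 1.00e-02, 1.40e-02, 2.20e-02, 2.80e-02, 3.60e-02,
               4.40e-02, 4.90e-02, 6.30e-02, 7.10e-02, 7.90e-02, 7.50e-02, 9.40e-02,
               9.90e-02]
slope = ls_slope([math.log(length) for length in lengths],
                 [math.log(t) for t in cfflu_times])
print(f"fitted exponent: {slope:.3f}")
```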

For matrices with univariate polynomial entries, we have the following two tables. Table

6 gives Figure 5. We can see that completely fraction free LU factoring is a little bit faster

than the fraction free LU factoring given in Algorithm 3.2. It has a similar speed as the

Maple command. Table 7 gives the logarithms of the times used by completely fraction free

LU factoring and the sizes of matrices. If we use the Maple command Fit(a + b · t, x, y, t)

to fit Table 7, we find a slope equal to 5.187, i.e. we have O(n2.187 ) in our implementation.

5

Application of Completely Fraction Free LU Factoring

In this section, we give applications of our completely fraction free LU factoring. Our first

application is to solve a symbolic linear system of equations in a domain. We will introduce

fraction-free forward and backward substitutions from [18]. Our second application is to

get a new completely fraction free QR factoring, using the relation between LU factoring

and QR factoring given in [20].

15

ln(n)

2.302585093

2.995732274

3.401197382

3.688879454

3.912023005

4.094344562

4.248495242

4.382026635

4.49980967

4.605170186

4.700480366

4.787491743

4.86753445

4.941642423

5.010635294

5.075173815

5.135798437

ln(CFFLU)

-4.605170186

-2.017406151

-0.30788478

0.962028624

1.937301775

2.751875681

3.465829648

4.035973649

4.583752455

5.0482971

5.47191767

5.862883737

6.239160213

6.564447735

6.882877374

7.168902649

7.446586849

Table 3: Logarithms of timings for completely fraction free LU factoring of random integer

matrices from Table 2

ℓ       CFFLU      PFFLU      MapleLU
400     4.00E-03   6.00E-03   9.00E-03
800     7.00E-03   1.30E-02   1.80E-02
1200    1.00E-02   2.30E-02   3.10E-02
1600    1.40E-02   3.30E-02   4.80E-02
2000    2.20E-02   5.20E-02   6.90E-02
2400    2.80E-02   6.90E-02   9.30E-02
2800    3.60E-02   9.10E-02   0.121
3200    4.40E-02   0.114      0.155
3600    4.90E-02   0.137      0.19
4000    6.30E-02   0.17       0.231
4400    7.10E-02   0.195      0.272
4800    7.90E-02   0.235      0.334
5200    7.50E-02   0.249      0.37
5600    9.40E-02   0.29       0.425
6000    9.90E-02   0.324      0.485

Table 4: Timings for fraction free LU factoring of random 5 × 5 integer matrices with entry length ℓ, generated by A := LinearAlgebra[RandomMatrix](5, 5, generator = -10^ℓ..10^ℓ)


ln(ℓ)          ln(CFFLU)
5.991464547    -5.521460918
6.684611728    -4.96184513
7.090076836    -4.605170186
7.377758908    -4.268697949
7.60090246     -3.816712826
7.783224016    -3.575550769
7.937374696    -3.324236341
8.070906089    -3.123565645
8.188689124    -3.015934981
8.29404964     -2.764620553
8.38935982     -2.645075402
8.476371197    -2.538307427
8.556413905    -2.590267165
8.630521877    -2.364460497
8.699514748    -2.312635429

Table 5: Logarithms of timings for completely fraction free LU factoring of random integer matrices from Table 4

n     CFFLU      PFFLU      MapleLU
5     8.00E-03   1.20E-02   2.50E-02
10    0.242      0.335      0.305
15    2.322      2.77       3.098
20    10.028     11.873     10.598
25    31.971     35.124     33.316
30    86.361     92.146     86.01
35    179.937    189.297    181.42
40    354.22     373.181    354.728
45    664.608    686.26     667.406
50    1178.374   1210.766   1179.499

Table 6: Timings for fraction free LU factoring of random matrices with univariate polynomial entries, generated by RandomMatrix(n, n, generator = (() -> randpoly([x], degree = 4))).


Figure 1: Times for random integer matrices (axes: size, time). The line style of CFFLU is SOLID, the line style of PFFLU is DASH, and the line style of MapleLU is DASHDOT.

5.1 Fraction Free Forward and Backward Substitutions

In order to solve a linear system of equations in one domain, we need not only fraction free

LU factoring of the coefficient matrix but also fraction free forward substitution (FFFS)

and fraction free backward substitution (FFBS) algorithms.

Let A be an n × n matrix, and let P, L, D, U be as in Theorem 2.2 with m = n.

Definition 5.1. Given a vector b with entries in I, fraction free forward substitution consists of finding a vector Y such that $LD^{-1}Y = Pb$ holds.

Theorem 5.2. The vector Y from Definition 5.1 has entries in I.

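Proof. From the proof of Theorem 2.2, if $PA = LD^{-1}U$, then for $i = 1, \dots, n$ we have

$$L_{i,i} = A^{(i-1)}_{i,i} \quad\text{and}\quad D_{i,i} = A^{(i-2)}_{i-1,i-1}\,A^{(i-1)}_{i,i}.$$

Writing $LD^{-1}Y = Pb$ row by row, i.e.

$$Y_i = \frac{D_{i,i}}{L_{i,i}} \Big[ b^P_i - \sum_{k=1}^{i-1} \frac{L_{i,k}\,Y_k}{D_{k,k}} \Big],$$

where $b^P_i = \sum_{j=1}^{n} P_{i,j} b_j$, we see that $D_{i,i}/L_{i,i} = A^{(i-2)}_{i-1,i-1}$ is an exact division. Moreover, $Y = DL^{-1}Pb$ is the column obtained by applying to $Pb$ the same fraction-free row operations that reduce $PA$ to $U = DL^{-1}PA$; hence each $Y_i$ is the determinant of the submatrix of $[PA \mid Pb]$ formed by its first i rows, its first i - 1 columns and its last column, and so lies in I, even though the individual terms $L_{i,k}Y_k/D_{k,k}$ need not. So the solution $Y_i$ is fraction free for every $i = 1, \dots, n$.

Figure 2: Logarithms of completely fraction free LU time and integer matrix size, slope = 4.335 (axes: ln_size, ln_CFFLU).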

Definition 5.3. Given the vector Y from Definition 5.1, fraction free backward substitution consists of finding a vector X such that $UX = U_{n,n}Y$ holds.

Theorem 5.4. The vector X from Definition 5.3 has entries in I and satisfies $AX = \det(A)\,b$.

Proof. As proved in Theorem 2.2, $U_{n,n}$ is the determinant of A. From the relations

$$UX = U_{n,n}Y \quad\text{and}\quad LD^{-1}Y = Pb,$$

we get

$$PAX = LD^{-1}UX = \det(A)\,LD^{-1}Y = \det(A)\,Pb.$$

Since P is invertible,

$$AX = \det(A)\,b.$$

Let B be the adjoint matrix of A, so that $BA = \det(A)\,I_n$, where $I_n$ is the n × n identity matrix. Multiplying $AX = \det(A)\,b$ on the left by B gives $\det(A)\,X = \det(A)\,Bb$; since $\det(A) \neq 0$ and I is an integral domain, we deduce that $X = Bb$. Since B has entries in I, the result follows.

Figure 3: Times for random integer matrices (axes: length ×10^3, time). The line style of CFFLU is SOLID, the line style of PFFLU is DASH, and the line style of MapleLU is DASHDOT.

If we have a list of linear systems with the same n × n coefficient matrix A, i.e. $Ax = b_1, Ax = b_2, Ax = b_3, \dots$, then once the completely fraction free LU decomposition $PA = LD^{-1}U$ has been computed, the number of operations for solving each further system is reduced from $O(n^3)$ to $O(n^2)$ for a matrix with floating point entries. Furthermore, for a matrix with symbolic entries, for example an integer matrix whose entries have length at most ℓ, the time complexity is reduced from $O(n^3 M(n \log n + n\ell))$ to $O(n^2 M(n \log n + n\ell))$.

Here we give our fraction free forward and backward substitution algorithms using the

same notation as before.

Figure 4: Logarithms of completely fraction free LU time and integer length, slope = 1.265 (axes: ln_length, ln_time).

Algorithm 5.5. Fraction free forward substitution

Input: Matrices L, D, P and a vector b

Output: An n × 1 vector Y such that $LD^{-1}Y = Pb$

For i from 1 to n do
$b^P_i = \sum_{j=1}^{n} P_{i,j} b_j$,
$Y_i = \dfrac{D_{i,i}}{L_{i,i}} \Big[ b^P_i - \sum_{k=1}^{i-1} \dfrac{L_{i,k}\,Y_k}{D_{k,k}} \Big]$,
end loop;
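The following Python sketch transcribes Algorithm 5.5 over I = Z as a function fffs, and adds a variant fffs_exact that is our own assumption rather than part of the paper's algorithm: it applies to Pb the same fraction-free elimination updates that produce U, so that every division is exact and no intermediate value leaves Z. Both function names are hypothetical. The test data reuse L and D from Example 5.10, restricted to the coefficient matrix $A^TA$, with P the identity.

```python
from fractions import Fraction


def fffs(L, D, P, b):
    """Algorithm 5.5: return Y with L D^{-1} Y = P b.

    Evaluates Y_i = (D_ii / L_ii) * (bP_i - sum_{k<i} L_ik * Y_k / D_kk)
    with exact rationals; Theorem 5.2 guarantees every Y_i is in Z.
    """
    n = len(b)
    bP = [sum(P[i][j] * b[j] for j in range(n)) for i in range(n)]
    Y = []
    for i in range(n):
        acc = Fraction(bP[i])
        for k in range(i):
            acc -= Fraction(L[i][k] * Y[k], D[k][k])
        y = Fraction(D[i][i], L[i][i]) * acc
        if y.denominator != 1:
            raise ArithmeticError("Y_i not integral: L, D, P are not a valid factorization")
        Y.append(y.numerator)
    return Y


def fffs_exact(L, D, P, b):
    """Variant (an assumption, not Algorithm 5.5): same Y, exact divisions only.

    Applies to P b the fraction-free elimination steps that reduce P A to U:
    at step k the pivot is p_k = L_kk and the previous pivot is D_kk / L_kk.
    """
    n = len(b)
    y = [sum(P[i][j] * b[j] for j in range(n)) for i in range(n)]
    for k in range(n - 1):
        p_k = L[k][k]
        p_prev = D[k][k] // L[k][k]
        for i in range(k + 1, n):
            y[i] = (p_k * y[i] - L[i][k] * y[k]) // p_prev
    return y


# Factors of the left block A^T A of Example 5.10 (no row exchanges, so P = I).
L = [[2, 0, 0], [4, 12, 0], [6, -12, 1]]
D = [[2, 0, 0], [0, 24, 0], [0, 0, 12]]
P = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
b = [1, 1, 1]
print(fffs(L, D, P, b), fffs_exact(L, D, P, b))
```

Both calls return [1, -2, -36] for this input. The first evaluates the bracket over the rationals, exactly as the formula is written; the second keeps every intermediate value in Z at the same $O(n^2)$ cost, provided L and D come from the fraction-free elimination of PA.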

Algorithm 5.6. Fraction free backward substitution

Input: A matrix U and a vector Y from $LD^{-1}Y = Pb$.

Output: A scaled solution X, i.e. $UX = U_{n,n}Y$

For i from n by -1 to 1 do
$X_i = \dfrac{1}{U_{i,i}} \Big[ U_{n,n} Y_i - \sum_{k=i+1}^{n} U_{i,k} X_k \Big]$,
end loop;
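A direct Python transcription of Algorithm 5.6 follows, again only a sketch over I = Z with a hypothetical function name ffbs. The test data are the left block of U from Example 5.10, i.e. the coefficient matrix M = $A^TA$ of that example, so that $U_{n,n} = 48 = \det M$, together with Y = (1, -2, -36), which is what Algorithm 5.5 yields for b = (1, 1, 1) with the factors of that example.

```python
def ffbs(U, Y):
    """Algorithm 5.6: return X with U X = U_nn * Y.

    Evaluates X_i = (U_nn * Y_i - sum_{k>i} U_ik X_k) / U_ii from the bottom up;
    by Theorem 5.4 every division is exact, which divmod checks.
    """
    n = len(Y)
    u_nn = U[n - 1][n - 1]
    X = [0] * n
    for i in range(n - 1, -1, -1):
        s = u_nn * Y[i] - sum(U[i][k] * X[k] for k in range(i + 1, n))
        q, r = divmod(s, U[i][i])
        if r != 0:
            raise ArithmeticError("inexact division: U and Y are inconsistent")
        X[i] = q
    return X


# Coefficient matrix M = A^T A of Example 5.10 and the left block of its U.
M = [[2, 4, 6], [4, 14, 6], [6, 6, 28]]
U = [[2, 4, 6], [0, 12, -12], [0, 0, 48]]
Y = [1, -2, -36]
X = ffbs(U, Y)
# M X should equal det(M) * b = 48 * (1, 1, 1).
print(X, [sum(M[i][j] * X[j] for j in range(3)) for i in range(3)])
```

It returns X = (220, -44, -36), and MX = (48, 48, 48) = det(M) b, as Theorem 5.4 predicts; X is the adjoint of M applied to b.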

5.2 Fraction Free QR Factoring

In a numerical context, the QR factoring of a matrix is well known. We recall that this amounts to finding Q and R such that A = QR, with R upper triangular and Q an orthonormal matrix (i.e. having the property $Q^TQ = QQ^T = I$).

Figure 5: Times for random matrices with univariate entries (axes: size, time). The line style of CFFLU is SOLID, the line style of PFFLU is DASH, and the line style of MapleLU is DASHDOT.

Pursell and Trimble [20] have given a simple algorithm for computing the QR factoring via LU factoring; it has some surprisingly happy side effects, although it is not numerically preferable to existing algorithms. We use their idea, together with our completely fraction-free LU factoring, to obtain a fraction free QR factoring in terms of left orthogonal matrices. In Theorem 5.7 we prove the existence of the fraction free QR factoring, and Algorithm 5.9 uses completely fraction-free LU factoring to compute it for a given matrix, i.e. to orthogonalize a given set of vectors, namely the columns of the matrix.

Theorem 5.7. Let I be an integral domain and A an n × m matrix whose columns are linearly independent vectors in $I^n$. There exist an m × m lower triangular matrix L, an m × m diagonal matrix D, an m × m upper triangular matrix U and an n × m left orthogonal matrix Θ such that

$$[A^TA \mid A^T] = LD^{-1}[U \mid \Theta^T]. \tag{4}$$

Definition 5.8. Under the hypotheses of Theorem 5.7, the fraction free QR factoring of A is

$$A = \Theta D^{-1} R, \tag{5}$$

where $R = L^T$.

Proof. The linear independence of the columns of A implies that $A^TA$ is a full rank matrix. Hence there are m × m fraction free matrices L, D and U as in Theorem 2.2 such that

$$A^TA = LD^{-1}U. \tag{6}$$

Applying the same row operations to the matrix $A^T$, we obtain a fraction free m × n matrix. Let us call this matrix $\Theta^T$, so that

$$\Theta^T = DL^{-1}A^T. \tag{7}$$

Then

$$\Theta^T\Theta = DL^{-1}A^T \cdot A(DL^{-1})^T = DL^{-1}(LD^{-1}U)(DL^{-1})^T = U(DL^{-1})^T.$$

Since U and $(DL^{-1})^T$ are both upper triangular, their product $\Theta^T\Theta$ is upper triangular; being also symmetric, it must be a diagonal matrix. So the columns of Θ are mutually orthogonal, i.e. Θ is left orthogonal.

From Equation (7), we have $A^T = LD^{-1}\Theta^T$, i.e. $A = \Theta(LD^{-1})^T = \Theta(D^T)^{-1}L^T = \Theta D^{-1}L^T$. Setting $R = L^T$, R is a fraction free upper triangular matrix.

ln(n)          ln(CFFLU)
1.609437912    -4.828313737
2.302585093    -1.418817553
2.708050201    0.842428883
2.995732274    2.30538118
3.218875825    3.464829242
3.401197382    4.458536185
3.555348061    5.19260679
3.688879454    5.869918189
3.80666249     6.499197393
3.912023005    7.071890801

Table 7: Logarithms of timings for completely fraction free LU factoring of random matrices from Table 6

Figure 6: Logarithms of completely fraction free LU time and random univariate polynomial matrix size, slope = 5.187 (axes: ln_size, ln_time).

In Algorithm 5.9 below, we assume the existence of a function CFFLU implementing Algorithm 3.1.

Algorithm 5.9. Fraction free QR factoring

Input: An n × m matrix A

Output: Three matrices Θ, D, R, where Θ is an n × m left orthogonal matrix, D is an m × m diagonal matrix, R is an m × m upper triangular matrix, and $A = \Theta D^{-1}R$

1. compute $B := [A^TA \mid A^T]$
2. L, D, U := CFFLU(B)
3. for i = 1...m, j = 1...n: $\Theta_{j,i} := U_{i,m+j}$, end loops
4. for i = 1...m, j = 1...m: $R_{j,i} := L_{i,j}$, end loops

¤
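Algorithm 5.9 can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' implementation: bareiss_ldu is a hypothetical stand-in for CFFLU that performs fraction-free (Bareiss) elimination without row exchanges, which suffices when the leading principal minors of $A^TA$ are nonzero (for instance for an integer A with linearly independent columns, since $A^TA$ is then positive definite). It uses the convention $L_{k,k} = p_k$, $D_{k,k} = p_{k-1}p_k$, where $p_k$ is the kth pivot. The names ff_qr and times_dinv are likewise hypothetical.

```python
from fractions import Fraction


def bareiss_ldu(B, m):
    """Fraction-free elimination on the first m columns of the m x c matrix B.

    Returns integer matrices L, D, U with B = L D^{-1} U, using the convention
    L[k][k] = p_k and D[k][k] = p_{k-1} * p_k (with p_0 = 1), where p_k is the
    k-th pivot.  No row exchanges: all leading principal minors of the left
    m x m block of B are assumed to be nonzero.
    """
    c = len(B[0])
    U = [list(row) for row in B]
    L = [[0] * m for _ in range(m)]
    D = [[0] * m for _ in range(m)]
    prev = 1  # previous pivot p_{k-1}
    for k in range(m):
        piv = U[k][k]
        if piv == 0:
            raise ValueError("zero pivot: row exchanges are not implemented")
        L[k][k] = piv
        D[k][k] = prev * piv
        for i in range(k + 1, m):
            mult = U[i][k]          # stage-k entry below the pivot
            L[i][k] = mult
            for j in range(k, c):
                # Bareiss update; the division by prev is exact.
                U[i][j] = (piv * U[i][j] - mult * U[k][j]) // prev
        prev = piv
    return L, D, U


def ff_qr(A):
    """Fraction-free QR of an n x m integer matrix A: returns Theta, D, R."""
    n, m = len(A), len(A[0])
    At = [[A[i][j] for i in range(n)] for j in range(m)]
    AtA = [[sum(At[i][k] * A[k][j] for k in range(n)) for j in range(m)]
           for i in range(m)]
    B = [AtA[i] + At[i] for i in range(m)]                       # step 1
    L, D, U = bareiss_ldu(B, m)                                   # step 2
    Theta = [[U[i][m + j] for i in range(m)] for j in range(n)]  # step 3
    R = [[L[j][i] for j in range(m)] for i in range(m)]          # step 4: R = L^T
    return Theta, D, R


def times_dinv(Theta, D, R):
    """Exact product Theta D^{-1} R over the rationals."""
    m = len(R)
    return [[sum(Fraction(row[k], D[k][k]) * R[k][j] for k in range(m))
             for j in range(m)] for row in Theta]


# Example 5.10: the columns of A are a1, a2, a3.
A = [[0, -2, 1], [1, 3, 1], [0, 0, 1], [1, 1, 5]]
Theta, D, R = ff_qr(A)
print(times_dinv(Theta, D, R) == A)   # A = Theta D^{-1} R
gram = [[sum(Theta[r][i] * Theta[r][j] for r in range(len(A))) for j in range(3)]
        for i in range(3)]
print(gram)                           # Theta^T Theta, which should be diagonal
```

For the matrix A of Example 5.10 the check prints True, Θ coincides with the Θ of that example, and $\Theta^T\Theta$ = diag(2, 24, 576), which is diagonal as Theorem 5.7 asserts. In this convention D = diag(2, 24, 576) and R has last diagonal entry 48, whereas the example shows 12 and 1; the ratio $L_{m,m}/D_{m,m}$ is 1/12 in both cases, so $LD^{-1}$ and $\Theta D^{-1}R$ are unchanged.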

Example 5.10. We revisit the example in Pursell and Trimble [20].

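$$a_1 := \begin{pmatrix} 0 \\ 1 \\ 0 \\ 1 \end{pmatrix}, \quad a_2 := \begin{pmatrix} -2 \\ 3 \\ 0 \\ 1 \end{pmatrix}, \quad a_3 := \begin{pmatrix} 1 \\ 1 \\ 1 \\ 5 \end{pmatrix}.$$

Let A be the 4 × 3 matrix containing $a_k$ as its kth column. Then

$$A^TA = \begin{pmatrix} 2 & 4 & 6 \\ 4 & 14 & 6 \\ 6 & 6 & 28 \end{pmatrix}.$$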

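Applying the fraction free row operations given by L and D to the augmented matrix $[A^TA \mid A^T]$, we obtain the fraction free matrix U, where

$$L = \begin{pmatrix} 2 & 0 & 0 \\ 4 & 12 & 0 \\ 6 & -12 & 1 \end{pmatrix}, \quad D = \begin{pmatrix} 2 & 0 & 0 \\ 0 & 24 & 0 \\ 0 & 0 & 12 \end{pmatrix}, \quad U = \left(\begin{array}{ccc|cccc} 2 & 4 & 6 & 0 & 1 & 0 & 1 \\ 0 & 12 & -12 & -4 & 2 & 0 & -2 \\ 0 & 0 & 48 & -12 & -12 & 12 & 12 \end{array}\right).$$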

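Notice that the matrix U differs from that given in Pursell and Trimble [20], which is as follows:

$$\left(\begin{array}{ccc|cccc} 2 & 4 & 6 & 0 & 1 & 0 & 1 \\ 0 & 6 & -6 & -2 & 1 & 0 & -1 \\ 0 & 0 & 4 & -1 & -1 & 1 & 1 \end{array}\right).$$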

This is because they implicitly divided each row by the GCD of its entries. They also observed, for this example, that cross-multiplication is not needed during Gaussian elimination, and that the squared norm of each row of the right-hand block equals the corresponding diagonal entry of the left-hand block (for the second row, $(-2)^2 + 1^2 + 0^2 + (-1)^2 = 6$). However, these observations are specific to their particular numerical example. A small change to the initial vectors can invalidate them, as Example 5.11 will show.
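Both the GCD explanation and the norm observation can be checked mechanically. In the Python sketch below (the helper names divide_by_row_gcd and norm_observation_holds are hypothetical), dividing each row of the augmented U of Example 5.10 by the GCD of its entries reproduces the Pursell and Trimble matrix, and the observation holds for that reduced matrix but not for U itself.

```python
from functools import reduce
from math import gcd

# Augmented U of Example 5.10: left block (3 columns) | right block (4 columns).
U = [[2, 4, 6, 0, 1, 0, 1],
     [0, 12, -12, -4, 2, 0, -2],
     [0, 0, 48, -12, -12, 12, 12]]


def divide_by_row_gcd(rows):
    """Divide each row by the (non-negative) GCD of its entries."""
    out = []
    for row in rows:
        g = reduce(gcd, row)      # math.gcd returns a non-negative value
        out.append([x // g for x in row])
    return out


def norm_observation_holds(rows, m):
    """True if each row's right-block squared norm equals its left-block diagonal entry."""
    return all(sum(x * x for x in row[m:]) == row[i] for i, row in enumerate(rows))


PT = divide_by_row_gcd(U)
print(PT)
print(norm_observation_holds(PT, 3), norm_observation_holds(U, 3))
```

The row GCDs are 1, 2 and 12, giving exactly the matrix shown above, and the two checks print True and False respectively.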

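Now, from our fraction free QR Theorem 5.7, we have

$$R = L^T = \begin{pmatrix} 2 & 4 & 6 \\ 0 & 12 & -12 \\ 0 & 0 & 1 \end{pmatrix}, \quad \Theta^T = \begin{pmatrix} 0 & 1 & 0 & 1 \\ -4 & 2 & 0 & -2 \\ -12 & -12 & 12 & 12 \end{pmatrix} \quad\text{or}\quad \Theta = \begin{pmatrix} 0 & -4 & -12 \\ 1 & 2 & -12 \\ 0 & 0 & 12 \\ 1 & -2 & 12 \end{pmatrix}.$$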

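So the fraction free QR factoring of matrix A is as follows.

$$\Theta D^{-1} R = \begin{pmatrix} 0 & -4 & -12 \\ 1 & 2 & -12 \\ 0 & 0 & 12 \\ 1 & -2 & 12 \end{pmatrix} \begin{pmatrix} 2 & 0 & 0 \\ 0 & 24 & 0 \\ 0 & 0 & 12 \end{pmatrix}^{-1} \begin{pmatrix} 2 & 4 & 6 \\ 0 & 12 & -12 \\ 0 & 0 & 1 \end{pmatrix} = \begin{pmatrix} 0 & -2 & 1 \\ 1 & 3 & 1 \\ 0 & 0 & 1 \\ 1 & 1 & 5 \end{pmatrix} = A.$$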

¤

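Example 5.11. We slightly change the vector $a_1$ and keep the other vectors the same as in Example 5.10:

$$a_1 := \begin{pmatrix} 0 \\ 2 \\ 0 \\ 1 \end{pmatrix}, \quad a_2 := \begin{pmatrix} -2 \\ 3 \\ 0 \\ 1 \end{pmatrix}, \quad a_3 := \begin{pmatrix} 1 \\ 1 \\ 1 \\ 5 \end{pmatrix}.$$

Let C be the 4 × 3 matrix containing $a_k$ as its kth column. Then

$$C^TC = \begin{pmatrix} 5 & 7 & 7 \\ 7 & 14 & 6 \\ 7 & 6 & 28 \end{pmatrix}.$$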

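Applying the fraction free row operations given by L and D to the augmented matrix $[C^TC \mid C^T]$, we obtain the fraction free matrix U, where

$$L = \begin{pmatrix} 5 & 0 & 0 \\ 7 & 21 & 0 \\ 7 & -19 & 1 \end{pmatrix}, \quad D = \begin{pmatrix} 5 & 0 & 0 \\ 0 & 105 & 0 \\ 0 & 0 & 21 \end{pmatrix}, \quad U = \left(\begin{array}{ccc|cccc} 5 & 7 & 7 & 0 & 2 & 0 & 1 \\ 0 & 21 & -19 & -10 & 1 & 0 & -2 \\ 0 & 0 & 310 & -17 & -34 & 21 & 68 \end{array}\right).$$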

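We can verify that the squared norm of the second row of the right-hand block of U, $(-10)^2 + 1^2 + 0^2 + (-2)^2 = 105$, is not equal to the corresponding diagonal entry $U_{2,2} = 21$ of the left-hand block; since the entries of this row have GCD 1, dividing by the row GCD does not change this. So the observation of Pursell and Trimble does not hold in this example.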
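The same check can be applied to the augmented U of Example 5.11. The Python sketch below uses a hypothetical helper row_report that returns, for each row, its GCD, the squared norm of the right-hand block after dividing by that GCD, and the corresponding left-hand diagonal entry.

```python
from functools import reduce
from math import gcd

# Augmented U of Example 5.11: left block (3 columns) | right block (4 columns).
U = [[5, 7, 7, 0, 2, 0, 1],
     [0, 21, -19, -10, 1, 0, -2],
     [0, 0, 310, -17, -34, 21, 68]]


def row_report(rows, m):
    """For each row: (gcd, squared norm of right block after gcd division, diagonal entry)."""
    report = []
    for i, row in enumerate(rows):
        g = reduce(gcd, row)
        reduced = [x // g for x in row]
        report.append((g, sum(x * x for x in reduced[m:]), reduced[i]))
    return report


for entry in row_report(U, 3):
    print(entry)
```

Every row has GCD 1, so the GCD reduction of Pursell and Trimble changes nothing here, and the squared norm matches the diagonal entry only in the first row (5 = 5); the second (105 versus 21) and third (6510 versus 310) rows both fail.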

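The fraction free QR factoring of matrix C is as follows.

$$\Theta D^{-1} R = \begin{pmatrix} 0 & -10 & -17 \\ 2 & 1 & -34 \\ 0 & 0 & 21 \\ 1 & -2 & 68 \end{pmatrix} \begin{pmatrix} 5 & 0 & 0 \\ 0 & 105 & 0 \\ 0 & 0 & 21 \end{pmatrix}^{-1} \begin{pmatrix} 5 & 7 & 7 \\ 0 & 21 & -19 \\ 0 & 0 & 1 \end{pmatrix} = \begin{pmatrix} 0 & -2 & 1 \\ 2 & 3 & 1 \\ 0 & 0 & 1 \\ 1 & 1 & 5 \end{pmatrix} = C.$$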

¤

6 Conclusions

The main contributions of this paper are, first, the definition of a new fraction free format for matrix LU factoring, together with a proof of its existence; and second, derived from that proof, a general method for computing the new format, with a complexity analysis of the two main algorithms.

One of the difficulties constantly faced by computer algebra systems is the perception of

new users that numerical techniques carry over unchanged into symbolic contexts. This can

force inefficiencies on the system. For example, if a system tries to satisfy user preconceptions that Gram-Schmidt factoring should still lead to QR factors with Q orthonormal,

then the system must struggle with square roots and fractions. By defining alternative

forms for the factors, the system can take advantage of alternative schemes of computation.

In this paper, the completely fraction free LU factoring format yields a new fraction-free QR factoring format, which avoids the problems of square roots and fractions in QR factoring. In addition, together with the fraction-free forward and backward substitutions, fraction-free LU factoring gives one way to solve linear systems within their own input domains.

References

[1] Bareiss, E. H. Sylvester's identity and multistep integer-preserving Gaussian elimination. Mathematics of Computation, 22(103), 565-578, 1968.

[2] Bareiss, E. H. Computational solutions of matrix problems over an integral domain. J. Inst. Maths Applics., 10, 68-104, 1972.

[3] Corless, R. M. and Jeffrey, D. J. The Turing factorization of a rectangular matrix. SIGSAM Bull., ACM Press, 31(3), 20-30, 1997.

[4] Demmel, J. W. Applied numerical linear algebra. Society for Industrial and Applied Mathematics, 1997.

[5] Erlingsson, Ú., Kaltofen, E. and Musser, D. Generic Gram-Schmidt orthogonalization by exact division. ISSAC'96, ACM, 275-282, 1996.

[6] von zur Gathen, J. and Gerhard, J. Modern computer algebra. Cambridge University Press, 1999.

[7] Geddes, K. O., Labahn, G. and Czapor, S. Algorithms for computer algebra. Kluwer, 1992.

[8] Gentleman, W. M. and Johnson, S. C. Analysis of algorithms, a case study: determinants of polynomials. Proc. 5th Annual ACM Symp. on Theory of Computing (Austin, Tex.), 135-142, 1973.

[9] Golub, G. and Van Loan, C. Matrix computations, 2nd edition. Johns Hopkins Series in the Mathematical Sciences, 1989.

[10] Griss, M. L. An efficient sparse minor expansion algorithm. ACM (Houston, Tex.), 429-434, 1976.

[11] Higham, N. J. Accuracy and stability of numerical algorithms. SIAM, 1996.

[12] Higham, N. J. Accuracy and stability of numerical algorithms. SIAM, 2002.

[13] Kirsch, B. J. and Turner, P. R. Modified Gaussian elimination for adaptive beamforming using complex RNS arithmetic. NAWC-AD Tech Rep., NAWCADWAR 94112-50, 1994.

[14] Kirsch, B. J. and Turner, P. R. Adaptive beamforming using RNS arithmetic. Proc. ARITH 11, IEEE Computer Society, Washington DC, 36-43, 1993.

[15] Krick, T., Pardo, L. M. and Sombra, M. Sharp estimates for the arithmetic Nullstellensatz. Duke Mathematical Journal, 109(3), 521-598, 2001.

[16] Lenstra, A. K., Lenstra, H. W. and Lovász, L. Factoring polynomials with rational coefficients. Math. Ann., 261, 1982.

[17] McClellan, M. T. The exact solution of systems of linear equations with polynomial coefficients. J ACM, 20(4), 563-588, 1973.

[18] Nakos, G. C., Turner, P. R. and Williams, R. M. Fraction-free algorithms for linear and polynomial equations. SIGSAM Bull., ACM Press, 31(3), 11-19, 1997.

[19] Noble, B. and Daniel, J. W. Applied linear algebra, 3rd edition. Prentice-Hall, Englewood Cliffs, N.J., 1988.

[20] Pursell, L. and Trimble, S. Y. Gram-Schmidt orthogonalization by Gaussian elimination. American Math. Monthly, 98(6), 544-549, 1991.

[21] Rice, J. R. Experiments on Gram-Schmidt orthogonalization. Math. Comp., 20, 325-328, 1966.

[22] Sasaki, T. and Murao, H. Efficient Gaussian elimination method for symbolic determinants and linear systems. ACM Transactions on Mathematical Software, 8(3), 277-289, 1982.

[23] Schönhage, A. and Strassen, V. Schnelle Multiplikation großer Zahlen. Computing, 7, 281-292, 1971.

[24] Smit, J. The efficient calculation of symbolic determinants. SYMSAC'76, ACM, 105-113, 1976.

[25] Smit, J. A cancellation free algorithm, with factoring capabilities, for the efficient solution of large sparse sets of equations. SYMSAC'81, ACM, 146-154, 1981.

[26] Trefethen, L. N. and Bau, D., III. Numerical linear algebra. SIAM, 1997.

[27] Turing, A. M. Rounding-off errors in matrix processes. Quart. J. Mech. Appl. Math., 1, 287-308, 1948.

[28] Turner, P. R. Gauss elimination: workhorse of linear algebra. NAWC-AD Tech Rep, NAWCAD-PAX 96-194-TR, 1996.

[29] Zhou, W., Carette, J., Jeffrey, D. J. and Monagan, M. B. Hierarchical representations with signatures for large expression management. AISC, Springer-Verlag LNAI 4120, 254-268, 2006.
