* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Process Data Analysis

Survey

Document related concepts

Transcript

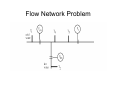

Process Data Analysis Dr M. A. A. Shoukat Choudhury Department of Chemical Engineering BUET, Dhaka - 1000 DATA MINING • Data explosion problem – Automated data collection tools and mature database technology lead to tremendous amounts of data stored in databases, data warehouses and other information repositories • We are drowning in data, but starving for knowledge! • Solution: Data mining and knowledge extraction • Data mining (knowledge discovery in databases): – Extraction of interesting (non-trivial, implicit, previously unknown and potentially useful) information or patterns from data in large databases Elementary Concepts • Variables: Variables are things that we measure, control, or manipulate in research. They differ in many respects, most notably in the role they are given in our research and in the type of measures that can be applied to them. • Observational vs. experimental research. Most empirical research belongs clearly to one of those two general categories. In observational research we do not (or at least try not to) influence any variables but only measure them and look for relations (correlations) between some set of variables. In experimental research, we manipulate some variables and then measure the effects of this manipulation on other variables. • Dependent vs. independent variables. Independent variables are those that are manipulated whereas dependent variables are only measured or registered. Random Variable • In most cases, cannot know what the “true” value is unless there is an independent determination (i.e. different measurement technique). • Only can consider estimates of the error. • Discrepancy is the difference between two or more observations. This gives rise to uncertainty. Population vs. sample • Population - an entire collection of measurements – (e.g. reaction times, IQ scores, height or even height of male BUET students) • Sample – smaller subset of observations taken from population – sample should be drawn randomly to make inferences about population. Random assignment to groups improves validity In General: (Parent Parameter) = lim (Sample Parameter) N ->∞ When the number of observations, N, goes to infinity. Population vs. sample • In general: – population parameters =Greek letters – sample statistics=English letters Population mean variance μ (mu) σ2 (sigma) Sample X s2 Statistics • Two essential components of data are: (i) central tendency of the data & (ii) spread of the data (e.g. standard deviation) • Although mean (central tendency) and standard deviation (spread) are most commonly used, other measures can also be useful Measures of central tendency • Mode – the most frequent observation: 1, 2, 2, 3, 4 ,5 • Median – the middle number of a dataset arranged in numerical order: 0, 1, 2, 5, 1000 (average of middle two numbers when even number of scores exist) – relatively uninfluenced by outliers • Mean = Measures of dispersion • Several ways to measure spread of data: – Range (max-min), IQR or Inter-Quartile Range (middle 50%), Mean Absolute Deviation – Variance – average of the squared deviations – Variance for population of 3 scores (-10,0,10) is 66.66 (200/3) – Standard deviation is simply the square root of the variance Calculating sample variance • Population variance (σ2) is the true variance of the population calculated by -this equation is used when we have all values in a population (unusual) • However, the variance of a sample (S2) tends to be larger than the population from which it was drawn. So, we use this equation: standard deviation: positive square root of the variance small std dev: observations are clustered tightly around the mean large std dev: observations are scattered widely around the mean Data Distribution Histogram is a useful graphic representation of information content of sample or parent population many statistical tests assume values are normally distributed not always the case! examine data prior to processing Normal/Gaussian Distribution • Many real-life variables (height, weight, IQ etc etc) are distributed like this • Mathematical equation mimics this normal (or Gaussian) distribution Normal Distribution • The mathematical normal distribution is useful as its known mathematical properties give us useful info about our real-life variable (assuming our real-life variable is normally distributed) • For example, 2 standard deviations above the mean represent the extreme 2.5% of scores • Consequently, a person with an IQ score of 130 (M=100, SD=15), would be in the top 2.5% (assuming IQ is normally distributed) For gaussian or normal error distributions: Total area underneath curve is 1.00 (100%) 68.27% of observations lie within ± 1 std dev of mean 95% of observations lie within ± 2 std dev of mean 99.9% of observations lie within ± 3 std dev of mean Variance, standard deviation, probable error, mean, and weighted root mean square error are commonly used statistical terms in geodesy. compare (rather than attach significance to numerical value) Steps of Data Analysis • • • • Extract Data from Database Visualize the data Select an appropriate segment Preprocessing of Data : Removal and replacement of outliers • Transform data, if needed • Analyse the data to find patterns or to extract information Data Visualisation • Plot the Data and have a look • Basic rule is to select plot which represents what you want to say in the clearest and simplest way • Avoid ‘chart junk’ (e.g. plotting in 3D where 2D would be clearer) • Popular options include time trends, bar charts, histograms, pie charts etc Transforming data • One reason we might ‘transform’ data is to convert from one scale to another – e.g. feet into inches, centigrade into fahrenheit, raw IQ scores into standard IQ scores • Scale conversion can usually be achieved by simple linear transformation (multiplying/dividing by a constant and adding/subtracting a constant) Xnew = b*Xold + c – So to convert centigrade data into fahrenheit we would apply the following: Transforming data • Z-transform (standardisation) is one common type of linear transform, which produces a new variable with M=0 & SD=1 • Z -scores= X • Standardisation is useful when comparing the same dimension measured on different scales • After standardisation these scales could also be added together (adding two quantities on different scales is obviously problematic) Process Time trends Correlation Analysis • Correlation is a measure of the relation between two or more variables. The measurement scales used should be at least interval scales, but other correlation coefficients are available to handle other types of data. Correlation coefficients can range from -1.00 to +1.00. The value of -1.00 represents a perfect negative correlation while a value of +1.00 represents a perfect positive correlation. A value of 0.00 represents a lack of correlation. A Nice Statistic Website http://www.statsoft.com/textbook/stathome.html Data Analysis • Outliers – Removal and replacement Flow Network Problem