* Your assessment is very important for improving the work of artificial intelligence, which forms the content of this project

Download Chapter 4 Dependent Random Variables

Birthday problem wikipedia , lookup

Ars Conjectandi wikipedia , lookup

Random variable wikipedia , lookup

Inductive probability wikipedia , lookup

Infinite monkey theorem wikipedia , lookup

Probability interpretations wikipedia , lookup

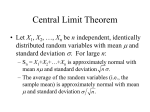

Central limit theorem wikipedia , lookup

Chapter 4

Dependent Random Variables

4.1

Conditioning

One of the key concepts in probability theory is the notion of conditional

probability and conditional expectation. Suppose that we have a probability

space (Ω, F , P ) consisting of a space Ω, a σ-field F of subsets of Ω and a

probability measure on the σ-field F . If we have a set A ∈ F of positive

measure then conditioning with respect to A means we restrict ourselves to

the set A. Ω gets replaced by A. The σ-field F by the σ-field FA of subsets

of A that are in F . For B ⊂ A we define

$$P_A(B) = \frac{P(B)}{P(A)}.$$

We could achieve the same thing by defining for arbitrary B ∈ F

$$P_A(B) = \frac{P(A \cap B)}{P(A)}$$

in which case $P_A(\cdot)$ is a measure defined on F as well, but one that is concentrated on A and assigns probability 0 to $A^c$. The definition of conditional probability is

$$P(B|A) = \frac{P(A \cap B)}{P(A)}.$$

Similarly the definition of conditional expectation of an integrable function

f (ω) given a set A ∈ F of positive probability is defined to be

$$E\{f|A\} = \frac{\int_A f(\omega)\,dP}{P(A)}.$$


In particular if we take f = χB for some B ∈ F we recover the definition

of conditional probability. In general if we know P (B|A) and P (A) we can

recover P(A ∩ B) = P(A)P(B|A) but we cannot recover P(B). But if we know P(B|A) as well as $P(B|A^c)$ along with P(A) and $P(A^c) = 1 - P(A)$, then

$$P(B) = P(A \cap B) + P(A^c \cap B) = P(A)P(B|A) + P(A^c)P(B|A^c).$$

More generally if $\mathcal{P}$ is a partition of Ω into a finite or even a countable number of disjoint measurable sets A1, · · · , Aj, · · · , then

$$P(B) = \sum_j P(A_j)\,P(B|A_j).$$
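As a small sanity check of the partition formula, here is a sketch on a finite sample space. The fair die, the particular partition and the event B are illustrative assumptions, not taken from the text; exact rational arithmetic is used so that the identity can be tested with equality.

```python
from fractions import Fraction

# Hypothetical example: a fair die, Omega = {1,...,6}, uniform P.
P = {w: Fraction(1, 6) for w in range(1, 7)}

def prob(event):
    """P(event) for a set of outcomes."""
    return sum(P[w] for w in event)

def cond_prob(B, A):
    """P(B|A) = P(A ∩ B) / P(A); only defined when P(A) > 0."""
    return prob(A & B) / prob(A)

# A partition of Omega into disjoint sets A_j, and an event B ("even").
partition = [{1, 2}, {3, 4, 5}, {6}]
B = {2, 4, 6}

# Right-hand side of P(B) = sum_j P(A_j) P(B|A_j).
total = sum(prob(A) * cond_prob(B, A) for A in partition)
print(total, prob(B))
```

Both printed values agree, as the formula predicts for any partition into sets of positive probability.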

If ξ is a random variable taking distinct values {aj} on {Aj} then

$$P(B|\xi = a_j) = P(B|A_j)$$

or more generally

$$P(B|\xi = a) = \frac{P(B \cap \{\xi = a\})}{P(\xi = a)}$$

provided P (ξ = a) > 0. One of our goals is to seek a definition that makes

sense when P (ξ = a) = 0. This involves dividing 0 by 0 and should involve

differentiation of some kind. In the countable case we may think of $P(B|\xi = a_j)$ as a function $f_B(\xi)$ which is equal to $P(B|A_j)$ on $\{\xi = a_j\}$. We can rewrite our definition of

$$f_B(a_j) = P(B|\xi = a_j)$$

as

$$\int_{\xi = a_j} f_B(\xi)\,dP = P(B \cap \{\xi = a_j\}) \quad \text{for each } j$$

or summing over any arbitrary collection of j's

$$\int_{\xi \in E} f_B(\xi)\,dP = P(B \cap \{\xi \in E\}).$$

Sets of the form ξ ∈ E form a sub σ-field Σ ⊂ F and we can rewrite the definition as

$$\int_A f_B(\xi)\,dP = P(B \cap A)$$


for all A ∈ Σ. Of course in this case A ∈ Σ if and only if A is a union

of the atoms ξ = a of the partition over a finite or countable subcollection

of the possible values of a. Similar considerations apply to the conditional

expectation of a random variable G given ξ. The equation becomes

$$\int_A g(\xi)\,dP = \int_A G(\omega)\,dP$$

or we can rewrite this as

$$\int_A g(\omega)\,dP = \int_A G(\omega)\,dP$$

for all A ∈ Σ and instead of demanding that g be a function of ξ we demand

that g be Σ measurable which is the same thing. Now the random variable

ξ is out of the picture and rightly so. What is important is the information

we have if we know ξ and that is the same if we replace ξ by a one-to-one

function of itself. The σ-field Σ abstracts that information nicely. So it turns

out that the proper notion of conditioning involves a sub σ-field Σ ⊂ F . If G

is an integrable function and Σ ⊂ F is given we will seek another integrable

function g that is Σ measurable and satisfies

$$\int_A g(\omega)\,dP = \int_A G(\omega)\,dP$$

for all A ∈ Σ. We will prove existence and uniqueness of such a g and call it

the conditional expectation of G given Σ and denote it by g = E[G|Σ].
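When Σ is generated by a finite partition, the defining relation can be checked by hand: g is constant on each atom and equals the P-weighted average of G there. The following sketch assumes a hypothetical six-point space with made-up weights and G(ω) = ω²; none of these choices come from the text.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical finite probability space with non-uniform weights.
P = {0: Fraction(1, 12), 1: Fraction(3, 12), 2: Fraction(2, 12),
     3: Fraction(2, 12), 4: Fraction(1, 12), 5: Fraction(3, 12)}
G = {w: Fraction(w * w) for w in P}     # an integrable function G
atoms = [{0, 1}, {2, 3, 4}, {5}]        # atoms of the partition generating Sigma

def integral(h, A):
    """Integral of h over the set A with respect to P."""
    return sum(h[w] * P[w] for w in A)

def cond_exp(G, atoms):
    """Sigma-measurable g: on each atom, the P-weighted average of G."""
    g = {}
    for atom in atoms:
        value = integral(G, atom) / sum(P[w] for w in atom)
        for w in atom:
            g[w] = value
    return g

g = cond_exp(G, atoms)

# Every A in Sigma is a union of atoms; enumerate all 2^3 of them.
sigma = [set().union(*c) for r in range(len(atoms) + 1)
         for c in combinations(atoms, r)]
print(all(integral(g, A) == integral(G, A) for A in sigma))
```

In this finite setting existence is immediate and uniqueness holds pointwise, because every atom has positive probability.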

The way to prove the above result will take us on a detour. A signed

measure on a measurable space (Ω, F ) is a set function λ(.) defined for A ∈

F which is countably additive but not necessarily nonnegative. Countable additivity is again in either of the following two equivalent senses:

$$\lambda(\cup_n A_n) = \sum_n \lambda(A_n)$$

for any countable collection of disjoint sets in F, or

$$\lim_{n \to \infty} \lambda(A_n) = \lambda(A)$$

whenever An ↓ A or An ↑ A.

Examples of such λ can be constructed by taking the difference µ1 − µ2

of two nonnegative measures µ1 and µ2 .


Definition 4.1. A set A ∈ F is totally positive (totally negative) for λ if for every subset B ∈ F with B ⊂ A, λ(B) ≥ 0 (respectively λ(B) ≤ 0).

Remark 4.1. A measurable subset of a totally positive set is totally positive.

Any countable union of totally positive subsets is again totally positive.

Lemma 4.1. If λ is a countably additive signed measure on (Ω, F), then

$$\sup_{A \in \mathcal{F}} |\lambda(A)| < \infty.$$

Proof. The key idea in the proof is that, since λ(Ω) is a finite number, if λ(A) is large so is $\lambda(A^c)$ with an opposite sign. In fact, it is not hard to see that $\big||\lambda(A)| - |\lambda(A^c)|\big| \le |\lambda(\Omega)|$ for all A ∈ F. Another fact is that if $\sup_{B \subset A} |\lambda(B)|$ and $\sup_{B \subset A^c} |\lambda(B)|$ are finite, so is $\sup_B |\lambda(B)|$. Now let us complete the proof. Given a subset A ∈ F with $\sup_{B \subset A} |\lambda(B)| = \infty$, and any positive number N, there is a subset A1 ∈ F with A1 ⊂ A such that |λ(A1)| ≥ N and $\sup_{B \subset A_1} |\lambda(B)| = \infty$. This is obvious because if we pick a set E ⊂ A with |λ(E)| very large, so will $|\lambda(E^c)|$ be, where $E^c = A \setminus E$ is the complement of E within A. At least one of the two sets E, $E^c$ will have the second property and we can call it A1. If we proceed by induction we obtain a decreasing sequence An with |λ(An)| → ∞, which contradicts countable additivity.

Lemma 4.2. Given a subset A ∈ F with λ(A) = ℓ > 0 there is a subset Ā ⊂ A that is totally positive with λ(Ā) ≥ ℓ.

Proof. Let us define $m = \inf_{B \subset A} \lambda(B)$. Since the empty set is included, m ≤ 0. If m = 0 then A is totally positive and we are done. So let us assume that m < 0. By the previous lemma m > −∞.

Let us find B1 ⊂ A such that $\lambda(B_1) \le \frac{m}{2}$. Then for A1 = A − B1 we have A1 ⊂ A, λ(A1) ≥ ℓ and $\inf_{B \subset A_1} \lambda(B) \ge \frac{m}{2}$. By induction we can find Ak with A ⊃ A1 ⊃ · · · ⊃ Ak · · · , λ(Ak) ≥ ℓ for every k and $\inf_{B \subset A_k} \lambda(B) \ge \frac{m}{2^k}$. Clearly if we define Ā = ∩Ak, which is the decreasing limit, Ā works.

Theorem 4.3. (Hahn-Jordan Decomposition). Given a countably additive signed measure λ on (Ω, F ) it can be written always as λ = µ+ − µ−

the difference of two nonnegative measures. Moreover µ+ and µ− may be

chosen to be orthogonal, i.e., there are disjoint sets Ω+ , Ω− ∈ F such that

µ+ (Ω− ) = µ− (Ω+ ) = 0. In fact Ω+ and Ω− can be taken to be subsets of

Ω that are respectively totally positive and totally negative for λ. µ± then

become just the restrictions of λ to Ω± .


Proof. Totally positive sets are closed under countable unions, disjoint or not. Let us define $m_+ = \sup_A \lambda(A)$. If $m_+ = 0$ then λ(A) ≤ 0 for all A and we can take Ω+ = ∅ and Ω− = Ω, which works. Assume that $m_+ > 0$. There exist sets An with $\lambda(A_n) \ge m_+ - \frac{1}{n}$ and therefore, by Lemma 4.2, totally positive subsets Ān of An with $\lambda(\bar{A}_n) \ge m_+ - \frac{1}{n}$. Clearly Ω+ = ∪n Ān is totally positive and λ(Ω+) = $m_+$. It is easy to see that Ω− = Ω − Ω+ is totally negative. µ± can be taken to be the restriction of λ to Ω±.

Remark 4.2. If λ = µ+ − µ− with µ+ and µ− orthogonal to each other,

then they have to be the restrictions of λ to the totally positive and totally

negative sets for λ and such a representation for λ is unique. It is clear that

in general the representation is not unique because we can add a common µ

to both µ+ and µ− and the µ will cancel when we compute λ = µ+ − µ− .
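On a finite set a signed measure is just a list of signed point masses, and the decomposition can be read off directly. The sketch below uses a hypothetical four-point space with made-up masses; it checks that λ = µ⁺ − µ⁻, that the two parts are orthogonal, and that λ(Ω⁺) attains the supremum of λ over all subsets.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical signed measure on Omega = {a, b, c, d}, given by point masses.
lam = {"a": Fraction(3), "b": Fraction(-2), "c": Fraction(0), "d": Fraction(5, 2)}

def measure(weights, A):
    """Value of the (signed) measure with the given point masses on the set A."""
    return sum(weights[w] for w in A)

# Points of mass zero may be placed on either side; here they go to Omega_minus.
omega_plus = {w for w, v in lam.items() if v > 0}
omega_minus = set(lam) - omega_plus

# mu_plus is lam restricted to Omega_plus, mu_minus is -lam restricted to Omega_minus.
mu_plus = {w: (lam[w] if w in omega_plus else Fraction(0)) for w in lam}
mu_minus = {w: (-lam[w] if w in omega_minus else Fraction(0)) for w in lam}

subsets = [set(c) for r in range(len(lam) + 1) for c in combinations(lam, r)]
print(measure(lam, omega_plus), max(measure(lam, A) for A in subsets))
```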

Remark 4.3. If µ is a nonnegative measure and we define λ by

$$\lambda(A) = \int_A f(\omega)\,d\mu = \int \chi_A(\omega) f(\omega)\,d\mu$$

where f is an integrable function, then λ is a countably additive signed

measure and Ω+ = {ω : f (ω) > 0} and Ω− = {ω : f (ω) < 0}. If we define

f ± (ω) as the positive and negative parts of f , then

$$\mu^{\pm}(A) = \int_A f^{\pm}(\omega)\,d\mu.$$

The signed measure λ that was constructed in the preceding remark

enjoys a very special relationship to µ. For any set A with µ(A) = 0, λ(A) = 0

because the integrand χA (ω)f (ω) is 0 for µ-almost all ω and for all practical

purposes is a function that vanishes identically.

Definition 4.2. A signed measure λ is said to be absolutely continuous with

respect to a nonnegative measure µ, λ << µ in symbols, if whenever µ(A) is

zero for a set A ∈ F it is also true that λ(A) = 0.

Theorem 4.4. (Radon-Nikodym Theorem). If λ << µ then there is an

integrable function f (ω) such that

$$\lambda(A) = \int_A f(\omega)\,d\mu \tag{4.1}$$


for all A ∈ F . The function f is uniquely determined almost everywhere and

is called the Radon-Nikodym derivative of λ with respect to µ. It is denoted

by

$$f(\omega) = \frac{d\lambda}{d\mu}.$$

Proof. The proof depends on the decomposition theorem. We saw that if the

relation 4.1 holds, then Ω+ = {ω : f (ω) > 0}. If we define λa = λ − aµ,

then λa is a signed measure for every real number a. Let us define Ω(a) to

be the totally positive subset of λa . These sets are only defined up to sets of

measure zero, and we can only handle a countable number of sets of measure

0 at one time. So it is prudent to restrict a to the set Q of rational numbers.

Roughly speaking Ω(a) will be the sets f (ω) > a and we will try to construct

f from the sets Ω(a) by the definition

$$f(\omega) = \sup\,\{a \in \mathbb{Q} : \omega \in \Omega(a)\}.$$

The plan is to check that the function f (ω) defined above works. Since λa

is getting more negative as a increases, Ω(a) is ↓ as a ↑. There is trouble

with sets of measure 0 for every comparison between two rationals a1 and a2. Collect all such troublesome sets (only a countable number) and throw them away. In other words we may assume without loss of generality that Ω(a1) ⊂ Ω(a2) whenever a1 > a2. Clearly

$$\{\omega : f(\omega) > x\} = \{\omega : \omega \in \Omega(y) \text{ for some rational } y > x\} = \bigcup_{y > x,\ y \in \mathbb{Q}} \Omega(y)$$

and this makes f measurable. If $A \subset \cap_a \Omega(a)$ then λ(A) − aµ(A) ≥ 0 for all a. If µ(A) > 0, λ(A) has to be infinite which is not possible. Therefore µ(A) has to be zero and by absolute continuity λ(A) = 0 as well. On the other hand if A ∩ Ω(a) = ∅ for all a, then λ(A) − aµ(A) ≤ 0 for all a and again if µ(A) > 0, λ(A) = −∞ which is not possible either. Therefore µ(A), and by absolute continuity, λ(A) are zero. This proves that f(ω) is finite almost everywhere with respect to both λ and µ. Let us take two real numbers a < b and consider $E_{a,b} = \{\omega : a \le f(\omega) \le b\}$. It is clear that the set $E_{a,b}$ is in Ω(a′) and $\Omega^c(b')$ for any a′ < a and b′ > b. Therefore for any set $A \subset E_{a,b}$, by letting a′ and b′ tend to a and b,

$$a\,\mu(A) \le \lambda(A) \le b\,\mu(A).$$


Now we are essentially done. Let us take a grid {nh} and consider $E_n = \{\omega : nh \le f(\omega) < (n+1)h\}$ for −∞ < n < ∞. Then for any A ∈ F and each n,

$$\lambda(A \cap E_n) - h\,\mu(A \cap E_n) \le nh\,\mu(A \cap E_n) \le \int_{A \cap E_n} f(\omega)\,d\mu \le (n+1)h\,\mu(A \cap E_n) \le \lambda(A \cap E_n) + h\,\mu(A \cap E_n).$$

Summing over n we have

$$\lambda(A) - h\,\mu(A) \le \int_A f(\omega)\,d\mu \le \lambda(A) + h\,\mu(A)$$

proving the integrability of f and, if we let h → 0, establishing

$$\lambda(A) = \int_A f(\omega)\,d\mu$$

for all A ∈ F.

Remark 4.4. (Uniqueness). If we have two choices of f say f1 and f2 their

difference g = f1 − f2 satisfies

$$\int_A g(\omega)\,d\mu = 0$$

for all A ∈ F. If we take $A_\epsilon = \{\omega : g(\omega) \ge \epsilon\}$, then $0 \ge \epsilon\,\mu(A_\epsilon)$ and this implies $\mu(A_\epsilon) = 0$ for all ε > 0 or g(ω) ≤ 0, almost everywhere with respect to

µ. A similar argument establishes g(ω) ≥ 0 almost everywhere with respect

to µ. Therefore g = 0 a.e. µ proving uniqueness.
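In the simplest discrete setting the Radon-Nikodym derivative is a pointwise ratio of masses. The sketch below assumes a hypothetical three-point space on which µ charges every point, so that any λ is absolutely continuous with respect to µ; the specific weights are made up for illustration. It verifies relation (4.1) on every subset.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical measures on a three-point space; mu charges every point.
mu = {"x": Fraction(1, 2), "y": Fraction(1, 3), "z": Fraction(1, 6)}
lam = {"x": Fraction(1, 4), "y": Fraction(1, 2), "z": Fraction(1, 4)}

def radon_nikodym(lam, mu):
    """Pointwise ratio lam({w}) / mu({w}); assumes mu({w}) > 0 for every w."""
    return {w: lam[w] / mu[w] for w in mu}

def integral(h, weights, A):
    """Integral of h over A with respect to the measure with the given point masses."""
    return sum(h[w] * weights[w] for w in A)

f = radon_nikodym(lam, mu)

# Check lam(A) = integral over A of f d(mu) for every subset A.
subsets = [set(c) for r in range(len(mu) + 1) for c in combinations(mu, r)]
print(all(sum(lam[w] for w in A) == integral(f, mu, A) for A in subsets))
```

Uniqueness is visible here as well: changing f at any single point changes the integral over that singleton, since no point is µ-null.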

Exercise 4.1. If f and g are two integrable functions, measurable with respect to a σ-field B and if $\int_A f(\omega)\,dP = \int_A g(\omega)\,dP$ for all sets A ∈ B0, a field that generates the σ-field B, then f = g a.e. P.

Exercise 4.2. If λ(A) ≥ 0 for all A ∈ F , prove that f (ω) ≥ 0 almost everywhere.

Exercise 4.3. If Ω is a countable set and µ({ω}) > 0 for each single point set, prove that any measure λ is absolutely continuous with respect to µ and calculate the Radon-Nikodym derivative.


Exercise 4.4. Let F (x) be a distribution function on the line with F (0) = 0

and F (1) = 1 so that the probability measure α corresponding to it lives on

the interval [0, 1]. If F (x) satisfies a Lipschitz condition

|F (x) − F (y)| ≤ A|x − y|

then prove that α << m where m is the Lebesgue measure on [0, 1]. Show also that $0 \le \frac{d\alpha}{dm} \le A$ almost surely.

If ν, λ, µ are three nonnegative measures such that ν << λ and λ << µ then show that ν << µ and

$$\frac{d\nu}{d\mu} = \frac{d\nu}{d\lambda}\,\frac{d\lambda}{d\mu} \quad \text{a.e.}$$

Exercise 4.5. If λ, µ are nonnegative measures with λ << µ and $\frac{d\lambda}{d\mu} = f$, then show that g is integrable with respect to λ if and only if g f is integrable with respect to µ and

$$\int g(\omega)\,d\lambda = \int g(\omega) f(\omega)\,d\mu.$$

Exercise 4.6. Given two nonnegative measures λ and µ, λ is said to be uniformly absolutely continuous with respect to µ on F if for any ε > 0 there exists a δ > 0 such that for any A ∈ F with µ(A) < δ it is true that λ(A) < ε. Use the Radon-Nikodym theorem to show that absolute continuity on a σ-field F implies uniform absolute continuity. If F0 is a field that generates the σ-field F show by an example that absolute continuity on F0 does not imply absolute continuity on F. Show however that uniform absolute continuity on F0 implies uniform absolute continuity and therefore absolute continuity on F.

Exercise 4.7. If F is a distribution function on the line show that it is absolutely continuous with respect to Lebesgue measure on the line, if and only if for any ε > 0, there exists a δ > 0 such that for an arbitrary finite collection of disjoint intervals $I_j = [a_j, b_j]$ with $\sum_j |b_j - a_j| < \delta$ it follows that $\sum_j [F(b_j) - F(a_j)] \le \epsilon$.

4.2

Conditional Expectation

In the Radon-Nikodym theorem, if λ << µ are two probability distributions on (Ω, F), we defined the Radon-Nikodym derivative $f(\omega) = \frac{d\lambda}{d\mu}$ as an F measurable function such that

$$\lambda(A) = \int_A f(\omega)\,d\mu \quad \text{for all } A \in \mathcal{F}.$$

If Σ ⊂ F is a sub σ-field, the absolute continuity of λ with respect to µ on Σ

is clearly implied by the absolute continuity of λ with respect to µ on F . We

can therefore apply the Radon-Nikodym theorem on the measurable space

(Ω, Σ), and we will obtain a new Radon-Nikodym derivative

$$g(\omega) = \frac{d\lambda}{d\mu}\bigg|_{\Sigma}$$

such that

$$\lambda(A) = \int_A g(\omega)\,d\mu \quad \text{for all } A \in \Sigma$$

and g is Σ measurable. Since the old function f (ω) was only F measurable,

in general, it cannot be used as the Radon-Nikodym derivative for the sub

σ-field Σ. Now if f is an integrable function on (Ω, F , µ) and Σ ⊂ F is a sub

σ-field we can define λ on F by

$$\lambda(A) = \int_A f(\omega)\,d\mu \quad \text{for all } A \in \mathcal{F}$$

and recalculate the Radon-Nikodym derivative g for Σ and g will be a Σ measurable, integrable function such that

$$\lambda(A) = \int_A g(\omega)\,d\mu \quad \text{for all } A \in \Sigma.$$

In other words g is the perfect candidate for the conditional expectation

$$g(\omega) = E[f(\cdot)|\Sigma].$$

We have therefore proved the existence of the conditional expectation.

Theorem 4.5. The conditional expectation as a mapping f → g has the following properties.

1. If g = E[f|Σ] then E[g] = E[f]. E[1|Σ] = 1 a.e.

2. If f is nonnegative then g = E[f|Σ] is almost surely nonnegative.

3. The map is linear. If a1, a2 are constants

$$E[a_1 f_1 + a_2 f_2|\Sigma] = a_1 E[f_1|\Sigma] + a_2 E[f_2|\Sigma] \quad \text{a.e.}$$

4. If g = E[f|Σ], then

$$\int |g(\omega)|\,d\mu \le \int |f(\omega)|\,d\mu.$$

5. If h is a bounded Σ measurable function then

$$E[f h|\Sigma] = h\,E[f|\Sigma] \quad \text{a.e.}$$

6. If Σ2 ⊂ Σ1 ⊂ F, then

$$E\big[E[f|\Sigma_1]\,\big|\,\Sigma_2\big] = E[f|\Sigma_2] \quad \text{a.e.}$$

7. Jensen's Inequality. If φ(x) is a convex function of x, and g = E[f|Σ] then

$$E[\phi(f(\omega))|\Sigma] \ge \phi(g(\omega)) \quad \text{a.e.} \tag{4.2}$$

and if we take expectations

$$E[\phi(f)] \ge E[\phi(g)].$$

Proof. (i), (ii) and (iii) are obvious. For (iv) we note that if dλ = f dµ

$$\int |f|\,d\mu = \sup_{A \in \mathcal{F}} \lambda(A) - \inf_{A \in \mathcal{F}} \lambda(A)$$

and if we replace F by a sub σ-field Σ the right hand side is decreased. (v)

is obvious if h is the indicator function of a set A in Σ. To go from indicator

functions to simple functions to bounded measurable functions is routine.

(vi) is an easy consequence of the definition. Finally (vii) corresponds to Theorem 1.7 proved for ordinary expectations and is proved analogously. We note that if f1 ≥ f2 then E[f1|Σ] ≥ E[f2|Σ] a.e. and consequently E[max(f1, f2)|Σ] ≥ max(g1, g2) a.e. where gi = E[fi|Σ] for i = 1, 2. Since we can represent any convex function φ as $\phi(x) = \sup_a [ax - \psi(a)]$, limiting ourselves to rational a, we have only a countable set of functions to deal with, and

$$E[\phi(f)|\Sigma] = E\big[\sup_a [af - \psi(a)]\,\big|\,\Sigma\big] \ge \sup_a \big[a\,E[f|\Sigma] - \psi(a)\big] = \sup_a [a g - \psi(a)] = \phi(g)$$

a.e. and after taking expectations

$$E[\phi(f)] \ge E[\phi(g)].$$
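Several of the properties in Theorem 4.5 can be checked numerically when Σ is generated by a finite partition. The sketch below assumes a hypothetical uniform four-point space, a made-up function f and the convex function φ(x) = x²; it checks property 1, the contraction in property 4 and Jensen's inequality.

```python
from fractions import Fraction

# Hypothetical uniform four-point space and a sub sigma-field with two atoms.
P = {w: Fraction(1, 4) for w in range(4)}
f = {0: Fraction(-1), 1: Fraction(3), 2: Fraction(2), 3: Fraction(6)}
atoms = [{0, 1}, {2, 3}]

def cond_exp(h, atoms):
    """E[h|Sigma]: on each atom, the P-weighted average of h."""
    out = {}
    for atom in atoms:
        mass = sum(P[w] for w in atom)
        avg = sum(h[w] * P[w] for w in atom) / mass
        out.update({w: avg for w in atom})
    return out

def expect(h):
    return sum(h[w] * P[w] for w in P)

def phi(x):
    """A convex function; x**2 is chosen only for illustration."""
    return x * x

g = cond_exp(f, atoms)
lhs = cond_exp({w: phi(f[w]) for w in P}, atoms)   # E[phi(f)|Sigma]
rhs = {w: phi(g[w]) for w in P}                    # phi(E[f|Sigma])

print(expect(g) == expect(f))                      # property 1
print(all(lhs[w] >= rhs[w] for w in P))            # Jensen's inequality (4.2)
```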

Remark 4.5. Conditional expectation is a form of averaging, i.e. it is linear, takes constants into constants and preserves nonnegativity. Jensen's inequality is now a consequence of convexity.

In a somewhat more familiar context if µ = λ1 × λ2 is a product measure on (Ω, F) = (Ω1 × Ω2, F1 × F2) and we take Σ = {A × Ω2 : A ∈ F1} then for any function f(ω) = f(ω1, ω2), E[f(·)|Σ] = g(ω) where g(ω) = g(ω1) is given by

$$g(\omega_1) = \int_{\Omega_2} f(\omega_1, \omega_2)\,d\lambda_2$$

so that the conditional expectation is just integrating out the unwanted variable ω2. We can go one step more. If φ(x, y) is the joint density on $R^2$ of two random variables X, Y (with respect to the Lebesgue measure on $R^2$), and ψ(x) is the marginal density of X given by

$$\psi(x) = \int_{-\infty}^{\infty} \phi(x, y)\,dy$$

then for any integrable function f(x, y)

$$E[f(X, Y)|X] = E[f(\cdot, \cdot)|\Sigma] = \frac{\int_{-\infty}^{\infty} f(x, y)\,\phi(x, y)\,dy}{\psi(x)}$$

where Σ is the σ-field of vertical strips A × (−∞, ∞) with a measurable

horizontal base A.


Exercise 4.8. If f is already Σ measurable then E[f |Σ] = f . This suggests

that the map f → g = E[f |Σ] is some sort of a projection. In fact if

we consider the Hilbert space H = L2 [Ω, F , µ] of all F measurable square

integrable functions with an inner product

$$\langle f, g \rangle_\mu = \int f g\,d\mu$$

then

H0 = L2 [Ω, Σ, µ] ⊂ H = L2 [Ω, F , µ]

and f → E[f |Σ] is seen to be the same as the orthogonal projection from H

onto H0 . Prove it.

Exercise 4.9. If F1 ⊂ F2 ⊂ F are two sub σ-fields of F and X is any integrable function, we can define Xi = E[X|Fi] for i = 1, 2. Show that

X1 = E[X2 |F1 ] a.e.

Conditional expectation is then the best nonlinear predictor if the loss

function is the expected (mean) square error.
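The projection property can be illustrated on a finite space, where a Σ measurable function is simply one that is constant on each atom. The sketch below assumes a hypothetical uniform six-point space with X(ω) = ω and a made-up two-atom partition; it compares the mean square error of g = E[X|Σ] with that of other Σ measurable candidates on a grid and checks the Pythagorean identity behind the projection.

```python
from fractions import Fraction

# Hypothetical uniform six-point space, X(w) = w, and a two-atom partition.
P = {w: Fraction(1, 6) for w in range(6)}
X = {w: Fraction(w) for w in P}
atoms = [{0, 3}, {1, 2, 4, 5}]

def sigma_measurable(values):
    """Function that takes the constant values[i] on atoms[i]."""
    return {w: values[i] for i, atom in enumerate(atoms) for w in atom}

def expect(h):
    return sum(h[w] * P[w] for w in P)

def mse(h):
    """Mean square error E[(X - h)^2] of the predictor h."""
    return expect({w: (X[w] - h[w]) ** 2 for w in P})

# Conditional expectation: the atom-wise average of X.
g = sigma_measurable([sum(X[w] * P[w] for w in atom) / sum(P[w] for w in atom)
                      for atom in atoms])

# Competing Sigma-measurable predictors on a grid of half-integers.
candidates = [sigma_measurable([Fraction(a, 2), Fraction(b, 2)])
              for a in range(-4, 13) for b in range(-4, 13)]
print(mse(g), min(mse(h) for h in candidates))
```

The grid contains g itself, so the two printed values coincide; every other candidate has strictly larger error.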

4.3

Conditional Probability

We now turn our attention to conditional probability. If we take f = χB (ω)

then E[f |Σ] = P (ω, B) is called the conditional probability of B given Σ. It

is characterized by the property that it is Σ measurable as a function of ω

and for any A ∈ Σ

$$\mu(A \cap B) = \int_A P(\omega, B)\,d\mu.$$

Theorem 4.6. P (·, ·) has the following properties.

1. P(ω, Ω) = 1, P(ω, ∅) = 0 a.e.

2. For any B ∈ F, 0 ≤ P(ω, B) ≤ 1 a.e.

3. For any countable collection {Bj} of disjoint sets in F,

$$P(\omega, \cup_j B_j) = \sum_j P(\omega, B_j) \quad \text{a.e.}$$

4. If B ∈ Σ, P(ω, B) = χB(ω) a.e.

Proof. All are easy consequences of properties of conditional expectations.

Property (iii) perhaps needs an explanation. If E[|fn − f|] → 0 then by the properties of conditional expectation E[|E{fn|Σ} − E{f|Σ}|] → 0. Property (iii) is an easy consequence of this.

The problem with the above theorem is that every property is valid only

almost everywhere. There are exceptional sets of measure zero for each case.

While each null set or a countable number of them can be ignored we have an

uncountable number of null sets and we would like a single null set outside

which all the properties hold. This means constructing a good version of the

conditional probability. It may not be always possible. If possible, such a

version is called a regular conditional probability. The existence of such a

regular version depends on the space (Ω, F ) and the sub σ-field Σ being nice.

If Ω is a complete separable metric space and F are its Borel sets, and if Σ

is any countably generated sub σ-field of F , then it is nice enough. We will

prove it in the special case when Ω = [0, 1] is the unit interval and F are the

Borel subsets B of [0, 1]. Σ can be any countably generated sub σ-field of F .

Remark 4.6. In fact the case is not so special. There is a theorem [6] which states that if (Ω, F) is any complete separable metric space that has an uncountable number of points, then there is a one-to-one measurable map with a measurable inverse between (Ω, F) and ([0, 1], B). There is no loss of generality in assuming that (Ω, F) is just ([0, 1], B).

Theorem 4.7. Let P be a probability distribution on ([0, 1], B). Let Σ ⊂ B

be a sub σ-field. There exists a family of probability distributions Qx on

([0, 1], B) such that for every A ∈ B, Qx (A) is Σ measurable and for every B

measurable f ,

$$\int f(y)\,Q_x(dy) = E^P[f(\cdot)|\Sigma] \quad \text{a.e. } P. \tag{4.3}$$

If in addition Σ is countably generated, i.e. there is a field Σ0 consisting of a countable number of Borel subsets of [0, 1] such that the σ-field generated by Σ0 is Σ, then

$$Q_x(A) = 1_A(x) \quad \text{for all } A \in \Sigma. \tag{4.4}$$


Proof. The trick is not to be too ambitious in the first place but try to

construct the conditional expectations

$$Q(\omega, B) = E\{\chi_B(\omega)|\Sigma\}$$

only for sets B given by B = (−∞, x] for rational x. We call our conditional expectation, which is in fact a conditional probability, by F(ω, x). By the properties of conditional expectations for any pair of rationals x < y, there is a null set $E_{x,y}$, such that for $\omega \notin E_{x,y}$

$$F(\omega, x) \le F(\omega, y).$$

Moreover for any rational x < 0, there is a null set Nx outside which F(ω, x) = 0 and similar null sets Nx for x > 1, outside which F(ω, x) = 1. If we collect all these null sets, of which there are only countably many, and take their union, we get a null set N ∈ Σ such that for ω ∉ N, we have a family F(ω, x) defined for rational x that satisfies

$$F(\omega, x) \le F(\omega, y) \quad \text{if } x < y \text{ are rational}$$

$$F(\omega, x) = 0 \quad \text{for rational } x < 0$$

$$F(\omega, x) = 1 \quad \text{for rational } x > 1$$

$$P(A \cap [0, x]) = \int_A F(\omega, x)\,dP \quad \text{for all } A \in \Sigma.$$

For ω ∉ N and real y we can define

$$G(\omega, y) = \lim_{x \downarrow y,\ x \text{ rational}} F(\omega, x).$$

For ω ∉ N, G is a right continuous nondecreasing function (distribution

function) with G(ω, y) = 0 for y < 0 and G(ω, y) = 1 for y ≥ 1. There is

then a probability measure Q̂(ω, B) on the Borel subsets of [0, 1] such that

Q̂(ω, [0, y]) = G(ω, y) for all y. Q̂ is our candidate for regular conditional

probability. Clearly Q̂(ω, I) is Σ measurable for all intervals I and by standard arguments will continue to be Σ measurable for all Borel sets B ∈ F .

If we check that

$$P(A \cap [0, x]) = \int_A G(\omega, x)\,dP \quad \text{for all } A \in \Sigma$$

for all 0 ≤ x ≤ 1 then

$$P(A \cap I) = \int_A \hat{Q}(\omega, I)\,dP \quad \text{for all } A \in \Sigma$$

for all intervals I and by standard arguments this will extend to finite disjoint

unions of half open intervals that constitute a field and finally to the σ-field

F generated by that field. To verify that for all real y,

$$P(A \cap [0, y]) = \int_A G(\omega, y)\,dP \quad \text{for all } A \in \Sigma$$

we start from

$$P(A \cap [0, x]) = \int_A F(\omega, x)\,dP \quad \text{for all } A \in \Sigma$$

valid for rational x and let x ↓ y through rationals. From the countable additivity of P the left hand side converges to P(A ∩ [0, y]) and by the bounded convergence theorem, the right hand side converges to $\int_A G(\omega, y)\,dP$ and we are done.

Finally from the uniqueness of the conditional expectation if A ∈ Σ

$$\hat{Q}(\omega, A) = \chi_A(\omega)$$

provided $\omega \notin N_A$, which is a null set that depends on A. We can take a countable set Σ0 of generators A that forms a field and get a single null set N such that if ω ∉ N

$$\hat{Q}(\omega, A) = \chi_A(\omega)$$

for all A ∈ Σ0. Since both sides are countably additive measures in A and as they agree on Σ0 they have to agree on Σ as well.

Exercise 4.10. (Disintegration Theorem.) Let µ be a probability measure on the plane $R^2$ with a marginal distribution α for the first coordinate. In other words α is such that, for any f that is a bounded measurable function of x,

$$\int_{R^2} f(x)\,d\mu = \int_R f(x)\,d\alpha.$$

Show that there exist measures βx depending measurably on x such that βx[{x} × R] = 1, i.e. βx is supported on the vertical line {(x, y) : y ∈ R}, and $\mu = \int_R \beta_x\,d\alpha$. The converse is of course easier. Given α and βx we can construct a unique µ such that µ disintegrates as expected.

4.4

Markov Chains

One of the ways of generating a sequence of dependent random variables is

to think of a system evolving in time. We have time points that are discrete

say T = 0, 1, · · · , N, · · · . The state of the system is described by a point

x in the state space X of the system. The state space X comes with a

natural σ-field of subsets F . At time 0 the system is in a random state and

its distribution is specified by a probability distribution µ0 on (X , F ). At

successive times T = 1, 2, · · · , the system changes its state and given the past history (x0, · · · , xk−1) of the states of the system at times T = 0, · · · , k − 1 the probability that the system finds itself at time k in a subset A ∈ F is given by πk(x0, · · · , xk−1; A). For each (x0, · · · , xk−1), πk defines a probability measure on (X, F) and for each A ∈ F, πk(x0, · · · , xk−1; A) is assumed to be a measurable function of (x0, · · · , xk−1), on the space $(\mathcal{X}^k, \mathcal{F}^k)$ which is the product of k copies of the space (X, F) with itself. We can inductively define measures µk on $(\mathcal{X}^{k+1}, \mathcal{F}^{k+1})$ that describe the probability distribution of the entire history (x0, · · · , xk) of the system through time k. To go from µk−1 to µk we think of $(\mathcal{X}^{k+1}, \mathcal{F}^{k+1})$ as the product of $(\mathcal{X}^k, \mathcal{F}^k)$ with (X, F) and construct on $(\mathcal{X}^{k+1}, \mathcal{F}^{k+1})$ a probability measure with marginal µk−1 on $(\mathcal{X}^k, \mathcal{F}^k)$ and conditionals πk(x0, · · · , xk−1; ·) on the fibers (x0, · · · , xk−1) × X. This will define µk and the induction can proceed. We may stop at some finite terminal time N or go on indefinitely. If we do go on indefinitely, we will have a consistent family of finite dimensional distributions {µk} on $(\mathcal{X}^{k+1}, \mathcal{F}^{k+1})$ and we may try to use Kolmogorov's theorem to construct a probability measure P on the space $(\mathcal{X}^\infty, \mathcal{F}^\infty)$ of sequences {xj : j ≥ 0} representing the total evolution of the system for all times.

Remark 4.7. Kolmogorov's theorem requires some assumptions on

(X , F ) that are satisfied if X is a complete separable metric space and F

are the Borel sets. However, in the present context, there is a result known

as Tulcea’s theorem (see [8]) that proves the existence of a P on (X ∞ , F ∞ )

for any choice of (X , F ), exploiting the fact that the consistent family of

finite dimensional distributions µk arise from well defined successive regular

conditional probability distributions.

An important subclass is generated when the transition probability depends

on the past history only through the current state. In other words

$$\pi_k(x_0, \cdots, x_{k-1}; \cdot) = \pi_{k-1,k}(x_{k-1}; \cdot).$$

In such a case the process is called a Markov Process with transition probabilities $\pi_{k-1,k}(\cdot, \cdot)$. An even smaller subclass arises when we demand that $\pi_{k-1,k}(\cdot, \cdot)$ be the same for different values of k. A single transition probability π(x, A) and the initial distribution µ0 determine the entire process, i.e. the measure P on $(\mathcal{X}^\infty, \mathcal{F}^\infty)$. Such processes are called time-homogeneous Markov Processes or Markov Processes with stationary transition probabilities.

Chapman-Kolmogorov Equations. If we have the transition probabilities $\pi_{k,k+1}$ of transition from time k to k + 1 of a Markov Chain it is possible to obtain directly the transition probabilities from time k to k + ℓ for any ℓ ≥ 2. We do it by induction on ℓ. Define

$$\pi_{k,k+\ell+1}(x, A) = \int_{\mathcal{X}} \pi_{k,k+\ell}(x, dy)\,\pi_{k+\ell,k+\ell+1}(y, A) \tag{4.5}$$

or equivalently, in a more direct fashion

$$\pi_{k,k+\ell+1}(x, A) = \int_{\mathcal{X}} \cdots \int_{\mathcal{X}} \pi_{k,k+1}(x, dy_{k+1}) \cdots \pi_{k+\ell,k+\ell+1}(y_{k+\ell}, A)$$

Theorem 4.8. The transition probabilities $\pi_{k,m}(\cdot, \cdot)$ satisfy the relations

$$\pi_{k,n}(x, A) = \int_{\mathcal{X}} \pi_{k,m}(x, dy)\,\pi_{m,n}(y, A) \tag{4.6}$$

for any k < m < n and for the Markov Process defined by the one step transition probabilities $\pi_{k,k+1}(\cdot, \cdot)$, for any n > m

$$P[x_n \in A|\Sigma_m] = \pi_{m,n}(x_m, A) \quad \text{a.e.}$$

where Σm is the σ-field of past history up to time m generated by the coordinates x0, x1, · · · , xm.

Proof. The identity is basically algebra. The multiple integral can be carried

out by iteration in any order and after enough variables are integrated we

get our identity. To prove that the conditional probabilities are given by the

right formula we need to establish

$$P[\{x_n \in A\} \cap B] = \int_B \pi_{m,n}(x_m, A)\,dP$$

for all B ∈ Σm and A ∈ F. We write

$$\begin{aligned}
P[\{x_n \in A\} \cap B] &= \int_{\{x_n \in A\} \cap B} dP \\
&= \int \cdots \int_{\{x_n \in A\} \cap B} d\mu_0(x_0)\,\pi_{0,1}(x_0, dx_1) \cdots \pi_{m-1,m}(x_{m-1}, dx_m)\,\pi_{m,m+1}(x_m, dx_{m+1}) \cdots \pi_{n-1,n}(x_{n-1}, dx_n) \\
&= \int \cdots \int_B d\mu_0(x_0)\,\pi_{0,1}(x_0, dx_1) \cdots \pi_{m-1,m}(x_{m-1}, dx_m)\,\pi_{m,m+1}(x_m, dx_{m+1}) \cdots \pi_{n-1,n}(x_{n-1}, A) \\
&= \int \cdots \int_B d\mu_0(x_0)\,\pi_{0,1}(x_0, dx_1) \cdots \pi_{m-1,m}(x_{m-1}, dx_m)\,\pi_{m,n}(x_m, A) \\
&= \int_B \pi_{m,n}(x_m, A)\,dP
\end{aligned}$$

and we are done.

Remark 4.8. If the chain has stationary transition probabilities then the

transition probabilities πm,n (x, dy) from time m to time n depend only on

the difference k = n − m and are given by what are usually called the k step

transition probabilities. They are defined inductively by

$$\pi^{(k+1)}(x, A) = \int_{\mathcal{X}} \pi^{(k)}(x, dy)\,\pi(y, A)$$

and satisfy the Chapman-Kolmogorov equations

$$\pi^{(k+\ell)}(x, A) = \int_{\mathcal{X}} \pi^{(k)}(x, dy)\,\pi^{(\ell)}(y, A) = \int_{\mathcal{X}} \pi^{(\ell)}(x, dy)\,\pi^{(k)}(y, A)$$
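For a finite state space the transition probability is a stochastic matrix, the integral over the intermediate state becomes a sum, and the Chapman-Kolmogorov equations reduce to matrix multiplication. The sketch below assumes a hypothetical three-state chain with a made-up transition matrix and uses exact fractions so that the identities can be tested with equality.

```python
from fractions import Fraction

# Hypothetical one-step transition matrix pi[x][y] on the state space {0, 1, 2}.
pi = [[Fraction(1, 2), Fraction(1, 2), Fraction(0)],
      [Fraction(1, 4), Fraction(1, 2), Fraction(1, 4)],
      [Fraction(0), Fraction(1, 3), Fraction(2, 3)]]

def compose(p, q):
    """(p q)(x, z) = sum over y of p(x, y) q(y, z): integrate out the middle state."""
    n = len(p)
    return [[sum(p[x][y] * q[y][z] for y in range(n)) for z in range(n)]
            for x in range(n)]

def k_step(pi, k):
    """k step transition probabilities, defined inductively for k >= 1."""
    out = pi
    for _ in range(k - 1):
        out = compose(out, pi)
    return out

# Chapman-Kolmogorov with k = 2 and l = 3, in both orders.
lhs = k_step(pi, 5)
print(lhs == compose(k_step(pi, 2), k_step(pi, 3)) == compose(k_step(pi, 3), k_step(pi, 2)))
```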

§

Suppose we have a probability measure P on the product space X ×Y ×Z

with the product σ-field. The Markov property in this context refers to

equality

$$E^P[g(z)|\Sigma_{x,y}] = E^P[g(z)|\Sigma_y] \quad \text{a.e. } P \tag{4.7}$$

for bounded measurable functions g on Z, where we have used $\Sigma_{x,y}$ to denote the σ-field generated by projection on to X × Y and $\Sigma_y$ the corresponding σ-field generated by projection on to Y. The Markov property in the reverse direction is the similar condition for bounded measurable functions f on X.

$$E^P[f(x)|\Sigma_{y,z}] = E^P[f(x)|\Sigma_y] \quad \text{a.e. } P \tag{4.8}$$

They look different. But they are both equivalent to the symmetric condition

$$E^P[f(x)g(z)|\Sigma_y] = E^P[f(x)|\Sigma_y]\,E^P[g(z)|\Sigma_y] \quad \text{a.e. } P \tag{4.9}$$

which says that given the present, the past and future are conditionally

independent. In view of the symmetry it is sufficient to prove the following:

Theorem 4.9. For any P on (X × Y × Z) the relations (4.7) and (4.9) are

equivalent.

Proof. Let us fix f and g. Let us denote the common value in (4.7) by ĝ(y). Then

$$\begin{aligned}
E^P[f(x)g(z)|\Sigma_y] &= E^P\big[E^P[f(x)g(z)|\Sigma_{x,y}]\,\big|\,\Sigma_y\big] && \text{a.e. } P \\
&= E^P\big[f(x)\,E^P[g(z)|\Sigma_{x,y}]\,\big|\,\Sigma_y\big] && \text{a.e. } P \\
&= E^P[f(x)\hat{g}(y)|\Sigma_y] && \text{a.e. } P \text{ (by (4.7))} \\
&= E^P[f(x)|\Sigma_y]\,\hat{g}(y) && \text{a.e. } P \\
&= E^P[f(x)|\Sigma_y]\,E^P[g(z)|\Sigma_y] && \text{a.e. } P
\end{aligned}$$

which is (4.9). Conversely, we assume (4.9) and denote by ḡ(x, y) and ĝ(y) the expressions on the left and right side of (4.7). Let b(y) be a bounded measurable function on Y.

$$\begin{aligned}
E^P[f(x)b(y)\bar{g}(x, y)] &= E^P[f(x)b(y)g(z)] \\
&= E^P\big[b(y)\,E^P[f(x)g(z)|\Sigma_y]\big] \\
&= E^P\big[b(y)\,E^P[f(x)|\Sigma_y]\,E^P[g(z)|\Sigma_y]\big] \\
&= E^P\big[b(y)\,E^P[f(x)|\Sigma_y]\,\hat{g}(y)\big] \\
&= E^P[f(x)b(y)\hat{g}(y)].
\end{aligned}$$

Since f and b are arbitrary this implies that ḡ(x, y) = ĝ(y) a.e. P .
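The symmetric condition (4.9) can be verified directly for a two-step Markov chain on a finite state space, with x playing the role of the past, y the present and z the future. The sketch below assumes a hypothetical two-state chain; the initial distribution, the transition matrix and the test functions f and g are all made up for illustration.

```python
from fractions import Fraction
from itertools import product

# Hypothetical initial distribution and transition matrix on the states {0, 1}.
mu0 = [Fraction(1, 3), Fraction(2, 3)]
pi = [[Fraction(3, 4), Fraction(1, 4)],
      [Fraction(2, 5), Fraction(3, 5)]]

# Joint law of (x, y, z) = (past, present, future) for two steps of the chain.
P = {(x, y, z): mu0[x] * pi[x][y] * pi[y][z]
     for x, y, z in product(range(2), repeat=3)}

def cond_exp_given_y(h, y):
    """E[h(x, y, z) | present = y]; every value of y has positive probability here."""
    mass = sum(p for (x, yy, z), p in P.items() if yy == y)
    return sum(h(x, yy, z) * p for (x, yy, z), p in P.items() if yy == y) / mass

def f(x):
    """An arbitrary bounded function of the past."""
    return Fraction(2 * x + 1)

def g(z):
    """An arbitrary bounded function of the future."""
    return Fraction(5 - 3 * z)

# Check (4.9): given the present, past and future are conditionally independent.
for y in range(2):
    joint = cond_exp_given_y(lambda x, yy, z: f(x) * g(z), y)
    split = (cond_exp_given_y(lambda x, yy, z: f(x), y)
             * cond_exp_given_y(lambda x, yy, z: g(z), y))
    print(y, joint == split)
```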

Let us look at some examples.


1. Suppose we have an urn containing a certain number of balls (nonzero), some red and others green. A ball is drawn at random and its color is noted. Then it is returned to the urn along with an extra ball of the same color. Then a new ball is drawn at random and the process continues ad infinitum. The current state of the system can be characterized by two integers r, g such that r + g ≥ 1. The initial state of the system is some r0, g0 with r0 + g0 ≥ 1. The system can go from (r, g) to either (r + 1, g) with probability $\frac{r}{r+g}$ or to (r, g + 1) with probability $\frac{g}{r+g}$. This is clearly an example of a Markov Chain with stationary transition probabilities.

2. Consider a queue for service in a store. Suppose at each of the times

1, 2, · · · , a random number of new customers arrive and join the

queue. If the queue is non empty at some time, then exactly one

customer will be served and will leave the queue at the next time point.

The distribution of the number of new arrivals is specified by {pj : j ≥

0} where pj is the probability that exactly j new customers arrive

at a given time. The number of new arrivals at distinct times are

assumed to be independent. The queue length is a Markov Chain on

the state space X = {0, 1, · · · } of nonnegative integers. The transition
probabilities π(i, j) are given by $\pi(0, j) = p_j$ because there is no service
and nobody in the queue to begin with and all the new arrivals join
the queue. On the other hand $\pi(i, j) = p_{j-i+1}$ if j + 1 ≥ i ≥ 1 because
one person leaves the queue after being served.

3. Consider a reservoir into which water flows. The amount of additional

water flowing into the reservoir on any given day is random, and has

a distribution α on [0, ∞). The demand is also random for any given

day, with a probability distribution β on [0, ∞). We may also assume

that the inflows and demands on successive days are random variables

ξn and ηn , that have α and β for their common distributions and are all

mutually independent. We may wish to assume a percentage loss due

to evaporation. In any case the storage levels on successive days satisfy the
recurrence relation

$$S_{n+1} = [(1 - p)S_n + \xi_n - \eta_n]^+$$

where p is the fractional loss and we have imposed the condition that the outflow is the
demand unless the stored amount is less than the demand in which case


the outflow is the available quantity. The current amount in storage is

a Markov Process with Stationary transition probabilities.

4. Let X1 , · · · , Xn , · · · be a sequence of independent random variables

with a common distribution α. Let Sn = Y + X1 + · · · + Xn for

n ≥ 1 with S0 = Y where Y is a random variable independent of

X1 , . . . , Xn , . . . with distribution µ. Then Sn is a Markov chain on

R with one step transition probability π(x, A) = α(A − x) and initial
distribution µ. The n step transition probability is $\alpha_n(A - x)$ where
$\alpha_n$ is the n-fold convolution of α. This is often referred to as a random

walk.

The last two examples can be described by models of the type

xn = f (xn−1 , ξn )

where xn is the current state and ξn is some random external disturbance. ξn

are assumed to be independent and identically distributed. They could have

two components like inflow and demand. The new state is a deterministic

function of the old state and the noise.
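The recursive form $x_n = f(x_{n-1}, \xi_n)$ translates directly into a simulation loop. The following is a minimal Python sketch for the reservoir example. The exponential inflow and demand distributions, the loss fraction 0.05, and the names `reservoir_step` and `simulate` are assumptions made only for illustration; the text leaves α, β and p unspecified.

```python
import random

def reservoir_step(s, inflow, demand, loss=0.05):
    """One step of S_{n+1} = [(1 - p) S_n + xi_n - eta_n]^+ with p = loss."""
    return max((1.0 - loss) * s + inflow - demand, 0.0)

def simulate(s0, n_days, seed=0):
    """Iterate x_n = f(x_{n-1}, noise_n), where the noise is (inflow, demand)."""
    rng = random.Random(seed)
    levels = [s0]
    for _ in range(n_days):
        inflow = rng.expovariate(1.0)   # assumed inflow distribution (alpha)
        demand = rng.expovariate(1.2)   # assumed demand distribution (beta)
        levels.append(reservoir_step(levels[-1], inflow, demand))
    return levels

levels = simulate(s0=2.0, n_days=10)
```

The new state depends only on the previous state and on fresh independent noise, which is exactly what makes the sequence of levels a Markov chain with stationary transition probabilities.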

Exercise 4.11. Verify that the first two examples can be cast in the above

form. In fact there is no loss of generality in assuming that ξj are mutually

independent random variables having as common distribution the uniform

distribution on the interval [0, 1].

Given a Markov Chain with stationary transition probabilities π(x, dy) on
a state space (X, F), the behavior of $\pi^{(n)}(x, dy)$ for large n is an important
and natural question. In the best situation of independent random variables
$\pi^{(n)}(x, A) = \mu(A)$ is independent of x as well as n. Hopefully after a long
time the Chain will 'forget' its origins and $\pi^{(n)}(x, \cdot) \to \mu(\cdot)$, in some suitable
sense, for some µ that does not depend on x. If that happens, then from
the relation

$$\pi^{(n+1)}(x, A) = \int \pi^{(n)}(x, dy)\, \pi(y, A),$$

we conclude

$$\mu(A) = \int \pi(y, A)\, d\mu(y) \quad \text{for all } A \in F$$


Measures that satisfy the above property, abbreviated as µπ = µ, are called

invariant measures for the Markov Chain. If we start with the initial distribution µ which is invariant then the probability measure P has µ as marginal

at every time. In fact P is stationary i.e., invariant with respect to time

translation, and can be extended to a stationary process where time runs

from −∞ to +∞.
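For a finite state space the relation µπ = µ can be checked numerically. The sketch below iterates µ ↦ µπ for a small, hypothetical 3-state stochastic matrix (the matrix `P` and the helper `step` are illustrative choices, not part of the text) and arrives at a vector that is left unchanged by one more step.

```python
def step(mu, P):
    """Return mu P, i.e. (mu P)(y) = sum_x mu(x) P[x][y]."""
    n = len(P)
    return [sum(mu[x] * P[x][y] for x in range(n)) for y in range(n)]

# Hypothetical 3-state stochastic matrix: every row sums to 1.
P = [[0.50, 0.50, 0.00],
     [0.25, 0.50, 0.25],
     [0.00, 0.50, 0.50]]

mu = [1.0, 0.0, 0.0]       # start from the point mass at state 0
for _ in range(200):       # mu P^n forgets the starting point
    mu = step(mu, P)

invariant = step(mu, P)    # one more step should change nothing
```

For this particular matrix the limit is (0.25, 0.5, 0.25), and starting from any other initial distribution leads to the same vector.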

4.5

Stopping Times and Renewal Times

One of the important notions in the analysis of Markov Chains is the idea of

stopping times and renewal times. A function

$$\tau(\omega) : \Omega \to \{n : n \ge 0\} \cup \{\infty\}$$

is a random variable defined on the set $\Omega = X^\infty$ such that for every n ≥ 0 the
set {ω : τ(ω) = n} (or equivalently for each n ≥ 0 the set {ω : τ(ω) ≤ n})
is measurable with respect to the σ-field $F_n$ generated by $X_j$ : 0 ≤ j ≤ n.
It is not necessary that τ(ω) < ∞ for every ω. Such random variables τ are
called stopping times. Examples of stopping times are constant times n ≥ 0,
the first visit to a state x, or the second visit to a state x. The important
thing is that in order to decide if τ ≤ n, i.e. to know if whatever is supposed
to happen did happen by time n, the chain need be observed only up to
time n. Examples of τ that are not stopping times are easy to find. The last
time a site is visited is not a stopping time, nor is the first time such that
at the next time one is in a state x. An important fact is that the Markov
property extends to stopping times. Just as we have σ-fields $F_n$ associated
with constant times, we have a σ-field $F_\tau$ associated to any stopping
time. This is the information we have when we observe the chain up to time
τ. Formally

$$F_\tau = \{A : A \in F^\infty \text{ and } A \cap \{\tau \le n\} \in F_n \text{ for each } n\}$$

One can check from the definition that τ is Fτ measurable and so is Xτ on

the set τ < ∞. If τ is the time of first visit to y then τ is a stopping time and

the event that the chain visits a state z before visiting y is Fτ measurable.
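The defining feature of a stopping time, that {τ ≤ n} is decided by the path up to time n, can be seen on concrete paths. In this hedged sketch `first_visit` and `last_visit` are hypothetical helper names and the two paths are made up; they agree up to time 2 and differ afterwards.

```python
def first_visit(path, x):
    """Smallest n >= 0 with path[n] == x, or None if x never appears."""
    for n, state in enumerate(path):
        if state == x:
            return n
    return None

def last_visit(path, x):
    """Largest n with path[n] == x; this needs the whole path."""
    hits = [n for n, state in enumerate(path) if state == x]
    return hits[-1] if hits else None

path_a = [0, 1, 2, 1, 0, 2]
path_b = [0, 1, 2, 0, 0, 0]   # same as path_a up to time 2

same_first = first_visit(path_a, 2) == first_visit(path_b, 2)
same_last = last_visit(path_a, 2) == last_visit(path_b, 2)
```

The first visit to state 2 occurs at time 2 on both paths, so it is determined by the common initial segment. The last visit differs (5 versus 2) even though the paths agree up to time 2, which is why the last visit is not a stopping time.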

Lemma 4.10. ( Strong Markov Property.) At any stopping time τ

the Markov property holds in the sense that the conditional distribution of


Xτ +1 , · · · , Xτ +n , · · · conditioned on Fτ is the same as the original chain

starting from the state x = Xτ on the set τ < ∞. In other words

$$P_x\{X_{\tau+1} \in A_1, \cdots, X_{\tau+n} \in A_n \mid F_\tau\} = \int_{A_1} \cdots \int_{A_n} \pi(X_\tau, dx_1) \cdots \pi(x_{n-1}, dx_n)$$

a.e. on {τ < ∞}.

Proof. Let A ∈ Fτ be given with A ⊂ {τ < ∞}. Then

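$$\begin{aligned}
P_x\{A \cap \{X_{\tau+1} \in A_1, \cdots, X_{\tau+n} \in A_n\}\}
&= \sum_k P_x\{A \cap \{\tau = k\} \cap \{X_{k+1} \in A_1, \cdots, X_{k+n} \in A_n\}\}\\
&= \sum_k \int_{A \cap \{\tau = k\}} \int_{A_1} \cdots \int_{A_n} \pi(X_k, dx_{k+1}) \cdots \pi(x_{k+n-1}, dx_{k+n})\, dP_x\\
&= \int_A \int_{A_1} \cdots \int_{A_n} \pi(X_\tau, dx_1) \cdots \pi(x_{n-1}, dx_n)\, dP_x
\end{aligned}$$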

We have used the fact that if A ∈ Fτ then A ∩ {τ = k} ∈ Fk for every

k ≥ 0.

Remark 4.9. If Xτ = y a.e. with respect to Px on the set τ < ∞, then at

time τ , when it is finite, the process starts afresh with no memory of the past

and will have conditionally the same probabilities in the future as Py . At

such times the process renews itself and these times are called renewal times.

4.6

Countable State Space

From the point of view of analysis a particularly simple situation is when the

state space X is a countable set. It can be taken as the integers {x : x ≥ 1}.

Many applications fall in this category and an understanding of what happens

in this situation will tell us what to expect in general.

The one step transition probability is a matrix π(x, y) with nonnegative entries
such that $\sum_y \pi(x, y) = 1$ for each x. Such matrices are called stochastic


matrices. The n step transition matrix is just the n-th power of the matrix

defined inductively by

$$\pi^{(n+1)}(x, y) = \sum_z \pi^{(n)}(x, z)\, \pi(z, y)$$

To be consistent one defines $\pi^{(0)}(x, y) = \delta_{x,y}$ which is 1 if x = y and 0
otherwise. The problem is to analyse the behaviour for large n of $\pi^{(n)}(x, y)$. A
state x is said to communicate with a state y if $\pi^{(n)}(x, y) > 0$ for some n ≥ 1.

We will assume for simplicity that every state communicates with every other

state. Such Markov Chains are called irreducible. Let us first limit ourselves

to the study of irreducible chains. Given an irreducible Markov chain with

transition probabilities π(x, y) we define fn (x) as the probability of returning

to x for the first time at the n-th step assuming that the chain starts from

the state x. Using the convention that $P_x$ refers to the measure on sequences
for the chain starting from x and $\{X_j\}$ are the successive positions of the
chain

$$f_n(x) = P_x\{X_j \ne x \text{ for } 1 \le j \le n-1 \text{ and } X_n = x\} = \sum_{y_1 \ne x, \cdots, y_{n-1} \ne x} \pi(x, y_1)\, \pi(y_1, y_2) \cdots \pi(y_{n-1}, x)$$

Since the $f_n(x)$ are probabilities of disjoint events, $\sum_n f_n(x) \le 1$. The state x
is called transient if $\sum_n f_n(x) < 1$ and recurrent if $\sum_n f_n(x) = 1$. The

recurrent case is divided into two situations. If we denote by τx = inf{n ≥

1 : Xn = x}, the time of first visit to x, then recurrence is Px {τx < ∞} = 1.

A recurrent state x is called positive recurrent if

$$E^{P_x}\{\tau_x\} = \sum_{n \ge 1} n\, f_n(x) < \infty$$

and null recurrent if

$$E^{P_x}\{\tau_x\} = \sum_{n \ge 1} n\, f_n(x) = \infty$$
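For a finite chain the first-return probabilities $f_n(x)$ can be computed by propagating the mass of paths that have avoided x so far. The sketch below is illustrative only: the 3-state matrix `P` and the function name `first_return_probs` are assumptions, not taken from the text.

```python
def first_return_probs(P, x, n_max):
    """Return [f_1(x), ..., f_{n_max}(x)] for a finite stochastic matrix P."""
    states = range(len(P))
    # v[y] = P_x{X_1 != x, ..., X_{k-1} != x, X_k = y} for y != x, starting at k = 1
    v = [P[x][y] if y != x else 0.0 for y in states]
    f = [P[x][x]]                                    # f_1(x)
    for _ in range(n_max - 1):
        f.append(sum(v[y] * P[y][x] for y in states))
        v = [sum(v[y] * P[y][z] for y in states) if z != x else 0.0
             for z in states]
    return f

# Hypothetical irreducible 3-state chain.
P = [[0.50, 0.50, 0.00],
     [0.25, 0.50, 0.25],
     [0.00, 0.50, 0.50]]

f = first_return_probs(P, x=0, n_max=200)
total = sum(f)                                            # close to 1: recurrent
mean_return = sum(n * fn for n, fn in enumerate(f, start=1))  # close to E{tau_x}
```

For this matrix the sum of the $f_n(0)$ is 1 up to rounding and the mean return time to state 0 is 4, so state 0 is positive recurrent.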

Lemma 4.11. If for a (not necessarily irreducible) chain starting from x, the

probability of ever visiting y is positive then so is the probability of visiting y

before returning to x.


Proof. Assume that for the chain starting from x the probability of visiting

y before returning to x is zero. But when it returns to x it starts afresh and

so will not visit y until it returns again. This reasoning can be repeated and

so the chain will have to visit x infinitely often before visiting y. But this

will use up all the time and so it cannot visit y at all.

Lemma 4.12. For an irreducible chain all states x are of the same type.

Proof. Let x be recurrent and y be given. Since the chain is irreducible, for

some k, π (k) (x, y) > 0. By the previous lemma, for the chain starting from x,

there is a positive probability of visiting y before returning to x. After each

successive return to x, the chain starts afresh and there is a fixed positive

probability of visiting y before the next return to x. Since there are infinitely

many returns to x, y will be visited infinitely many times as well. That is, y is
also a recurrent state.

We now prove that if x is positive recurrent then so is y. We saw already

that the probability p = Px {τy < τx } of visiting y before returning to x is

positive. Clearly

$$E^{P_x}\{\tau_x\} \ge P_x\{\tau_y < \tau_x\}\, E^{P_y}\{\tau_x\}$$

and therefore

$$E^{P_y}\{\tau_x\} \le \frac{1}{p}\, E^{P_x}\{\tau_x\} < \infty.$$

On the other hand we can write

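$$\begin{aligned}
E^{P_x}\{\tau_y\} &\le \int_{\tau_y < \tau_x} \tau_x\, dP_x + \int_{\tau_x < \tau_y} \tau_y\, dP_x\\
&= \int_{\tau_y < \tau_x} \tau_x\, dP_x + \int_{\tau_x < \tau_y} \{\tau_x + E^{P_x}\{\tau_y\}\}\, dP_x\\
&= \int_{\tau_y < \tau_x} \tau_x\, dP_x + \int_{\tau_x < \tau_y} \tau_x\, dP_x + (1 - p)\, E^{P_x}\{\tau_y\}\\
&= \int \tau_x\, dP_x + (1 - p)\, E^{P_x}\{\tau_y\}
\end{aligned}$$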

by the renewal property at the stopping time τx . Therefore

$$E^{P_x}\{\tau_y\} \le \frac{1}{p}\, E^{P_x}\{\tau_x\}.$$

We also have

$$E^{P_y}\{\tau_y\} \le E^{P_y}\{\tau_x\} + E^{P_x}\{\tau_y\} \le \frac{2}{p}\, E^{P_x}\{\tau_x\}$$


proving that y is positive recurrent.

Transient Case: We have the following theorem regarding transience.

Theorem 4.13. An irreducible chain is transient if and only if

$$G(x, y) = \sum_{n=0}^{\infty} \pi^{(n)}(x, y) < \infty \quad \text{for all } x, y.$$

Moreover for any two distinct states x and y,

$$G(x, y) = f(x, y)\, G(y, y)$$

and

$$G(x, x) = \frac{1}{1 - f(x, x)}$$

where f (x, y) = Px {τy < ∞}.

Proof. Each time the chain returns to x there is a probability 1 − f (x, x) of

never returning. The number of returns has then the geometric distribution

$$P_x\{\text{exactly } n \text{ returns to } x\} = (1 - f(x, x))\, f(x, x)^n$$

and the expected number of returns is given by

$$\sum_{k=1}^{\infty} \pi^{(k)}(x, x) = \frac{f(x, x)}{1 - f(x, x)}.$$

The left hand side comes from the calculation

$$E^{P_x}\Big[\sum_{k=1}^{\infty} \chi_{\{x\}}(X_k)\Big] = \sum_{k=1}^{\infty} \pi^{(k)}(x, x)$$

and the right hand side from the calculation of the mean of a Geometric

distribution. Since we count the visit at time 0 as a visit to x we add 1 to

both sides to get our formula. If we want to calculate the expected number of

visits to y when we start from x, first we have to get to y and the probability

of that is f (x, y). Then by the renewal property it is exactly the same as the

expected number of visits to y starting from y, including the visit at time 0

and that equals G(y, y).
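The identity G(x, x) = 1/(1 − f(x, x)) can be checked on a chain where both sides are computable. As an illustration outside the text, take the walk on the integers that steps +1 with probability p and −1 with probability 1 − p, for which $\pi^{(2n)}(0, 0) = \binom{2n}{n} p^n (1-p)^n$ and odd-step returns are impossible. The function name and the truncation at 2000 terms are assumptions of this sketch.

```python
def green_at_origin(p, terms=2000):
    """Partial sum of G(0,0) = sum_n pi^(n)(0,0) for the +1/-1 walk on Z."""
    q = 1.0 - p
    total, term = 0.0, 1.0   # term = C(2n, n) (p q)^n, starting with n = 0
    for n in range(terms):
        total += term
        # ratio C(2n+2, n+1) / C(2n, n) = (2n+1)(2n+2) / (n+1)^2
        term *= (2 * n + 1) * (2 * n + 2) / ((n + 1) ** 2) * p * q
    return total

G = green_at_origin(0.7)
f_return = 1.0 - 1.0 / G     # solve G = 1 / (1 - f) for f = f(0, 0)
```

With p = 0.7 the sum converges to 2.5 = 1/|p − (1 − p)|, so the return probability is 0.6 < 1 and the walk is transient.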


Before we study the recurrent behavior we need the notion of periodicity.

For each state x let us define Dx = {n : π (n) (x, x) > 0} to be the set of times

at which a return to x is possible if one starts from x. We define dx to be

the greatest common divisor of Dx .

Lemma 4.14. For any irreducible chain dx = d for all x ∈ X and for each

x, Dx contains all sufficiently large multiples of d.

Proof. Let us define

Dx,y = {n : π (n) (x, y) > 0}

so that Dx = Dx,x . By the Chapman-Kolmogorov equations

π (m+n) (x, y) ≥ π (m) (x, z)π (n) (z, y)

for every z, so that if m ∈ Dx,z and n ∈ Dz,y , then m + n ∈ Dx,y . In

particular if m, n ∈ Dx it follows that m + n ∈ Dx . Since any pair of states

communicate with each other, given x, y ∈ X , there are positive integers n1

and n2 such that n1 ∈ Dx,y and n2 ∈ Dy,x . This implies that with the choice

of ℓ = n1 + n2, n + ℓ ∈ Dx whenever n ∈ Dy; similarly n + ℓ ∈ Dy whenever
n ∈ Dx. Since ℓ itself belongs to both Dx and Dy both dx and dy divide ℓ.
Suppose n ∈ Dx. Then n + ℓ ∈ Dy and therefore dy divides n + ℓ. Since dy
divides ℓ, dy must divide n. Since this is true for every n ∈ Dx and dx is the

greatest common divisor of Dx , dy must divide dx . Similarly dx must divide

dy . Hence dx = dy . We now complete the proof of the lemma. Let d be the

greatest common divisor of Dx . Then it is the greatest common divisor of a

finite subset n1 , n2 , · · · , nq of Dx and there will exist integers a1 , a2 , · · · , aq

such that

a1 n1 + a2 n2 + · · · + aq nq = d

Some of the a's will be positive and others negative. Separating them out,
and remembering that all the $n_i$ are divisible by d, we find two integers
md, (m + 1)d such that they both belong to $D_x$. If now n = kd with $k > m^2$
we can write k = ℓm + r with ℓ ≥ m and a remainder r that is less than
m. Then

$$kd = (\ell m + r)d = \ell m d + r(m + 1)d - r m d = (\ell - r)md + r(m + 1)d \in D_x$$

since ℓ ≥ m > r.
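For a finite chain the greatest common divisor of the possible return times can be estimated by scanning the diagonal entries of the first few matrix powers. This is only a sketch: the function name `period`, the cutoff `n_max`, and the two example matrices are illustrative assumptions, and a finite cutoff inspects only an initial part of $D_x$.

```python
from math import gcd

def period(P, x, n_max=50):
    """gcd of {1 <= n <= n_max : pi^(n)(x, x) > 0} for a finite chain."""
    size = len(P)
    row = [1.0 if y == x else 0.0 for y in range(size)]    # pi^(0)(x, .)
    d = 0
    for n in range(1, n_max + 1):
        # advance one step: row becomes pi^(n)(x, .)
        row = [sum(row[z] * P[z][y] for z in range(size)) for y in range(size)]
        if row[x] > 0:
            d = gcd(d, n)
    return d

shuttle = [[0.0, 1.0], [1.0, 0.0]]   # always jumps to the other state
lazy = [[0.5, 0.5], [1.0, 0.0]]      # may stay put at state 0
```

Here `period(shuttle, 0)` is 2, while both states of `lazy` have period 1, in line with the lemma that all states of an irreducible chain share the same d.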


Remark 4.10. For an irreducible chain the common value d is called the period of the chain and an irreducible chain with period d = 1 is called aperiodic.

The simplest example of a periodic chain is one with 2 states and the chain

shuttles back and forth between the two. π(x, y) = 1 if x ≠ y and 0 if x = y.

A simple calculation yields π (n) (x, x) = 1 if n is even and 0 otherwise. There

is oscillatory behavior in n that persists. The main theorem for irreducible,

aperiodic, recurrent chains is the following.

Theorem 4.15. Let π(x, y) be the one step transition probability for a recurrent aperiodic Markov chain and let $\pi^{(n)}(x, y)$ be the n-step transition
probabilities. If the chain is null recurrent then

$$\lim_{n \to \infty} \pi^{(n)}(x, y) = 0 \quad \text{for all } x, y.$$

If the chain is positive recurrent then of course $E^{P_x}\{\tau_x\} = m(x) < \infty$ for
all x, and in that case

$$\lim_{n \to \infty} \pi^{(n)}(x, y) = q(y) = \frac{1}{m(y)}$$

exists for all x and y, is independent of the starting point x, and $\sum_y q(y) = 1$.

The proof is based on

Theorem 4.16. (Renewal Theorem.) Let $\{f_n : n \ge 1\}$ be a sequence of
nonnegative numbers such that

$$\sum_n f_n = 1, \qquad \sum_n n\, f_n = m \le \infty$$

and the greatest common divisor of $\{n : f_n > 0\}$ is 1. Suppose that $\{p_n : n \ge 0\}$
are defined by $p_0 = 1$ and recursively

$$p_n = \sum_{j=1}^{n} f_j\, p_{n-j} \tag{4.10}$$

Then

$$\lim_{n \to \infty} p_n = \frac{1}{m}$$

where if m = ∞ the right hand side is taken as 0.
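The renewal recursion is easy to iterate numerically, which gives a quick sanity check on the limit 1/m. In the sketch below the distribution `f` (mass 1/2 at 2 and at 3, so that the greatest common divisor is 1 and m = 2.5) and the function name `renewal_sequence` are hypothetical choices made for illustration.

```python
def renewal_sequence(f, n_max):
    """p_0 = 1 and p_n = sum_{j=1}^{n} f_j p_{n-j}; f is a dict {j: f_j}."""
    p = [1.0]
    for n in range(1, n_max + 1):
        p.append(sum(fj * p[n - j] for j, fj in f.items() if j <= n))
    return p

f = {2: 0.5, 3: 0.5}                      # gcd{2, 3} = 1
m = sum(j * fj for j, fj in f.items())    # mean m = 2.5
p = renewal_sequence(f, 200)
```

The first few values oscillate (p_1 = 0, p_2 = 0.5, p_3 = 0.5, p_4 = 0.25), but p_n settles at 1/m = 0.4 for large n.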


Proof. The proof is based on several steps.

Step 1. We have inductively pn ≤ 1. Let a = lim supn→∞ pn . We can

choose a subsequence nk such that pnk → a. We can assume without loss of

generality that pnk +j → qj as k → ∞ for all positive and negative integers j

as well. Of course the limit q0 for j = 0 is a. In relation 4.10 we can pass to

the limit along the subsequence and use the dominated convergence theorem

to obtain

$$q_n = \sum_{j=1}^{\infty} f_j\, q_{n-j} \tag{4.11}$$

valid for −∞ < n < ∞. In particular

$$q_0 = \sum_{j=1}^{\infty} f_j\, q_{-j} \tag{4.12}$$

Step 2: Because $a = \limsup p_n$ we can conclude that $q_j \le a$ for all j. If
we denote by $S = \{n : f_n > 0\}$ then, since $q_0 = a$ and $\sum_j f_j = 1$, equation 4.12
forces $q_{-k} = a$ for k ∈ S. We can then
deduce from equation 4.11 that $q_{-k} = a$ for $k = k_1 + k_2$ with $k_1, k_2 \in S$. By
repeating the same reasoning $q_{-k} = a$ for $k = k_1 + k_2 + \cdots + k_\ell$. By lemma
3.6 because the greatest common factor of the integers in S is 1, there is a $k_0$
such that for $k \ge k_0$, we have $q_{-k} = a$. We now apply the relation 4.11 again
to conclude that $q_j = a$ for all positive as well as negative j.

Step 3: If we add up equation 4.10 for n = 1, · · · , N we get

$$p_1 + p_2 + \cdots + p_N = (f_1 + f_2 + \cdots + f_N) + (f_1 + f_2 + \cdots + f_{N-1})\, p_1 + \cdots + (f_1 + f_2 + \cdots + f_{N-k})\, p_k + \cdots + f_1\, p_{N-1}$$

If we denote by $T_j = \sum_{i=j}^{\infty} f_i$, we have $T_1 = 1$ and $\sum_{j=1}^{\infty} T_j = m$. We can
now rewrite

$$\sum_{j=1}^{N} T_j\, p_{N-j+1} = \sum_{j=1}^{N} f_j$$

Step 4: Because $p_{N-j} \to a$ for every j along the subsequence $N = n_k$, if
$\sum_j T_j = m < \infty$, we can deduce from the dominated convergence theorem
that m a = 1 and we conclude that

$$\limsup_{n \to \infty} p_n = \frac{1}{m}$$


If $\sum_j T_j = \infty$, by Fatou's Lemma a = 0. Exactly the same argument applies
to liminf and we conclude that

$$\liminf_{n \to \infty} p_n = \frac{1}{m}$$

This concludes the proof of the renewal theorem.

We now turn to

Proof. (of Theorem 4.15). If we take a fixed x ∈ X and consider fn =

$P_x\{\tau_x = n\}$, then $f_n$ and $p_n = \pi^{(n)}(x, x)$ are related by (4.10) and $m = E^{P_x}\{\tau_x\}$.

In order to apply the renewal theorem we need to establish that the greatest

common divisor of S = {n : fn > 0} is 1. In general if fn > 0 so is pn . So

the greatest common divisor of S is always larger than that of {n : pn > 0}.

That does not help us because the greatest common divisor of {n : pn > 0}

is 1. On the other hand if fn = 0 unless n = k d for some k, the relation 4.10

can be used inductively to conclude that the same is true of pn . Hence both

sets have the same greatest common divisor. We can now conclude that

$$\lim_{n \to \infty} \pi^{(n)}(x, x) = q(x) = \frac{1}{m(x)}$$

On the other hand if $f_n(x, y) = P_x\{\tau_y = n\}$, then

$$\pi^{(n)}(x, y) = \sum_{k=1}^{n} f_k(x, y)\, \pi^{(n-k)}(y, y)$$

and recurrence implies $\sum_{k=1}^{\infty} f_k(x, y) = 1$ for all x and y. Therefore

$$\lim_{n \to \infty} \pi^{(n)}(x, y) = q(y) = \frac{1}{m(y)}$$

and is independent of x, the starting point. In order to complete the proof

we have to establish that

$$Q = \sum_y q(y) = 1$$

It is clear by Fatou's lemma that

$$\sum_y q(y) = Q \le 1$$


By letting n → ∞ in the Chapman-Kolmogorov equation

$$\pi^{(n+1)}(x, y) = \sum_z \pi^{(n)}(x, z)\, \pi(z, y)$$

and using Fatou's lemma we get

$$q(y) \ge \sum_z \pi(z, y)\, q(z)$$

Summing with respect to y we obtain

$$Q \ge \sum_{z, y} \pi(z, y)\, q(z) = Q$$

and equality holds in this relation. Therefore

$$q(y) = \sum_z \pi(z, y)\, q(z)$$

for every y or q(·) is an invariant measure. By iteration

$$q(y) = \sum_z \pi^{(n)}(z, y)\, q(z)$$

and if we let n → ∞ again an application of the bounded convergence theorem yields

$$q(y) = Q\, q(y)$$

implying Q = 1, since q(y) = 1/m(y) > 0, and we are done.

Let us now consider an irreducible Markov Chain with one step transition

probability π(x, y) that is periodic with period d > 1. Let us choose and fix

a reference point x0 ∈ X . For each x ∈ X let Dx0 ,x = {n : π (n) (x0 , x) > 0}.

Lemma 4.17. If n1 , n2 ∈ Dx0 ,x then d divides n1 − n2 .

Proof. Since the chain is irreducible there is an m such that $\pi^{(m)}(x, x_0) > 0$.

By the Chapman-Kolmogorov equations π (m+ni ) (x0 , x0 ) > 0 for i = 1, 2.

Therefore m + ni ∈ Dx0 = Dx0 ,x0 for i = 1, 2. This implies that d divides

both m + n1 as well as m + n2 . Thus d divides n1 − n2 .


The residues modulo d of all the integers in $D_{x_0, x}$ are the same and equal
some number r(x), satisfying 0 ≤ r(x) ≤ d − 1. By definition $r(x_0) = 0$. Let
us define $X_j = \{x : r(x) = j\}$. Then $\{X_j : 0 \le j \le d - 1\}$ is a partition of X
into disjoint sets with $x_0 \in X_0$.

Lemma 4.18. If x ∈ X , then π (n) (x, y) = 0 unless r(x) + n = r(y) mod d.

Proof. Suppose that x ∈ X and π(x, y) > 0. Then if m ∈ Dx0 ,x then

(m + 1) ∈ Dx0 ,y . Therefore r(x) + 1 = r(y) modulo d. The proof can be

completed by induction. The chain marches through {Xj } in a cyclical way

from a state in Xj to one in Xj+1

Theorem 4.19. Let X be irreducible and positive recurrent with period d.

Then

$$\lim_{\substack{n \to \infty \\ n + r(x) = r(y) \text{ modulo } d}} \pi^{(n)}(x, y) = \frac{d}{m(y)}$$

Of course

$$\pi^{(n)}(x, y) = 0$$

unless n + r(x) = r(y) modulo d.

Proof. If we replace π by π̃ where π̃(x, y) = π (d) (x, y), then π̃(x, y) = 0 unless

both x and y are in the same Xj . The restriction of π̃ to each Xj defines an

irreducible aperiodic Markov chain. Since each time step under π̃ is actually

d units of time we can apply the earlier results and we will get for x, y ∈ Xj

for some j,

$$\lim_{k \to \infty} \pi^{(k d)}(x, y) = \frac{d}{m(y)}$$

We note that

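$$\begin{aligned}
\pi^{(n)}(x, y) &= \sum_{1 \le m \le n} f_m(x, y)\, \pi^{(n-m)}(y, y)\\
f_m(x, y) &= P_x\{\tau_y = m\} = 0 \text{ unless } r(x) + m = r(y) \text{ modulo } d\\
\pi^{(n-m)}(y, y) &= 0 \text{ unless } n - m = 0 \text{ modulo } d\\
\sum_m f_m(x, y) &= 1.
\end{aligned}$$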

The theorem now follows.


Suppose now we have a chain that is not irreducible. Let us collect all

the transient states and call the set Xtr . The complement consists of all the

recurrent states and will be denoted by Xre .

Lemma 4.20. If x ∈ Xre and y ∈ Xtr , then π(x, y) = 0.

Proof. If x is a recurrent state, and π(x, y) > 0, the chain will return to x

infinitely often and each time there is a positive probability of visiting y. By

the renewal property these are independent events and so y will be recurrent

too.

The set of recurrent states $X_{re}$ can be divided into one or more equivalence
classes according to the following procedure. Two recurrent states x and y are
in the same equivalence class if $f(x, y) = P_x\{\tau_y < \infty\}$, the probability of ever
visiting y starting from x, is positive. Because of recurrence if f(x, y) > 0 then
f(x, y) = f(y, x) = 1. The restriction of the chain to a single equivalence class

is irreducible and possibly periodic. Different equivalence classes could have

different periods, some could be positive recurrent and others null recurrent.

We can combine all our observations into the following theorem.

Theorem 4.21. If y is transient then $\sum_n \pi^{(n)}(x, y) < \infty$ for all x. If y
is null recurrent (belongs to an equivalence class that is null recurrent) then
$\pi^{(n)}(x, y) \to 0$ for all x, but $\sum_n \pi^{(n)}(x, y) = \infty$ if x is in the same equivalence
class or $x \in X_{tr}$ with f(x, y) > 0. In all other cases $\pi^{(n)}(x, y) = 0$ for all
n ≥ 1. If y is positive recurrent and belongs to an equivalence class with
period d with $m(y) = E^{P_y}\{\tau_y\}$, then for a nontransient x, $\pi^{(n)}(x, y) = 0$
unless x is in the same equivalence class and r(x) + n = r(y) modulo d. In
such a case,

$$\lim_{\substack{n \to \infty \\ r(x) + n = r(y) \text{ modulo } d}} \pi^{(n)}(x, y) = \frac{d}{m(y)}.$$

If x is transient then

$$\lim_{\substack{n \to \infty \\ n = r \text{ modulo } d}} \pi^{(n)}(x, y) = f(r, x, y)\, \frac{d}{m(y)}$$

where

$$f(r, x, y) = P_x\{X_{kd + r} = y \text{ for some } k \ge 0\}.$$


Proof. The only statement that needs an explanation is the last one. The

chain starting from a transient state x may at some time get into a positive

recurrent equivalence class Xj with period d. If it does, it never leaves that

class and so gets absorbed in that class. The probability of this is f (x, y)

where y can be any state in Xj . However if the period d is greater than

1, there will be cyclical subclasses C1 , · · · , Cd of Xj . Depending on which

subclass the chain enters and when, the phase of its future is determined.

There are d such possible phases. For instance, if the subclasses are ordered

in the correct way, getting into C1 at time n is the same as getting into

C2 at time n + 1 and so on. f (r, x, y) is the probability of getting into the

equivalence class in a phase that visits the cyclical subclass containing y at

times n that are equal to r modulo d.

Example 4.1. (Simple Random Walk).

If $X = Z^d$, the integral lattice in $R^d$, a random walk is a Markov chain
with transition probability π(x, y) = p(y − x) where {p(z)} specifies the
probability distribution of a single step. We will assume for simplicity that
p(z) = 0 except when z ∈ F where F consists of the 2d neighbors of 0 and
$p(z) = \frac{1}{2d}$ for each z ∈ F. For $\xi \in R^d$ the characteristic function $\hat p(\xi)$ of
p(·) is given by $\frac{1}{d}(\cos \xi_1 + \cos \xi_2 + \cdots + \cos \xi_d)$. The chain is easily seen to be
irreducible, but periodic of period 2. Return to the starting point is possible
only after an even number of steps.

$$\pi^{(2n)}(0, 0) = \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} [\hat p(\xi)]^{2n}\, d\xi \simeq \frac{C}{n^{d/2}}.$$

To see this asymptotic behavior let us first note that the integration can be

restricted to the set where |p̂(ξ)| ≥ 1 − δ or near the 2 points (0, 0, · · · , 0)

and (π, π, · · · , π) where |p̂(ξ)| = 1. Since the behaviour is similar at both

points let us concentrate near the origin.

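$$\frac{1}{d} \sum_{j=1}^{d} \cos \xi_j \le 1 - c \sum_j \xi_j^2 \le \exp\Big[-c \sum_j \xi_j^2\Big]$$

for some c > 0 and

$$\Big[\frac{1}{d} \sum_{j=1}^{d} \cos \xi_j\Big]^{2n} \le \exp\Big[-2 n c \sum_j \xi_j^2\Big]$$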


and with a change of variables the upper bound is clear. We have a similar

lower bound as well. The random walk is recurrent if d = 1 or 2 but transient

if d ≥ 3.
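In dimension d = 1 the return probability has the closed form $\pi^{(2n)}(0, 0) = \binom{2n}{n} 4^{-n}$, so the $C / n^{d/2}$ behaviour can be checked directly; by Stirling's formula the constant is $1/\sqrt{\pi}$ in this case. The sketch below is an illustration with hypothetical names, not part of the original argument.

```python
from math import comb, pi, sqrt

def return_prob_1d(n):
    """pi^(2n)(0, 0) = C(2n, n) / 4^n for the simple random walk on Z."""
    return comb(2 * n, n) / 4 ** n

# The ratio to 1 / sqrt(pi n) should approach 1 as n grows.
ratios = [return_prob_1d(n) * sqrt(pi * n) for n in (10, 100, 1000)]

# Partial sums of the return probabilities grow without bound (recurrence).
partial_sum = sum(return_prob_1d(n) for n in range(1, 2001))
```

The ratios increase towards 1, and the partial sums grow like a multiple of $\sqrt{N}$, consistent with recurrence for d = 1.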

Exercise 4.12. If the distribution p(·) is arbitrary, determine when the chain

is irreducible and when it is irreducible and aperiodic.

Exercise 4.13. If $\sum_z z\, p(z) = m \ne 0$ conclude that the chain is transient by
an application of the strong law of large numbers.

Exercise 4.14. If $\sum_z z\, p(z) = m = 0$, and if the covariance matrix given by
$\sum_z z_i z_j\, p(z) = \sigma_{i,j}$ is nondegenerate, show that the transience or recurrence
is determined by the dimension as in the case of the nearest neighbor random
walk.

Exercise 4.15. Can you make sense of the formal calculation

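$$\begin{aligned}
\sum_n \pi^{(n)}(0, 0) &= \sum_n \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} [\hat p(\xi)]^n\, d\xi\\
&= \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} \frac{1}{1 - \hat p(\xi)}\, d\xi\\
&= \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} \text{Real Part}\Big[\frac{1}{1 - \hat p(\xi)}\Big]\, d\xi
\end{aligned}$$

to conclude that a necessary and sufficient condition for transience or recurrence is the convergence or divergence of the integral

$$\int_{T^d} \text{Real Part}\Big[\frac{1}{1 - \hat p(\xi)}\Big]\, d\xi$$

with an integrand

$$\text{Real Part}\Big[\frac{1}{1 - \hat p(\xi)}\Big]$$

that is seen to be nonnegative?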

Hint: Consider instead the sum

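$$\begin{aligned}
\sum_{n=0}^{\infty} \rho^n\, \pi^{(n)}(0, 0) &= \sum_n \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} \rho^n [\hat p(\xi)]^n\, d\xi\\
&= \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} \frac{1}{1 - \rho\, \hat p(\xi)}\, d\xi\\
&= \Big(\frac{1}{2\pi}\Big)^d \int_{T^d} \text{Real Part}\Big[\frac{1}{1 - \rho\, \hat p(\xi)}\Big]\, d\xi
\end{aligned}$$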

for 0 < ρ < 1 and let ρ → 1.

Example 4.2. (The Queue Problem).

In the example of customers arriving, except in the trivial cases of $p_0 = 0$
or $p_0 + p_1 = 1$ the chain is irreducible and aperiodic. Since the service
rate is at most 1, if the arrival rate $m = \sum_j j\, p_j > 1$, then the queue will
get longer and by an application of the law of large numbers it is seen that the
queue length will become infinite as time progresses. This is the transient
behavior of the queue. If m < 1, one can expect the situation to be stable and
there should be an asymptotic distribution for the queue length. If m = 1,
it is the borderline case and one should probably expect this to be the null
recurrent case. The actual proofs are not hard. In time n the actual number
of customers served is at most n because the queue may sometimes be empty.
If $\{\xi_i : i \ge 1\}$ are the numbers of new customers arriving at time i and $X_0$ is
the initial number in the queue, then the number $X_n$ in the queue at time n
satisfies $X_n \ge X_0 + (\sum_{i=1}^{n} \xi_i) - n$ and if m > 1, it follows from the law of large
numbers that $\lim_{n \to \infty} X_n = +\infty$, thereby establishing transience. To prove
positive recurrence when m < 1 it is sufficient to prove that the equations

$$\sum_x q(x)\, \pi(x, y) = q(y)$$

have a nontrivial nonnegative solution such that $\sum_x q(x) < \infty$. We shall
proceed to show that this is indeed the case. Since the equation is linear we
can always normalize the solution so that $\sum_x q(x) = 1$. By iteration

$$\sum_x q(x)\, \pi^{(n)}(x, y) = q(y)$$

for every n. If $\lim_{n \to \infty} \pi^{(n)}(x, y) = 0$ for every x and y, because $\sum_x q(x) =
1 < \infty$, by the bounded convergence theorem the left hand side tends to
0 as n → ∞. Therefore q ≡ 0 and is trivial. This rules out the transient
and the null recurrent case. In our case $\pi(0, y) = p_y$ and $\pi(x, y) = p_{y-x+1}$
if y ≥ x − 1 and x ≥ 1. In all other cases π(x, y) = 0. The equations for
$\{q_x = q(x)\}$ are then

$$q_0\, p_y + \sum_{x=1}^{y+1} q_x\, p_{y-x+1} = q_y \quad \text{for } y \ge 0. \tag{4.13}$$


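Multiplying equation 4.13 by $z^y$ and summing over y from 0 to ∞, we get

$$q_0\, P(z) + \frac{1}{z}\, P(z)\, [Q(z) - q_0] = Q(z)$$

where P(z) and Q(z) are the generating functions

$$P(z) = \sum_{x=0}^{\infty} p_x z^x, \qquad Q(z) = \sum_{x=0}^{\infty} q_x z^x.$$

We can solve for Q to get

$$\begin{aligned}
\frac{Q(z)}{q_0} &= P(z) \Big[1 - \frac{P(z) - 1}{z - 1}\Big]^{-1}\\
&= P(z) \sum_{k=0}^{\infty} \Big[\frac{P(z) - 1}{z - 1}\Big]^k\\
&= P(z) \sum_{k=0}^{\infty} \Big[\sum_{j=1}^{\infty} p_j (1 + z + \cdots + z^{j-1})\Big]^k
\end{aligned}$$

which is a power series in z with nonnegative coefficients. If m < 1, we can let
z → 1 to get

$$\frac{Q(1)}{q_0} = \sum_{k=0}^{\infty} \Big[\sum_{j=1}^{\infty} j\, p_j\Big]^k = \sum_{k=0}^{\infty} m^k = \frac{1}{1 - m} < \infty$$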

proving positive recurrence.
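The computation above also pins down the normalized solution: with Q(1) = 1 it gives $q_0 = 1 - m$, and equation 4.13 can then be solved forward for $q_1, q_2, \cdots$. The sketch below does this for a hypothetical arrival distribution $p_0 = 0.5$, $p_1 = 0.3$, $p_2 = 0.2$ (so m = 0.7); the function name and the truncation level are illustrative assumptions.

```python
def queue_stationary(p, n_max):
    """Solve (4.13) forward for q_1..q_{n_max}, starting from q_0 = 1 - m.

    p = [p_0, p_1, ...] are the arrival probabilities, with p_0 > 0 and m < 1.
    """
    def pj(j):
        return p[j] if 0 <= j < len(p) else 0.0

    m = sum(j * pj(j) for j in range(len(p)))
    q = [1.0 - m]
    for y in range(n_max):
        # (4.13): q_y = q_0 p_y + sum_{x=1}^{y+1} q_x p_{y-x+1}; isolate q_{y+1}
        known = q[0] * pj(y) + sum(q[x] * pj(y - x + 1) for x in range(1, y + 1))
        q.append((q[y] - known) / p[0])
    return q

q = queue_stationary([0.5, 0.3, 0.2], 60)
total = sum(q)
```

For this choice the solution starts 0.3, 0.3, 0.24, 0.096 and then decays geometrically, and the entries sum to 1 up to rounding, as the generating function calculation predicts.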

The case m = 1 is a little bit harder. The calculations carried out earlier
are still valid and we know in this case that there exists q(x) ≥ 0 such that
q(x) < ∞ for each x, $\sum_x q(x) = \infty$, and

$$\sum_x q(x)\, \pi(x, y) = q(y).$$

In other words the chain admits an infinite invariant measure. Such a chain
cannot be positive recurrent. To see this we note

$$q(y) = \sum_x \pi^{(n)}(x, y)\, q(x)$$


and if the chain were positive recurrent

$$\lim_{n \to \infty} \pi^{(n)}(x, y) = \tilde q(y)$$

would exist and $\sum_y \tilde q(y) = 1$. By Fatou's lemma, for any y with $\tilde q(y) > 0$,

$$q(y) \ge \sum_x \tilde q(y)\, q(x) = \infty$$

giving us a contradiction. To decide between transience and null recurrence
a more detailed investigation is needed. We will outline a general procedure.

Suppose we have a state $x_0$ that is fixed and would like to calculate
$F_{x_0}(\ell) = P_{x_0}\{\tau_{x_0} \le \ell\}$. If we can do this, then we can answer questions
about transience, recurrence etc. If $\lim_{\ell \to \infty} F_{x_0}(\ell) < 1$ then the chain is
transient and otherwise recurrent. In the recurrent case the convergence or
divergence of

$$E^{P_{x_0}}\{\tau_{x_0}\} = \sum_{\ell} [1 - F_{x_0}(\ell)]$$

determines if it is positive or null recurrent. If we can determine

$$F_y(\ell) = P_y\{\tau_{x_0} \le \ell\}$$

for $y \ne x_0$, then for ℓ ≥ 1

$$F_{x_0}(\ell) = \pi(x_0, x_0) + \sum_{y \ne x_0} \pi(x_0, y)\, F_y(\ell - 1).$$

We shall outline a procedure for determining for λ > 0,

$$U(\lambda, y) = E^{P_y}\{\exp[-\lambda \tau_{x_0}]\}.$$

Clearly U(x) = U(λ, x) satisfies

$$U(x) = e^{-\lambda} \sum_y \pi(x, y)\, U(y) \quad \text{for } x \ne x_0 \tag{4.14}$$

and U(x0 ) = 1. One would hope that if we solve for these equations then

we have our U. This requires uniqueness. Since our U is bounded in fact by

1, it is sufficient to prove uniqueness within the class of bounded solutions


of equation 4.14. We will now establish that any bounded solution U of
equation 4.14 with $U(x_0) = 1$, is given by

$$U(y) = U(\lambda, y) = E^{P_y}\{\exp[-\lambda \tau_{x_0}]\}.$$

Let us define $E_n = \{X_1 \ne x_0, X_2 \ne x_0, \cdots, X_{n-1} \ne x_0, X_n = x_0\}$. Then
we will prove, by induction, that for any solution U of equation (4.14), with
$U(\lambda, x_0) = 1$,

$$U(y) = \sum_{j=1}^{n} e^{-\lambda j}\, P_y\{E_j\} + e^{-\lambda n} \int_{\tau_{x_0} > n} U(X_n)\, dP_y. \tag{4.15}$$

By letting n → ∞ we would obtain

$$U(y) = \sum_{j=1}^{\infty} e^{-\lambda j}\, P_y\{E_j\} = E^{P_y}\{e^{-\lambda \tau_{x_0}}\}$$

because U is bounded and λ > 0.

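$$\begin{aligned}
\int_{\tau_{x_0} > n} U(X_n)\, dP_y &= e^{-\lambda} \int_{\tau_{x_0} > n} \Big[\sum_z \pi(X_n, z)\, U(z)\Big]\, dP_y\\
&= e^{-\lambda}\, P_y\{E_{n+1}\} + e^{-\lambda} \int_{\tau_{x_0} > n} \Big[\sum_{z \ne x_0} \pi(X_n, z)\, U(z)\Big]\, dP_y\\
&= e^{-\lambda}\, P_y\{E_{n+1}\} + e^{-\lambda} \int_{\tau_{x_0} > n+1} U(X_{n+1})\, dP_y
\end{aligned}$$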

completing the induction argument. In our case, if we take x0 = 0 and try

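$U_\sigma(x) = e^{-\sigma x}$ with σ > 0, for x ≥ 1

$$\begin{aligned}
\sum_y \pi(x, y)\, U_\sigma(y) &= \sum_{y \ge x-1} e^{-\sigma y}\, p_{y-x+1}\\
&= \sum_{y \ge 0} e^{-\sigma (x + y - 1)}\, p_y\\
&= e^{-\sigma x}\, e^{\sigma} \sum_{y \ge 0} e^{-\sigma y}\, p_y = \psi(\sigma)\, U_\sigma(x)
\end{aligned}$$

where

$$\psi(\sigma) = e^{\sigma} \sum_{y \ge 0} e^{-\sigma y}\, p_y.$$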

Let us solve eλ = ψ(σ) for σ which is the same as solving log ψ(σ) = λ for

λ > 0 to get a solution σ = σ(λ) > 0. Then

$$U(\lambda, x) = e^{-\sigma(\lambda) x} = E^{P_x}\{e^{-\lambda \tau_0}\}.$$

We see now that recurrence is equivalent to $\sigma(\lambda) \to 0$ as $\lambda \downarrow 0$ and positive recurrence to $\sigma(\lambda)$ being differentiable at $\lambda = 0$. The function $\log \psi(\sigma)$ is convex and its slope at the origin is $1 - m$. If $m > 1$ it dips below 0 initially for $\sigma > 0$ and then comes back up to 0 for some positive $\sigma_0$ before turning positive for good. In that situation $\lim_{\lambda \downarrow 0} \sigma(\lambda) = \sigma_0 > 0$ and that is transience. If $m < 1$ then $\log \psi(\sigma)$ has a positive slope at the origin and $\sigma'(0) = \frac{1}{\psi'(0)} = \frac{1}{1-m} < \infty$. If $m = 1$, then $\log \psi$ has zero slope at the origin and $\sigma'(0) = \infty$. This concludes the discussion of this problem.
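The three regimes can be seen numerically by solving $\log\psi(\sigma) = \lambda$ for a small $\lambda$. The sketch below uses plain bisection and assumes a finitely supported distribution $\{p_y\}$ with $p_0 > 0$, so that $\log\psi(\sigma) \to \infty$ and a bracket exists. The function names and the three sample distributions are illustrative only.

```python
import math


def log_psi(sigma, p):
    """log psi(sigma) = sigma + log sum_y e^{-sigma y} p_y, with p[y] = p_y."""
    return sigma + math.log(sum(py * math.exp(-sigma * y) for y, py in enumerate(p)))


def sigma_of_lambda(lam, p, iters=200):
    """Positive root of log psi(sigma) = lam for lam > 0, by bisection.

    Assumes p[0] > 0; otherwise log psi stays <= 0 and no bracket is found.
    """
    lo, hi = 0.0, 1.0
    while log_psi(hi, p) < lam:  # grow the bracket until it contains the root
        lo, hi = hi, 2.0 * hi
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if log_psi(mid, p) < lam:
            lo = mid
        else:
            hi = mid
    return hi


lam = 1e-6
for label, p in [
    ("m < 1", [0.6, 0.2, 0.2]),    # m = 0.6
    ("m = 1", [0.5, 0.0, 0.5]),    # m = 1.0
    ("m > 1", [0.25, 0.0, 0.75]),  # m = 1.5
]:
    s = sigma_of_lambda(lam, p)
    print(label, "sigma =", s, "sigma / lambda =", s / lam)
```

Because $\log\psi$ is convex and vanishes at $\sigma = 0$, the level $\lambda > 0$ is crossed exactly once on $(0, \infty)$, so the bisection cannot land on a spurious root. For $m < 1$ the ratio $\sigma/\lambda$ should be close to $1/(1-m)$, for $m = 1$ it should be large, and for $m > 1$ the value of $\sigma$ should stay near a positive $\sigma_0$.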

Example 4.3. (The Urn Problem.)

We now turn to a discussion of the urn problem.

$$\pi(p, q\,;\, p+1, q) = \frac{p}{p+q} \quad \text{and} \quad \pi(p, q\,;\, p, q+1) = \frac{q}{p+q}$$

and $\pi$ is zero otherwise. In this case the equation

$$F(p, q) = \frac{p}{p+q}\, F(p+1, q) + \frac{q}{p+q}\, F(p, q+1) \quad \text{for all } p, q$$

which will play a role later, has lots of solutions. In particular, $F(p, q) = \frac{p}{p+q}$ is one and for any $0 < x < 1$

$$F_x(p, q) = \frac{1}{\beta(p, q)}\, x^{p-1} (1-x)^{q-1}$$

where

$$\beta(p, q) = \frac{\Gamma(p)\,\Gamma(q)}{\Gamma(p+q)}$$

is a solution as well. The former is defined on $p + q > 0$ whereas the latter is

defined only on p > 0, q > 0. Actually if p or q is initially 0 it remains so for


ever and there is nothing to study in that case. If f is a continuous function

on [0, 1] then

$$F_f(p, q) = \int_0^1 F_x(p, q)\, f(x)\, dx$$

is a solution and if we want we can extend $F_f$ by making it $f(1)$ on $q = 0$ and $f(0)$ on $p = 0$. It is a simple exercise to verify

$$\lim_{\substack{p, q \to \infty \\ \frac{p}{p+q} \to x}} F_f(p, q) = f(x)$$

for any continuous $f$ on $[0, 1]$. We will show that the ratio $\xi_n = \frac{p_n}{p_n + q_n}$, which is random, stabilizes asymptotically (i.e. has a limit) to a random variable $\xi$ and if we start from $p, q$ the distribution of $\xi$ is the Beta distribution on $[0, 1]$ with density

$$F_x(p, q) = \frac{1}{\beta(p, q)}\, x^{p-1} (1-x)^{q-1}$$
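That both $\frac{p}{p+q}$ and $F_x(p, q)$ solve the urn equation can be checked numerically. The following is a small sketch using `math.gamma` from the standard library; the helper names and the sample values of $x$, $p$, $q$ are arbitrary choices made for illustration.

```python
import math


def beta_density(x, p, q):
    """F_x(p, q) = x^(p-1) (1-x)^(q-1) / beta(p, q), with beta from the Gamma function."""
    beta = math.gamma(p) * math.gamma(q) / math.gamma(p + q)
    return x ** (p - 1) * (1 - x) ** (q - 1) / beta


def urn_average(F, p, q):
    """Right-hand side of the urn equation: average of F over the two possible moves."""
    return p / (p + q) * F(p + 1, q) + q / (p + q) * F(p, q + 1)


def ratio(p, q):
    """The solution F(p, q) = p / (p + q)."""
    return p / (p + q)


x = 0.3  # arbitrary point in (0, 1), chosen only for illustration


def F_x(p, q):
    """The solution F_x(p, q) at the fixed point x."""
    return beta_density(x, p, q)


for p, q in [(1, 1), (2, 5), (7, 3)]:
    # Both differences should vanish up to rounding error.
    print(p, q, ratio(p, q) - urn_average(ratio, p, q), F_x(p, q) - urn_average(F_x, p, q))
```

The check rests on $\beta(p+1, q) = \frac{p}{p+q}\,\beta(p, q)$, which makes the two terms on the right equal to $x\,F_x(p, q)$ and $(1-x)\,F_x(p, q)$.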

Suppose we have a Markov Chain on some state space $\mathcal{X}$ with transition probability $\pi(x, y)$ and $U(x)$ is a bounded function on $\mathcal{X}$ that solves

$$U(x) = \sum_{y} \pi(x, y)\, U(y).$$

Such functions are called (bounded) harmonic functions for the chain. Consider the random variables $\xi_n = U(X_n)$ for such a harmonic function. The $\xi_n$ are uniformly bounded by the bound for $U$. If we denote $\eta_n = \xi_n - \xi_{n-1}$, an elementary calculation reveals

$$\begin{aligned}
E^{P_x}\{\eta_{n+1}\} &= E^{P_x}\{U(X_{n+1}) - U(X_n)\} \\
&= E^{P_x}\{E^{P_x}\{U(X_{n+1}) - U(X_n) \,|\, \mathcal{F}_n\}\}
\end{aligned}$$

where $\mathcal{F}_n$ is the σ-field generated by $X_0, \cdots, X_n$. But

$$E^{P_x}\{U(X_{n+1}) - U(X_n) \,|\, \mathcal{F}_n\} = \sum_{y} \pi(X_n, y)\,[U(y) - U(X_n)] = 0.$$

A similar calculation shows that

$$E^{P_x}\{\eta_n \eta_m\} = 0$$


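for $m \ne n$. If we write

$$U(X_n) = U(X_0) + \eta_1 + \eta_2 + \cdots + \eta_n$$

this is an orthogonal sum in $L_2[P_x]$ and because $U$ is bounded

$$E^{P_x}\{|U(X_n)|^2\} = |U(x)|^2 + \sum_{i=1}^{n} E^{P_x}\{|\eta_i|^2\} \le C$$

is bounded in $n$. Since the series $\sum_i E^{P_x}\{|\eta_i|^2\}$ therefore converges, the partial sums of the orthogonal sum are Cauchy in $L_2[P_x]$. Therefore $\lim_{n \to \infty} U(X_n) = \xi$ exists in $L_2[P_x]$ and $E^{P_x}\{\xi\} = U(x)$. Actually the limit exists almost surely and we will show it when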

we discuss martingales later. In our example if we take $U(p, q) = \frac{p}{p+q}$, as remarked earlier, this is a harmonic function bounded by 1 and therefore

$$\lim_{n \to \infty} \frac{p_n}{p_n + q_n} = \xi$$

exists in $L_2[P_x]$. Moreover if we take $U(p, q) = F_f(p, q)$ for some continuous $f$ on $[0, 1]$, because $F_f(p, q) \to f(x)$ as $p, q \to \infty$ and $\frac{p}{p+q} \to x$, $U(p_n, q_n)$ has a limit as $n \to \infty$ and this limit has to be $f(\xi)$. On the other hand

$$E^{P_{p,q}}\{U(p_n, q_n)\} = U(p_0, q_0) = F_f(p_0, q_0) = \frac{1}{\beta(p_0, q_0)} \int_0^1 f(x)\, x^{p_0 - 1} (1-x)^{q_0 - 1}\, dx$$

giving us

$$E^{P_{p,q}}\{f(\xi)\} = \frac{1}{\beta(p, q)} \int_0^1 f(x)\, x^{p-1} (1-x)^{q-1}\, dx$$

thereby identifying the distribution of $\xi$ under $P_{p,q}$ as the Beta distribution with the right parameters.
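The identification of the limit can be illustrated without simulation by computing the exact law of $p_n$ and comparing moments of $\xi_n$ with those of the Beta distribution. This is a sketch under the assumption of integer starting values; the function names, the starting point $(2, 3)$ and the horizon $n = 400$ are illustrative. The moment formula $E\{x^k\} = \prod_{i<k} \frac{p+i}{p+q+i}$ for the Beta distribution is a standard fact.

```python
def urn_distribution(p0, q0, n):
    """Exact law of p_n after n draws, as a dict {p: probability}.

    After `step` draws the urn holds p0 + q0 + step balls, so q is total - p.
    """
    dist = {p0: 1.0}
    for step in range(n):
        total = p0 + q0 + step
        nxt = {}
        for p, w in dist.items():
            # Move to (p + 1, q) with probability p / (p + q).
            nxt[p + 1] = nxt.get(p + 1, 0.0) + w * p / total
            # Move to (p, q + 1) with probability q / (p + q).
            nxt[p] = nxt.get(p, 0.0) + w * (total - p) / total
        dist = nxt
    return dist


def beta_moment(k, p, q):
    """k-th moment of the Beta(p, q) law: product over i < k of (p + i) / (p + q + i)."""
    out = 1.0
    for i in range(k):
        out *= (p + i) / (p + q + i)
    return out


p0, q0, n = 2, 3, 400
dist = urn_distribution(p0, q0, n)
total = p0 + q0 + n
for k in (1, 2, 3):
    moment_n = sum(w * (p / total) ** k for p, w in dist.items())
    print(k, moment_n, beta_moment(k, p0, q0))
```

The first moment matches exactly for every $n$, which is the harmonic property of $\frac{p}{p+q}$; the higher moments of $\xi_n$ approach the Beta moments as $n$ grows.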

Example 4.4. (Branching Process). Consider a population in which each individual member replaces itself at the beginning of each day by a random number of offspring. Every individual has the same probability distribution, but the numbers of offspring for different individuals are distributed independently of each other. The distribution of the number $N$ of offspring is given by $P[N = i] = p_i$ for $i \ge 0$. If there are $X_n$ individuals in the population on a given day, then the number of individuals $X_{n+1}$ present on the next day has the representation

$$X_{n+1} = N_1 + N_2 + \cdots + N_{X_n}$$


as the sum of $X_n$ independent random variables each having the offspring distribution $\{p_i : i \ge 0\}$. $X_n$ is seen to be a Markov chain on the set of nonnegative integers. Note that if $X_n$ ever becomes zero, i.e. if every member on a given day produces no offspring, then the population remains extinct.

If one uses generating functions, then the transition probabilities $\pi_{i,j}$ of the chain are given by

$$\sum_{j} \pi_{i,j}\, z^j = \Big[\sum_{j} p_j\, z^j\Big]^i.$$

What is the long time behavior of the chain?

Let us denote by $m$ the expected number of offspring of any individual, i.e.

$$m = \sum_{i \ge 0} i\, p_i.$$

Then

$$E[X_{n+1} \,|\, \mathcal{F}_n] = m X_n.$$
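Both the generating function identity and the relation $E[X_{n+1} | \mathcal{F}_n] = m X_n$ can be checked on a small case. The sketch below assumes a finitely supported offspring distribution and computes row $i$ of the transition matrix as the coefficients of the $i$-th power of the offspring generating function; the helper names and the particular distribution are hypothetical.

```python
def poly_mul(a, b):
    """Coefficients of the product of two polynomials given as coefficient lists."""
    out = [0.0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out


def transition_row(offspring, i):
    """Row pi_{i, .}: coefficients of (sum_j p_j z^j)^i, so that pi_{0,0} = 1."""
    row = [1.0]
    for _ in range(i):
        row = poly_mul(row, offspring)
    return row


offspring = [0.25, 0.5, 0.25]  # hypothetical p_0, p_1, p_2
m = sum(j * pj for j, pj in enumerate(offspring))

i = 3
row = transition_row(offspring, i)
row_mean = sum(j * w for j, w in enumerate(row))
print("row sums to", sum(row))
print("mean of X_{n+1} given X_n = i:", row_mean, "and m * i:", m * i)
```

The row for $i = 0$ is the single entry $\pi_{0,0} = 1$, which is the statement that an extinct population stays extinct.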

1. If m < 1, then the population becomes extinct sooner or later. This is

easy to see. Consider

X

X

E[

Xn |F0 ] =

mn X0 =

n≥0

n≥0