Java Threads (a review, and some new things)

... At this point, it seems that we throw a bunch of threads in, and we don’t really know what happens. To some extent that is true, but we have ways to have some ...

Threads Threads, User vs. Kernel Threads, Java Threads, Threads

... Question: what will the output be? Answer: impossible to tell for sure. If you know the implementation of the JVM on your particular machine, then you may be able to tell. But if you write this code to be run anywhere, then you can’t expect to know what happens ...
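
The snippet above refers to code that is not reproduced in this listing. As a stand-in, here is a minimal sketch of the kind of program it describes; the class name `InterleavingDemo` and the method `runOnce` are hypothetical names chosen for illustration. Two threads record labelled steps into a shared log, and the order of the entries depends on how the JVM and operating system schedule them.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

public class InterleavingDemo {

    // Runs two threads that each record three labelled steps and returns
    // the order in which the steps were actually recorded.
    static List<String> runOnce() {
        // Synchronized wrapper: each append is thread-safe, but the order of
        // appends still depends on how the scheduler interleaves the threads.
        List<String> log = Collections.synchronizedList(new ArrayList<>());

        Runnable worker = () -> {
            String name = Thread.currentThread().getName();
            for (int i = 0; i < 3; i++) {
                log.add(name + ":" + i);
                Thread.yield(); // only a hint; the scheduler may ignore it
            }
        };

        Thread a = new Thread(worker, "A");
        Thread b = new Thread(worker, "B");
        a.start();
        b.start();
        try {
            a.join(); // wait for both threads before reading the log
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return new ArrayList<>(log);
    }

    public static void main(String[] args) {
        // All six entries are always present; how the A and B entries
        // interleave may differ between runs, JVMs, and machines.
        System.out.println(runOnce());
    }
}
```

Only the order within a single thread is fixed (`A:0` always precedes `A:1`); nothing in the program constrains how the entries of `A` and `B` are interleaved.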

Concurrent Programming in Java

... machines Cons: a. Harder to program b. High memory usage due to switching, instantiation, and synchronization c. Race condition ...

Threads

... The serial portion of an application has a disproportionate effect on the performance gained by adding additional cores. But does the law take into account contemporary multicore systems? No ...

Threading A thread is a thread of execution in a program. The Java

... Even a single application is often expected to do more than one thing at a time. For example, that streaming audio application must simultaneously read the digital audio off the network, decompress it, manage playback, and update its display. Even the word processor should always be ready to respond ...

Threads - Wikispaces

... Both M:M and Two-level models require communication to maintain the appropriate number of kernel threads allocated to the application Many systems use an intermediate data structure between user and kernel threads – ...

Threads

... Apple technology for Mac OS X and iOS operating systems. Extensions to C, C++ languages, API, and run-time library. Allows identification of parallel sections. Manages most of the details of threading. Block is in “^{ }”: ^{ printf("I am a block"); } ...

ppt - Dr. Sadi Evren SEKER

... Thread creation is done through the clone() system call. clone() allows a child task to share the address space of the ...

Chapter 4: Threads

... Economy – cheaper than process creation, thread switching lower overhead than context switching ...

ppt

... Thread creation is done through the clone() system call. clone() allows a child task to share the address space of the ...

Chapter 4: Threads

... Thread creation is done through the clone() system call. clone() allows a child task to share the address space of the ...

Threads

... Economy – cheaper than process creation, thread switching lower overhead than context switching ...

Threads - TheToppersWay

... To explore several strategies that provide implicit threading. To examine issues related to multithreaded programming. To cover operating system support for threads in Windows and ...

Win32 Programming

... There is actually a structure for the thread context documented by Microsoft… ooh! Declared in winnt.h (see here for details) So, if we wanted to we could stop a running thread and modify its context… well, let’s look at the example in the book… ...

Threads

... • with other threads of the process thread B thread A shares process resources executes concurrently can block, fork, terminate, synchronize ...

on page 2-2

... The VDK scheduler can be disabled by entering an unscheduled region. The ability to disable scheduling is necessary when you need to free multiple system resources without being switched out, or access global variables that are modified by other threads without preventing interrupts from being servi ...

Threads - McMaster Computing and Software

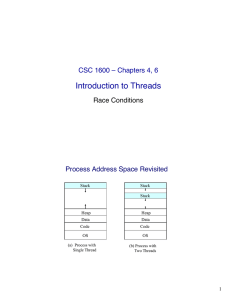

... Multi-threaded applications have multiple threads within a single ...

ppt - TAMU Computer Science Faculty Pages

... ignored while reasoning about program behavior • Declarative/pure languages are not the mainstream. Imperative languages with threads as their main model for concurrency dominate ...

Introduction to Threads

... API for thread creation and synchronization. Common in UNIX operating systems ...

CS2504, Spring`2007 ©Dimitris Nikolopoulos

... the layers above it. Example: binary code, instruction set, high-level programming language, component programming. Simplifies computing tasks, such as programming, compiling, running programs. CS has been raising the level of abstraction for almost 50 years. Some indications that this trend may change ...

Thread Basics

... Processes are inert – they are simply a container for one or more threads. Always solve a problem by adding threads, not processes, if you possibly can! ...

Parallel computing

Parallel computing is a type of computation in which many calculations are carried out simultaneously, operating on the principle that large problems can often be divided into smaller ones, which are then solved at the same time. There are several different forms of parallel computing: bit-level, instruction-level, data, and task parallelism. Parallelism has been employed for many years, mainly in high-performance computing, but interest in it has grown lately due to the physical constraints preventing frequency scaling. As power consumption (and consequently heat generation) by computers has become a concern in recent years, parallel computing has become the dominant paradigm in computer architecture, mainly in the form of multi-core processors.

Parallel computing is closely related to concurrent computing. The two are frequently used together and often conflated, but they are distinct: it is possible to have parallelism without concurrency (such as bit-level parallelism), and concurrency without parallelism (such as multitasking by time-sharing on a single-core CPU).

In parallel computing, a computational task is typically broken down into several, often many, very similar subtasks that can be processed independently and whose results are combined afterwards, upon completion. In contrast, in concurrent computing, the various processes often do not address related tasks; when they do, as is typical in distributed computing, the separate tasks may have a varied nature and often require some inter-process communication during execution.

Parallel computers can be roughly classified according to the level at which the hardware supports parallelism, with multi-core and multi-processor computers having multiple processing elements within a single machine, while clusters, MPPs, and grids use multiple computers to work on the same task. Specialized parallel computer architectures are sometimes used alongside traditional processors for accelerating specific tasks.

In some cases parallelism is transparent to the programmer, such as in bit-level or instruction-level parallelism, but explicitly parallel algorithms, particularly those that use concurrency, are more difficult to write than sequential ones, because concurrency introduces several new classes of potential software bugs, of which race conditions are the most common. Communication and synchronization between the different subtasks are typically some of the greatest obstacles to getting good parallel program performance.

A theoretical upper bound on the speed-up of a single program as a result of parallelization is given by Amdahl's law.
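
To make the bound concrete, here is a small sketch of Amdahl's law in its usual form, speedup = 1 / ((1 - p) + p / n), where p is the fraction of the program that can be parallelized and n is the number of processors. The class name `AmdahlDemo`, the method `speedup`, and the sample value p = 0.9 are illustrative assumptions, not taken from the text above.

```java
public class AmdahlDemo {

    // Amdahl's law: speedup = 1 / ((1 - p) + p / n)
    //   p = fraction of the work that can run in parallel (0.0 to 1.0)
    //   n = number of processors (at least 1)
    static double speedup(double parallelFraction, int processors) {
        if (parallelFraction < 0.0 || parallelFraction > 1.0 || processors < 1) {
            throw new IllegalArgumentException(
                "parallelFraction must be in [0, 1] and processors must be >= 1");
        }
        double serialFraction = 1.0 - parallelFraction;
        return 1.0 / (serialFraction + parallelFraction / processors);
    }

    public static void main(String[] args) {
        double p = 0.9; // assumed: 90% of the work is parallelizable
        int[] processorCounts = {1, 2, 4, 8, 16, 1024};
        for (int n : processorCounts) {
            System.out.printf("n = %4d  speedup = %.3f%n", n, speedup(p, n));
        }
        // With p = 0.9 the speedup can never reach 1 / (1 - p) = 10,
        // no matter how many processors are added.
    }
}
```

The serial fraction sets the ceiling: as n grows, the term p / n shrinks toward zero and the speedup approaches 1 / (1 - p).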