EC220 - Web del Profesor

... because their values are predetermined or given. Variables can be exogenous or endogenous. An endogenous variable is one whose value is determined within the system, while an exogenous variable is one whose value is determined outside the system. For instance, in some simple economic models, ...

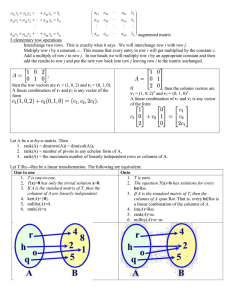

Solutions of Systems of Linear Equations in a Finite Field Nick

... Linear algebra has roots which date back as far as the birth of calculus. In the late 1600s, Leibniz used coefficients of linear equations in much of his work, and later in the 1700s Lagrange further developed the idea of determinants through his Lagrangian multipliers. The term “matrix” wasn’t a ...

X - studyfruit

... A generalized eigenvector of A is a nonzero vector v, associated with an eigenvalue λ of algebraic multiplicity k ≥ 1, satisfying (A − λI)^k v = 0. The set spanned by all generalized eigenvectors for a given λ forms the generalized eigenspace for λ. Solve (A − λI) v2 = v1 for v2, where v1 is the first eigenvector, and v2 is the generalized ...

Proofs Homework Set 5

... (a) Show that if A ∼ B (that is, if they are row equivalent), then EA = B for some matrix E which is a product of elementary matrices. Proof. By definition, if A ∼ B, there is some sequence of elementary row operations which, when performed on A, produces B. Further, multiplying on the left by the co ...
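As a rough illustration of the claim in this excerpt, the sketch below builds the elementary matrices for two row operations and checks that their product E satisfies EA = B. The matrix `A`, the chosen row operations, and the helper names (`identity`, `matmul`, `swap_matrix`, `add_multiple_matrix`) are assumptions made for illustration; none of them come from the homework set.

```python
def identity(n):
    """Return the n x n identity matrix as a list of rows."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def swap_matrix(n, r, s):
    """Elementary matrix that swaps rows r and s."""
    E = identity(n)
    E[r], E[s] = E[s], E[r]
    return E

def add_multiple_matrix(n, target, source, c):
    """Elementary matrix that adds c times row `source` to row `target`."""
    E = identity(n)
    E[target][source] = c
    return E

# Hypothetical example matrix (not from the source).
A = [[2, 4],
     [1, 3]]

E1 = swap_matrix(2, 0, 1)                 # first operation: swap rows 0 and 1
E2 = add_multiple_matrix(2, 1, 0, -2)     # second operation: row1 <- row1 - 2*row0

# Operations applied first sit rightmost in the product.
E = matmul(E2, E1)
B = matmul(E, A)

# Applying the same operations one at a time gives the same matrix.
step_by_step = matmul(E2, matmul(E1, A))
print(E, B, step_by_step == B)
```

The ordering is the point to notice: the operation performed first corresponds to the rightmost factor of E.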

Document

... Now using the previous problem we will allow the calculator to do the work. Enter this as matrix A in the calc ...

1. (a) Solve the system: x1 + x2 − x3 − 2x4 + x5 = 1, 2x1 + x2 + x3 +

... (b) Is V closed under scalar multiplication? Justify. (c) Is V closed under vector addition? Justify. (d) Is V a subspace of R^4? 13. Let P: x − 4y + 2z = 3 be a plane in R^3. ...

Matrix Multiplication

... is a matrix with three rows and four columns (we would call this a 3 by 4 matrix). Matrices are usually denoted in print by bolded upper case characters. The individual entries in a matrix are called its elements, and are often referred to using a subscript notation indicating the row and column in ...

Matrices Linear equations Linear Equations

... 2. Find the roots (eigenvalues) of the polynomial, i.e. the values for which the determinant = 0. 3. For each eigenvalue, solve equation (1). For larger matrices: alternative ways of computation ...
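The steps listed in this excerpt can be sketched for the 2×2 case, where the characteristic polynomial det(A − λI) = λ² − tr(A)·λ + det(A) is a quadratic. The example matrix and the function names `eigenvalues_2x2` and `eigenvector_2x2` are assumptions for illustration. The excerpt does not show its equation (1), so the sketch assumes it is (A − λI)v = 0, and it handles real eigenvalues only.

```python
import math

def eigenvalues_2x2(A):
    """Roots of the characteristic polynomial of a real 2x2 matrix."""
    (a, b), (c, d) = A
    trace = a + d
    det = a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    return ((trace - root) / 2, (trace + root) / 2)

def eigenvector_2x2(A, lam):
    """Return a nonzero v with (A - lam*I) v = 0, assuming lam is an eigenvalue."""
    (a, b), (c, d) = A
    # A vector (q, -p) is orthogonal to the row (p, q); because A - lam*I is
    # singular, a vector annihilated by one nonzero row is annihilated by both.
    if (a - lam) != 0 or b != 0:
        return (b, lam - a)
    if c != 0 or (d - lam) != 0:
        return (d - lam, -c)
    return (1.0, 0.0)  # A - lam*I is the zero matrix, so any nonzero v works

# Hypothetical example matrix (not from the source).
A = [[2, 1],
     [1, 2]]

lams = eigenvalues_2x2(A)
vecs = [eigenvector_2x2(A, lam) for lam in lams]
print(lams, vecs)
```

For larger matrices the quadratic formula no longer applies, which is presumably why the excerpt goes on to mention alternative ways of computation.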

GRE math study group Linear algebra examples

... but can we get other dimensions? You certainly can’t get 3, because the intersection can’t be bigger than V or W. That’s enough to answer the question. It’s got to be (D). In fact, two planes through the origin in 4-space can intersect only at the origin. For example, let one plane be the x, y-plan ...
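The claim that two planes in 4-space can meet only at the origin can be checked with the standard dimension formula. The excerpt is cut off before naming the second plane, so the choice of $W$ below (the plane spanned by the last two coordinate axes) is an assumption made for illustration, as is the notation $e_1, \dots, e_4$ for the standard basis of $\mathbb{R}^4$.

```latex
\begin{align*}
V &= \operatorname{span}\{e_1, e_2\}, \qquad
W = \operatorname{span}\{e_3, e_4\}, \qquad
V + W = \mathbb{R}^4, \\
\dim(V \cap W) &= \dim V + \dim W - \dim(V + W) = 2 + 2 - 4 = 0 .
\end{align*}
```

The same formula gives the upper bound used in the excerpt: since $V \cap W \subseteq V$, the intersection of two 2-dimensional subspaces has dimension at most 2.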

M.E. 530.646 Problem Set 1 [REV 1] Rigid Body Transformations

... 2. Polynomials can be conceived as vectors: a. Show that polynomials of a single variable, s, of order n − 1 or less, form a linear vector space (LVS), where vector addition is just the addition of two polynomials, scalar multiplication is just the multiplication of a polynomial by a scalar. For exa ...

118 CARL ECKART AND GALE YOUNG each two

... The availability of the principal axis transformation for hermitian matrices often simplifies the proof of theorems concerning them. In working with non-hermitian matrices (square or rectangular) it was found that a generalization of this transformation has a similar use for them.* A special case ...
