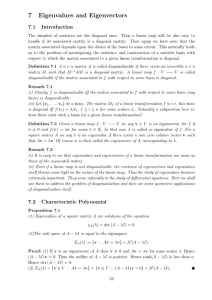

7 Eigenvalues and Eigenvectors

... Definition 7.9 We say A is triangularizable if there exists an invertible matrix C such that C −1 AC is upper triangular. Remark 7.7 Obviously all diagonalizable matrices are triangularizable. The following result says that triangularizability causes least problem: Proposition 7.9 Over the complex n ...

... Definition 7.9 We say A is triangularizable if there exists an invertible matrix C such that C −1 AC is upper triangular. Remark 7.7 Obviously all diagonalizable matrices are triangularizable. The following result says that triangularizability causes least problem: Proposition 7.9 Over the complex n ...

restrictive (usually linear) structure typically involving aggregation

... the first situation in which there exist many consistent solutions. One can think of the entire set of consistent solutions as y = yR + yN where yR is the linear combination of the rows of A consistent with AyR = x and yN = NTk where k is a (n-r)-length vector of free variables or weights on the bas ...

... the first situation in which there exist many consistent solutions. One can think of the entire set of consistent solutions as y = yR + yN where yR is the linear combination of the rows of A consistent with AyR = x and yN = NTk where k is a (n-r)-length vector of free variables or weights on the bas ...

PDF version of lecture with all slides

... Inner product represents a row matrix mul>plied by a column matrix. A row matrix can be mul>plied by a column matrix, in that order, only if they each have the same number of elements! In ...

... Inner product represents a row matrix mul>plied by a column matrix. A row matrix can be mul>plied by a column matrix, in that order, only if they each have the same number of elements! In ...

Solutions of Systems of Linear Equations in a Finite Field Nick

... n ≥ 3. It does, however, follow from the research given here that a similar result should be obtained for matrices of higher dimensions. A singular 2X2 matrix can only have rank equal to one, but higher dimension singular matrices may have rank from 1 to p − 1. Thus for any given value you may have ...

... n ≥ 3. It does, however, follow from the research given here that a similar result should be obtained for matrices of higher dimensions. A singular 2X2 matrix can only have rank equal to one, but higher dimension singular matrices may have rank from 1 to p − 1. Thus for any given value you may have ...

Lab 2 solution

... 7. Prove that a (real) symmetric matrix A is positive semidefinite if and only if all of its eigenvalues are non-negative, and positive definite if and only if all of its eigenvalues are strictly positive. Solution: First note that since A is symmetric we can find an orthogonal P such that P T AP = ...

... 7. Prove that a (real) symmetric matrix A is positive semidefinite if and only if all of its eigenvalues are non-negative, and positive definite if and only if all of its eigenvalues are strictly positive. Solution: First note that since A is symmetric we can find an orthogonal P such that P T AP = ...

product matrix equation - American Mathematical Society

... \-matrix which has an inverse which is also a X-matrix is called unimodular. If TA =B where T, A, and B are X-matrices and T is unimodular, then A is said to be a left associate of B. Every square X-matrix is the left associate of a unique X-matrix of the following form: Every element below the main ...

... \-matrix which has an inverse which is also a X-matrix is called unimodular. If TA =B where T, A, and B are X-matrices and T is unimodular, then A is said to be a left associate of B. Every square X-matrix is the left associate of a unique X-matrix of the following form: Every element below the main ...

Notes on laws of large numbers, quantiles

... • Sensitivity to scaling is not the only pitfall to look out for in using the results of a principal components analysis. If a large number of closely related variables are added to the X vector, the first principal component will eventually reflect mainly the common component of those variables. • ...

... • Sensitivity to scaling is not the only pitfall to look out for in using the results of a principal components analysis. If a large number of closely related variables are added to the X vector, the first principal component will eventually reflect mainly the common component of those variables. • ...

Algebra I

... A pointwise.Let B1 and B2 be bases for C m , C n respectively , and let (A) = ((α) + i(β)) be the matrix of A with respect to them.What is the matrix of RA with respect to RB1 , RB2 ? (b) Denote by CRm the ’complexification’ of Rm constructed as follows:the elements of CRm are (u + iv),u, v in Rm . ...

... A pointwise.Let B1 and B2 be bases for C m , C n respectively , and let (A) = ((α) + i(β)) be the matrix of A with respect to them.What is the matrix of RA with respect to RB1 , RB2 ? (b) Denote by CRm the ’complexification’ of Rm constructed as follows:the elements of CRm are (u + iv),u, v in Rm . ...

Math 312 Lecture Notes Linear Two

... This is the eigenvalue problem for the matrix A. To solve (2), we must find the eigenvalues (λ) and the corresponding eigenvectors (~v) of A. Recall from linear algebra that an n × n matrix has at most n eigenvalues, and always has at least one eigenvalue. Thus for our 2 × 2 system, we expect to fin ...

... This is the eigenvalue problem for the matrix A. To solve (2), we must find the eigenvalues (λ) and the corresponding eigenvectors (~v) of A. Recall from linear algebra that an n × n matrix has at most n eigenvalues, and always has at least one eigenvalue. Thus for our 2 × 2 system, we expect to fin ...

If A and B are n by n matrices with inverses, (AB)-1=B-1A-1

... If W is a finite-dimensional subspace of an inner product space V, and if u is a vector in V, then projWu is the best approximation to u from W in the sense that ||u-projWu||<||u-w|| for every vector w in W that is dfferent from projWu If A is an m by n matrix with linearly independent column vector ...

... If W is a finite-dimensional subspace of an inner product space V, and if u is a vector in V, then projWu is the best approximation to u from W in the sense that ||u-projWu||<||u-w|| for every vector w in W that is dfferent from projWu If A is an m by n matrix with linearly independent column vector ...

Jordan normal form

In linear algebra, a Jordan normal form (often called Jordan canonical form)of a linear operator on a finite-dimensional vector space is an upper triangular matrix of a particular form called a Jordan matrix, representing the operator with respect to some basis. Such matrix has each non-zero off-diagonal entry equal to 1, immediately above the main diagonal (on the superdiagonal), and with identical diagonal entries to the left and below them. If the vector space is over a field K, then a basis with respect to which the matrix has the required form exists if and only if all eigenvalues of the matrix lie in K, or equivalently if the characteristic polynomial of the operator splits into linear factors over K. This condition is always satisfied if K is the field of complex numbers. The diagonal entries of the normal form are the eigenvalues of the operator, with the number of times each one occurs being given by its algebraic multiplicity.If the operator is originally given by a square matrix M, then its Jordan normal form is also called the Jordan normal form of M. Any square matrix has a Jordan normal form if the field of coefficients is extended to one containing all the eigenvalues of the matrix. In spite of its name, the normal form for a given M is not entirely unique, as it is a block diagonal matrix formed of Jordan blocks, the order of which is not fixed; it is conventional to group blocks for the same eigenvalue together, but no ordering is imposed among the eigenvalues, nor among the blocks for a given eigenvalue, although the latter could for instance be ordered by weakly decreasing size.The Jordan–Chevalley decomposition is particularly simple with respect to a basis for which the operator takes its Jordan normal form. The diagonal form for diagonalizable matrices, for instance normal matrices, is a special case of the Jordan normal form.The Jordan normal form is named after Camille Jordan.