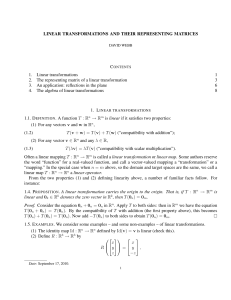

LINEAR TRANSFORMATIONS AND THEIR

... defined above! But $[\,\cdot\,]$ is even better than just a linear map: it has an inverse $L(\cdot) : M_{m \times n}(\mathbb{R}) \to L(\mathbb{R}^n, \mathbb{R}^m)$ that sends a matrix $A \in M_{m \times n}(\mathbb{R})$ to the linear transformation $L_A : \mathbb{R}^n \to \mathbb{R}^m$ given by $L_A(v) = Av$. The fact that $[\,\cdot\,]$ and $L(\cdot)$ are inverses of each other is just the observation (2.1). Since [ ...
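The correspondence between matrices and linear maps described in this excerpt can be sketched in a few lines of Python. This is a minimal illustration, not the source's own construction: the function names `linear_map_from_matrix` and `matrix_from_linear_map` are hypothetical, and the sketch assumes the usual convention that the matrix of a map T has the vectors T(e_j) as its columns, since the excerpt does not show the definition or observation (2.1).

```python
from typing import Callable, List

Vector = List[float]
Matrix = List[List[float]]


def linear_map_from_matrix(A: Matrix) -> Callable[[Vector], Vector]:
    """Play the role of L(A): return the map v -> A v."""
    def L_A(v: Vector) -> Vector:
        # Each output coordinate is the dot product of a row of A with v.
        return [sum(a * x for a, x in zip(row, v)) for row in A]
    return L_A


def matrix_from_linear_map(T: Callable[[Vector], Vector], n: int) -> Matrix:
    """Play the role of [T]: column j is T applied to the j-th standard basis vector.

    Assumes n >= 1 and that T really is linear; this is not checked.
    """
    columns = []
    for j in range(n):
        e_j = [1 if i == j else 0 for i in range(n)]
        columns.append(T(e_j))
    m = len(columns[0])
    # Transpose the list of columns into a list of rows.
    return [[columns[j][i] for j in range(n)] for i in range(m)]


A = [[1, 2, 0],
     [0, -1, 3]]
L_A = linear_map_from_matrix(A)

# Going matrix -> map -> matrix returns the original matrix.
assert matrix_from_linear_map(L_A, 3) == A
```

Recovering `A` from `L_A` only needs the images of the standard basis vectors, which is why the two constructions undo each other.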

Introduction to systems of linear equations

... Introduction to systems of linear equations These slides are based on Section 1 in Linear Algebra and its Applications by David C. Lay. ...

The solutions to the operator equation $TXS^* - SX^*T^* = A$ in Hilbert C

... The equation $TXS^* - SX^*T^* = A$ was studied by Yuan [8] for finite matrices, and Xu et al. [6] generalized the results to Hilbert $C^*$-modules, under the condition that ran(S) is contained in ran(T). When T equals an identity matrix or identity operator, this equation reduces to $XS^* - SX^* = A$, wh ...

On the complexity of integer matrix multiplication

... way, by cutting up each entry into chunks of about n bits, performing FFTs over S, and then multiplying the matrices of Fourier coefficients. When n is much larger than d, the latter step takes negligible time, and the bulk of the time is spent performing FFTs. Since each matrix entry is only transf ...

Notes from Unit 1

... when we know the matrix A and the product vector b, and we want to find the vector x for which Ax = b, or even to know whether such an x exists. If we could “divide by A”, it would be an easy problem, just like 8th grade algebra; but that’s not always possible, and sometimes not easy even when it is ...

Jordan normal form

In linear algebra, a Jordan normal form (often called Jordan canonical form) of a linear operator on a finite-dimensional vector space is an upper triangular matrix of a particular form called a Jordan matrix, representing the operator with respect to some basis. Such a matrix has each non-zero off-diagonal entry equal to 1, immediately above the main diagonal (on the superdiagonal), and with identical diagonal entries to the left and below them.

If the vector space is over a field K, then a basis with respect to which the matrix has the required form exists if and only if all eigenvalues of the matrix lie in K, or equivalently if the characteristic polynomial of the operator splits into linear factors over K. This condition is always satisfied if K is the field of complex numbers. The diagonal entries of the normal form are the eigenvalues of the operator, with the number of times each one occurs being given by its algebraic multiplicity.

If the operator is originally given by a square matrix M, then its Jordan normal form is also called the Jordan normal form of M. Any square matrix has a Jordan normal form if the field of coefficients is extended to one containing all the eigenvalues of the matrix. In spite of its name, the normal form for a given M is not entirely unique, as it is a block diagonal matrix formed of Jordan blocks, the order of which is not fixed; it is conventional to group blocks for the same eigenvalue together, but no ordering is imposed among the eigenvalues, nor among the blocks for a given eigenvalue, although the latter could for instance be ordered by weakly decreasing size.

The Jordan–Chevalley decomposition is particularly simple with respect to a basis for which the operator takes its Jordan normal form. The diagonal form for diagonalizable matrices, for instance normal matrices, is a special case of the Jordan normal form.

The Jordan normal form is named after Camille Jordan.
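
To make the shape of a Jordan matrix concrete, the following Python sketch assembles one from a list of (eigenvalue, block size) pairs and reads the algebraic multiplicities off the diagonal. It is only an illustration of the structure described above: the helper names `jordan_block`, `jordan_matrix`, and `algebraic_multiplicities` are hypothetical, and the code does not compute the Jordan normal form of an arbitrary matrix.

```python
def jordan_block(eigenvalue, size):
    """Return a size x size Jordan block: eigenvalue on the diagonal, 1 on the superdiagonal."""
    return [
        [eigenvalue if i == j else 1 if j == i + 1 else 0 for j in range(size)]
        for i in range(size)
    ]


def jordan_matrix(blocks):
    """Assemble a block diagonal matrix from (eigenvalue, size) pairs, in the given order."""
    n = sum(size for _, size in blocks)
    J = [[0] * n for _ in range(n)]
    offset = 0
    for eigenvalue, size in blocks:
        block = jordan_block(eigenvalue, size)
        for i in range(size):
            for j in range(size):
                J[offset + i][offset + j] = block[i][j]
        offset += size
    return J


def algebraic_multiplicities(J):
    """Count how often each eigenvalue appears on the diagonal of a Jordan matrix."""
    counts = {}
    for i in range(len(J)):
        counts[J[i][i]] = counts.get(J[i][i], 0) + 1
    return counts


# Two blocks for eigenvalue 2 (sizes 2 and 1) and one block for eigenvalue 5.
J = jordan_matrix([(2, 2), (2, 1), (5, 1)])
for row in J:
    print(row)
print(algebraic_multiplicities(J))
```

Reordering the pairs passed to `jordan_matrix` gives a different matrix that represents the same operator in a reordered basis, which is the sense in which the normal form is not entirely unique.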