Appendix E6 ICM (IBIS Interconnect Modeling Specification)

... 6) To facilitate portability between operating systems, file names used in the ICM file must only have lower case characters. File names should have a basename followed by a period ("."), followed by a file name extension of no more than three characters. There is no length restriction on the basena ...

... 6) To facilitate portability between operating systems, file names used in the ICM file must only have lower case characters. File names should have a basename followed by a period ("."), followed by a file name extension of no more than three characters. There is no length restriction on the basena ...

a comparative evaluation of matlab, octave, freemat - here

... In the teaching context, two types of courses should be distinguished: There are courses in which Matlab is simply used to let the student solve larger problems (e.g., to solve eigenvalue problems with larger matrices than 4×4) or to let the student focus on the application instead of mathematical a ...

... In the teaching context, two types of courses should be distinguished: There are courses in which Matlab is simply used to let the student solve larger problems (e.g., to solve eigenvalue problems with larger matrices than 4×4) or to let the student focus on the application instead of mathematical a ...

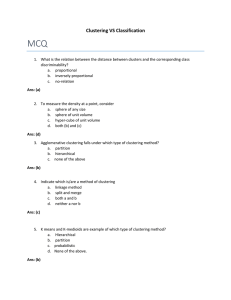

MCQ Clustering VS Classification

... 3. In Supervised learning, class labels of the training samples are a. Known b. Unknown c. Doesn’t matter d. Partially known Ans: (a) 4. The upper bound of the number of non-zero Eigenvalues of S w -1S B (C = No. of Classes) a. C - 1 b. C + 1 c. C d. None of the above Ans: (a) 5. If S w is singular ...

... 3. In Supervised learning, class labels of the training samples are a. Known b. Unknown c. Doesn’t matter d. Partially known Ans: (a) 4. The upper bound of the number of non-zero Eigenvalues of S w -1S B (C = No. of Classes) a. C - 1 b. C + 1 c. C d. None of the above Ans: (a) 5. If S w is singular ...

Tutorial: Linear Algebra In LabVIEW

... LabVIEW ties the creation of user interfaces (called front panels) into the development cycle. LabVIEW programs/subroutines are called virtual instruments (VIs). Each VI has three components: a block diagram, a front panel, and a connector panel. The last is used to represent the VI in the block ...

... LabVIEW ties the creation of user interfaces (called front panels) into the development cycle. LabVIEW programs/subroutines are called virtual instruments (VIs). Each VI has three components: a block diagram, a front panel, and a connector panel. The last is used to represent the VI in the block ...

Appendix B Introduction to MATLAB - UTK-EECS

... MATLAB. The syntax for assigning values to a row vector is illustrated with the following two assignment statements which are typed on a single line and both terminated by a semicolon. »b = [1 3 2] ; c = [2,5,1] ; ...

... MATLAB. The syntax for assigning values to a row vector is illustrated with the following two assignment statements which are typed on a single line and both terminated by a semicolon. »b = [1 3 2] ; c = [2,5,1] ; ...

Sufficient conditions for convergence of the Sum

... such as 3-SAT and graph coloring [4]) and computer vision (stereo matching [5] and image restoration [6]). LBP can be regarded as the most elementary one in a family of related algorithms, consisting of double-loop algorithms [7], GBP [8], EP [9], EC [10], the Max-Product Algorithm [11], the Survey ...

... such as 3-SAT and graph coloring [4]) and computer vision (stereo matching [5] and image restoration [6]). LBP can be regarded as the most elementary one in a family of related algorithms, consisting of double-loop algorithms [7], GBP [8], EP [9], EC [10], the Max-Product Algorithm [11], the Survey ...

Linear Algebra and Differential Equations

... Combining the operations of vector addition and multiplication by scalars we can form expressions αu + βv + ... + γw which are called linear combinations of vectors u, v, ..., w with coefficients α, β, ..., γ. Linear combinations will regularly occur throughout the course. 1.1.2. Inner product. Metr ...

... Combining the operations of vector addition and multiplication by scalars we can form expressions αu + βv + ... + γw which are called linear combinations of vectors u, v, ..., w with coefficients α, β, ..., γ. Linear combinations will regularly occur throughout the course. 1.1.2. Inner product. Metr ...

Linear Algebra Chapter 6

... This is the correct way to understand the declaration in line b. With respect to pointers this means that matr is pointer-to-a-pointer-to-an-integer which we can write ∗∗matr. Furthermore ∗matr is a-pointer-to-a-pointer of five integers. This interpretation is important when we want to transfer vect ...

... This is the correct way to understand the declaration in line b. With respect to pointers this means that matr is pointer-to-a-pointer-to-an-integer which we can write ∗∗matr. Furthermore ∗matr is a-pointer-to-a-pointer of five integers. This interpretation is important when we want to transfer vect ...

Linear Algebra

... This is the correct way to understand the declaration in line b. With respect to pointers this means that matr is pointer-to-a-pointer-to-an-integer which we can write ∗∗matr. Furthermore ∗matr is a-pointer-to-a-pointer of five integers. This interpretation is important when we want to transfer vect ...

... This is the correct way to understand the declaration in line b. With respect to pointers this means that matr is pointer-to-a-pointer-to-an-integer which we can write ∗∗matr. Furthermore ∗matr is a-pointer-to-a-pointer of five integers. This interpretation is important when we want to transfer vect ...

Random projections and applications to

... for their simplicity and strong error guarantees. We provide a theoretical result relating to projections and how they might be used to solve a general problem, as well as theoretical results relating to various guarantees they provide. In particular, we show how they can be applied to a generic dat ...

... for their simplicity and strong error guarantees. We provide a theoretical result relating to projections and how they might be used to solve a general problem, as well as theoretical results relating to various guarantees they provide. In particular, we show how they can be applied to a generic dat ...

Sketching as a Tool for Numerical Linear Algebra

... The most glaring omission from the above algorithm is which random familes of matrices S will make this procedure work, and for what values of r. Perhaps one of the simplest arguments is the following. Suppose r = Θ(d/ε2 ) and S is a r × n matrix of i.i.d. normal random variables with mean zero and ...

... The most glaring omission from the above algorithm is which random familes of matrices S will make this procedure work, and for what values of r. Perhaps one of the simplest arguments is the following. Suppose r = Θ(d/ε2 ) and S is a r × n matrix of i.i.d. normal random variables with mean zero and ...

Linear Algebra Notes - An error has occurred.

... Proof. A system Ax = b has a unique solution if and only if it has no free variables and rref A | b has no inconsistent rows. This happens exactly when each row and each column of rref(A) contain leading 1’s, which is equivalent to rank(A) = n. The matrix above is the only n × n matrix in RREF with ...

... Proof. A system Ax = b has a unique solution if and only if it has no free variables and rref A | b has no inconsistent rows. This happens exactly when each row and each column of rref(A) contain leading 1’s, which is equivalent to rank(A) = n. The matrix above is the only n × n matrix in RREF with ...

Strict Monotonicity of Sum of Squares Error and Normalized Cut in

... In a typical clustering problem we have n input objects that we want to divide into K < n clusters, where K is a user-chosen parameter. To do so we usually minimize a functional which represent our notion of cluster. The resulting clustering C : {1, . . . , n} → {1, . . . , K} is a surjective functi ...

... In a typical clustering problem we have n input objects that we want to divide into K < n clusters, where K is a user-chosen parameter. To do so we usually minimize a functional which represent our notion of cluster. The resulting clustering C : {1, . . . , n} → {1, . . . , K} is a surjective functi ...

Introduction to Linear Transformation

... Question: Describe all vectors ~u so that T (~u ) = ~b. Answer: This is the same as finding all vectors ~u so that A~u = ~b. Could be no ~u , could be exactly one ~u , or could be a parametrized family of such ~u ’s. Recall the idea: row reduce the augmented matrix [A : ~b] to merely echelon form. A ...

... Question: Describe all vectors ~u so that T (~u ) = ~b. Answer: This is the same as finding all vectors ~u so that A~u = ~b. Could be no ~u , could be exactly one ~u , or could be a parametrized family of such ~u ’s. Recall the idea: row reduce the augmented matrix [A : ~b] to merely echelon form. A ...

Lecture6

... Gauss Elimination Method • The method consists of four steps 1. Construct an augmented matrix for the given system of equations. 2. Use elementary row operations to transform the augmented matrix into an augmented matrix in row-reduced form. 3. Write the equations associated with the resultin ...

... Gauss Elimination Method • The method consists of four steps 1. Construct an augmented matrix for the given system of equations. 2. Use elementary row operations to transform the augmented matrix into an augmented matrix in row-reduced form. 3. Write the equations associated with the resultin ...

Matlab - University of Sunderland

... MATLAB History (FAQ) • In the mid-1970s, Cleve Moler and several colleagues developed the FORTRAN subroutine libraries called LINPACK and EISPACK under a grant from the National Science Foundation. • LINPACK was a collection of FORTRAN subroutines for solving linear equations, while EISPACK contain ...

... MATLAB History (FAQ) • In the mid-1970s, Cleve Moler and several colleagues developed the FORTRAN subroutine libraries called LINPACK and EISPACK under a grant from the National Science Foundation. • LINPACK was a collection of FORTRAN subroutines for solving linear equations, while EISPACK contain ...

Sufficient conditions for convergence of Loopy

... In the general case, when the domains Xi are arbitrarily large (but finite), we do not know of a natural parameterization of the messages that automatically takes care of the invariance of the messages µI→j under scaling (like (5) does in the binary case).4 Instead of handling the scale invariance b ...

... In the general case, when the domains Xi are arbitrarily large (but finite), we do not know of a natural parameterization of the messages that automatically takes care of the invariance of the messages µI→j under scaling (like (5) does in the binary case).4 Instead of handling the scale invariance b ...

Secure Distributed Linear Algebra in a Constant Number of

... Securely Solving Regular Systems is a protocol that starts from sharings of an invertible matrix and a vector, and generates a sharing of the unique preimage of that vector under the given invertible matrix. This protocol follows immediately from the above protocols. Secure Unbounded Fan-In Multipli ...

... Securely Solving Regular Systems is a protocol that starts from sharings of an invertible matrix and a vector, and generates a sharing of the unique preimage of that vector under the given invertible matrix. This protocol follows immediately from the above protocols. Secure Unbounded Fan-In Multipli ...

Lecture 5 Least

... (which make first term zero) • residual with optimal x is Axls − y = −Q2QT2 y • Q1QT1 gives projection onto R(A) • Q2QT2 gives projection onto R(A)⊥ ...

... (which make first term zero) • residual with optimal x is Axls − y = −Q2QT2 y • Q1QT1 gives projection onto R(A) • Q2QT2 gives projection onto R(A)⊥ ...

Observable operator models for discrete stochastic time series

... This section introduces OOMs again, but this time in a top-down fashion, starting from general stochastic processes. This alternative route clari es the fundamental nature of observable operators. Furthermore, the insights obtained in this section will yield a short and instructive proof of the cent ...

... This section introduces OOMs again, but this time in a top-down fashion, starting from general stochastic processes. This alternative route clari es the fundamental nature of observable operators. Furthermore, the insights obtained in this section will yield a short and instructive proof of the cent ...

Introduction. This primer will serve as a introduction to Maple 10 and

... symbolically. But you want the answer! We use the command fsolve ( f is for floating point) instead to get the decimal approximation to the answers found above: > fsolve(x^3-3*x+1); ...

... symbolically. But you want the answer! We use the command fsolve ( f is for floating point) instead to get the decimal approximation to the answers found above: > fsolve(x^3-3*x+1); ...

Linear Transformations

... A linear transformatio n T : V W that is one to one and onto is called an isomorphis m. Moreover, if V and W are vector spaces such that there exists an isomorphis m from V to W , then V and W are said to be isomorphic to each other. Thm 6.9: (Isomorphic spaces and dimension) Two finite-dimensiona ...

... A linear transformatio n T : V W that is one to one and onto is called an isomorphis m. Moreover, if V and W are vector spaces such that there exists an isomorphis m from V to W , then V and W are said to be isomorphic to each other. Thm 6.9: (Isomorphic spaces and dimension) Two finite-dimensiona ...

Mathematics for Economic Analysis I

... The function y = f (x) is often called a ‘single valued function’ because there is a unique ‘y’ in the range for each specified x. A function whose domain and range are sets of real number is called a real valued function of a real variable. ...

... The function y = f (x) is often called a ‘single valued function’ because there is a unique ‘y’ in the range for each specified x. A function whose domain and range are sets of real number is called a real valued function of a real variable. ...

Linear Algebra and Introduction to MATLAB

... Text strings can be displayed with the function disp. For example: disp( p This message is displayed. p ) Error messages are best displayed with the function error: error( p Something is wrong. p ) since when placed in an M-file, it aborts execution of the M-file. In an M-file the user can be prompt ...

... Text strings can be displayed with the function disp. For example: disp( p This message is displayed. p ) Error messages are best displayed with the function error: error( p Something is wrong. p ) since when placed in an M-file, it aborts execution of the M-file. In an M-file the user can be prompt ...

Ordinary least squares

In statistics, ordinary least squares (OLS) or linear least squares is a method for estimating the unknown parameters in a linear regression model, with the goal of minimizing the differences between the observed responses in some arbitrary dataset and the responses predicted by the linear approximation of the data (visually this is seen as the sum of the vertical distances between each data point in the set and the corresponding point on the regression line - the smaller the differences, the better the model fits the data). The resulting estimator can be expressed by a simple formula, especially in the case of a single regressor on the right-hand side.The OLS estimator is consistent when the regressors are exogenous and there is no perfect multicollinearity, and optimal in the class of linear unbiased estimators when the errors are homoscedastic and serially uncorrelated. Under these conditions, the method of OLS provides minimum-variance mean-unbiased estimation when the errors have finite variances. Under the additional assumption that the errors be normally distributed, OLS is the maximum likelihood estimator. OLS is used in economics (econometrics), political science and electrical engineering (control theory and signal processing), among many areas of application. The Multi-fractional order estimator is an expanded version of OLS.