Analysis of Privacy-preserving distributed data mining protocols

... Algorithm – a finite sequence of instructions, an explicit, step-by-step procedure for solving a problem. Apriori – a classic algorithm for learning association rules on transaction databases. Boolean vector – a vector whose entries can only be 0 or 1. Broadcast – refers to transmitting ...

Unsupervised Feature Selection for the k

... Clustering is ubiquitous in science and engineering, with numerous and diverse application domains, ranging from bioinformatics and medicine to the social sciences and the web [15]. Perhaps the most well-known clustering algorithm is the so-called “k-means” algorithm or Lloyd’s method [22], an itera ...
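
As a rough illustration of Lloyd's method, the minimal Python sketch below alternates the two steps of k-means: assign every point to its nearest centre, then move each centre to the mean of its assigned points. The function names, the random initialisation from input points, and the stopping rule (stop when neither labels nor centres change) are our own assumptions, not details taken from the cited paper.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def lloyd_kmeans(points: List[Point], k: int, iters: int = 100, seed: int = 0):
    """Lloyd iteration: assignment step, then update step, until nothing changes."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # assumption: start from k distinct input points
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins the cluster of its nearest centre.
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points]
        # Update step: each centre moves to the mean of its members.
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, new_labels) if lab == j]
            if members:
                new_centers.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centers.append(centers[j])  # keep an empty cluster's centre in place
        if new_labels == labels and new_centers == centers:
            break  # converged
        labels, centers = new_labels, new_centers
    return centers, labels
```

Each iteration can only lower (or keep) the sum of squared distances, which is why the loop terminates, although it may stop in a local optimum that depends on the initial centres.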

Iterative Projected Clustering by Subspace Mining

... determine the subspaces of the clusters. After the subspaces have been determined, each point is assigned to the cluster of the nearest medoid. A medoid is bad if the corresponding cluster has a smaller size than a predefined density threshold. Bad medoids are iteratively replaced by other candidate ...
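
The "bad medoid" test can be sketched as follows. This is an assumption-laden illustration: distances are measured with a PROCLUS-style Manhattan segmental distance (mean absolute difference over a medoid's subspace dimensions), and the names `find_bad_medoids`, `subspaces`, and `min_size` are hypothetical; the snippet does not say which distance or data layout the paper uses.

```python
from typing import List, Sequence

def segmental_dist(p: Sequence[float], m: Sequence[float], dims: Sequence[int]) -> float:
    """Mean absolute difference between p and m over the given dimensions."""
    return sum(abs(p[d] - m[d]) for d in dims) / len(dims)

def find_bad_medoids(points: List[Sequence[float]],
                     medoids: List[Sequence[float]],
                     subspaces: List[Sequence[int]],
                     min_size: int) -> List[int]:
    """Assign each point to its nearest medoid (measured in that medoid's subspace)
    and return the indices of medoids whose cluster has fewer than min_size points."""
    sizes = [0] * len(medoids)
    for p in points:
        nearest = min(range(len(medoids)),
                      key=lambda j: segmental_dist(p, medoids[j], subspaces[j]))
        sizes[nearest] += 1
    return [j for j, size in enumerate(sizes) if size < min_size]
```

The returned indices are the medoids that would be swapped for other candidates in the next iteration.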

Advancing the discovery of unique column combinations

... all possible k-candidates need to be checked for uniqueness. Effective candidate generation reduces the number of uniqueness verifications by excluding combinations already known a priori to be unique or non-unique. For example, if a column C is unique and a column D is non-unique, it is known a priori that the ...
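
The pruning idea can be illustrated with a small level-wise search. In this hypothetical sketch, `is_unique` checks whether a column combination has no duplicate value tuples, and any candidate that contains an already discovered unique is skipped without a check, since adding columns to a unique combination keeps it unique. Only this superset rule is implemented; the paper's full candidate-generation strategy is not reproduced.

```python
from itertools import combinations
from typing import FrozenSet, List, Sequence, Tuple

def is_unique(rows: Sequence[Sequence[object]], cols: Tuple[int, ...]) -> bool:
    """True if no two rows agree on all columns in cols."""
    seen = set()
    for row in rows:
        key = tuple(row[c] for c in cols)
        if key in seen:
            return False
        seen.add(key)
    return True

def minimal_uniques(rows: Sequence[Sequence[object]], n_cols: int):
    """Level-wise search for minimal unique column combinations.

    Returns the uniques found and the number of uniqueness checks performed."""
    found: List[FrozenSet[int]] = []
    checks = 0
    for size in range(1, n_cols + 1):
        for cand in combinations(range(n_cols), size):
            if any(u <= frozenset(cand) for u in found):
                continue  # a priori unique (superset of a known unique): no check needed
            checks += 1
            if is_unique(rows, cand):
                found.append(frozenset(cand))
    return found, checks
```

On a three-column table where columns {0, 2} and {1, 2} are the minimal uniques, the triple {0, 1, 2} is never verified, saving one scan of the data.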

CLASSIFICATION AND PREDICTION

... A decision tree for concept buys_computer, indicating whether or not a customer at AllElectronics is likely to purchase a computer. Each internal (nonleaf) node represents a test on an attribute. Each leaf node represents a class (either buys_computer = yes or buys_computer = no). ...
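
A tree of this kind can be encoded and evaluated in a few lines: leaves are class labels and each internal node tests one attribute. The attributes and branches in `BUYS_COMPUTER` below (`age`, `student`, `credit_rating`) are illustrative assumptions, since the snippet does not show the tree itself.

```python
from typing import Dict, Tuple, Union

# A leaf is a class label; an internal node is (attribute, {attribute value: subtree}).
Tree = Union[str, Tuple[str, Dict[str, "Tree"]]]

def classify(tree: Tree, record: Dict[str, str]) -> str:
    """Follow the branch matching the record's value at each internal node until a leaf."""
    while not isinstance(tree, str):
        attribute, branches = tree
        tree = branches[record[attribute]]  # KeyError if the value has no branch
    return tree

# Hypothetical tree for the concept buys_computer (attributes and splits are illustrative).
BUYS_COMPUTER: Tree = ("age", {
    "youth": ("student", {"no": "no", "yes": "yes"}),
    "middle_aged": "yes",
    "senior": ("credit_rating", {"fair": "yes", "excellent": "no"}),
})
```

Classifying a record only reads the attributes on its root-to-leaf path; for instance, a `middle_aged` customer is labelled `yes` without consulting `student` or `credit_rating`.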

Comparative Studies of Various Clustering Techniques and Its

... 1994). It combines sampling techniques with PAM. The clustering process can be viewed as searching a graph in which every node is a potential solution, that is, a set of k medoids. The clustering obtained after replacing a medoid is called a neighbor of the current clustering. In this clusteri ...
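
The graph-search view can be sketched as a randomized local search over sets of k medoids, where a neighbor differs from the current node in exactly one medoid. We read the description as CLARANS-style; the parameter names `num_local` and `max_neighbor`, the Euclidean distance, and the total-distance cost are our own illustrative choices, not taken from the snippet.

```python
import math
import random
from typing import List, Sequence

def cost(points: List[Sequence[float]], medoids: List[int]) -> float:
    """Sum over all points of the distance to the nearest medoid (medoids are indices)."""
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def randomized_medoid_search(points, k, num_local=2, max_neighbor=20, seed=0):
    """Random restarts of a hill climb over k-medoid sets; neighbors differ in one medoid."""
    rng = random.Random(seed)
    n = len(points)
    best, best_cost = None, math.inf
    for _ in range(num_local):
        current = rng.sample(range(n), k)  # random starting node of the graph
        current_cost = cost(points, current)
        failures = 0
        while failures < max_neighbor:
            # Random neighbor: swap one medoid for one non-medoid.
            neighbor = current.copy()
            neighbor[rng.randrange(k)] = rng.choice([x for x in range(n) if x not in current])
            neighbor_cost = cost(points, neighbor)
            if neighbor_cost < current_cost:
                current, current_cost, failures = neighbor, neighbor_cost, 0  # move there
            else:
                failures += 1
        if current_cost < best_cost:
            best, best_cost = sorted(current), current_cost  # keep the best local minimum
    return best, best_cost
```

Unlike an exhaustive swap search, only a random sample of neighbors is examined at each node, which trades solution quality for speed on large data sets.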

Learning From Massive Noisy Labeled Data for Image

... classification [12], attribute learning [29], and scene classification [8]. While state-of-the-art results have been continuously reported [23, 25, 28], all these methods require reliable annotations from millions of images [6] which are often expensive and time-consuming to obtain, preventing deep ...

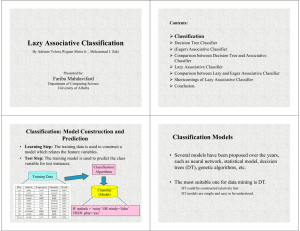

Document

... model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it. ...
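
A minimal hand-rolled split is sketched below, assuming the data fits in memory; the name `split_train_test` and the `test_fraction` parameter are hypothetical. Libraries offer this directly, for example scikit-learn's `sklearn.model_selection.train_test_split`.

```python
import random
from typing import Iterable, List, Tuple, TypeVar

T = TypeVar("T")

def split_train_test(data: Iterable[T], test_fraction: float = 0.25,
                     seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle a copy of the data and split it into disjoint training and test lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be strictly between 0 and 1")
    items = list(data)
    random.Random(seed).shuffle(items)  # fixed seed keeps the split reproducible
    n_test = round(len(items) * test_fraction)
    return items[n_test:], items[:n_test]
```

Shuffling before splitting matters when the data is ordered (for example by date or class), since otherwise the test set would not resemble the training set.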

Orthogonal Range Searching on the RAM, Revisited

... to date for a number of problems, including: 2-d orthogonal range emptiness, 3-d orthogonal range reporting, and offline 4-d dominance range reporting. ...

A k-mean clustering algorithm for mixed numeric and categorical data

... rithms in this category [9]. Assuming that there are n objects, PAM finds k clusters by first finding a representative object for each cluster. This representative, the most centrally located point in a cluster, is called a medoid. After selecting k medoids, the algorithm repeatedly tries to ma ...
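
To make "most centrally located" concrete, the sketch below picks the cluster member with the smallest sum of distances to all other members. The Euclidean metric and the function name `medoid` are assumptions; unlike a mean, the result is always an actual data point.

```python
import math
from typing import List, Sequence

def medoid(cluster: List[Sequence[float]]) -> Sequence[float]:
    """Return the member whose total Euclidean distance to all members is smallest."""
    return min(cluster, key=lambda c: sum(math.dist(c, other) for other in cluster))
```

For the points (0, 0), (1, 0), (2, 0), (3, 0), (10, 0), the medoid is (2, 0), whereas the mean (3.2, 0) is not in the set and is pulled toward the outlier.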

A New Procedure of Clustering Based on Multivariate Outlier Detection

... that the medoids produced by PAM provide better class separation than the means produced by the k-means clustering algorithm. PAM starts by selecting an initial set of medoids (cluster centers) and iteratively replaces each one of the selected medoids by one of the non-selected objects in the data ...
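
The replacement loop can be sketched as a greedy swap phase: try every (medoid, non-medoid) exchange, apply the one that lowers the total distance most, and stop when no exchange helps. This simplified sketch takes the initial medoids as an argument instead of implementing PAM's BUILD phase; `pam_swap`, `total_cost`, and the Euclidean metric are our assumptions.

```python
import math
from itertools import product
from typing import List, Sequence

def total_cost(points: List[Sequence[float]], medoids: List[int]) -> float:
    """Sum of distances from every point to its nearest medoid (medoids are indices)."""
    return sum(min(math.dist(p, points[m]) for m in medoids) for p in points)

def pam_swap(points: List[Sequence[float]], init_medoids: List[int]):
    """Apply the best cost-reducing (medoid, non-medoid) exchange until none exists."""
    medoids = list(init_medoids)
    current = total_cost(points, medoids)
    while True:
        best_swap, best_cost = None, current
        non_medoids = [o for o in range(len(points)) if o not in medoids]
        for i, o in product(range(len(medoids)), non_medoids):
            trial = medoids.copy()
            trial[i] = o  # replace the i-th medoid by non-medoid o
            trial_cost = total_cost(points, trial)
            if trial_cost < best_cost:
                best_swap, best_cost = (i, o), trial_cost
        if best_swap is None:
            return sorted(medoids), current  # local optimum: no swap lowers the cost
        i, o = best_swap
        medoids[i] = o
        current = best_cost
```

Each pass evaluates k(n - k) swaps and each evaluation costs O(nk), which is why PAM scales poorly and sampling-based variants exist. Starting from two medoids in the same group of a two-group data set, a single swap moves one medoid into the other group.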

Finding the Frequent Items in Streams of Data

... total number of items. Variations arise when the items are given weights, and further when these weights can also be negative. ...
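
One classic single-pass algorithm for this problem is the Misra-Gries ("Frequent") counter algorithm, sketched below for the unweighted case only; the snippet does not say which algorithms it covers, so treat this as an illustrative choice. With k - 1 counters, every item occurring more than n/k times in a stream of length n keeps a counter, and each stored count underestimates the true frequency by at most n/k.

```python
from typing import Dict, Hashable, Iterable

def misra_gries(stream: Iterable[Hashable], k: int) -> Dict[Hashable, int]:
    """One-pass frequent-items summary using at most k - 1 counters."""
    if k < 2:
        raise ValueError("k must be at least 2")
    counters: Dict[Hashable, int] = {}
    for item in stream:
        if item in counters:
            counters[item] += 1
        elif len(counters) < k - 1:
            counters[item] = 1  # free slot: start counting this item
        else:
            # No free slot: decrement every counter and drop those reaching zero.
            for key in list(counters):
                counters[key] -= 1
                if counters[key] == 0:
                    del counters[key]
    return counters
```

For the stream `aaaaabbbcd` with k = 3, the summary is `{"a": 3, "b": 1}`: item `a` (5 occurrences, more than 10/3) is guaranteed to survive, and both counts are underestimates by at most 10/3.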

Lecture 9 - MyCourses

... encountered when computing $a^{2^i m}$, $i = 1, \ldots, k-1$. If $-1$ is not encountered, then some $a^{2^i m}$ is a non-trivial square root of 1, proving that $n$ is composite. The Miller-Rabin primality test is a yes-biased Monte Carlo algorithm for the COMPOSITES problem. The error probability can be shown to be ...
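
The test can be sketched in Python as follows. It writes $n - 1 = 2^k m$ with $m$ odd, picks random bases $a$, and answers "composite" only when a witness is found, so a composite verdict is always correct while a "probably prime" verdict can be wrong with small probability. The small-prime pre-filter, the `rounds` parameter, and the function name are our own assumptions.

```python
import random
from typing import Optional

def is_probable_prime(n: int, rounds: int = 20, seed: Optional[int] = None) -> bool:
    """Miller-Rabin: False means n is certainly composite; True means probably prime."""
    if n < 2:
        return False
    for p in (2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37):
        if n % p == 0:
            return n == p  # trial division by a few small primes
    # Write n - 1 = 2^k * m with m odd.
    k, m = 0, n - 1
    while m % 2 == 0:
        m //= 2
        k += 1
    rng = random.Random(seed)
    for _ in range(rounds):
        a = rng.randrange(2, n - 1)
        x = pow(a, m, n)
        if x in (1, n - 1):
            continue  # a is not a witness
        for _ in range(k - 1):
            x = pow(x, 2, n)  # next value a^(2^i * m) mod n
            if x == n - 1:
                break  # -1 encountered: a is not a witness
        else:
            return False  # -1 never encountered: n is composite
    return True
```

Running several independent rounds is what drives the error probability down, since each round uses a fresh random base.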

"Approximate Kernel k-means: solution to Large Scale Kernel Clustering"

... k-means algorithm. It replaces the Euclidean distance function $d^2(x_a, x_b) = \|x_a - x_b\|^2$ employed in the k-means algorithm with a non-linear kernel distance function defined as $d^2_\kappa(x_a, x_b) = \kappa(x_a, x_a) + \kappa(x_b, x_b) - 2\kappa(x_a, x_b)$, where $x_a \in \Re^d$ and $x_b \in \Re^d$ are two data points and $\kappa(\cdot, \cdot)$: ...
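
The kernel distance can be computed from kernel evaluations alone, without forming feature vectors. The sketch below is illustrative: the RBF kernel and its `gamma` default are assumptions (the snippet does not fix κ), and `kernel_sq_dist_to_centroid` uses the standard kernel k-means expansion of the squared distance between a point and a cluster mean in feature space. With a linear kernel, both functions reduce to ordinary squared Euclidean distances.

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]
Kernel = Callable[[Vector, Vector], float]

def linear_kernel(x: Vector, y: Vector) -> float:
    return sum(a * b for a, b in zip(x, y))

def rbf_kernel(x: Vector, y: Vector, gamma: float = 0.5) -> float:
    """Gaussian (RBF) kernel exp(-gamma * ||x - y||^2); gamma is an illustrative default."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def kernel_sq_dist(xa: Vector, xb: Vector, kappa: Kernel) -> float:
    """d^2_kappa(xa, xb) = kappa(xa, xa) + kappa(xb, xb) - 2 kappa(xa, xb)."""
    return kappa(xa, xa) + kappa(xb, xb) - 2.0 * kappa(xa, xb)

def kernel_sq_dist_to_centroid(x: Vector, cluster: Sequence[Vector], kappa: Kernel) -> float:
    """Squared feature-space distance from x to the mean of the mapped cluster points:
    kappa(x, x) - (2/|C|) sum_y kappa(x, y) + (1/|C|^2) sum_{y,z} kappa(y, z)."""
    n = len(cluster)
    cross = sum(kappa(x, y) for y in cluster)
    within = sum(kappa(y, z) for y in cluster for z in cluster)
    return kappa(x, x) - 2.0 * cross / n + within / (n * n)
```

With the RBF kernel, $d^2_\kappa$ is bounded above by 2, so distant points all look roughly equally far apart, which is one reason the choice of `gamma` matters in practice.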

K-nearest neighbors algorithm

In pattern recognition, the k-nearest neighbors algorithm (k-NN) is a non-parametric method used for classification and regression. In both cases, the input consists of the k closest training examples in the feature space. The output depends on whether k-NN is used for classification or regression:

In k-NN classification, the output is a class membership. An object is classified by a majority vote of its neighbors, with the object being assigned to the class most common among its k nearest neighbors (k is a positive integer, typically small). If k = 1, the object is simply assigned to the class of that single nearest neighbor.

In k-NN regression, the output is the property value for the object. This value is the average of the values of its k nearest neighbors.

k-NN is a type of instance-based learning, or lazy learning, where the function is only approximated locally and all computation is deferred until classification. The k-NN algorithm is among the simplest of all machine learning algorithms.

For both classification and regression, it can be useful to weight the contributions of the neighbors so that nearer neighbors contribute more to the average than more distant ones. For example, a common weighting scheme gives each neighbor a weight of 1/d, where d is the distance to the neighbor.

The neighbors are taken from a set of objects for which the class (for k-NN classification) or the object property value (for k-NN regression) is known. This can be thought of as the training set for the algorithm, though no explicit training step is required.

A shortcoming of the k-NN algorithm is that it is sensitive to the local structure of the data. The algorithm is unrelated to, and should not be confused with, k-means, another popular machine learning technique.
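
Both variants, including the optional 1/d weighting, fit in a short sketch. Assumptions: Euclidean distance, training data given as (features, target) pairs, and an exact match (d = 0) short-circuiting the weighted computation; the function names are hypothetical.

```python
import math
from collections import defaultdict

def k_nearest(train, query, k):
    """The k (distance, target) pairs closest to query, nearest first."""
    return sorted(((math.dist(x, query), y) for x, y in train), key=lambda t: t[0])[:k]

def knn_classify(train, query, k=3, weighted=False):
    """Majority (or 1/d-weighted) vote among the k nearest neighbors."""
    votes = defaultdict(float)
    for d, label in k_nearest(train, query, k):
        if weighted and d == 0.0:
            return label  # exact match: a 1/d weight would be infinite
        votes[label] += 1.0 / d if weighted else 1.0
    return max(votes, key=votes.get)

def knn_regress(train, query, k=3, weighted=False):
    """Plain or 1/d-weighted average of the k nearest neighbors' target values."""
    neighbors = k_nearest(train, query, k)
    if not weighted:
        return sum(y for _, y in neighbors) / len(neighbors)
    for d, y in neighbors:
        if d == 0.0:
            return y  # exact match
    weights = [1.0 / d for d, _ in neighbors]
    return sum(w * y for w, (_, y) in zip(weights, neighbors)) / sum(weights)
```

There is no training step: all the work happens in `k_nearest` at query time, which is what "lazy learning" means in practice. Ties in the unweighted vote go to the label whose first neighbor is nearest, because `max` returns the first maximal key in insertion order.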