X - Physics

... For example, suppose we had a 100-sided die instead of a 6-sided die. Here p = 1/100 instead of 1/6. Suppose we had a 1000-sided die, p = 1/1000, etc. b) N is very large; it approaches ∞. For example, instead of throwing 2 dice, we could throw 100 or 1000 dice. The number of counts c) The product ...

portable document (.pdf) format

... (4) Compute $\mu_j = \lambda_j - \sum_{k=1}^{j-1} \alpha_{kj}\mu_k$ for $2 \le j \le p$ and $\alpha_{ij} = \bigl(\lambda_{ij} - \sum_{k=1}^{i-1} \alpha_{ki}\alpha_{kj}\mu_k\bigr)/\mu_i$ for $2 \le i < j \le p$, iteratively. (5) Generate realizations $(x_1, \dots, x_p)$ of Poisson random vector X with mean vector µ and identity covariance matrix, which can be generated by using standard software package ...

this PDF file - IndoMS Journal on Statistics

... This manuscript is concerned with investigation of a consistent estimator for the mean function of a compound cyclic Poisson process in the presence of linear trend. The cyclic component of intensity function of this process is not assumed to have any parametric form, but its period is assumed to be ...

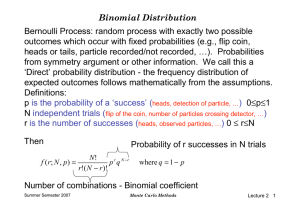

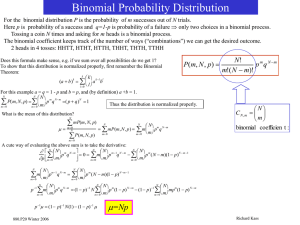

Binomial Distribution Bernoulli Process: random process with

... Notes: • for large N, p near 0.5 distribution is approx. symmetric • for p near 0 or 1, the variance is reduced ...

The Poisson Distribution

... occurrences of some phenomenon in a specified unit of space or time. For example, ...

Assignment 29 - STEP Correspondence Course

... Solve the equation u² + 2u sinh x − 1 = 0 giving u in terms of x. Find the solution of the differential equation ...
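
A short worked sketch of the algebraic part only (the differential equation is elided in the excerpt, so it is not addressed here). Treating the equation as a quadratic in u and using the identity cosh² x − sinh² x = 1:

```latex
\begin{align*}
u^2 + 2u\sinh x - 1 &= 0 \\
u &= \frac{-2\sinh x \pm \sqrt{4\sinh^2 x + 4}}{2} \\
  &= -\sinh x \pm \cosh x \\
\Rightarrow\quad u &= e^{-x} \quad\text{or}\quad u = -e^{x}
\end{align*}
```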

Probability Distributions - Sys

... • A random variable X associates a unique numerical value with every outcome of an experiment. The value of the random variable will vary from trial to trial as the experiment is repeated. • There are two types of random variable: discrete and continuous. • A random variable has either an associat ...

SIMULATING THE POISSON PROCESS

... store at a fixed rate, or the number of radioactive particles discharged in a fixed amount of time [1]. The Poisson process is a random process which counts the number of random events that have occurred up to some point t in time. The random events must be independent of each other and occur with a ...
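
One common way to simulate such a counting process is to draw independent exponential waiting times between events. The sketch below assumes a constant rate and is not taken from the excerpted paper; the function name `simulate_poisson_process` and the parameter values are hypothetical.

```python
import random

def simulate_poisson_process(rate, t_end, rng):
    """Return the event times of a constant-rate Poisson process on [0, t_end]."""
    times = []
    t = rng.expovariate(rate)       # waiting time until the first event
    while t <= t_end:
        times.append(t)
        t += rng.expovariate(rate)  # independent waiting time to the next event
    return times

rng = random.Random(0)              # fixed seed so the run is reproducible
rate, t_end, runs = 2.0, 10.0, 5000
counts = [len(simulate_poisson_process(rate, t_end, rng)) for _ in range(runs)]
mean_count = sum(counts) / runs
print(mean_count)                   # should be close to rate * t_end = 20
```

The number of events counted up to time t is then Poisson distributed with mean rate × t, which is why the average count lands near 20.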

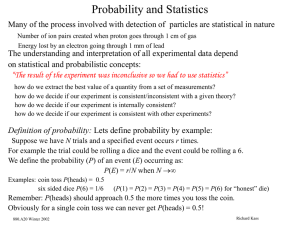

Probability and Statistics, part II

... c) The product Np is finite. A good example of the above conditions occurs when one considers radioactive decay. • number of Prussian soldiers kicked to death by horses per year per army corps! Suppose we have 25 mg of an element. This is 10^20 atoms. • quality control, failure rate predictions Suppo ...
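
The limit described by these conditions (p small, N large, Np finite) can be checked numerically. The following is a minimal sketch that holds the product Np fixed at an assumed value of 2 and compares the binomial and Poisson probabilities as N grows; the helper names are hypothetical.

```python
import math

def binom_pmf(k, n, p):
    """Binomial probability of k successes in n trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def poisson_pmf(k, mu):
    """Poisson probability of k events with mean mu."""
    return math.exp(-mu) * mu**k / math.factorial(k)

mu = 2.0  # the fixed product N * p (an assumed illustrative value)

def max_gap(n):
    """Largest difference between the two pmfs over k = 0..10."""
    p = mu / n
    return max(abs(binom_pmf(k, n, p) - poisson_pmf(k, mu)) for k in range(11))

gaps = {n: max_gap(n) for n in (10, 100, 1000, 10000)}
for n, gap in gaps.items():
    print(n, gap)  # the gap shrinks as N grows with N * p held fixed
```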

Lecture 12 - Mathematics

... Pitman [11]: Sections 2.4,3.8, 4.2 Larsen–Marx [10]: Sections 3.8, 4.2, 4.6 ...

Practice Exam 2 solutions

... subsequent box will contain a new prize. Let X1 , X2 , X3 , X4 be the discrete random variables X1 = number of box containing first new prize, X2 = number of additional boxes until obtaining second new prize, X3 = number of additional boxes until obtaining third new prize, X4 = number of additional ...

Solution - Statistics

... 2. A light bulb has a lifetime that is exponential with a mean of µ = 200 days. When it burns out, a janitor replaces it immediately. In addition, there is a handyman who comes, on average, h = 3 times per year according to a Poisson process and replaces the lightbulb as “preventative maintenance.” ...

Math 215 Lecture notes for 10/29/98: Poisson Distribution 1

... Let X be the number of orders today. It is a Poisson random variable, with parameter λ equal to 3 (the average number of orders in a day). We would like to find the probability of X > 5, which (in principle) involves adding up the probability for X = 6 to the probability for X = 7, and adding this t ...
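
Rather than adding the infinitely many terms for X = 6, 7, ..., the same probability can be obtained from the complement, P(X > 5) = 1 − P(X ≤ 5). A small sketch of that arithmetic with λ = 3 (variable names are illustrative only):

```python
import math

lam = 3.0  # average number of orders in a day, from the excerpt

# P(X <= 5) is a finite sum of six Poisson terms.
p_at_most_5 = sum(math.exp(-lam) * lam**k / math.factorial(k) for k in range(6))

# Complement rule: P(X > 5) = 1 - P(X <= 5).
p_more_than_5 = 1.0 - p_at_most_5
print(round(p_more_than_5, 4))  # 0.0839
```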

ppt

... A random experiment with set of outcomes Random variable is a function from set of outcomes to real numbers ...

S2 Poisson Distribution

... The probability that a component coming off a production line is faulty is 0.01. (a) If a sample of size 5 is taken, find the probability that one of the components is faulty. (b) What is the probability that a batch of 250 of these components has more than 3 faulty components in it? ...
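
A possible way to work both parts numerically, assuming components are faulty independently. Part (a) uses the binomial distribution directly; part (b) uses the Poisson approximation with mean 250 × 0.01 = 2.5, which is an assumption about the intended method, and the exact binomial tail is computed alongside for comparison.

```python
import math

p_faulty = 0.01

# (a) Exactly one faulty component in a sample of 5: binomial with n = 5.
n_sample = 5
p_one_faulty = math.comb(n_sample, 1) * p_faulty * (1 - p_faulty) ** (n_sample - 1)

# (b) More than 3 faulty in a batch of 250: Poisson approximation, mean = n * p.
n_batch = 250
mu = n_batch * p_faulty
p_more_than_3 = 1.0 - sum(math.exp(-mu) * mu**k / math.factorial(k) for k in range(4))

# Exact binomial tail, to see how close the approximation is.
p_more_than_3_exact = 1.0 - sum(
    math.comb(n_batch, k) * p_faulty**k * (1 - p_faulty) ** (n_batch - k)
    for k in range(4)
)

print(f"{p_one_faulty:.4f}")         # 0.0480
print(f"{p_more_than_3:.4f}")        # 0.2424
print(f"{p_more_than_3_exact:.4f}")  # exact binomial value for comparison
```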

Probability Theory

... Prove that, given that the number of geomagnetic reversals in the first 100 Bernoulli trials is equal to 4 (that is, {S100 = 4}), the joint distribution of (T1 , . . . , T4 ), the vector of the number of 282 ky periods until the 1st., 2nd., 3rd. and 4th. geomagnetic reversals, is the same as the dis ...

Sect. 1.5: Probability Distribution for Large N

... The Poisson Distribution • Poisson Distribution: An approximation to the binomial distribution for the SPECIAL CASE when the average number (mean µ) of successes is very much smaller than the possible number n. i.e. µ << n because p << 1. • This distribution is important for the study of such pheno ...

Sect. 1.5: Probability Distribution for Large N

... • Poisson Distribution: An approximation to the binomial distribution for the SPECIAL CASE when the average number (mean µ) of successes is very much smaller than the possible number n. i.e. µ << n because p << 1. • This distribution is important for the study of such phenomena as radioactive decay. ...

The Poisson distribution The Poisson distribution is, like the

... where X is defined as the number of occurrences of the variable and µ is the mean number of occurrences over a specific interval (explained below). In fact, µ is the only parameter of the Poisson distribution and also happens to be the variance of the distribution. We hence write X ~ Po(µ). Unlike ...
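
The statement that µ is both the mean and the variance can be checked by summing over the probability mass function. This is a minimal sketch with an assumed value µ = 4 and the sum truncated at k = 59, where the remaining tail is negligible; the names are hypothetical.

```python
import math

def poisson_pmf(k, mu):
    """Poisson probability of k occurrences with mean mu."""
    return math.exp(-mu) * mu**k / math.factorial(k)

mu = 4.0        # assumed illustrative value
ks = range(60)  # truncation point; the tail beyond this is negligible for mu = 4

mean = sum(k * poisson_pmf(k, mu) for k in ks)
variance = sum((k - mean) ** 2 * poisson_pmf(k, mu) for k in ks)
print(mean, variance)  # both should be very close to mu
```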
