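... $\bar{x} = \frac{\sum x}{n}$, $s_x = \sqrt{\frac{\sum (x - \bar{x})^2}{n-1}}$, $\bar{y} = \frac{\sum y}{n}$, $s_y = \sqrt{\frac{\sum (y - \bar{y})^2}{n-1}}$, $z_x = \frac{x - \bar{x}}{s_x}$, $z_y = \frac{y - \bar{y}}{s_y}$, $r = \frac{\sum z_x z_y}{n-1}$, $b = r\,\frac{s_y}{s_x}$, $a = \bar{y} - b\bar{x}$, $\hat{y} = a + bx$, residual $= y - \hat{y}$. Sampling Distribution of $\hat{p}$: ...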

Reviews

... Words/Phrases you should know: Statistics; The process of statistics; Qualitative variable; Quantitative variable; Discrete variable; Continuous variable; Sampling error; Non-sampling error (8 types discussed in class); Double-blind; Types of experiments: 1. Completely randomized design, 2. ...

Lecture2

... population distribution, this can be used in resampling. In this case, instead of drawing randomly from the sample, we can use the population distribution. For example, if we know that the population distribution is normal, then we estimate its parameters using the sample mean and variance. Then approximate the population distr. wit ...

April 21

... 1. Assumptions: X1, . . . , Xm and Y1, . . . , Yn are independent random samples from populations that have a normal distribution with unknown means µX, µY and unknown variances. (a) As in the last section, we also consider the case that the X's and Y's result from a randomized comparative e ...

Notes 19 - Wharton Statistics

... Final report on your project due Wed., Dec. 17th, 5 p.m. For complex surveys, it is nearly impossible to develop a closed-form expression for the variance of many estimators. An alternative approach to estimating variances (i.e., finding standard errors) and forming approximate confidence intervals ...

Word document

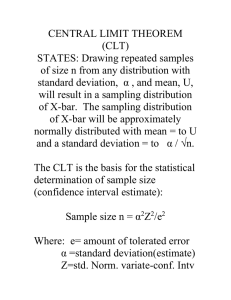

... • Note these definitions are equivalent only if the elements are drawn ________ __________________ from the population. • If the population size is very large, whether the sampling was done with or without replacement makes little practical difference. ...

Slide 1

... Create nonparametric bootstrap estimates for the unknown parameters in Q*. Now find Q* by maximizing over the j ...

Bootstrapping (statistics)

In statistics, bootstrapping can refer to any test or metric that relies on random sampling with replacement. Bootstrapping allows assigning measures of accuracy (defined in terms of bias, variance, confidence intervals, prediction error or some other such measure) to sample estimates. This technique allows estimation of the sampling distribution of almost any statistic using random sampling methods. Generally, it falls in the broader class of resampling methods.

Bootstrapping is the practice of estimating properties of an estimator (such as its variance) by measuring those properties when sampling from an approximating distribution. One standard choice for an approximating distribution is the empirical distribution function of the observed data. In the case where a set of observations can be assumed to be from an independent and identically distributed population, this can be implemented by constructing a number of resamples of the observed dataset, drawn with replacement and each of equal size to the observed dataset.

It may also be used for constructing hypothesis tests. It is often used as an alternative to statistical inference based on the assumption of a parametric model when that assumption is in doubt, or where parametric inference is impossible or requires complicated formulas for the calculation of standard errors.
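The resampling procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: it assumes independent and identically distributed observations, uses only the standard library, and the function names (`bootstrap_resamples`, `bootstrap_standard_error`, `percentile_interval`) and the sample values are hypothetical. The interval shown is the simple percentile interval, one of several bootstrap interval constructions.

```python
import random
import statistics


def bootstrap_resamples(data, n_resamples, rng):
    """Yield resamples drawn with replacement, each the same size as data."""
    n = len(data)
    for _ in range(n_resamples):
        yield [rng.choice(data) for _ in range(n)]


def bootstrap_standard_error(data, statistic, n_resamples=2000, seed=0):
    """Estimate the standard error of `statistic` as the standard
    deviation of its values across the bootstrap resamples."""
    rng = random.Random(seed)
    estimates = [statistic(s) for s in bootstrap_resamples(data, n_resamples, rng)]
    return statistics.stdev(estimates)


def percentile_interval(data, statistic, level=0.95, n_resamples=2000, seed=0):
    """Simple percentile interval: take the empirical quantiles of the
    bootstrap estimates at (1 - level) / 2 and 1 - (1 - level) / 2."""
    rng = random.Random(seed)
    estimates = sorted(
        statistic(s) for s in bootstrap_resamples(data, n_resamples, rng)
    )
    alpha = (1 - level) / 2
    lower_index = max(0, int(alpha * n_resamples))
    upper_index = min(n_resamples - 1, int((1 - alpha) * n_resamples))
    return estimates[lower_index], estimates[upper_index]


# Made-up observations, for illustration only.
sample = [2.1, 3.4, 1.9, 5.6, 4.2, 3.8, 2.7, 4.9, 3.1, 3.6]

se = bootstrap_standard_error(sample, statistics.median)
low, high = percentile_interval(sample, statistics.median)
print(f"bootstrap SE of the median: {se:.3f}")
print(f"95% percentile interval: ({low:.2f}, {high:.2f})")
```

The median is used here because its standard error has no simple closed-form expression, which is the kind of case where resampling is a convenient alternative to a parametric formula. Any function of a list of numbers can be passed as `statistic`.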