MISCELLANEOUS REGRESSION TOPICS

... Normally, this variance will not be the same, a situation that violates the assumption of constant variance. ii. Recall the discussion and examples earlier in the course that showed the distribution of Y's at various values of X. We said that the variance of these distributions is assumed to be the ...
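The sketch below shows what non-constant variance looks like. It simulates data whose spread grows with X, fits a least-squares line, and compares the residual variance in the lower and upper halves of X. The simulated data and the half-split comparison are assumptions made for illustration, loosely in the spirit of a Goldfeld-Quandt comparison. They are not a formal test taken from the source.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulated data (hypothetical): the error standard deviation grows with x,
# which violates the constant-variance assumption.
x = np.linspace(1, 10, 200)
y = 2.0 + 0.5 * x + rng.normal(0.0, 0.2 * x)

# Least-squares fit of a straight line; polyfit returns [slope, intercept].
slope, intercept = np.polyfit(x, y, 1)
residuals = y - (intercept + slope * x)

# Compare residual variance for the lower and upper halves of x.
half = len(x) // 2
var_low = residuals[:half].var(ddof=1)
var_high = residuals[half:].var(ddof=1)
print(f"residual variance, low x:  {var_low:.3f}")
print(f"residual variance, high x: {var_high:.3f}")
print(f"ratio high/low: {var_high / var_low:.2f}")
```

When the variance is constant, the ratio should be near 1. A ratio far from 1 suggests that the distributions of Y at different values of X do not share a common variance.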

Regression Line for Standardized Values (z_x, z_y)

... directly in this software package. You need to look in two places for it: taking the square root of “R squared” (the coefficient of determination) is not enough, because you must also look at the sign of the slope. A positive slope means a positive r; a negative slope means a negative r. ...
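This is a minimal sketch that recovers r from a fitted line by taking the square root of R² and attaching the sign of the slope. It then checks the result against the correlation computed directly. The data are hypothetical. The sketch also fits the line to standardized values (z_x, z_y), where the slope equals r, which is the standard result for the standardized regression line.

```python
import numpy as np

# Hypothetical sample data with a negative relationship.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([9.1, 8.3, 6.8, 6.1, 4.2, 3.9])

# Fit y = b0 + b1*x by least squares (polyfit returns [slope, intercept]).
b1, b0 = np.polyfit(x, y, 1)
y_hat = b0 + b1 * x

# R squared from the fit: 1 - SSE / SST.
sse = np.sum((y - y_hat) ** 2)
sst = np.sum((y - y.mean()) ** 2)
r_squared = 1.0 - sse / sst

# sqrt(R squared) loses the sign; recover it from the sign of the slope.
r_from_fit = np.sign(b1) * np.sqrt(r_squared)

# Compare with the correlation computed directly.
r_direct = np.corrcoef(x, y)[0, 1]

# On standardized values (z_x, z_y) the fitted slope equals r and the intercept is 0.
z_x = (x - x.mean()) / x.std(ddof=1)
z_y = (y - y.mean()) / y.std(ddof=1)
slope_z, intercept_z = np.polyfit(z_x, z_y, 1)

print(f"slope b1 = {b1:.4f}")
print(f"R^2 = {r_squared:.4f}, r from fit = {r_from_fit:.4f}, r direct = {r_direct:.4f}")
print(f"slope of z_y on z_x = {slope_z:.4f}")
```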

Slide 1

... random factors: 1 − r². r² is the coefficient of determination, or the proportion of variation explained. For example, if r = 0.661, then r² = 0.661² ≈ 0.44, so less than half (44%) of the variation in the dependent variable is due to the independent variable ...
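The arithmetic in the slide example can be checked directly. Here r² is the share of variation explained and 1 − r² is the share attributed to random factors.

```python
# Worked example from the slide: r = 0.661.
r = 0.661

r_squared = r ** 2            # coefficient of determination (variation explained)
unexplained = 1 - r_squared   # share attributed to random factors

print(f"r^2     = {r_squared:.4f}")    # r^2     = 0.4369
print(f"1 - r^2 = {unexplained:.4f}")  # 1 - r^2 = 0.5631
```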

Simple Linear Regression and Correlation

... • Univariate data: 1 variable is measured on each sample unit or population unit, e.g. height of each student in a sample.
• Bivariate data: 2 variables are measured on each sample unit or population unit, e.g. height and GPA of each student in a sample; (caution: data from 2 separate samples is not bi ...

Mind On Statistics Test Bank

... A. There is a positive association.
B. There is no association.
C. There is a negative association.
D. The direction of the association can’t be determined from the equation.
40. Suppose that the latitudes of two cities differ by 10. What is the estimated difference in the average January temperatur ...
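The excerpt does not include the fitted equation these questions refer to, so the coefficients below are hypothetical placeholders and do not answer the test-bank items. The sketch illustrates two general points. First, the estimated difference in mean response for two x-values is the slope times the difference in x, because the intercept cancels. Second, the sign of the slope gives the direction of the association.

```python
# Hypothetical fitted line: the actual equation is not shown in the excerpt,
# so these coefficients are placeholders for illustration only.
b0 = 100.0   # hypothetical intercept
b1 = -2.0    # hypothetical slope (change in mean y per unit of x)

def predict(x: float) -> float:
    """Estimated mean response from the line y_hat = b0 + b1 * x."""
    return b0 + b1 * x

# Two x-values that differ by 10 units.
x1, x2 = 30.0, 40.0
difference = predict(x2) - predict(x1)

# The intercept cancels: the difference is just b1 * (x2 - x1).
print(difference)         # -20.0
print(b1 * (x2 - x1))     # -20.0

# The sign of b1 gives the direction of the association.
direction = "positive" if b1 > 0 else "negative" if b1 < 0 else "none"
print(direction)          # negative
```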

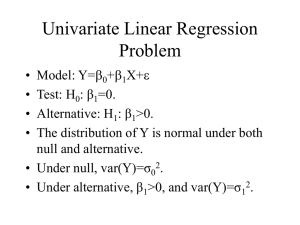

Lecture 23 - University of Pennsylvania

... related to the dependent variable (are any of the X’s useful in predicting Y?)
• To answer the question, we test the hypothesis H0: β1 = β2 = … = βk = 0 against H1: at least one βi is not equal to zero.
• If at least one βi is not equal to zero, the model has ...
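This is a minimal sketch of the overall F-test for H0: β1 = β2 = … = βk = 0, computed from sums of squares with NumPy. The data are simulated, and only the first of the three predictors truly matters. It uses the standard statistic F = (SSR/k) / (SSE/(n − k − 1)) and checks it against the equivalent form written in terms of R². The p-value is not computed here. With SciPy available, `scipy.stats.f.sf(f_stat, k, n - k - 1)` would give it.

```python
import numpy as np

rng = np.random.default_rng(1)

# Simulated data (hypothetical): n observations, k = 3 predictors,
# where only the first predictor actually affects y.
n, k = 50, 3
X = rng.normal(size=(n, k))
y = 1.0 + 2.0 * X[:, 0] + rng.normal(size=n)

# Design matrix with an intercept column; least-squares estimates b0..bk.
A = np.column_stack([np.ones(n), X])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
y_hat = A @ coef

# Sums of squares for the overall F-test of H0: beta_1 = ... = beta_k = 0.
sst = np.sum((y - y.mean()) ** 2)
sse = np.sum((y - y_hat) ** 2)
ssr = sst - sse

f_stat = (ssr / k) / (sse / (n - k - 1))

# Equivalent form in terms of R squared.
r2 = ssr / sst
f_from_r2 = (r2 / k) / ((1 - r2) / (n - k - 1))

print(f"F = {f_stat:.2f} on ({k}, {n - k - 1}) degrees of freedom")
print(f"F from R^2 = {f_from_r2:.2f}")
```

A large F relative to the F(k, n − k − 1) distribution is evidence that at least one βi is not zero.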

Regression and Forecasting Models

... a. Y = β0 + β1X + ε
b. A correlation of 0.68 is moderately high. R² in the regression would be a bit less than ...
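In simple linear regression with one predictor, R² equals the square of the correlation coefficient. For r = 0.68 that gives 0.68² = 0.4624. The sketch below checks this identity on simulated data, which are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)

# Simulated bivariate data (hypothetical).
x = rng.normal(size=100)
y = 3.0 + 1.5 * x + rng.normal(scale=2.0, size=100)

# Simple linear regression y = b0 + b1*x and its R squared.
b1, b0 = np.polyfit(x, y, 1)
y_hat = b0 + b1 * x
r2 = 1 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)

# Correlation between x and y.
r = np.corrcoef(x, y)[0, 1]
print(f"r = {r:.3f}, r^2 = {r**2:.3f}, regression R^2 = {r2:.3f}")

# For the correlation quoted in the text:
print(f"0.68^2 = {0.68 ** 2:.4f}")  # 0.68^2 = 0.4624
```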

Regression and Correlation

... etc. Often, several predictors are used to predict one variable (e.g., height, weight, age, smoking status, and gender can all be used to predict blood pressure). We focus on the special case of using one predictor variable for a response, where the relationship is linear. Exam ...

Regression ... included in explanatory matrix X. As in simple linear ...

... Such an equation is linear in its parameters (if one considers the terms x², …, xᵏ as so many explanatory variables), although the modelled response of y to the explanatory variable x is nonlinear. The degree of the equation, which is its highest exponent, determines the shape of the curve: each deg ...
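The sketch below fits a cubic by treating x, x², and x³ as separate explanatory variables and running ordinary least squares on the expanded design matrix. It illustrates that a polynomial model is nonlinear in x but linear in its parameters. The data are hypothetical and noise-free, so least squares should recover the generating coefficients.

```python
import numpy as np

# Hypothetical noise-free data from a cubic: y = 1 - 2x + 0.5x^2 + 0.1x^3.
x = np.linspace(-3, 3, 25)
true_coef = np.array([1.0, -2.0, 0.5, 0.1])  # b0, b1, b2, b3
y = true_coef[0] + true_coef[1] * x + true_coef[2] * x**2 + true_coef[3] * x**3

# Treat x, x^2, x^3 as separate explanatory variables: the model is
# nonlinear in x but linear in the parameters b0..b3.
degree = 3
X = np.vander(x, N=degree + 1, increasing=True)  # columns: 1, x, x^2, x^3

# Ordinary least squares on the expanded design matrix.
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
print(np.round(coef, 6))
```

Raising `degree` adds columns xᵏ to the design matrix. The fitting step stays the same linear least-squares problem.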

Linear regression

In statistics, linear regression is an approach for modeling the relationship between a scalar dependent variable y and one or more explanatory variables (or independent variables) denoted X. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, the process is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable.)

In linear regression, data are modeled using linear predictor functions, and unknown model parameters are estimated from the data. Such models are called linear models. Most commonly, linear regression refers to a model in which the conditional mean of y given the value of X is an affine function of X. Less commonly, linear regression could refer to a model in which the median, or some other quantile of the conditional distribution of y given X, is expressed as a linear function of X. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of y given X, rather than on the joint probability distribution of y and X, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously and to be used extensively in practical applications. This is because models that depend linearly on their unknown parameters are easier to fit than models that are nonlinearly related to their parameters, and because the statistical properties of the resulting estimators are easier to determine.

Linear regression has many practical uses. Most applications fall into one of the following two broad categories:
• If the goal is prediction, forecasting, or error reduction, linear regression can be used to fit a predictive model to an observed data set of y and X values. After developing such a model, if an additional value of X is then given without its accompanying value of y, the fitted model can be used to predict the value of y.
• Given a variable y and a number of variables X1, ..., Xp that may be related to y, linear regression analysis can be applied to quantify the strength of the relationship between y and the Xj, to assess which Xj may have no relationship with y at all, and to identify which subsets of the Xj contain redundant information about y.

Linear regression models are often fitted using the least squares approach, but they may also be fitted in other ways. One option is to minimize the "lack of fit" in some other norm, as with least absolute deviations regression. Another is to minimize a penalized version of the least squares loss function, as in ridge regression (L2-norm penalty) and lasso (L1-norm penalty). Conversely, the least squares approach can be used to fit models that are not linear models. Thus, although the terms "least squares" and "linear model" are closely linked, they are not synonymous.
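This is a minimal NumPy sketch of two of the fitting approaches named above: ordinary least squares and ridge regression with an L2 penalty. It also uses the least-squares fit to predict y for a new X that has no accompanying y. The data, the penalty value `lam=50.0`, and the helper names (`fit_ols`, `fit_ridge`, `predict`) are hypothetical choices for illustration. Lasso and least absolute deviations need iterative solvers and are not shown. In practice, scikit-learn's `LinearRegression`, `Ridge`, and `Lasso` estimators cover these cases.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical data: y depends on two explanatory variables X1, X2.
n = 80
X = rng.normal(size=(n, 2))
y = 0.5 + 1.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.5, size=n)

def add_intercept(X):
    """Prepend a column of ones so the first coefficient is the intercept."""
    return np.column_stack([np.ones(len(X)), X])

def fit_ols(X, y):
    """Ordinary least squares: minimize the sum of squared residuals."""
    coef, *_ = np.linalg.lstsq(add_intercept(X), y, rcond=None)
    return coef

def fit_ridge(X, y, lam):
    """Ridge regression: least squares plus lam * ||slopes||^2 (L2 penalty).
    Predictors are centered so the intercept is left unpenalized."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    p = X.shape[1]
    slopes = np.linalg.solve(Xc.T @ Xc + lam * np.eye(p), Xc.T @ yc)
    intercept = y_mean - x_mean @ slopes
    return np.concatenate([[intercept], slopes])

def predict(coef, X_new):
    """Apply fitted coefficients (intercept first) to new rows of X."""
    return add_intercept(X_new) @ coef

ols = fit_ols(X, y)
ridge = fit_ridge(X, y, lam=50.0)

# A new observation of X with no accompanying y: use the fitted model to predict it.
x_new = np.array([[1.0, 2.0]])
print("OLS coefficients:  ", np.round(ols, 3))
print("ridge coefficients:", np.round(ridge, 3))
print("OLS prediction at x_new:", np.round(predict(ols, x_new), 3))
```

With `lam=0` the ridge solution reduces to ordinary least squares. Larger values shrink the slope coefficients toward zero, trading some bias for lower variance.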